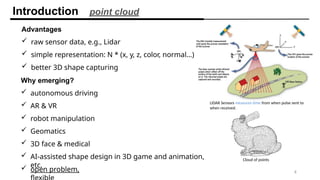

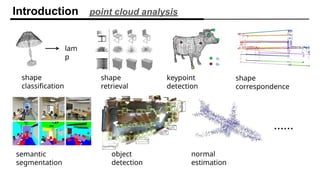

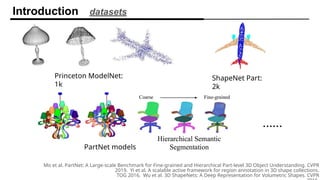

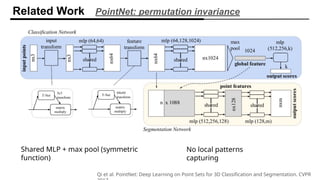

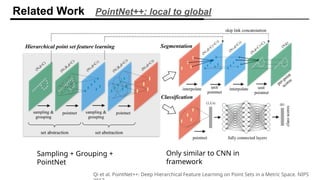

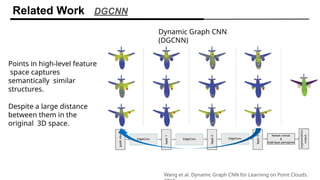

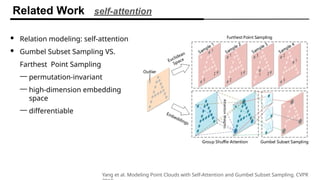

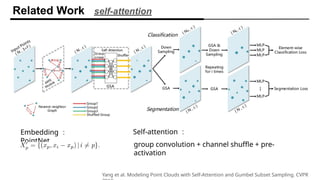

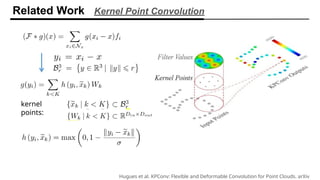

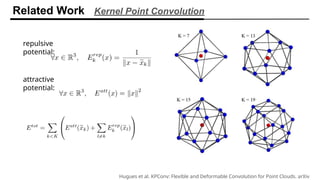

The document discusses self-supervised representation learning methods for 3D point clouds, highlighting the advantages of point clouds in various applications such as autonomous driving and medical imaging. It reviews datasets and challenges in analyzing 3D shapes, presents related work like PointNet and Dynamic Graph CNN, and contrasts discrete and continuous conditional random fields. Key features of the continuous CRF include direct learning and closed-form inference, making it advantageous for modeling data affinity in feature space.

![INPUT GRAPH

TARGET NODE

D

E

F

C

A

B B

D

A

A

In GNN, we have aggregated the neighbor messages by taking their weighted

average. Can we do better?

C

F

B

E

A

?

?

?

?

�

�

Any differentiable function that maps set of vectors in to a single vector can be used

as the Aggregate function.

GCN defines the message passing function as

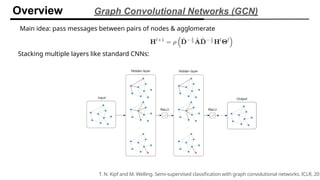

Overview Graph Convolutional Networks (GCN)

𝒉𝑢

𝑘 −1

𝒉𝑣

𝑘 −1

𝒉𝑣

𝑘

C

h𝑣

𝑘

=𝜎 ([𝑊 𝑘 . 𝐴𝑔𝑔𝑟𝑒𝑔𝑎𝑡𝑒 ({h𝑢

𝑘 −1

|𝑢𝜖 𝒩 (𝑣 )}), 𝐵𝑘 h𝑣

𝑘

])

Neighborhood aggregation

h𝑣

𝑘

=𝜎

(𝑊

𝑘

∑

𝑢∈ 𝑁 ( 𝑣) ∪ {𝑣 }

h𝑢

√|𝑁 (𝑢)∨¿ 𝑁(𝑣)|)](https://image.slidesharecdn.com/self-supervisedrepresentationlearningonpointclouds-copy-241117091136-135a3ab0/85/Self-supervised-representation-learning-on-point-clouds-Copy-pptx-23-320.jpg)
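To make the GCN update concrete, here is a minimal pure-Python sketch of one layer: each node sums the previous-layer embeddings of its neighbors and itself, scales each term by 1 / sqrt(|N(u)| |N(v)|), applies a weight matrix, and passes the result through a nonlinearity. The function name `gcn_layer`, the dictionary-based graph representation, the choice of ReLU for σ, and the toy path graph are all assumptions made for illustration; they do not come from the slides.

```python
import math


def gcn_layer(adj, h, weight):
    """Apply one GCN-style update to every node (illustrative sketch).

    adj:    dict mapping node -> set of neighbor nodes (undirected, no self-loops).
            Assumes every node has at least one neighbor, so |N(v)| > 0.
    h:      dict mapping node -> list of floats (embeddings from layer k-1).
    weight: list of rows, shape (d_out, d_in), playing the role of W^k.
    """
    d_in = len(weight[0])
    new_h = {}
    for v in adj:
        # Neighborhood aggregation over N(v) ∪ {v} with symmetric normalization.
        agg = [0.0] * d_in
        for u in adj[v] | {v}:
            norm = math.sqrt(len(adj[u]) * len(adj[v]))
            for i in range(d_in):
                agg[i] += h[u][i] / norm
        # Linear transform W^k followed by ReLU (assumed choice of sigma).
        z = [sum(w * a for w, a in zip(row, agg)) for row in weight]
        new_h[v] = [max(0.0, x) for x in z]
    return new_h


# Toy path graph A - B - C with 1-dimensional embeddings (hypothetical data).
adj = {"A": {"B"}, "B": {"A", "C"}, "C": {"B"}}
h0 = {"A": [1.0], "B": [2.0], "C": [3.0]}
W = [[1.0]]

h1 = gcn_layer(adj, h0, W)
print(h1)
```

With the identity weight used here, node A ends up with 1 + 2/√2, that is, its own embedding plus B's embedding scaled by the degrees of both endpoints. Replacing the inner loop with a mean, max, or sum over the neighbor embeddings would give other instances of the general Aggregate form.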