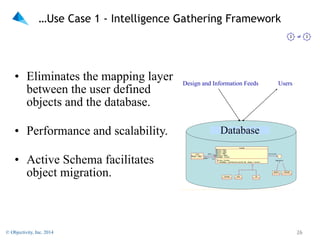

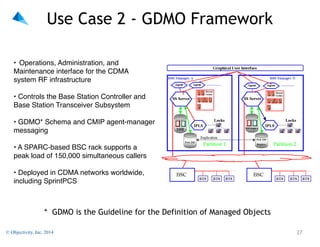

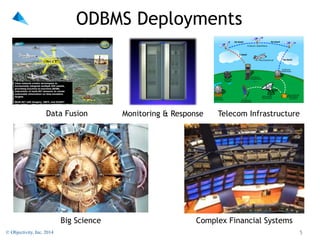

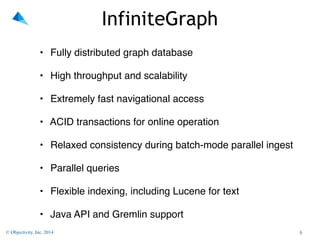

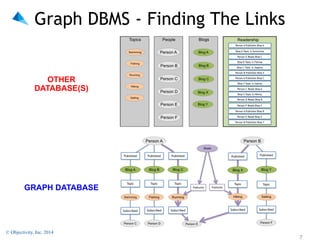

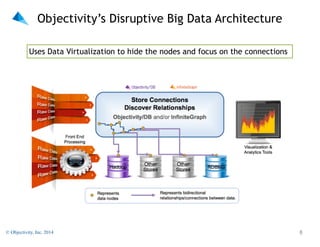

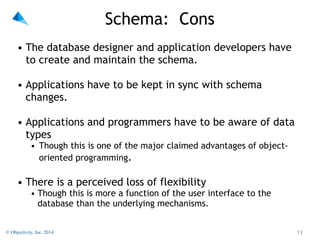

The document discusses schema versus schema-less NoSQL databases, highlighting the pros and cons of each. It introduces Objectivity, Inc. and its software products like Objectivity/DB and InfiniteGraph, emphasizing their capabilities in handling complex data. The summary also outlines various use cases and the importance of flexibility in database design while demonstrating that schemas can enhance database management system power.

![Who's Who?

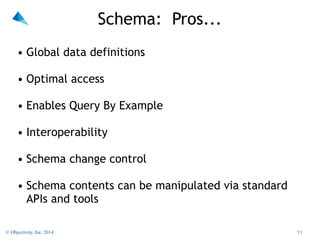

• SCHEMA:

• Network [CODASYL] databases - DDL [1972]

• Relational Databases - Data Dictionary

• Object Databases - ODMG'93

• Most Graph Databases

"

• Schema-less:

• KSAM/ISAM/DSAM/ESAM

• IMS (hierarchical)

• Pick OS Database (hash-tables)

• MUMPS (hierarchical array-storage)

• MongoDB - a specialized JSON (and JSON-like)

document store.

• CouchDB - a JSON document store.

© Objectivity, Inc. 2014

!10](https://image.slidesharecdn.com/schema-meetup-feb-2014-140221182952-phpapp02/85/NoSQL-Simplified-Schema-vs-Schema-less-10-320.jpg)

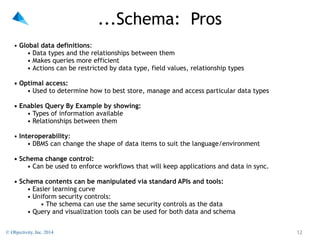

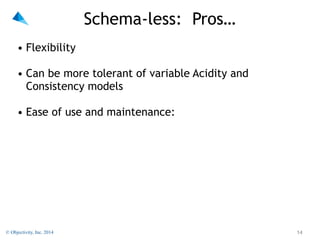

![…Schema-less: Pros

• Flexibility - Users can, in theory:

"

• Put any kind of data into the system

• Create new kinds of relationships between things (in a few

products)

• Find data without worrying about the types of data

involved.

"

• Can be more tolerant of variable Acidity and Consistency

models

"

• Ease of use and maintenance:

• No need to worry about data types

• No need for a DBA

• Applications will [probably] work when new data arrives

© Objectivity, Inc. 2014

!15](https://image.slidesharecdn.com/schema-meetup-feb-2014-140221182952-phpapp02/85/NoSQL-Simplified-Schema-vs-Schema-less-15-320.jpg)

![…Schema-less: Cons

• Apparent tolerance of variable CAP models is actually orthogonal to

the schema vs schema-less debate [as is support for sharding].

"

• Performance suffers

"

• Integrity is practically non-existent

• Maintaining referential integrity is hard

• Queries may misinterpret values within an object

• 54686973206973206120737472696e6720706c7573206120666c6f

6174696e6720706f696e74206e756d62657258585858706c757320

616e6f7468657220737472696e67

© Objectivity, Inc. 2014

!17](https://image.slidesharecdn.com/schema-meetup-feb-2014-140221182952-phpapp02/85/NoSQL-Simplified-Schema-vs-Schema-less-17-320.jpg)

![Schema-less: Cons

• Apparent tolerance of variable CAP models is actually orthogonal to

the schema vs schema-less debate [as is support for sharding].

"

• Performance suffers

"

• Integrity is practically non-existent

• Maintaining referential integrity is hard

• Queries may misinterpret values within an object

• 54686973206973206120737472696e6720706c7573206120666c6f

6174696e6720706f696e74206e756d62657258585858706c757320

616e6f7468657220737472696e67

Floating Point

© Objectivity, Inc. 2014

!18](https://image.slidesharecdn.com/schema-meetup-feb-2014-140221182952-phpapp02/85/NoSQL-Simplified-Schema-vs-Schema-less-18-320.jpg)

![Schema-less: Cons

• Apparent tolerance of variable CAP models is actually orthogonal to

the schema vs schema-less debate [as is support for sharding].

"

• Performance suffers

"

• Integrity is practically non-existent

• Maintaining referential integrity is hard

• Queries may misinterpret values within an object

• 54686973206973206120737472696e6720706c7573206120666c6f

6174696e6720706f696e74206e756d62657258585858706c757320

616e6f7468657220737472696e67

Floating Point

• A ZIPcode may be stored as an integer (01234) or a string (“01234”)

in JSON, causing query and display problems.

© Objectivity, Inc. 2014

!19](https://image.slidesharecdn.com/schema-meetup-feb-2014-140221182952-phpapp02/85/NoSQL-Simplified-Schema-vs-Schema-less-19-320.jpg)