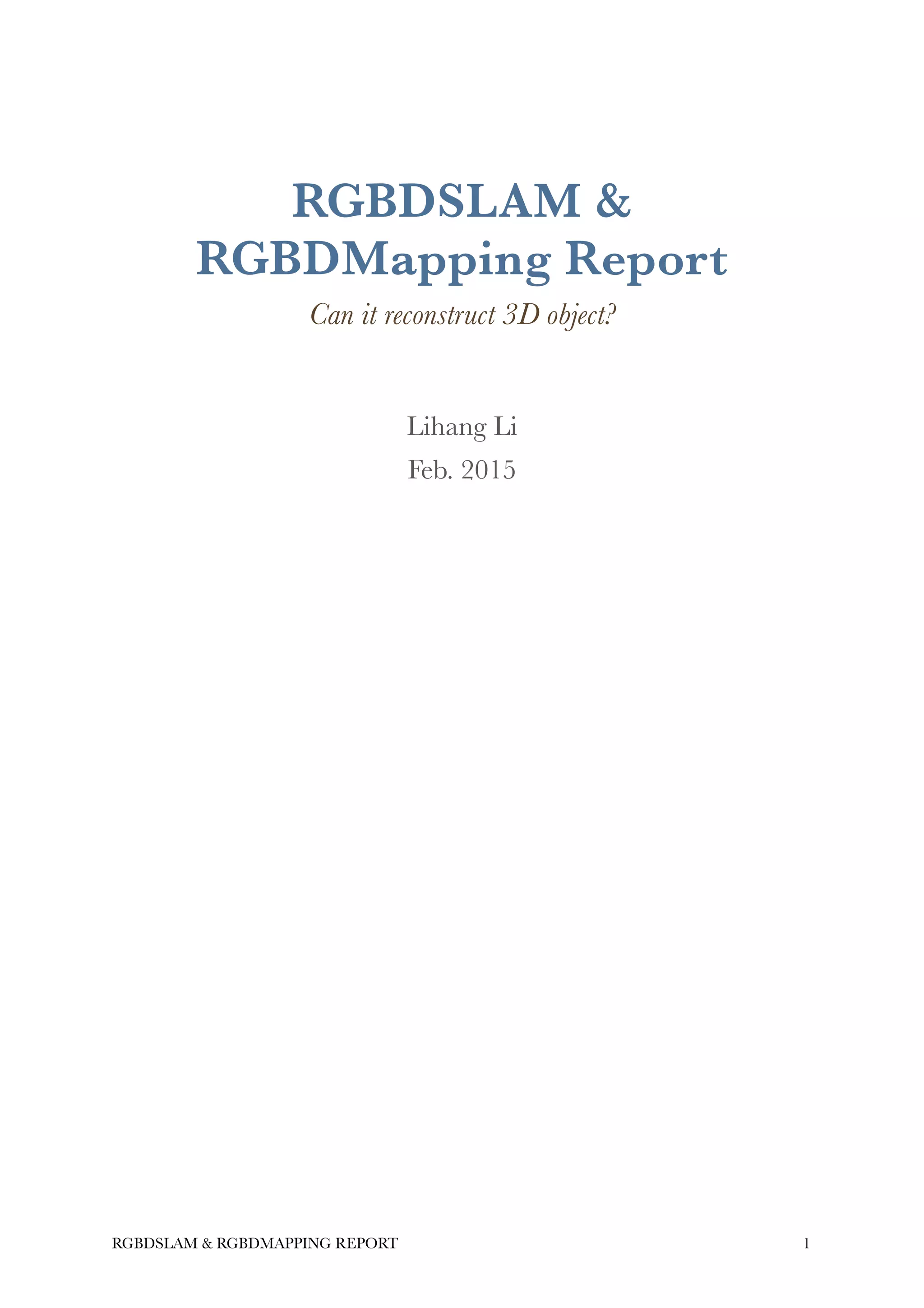

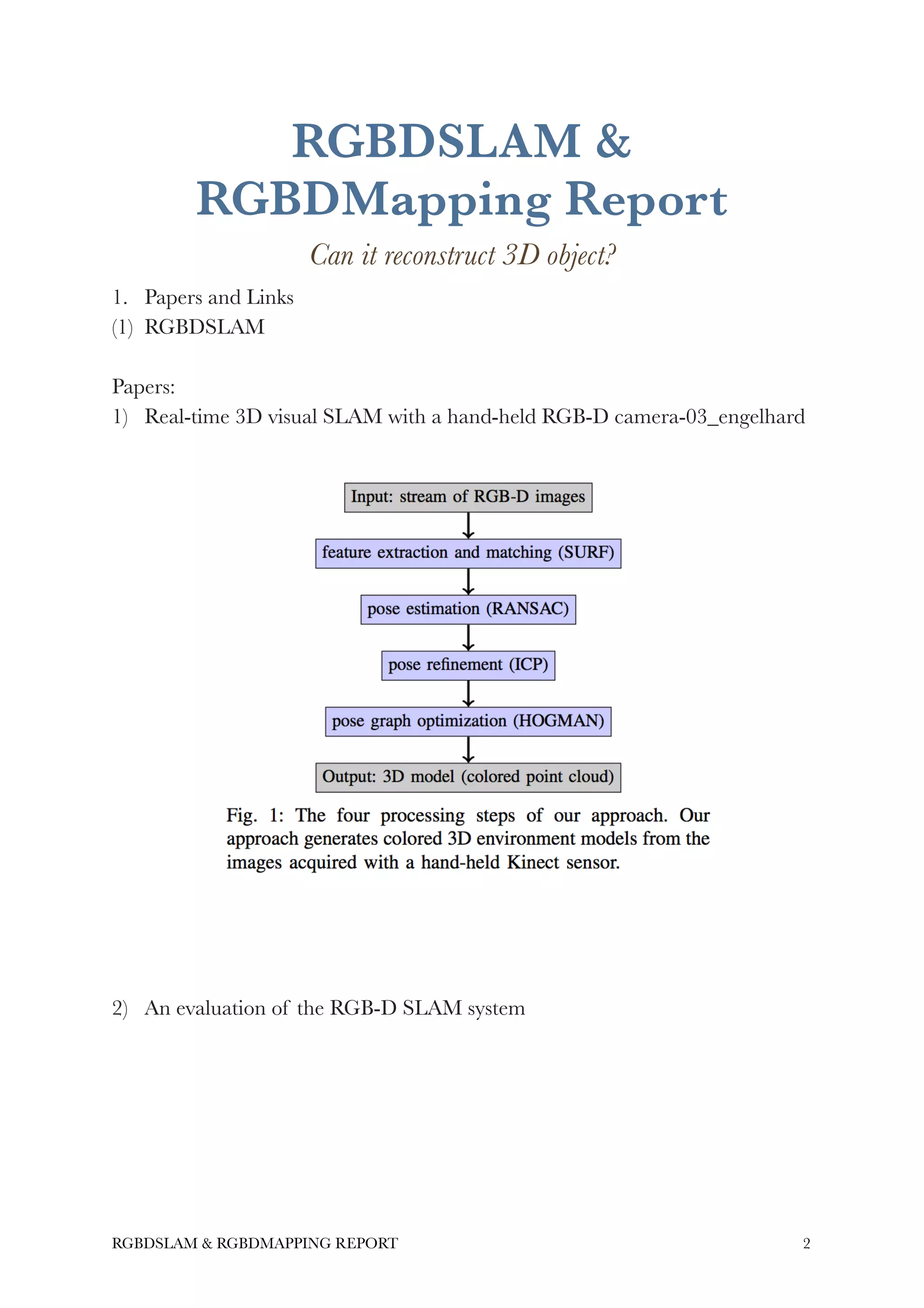

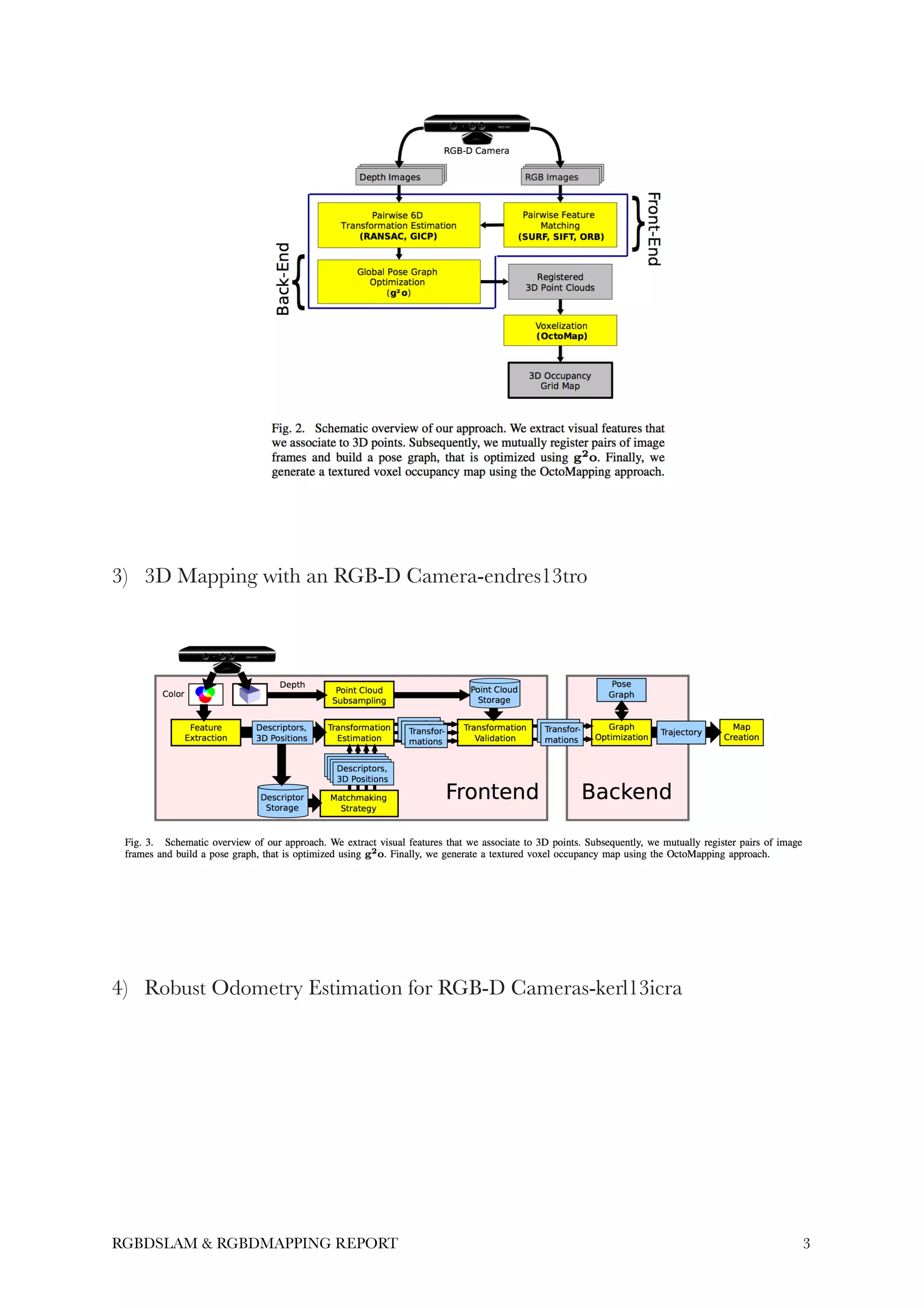

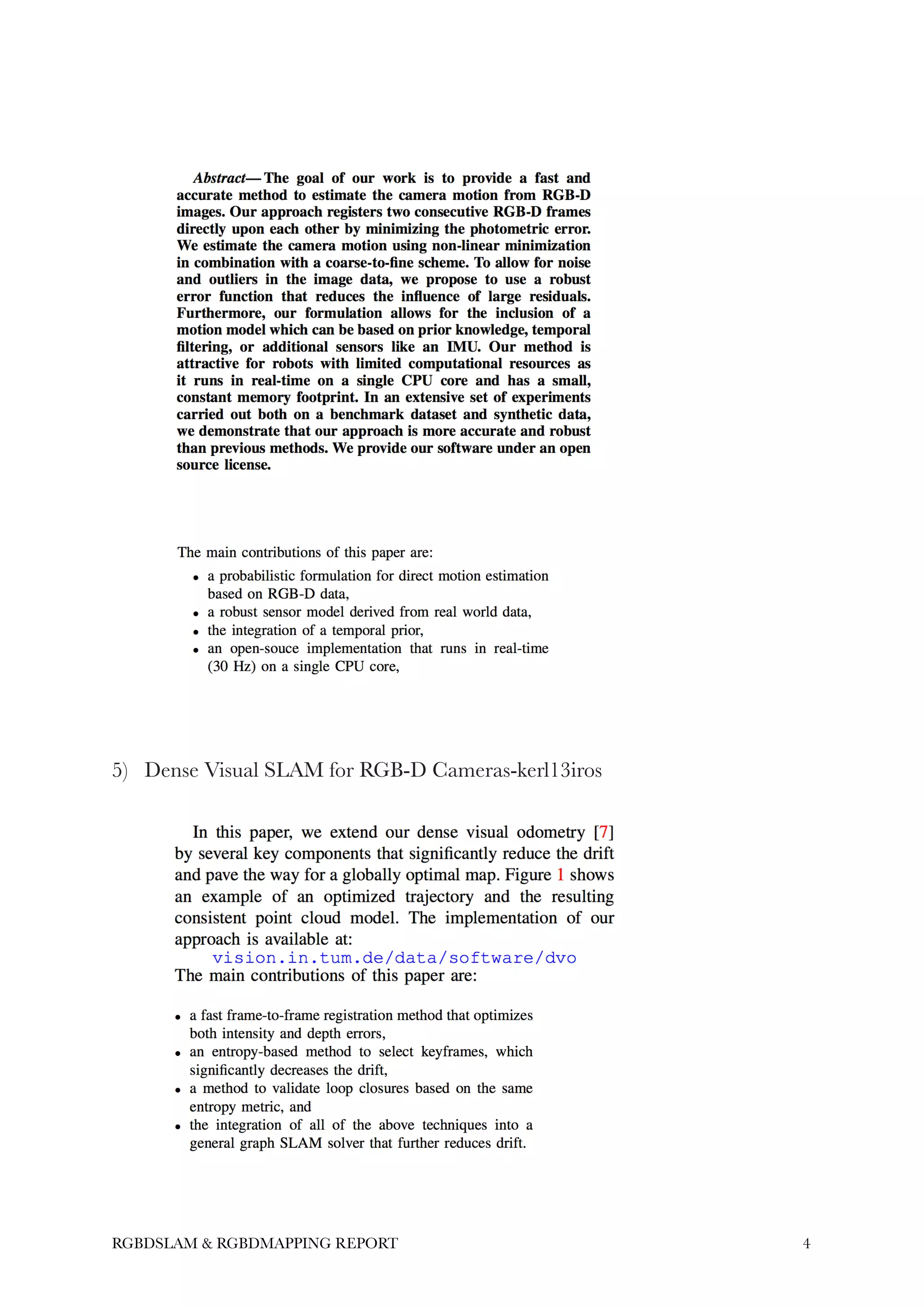

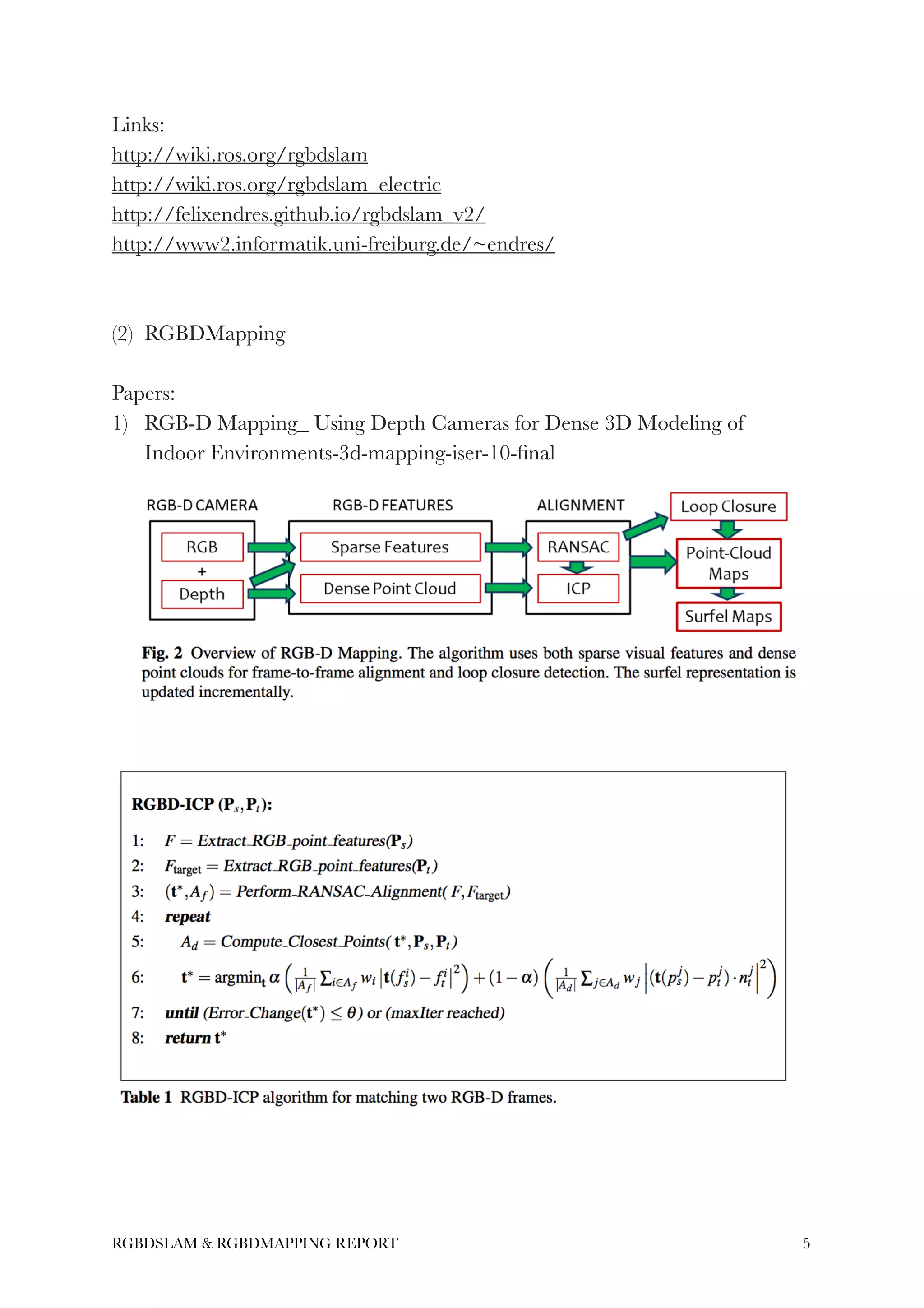

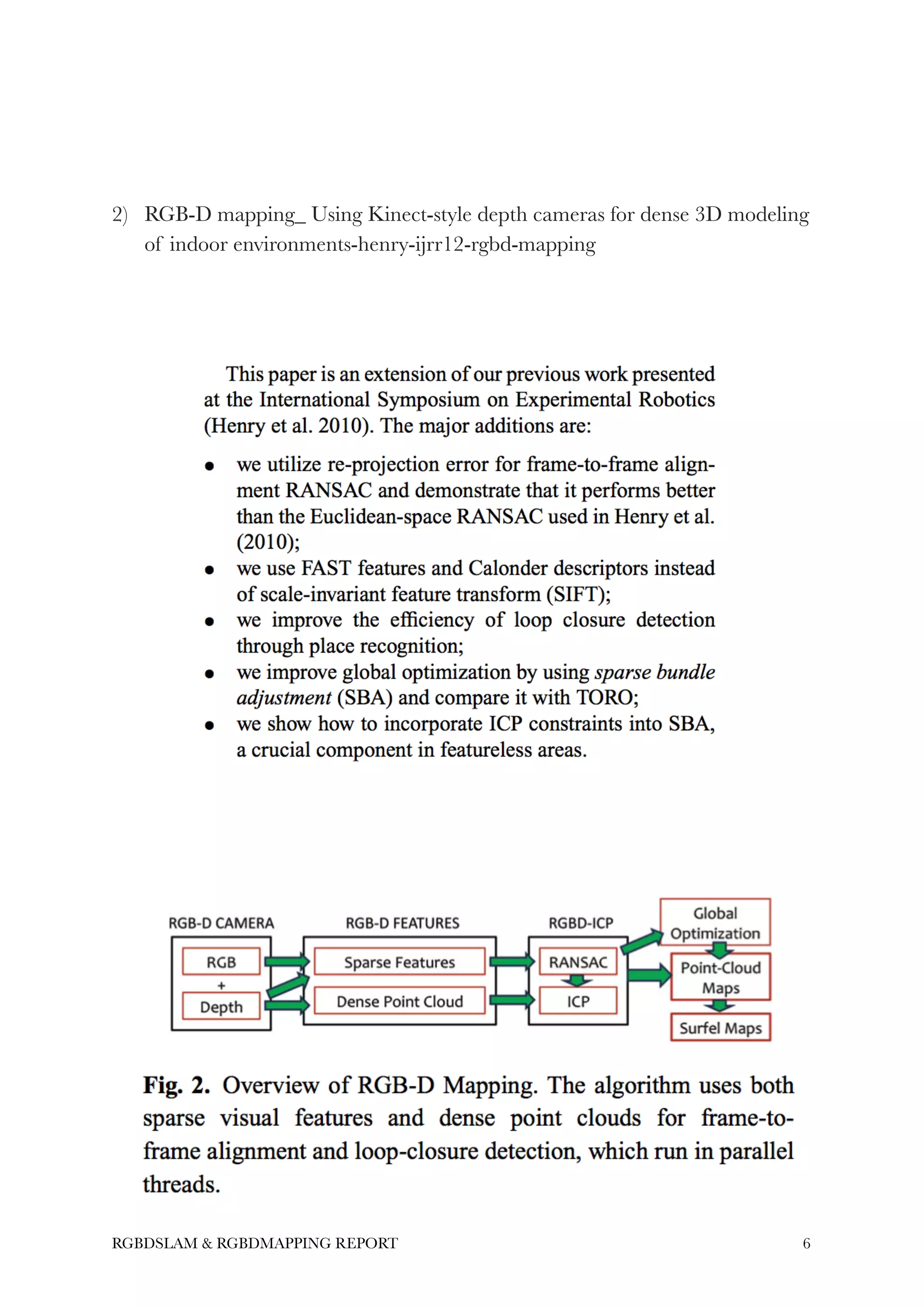

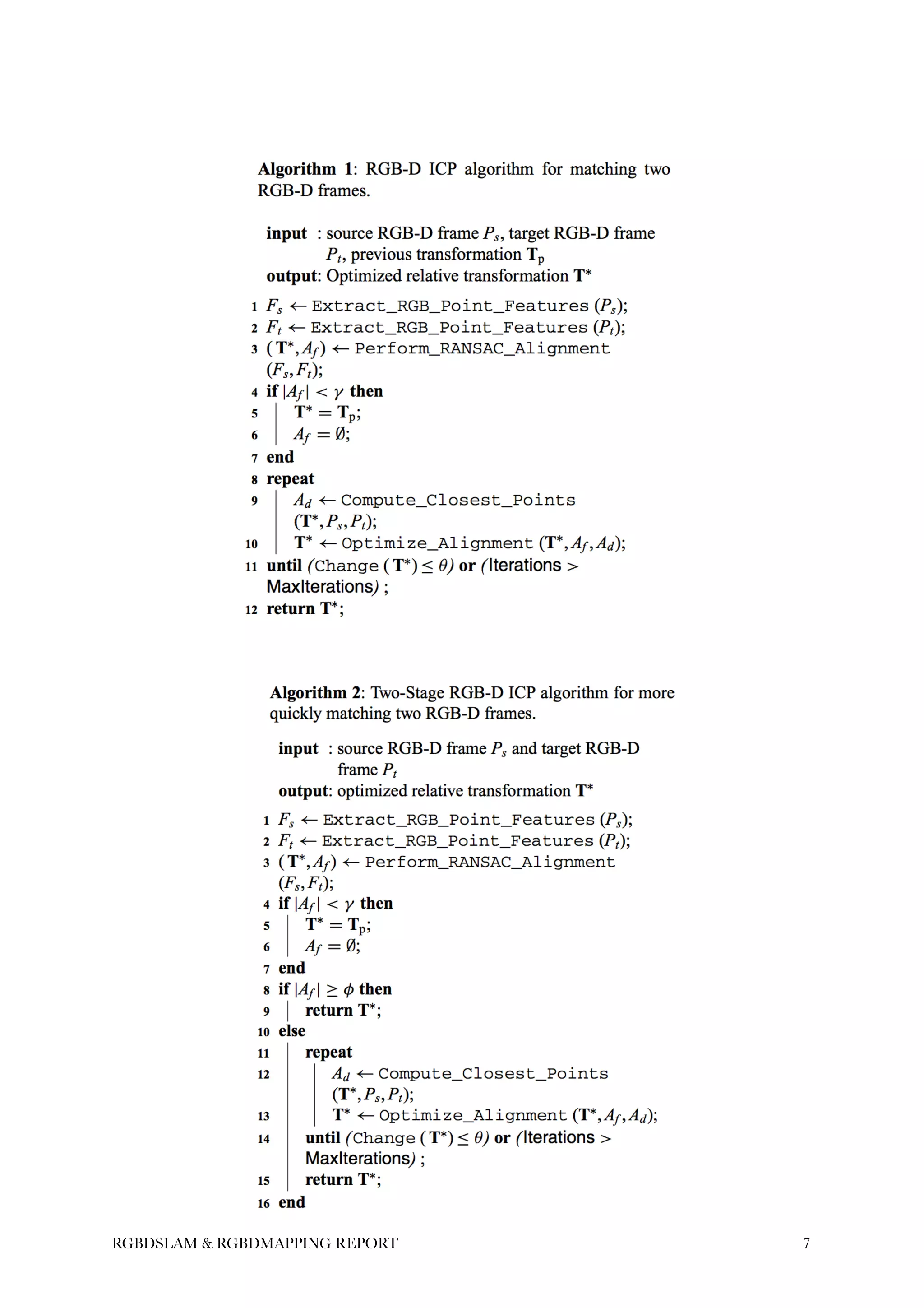

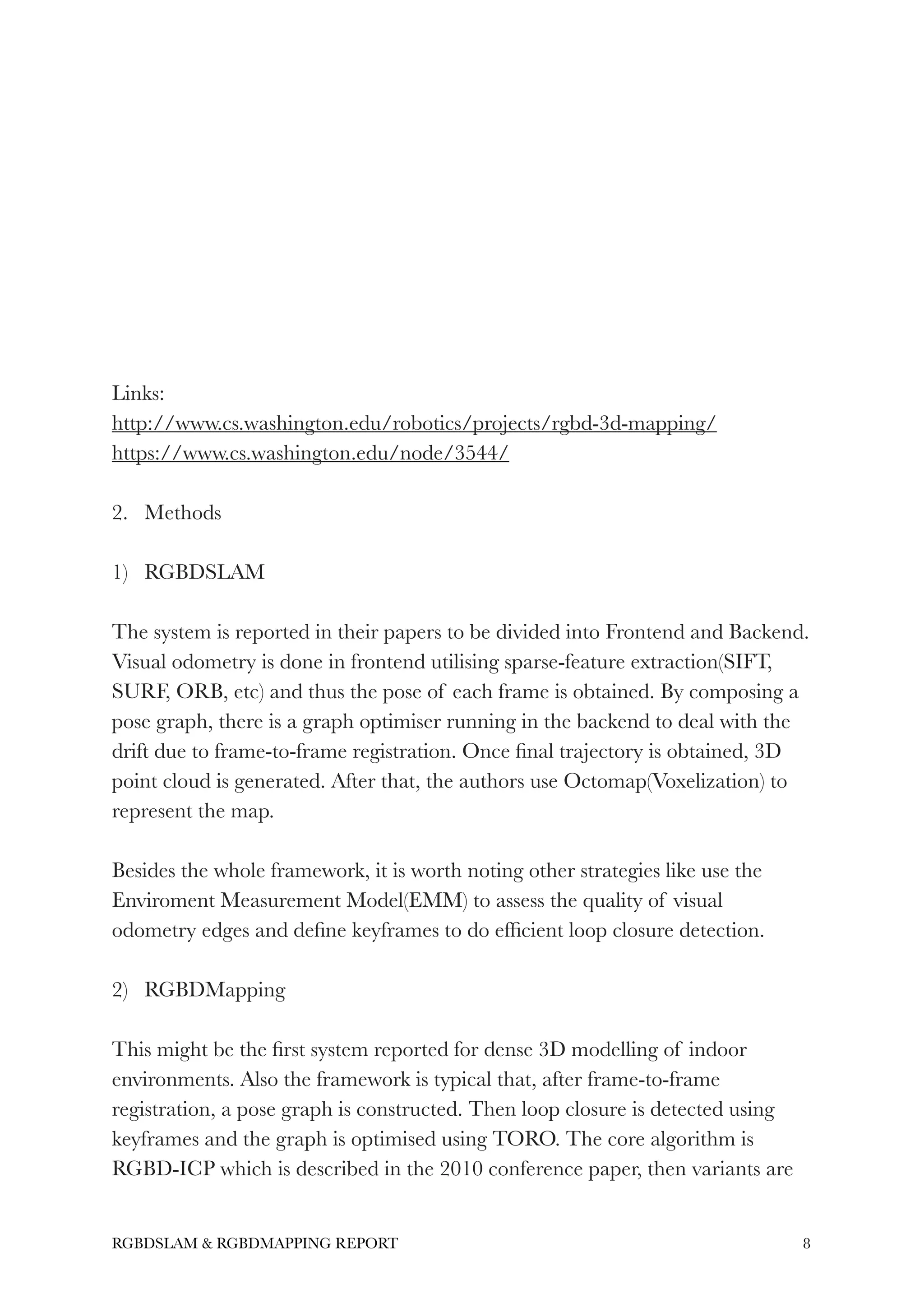

This document summarizes and compares RGBD SLAM and mapping systems, including RGBDSLAM, RGBDMapping, and RTABMAP. These systems typically share one pipeline: frame-to-frame registration, loop closure detection, and pose graph optimization, which together reconstruct a 3D map from RGBD camera data. RGBDSLAM and RGBDMapping produce near real-time results, while RTABMAP offers better performance and memory management. The document recommends RTABMAP as a framework for 3D object modeling because of its experimental results, its timing, and its open source code. Areas for future improvement include quantitative evaluation methods and combining techniques from multiple RGBD systems.
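To make the pipeline concrete, here is a minimal sketch of how chained frame-to-frame estimates drift and how a single loop closure can pull the trajectory back. It is a toy under strong assumptions and is not taken from RGBDSLAM, RGBDMapping, or RTABMAP: poses are 2D translations only (no rotation), all constraints have equal weight, and the function names `chain_poses` and `close_loop` are hypothetical. Under those assumptions, spreading the loop residual equally over all edges is the least-squares solution; real systems estimate full 6-DoF transforms and use a nonlinear graph optimizer instead.

```python
# Toy sketch: translation-only 2D pose graph with one loop closure.
# Assumptions (not from the source): no rotations, equal edge weights,
# hypothetical function names, synthetic odometry values.

def chain_poses(odometry):
    """Accumulate frame-to-frame offsets into absolute positions from the origin."""
    poses = [(0.0, 0.0)]
    for dx, dy in odometry:
        x, y = poses[-1]
        poses.append((x + dx, y + dy))
    return poses


def close_loop(odometry, loop_offset=(0.0, 0.0)):
    """Correct a trajectory given a loop closure: last_pose - first_pose == loop_offset.

    The disagreement between chained odometry and the loop closure is split
    equally across the n odometry edges and the 1 loop-closure edge.
    """
    n = len(odometry)
    end_x, end_y = chain_poses(odometry)[-1]
    # Residual: where odometry says we ended minus where the loop closure says.
    rx = end_x - loop_offset[0]
    ry = end_y - loop_offset[1]
    edges = n + 1  # odometry edges plus the loop-closure edge
    corrected = [(dx - rx / edges, dy - ry / edges) for dx, dy in odometry]
    return chain_poses(corrected)


if __name__ == "__main__":
    # A unit square walked back to the start, with 0.05 of x-drift per step.
    odometry = [(1.05, 0.0), (0.05, 1.0), (-0.95, 0.0), (0.05, -1.0)]
    print("before:", chain_poses(odometry)[-1])
    print("after: ", close_loop(odometry)[-1])
```

The corrected end point does not land exactly on the start, because the loop-closure edge is treated as a measurement with the same uncertainty as each odometry edge and so absorbs its own share of the residual.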