Here are the key steps to create an application from the catalog in the OpenShift web console:

1. Click on "Add to Project" on the top navigation bar and select "Browse Catalog".

2. This will open the catalog page showing available templates. You can search for a template or browse by category.

3. Select the template you want to use, for example Node.js.

4. On the next page you can review the template details and parameters. Fill in any required parameters.

5. Click "Create" to instantiate the template and create the application resources in your current project.

6. OpenShift will then provision the application, including building container images if required.

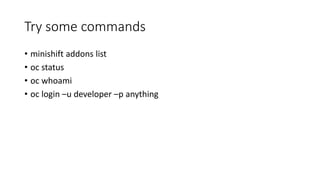

![Using oc cluster up

• Always check the current version of the documentation

• Install docker-ce

• Edit file: /etc/docker/daemon.json

{

“insecure-registries”: [“172.30.0.0/16”]

}

• This is to allow running Docker registry in a private network

• systemctl daemon-reload; systemctl restart docker

• Disable the firewall

• Docker run nginx to create local config to start a random container

• Type sudo oc cluster up, takes about 10-15 minutes

• Check using docker ps

• Shutdown: oc cluster down](https://image.slidesharecdn.com/redhatopenshiftfundamentals-220917195219-cb79388d/85/Red-Hat-Openshift-Fundamentals-pptx-49-320.jpg)

![Create a yaml file to create pod – helloworld.yaml

apiVersion: v1

kind: Pod

metadata:

name: examplepod

spec:

containers:

- name: ubuntu

image: ubuntu:latest

command: [“echo”]

args: [“hello world”]](https://image.slidesharecdn.com/redhatopenshiftfundamentals-220917195219-cb79388d/85/Red-Hat-Openshift-Fundamentals-pptx-60-320.jpg)

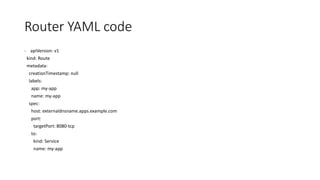

![Creating Routes

• oc expose service my-app –name my-app [--hostname=my-

app.apps.example.com] to create a route on top of an existing service

• Specify DNS name only if this name can be resolved to a wildcard DNS domain

name

• If a DNS name is not specified, a name will be automatically generated

• Alternatively, use oc create combined with a YAML or JSON file

• Note that oc new-app does NOT create a route

• Because you don’t want your newly deployed application automatically

exposed, for security reason

• Use oc delete route to un-expose a service](https://image.slidesharecdn.com/redhatopenshiftfundamentals-220917195219-cb79388d/85/Red-Hat-Openshift-Fundamentals-pptx-93-320.jpg)

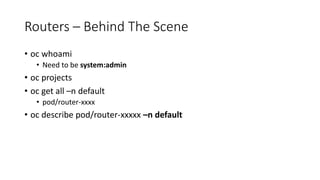

![Try To Create Routes

• oc whoami

• As developer

• oc get all

• Find out what pods and service do we have

• oc expose [servicename]

• oc expose svc/httpd

• oc expose httpd –name httpd

• oc get all

• Now it’s there

• oc describe route [routername]

• oc describe httpd

• Pay attention to Requested Host:

• Endpoints: -> how we get to the Pod](https://image.slidesharecdn.com/redhatopenshiftfundamentals-220917195219-cb79388d/85/Red-Hat-Openshift-Fundamentals-pptx-96-320.jpg)