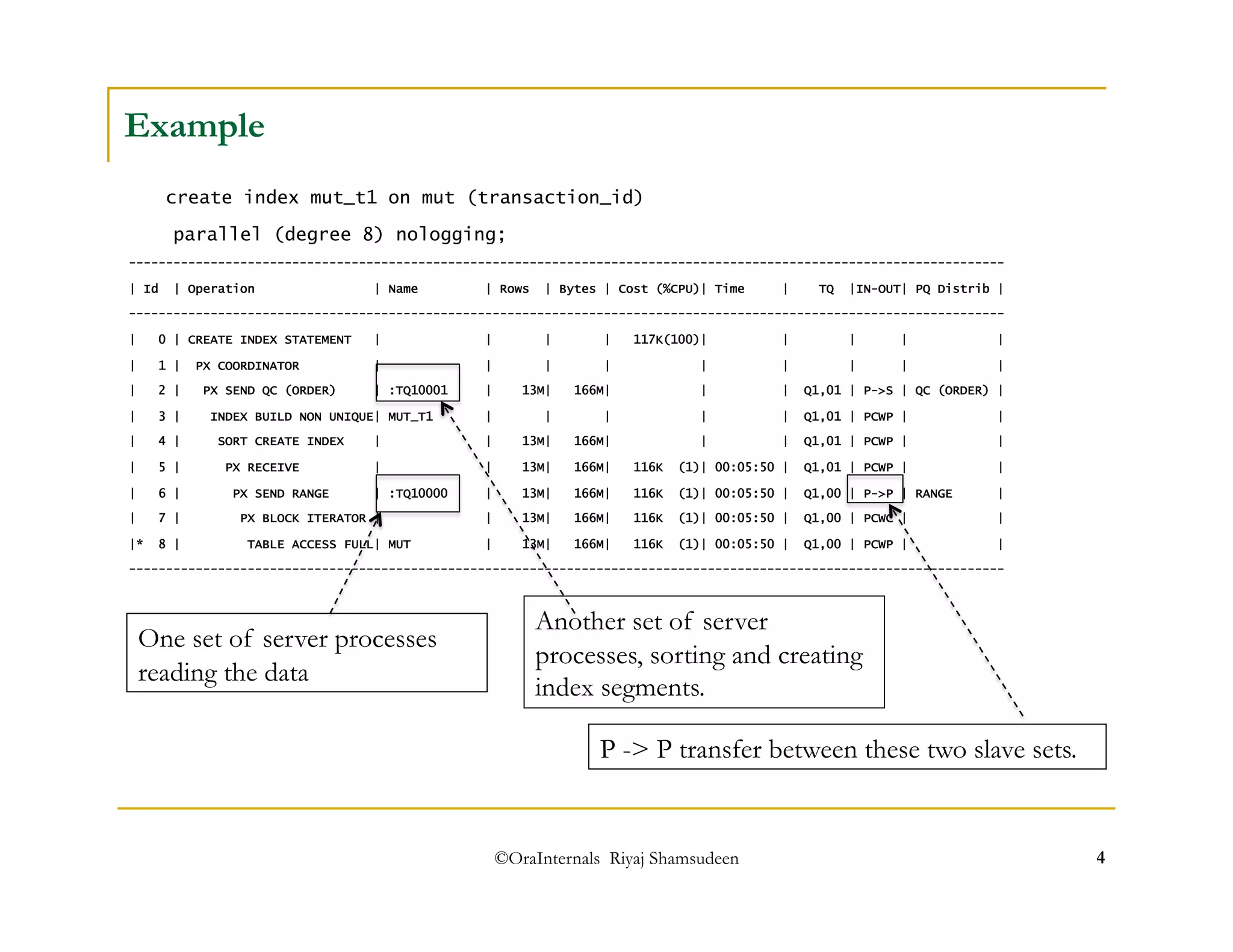

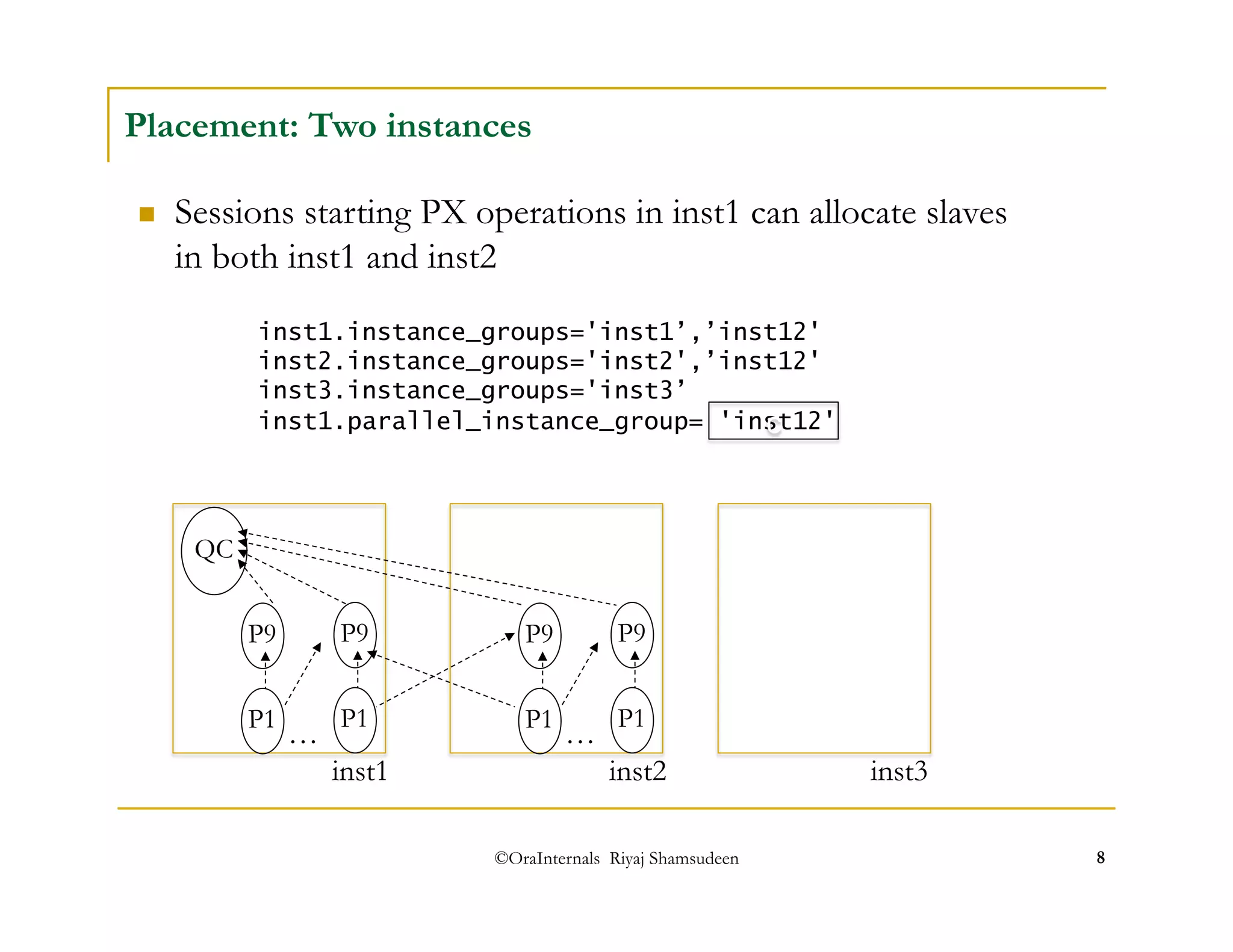

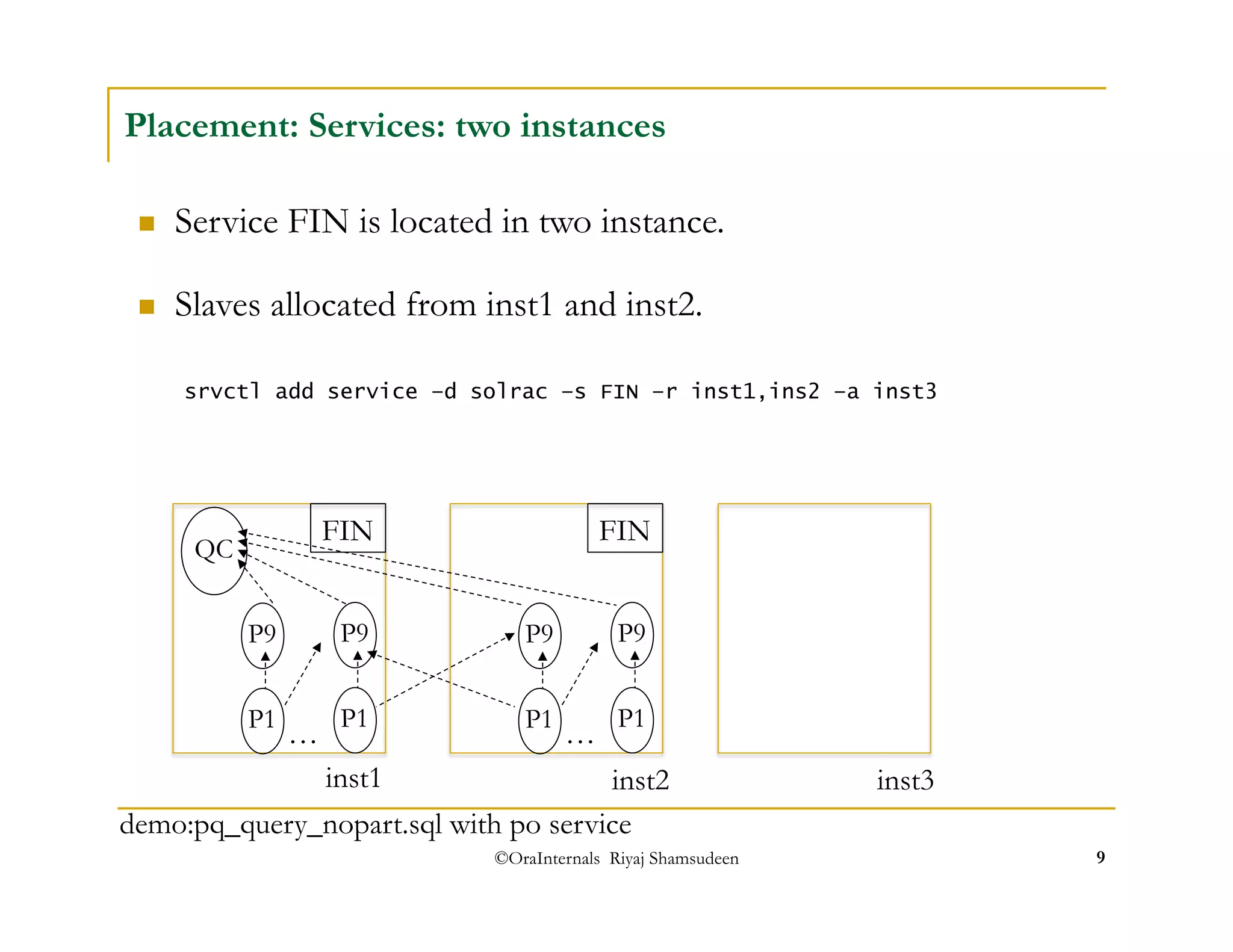

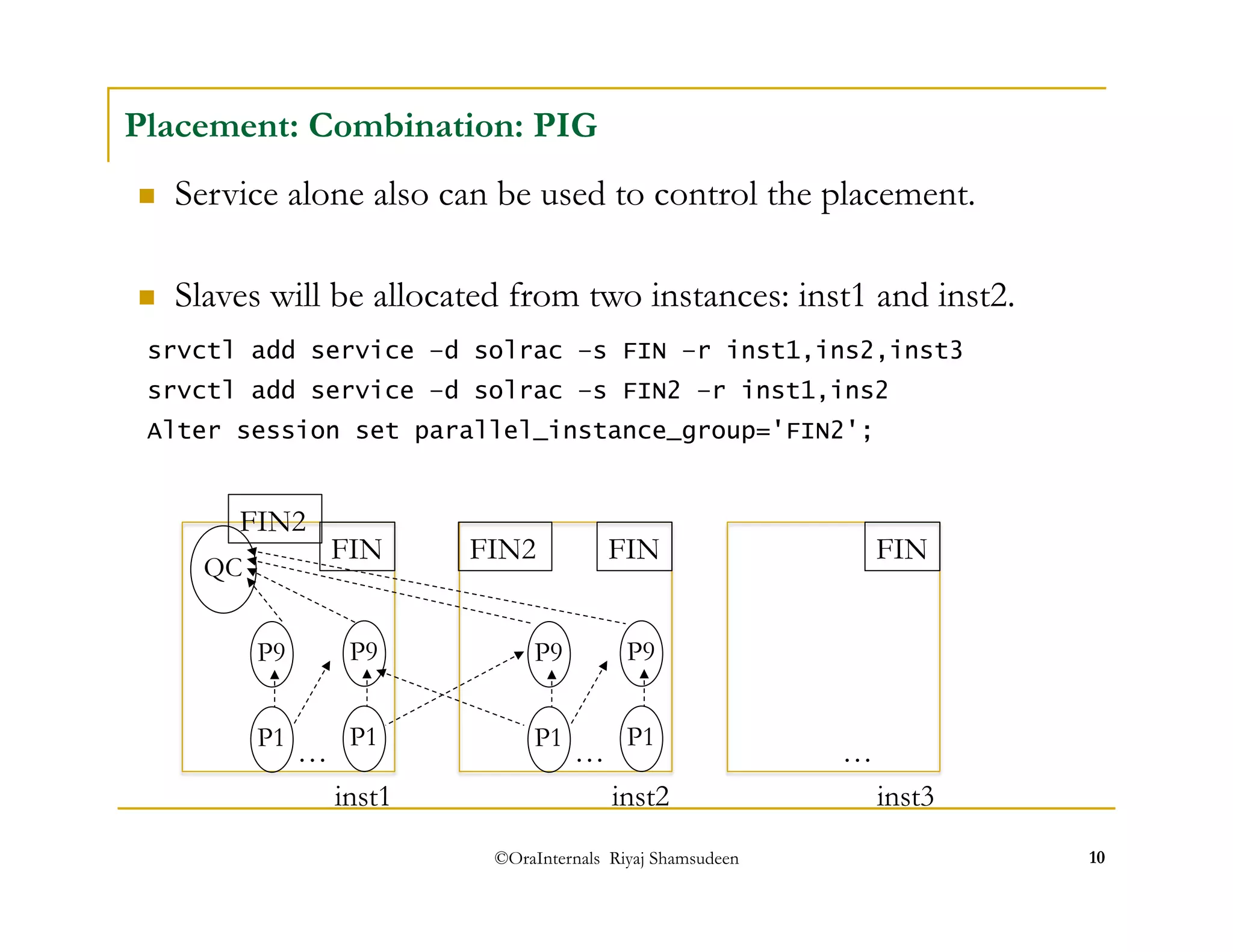

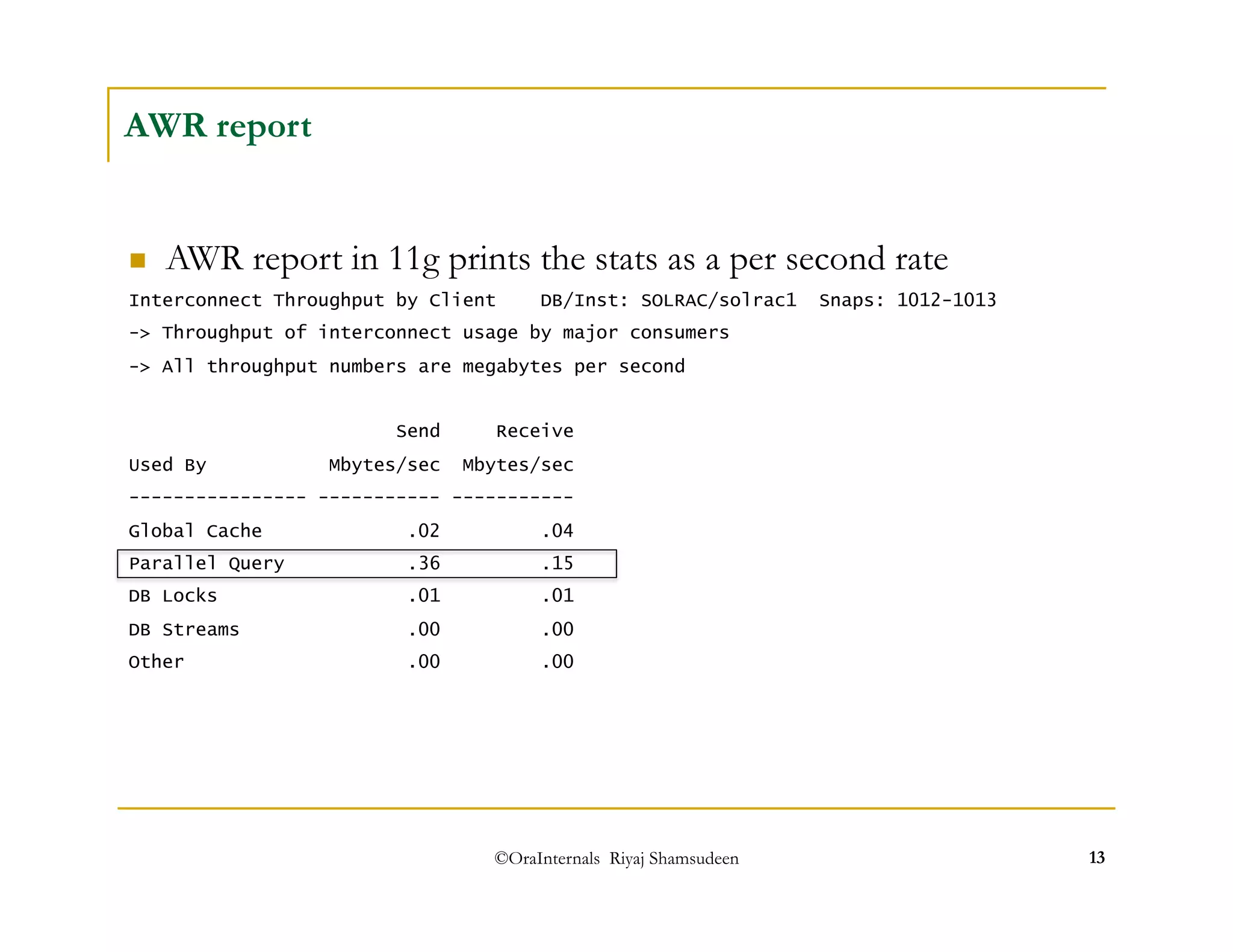

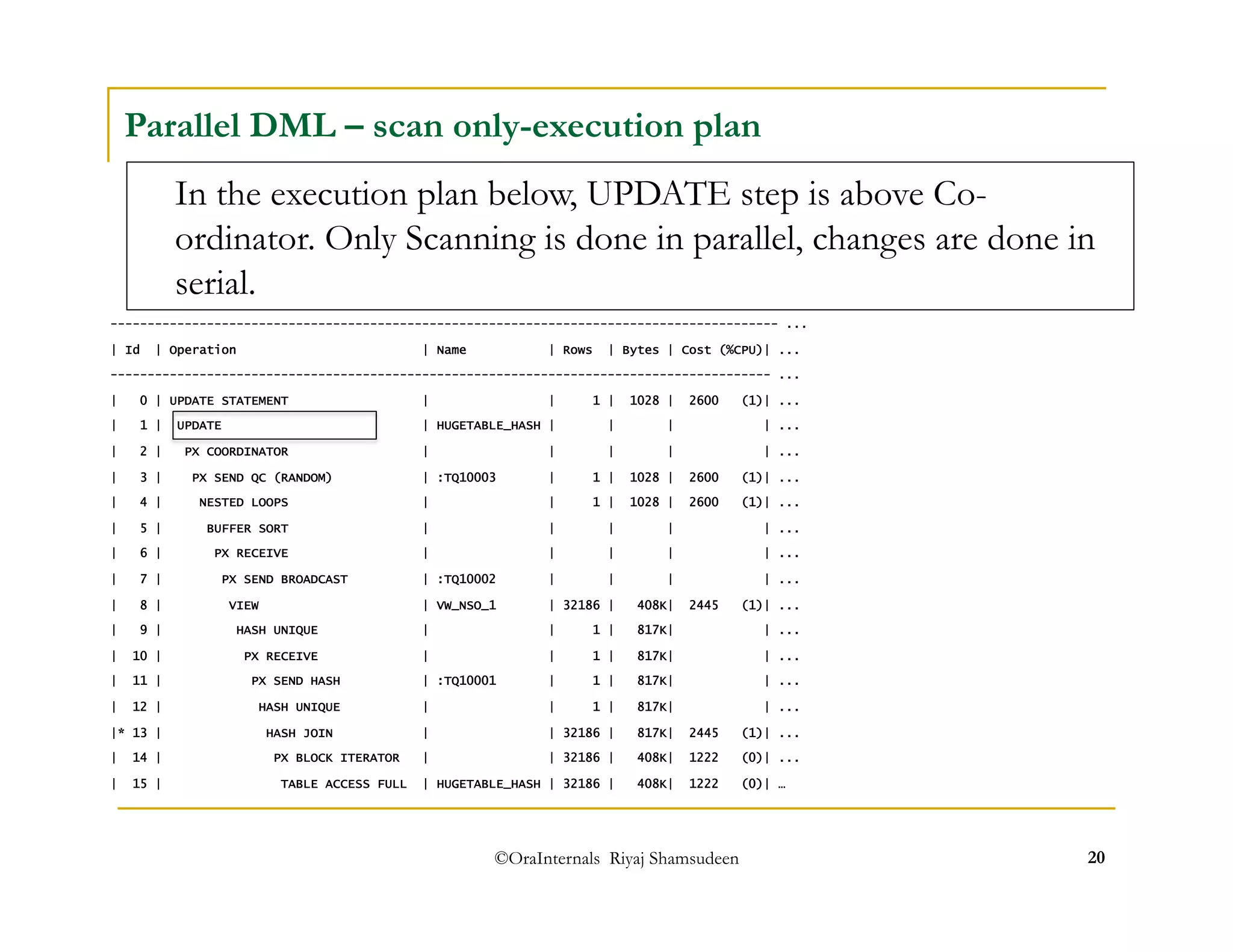

This document covers administering parallel execution in Oracle databases. It describes how a parallel query hands work to slave processes that may run on several instances, and how the placement of those slaves can be controlled with services or parallel instance groups. An example execution plan shows slaves performing different tasks, such as scanning and sorting. The document also covers best practices, new features in Oracle 11g such as parallel statement queueing, and how parallel DML works.
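
As a rough illustration of the topics listed above, the following sketch shows session-level settings that touch each one. It is not taken from the document itself. The service name `etl_svc`, the table `sales`, and its columns are hypothetical, and the sketch assumes a release in which `PARALLEL_INSTANCE_GROUP` accepts a service name and `PARALLEL_DEGREE_POLICY` is available (11g Release 2).

```sql
-- Hypothetical names: service etl_svc, table sales, columns cust_id and amount_sold.

-- Restrict this session's slaves to the instances that offer the service.
ALTER SESSION SET parallel_instance_group = 'etl_svc';

-- Opt in to automatic degree of parallelism, which also enables
-- parallel statement queueing (assumes 11g Release 2).
ALTER SESSION SET parallel_degree_policy = AUTO;

-- Display the plan for a parallel scan followed by a sort.
EXPLAIN PLAN FOR
  SELECT /*+ PARALLEL(s 4) */ cust_id, amount_sold
  FROM   sales s
  ORDER  BY amount_sold;

SELECT * FROM TABLE(DBMS_XPLAN.DISPLAY);

-- Parallel DML is off by default and must be enabled in the session
-- before the statement runs.
ALTER SESSION ENABLE PARALLEL DML;
UPDATE /*+ PARALLEL(s 4) */ sales s SET amount_sold = amount_sold * 1.1;
COMMIT;
```

In the resulting plan, the `PX` operations indicate which steps are carried out by slaves and which by the query coordinator. The exact plan shape depends on the release, the statistics, and the degree of parallelism actually granted.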