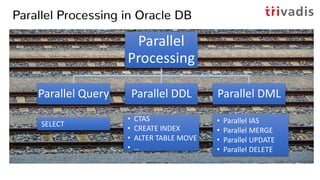

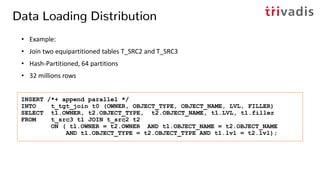

- Used properly, parallel DML (PDML) can speed up ETL by spreading the work of a single statement across multiple CPUs/cores.

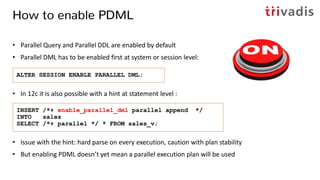

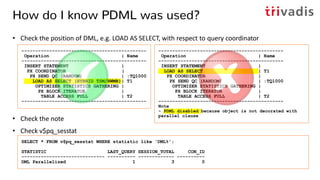

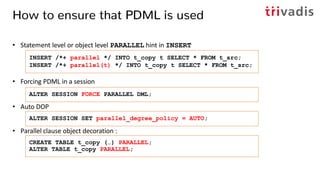

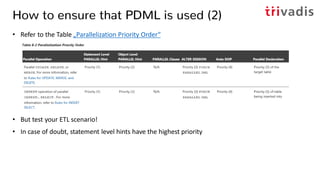

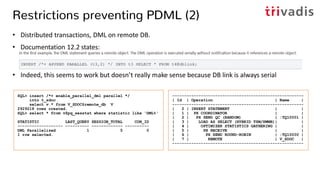

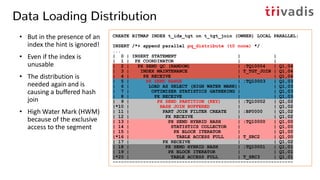

- PDML is not on by default: it must be enabled at the system, session, or statement level. Additional steps, such as setting a degree of parallelism on the target table or adding a `PARALLEL` hint, may be needed before the optimizer chooses a parallel plan.
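
As a minimal sketch of the session-level and statement-level options, the statements below enable PDML and then check whether the plan really is parallel. The table names `sales_staging` and `sales_target` and the degree of parallelism of 4 are assumptions chosen for illustration; the `ENABLE_PARALLEL_DML` hint is available from Oracle Database 12c.

```sql
-- Hypothetical tables: sales_staging (source) and sales_target (destination).

-- Option 1: enable PDML for the rest of the session.
ALTER SESSION ENABLE PARALLEL DML;

-- Option 2 (Oracle Database 12c and later): enable PDML for one statement only.
INSERT /*+ ENABLE_PARALLEL_DML APPEND PARALLEL(t, 4) */ INTO sales_target t
SELECT /*+ PARALLEL(s, 4) */ * FROM sales_staging s;
COMMIT;

-- Check the plan before running a large load.
EXPLAIN PLAN FOR
INSERT /*+ ENABLE_PARALLEL_DML APPEND PARALLEL(t, 4) */ INTO sales_target t
SELECT /*+ PARALLEL(s, 4) */ * FROM sales_staging s;

SELECT * FROM TABLE(DBMS_XPLAN.DISPLAY);
```

In the plan output, a `LOAD AS SELECT` step that sits below `PX COORDINATOR` indicates that the insert itself runs in parallel. If it sits above `PX COORDINATOR`, only the query part is parallel and the load is serial.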

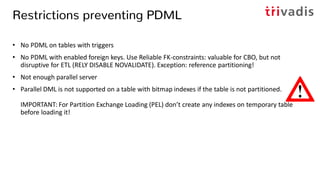

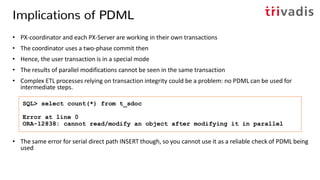

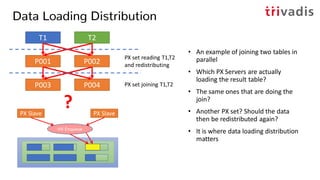

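- Considerations when using PDML include the number of available parallel servers, restrictions such as triggers or foreign keys on the target table, and transactional implications: a table modified by PDML cannot be read or modified again in the same transaction until it commits or rolls back.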
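
These considerations can be checked before a load with a few dictionary queries. This is a sketch only: `SALES_TARGET` and `sales_staging` are hypothetical names, and reading `v$parameter` assumes the session has the necessary privileges.

```sql
-- How many parallel servers the instance is configured to provide.
SELECT name, value
FROM   v$parameter
WHERE  name IN ('parallel_max_servers', 'parallel_servers_target');

-- Triggers on the (hypothetical) target table.
SELECT trigger_name, status
FROM   user_triggers
WHERE  table_name = 'SALES_TARGET';

-- Foreign key (referential) constraints on the target table.
SELECT constraint_name, status
FROM   user_constraints
WHERE  table_name = 'SALES_TARGET'
AND    constraint_type = 'R';

-- Transaction behavior: the loaded table is off limits until the transaction ends.
ALTER SESSION ENABLE PARALLEL DML;

INSERT /*+ APPEND PARALLEL(t, 4) */ INTO sales_target t
SELECT * FROM sales_staging;

-- Querying sales_target at this point would raise ORA-12838.
COMMIT;

SELECT COUNT(*) FROM sales_target;  -- allowed again after the commit
```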

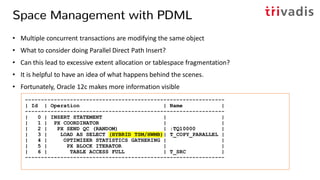

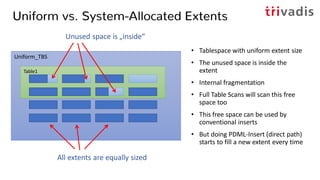

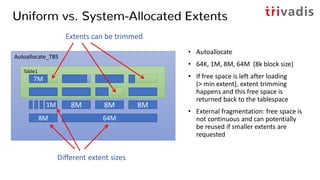

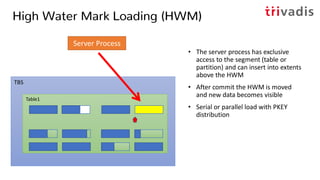

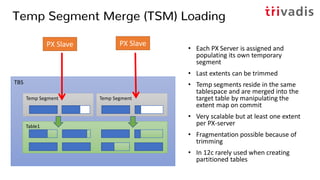

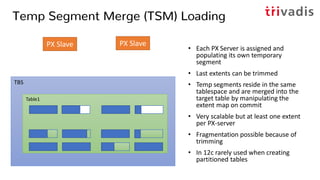

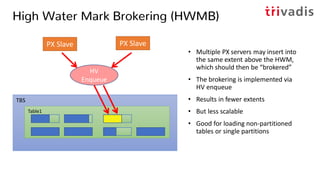

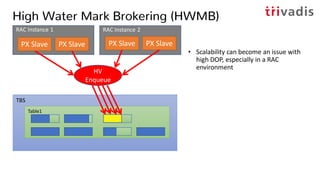

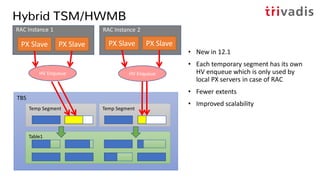

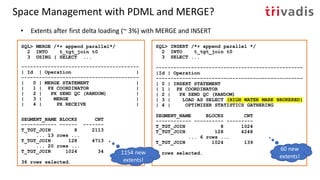

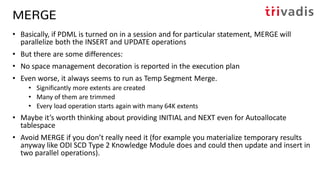

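- Oracle uses different space-management methods for parallel data loads, such as high water mark (HWM), temp segment merge (TSM), and high water mark brokering (HWMB); the method used affects extent allocation and fragmentation.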
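
One way to observe the effect on extent allocation is to compare the target segment's extents before and after a load. The query below is a sketch that assumes a hypothetical table named `SALES_TARGET` owned by the current user.

```sql
-- Summarize the extents allocated to the (hypothetical) target table.
SELECT COUNT(*)                        AS extent_count,
       ROUND(SUM(bytes) / 1024 / 1024) AS size_mb,
       MIN(bytes)                      AS smallest_extent_bytes,
       MAX(bytes)                      AS largest_extent_bytes
FROM   user_extents
WHERE  segment_name = 'SALES_TARGET';
```

A large number of small extents after a parallel load can be a sign of fragmentation. In recent releases the execution plan usually names the load method next to the `LOAD AS SELECT` operation, though the exact wording depends on the database version.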

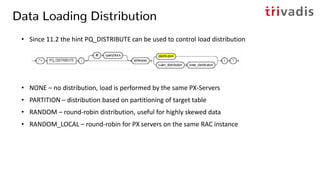

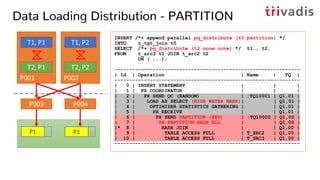

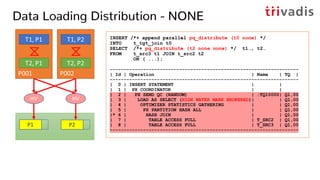

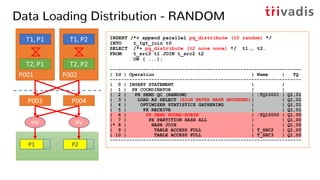

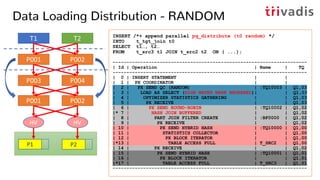

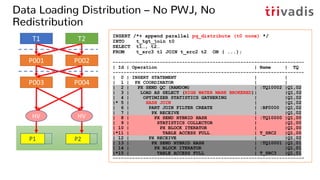

- The PQ_DISTRIBUTE hint controls how rows are distributed among parallel servers during the load to optimize performance and scalability.
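
For illustration, a load that uses the hint might look like the sketch below. The table names and the degree of parallelism are assumptions; for loads, the documented distribution values are `NONE`, `PARTITION`, `RANDOM`, and `RANDOM_LOCAL`, and the best choice depends on how the target table is partitioned and how skewed the data is.

```sql
-- Hypothetical tables: sales_staging (source) and sales_target (destination).
ALTER SESSION ENABLE PARALLEL DML;

-- NONE: rows are not redistributed; each parallel server loads the rows it produced.
INSERT /*+ APPEND PARALLEL(t, 4) PQ_DISTRIBUTE(t, NONE) */ INTO sales_target t
SELECT /*+ PARALLEL(s, 4) */ * FROM sales_staging s;
COMMIT;

-- PARTITION: rows are redistributed so that each parallel server
-- loads only the partitions assigned to it.
INSERT /*+ APPEND PARALLEL(t, 4) PQ_DISTRIBUTE(t, PARTITION) */ INTO sales_target t
SELECT /*+ PARALLEL(s, 4) */ * FROM sales_staging s;
COMMIT;
```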