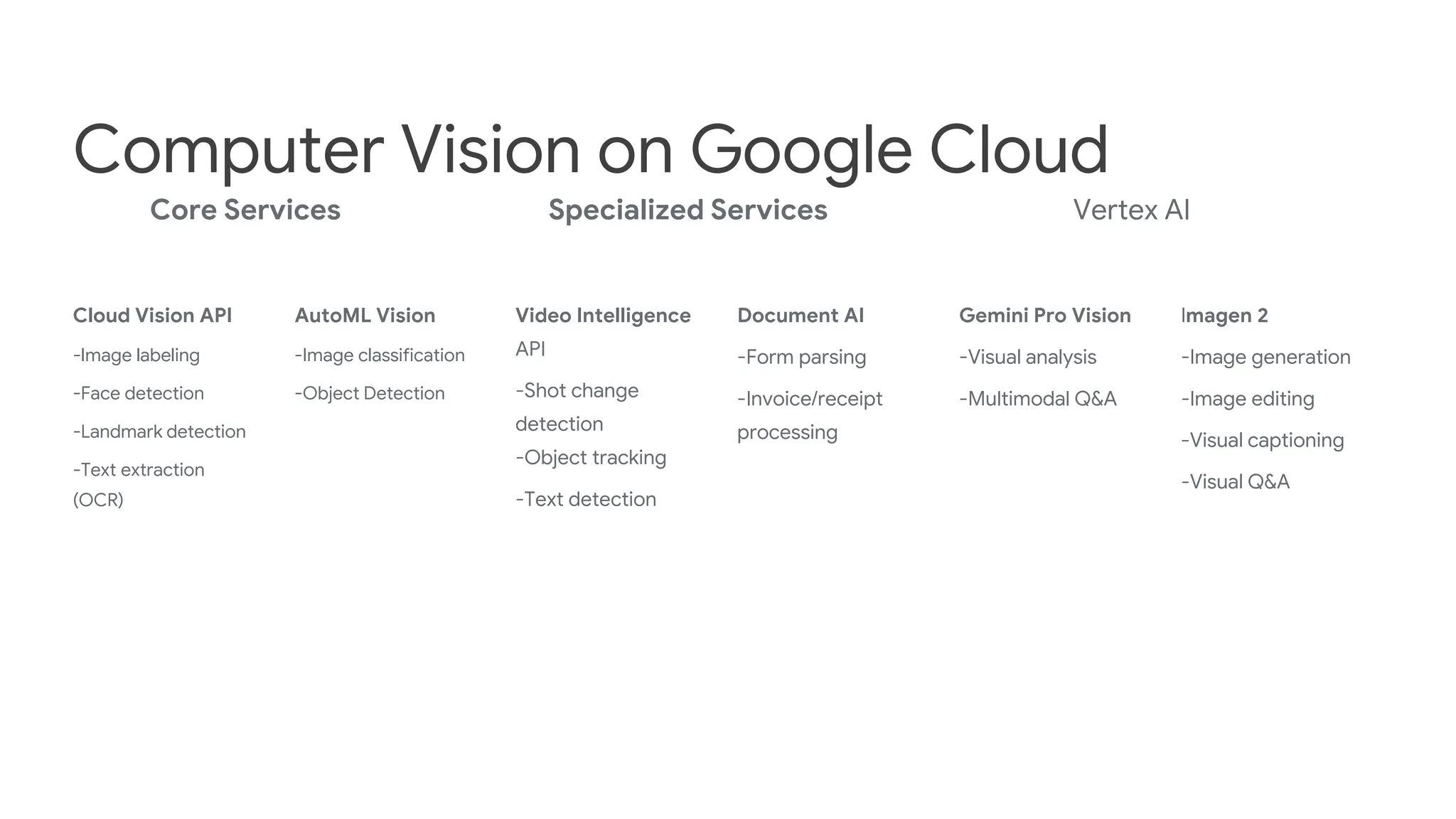

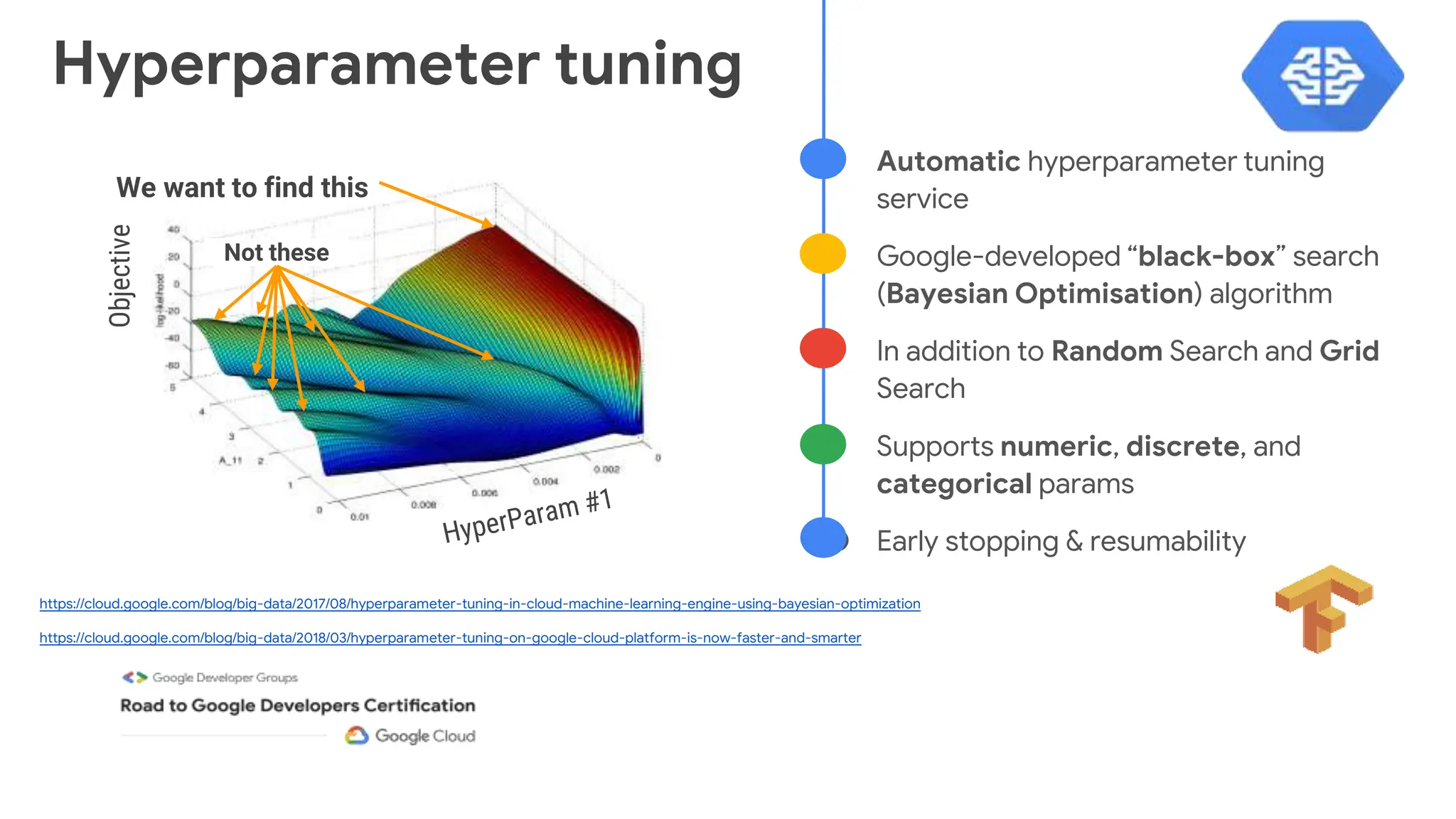

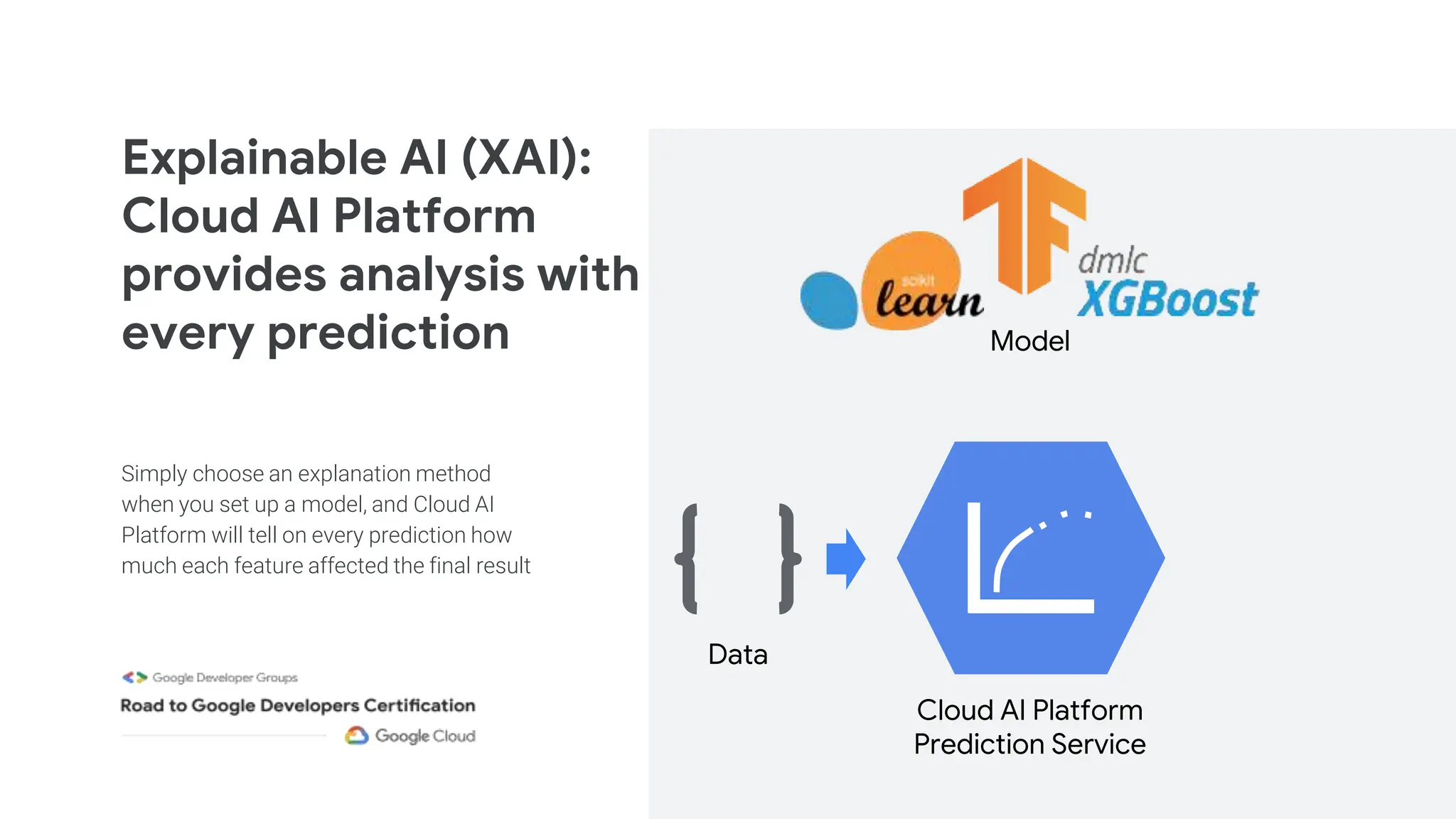

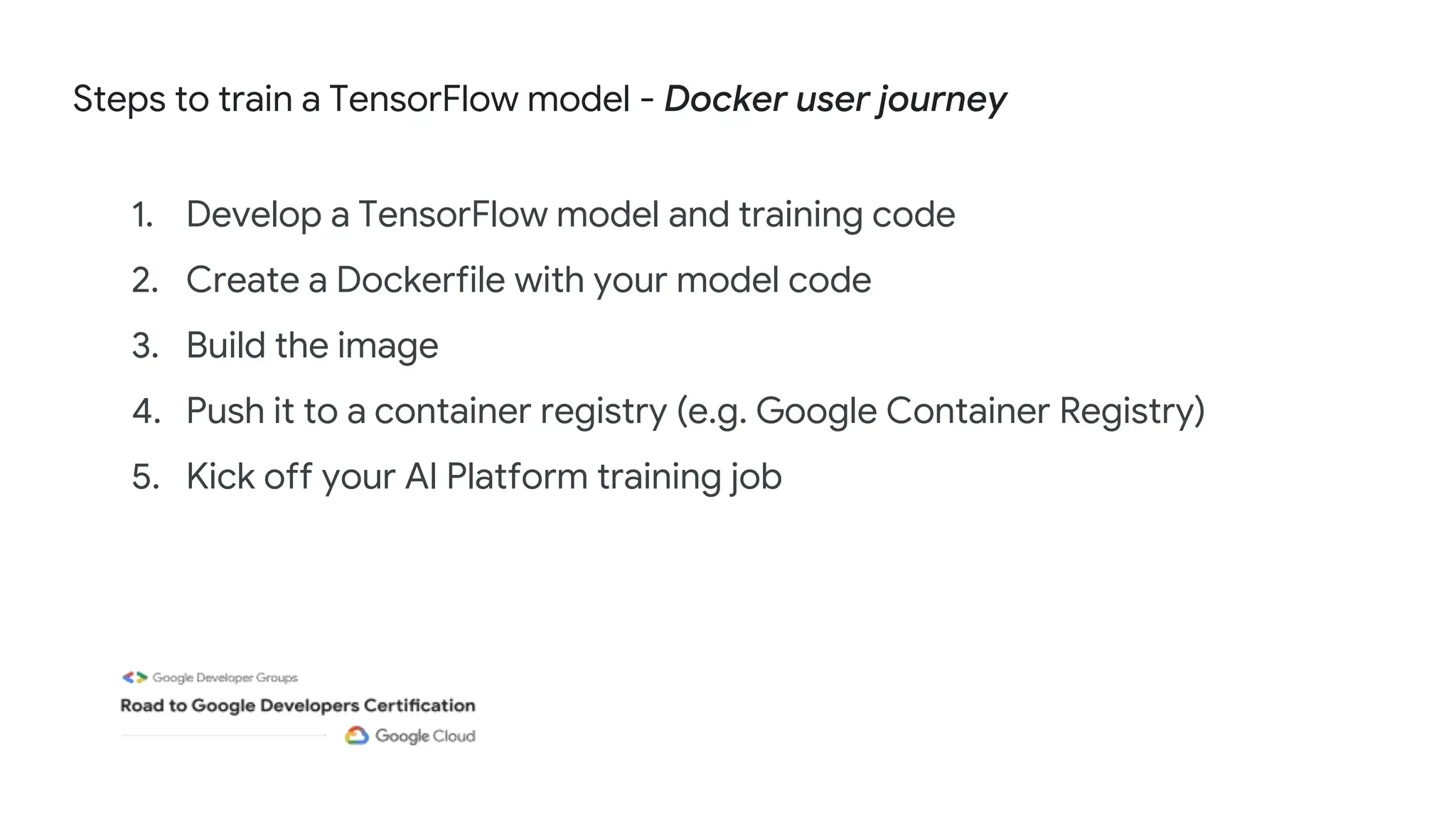

The document outlines a comprehensive learning journey for professional machine learning engineers, organized by Google Developer Groups, focusing on various aspects of machine learning and Google Cloud tools. It includes a series of virtual sessions, hands-on labs, and courses covering topics such as computer vision, natural language processing, and model deployment. Additional details on training, hyperparameter tuning, and explainable AI are provided to enhance understanding and practical skills.

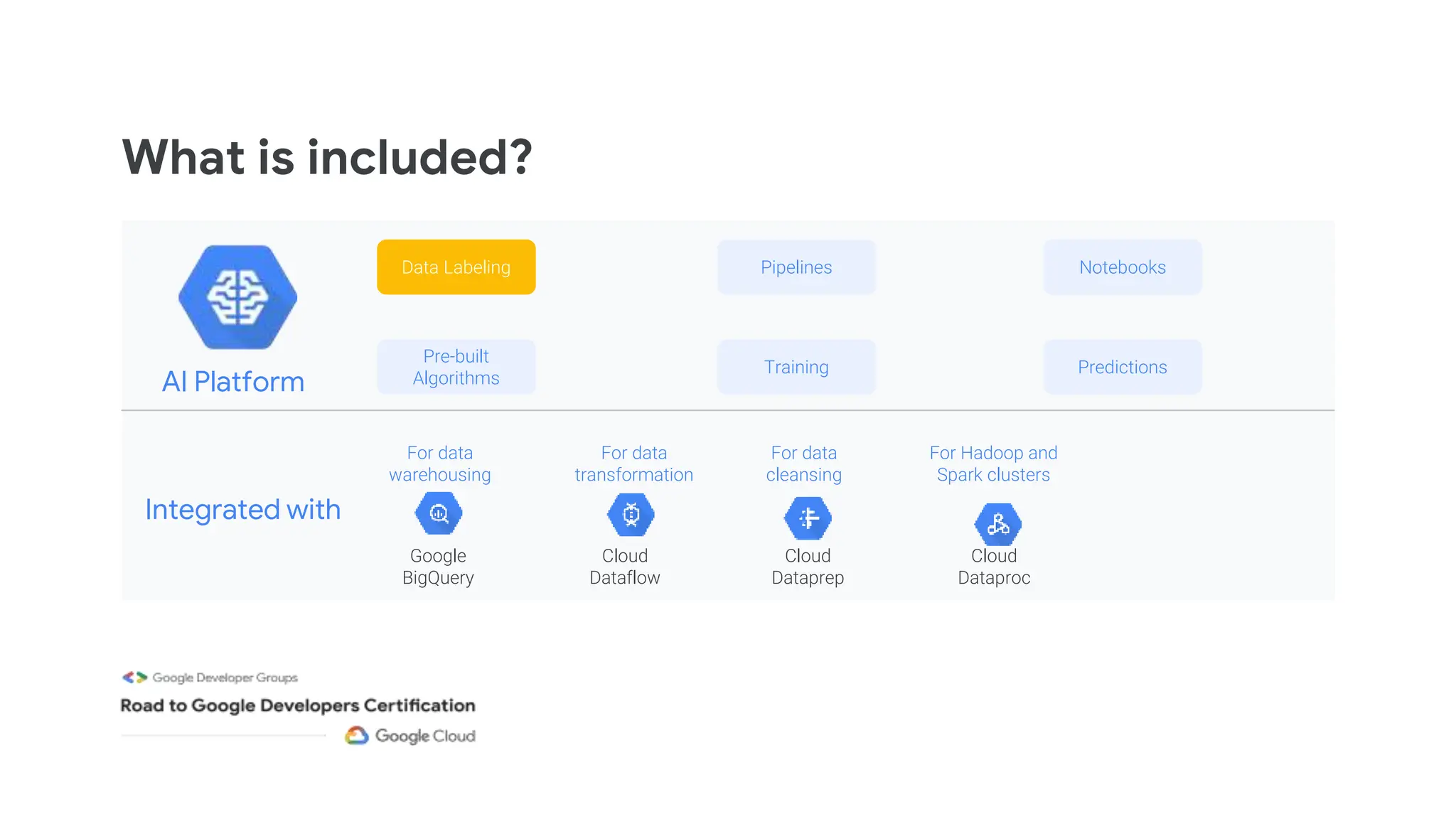

![Predicting](https://image.slidesharecdn.com/2024-03-16session4-pmle-240318055518-fd260c19/75/Production-ML-Systems-and-Computer-Vision-with-Google-Cloud-53-2048.jpg)

Online prediction*:

    POST https://ml.googleapis.com/v1/projects/your-project-id/models/${model-name}/versions/${version}:predict

Request body:

    {
      "instances": [
        [0.0, 1.1, 2.2],
        [3.3, 4.4, 5.5],
        ...
      ]
    }

Batch prediction*:

    gcloud ai-platform jobs submit prediction $JOB_NAME \
        --model $NAME \
        --version $VERSION \
        --data-format TEXT \
        --input-paths $GCS_DATA_DIR \
        --output-path $GCS_OUT_DIR

*gcloud commands and APIs exist for both methods.
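
The two request shapes on this slide can be sketched in Python. This is a minimal illustration, not the deck's own code: the helper names `build_online_request` and `to_batch_lines`, the model name `my_model`, and the version `v1` are hypothetical. It also assumes that `--data-format TEXT` expects newline-delimited JSON, one instance per line. Nothing is sent over the network, and a real call would also need credentials.

```python
import json

# Base endpoint and path layout copied from the slide.
API_ROOT = "https://ml.googleapis.com/v1"


def build_online_request(project_id, model_name, version, instances):
    """Return (url, body) for an online prediction call; nothing is sent."""
    url = (
        f"{API_ROOT}/projects/{project_id}"
        f"/models/{model_name}/versions/{version}:predict"
    )
    body = json.dumps({"instances": instances})
    return url, body


def to_batch_lines(instances):
    """Serialize instances as newline-delimited JSON (assumed TEXT format)."""
    return "\n".join(json.dumps(instance) for instance in instances)


# The two example rows shown in the slide's request body.
instances = [[0.0, 1.1, 2.2], [3.3, 4.4, 5.5]]

# "my_model" and "v1" are placeholder names for illustration only.
url, body = build_online_request("your-project-id", "my_model", "v1", instances)
print(url)
print(body)
print(to_batch_lines(instances))
```

The online request wraps all rows in a single `instances` array, while the batch input puts each row on its own line in a file under the path passed to `--input-paths`.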

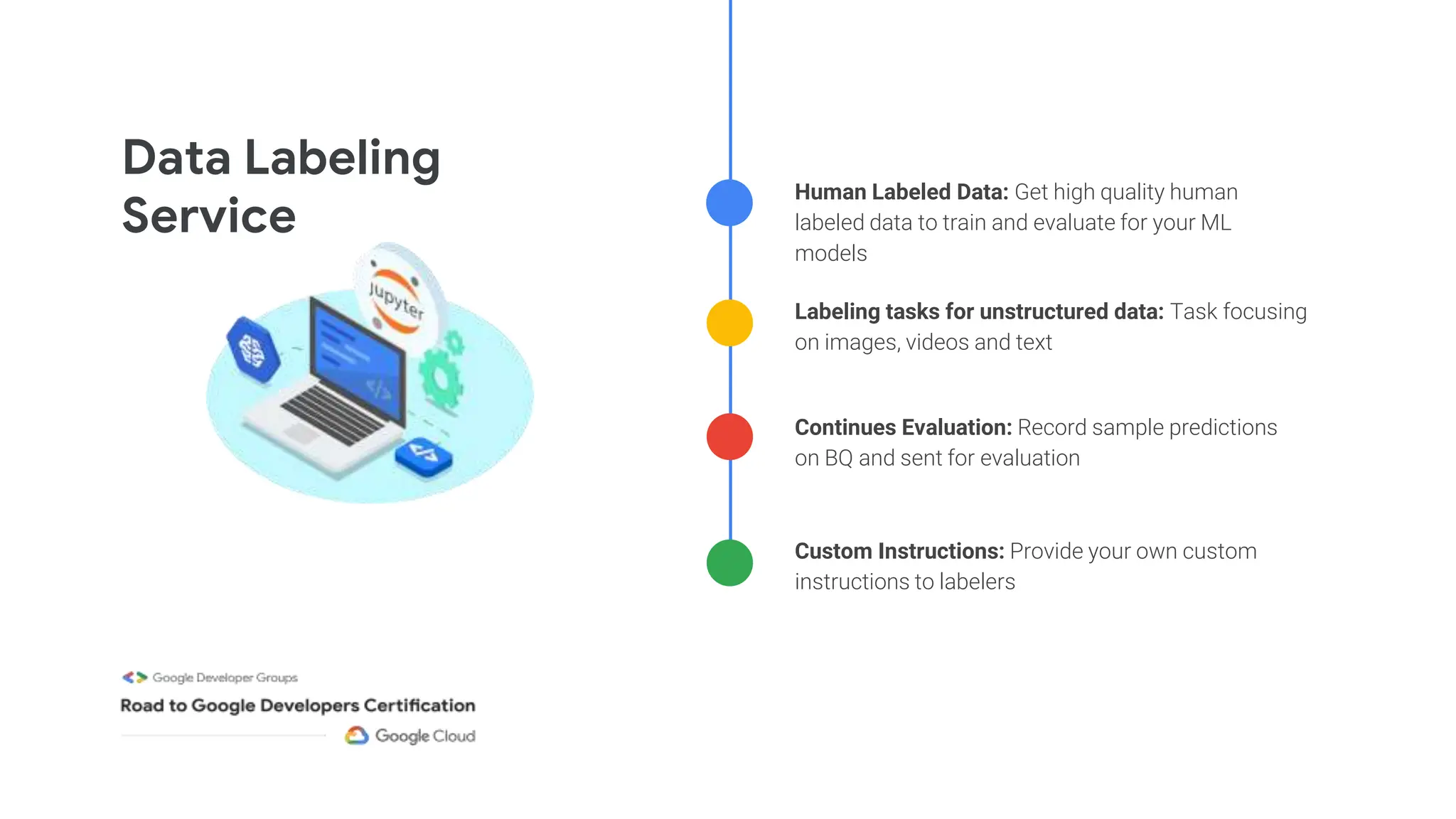

![First: Create your model and training code](https://image.slidesharecdn.com/2024-03-16session4-pmle-240318055518-fd260c19/75/Production-ML-Systems-and-Computer-Vision-with-Google-Cloud-59-2048.jpg)

model.py:

    import tensorflow as tf
    from tensorflow.keras.layers import Dense

    # input_dim (the number of input features) is assumed to be defined
    # elsewhere in model.py.
    model = tf.keras.Sequential(
        [
            Dense(100, activation="relu", input_shape=(input_dim,)),
            Dense(75, activation="relu"),
            Dense(50, activation="relu"),
            Dense(25, activation="relu"),
            Dense(1, activation="sigmoid"),
        ])

task.py:

    # Train model
    # keras_model, the datasets, args, and the callbacks are assumed to be
    # defined earlier in task.py.
    keras_model.fit(
        training_dataset,
        steps_per_epoch=int(num_train_examples / args.batch_size),
        epochs=args.num_epochs,
        validation_data=validation_dataset,
        validation_steps=1,
        verbose=1,
        callbacks=[lr_decay_cb, tensorboard_cb])

Sample code showing this structure is in the cloudml-samples repo.
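
The `fit()` call on this slide reads `args.batch_size` and `args.num_epochs` and derives `steps_per_epoch` from the dataset size. The sketch below shows one way those values could be produced, using only the standard library. The flag names, their defaults, and the dataset size of 1050 are assumptions for illustration; the slide shows only the attribute names.

```python
import argparse


def parse_args(argv=None):
    """Parse the two hyperparameters that the fit() call reads from args."""
    parser = argparse.ArgumentParser()
    # Flag names and defaults are assumptions; only args.batch_size and
    # args.num_epochs appear on the slide.
    parser.add_argument("--batch-size", type=int, default=128)
    parser.add_argument("--num-epochs", type=int, default=20)
    return parser.parse_args(argv)


def steps_per_epoch(num_train_examples, batch_size):
    """Same expression as the slide: whole batches only, remainder dropped."""
    return int(num_train_examples / batch_size)


args = parse_args(["--batch-size", "100", "--num-epochs", "5"])
num_train_examples = 1050  # hypothetical dataset size
steps = steps_per_epoch(num_train_examples, args.batch_size)
print(steps, args.num_epochs)
```

Because `int()` truncates, the last partial batch does not count as a step: 1050 examples at a batch size of 100 give 10 steps per epoch, not 11.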

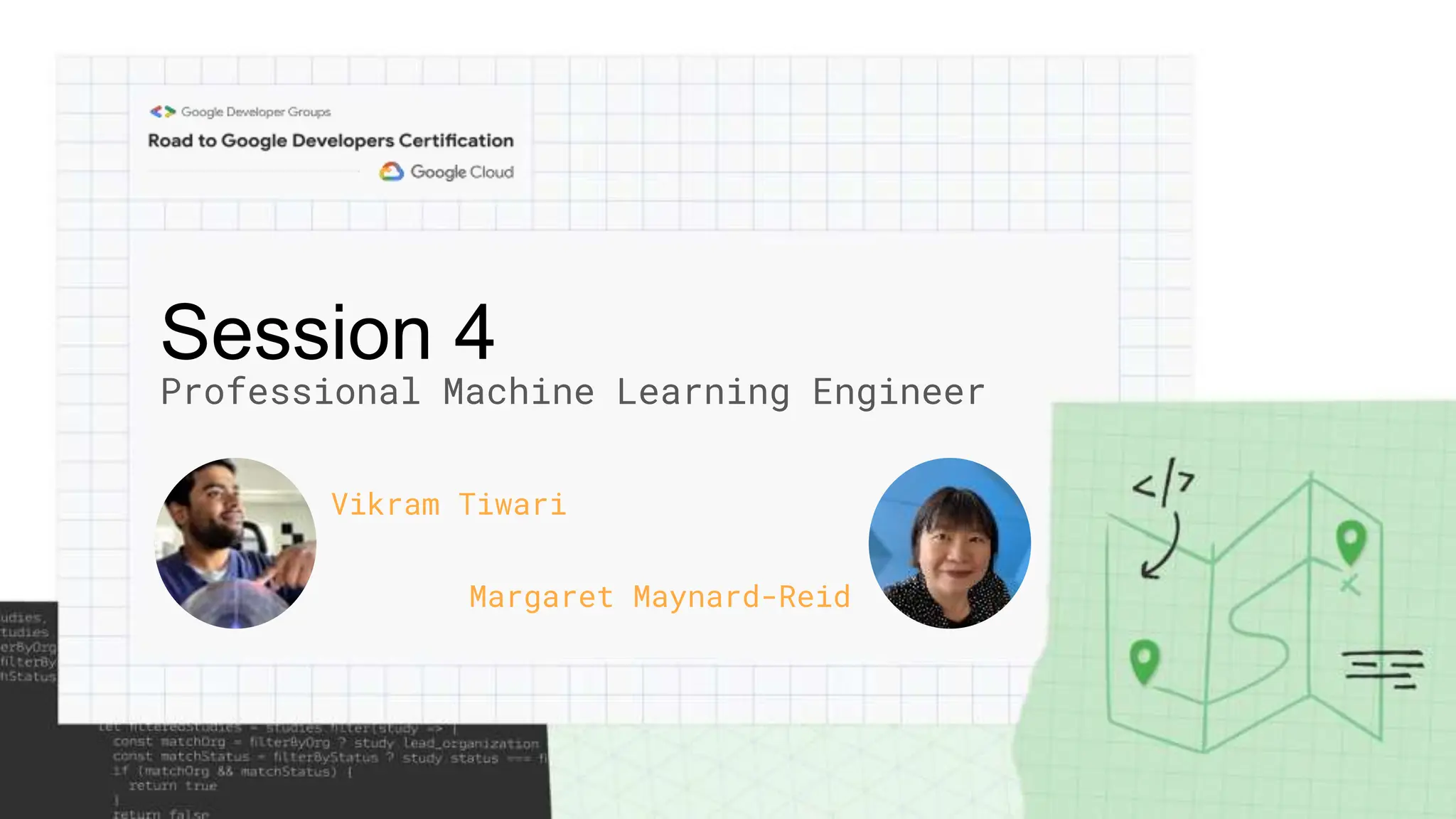

![Second: Create your Dockerfile](https://image.slidesharecdn.com/2024-03-16session4-pmle-240318055518-fd260c19/75/Production-ML-Systems-and-Computer-Vision-with-Google-Cloud-60-2048.jpg)

    # Extend DLVM image
    FROM gcr.io/deeplearning-platform-release/tf2-ent-latest-gpu

    WORKDIR /root

    COPY model.py /root/model.py
    COPY task.py /root/task.py

    ENTRYPOINT ["python", "task.py"]
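
The `ENTRYPOINT` on this slide uses Docker's exec form, so any arguments supplied when the container starts are appended to `python task.py`. The short Python sketch below only models that concatenation and does not invoke Docker. The helper name `container_command` and the two flags are hypothetical, since the slide does not show the argument parser in `task.py`.

```python
import shlex

# Exec-form ENTRYPOINT copied from the Dockerfile on the slide.
ENTRYPOINT = ["python", "task.py"]


def container_command(extra_args):
    """Return the argv the container runs: ENTRYPOINT plus run-time args."""
    return ENTRYPOINT + list(extra_args)


# Hypothetical flags; the slide does not show task.py's argument parser.
argv = container_command(["--batch-size", "128", "--num-epochs", "20"])
print(shlex.join(argv))
```

This is why the same image can be reused across training runs: the hyperparameters travel as container arguments, so the image does not need rebuilding to change them.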