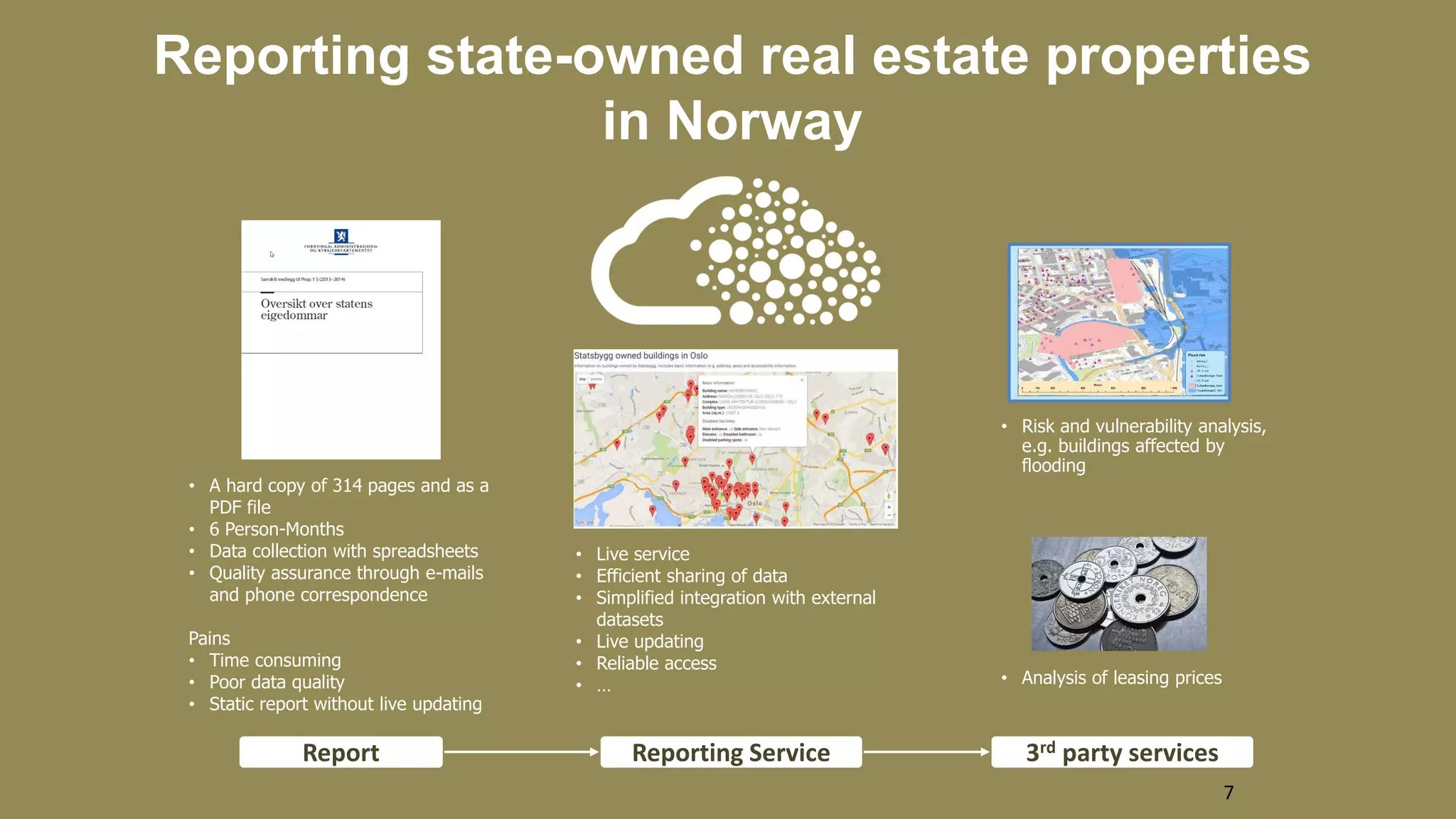

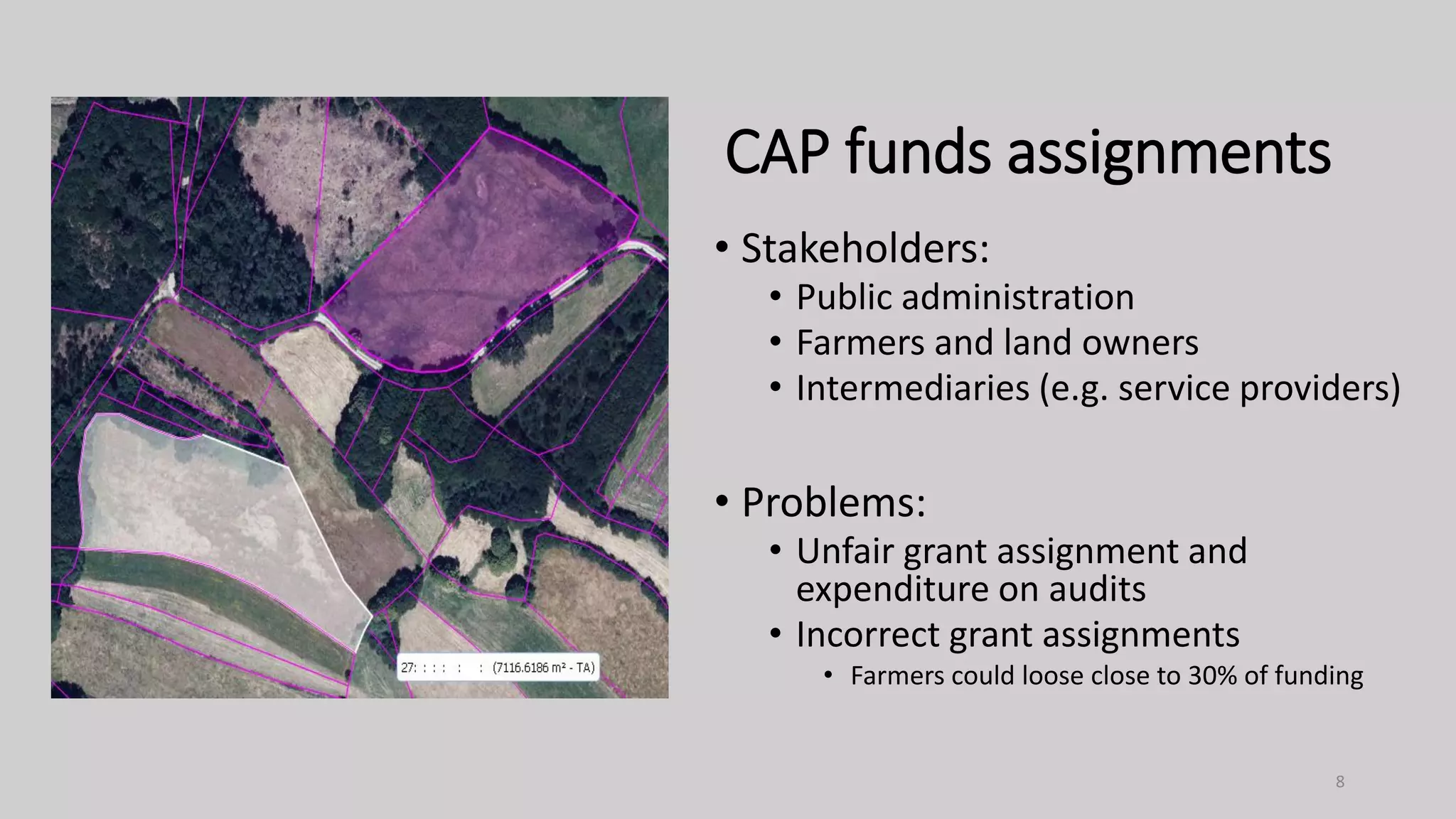

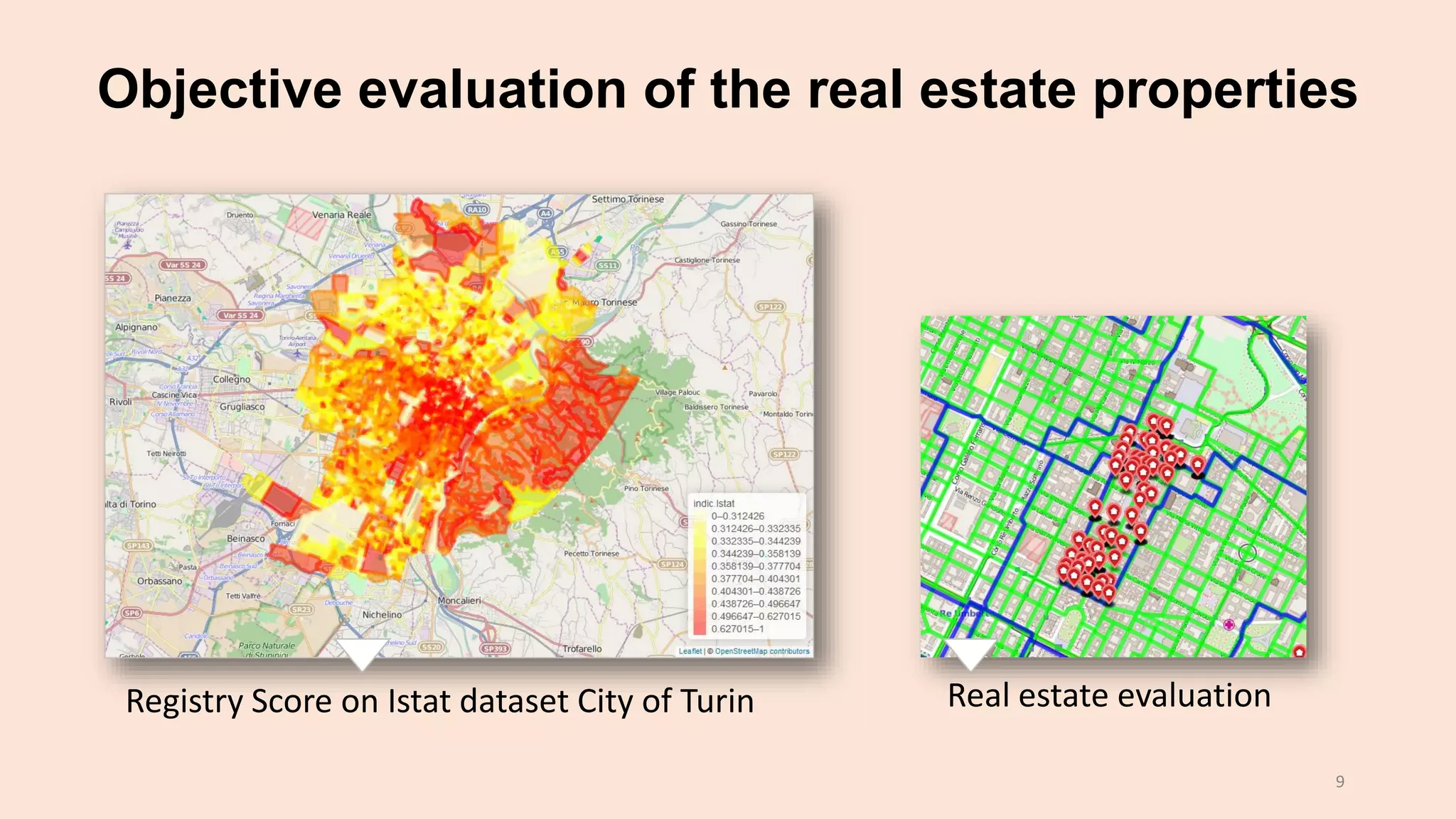

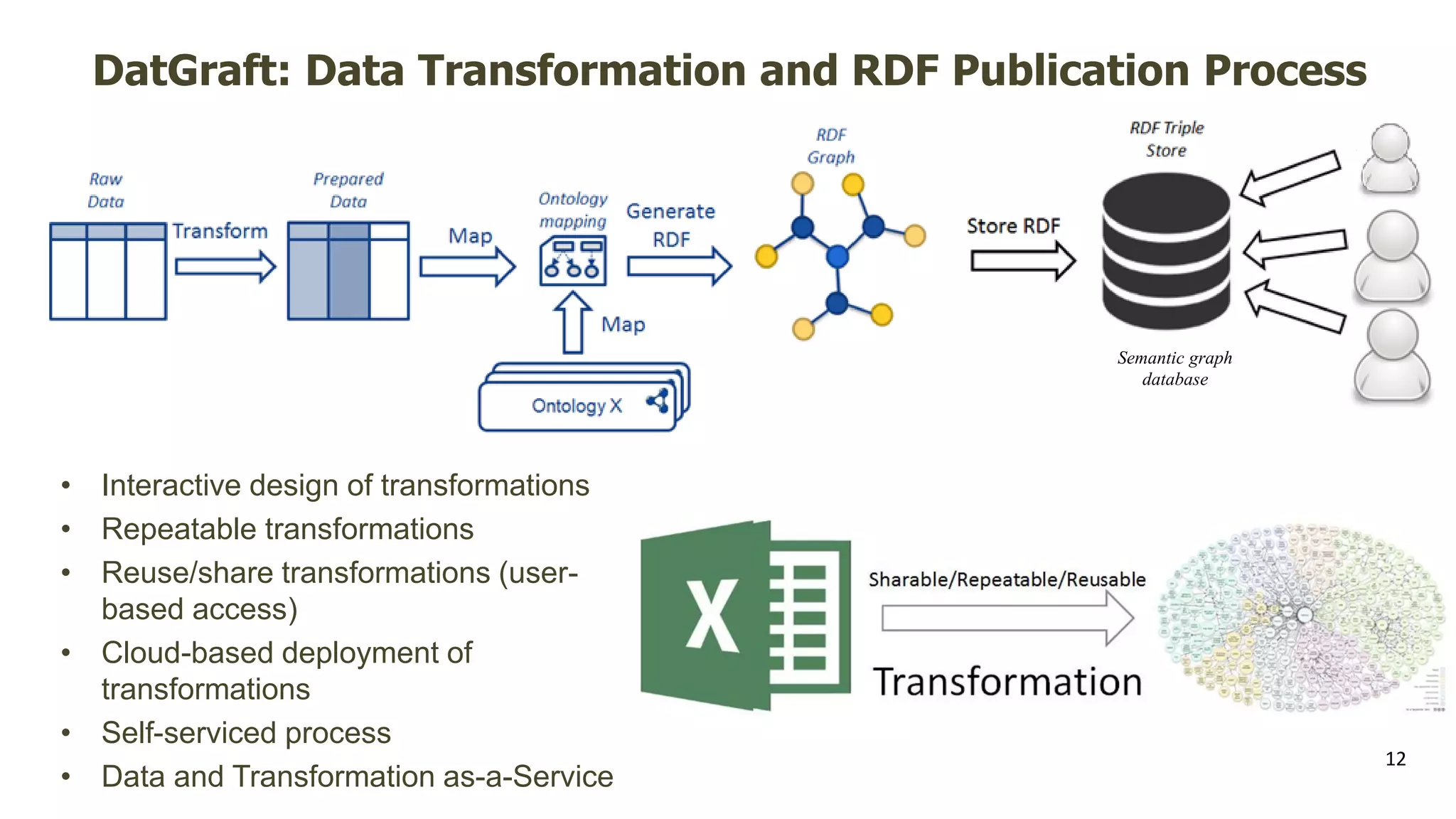

The document discusses the ProdataMarket project, which aims to improve access to, and the usability of, property-related open data by addressing challenges such as data quality and integration. Running for 2.5 years with a budget of €4.5 million, the project worked to make property data more accessible to both providers and consumers. The document also highlights the need for a live, efficient data-sharing service and the use of a linked data approach to transform and publish data.