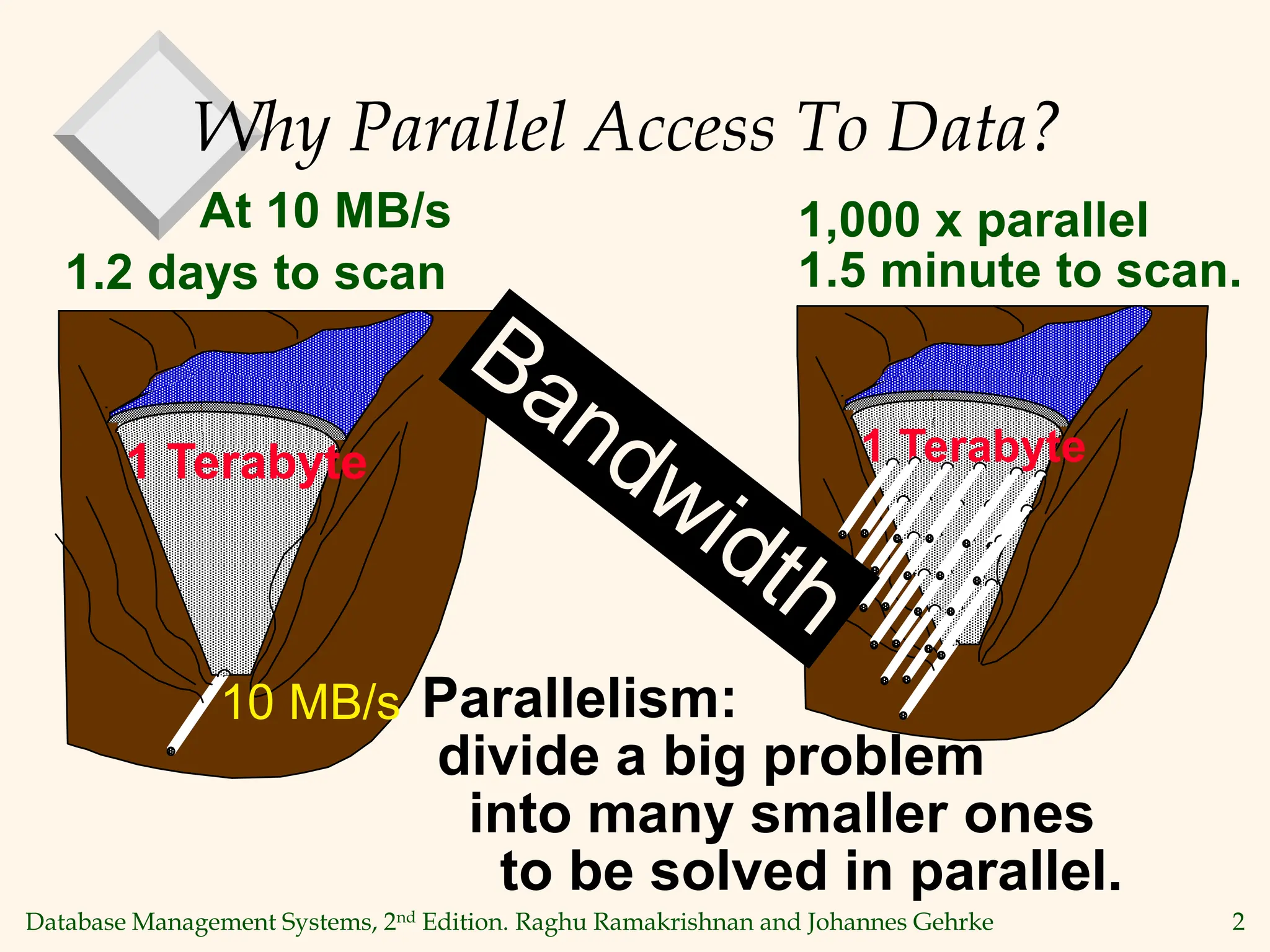

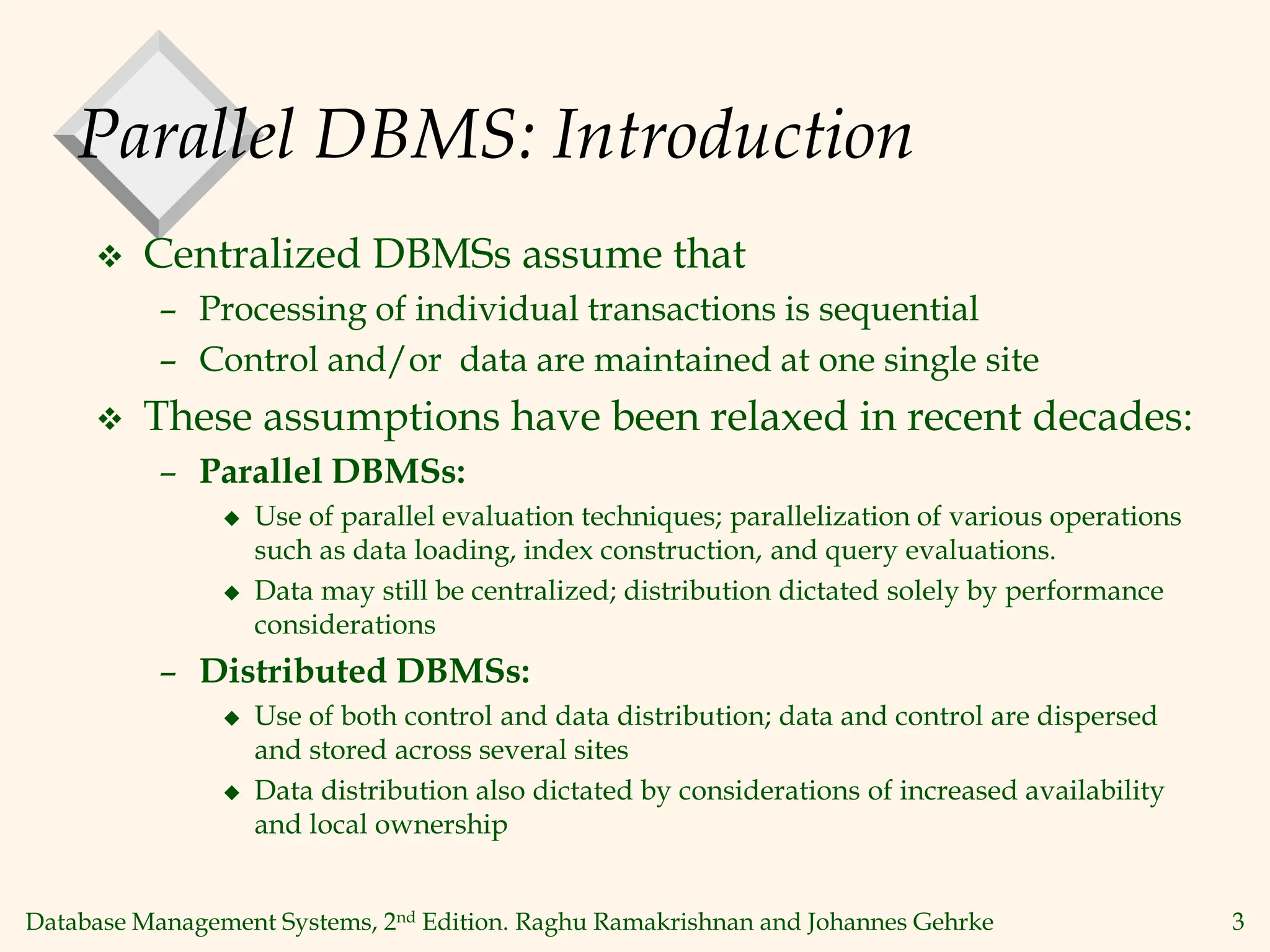

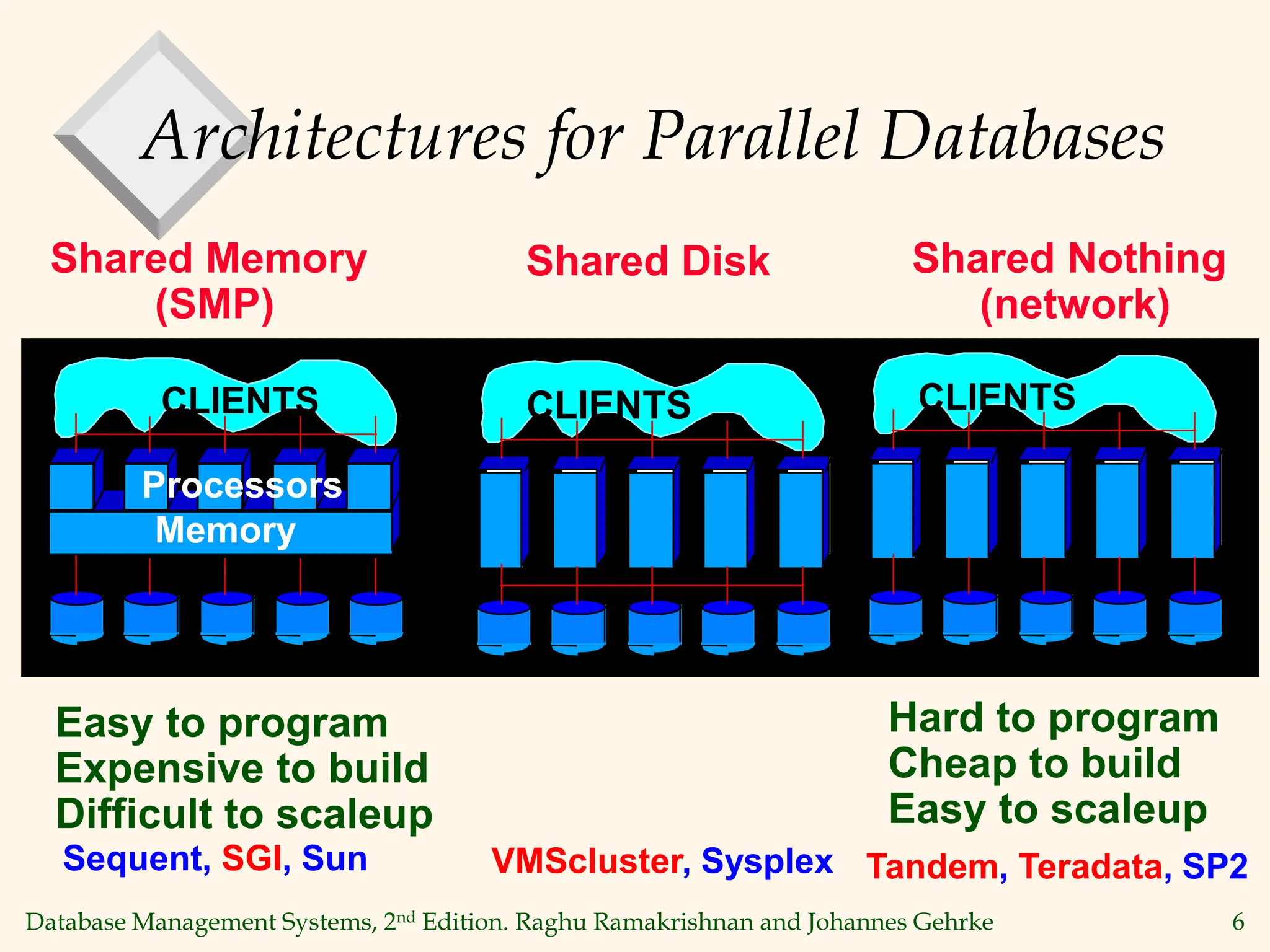

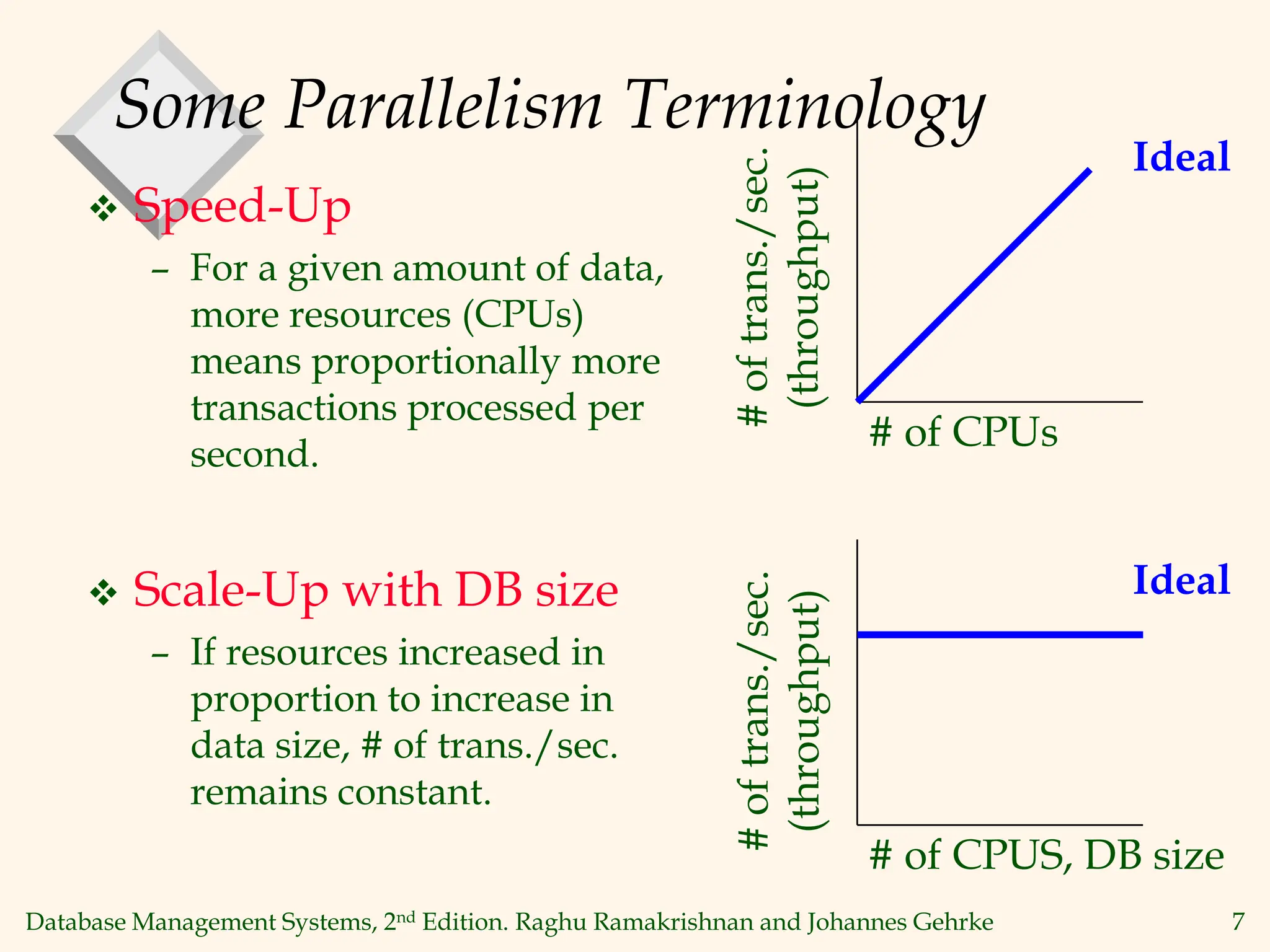

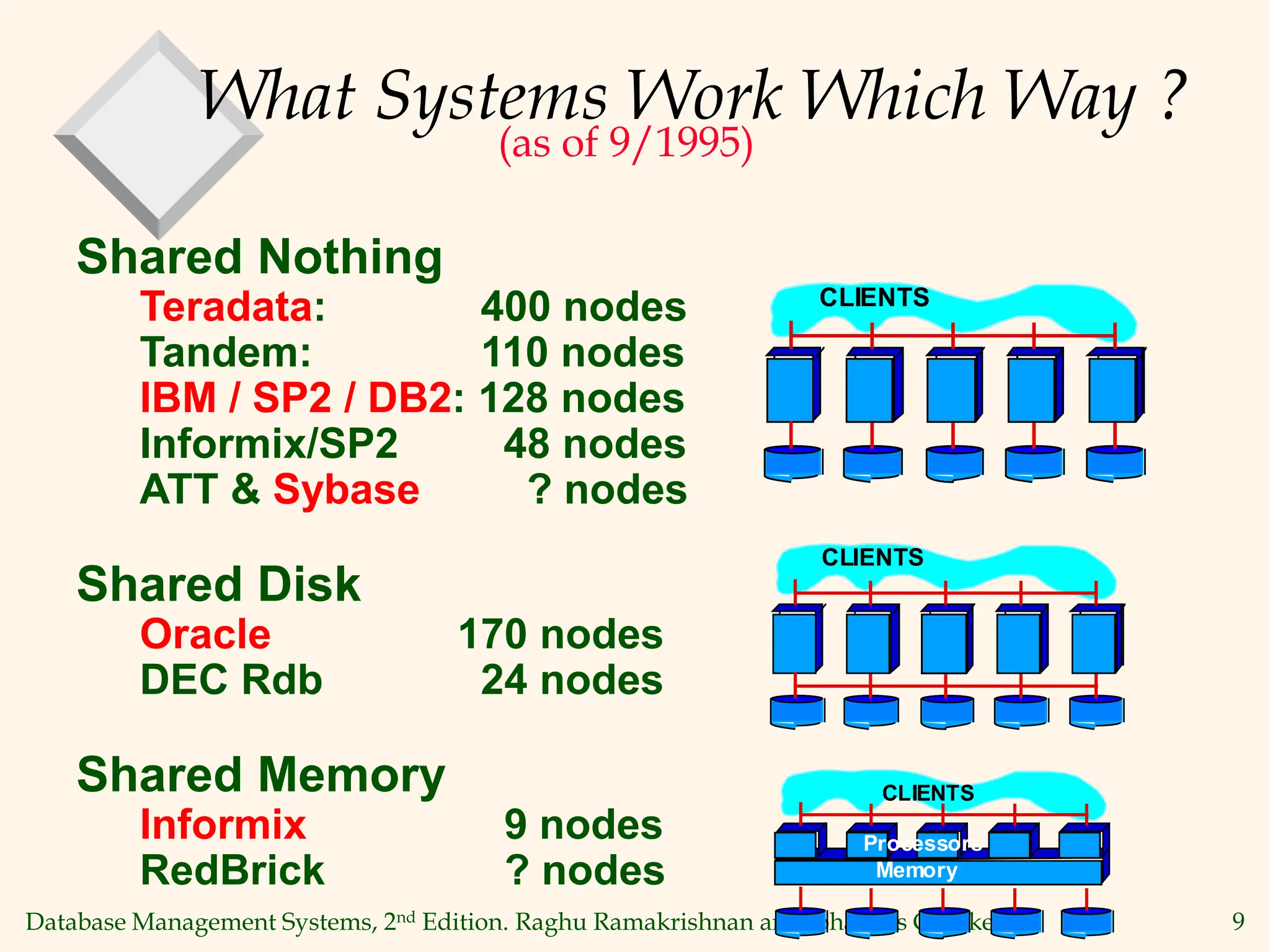

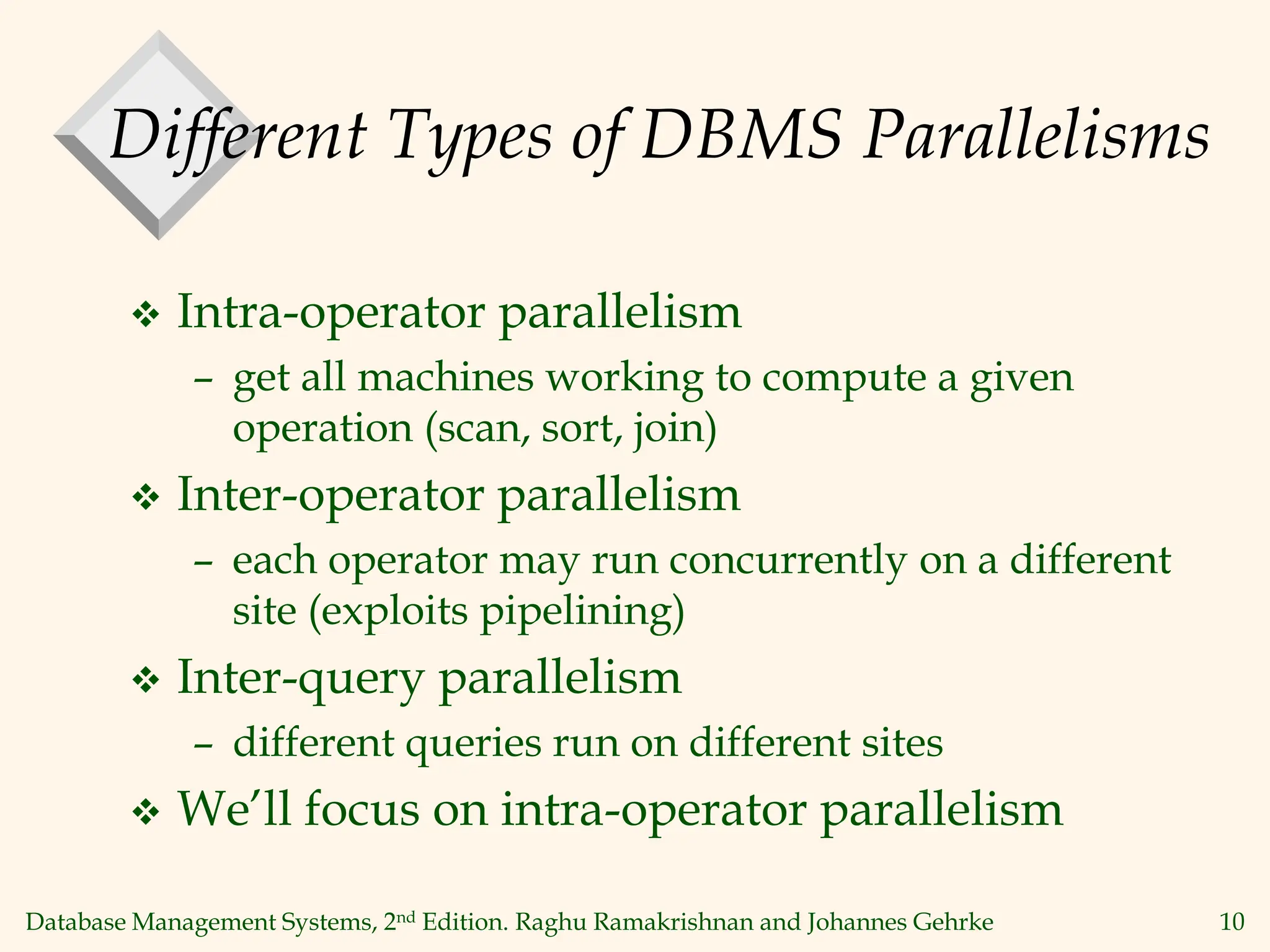

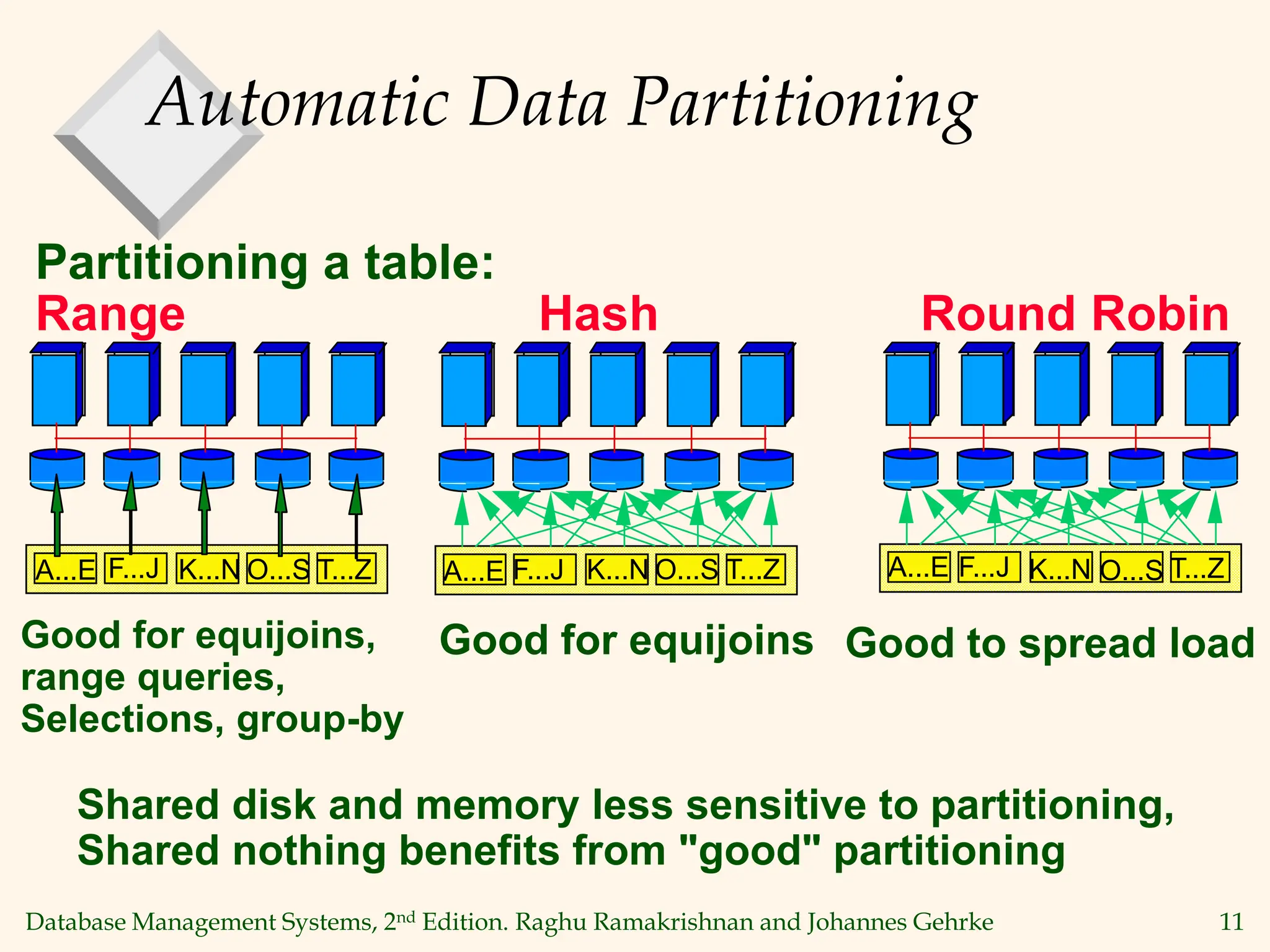

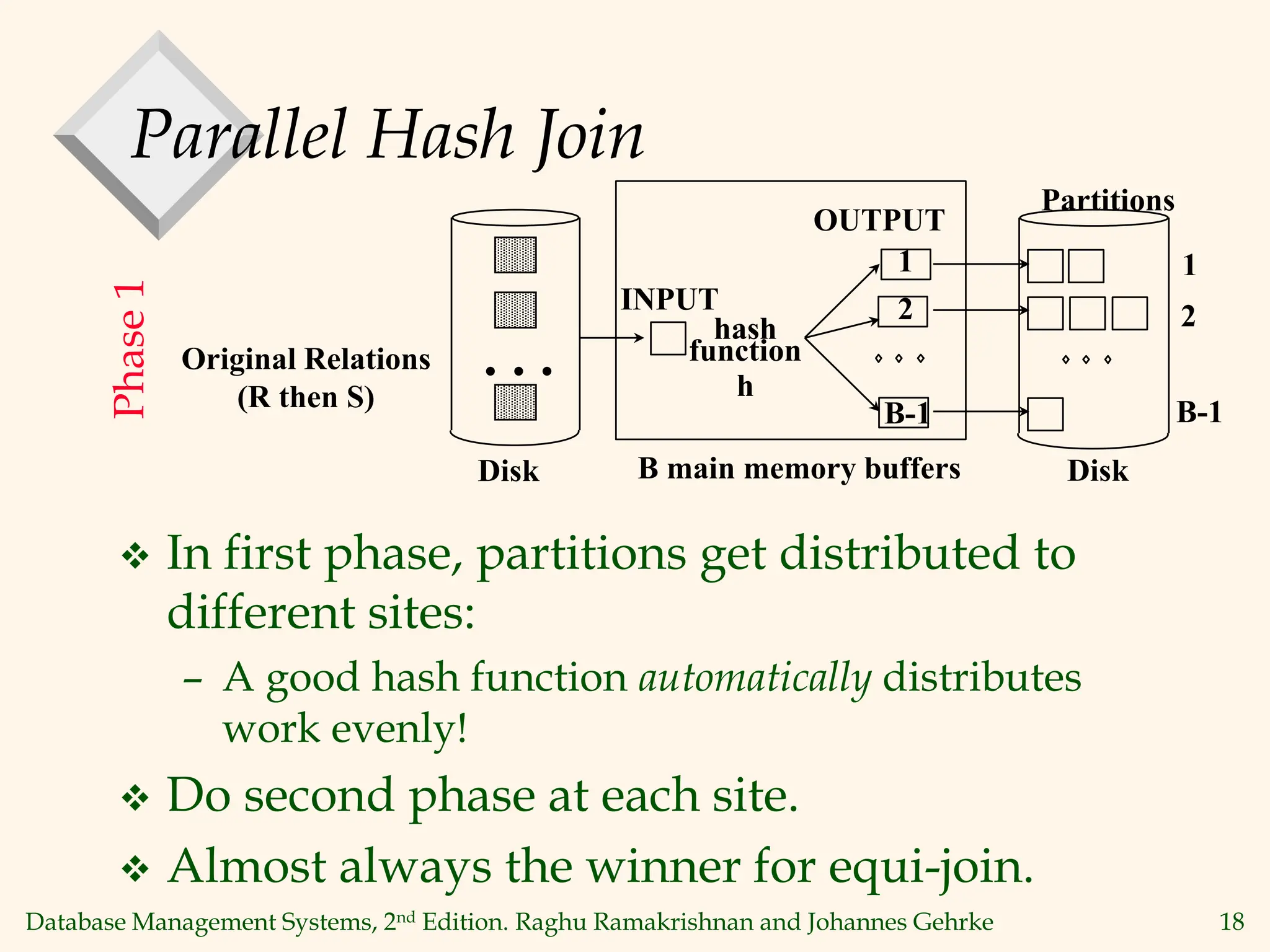

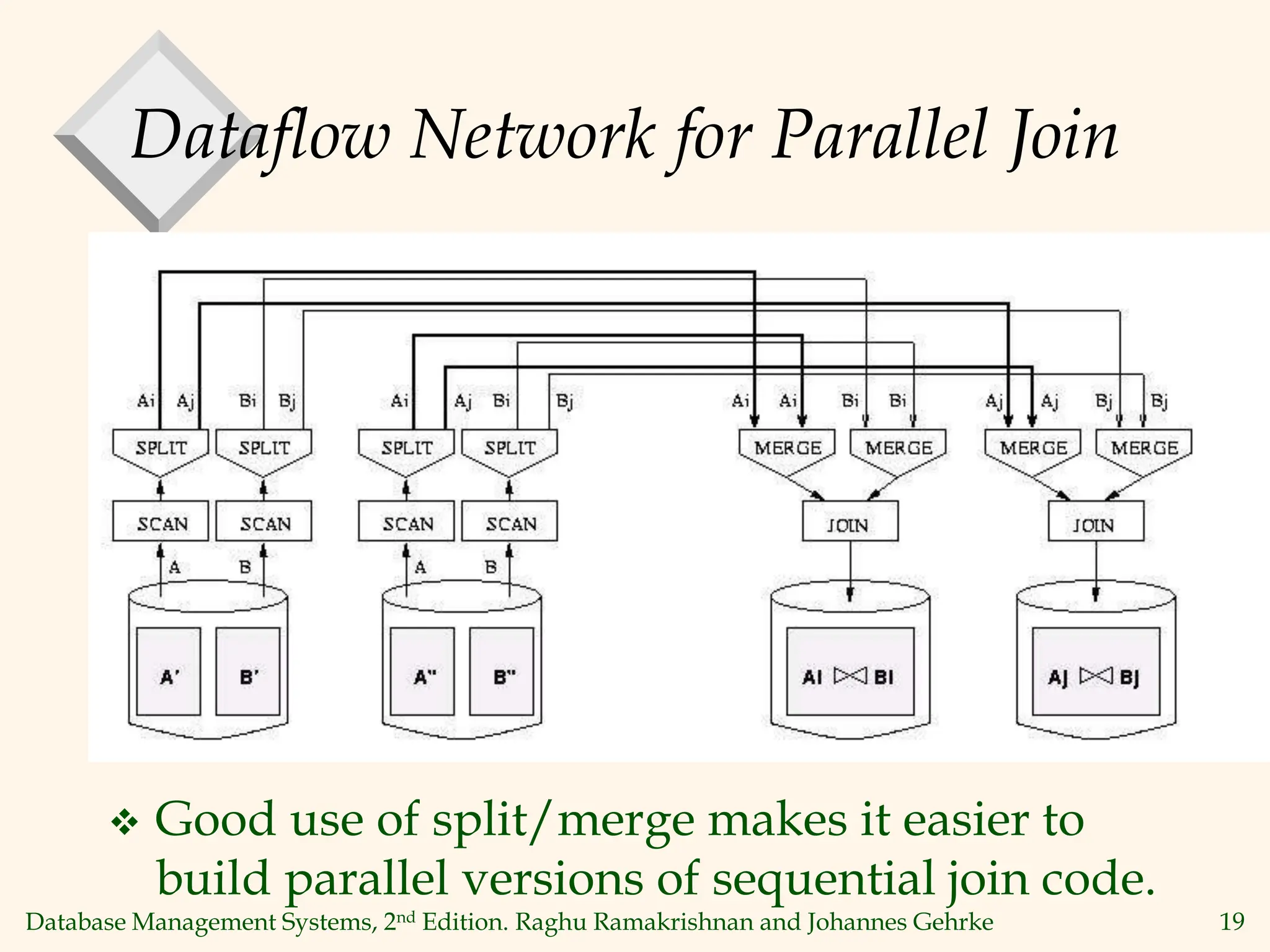

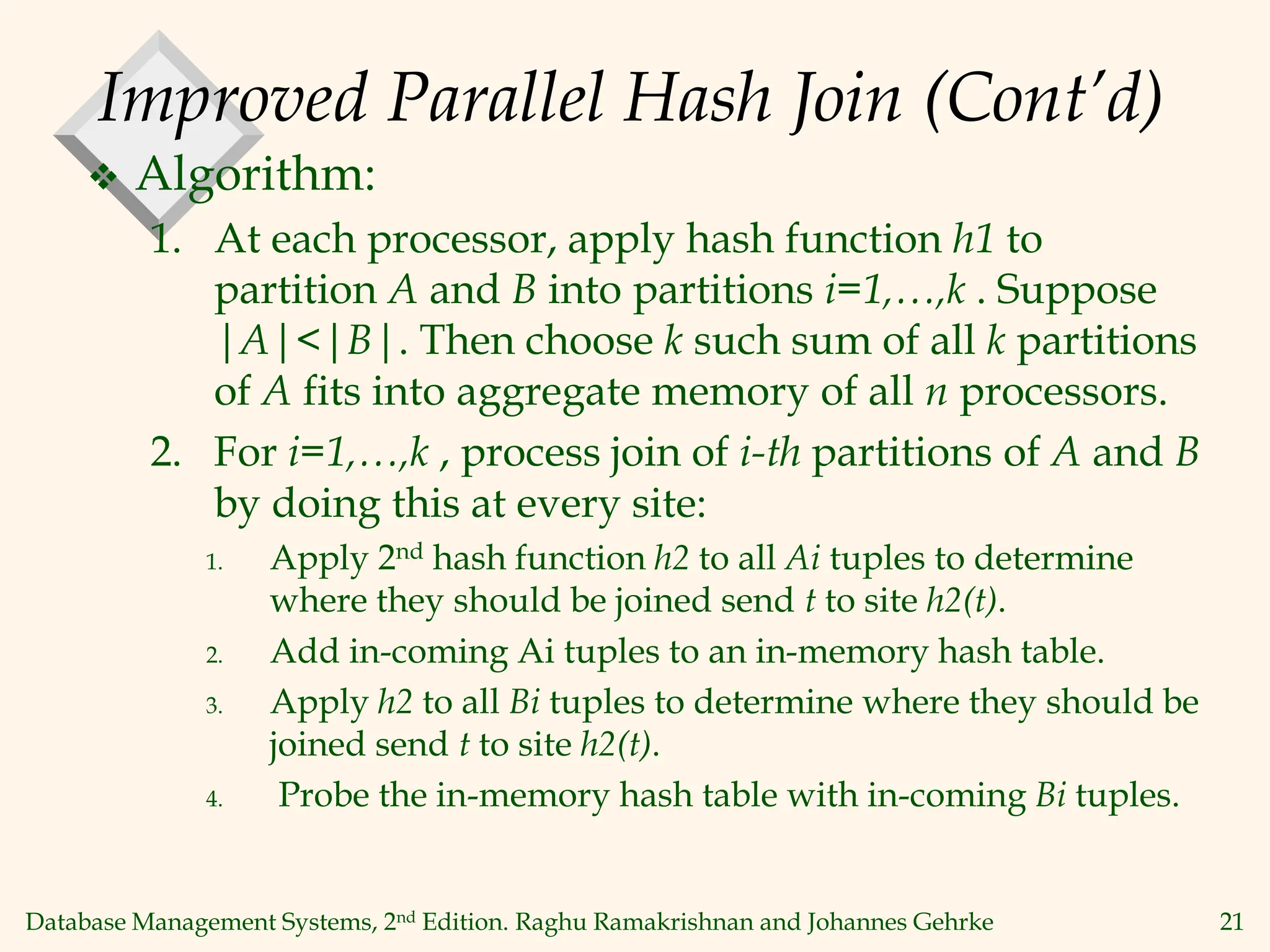

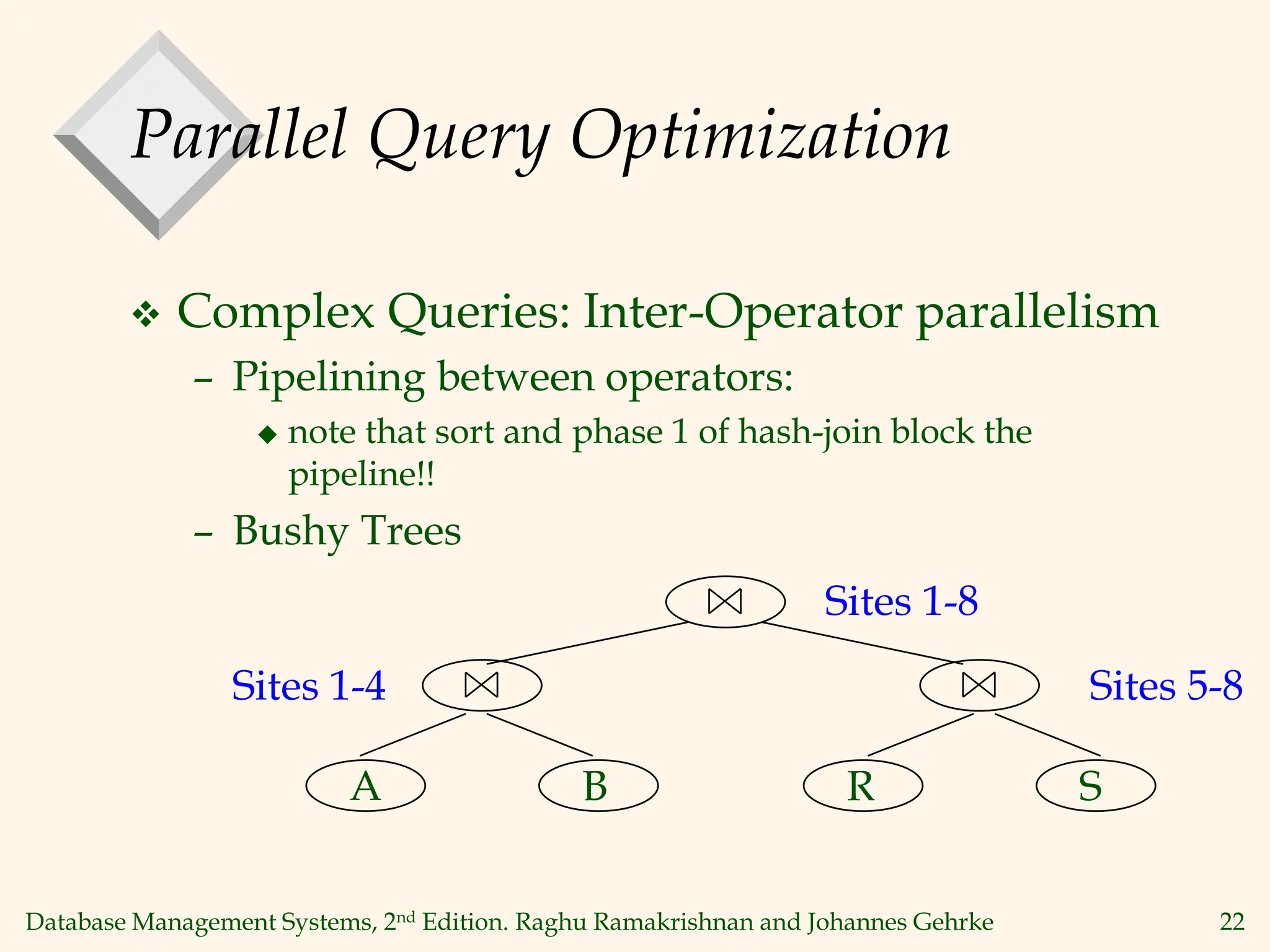

This document discusses parallel database management systems (DBMSs). It covers the main architectures for parallel databases: shared memory, shared disk, and shared nothing. It also explains how database operations such as scans, sorts, joins, and whole queries are parallelized. Two approaches are used: partition parallelism, where the data is split so that each processor works on its own portion, and pipeline parallelism, where the output of one operator streams into the next while both run concurrently. The key challenges in parallel databases are data partitioning, query optimization, and transaction processing across multiple nodes.
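Partition parallelism is easiest to see in a join. The sketch below is a minimal illustration under stated assumptions, not the deck's own method. It hash-partitions both inputs on the join key, so every pair of matching tuples lands in the same partition. Each partition is then joined independently, as separate nodes in a shared-nothing system would do. The helper names (`hash_partition`, `local_hash_join`, `parallel_hash_join`) and the `n_nodes` parameter are hypothetical. Threads stand in for nodes. Because of CPython's GIL, this shows the data flow rather than a real speedup.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor


def hash_partition(rows, key, n):
    """Split rows into n partitions by hashing the join key (hypothetical helper)."""
    parts = [[] for _ in range(n)]
    for row in rows:
        # Same key -> same hash -> same partition, so matches stay co-located.
        parts[hash(row[key]) % n].append(row)
    return parts


def local_hash_join(left, right, key):
    """Build/probe hash join over a single partition, as one node would run it."""
    table = defaultdict(list)
    for l in left:  # build phase
        table[l[key]].append(l)
    out = []
    for r in right:  # probe phase; .get() avoids inserting empty buckets
        for l in table.get(r[key], ()):
            out.append({**l, **r})
    return out


def parallel_hash_join(left, right, key, n_nodes=4):
    """Partition both inputs, join each partition pair concurrently, then union."""
    lparts = hash_partition(left, key, n_nodes)
    rparts = hash_partition(right, key, n_nodes)
    with ThreadPoolExecutor(max_workers=n_nodes) as pool:
        results = pool.map(local_hash_join, lparts, rparts, [key] * n_nodes)
        return [row for part in results for row in part]
```

For example, `parallel_hash_join(emp, dept, "dept", n_nodes=2)` joins an employee list with a department list on `dept`. Each worker only ever sees its own partition pair, which is why no cross-node communication is needed after the initial repartitioning step.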