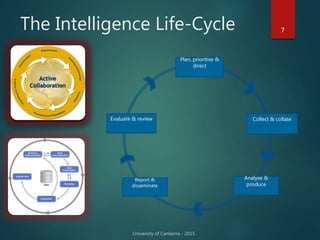

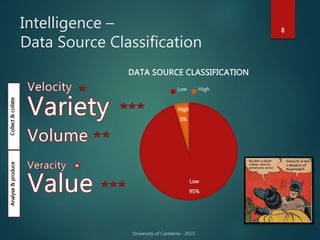

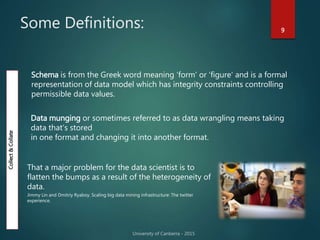

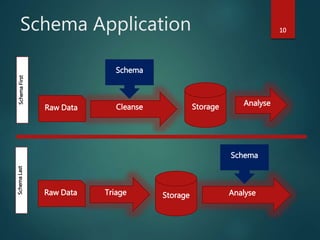

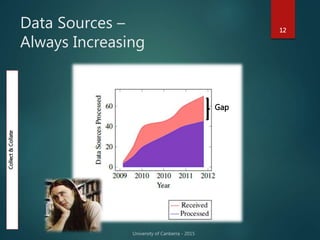

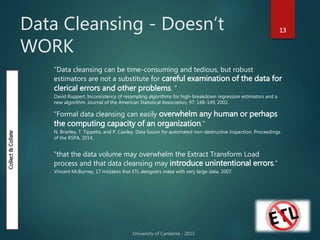

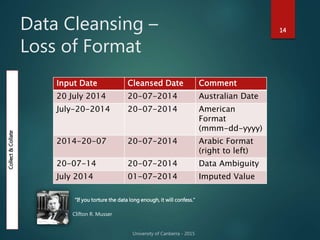

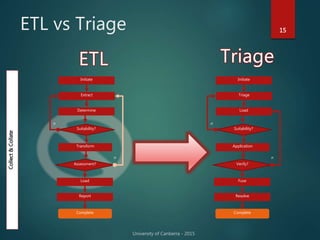

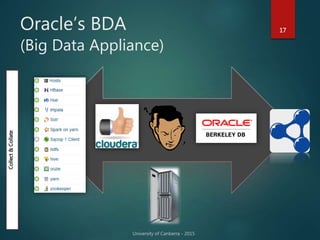

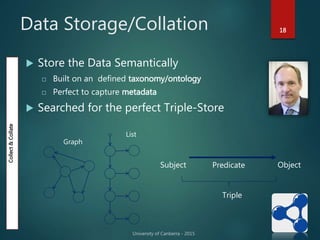

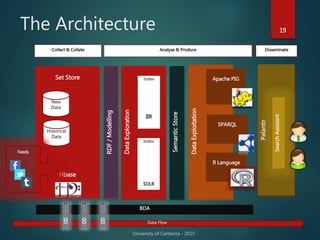

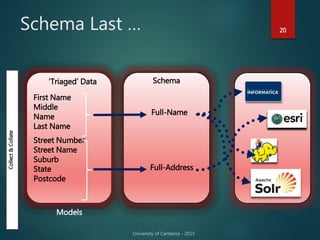

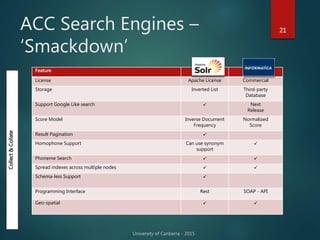

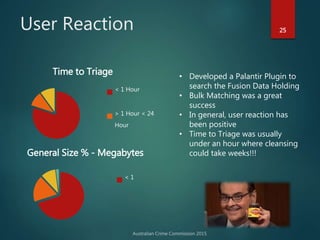

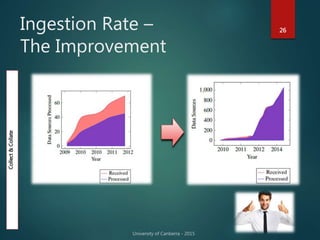

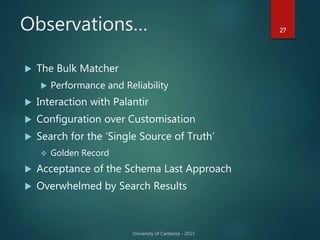

The document outlines Dr. Neil Brittliff's approach to using big data in law enforcement and criminal intelligence, centered on a schema-last approach to data management. It discusses the challenges of data cleansing and transformation in the intelligence life cycle and highlights the benefits of improved data integration and analysis through advanced technology. It also emphasizes the need for efficient data ingestion processes and for collaboration among agencies to improve crime analysis in Australia.