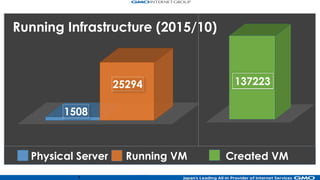

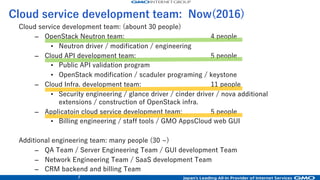

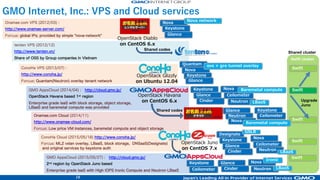

1. GMO Internet operates multiple OpenStack-based public cloud services, including the ConoHa public cloud and GMO AppsCloud.

2. They have a limited number of staff to develop and operate a large number of OpenStack services across many clusters.

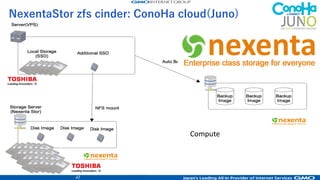

3. They have upgraded their OpenStack installations over time from Diablo to Juno, expanding services from basic compute to block storage, object storage, load balancing, and more.

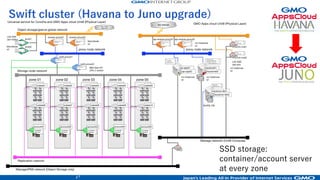

![20

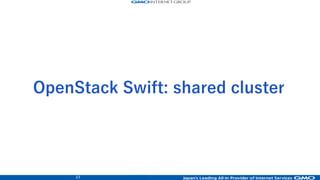

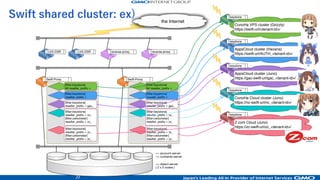

Swift shared cluster: ex)

Swift-Proxy

[filter:keystone]

reseller_prefix = nc_

[filter:ceilometer]

reseller_prefix = zc_

[filter:keystone]

reseller_prefix = gac_

[filter:keystone]

reseller_prefix =

[filter:keystone]

## reseller_prefix =

[filter:keystone]

reseller_prefix = zc_

[filter:ceilometer]

reseller_prefix = zc_

Swift-Proxy

[filter:keystone]

reseller_prefix = nc_

[filter:ceilometer]

reseller_prefix = zc_

[filter:keystone]

reseller_prefix = gac_

[filter:keystone]

reseller_prefix =

[filter:keystone]

## reseller_prefix =

[filter:keystone]

reseller_prefix = zc_

[filter:ceilometer]

reseller_prefix = zc_

<< account-server

<< container-server

<< object-server

( 2 x 5 nodes )

reverse-proxyreverse-proxyLVS-DSR LVS-DSR

keystone

ConoHa VPS cluster (Grizzly)

https://swift-url/<tenant-id>/

keystone

AppsCloud cluster (Havana)

https://swift-url/AUTH_<tenant-id>/

keystone

AppsCloud cluster (Juno)

https://gac-swift-url/gac_<tenant-id>/

keystone

ConoHa Cloud cluster (Juno)

https://nc-swift-url/nc_<tenant-id>/

keystone

Z.com Cloud (Juno)

https://zc-swift-url/zc_<tenant-id>/

the Internet](https://image.slidesharecdn.com/openstack-days-taiwan-2016-0712-03-ipad-160712050841/85/Openstack-days-taiwan-2016-0712-19-320.jpg)
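The relationship between a reseller prefix and the account part of each Swift URL can be sketched in a few lines of Python. This is only an illustration: the prefix-to-cluster mapping is inferred from the URLs on the slide, and the names `RESELLER_PREFIXES`, `account_name`, and `owning_prefix` are hypothetical helpers, not part of Swift.

```python
# Prefixes taken from the URLs on the slide; the dictionary keys are labels
# chosen for this sketch only.
RESELLER_PREFIXES = {
    "ConoHa VPS (Grizzly)": "",
    "AppsCloud (Havana)": "AUTH_",
    "AppsCloud (Juno)": "gac_",
    "ConoHa Cloud (Juno)": "nc_",
    "Z.com Cloud (Juno)": "zc_",
}


def account_name(prefix: str, tenant_id: str) -> str:
    """Build the account path segment: reseller prefix followed by tenant ID."""
    return f"{prefix}{tenant_id}"


def owning_prefix(account: str, prefixes) -> str:
    """Return the longest non-empty prefix that the account starts with.

    An empty string means no non-empty prefix matched, which in this sketch
    corresponds to the cluster configured with an empty reseller_prefix.
    """
    matches = [p for p in prefixes if p and account.startswith(p)]
    return max(matches, key=len) if matches else ""


if __name__ == "__main__":
    for cluster, prefix in RESELLER_PREFIXES.items():
        print(cluster, "->", "/" + account_name(prefix, "<tenant-id>") + "/")
```

Because each keystone uses a distinct prefix, accounts from different clusters stay in separate namespaces on the shared storage nodes even if two clusters happened to issue the same tenant ID.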

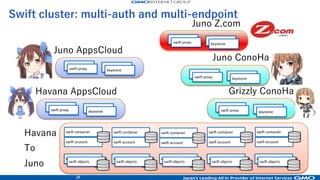

![33

Public API global network

LVS-DSR

(act-stby)

the Cloud

(Internet)

HAProxy

LVS

heatbeat

api-reverse-proxy01 api-reverse-proxy02elvs01

elvs02

VMx2

LVS

heatbeat

VMx2

HAProxy

ext-api-wrapper01

php + httpd

- keystone

- nova

- cinder

- neutron

- glance

- account

ext-api-wrapper02

php + httpd

- keystone

- nova

- cinder

- neutron

- glance

- account

control-nodes01

- keystone API

- nova API

- cinder API

- neutron API

- glance API

control-nodes02

- keystone API

- nova API

- cinder API

- neutron API

- glance API

OpenStack Management network

step 1)

step 2)

step 3)

step 4)

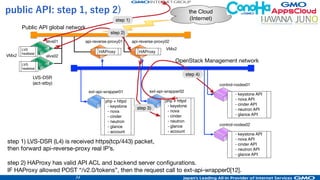

public API: step 1, step 2)

step 1) LVS-DSR (L4) is received https(tcp/443) packet,

then forward api-reverse-proxy real IP’s.

step 2) HAProxy has valid API ACL and backend server configurations.

IF HAProxy allowed POST “/v2.0/tokens”, then the request call to ext-api-wrapper0[12].](https://image.slidesharecdn.com/openstack-days-taiwan-2016-0712-03-ipad-160712050841/85/Openstack-days-taiwan-2016-0712-31-320.jpg)
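The allow-list behaviour described in step 2 can be sketched in Python as follows. This is not HAProxy configuration and not the authors' setup: only the POST "/v2.0/tokens" rule and the two backend names come from the slide, while `ALLOWED_CALLS`, `route`, and the round-robin choice of backend are assumptions made for illustration.

```python
import re

# Allow-list of (method, path pattern) pairs. Only POST /v2.0/tokens is taken
# from the slide; a real ACL would list every permitted API call.
ALLOWED_CALLS = [
    ("POST", re.compile(r"^/v2\.0/tokens$")),
]

# Backend names from the slide; round-robin selection is an assumption.
BACKENDS = ["ext-api-wrapper01", "ext-api-wrapper02"]


def route(method: str, path: str, counter: int = 0):
    """Return a backend name if the call is allowed, otherwise None."""
    for allowed_method, pattern in ALLOWED_CALLS:
        if method == allowed_method and pattern.match(path):
            return BACKENDS[counter % len(BACKENDS)]
    return None


if __name__ == "__main__":
    print(route("POST", "/v2.0/tokens"))   # allowed, goes to a wrapper
    print(route("GET", "/v2.0/tokens"))    # not in the allow-list
```

Rejecting anything outside the allow-list at the proxy means unlisted API calls never reach the wrapper or the control nodes.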

![34

Public API global network

LVS-DSR

(act-stby)

the Cloud

(Internet)

HAProxy

LVS

heatbeat

api-reverse-proxy01 api-reverse-proxy02elvs01

elvs02

VMx2

LVS

heatbeat

VMx2

HAProxy

ext-api-wrapper01

php + httpd

- keystone

- nova

- cinder

- neutron

- glance

- account

ext-api-wrapper02

php + httpd

- keystone

- nova

- cinder

- neutron

- glance

- account

control-nodes01

- keystone API

- nova API

- cinder API

- neutron API

- glance API

control-nodes02

- keystone API

- nova API

- cinder API

- neutron API

- glance API

OpenStack Management network

step 1)

step 2)

step 3)

step 4)

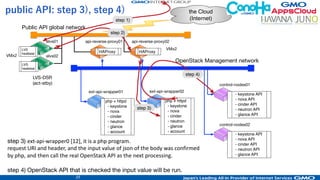

public API: step 3), step 4)

step 3) ext-api-wrapper0 [12], it is a php program.

request URI and header, and the input value of json of the body was confirmed

by php, and then call the real OpenStack API as the next processing.

step 4) OpenStack API that is checked the input value will be run.](https://image.slidesharecdn.com/openstack-days-taiwan-2016-0712-03-ipad-160712050841/85/Openstack-days-taiwan-2016-0712-32-320.jpg)
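The kind of check described in step 3 might look like the sketch below. The wrapper on the slide is written in PHP; Python is used here only for illustration, and the function name `validate_token_request` and the specific rules (exact path match, JSON content type, a top-level "auth" object as in the Keystone v2.0 token request) are assumptions rather than the authors' actual validation logic.

```python
import json


def validate_token_request(method: str, path: str, headers: dict, body: str):
    """Return (ok, reason) for a token request before it is forwarded.

    Checks the URI, a header, and the JSON body, in that order.
    """
    if method != "POST" or path != "/v2.0/tokens":
        return False, "unexpected method or path"

    # Assumes the header key is spelled exactly "Content-Type".
    content_type = headers.get("Content-Type", "")
    if not content_type.startswith("application/json"):
        return False, "unexpected Content-Type"

    try:
        payload = json.loads(body)
    except (ValueError, TypeError):
        return False, "body is not valid JSON"

    if not isinstance(payload, dict) or not isinstance(payload.get("auth"), dict):
        return False, "missing auth object"

    return True, "ok"


if __name__ == "__main__":
    good = '{"auth": {"tenantName": "demo"}}'
    print(validate_token_request(
        "POST", "/v2.0/tokens", {"Content-Type": "application/json"}, good))
```

Only requests that pass every check would be forwarded to the real OpenStack API in step 4.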