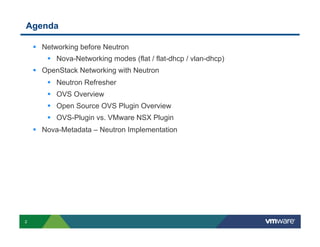

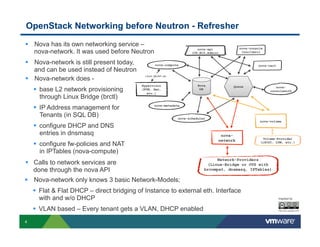

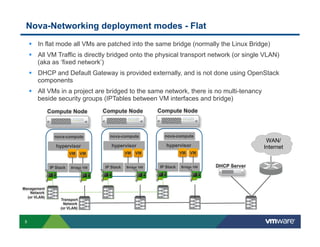

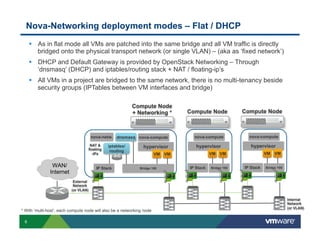

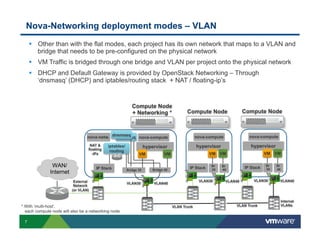

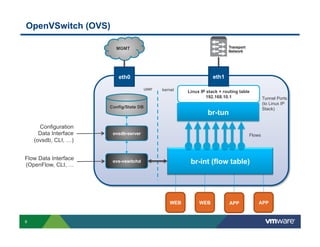

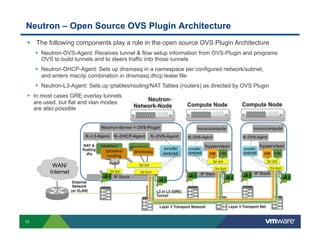

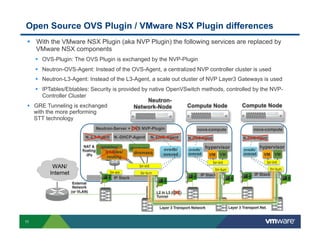

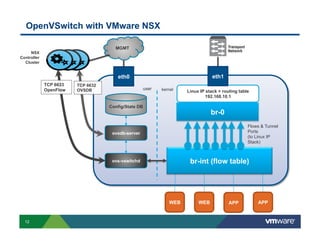

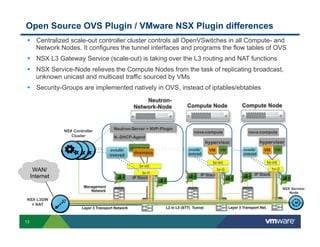

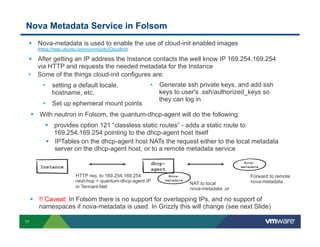

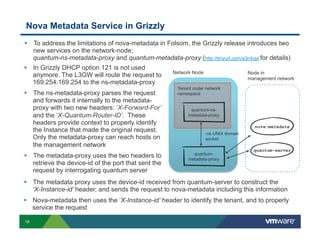

The document gives a technical overview of networking in OpenStack. It traces the evolution from nova-network to Neutron, including Neutron's architecture and deployment modes. It covers the flat and VLAN networking models and the Open vSwitch (OVS) and VMware NSX plugins, describing how each plugin works and how they differ. It also touches on the nova-metadata service and on the improvements to metadata handling introduced in later OpenStack releases.