Maximizing performance via tuning and optimization involves:

- Defining service level agreements and translating them to database transactions.

- Capturing metrics on business, application, and database transactions to identify bottlenecks.

- Tuning from the start and periodically reviewing production systems for changes.

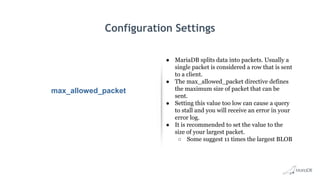

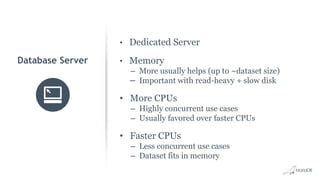

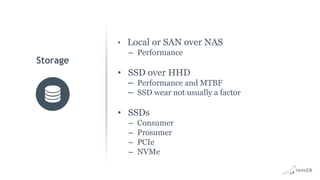

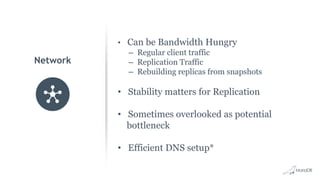

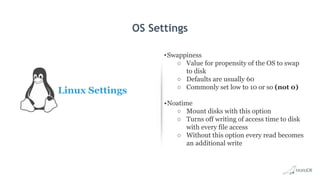

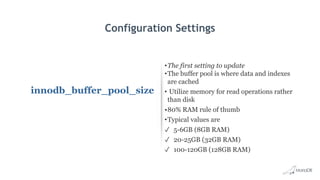

- Optimizing server, storage, network, and OS settings, as well as MariaDB configuration settings such as buffer pool size, query cache size, and connection settings.

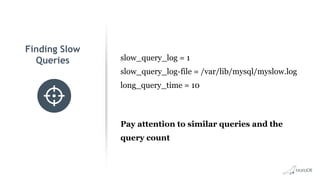

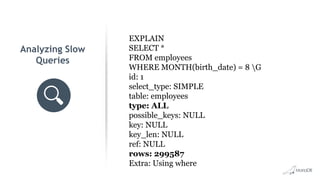

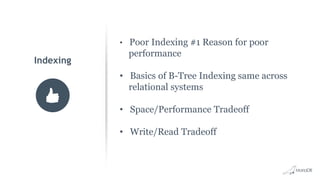

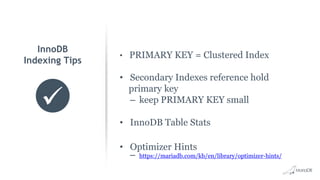

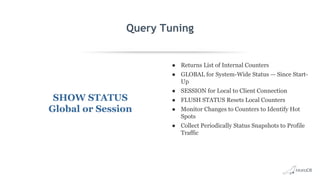

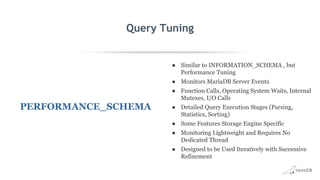

- Analyzing slow queries, indexing appropriately, and monitoring with tools like Performance Schema.

- Designing databases and choosing optimal data types.
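
The first two points above (service level agreements and captured metrics) can be illustrated with a minimal sketch. It assumes latency samples have already been collected per transaction; the transaction names, sample values, and targets are hypothetical, and using the 95th percentile as the SLA measure is an assumption for illustration, not something the slides prescribe.

```python
from statistics import quantiles


def p95(samples_ms):
    """Return the 95th-percentile latency of a list of samples (milliseconds)."""
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    return quantiles(samples_ms, n=20, method="inclusive")[-1]


def find_sla_violations(latencies_by_txn, sla_ms):
    """Map each transaction whose p95 latency exceeds its target to that p95."""
    violations = {}
    for name, samples in latencies_by_txn.items():
        observed = p95(samples)
        if observed > sla_ms[name]:
            violations[name] = observed
    return violations


# Hypothetical captured latencies (ms) and SLA targets (ms) per transaction.
latencies = {
    "checkout": [40, 42, 45, 50, 300, 41, 43, 44, 46, 48],
    "search": [10, 12, 11, 13, 12, 11, 10, 14, 12, 11],
}
targets = {"checkout": 100, "search": 50}

print(find_sla_violations(latencies, targets))
```

A transaction flagged here is only a candidate bottleneck; the slow query log or Performance Schema would still be needed to find which statements account for the time.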

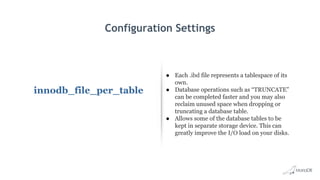

![Configuration Settings: Disable MySQL Reverse DNS Lookups](https://image.slidesharecdn.com/mdb-roadshow-2018-max-performancev2jday-180713225252/85/Maximizing-performance-via-tuning-and-optimization-25-320.jpg)

- MariaDB performs a DNS lookup of the user's IP address and hostname on connection.

- The IP address is checked by resolving it to a hostname. The hostname is then resolved back to an IP address to verify it.

- This allows DNS issues to cause delays.

- You can disable the lookup and use IP addresses only:

  - Set `skip-name-resolve` under `[mysqld]` in `my.cnf`.
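
As a rough illustration of the skip-name-resolve setting described above, the sketch below embeds a sample `my.cnf` fragment and checks whether the flag is present under `[mysqld]`. The helper name `name_resolution_disabled` and the sample text are hypothetical. The check only looks for the option's presence, ignores any value assigned to it, and does not handle `!include` directives placed before the first section header.

```python
from configparser import ConfigParser

# Sample my.cnf fragment (hypothetical file content for illustration).
SAMPLE_MY_CNF = """
[mysqld]
# Skip reverse DNS lookups on new connections. Accounts then have to be
# matched by IP address (or localhost) rather than by host name.
skip-name-resolve
"""


def name_resolution_disabled(cnf_text):
    """Return True if skip-name-resolve appears under [mysqld] in cnf_text."""
    # allow_no_value accepts bare flags such as skip-name-resolve;
    # strict=False tolerates repeated options within a section.
    parser = ConfigParser(allow_no_value=True, strict=False, interpolation=None)
    parser.read_string(cnf_text)
    if not parser.has_section("mysqld"):
        return False
    # The server accepts dashes and underscores interchangeably in option names.
    options = {name.replace("_", "-") for name in parser.options("mysqld")}
    return "skip-name-resolve" in options


print(name_resolution_disabled(SAMPLE_MY_CNF))
```

Before enabling the flag, it is worth confirming that no existing account depends on a host name in its grant, since those accounts would stop matching.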