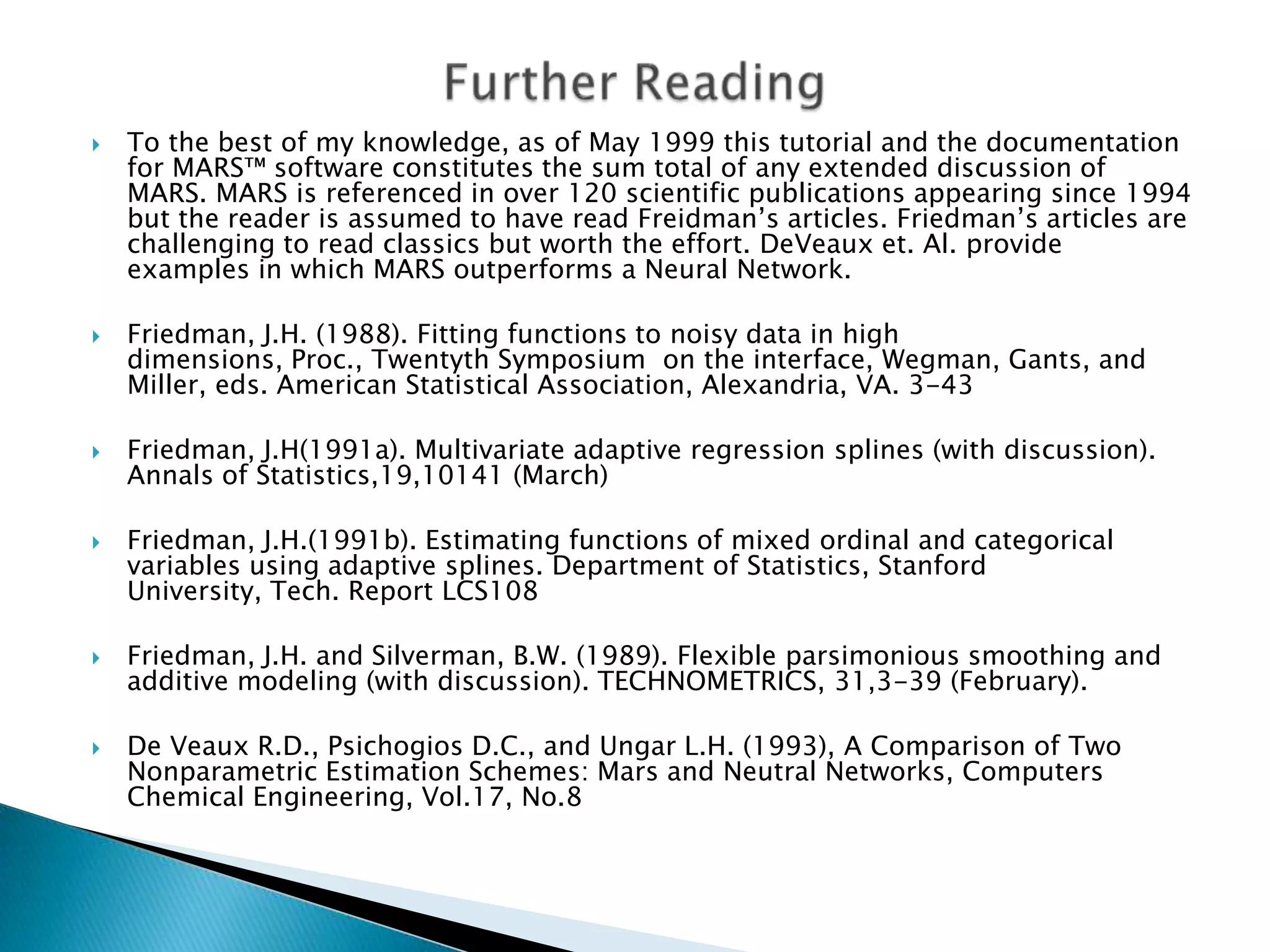

The document introduces MARS (Multivariate Adaptive Regression Splines), a new tool for regression analysis developed by Jerome Friedman. MARS automatically selects variables, detects interactions between them, and builds models that are protected against overfitting. Unlike CART, which produces step functions, MARS produces smooth curves. The document covers the core MARS concepts and gives guidelines for using MARS in practice.
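The contrast with CART can be illustrated with the hinge (truncated linear) basis functions that MARS models are built from. The sketch below is only an illustration: the knot, the coefficients, and the function names `mars_like` and `cart_like` are hypothetical and do not come from the document. It shows that a sum of hinge terms stays continuous at the knot, while a single-split tree jumps there.

```python
def hinge(x, knot):
    """Hinge basis function max(0, x - knot)."""
    return max(0.0, x - knot)


def mirrored_hinge(x, knot):
    """Mirror-image hinge basis function max(0, knot - x)."""
    return max(0.0, knot - x)


def mars_like(x):
    # Hypothetical MARS-style model: intercept plus a pair of hinge
    # terms sharing one knot at x = 3 (values chosen for illustration).
    return 1.0 + 2.0 * hinge(x, 3.0) - 0.5 * mirrored_hinge(x, 3.0)


def cart_like(x):
    # Hypothetical single-split regression tree: one constant per side.
    return 0.5 if x < 3.0 else 4.0


# Compare the change in prediction across the knot / split point.
eps = 1e-6
mars_jump = abs(mars_like(3.0 + eps) - mars_like(3.0 - eps))
cart_jump = abs(cart_like(3.0 + eps) - cart_like(3.0 - eps))

print(f"MARS-style change across the knot: {mars_jump:.2e}")
print(f"CART-style change across the split: {cart_jump:.2f}")
```

The hinge model changes slope at the knot but its prediction does not jump, whereas the tree's prediction changes abruptly by the difference between its two leaf values.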