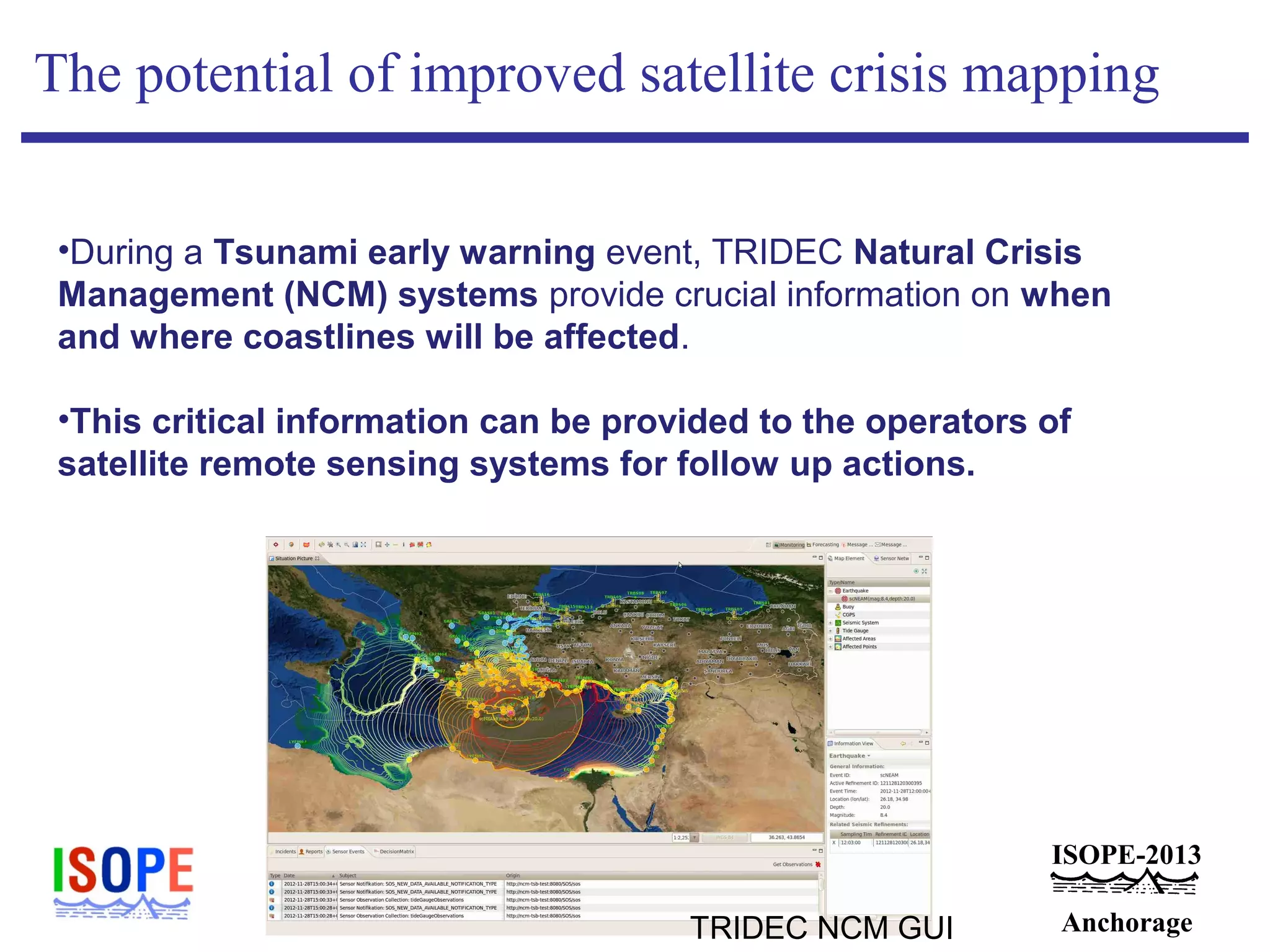

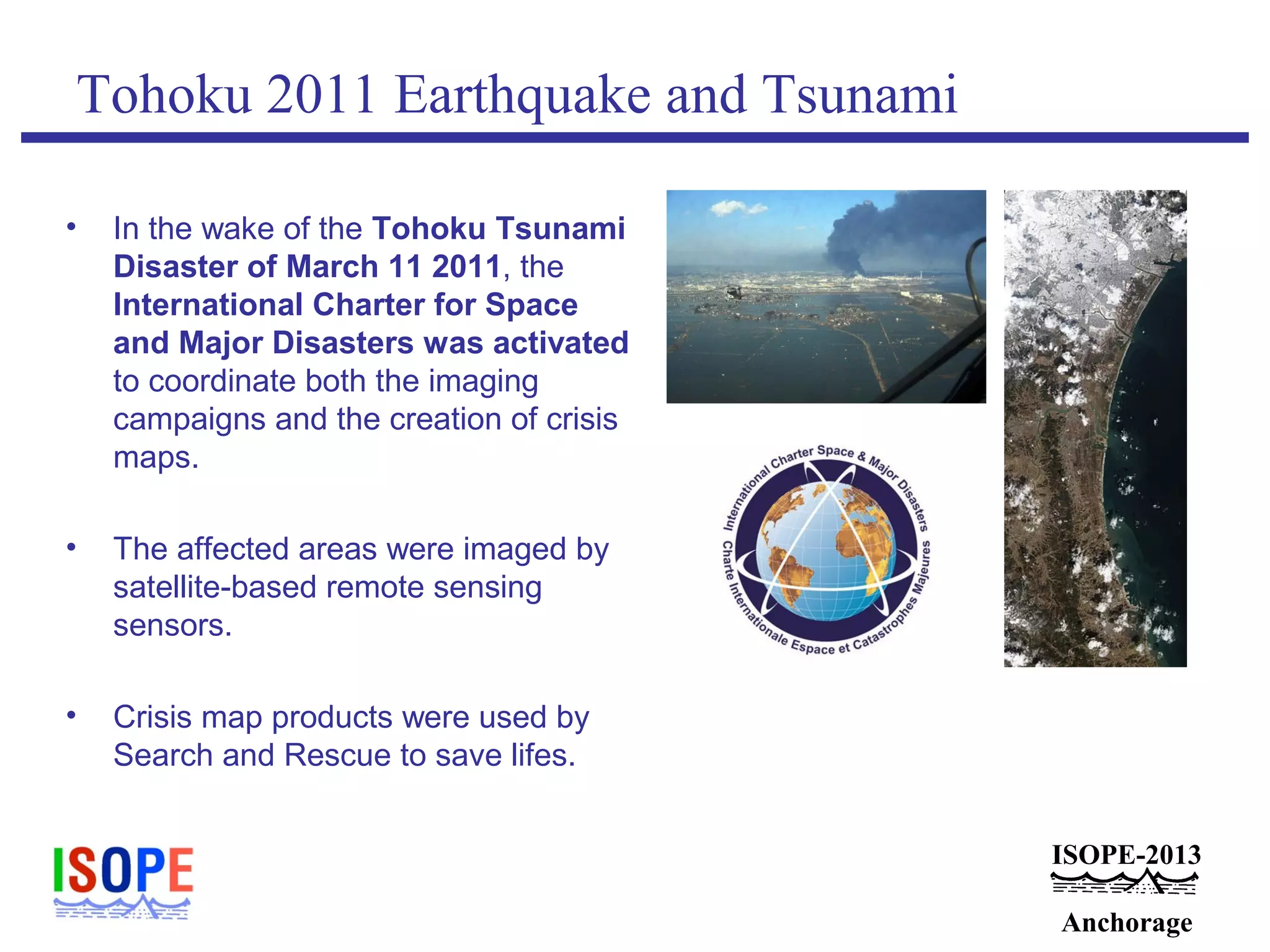

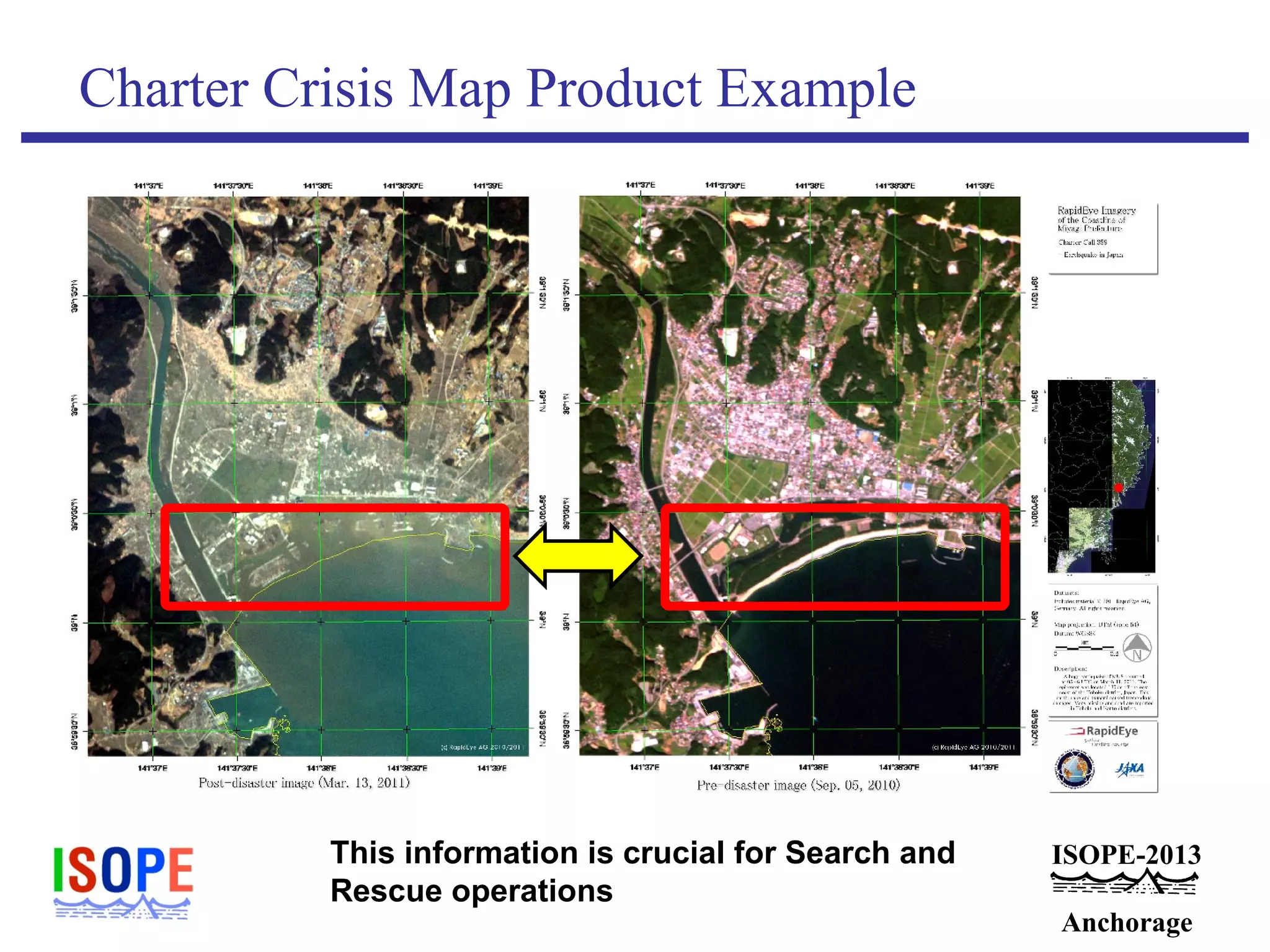

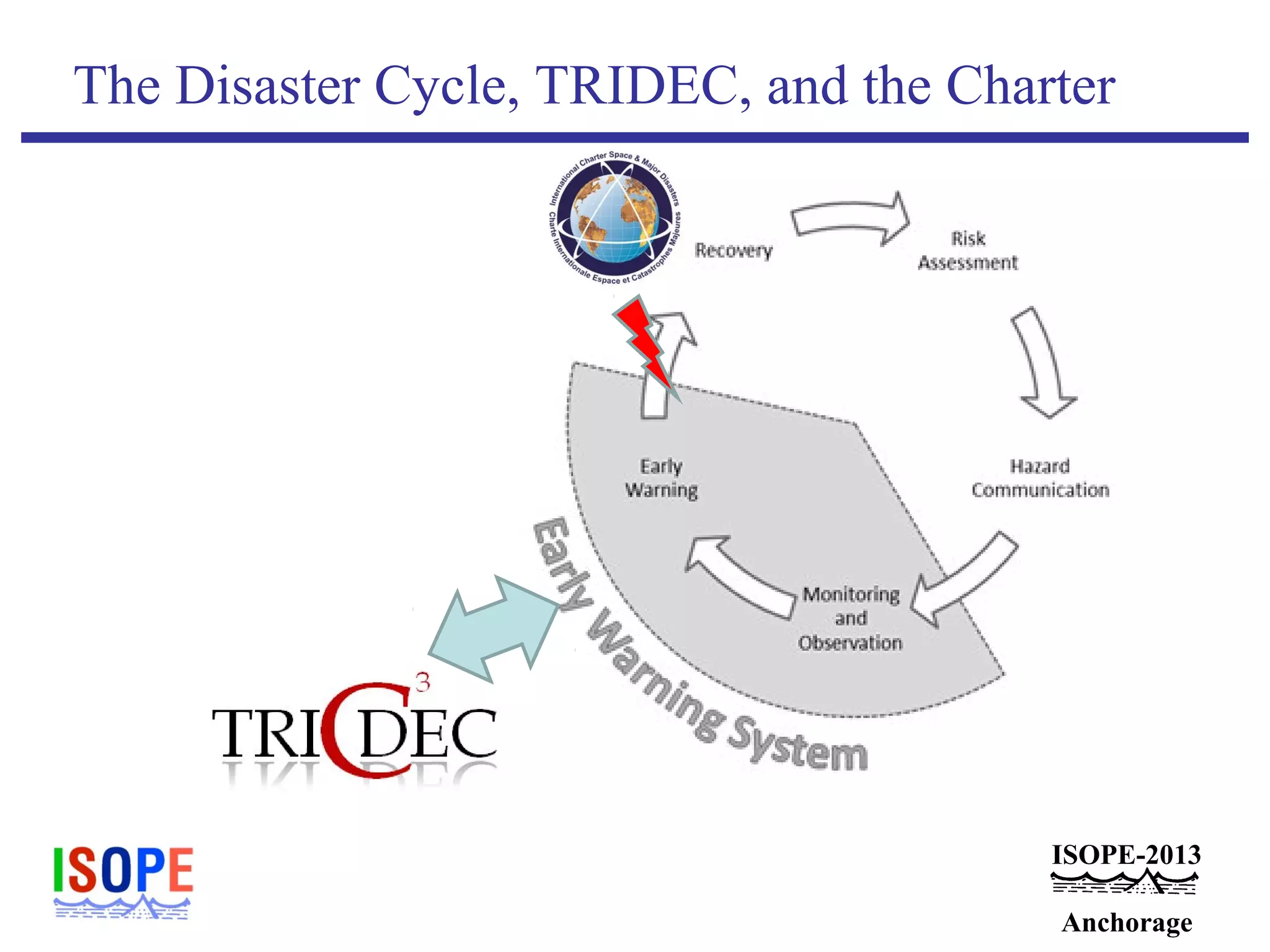

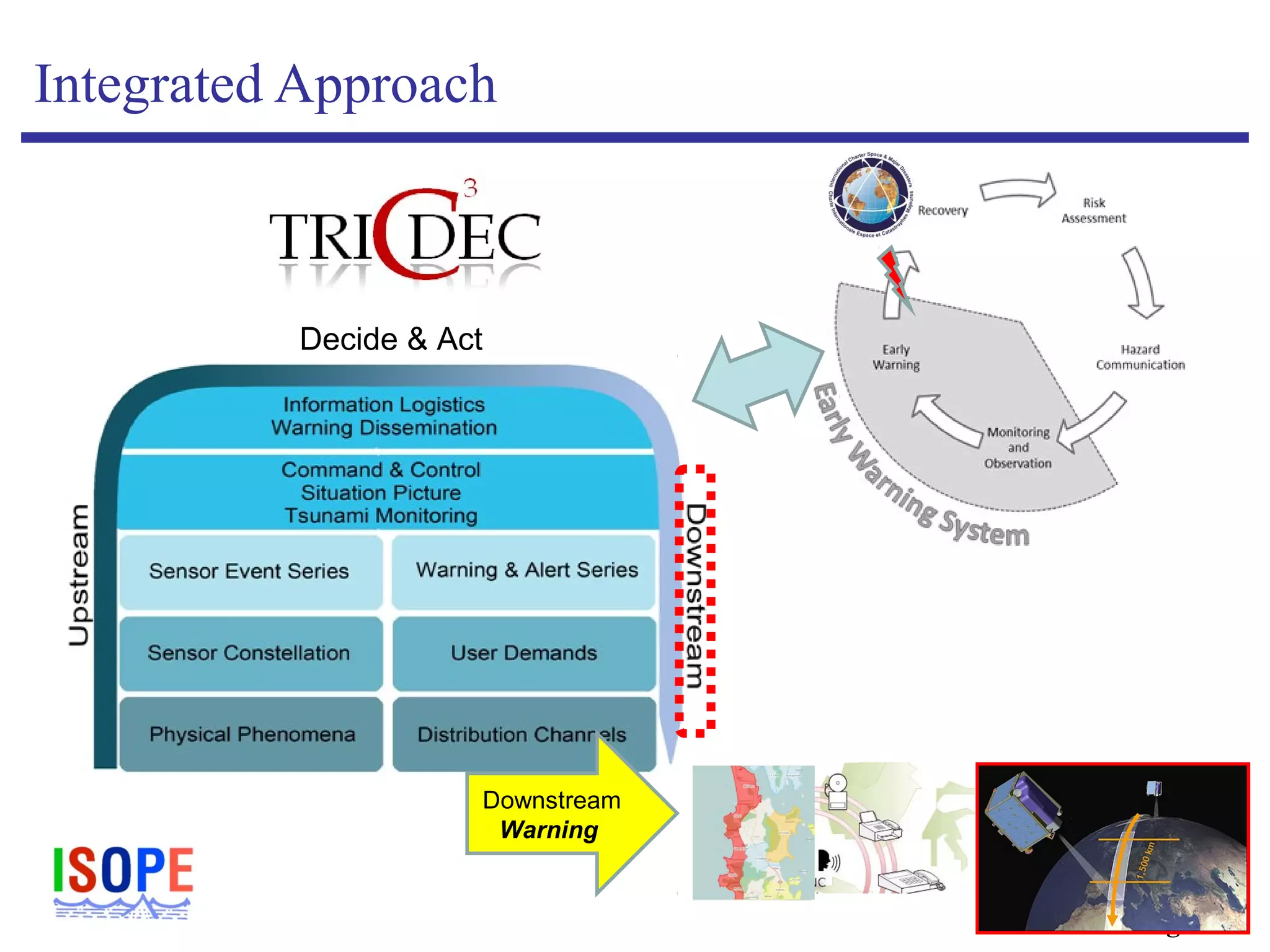

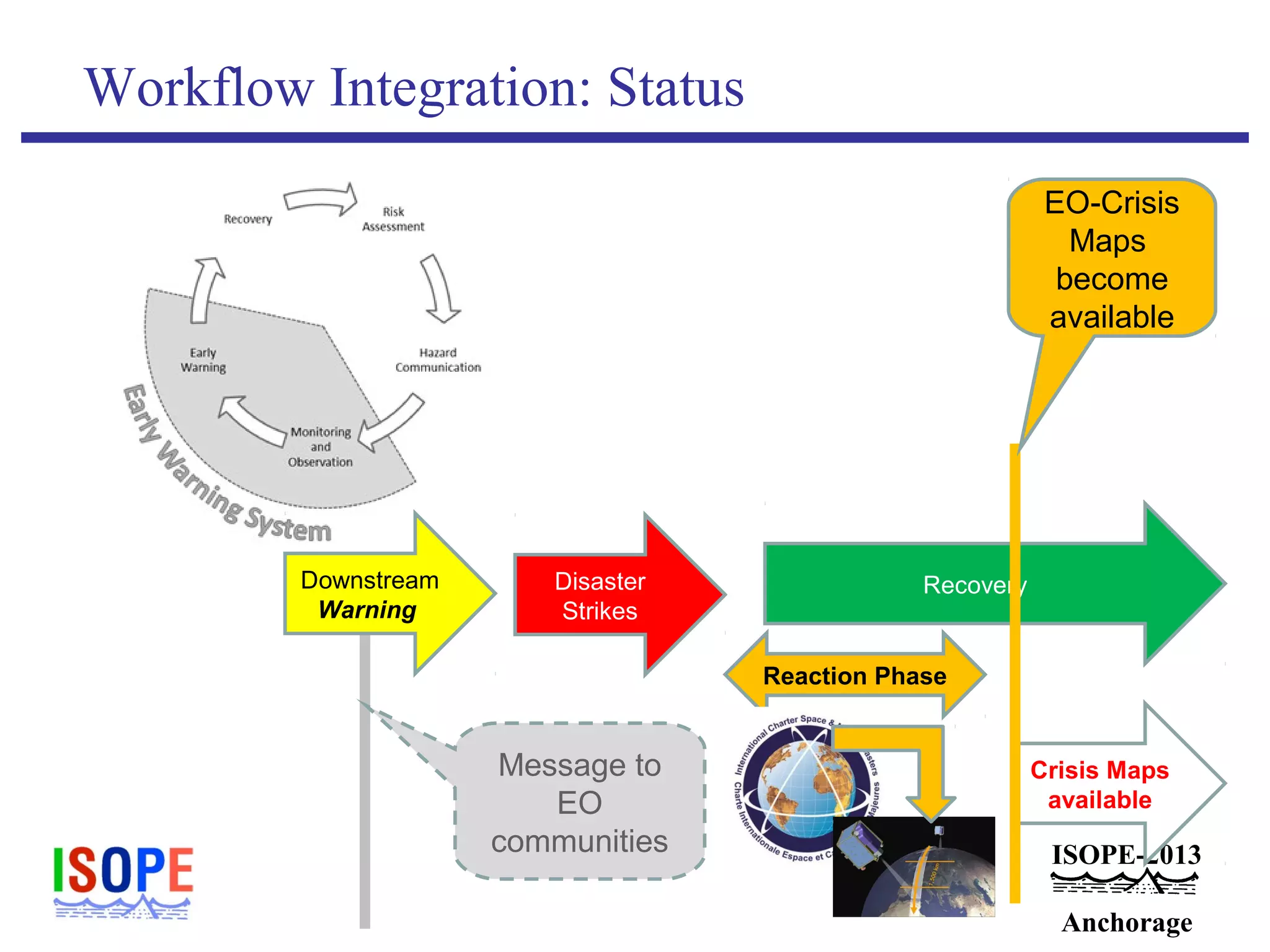

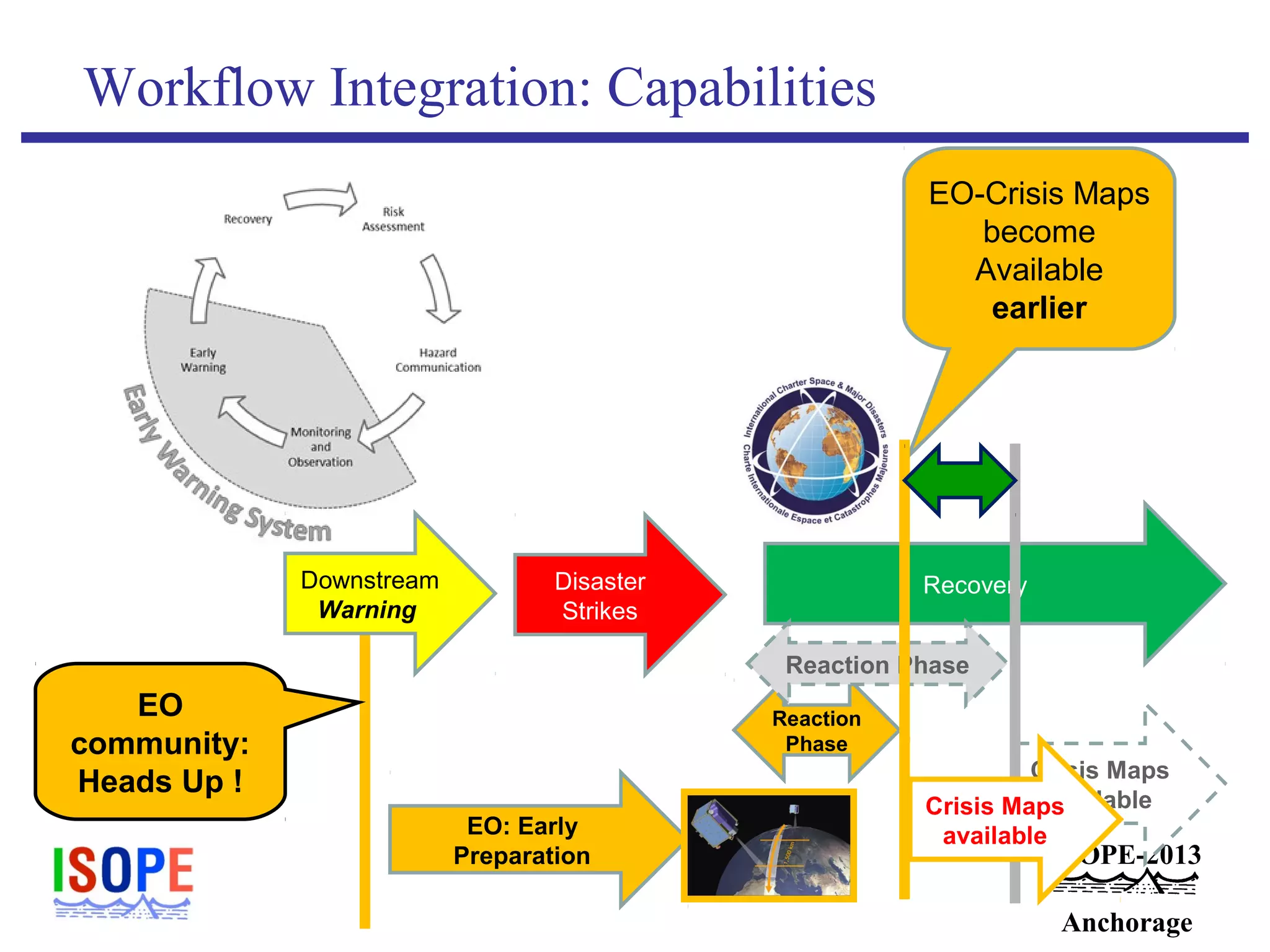

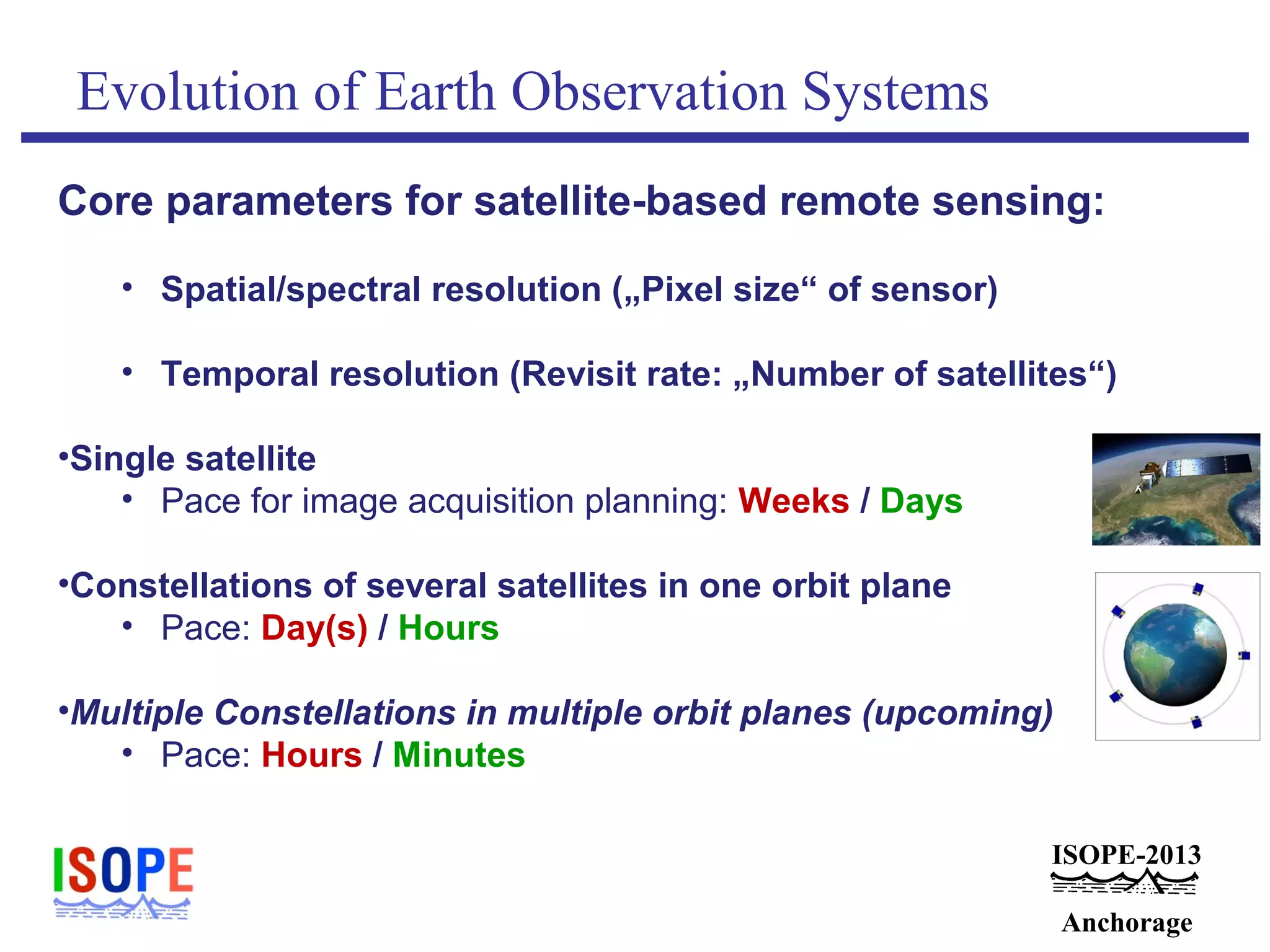

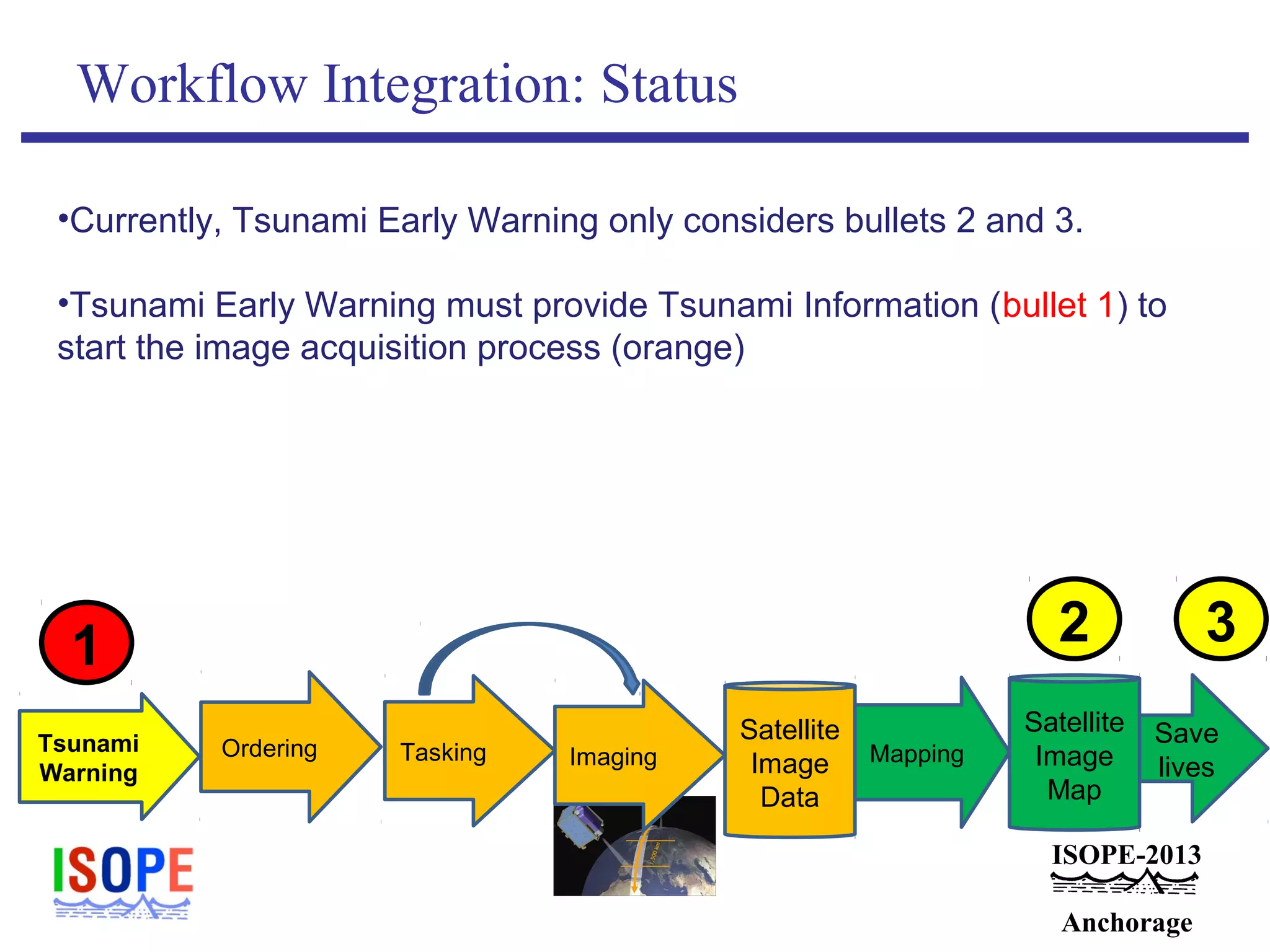

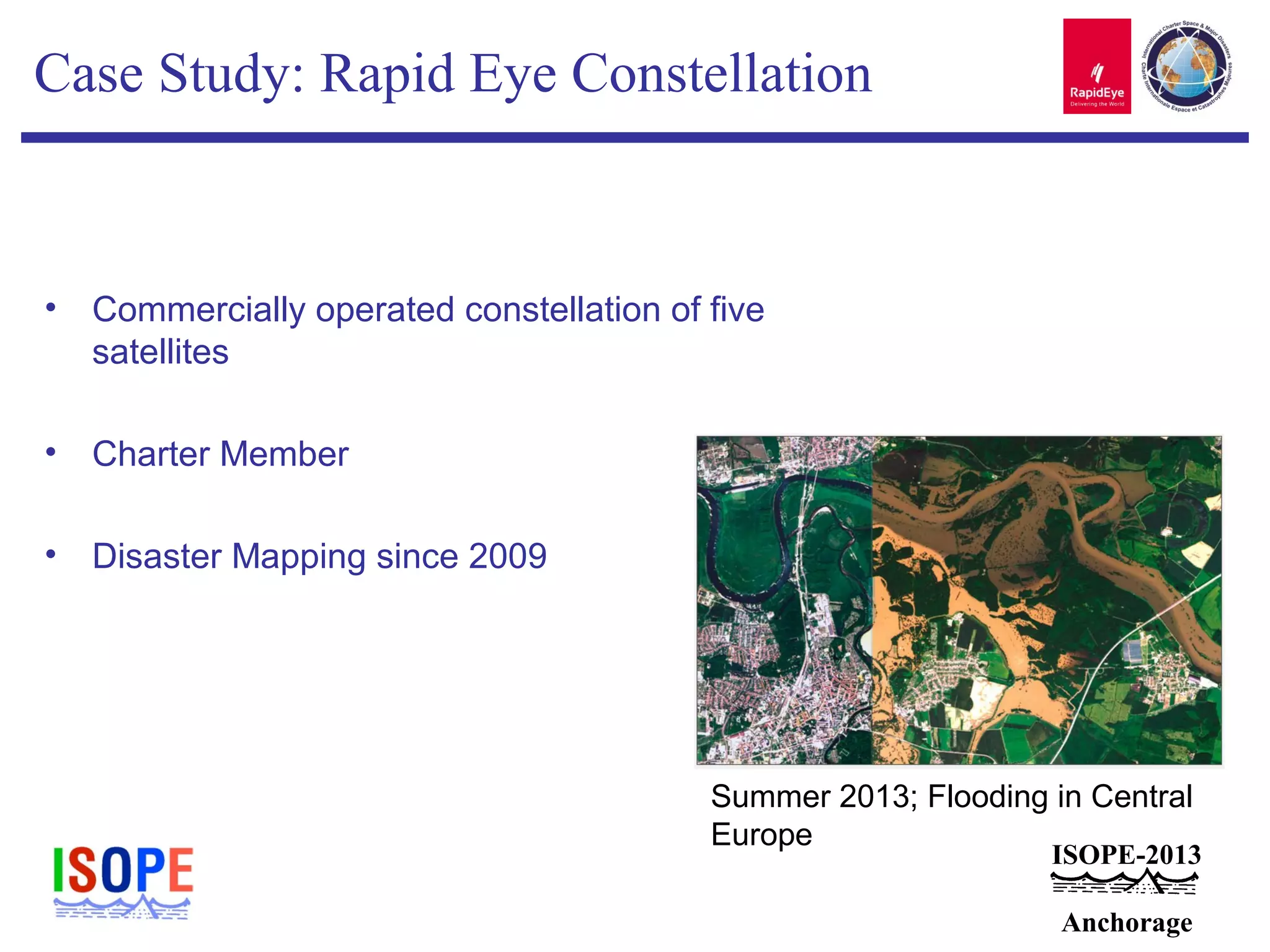

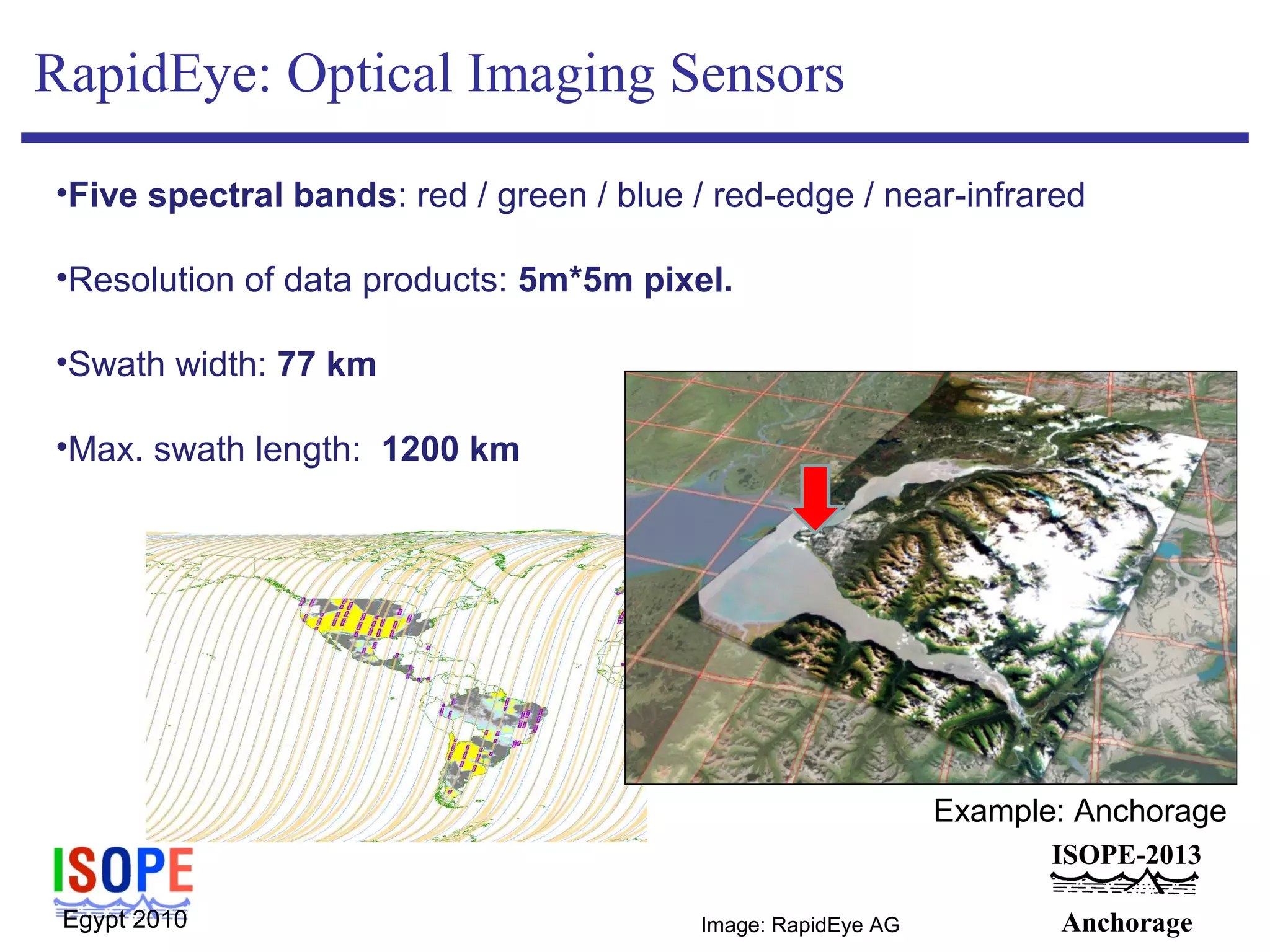

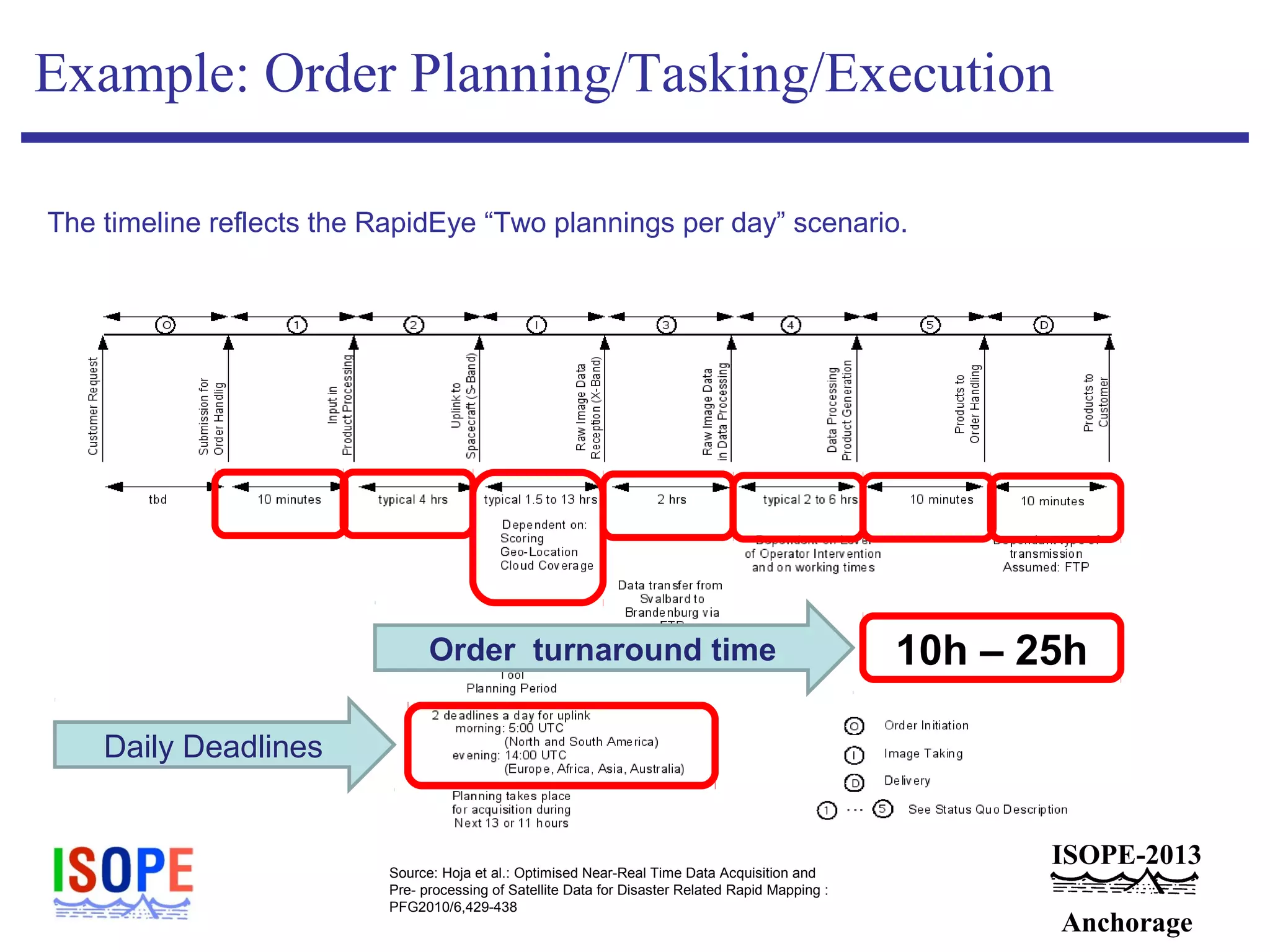

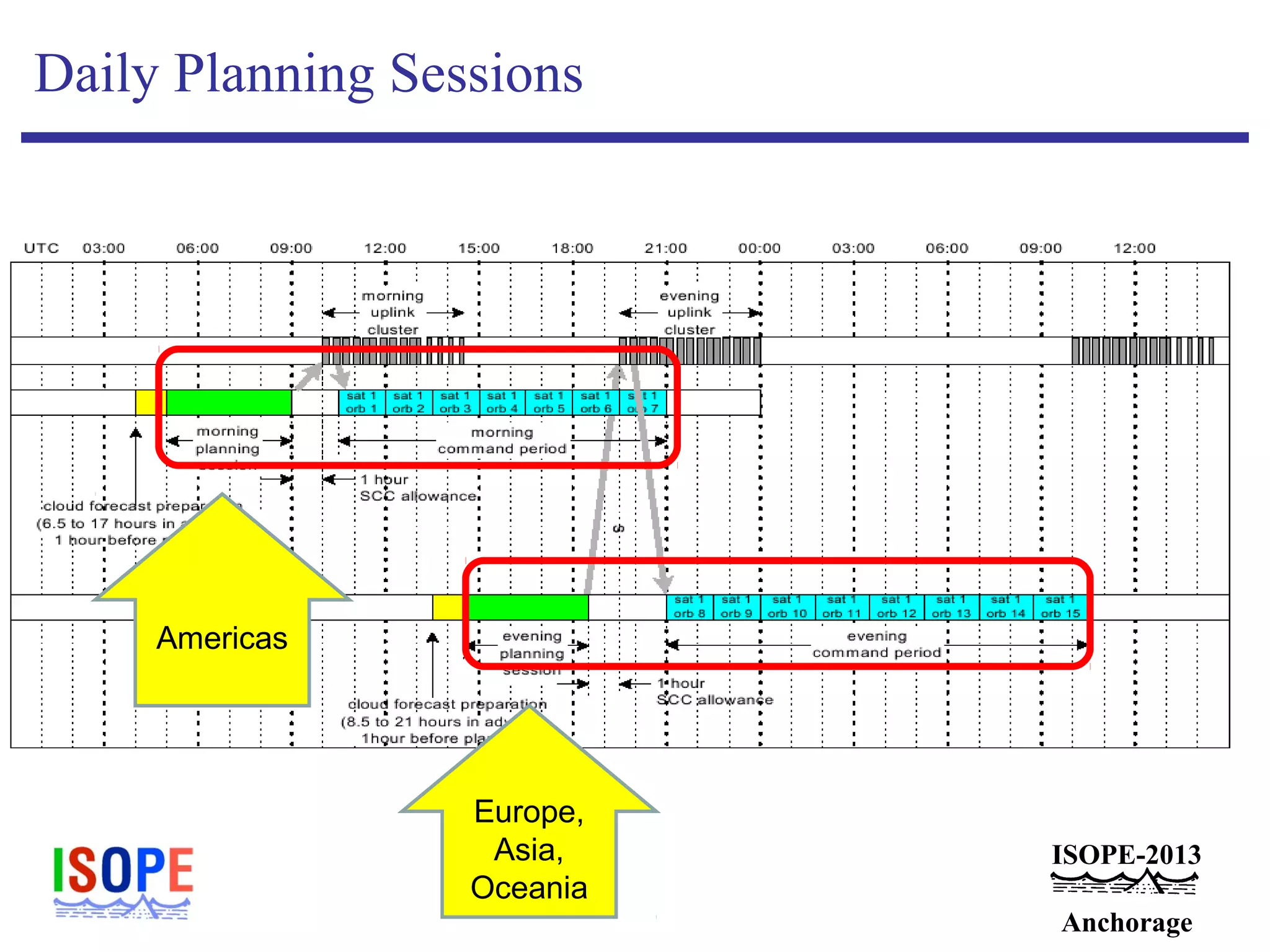

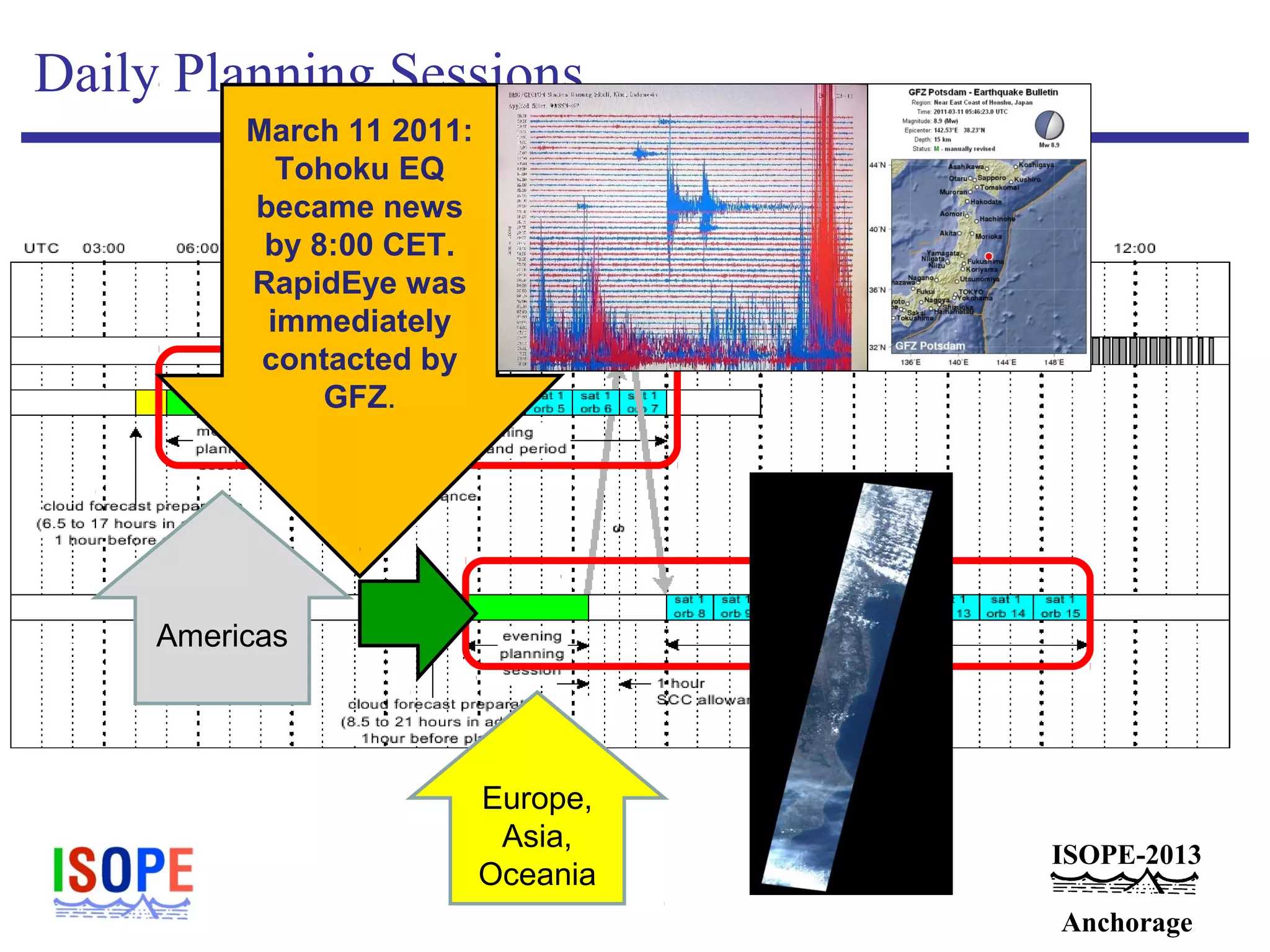

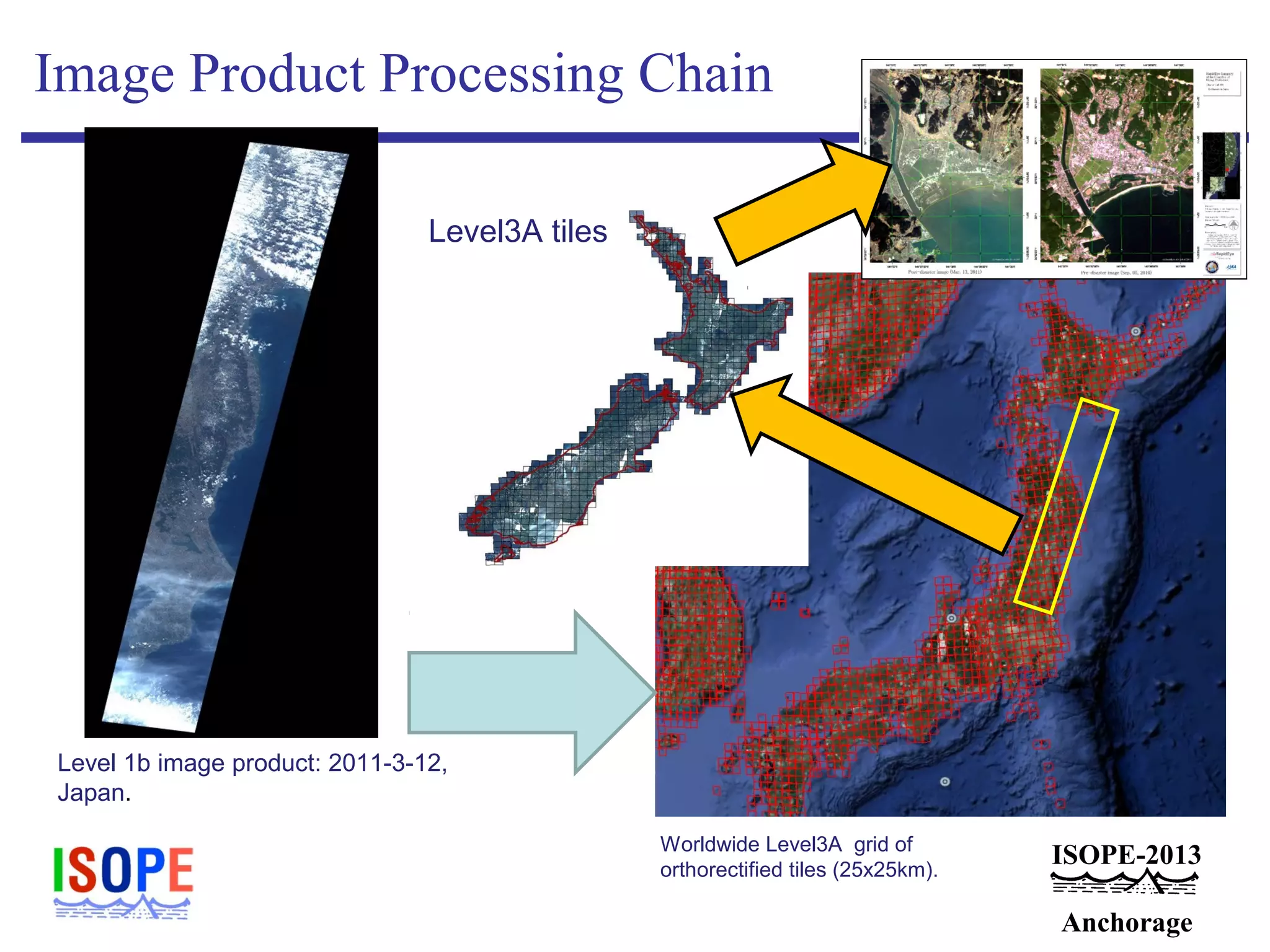

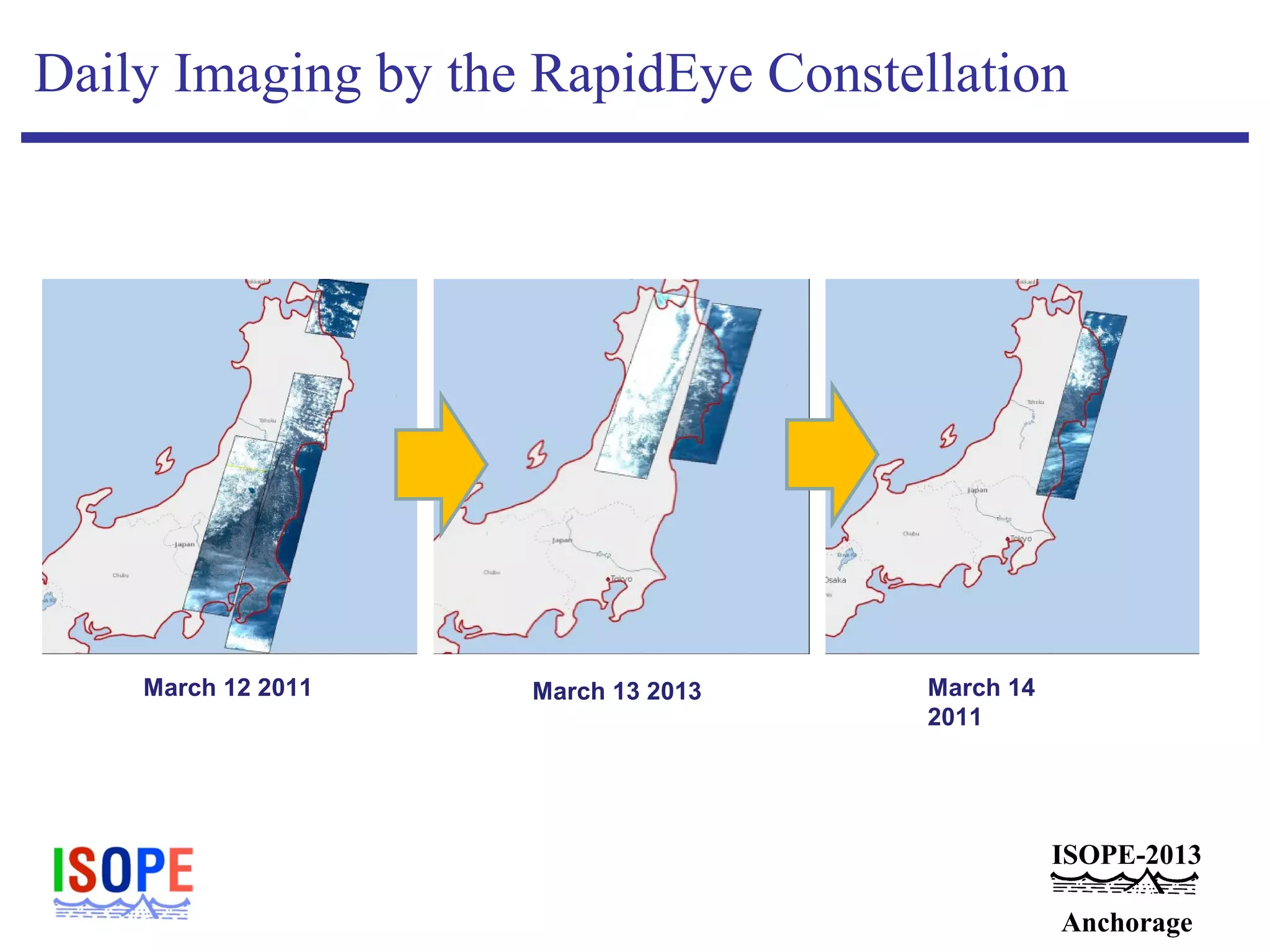

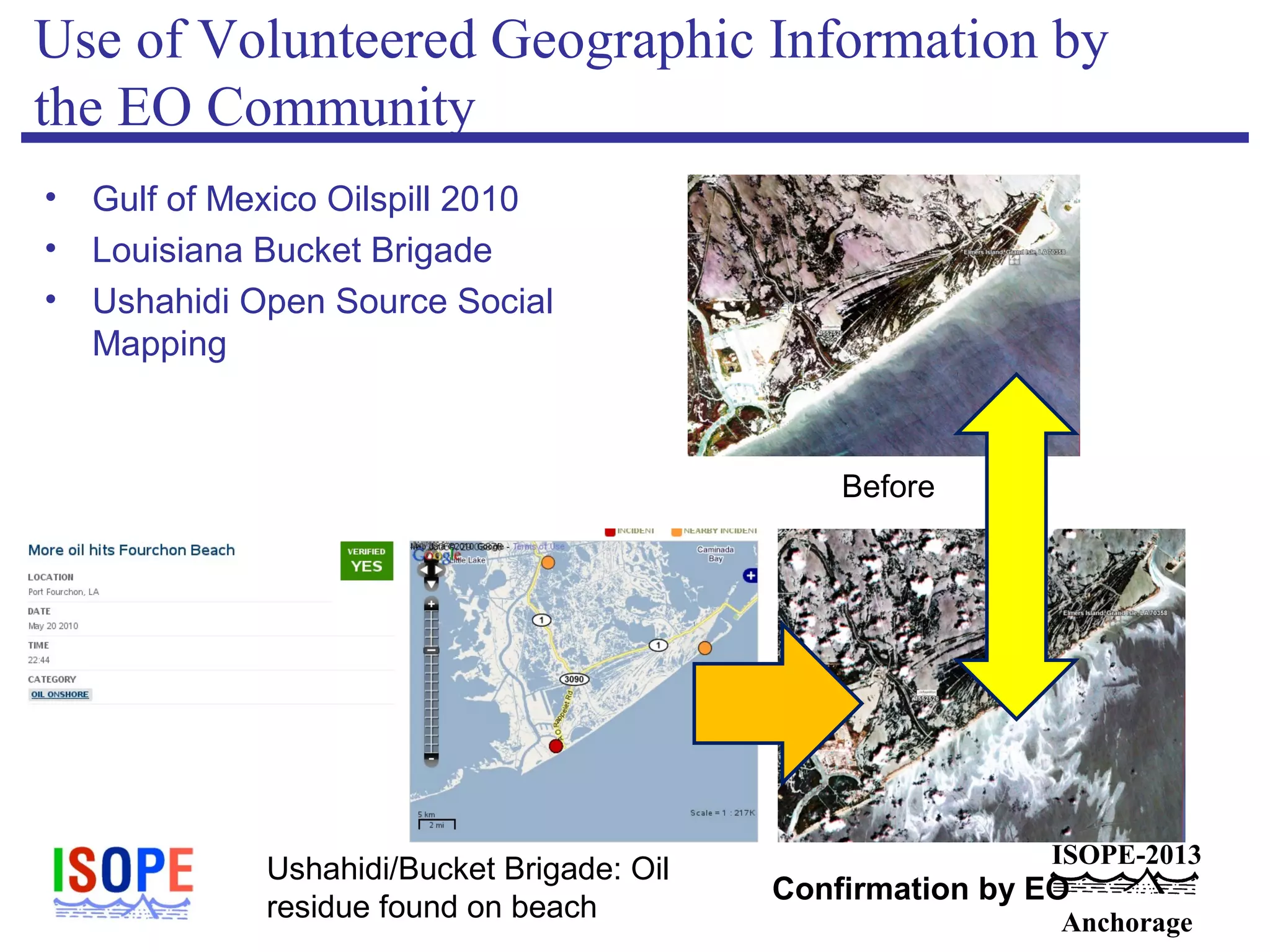

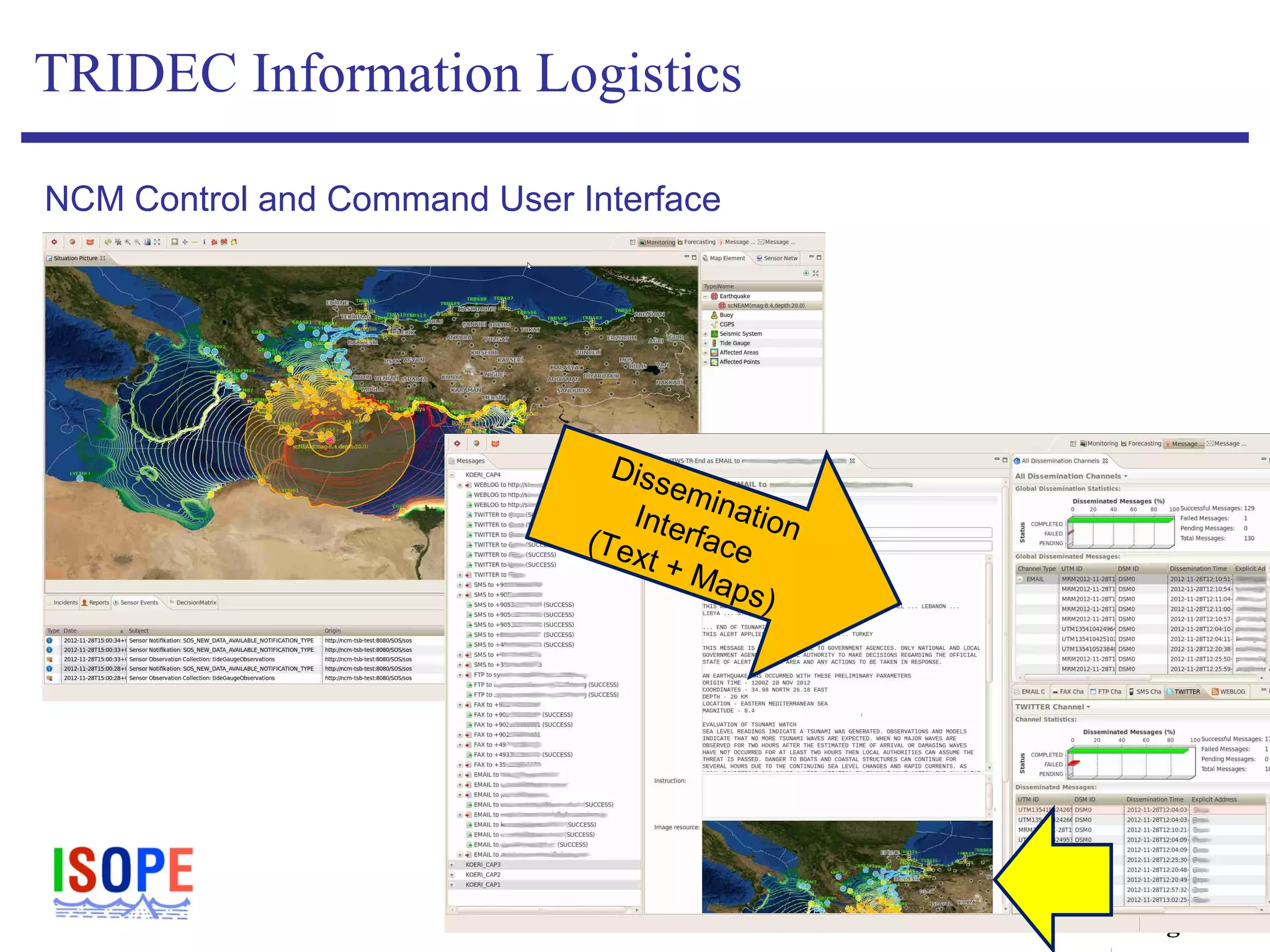

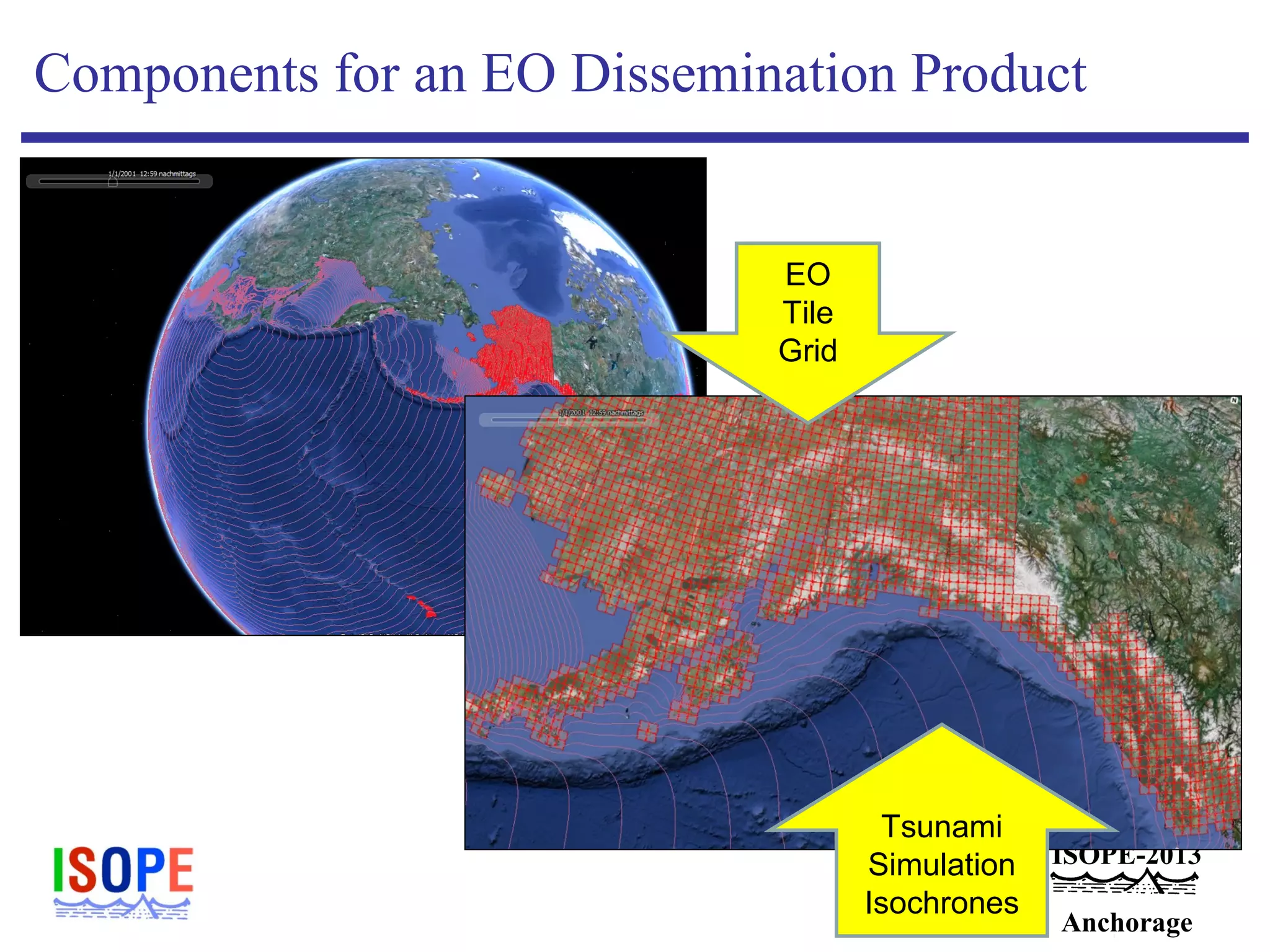

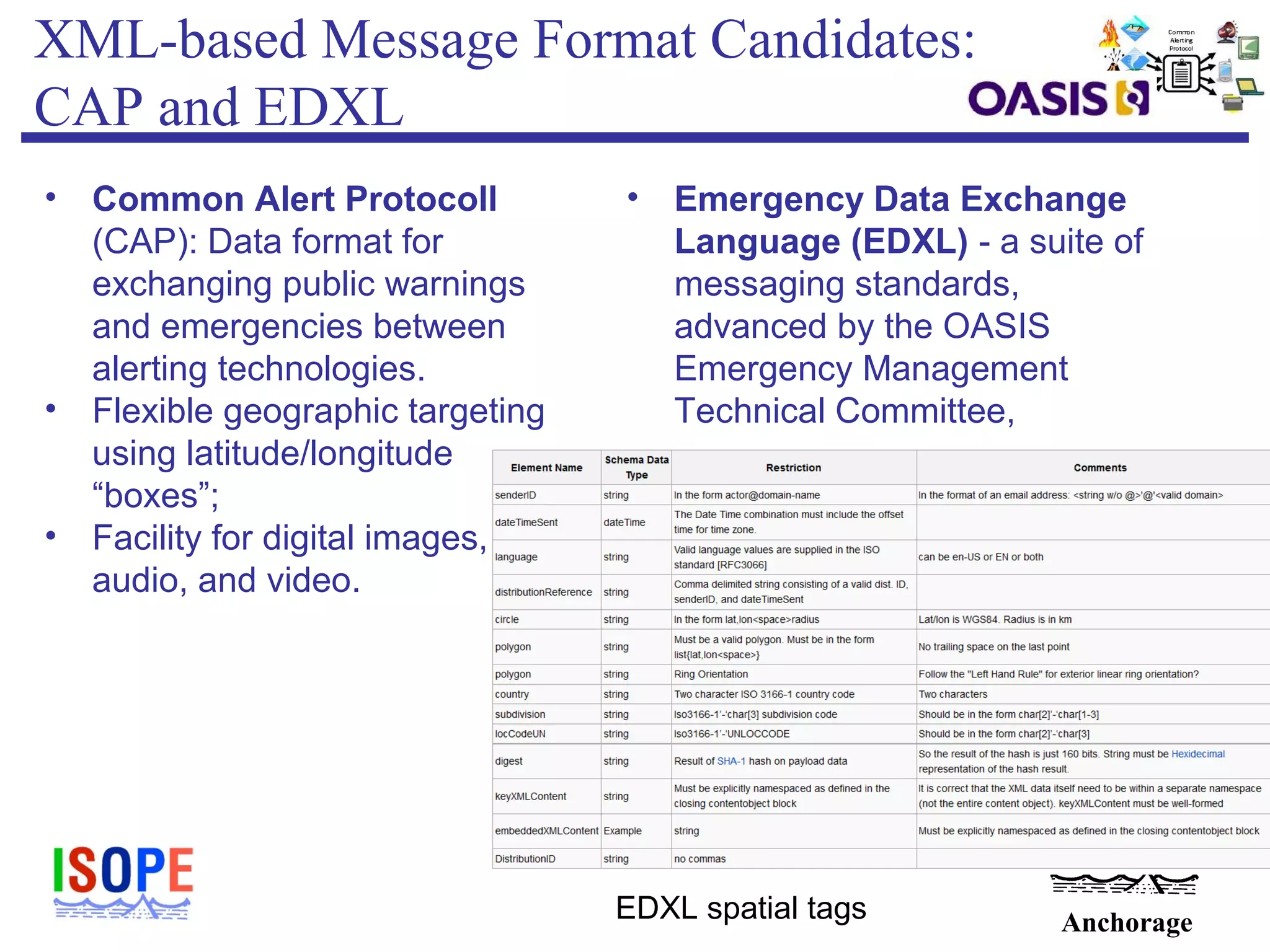

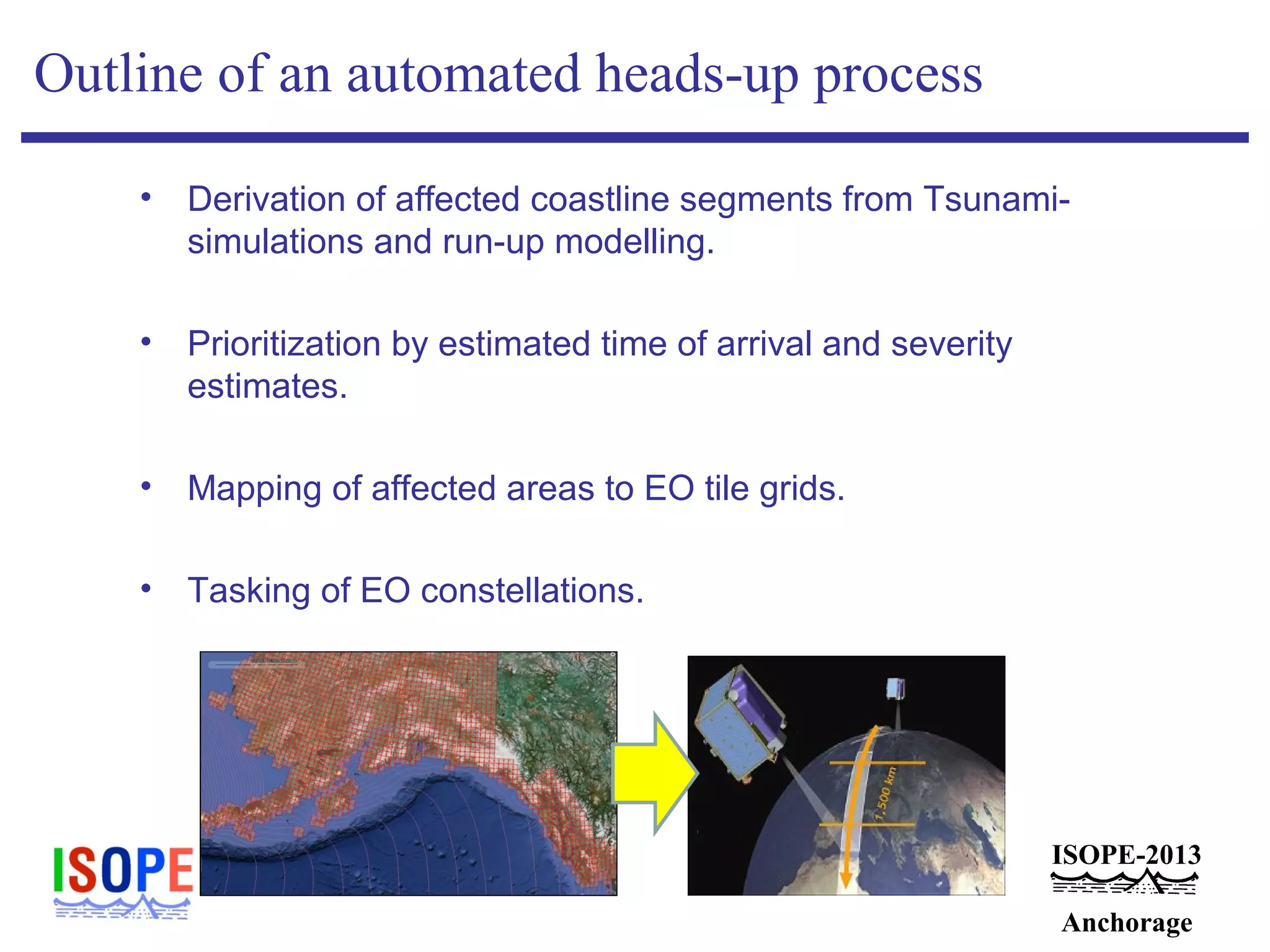

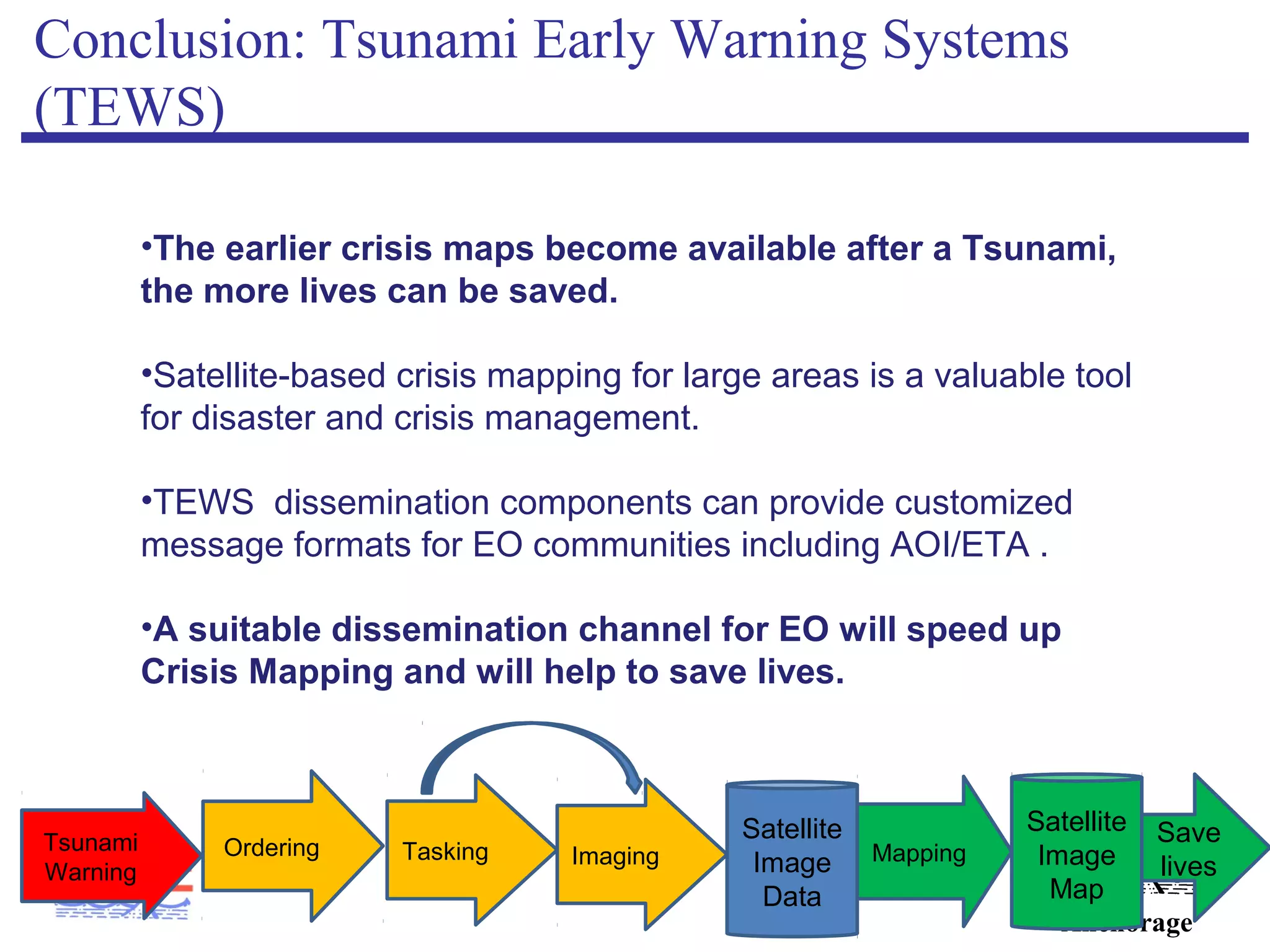

This document discusses how tsunami early warning systems could pass information to satellite operators to speed up crisis mapping after a disaster. It describes TRIDEC, a project that aims to link tsunami warnings with satellite tasking so that affected areas can be imaged sooner. During the 2011 Tohoku tsunami, satellite imagery obtained through the International Charter was used to create maps for rescue efforts. Tighter coordination between warning issuance and satellite tasking could deliver such maps earlier and further aid the response. Standard messaging formats may help disseminate early warnings to satellite operators.