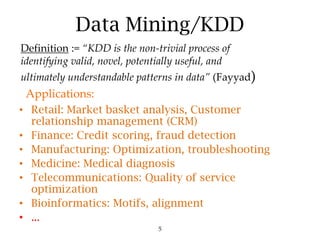

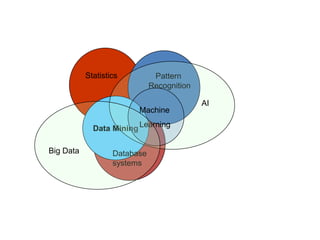

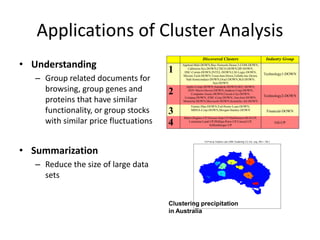

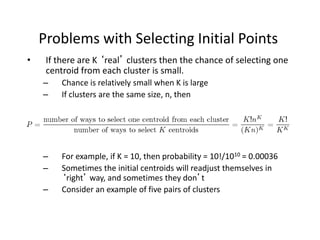

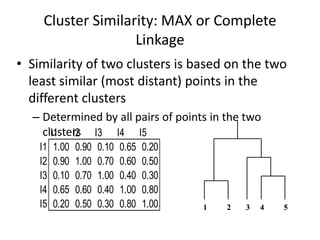

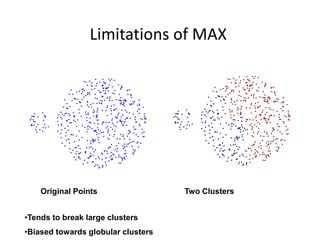

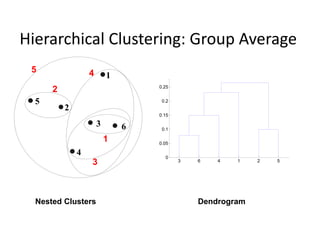

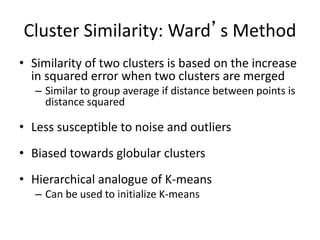

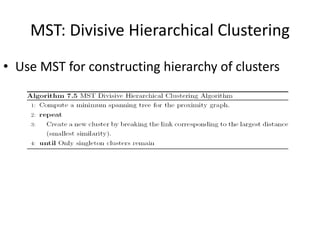

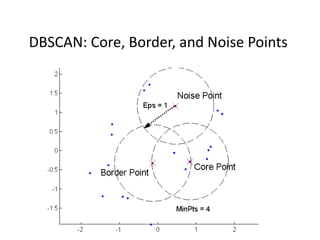

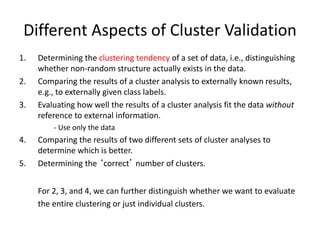

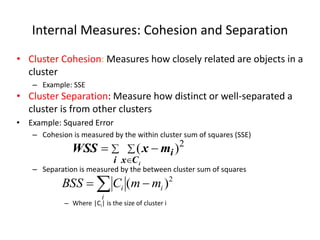

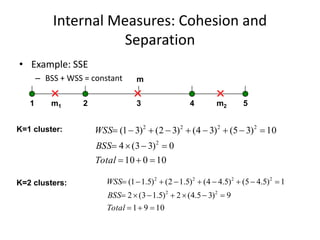

The document gives an overview of machine learning and data mining, covering their definitions, types, applications, and importance in various fields. Machine learning, a branch of artificial intelligence, focuses on predictive modeling, while data mining is concerned with discovering previously unknown patterns in data. The document also discusses clustering techniques and algorithms, such as k-means and hierarchical clustering, outlining their processes, strengths, and limitations.
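
Since the slides' own steps are not reproduced in this text, the following is only a minimal sketch of the standard k-means iteration (assign each point to its nearest centroid, then move each centroid to the mean of its members), not necessarily the exact procedure the slides present. The function name `kmeans`, the sample points, and the choice of initial centroids are all illustrative assumptions.

```python
import math


def kmeans(points, centroids, max_iter=100):
    """Standard k-means iteration on equal-length tuples.

    Hypothetical helper for illustration: the caller supplies the
    initial centroids, so the result is deterministic.
    """
    centroids = [tuple(c) for c in centroids]
    labels = []
    for _ in range(max_iter):
        # Assignment step: each point joins its nearest centroid.
        labels = [
            min(range(len(centroids)), key=lambda j: math.dist(p, centroids[j]))
            for p in points
        ]
        # Update step: move each centroid to the mean of its members.
        new_centroids = []
        for j, old in enumerate(centroids):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:
                mean = tuple(sum(col) / len(members) for col in zip(*members))
                new_centroids.append(mean)
            else:
                # An empty cluster keeps its previous centroid.
                new_centroids.append(old)
        # Stop once the centroids no longer move.
        if new_centroids == centroids:
            break
        centroids = new_centroids
    return labels, centroids


# Made-up 2-D data with two visually separate groups.
points = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5), (8.0, 8.0), (9.0, 9.5), (8.5, 9.0)]
labels, centroids = kmeans(points, centroids=[points[0], points[3]])
print(labels)
print(centroids)
```

The sketch also hints at two commonly cited limitations of k-means: the number of clusters must be chosen up front, and the result depends on the initial centroids, which is why they are passed in explicitly here rather than picked at random.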