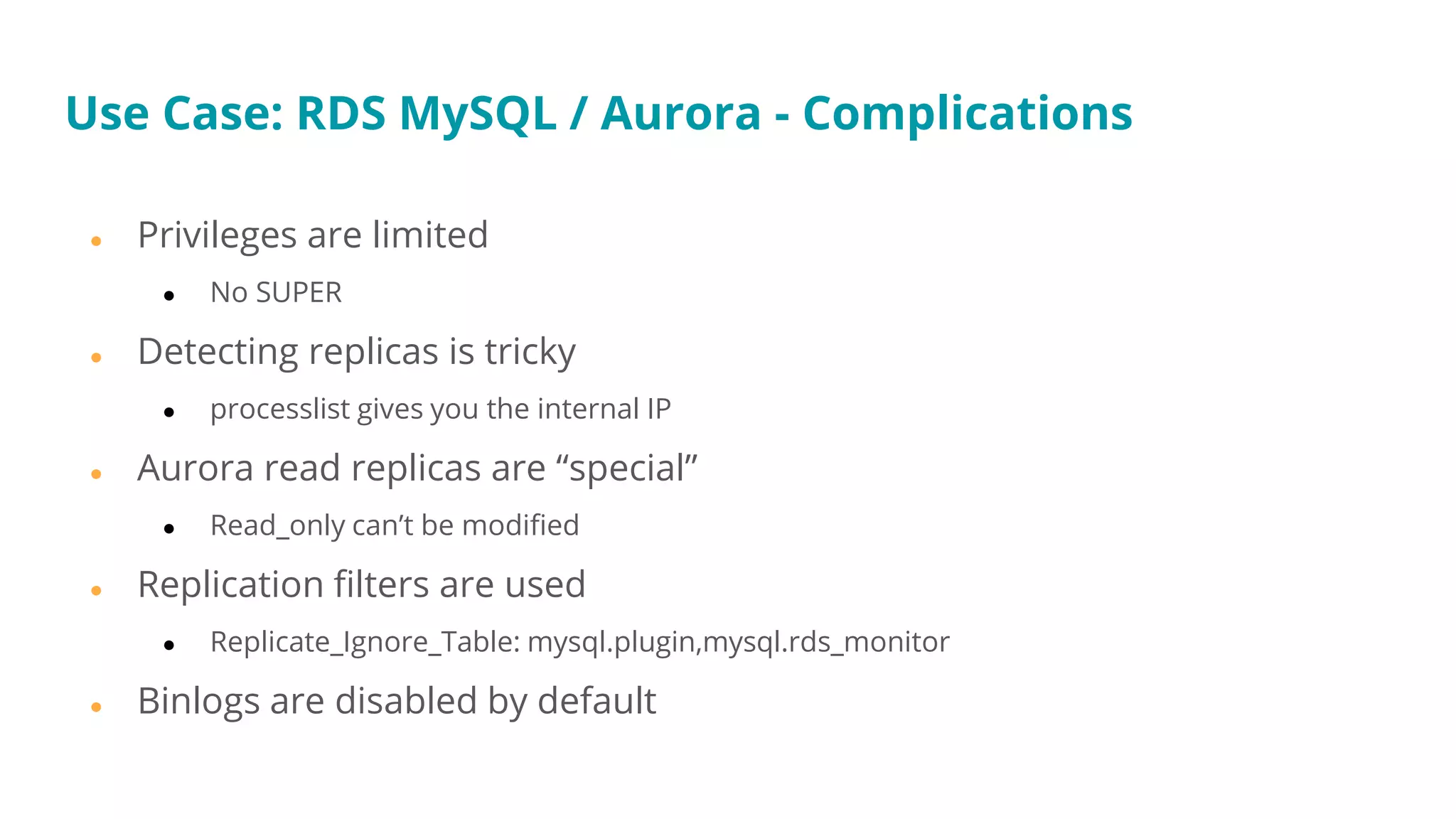

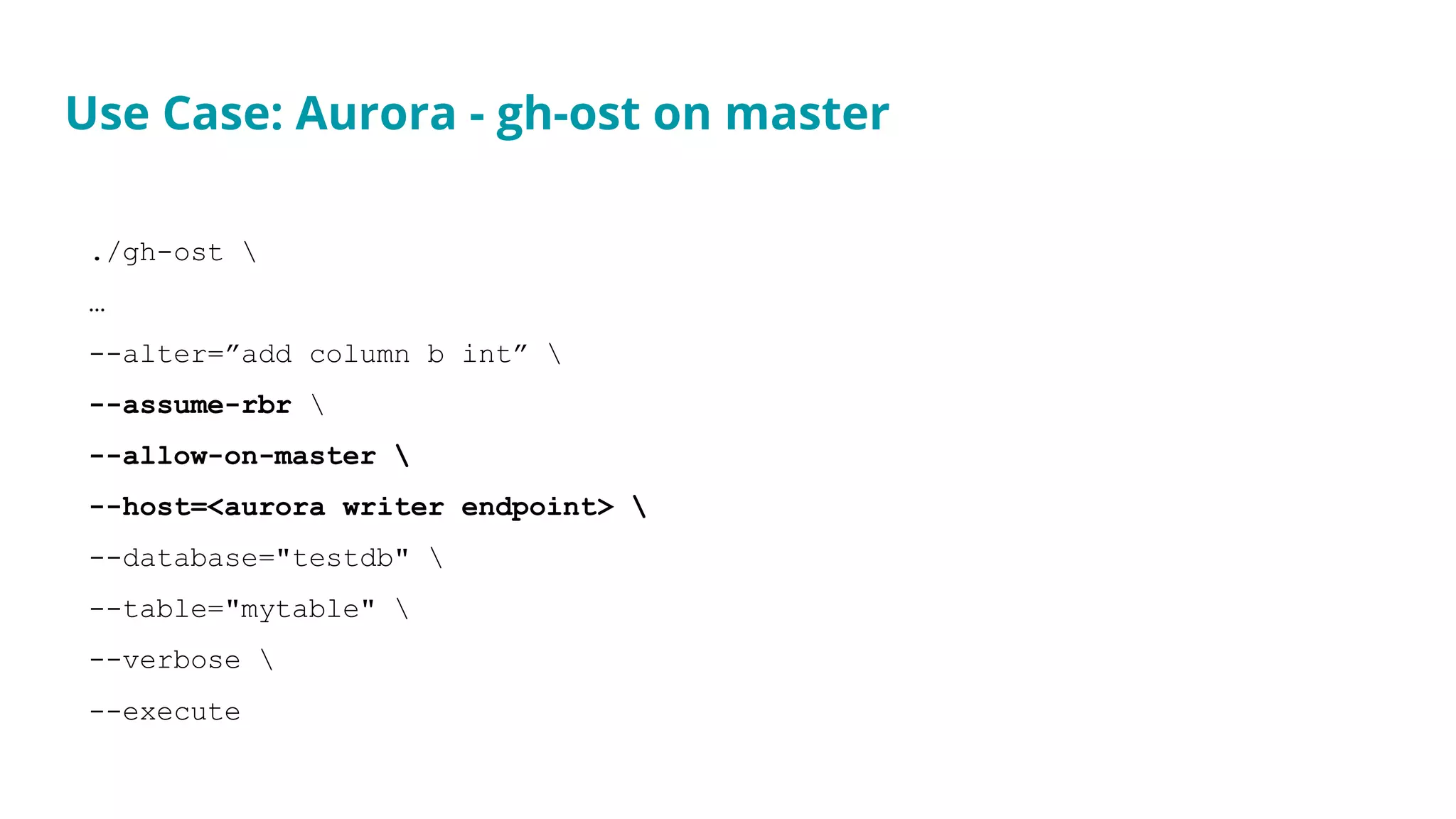

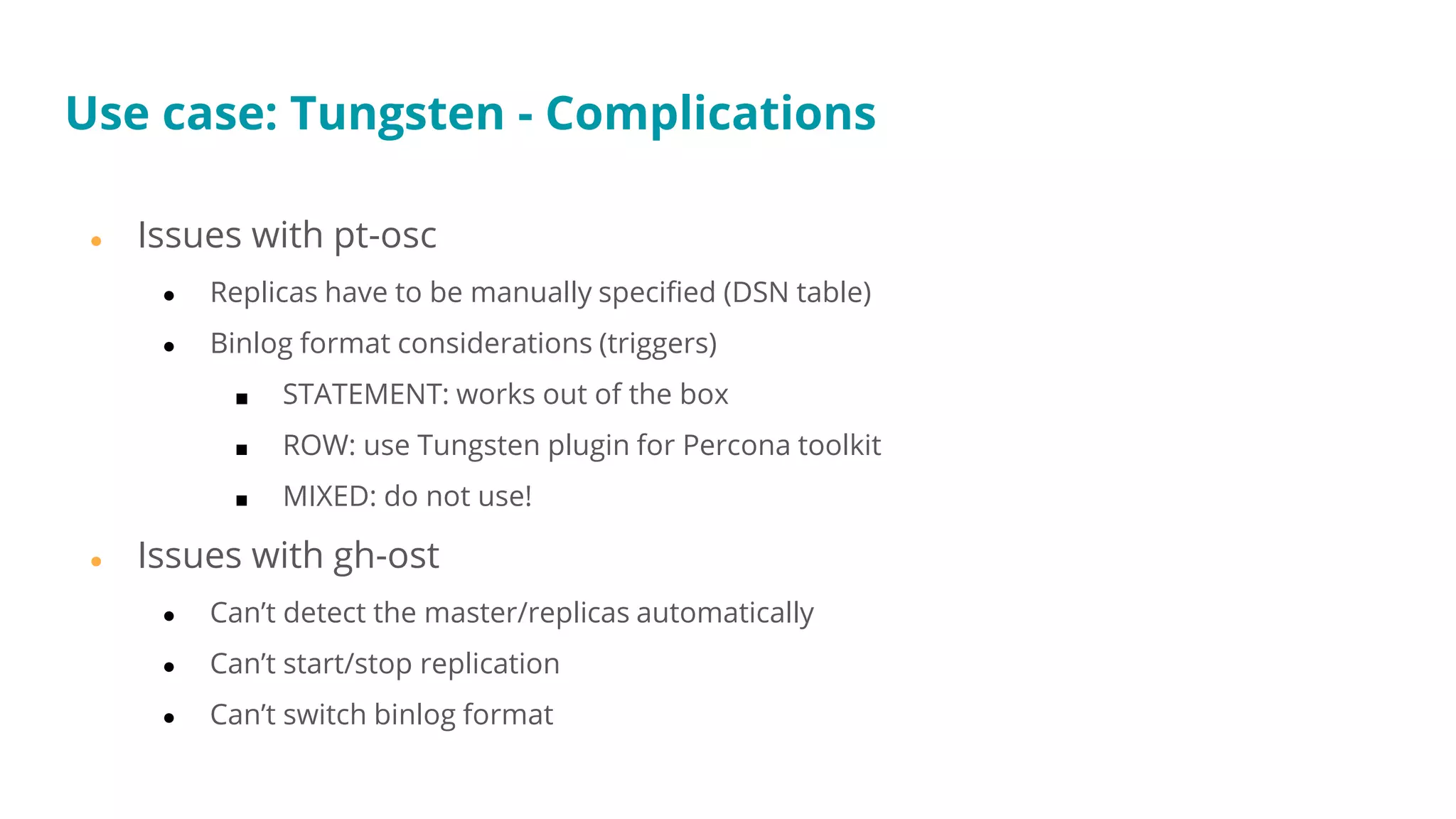

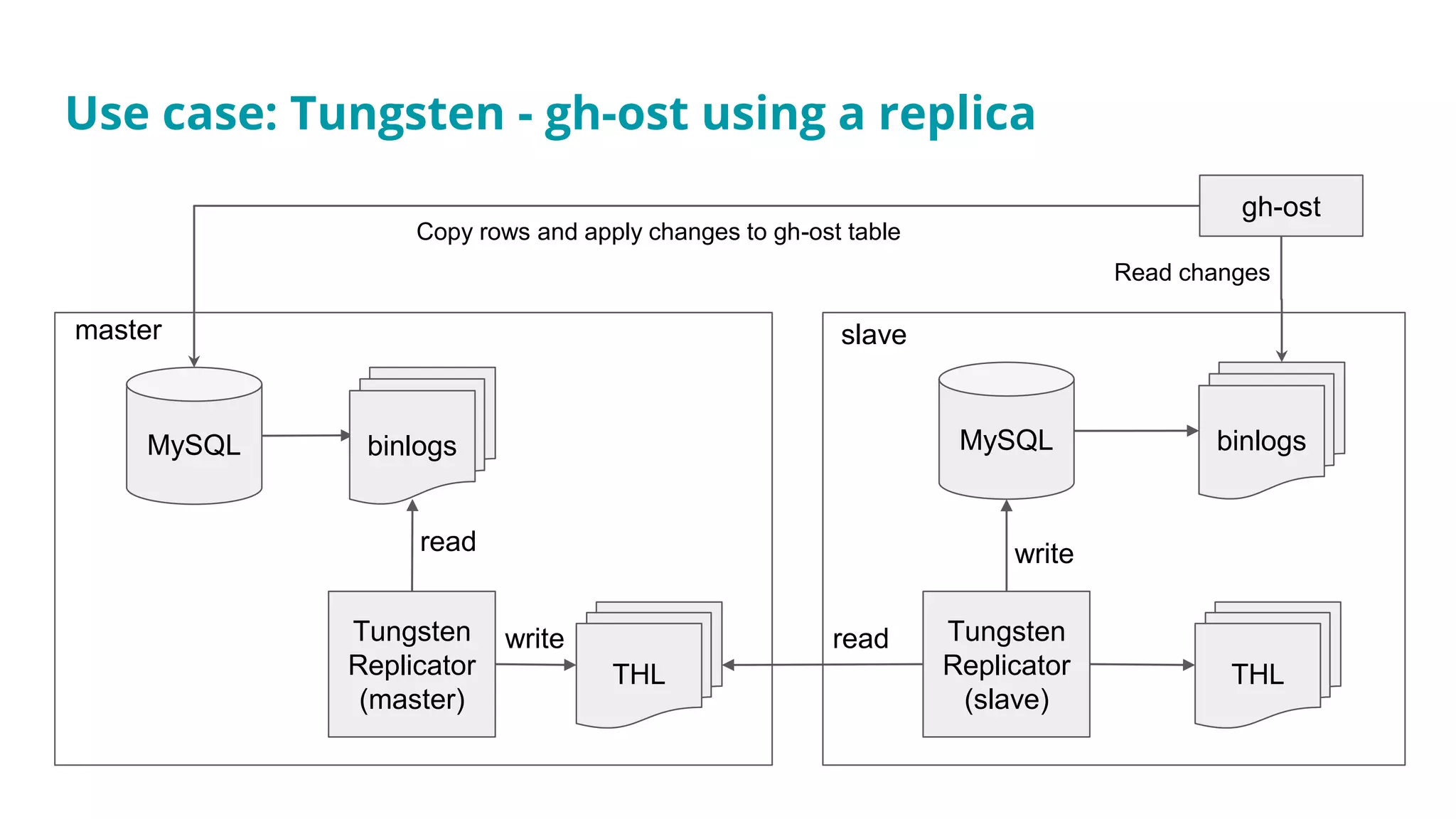

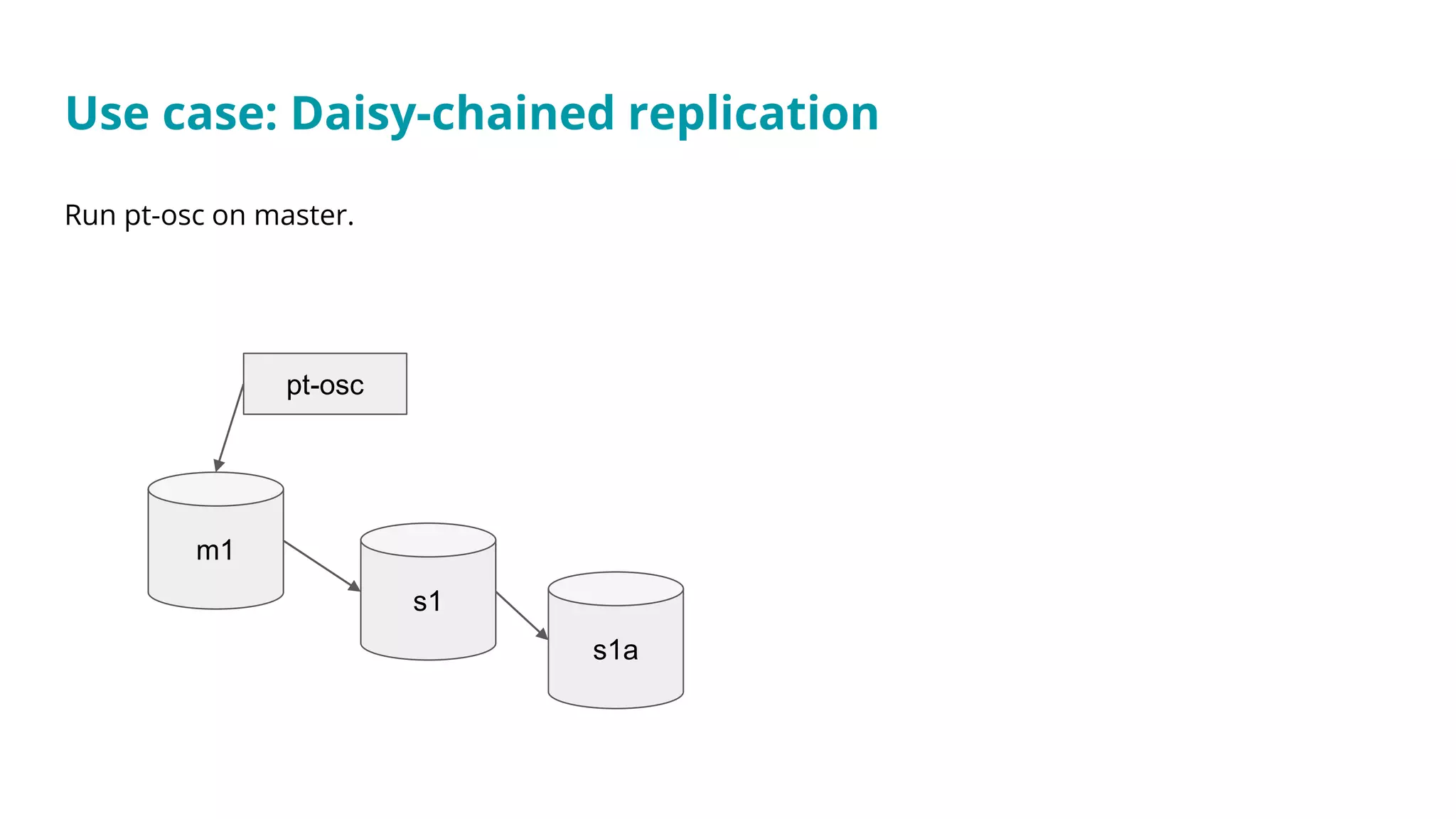

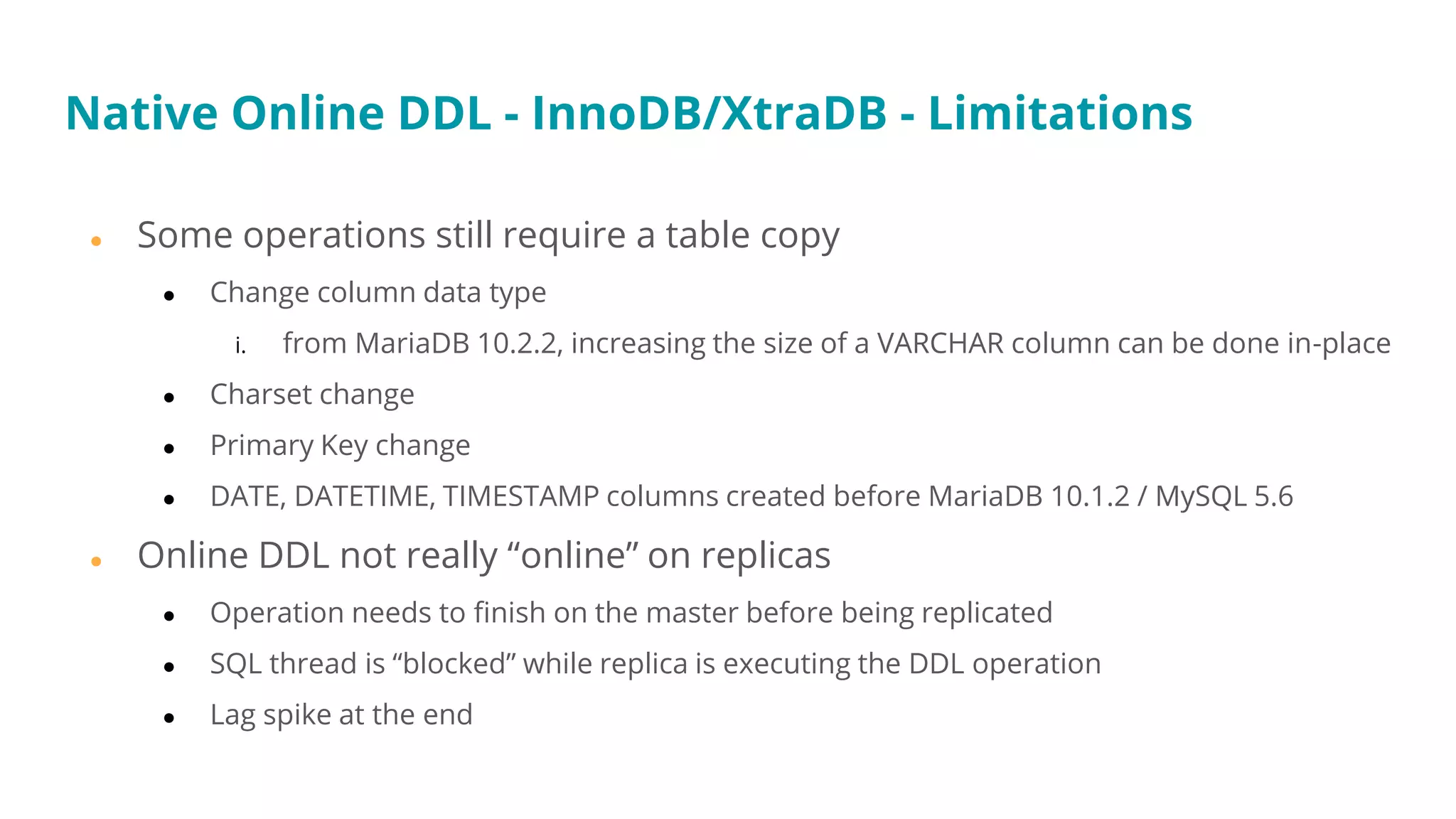

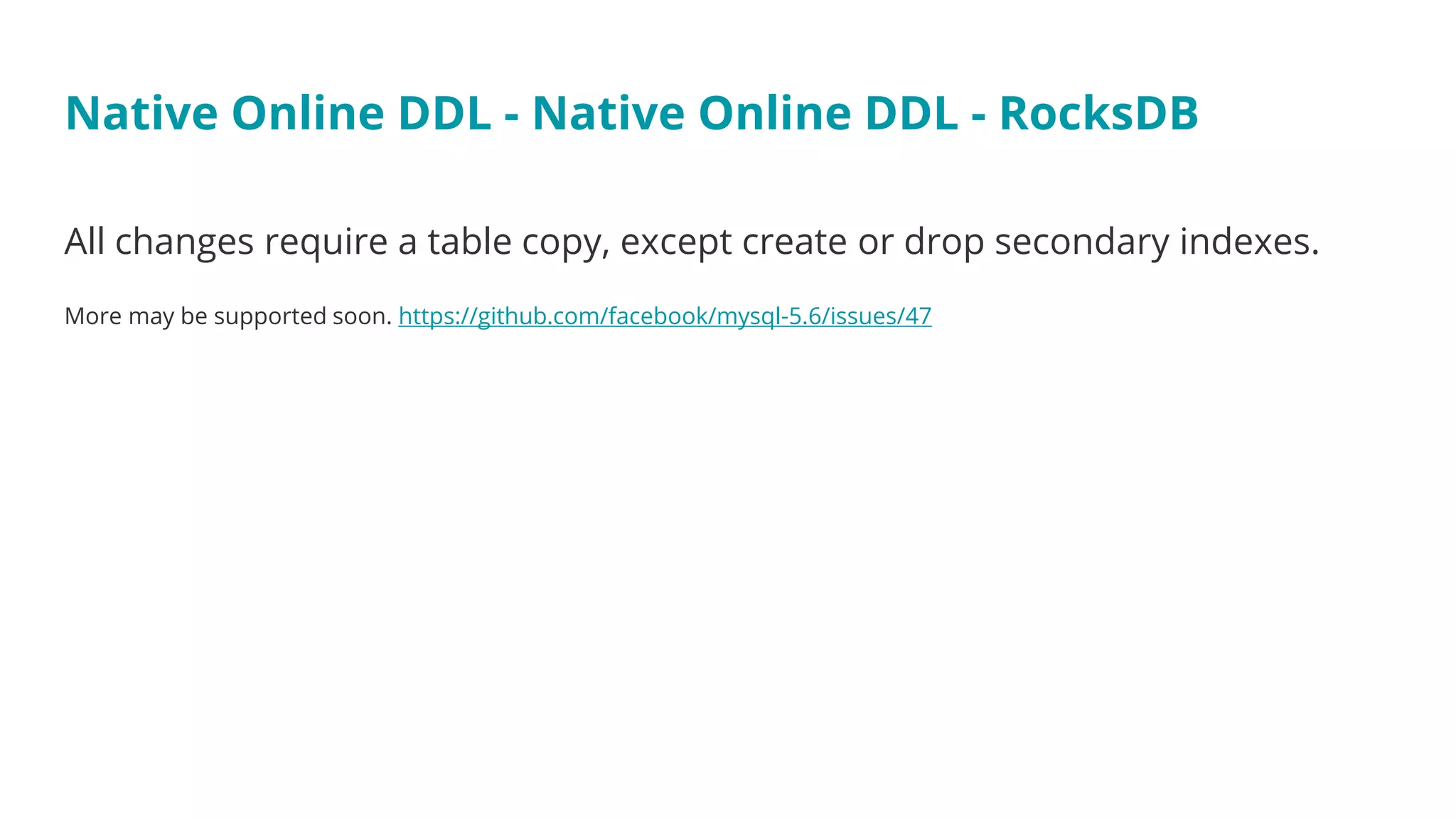

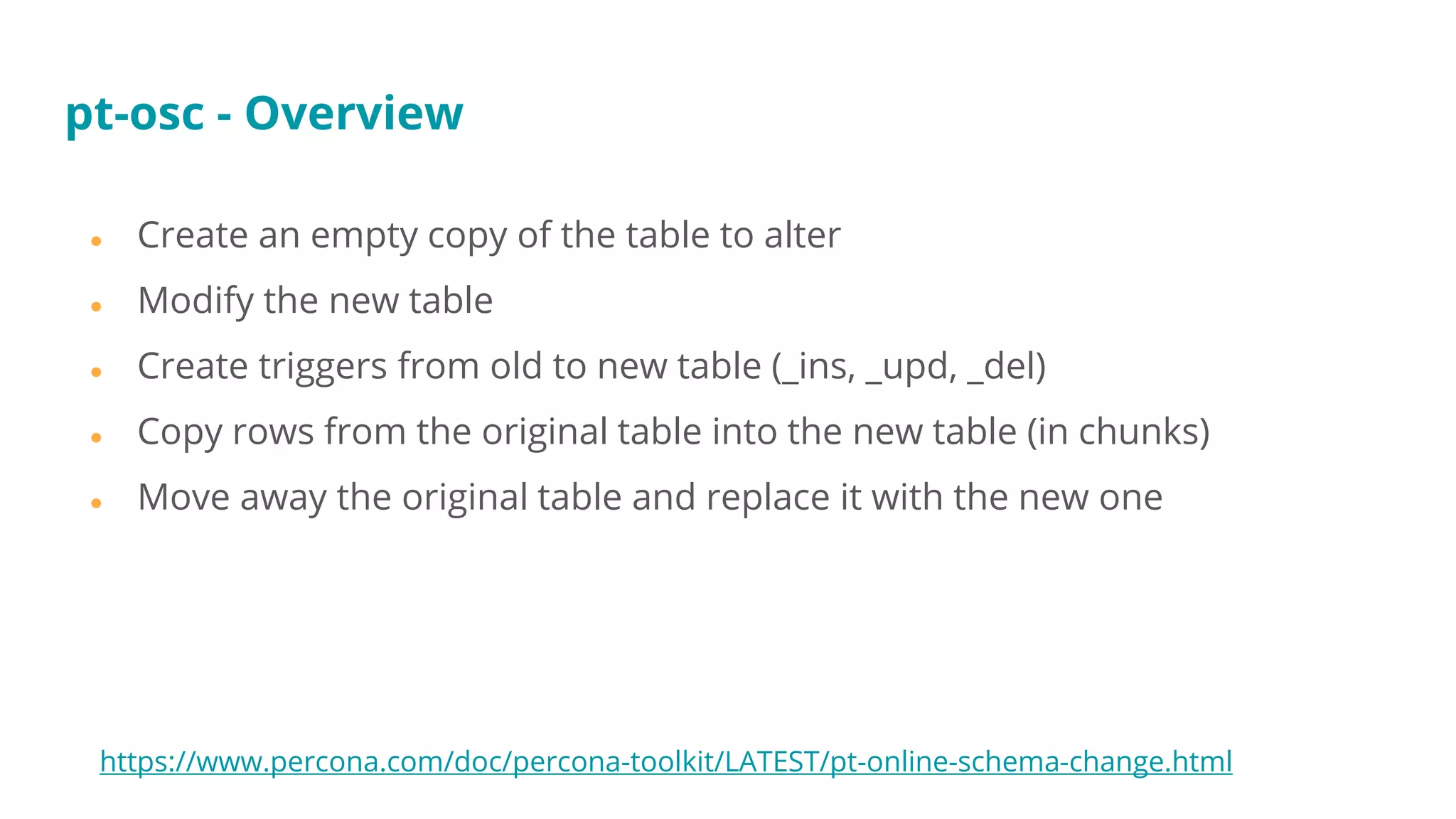

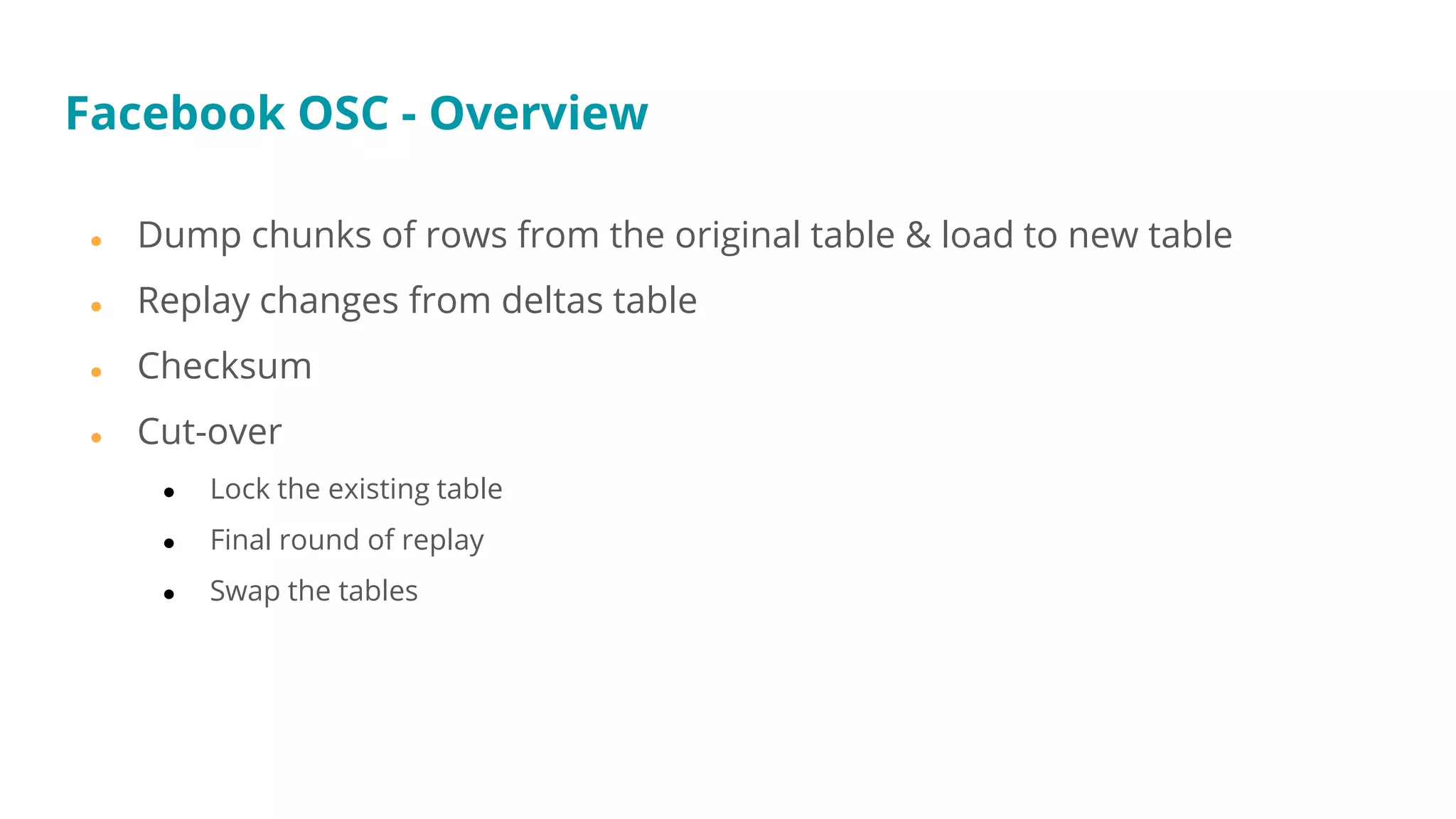

This document compares methods for performing online schema changes in databases. It covers native online DDL in MySQL/MariaDB and TokuDB, and alternatives such as rolling schema updates, downtime windows, and the pt-online-schema-change tool. It outlines the features, limitations, and special cases to consider for different workloads and replication scenarios.

![gh-ost - Interactive commands](https://image.slidesharecdn.com/sessison5-180306225454/75/M-18-Battle-of-the-Online-Schema-Change-Methods-43-2048.jpg)

- Send commands via Unix socket
  - `echo 'status' | nc -U /tmp/gh-ost.test.sock`
  - `echo 'chunk-size=?' | nc -U /tmp/gh-ost.test.sock`
  - `echo 'chunk-size=2500' | nc -U /tmp/gh-ost.test.sock`
  - `echo '[no-]throttle' | nc -U /tmp/gh-ost.test.sock` (that is, `throttle` or `no-throttle`)
    - Make sure binlogs don't get purged!
- Delay cutover
  - `--postpone-cut-over-flag-file=/tmp/file`
  - `echo unpostpone | nc -U /tmp/gh-ost.test.sock`

https://github.com/github/gh-ost/blob/master/doc/interactive-commands.md
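The interactive commands on this slide can be wrapped in a small shell helper so the socket path and quoting live in one place. This is a minimal sketch, not part of gh-ost: the function name `gh_ost_cmd` and the `GH_OST_SOCK` variable are hypothetical, and the default socket path is simply the one shown on the slide. It assumes an `nc` build that supports `-U` for Unix sockets.

```shell
#!/usr/bin/env bash
# Hypothetical helper: gh_ost_cmd and GH_OST_SOCK are illustrative names,
# not something gh-ost provides. The default path is the one from the slide.
GH_OST_SOCK="${GH_OST_SOCK:-/tmp/gh-ost.test.sock}"

# Send one interactive command to gh-ost over its Unix socket and
# print whatever gh-ost writes back.
gh_ost_cmd() {
  if [ "$#" -ne 1 ]; then
    echo "usage: gh_ost_cmd <command>" >&2
    return 2
  fi
  # printf sends the command text exactly as given, followed by a newline.
  printf '%s\n' "$1" | nc -U "$GH_OST_SOCK"
}

# Example usage (requires a running gh-ost migration):
#   gh_ost_cmd status
#   gh_ost_cmd 'chunk-size=?'     # quoted so the shell does not glob the '?'
#   gh_ost_cmd chunk-size=2500
#   gh_ost_cmd throttle
#   gh_ost_cmd no-throttle
#   gh_ost_cmd unpostpone
```

Two quoting details are worth keeping in mind when copying commands from slides: typographic quotes (‘ ’) are not shell quotes and would be sent to gh-ost as part of the command text, and an unquoted `?` is a glob character that the shell may expand if a matching file name exists in the current directory.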