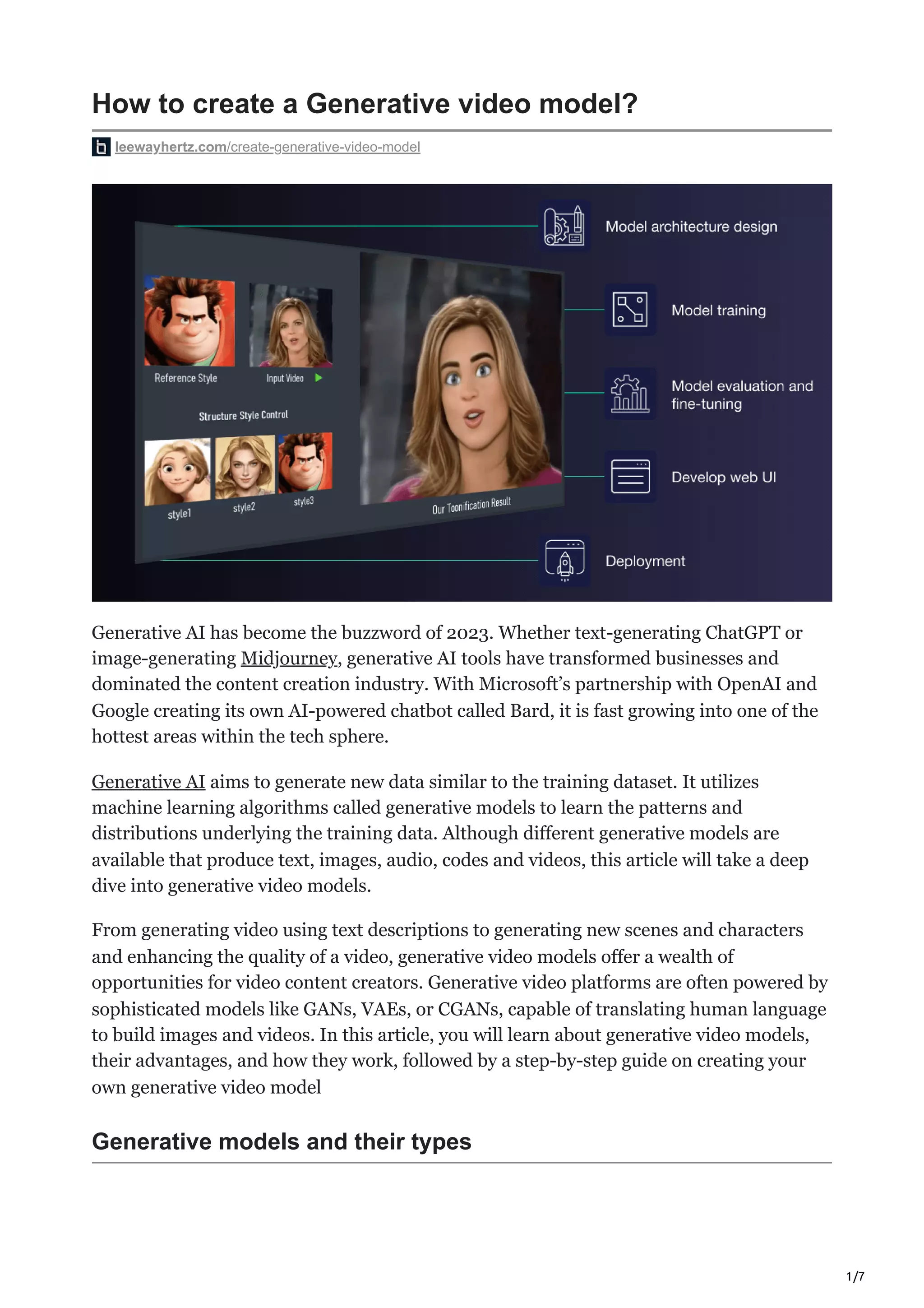

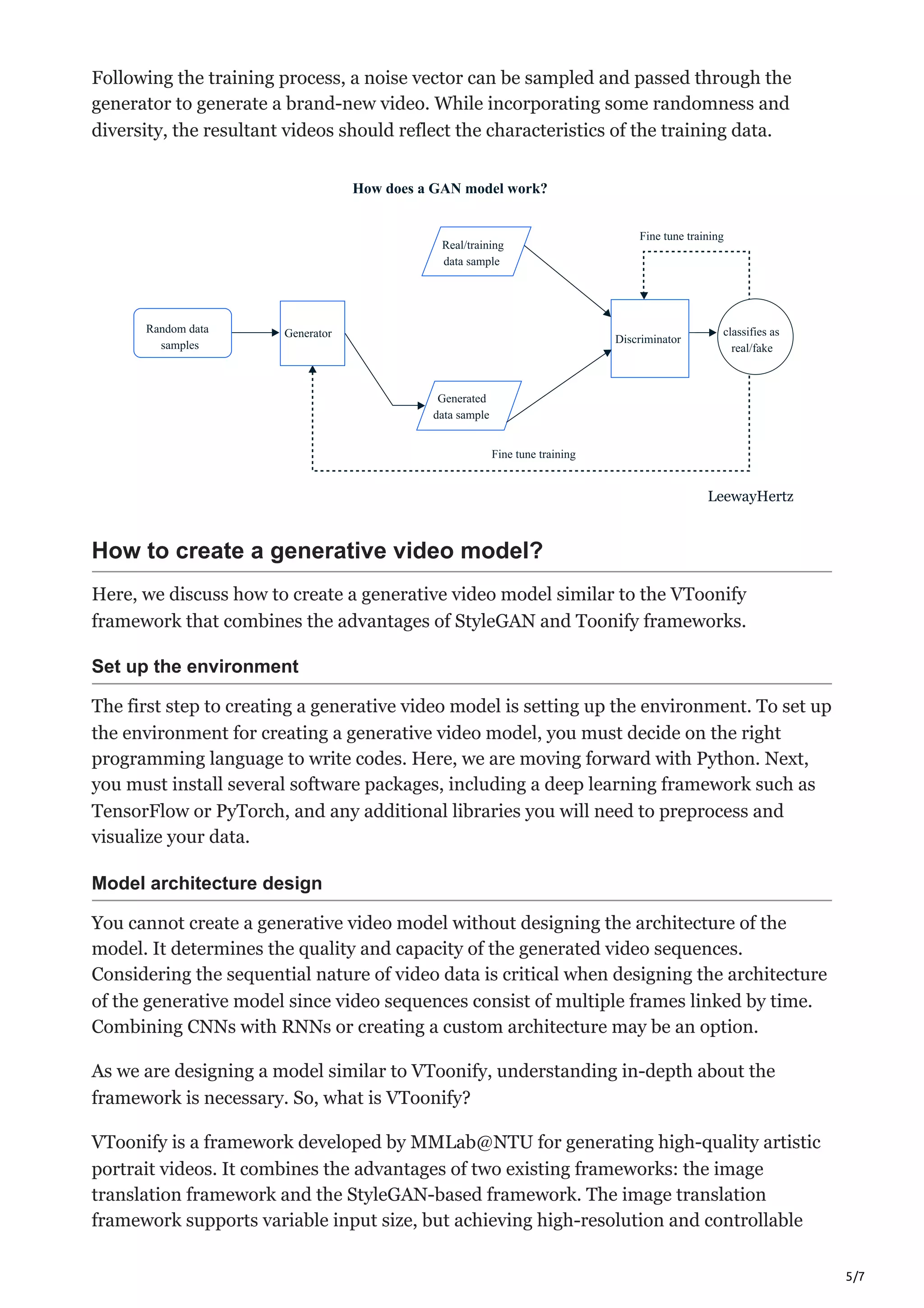

The document discusses how generative video models are built and what advantages they offer. These models use machine learning algorithms to produce new video data from patterns learned on training datasets. The document outlines several types of generative models, including generative adversarial networks (GANs) and variational autoencoders (VAEs), and describes tasks they can perform, such as video synthesis, style transfer, and denoising. It also provides a step-by-step guide to creating a generative video model and highlights the applications and benefits of such models in video content creation.
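The core idea described above is to learn patterns from training clips and then sample new video from them. A deliberately simple stand-in can illustrate that fit-then-sample workflow. The sketch below is *not* a GAN or VAE. It uses a per-pixel Gaussian model instead. The class `ToyGaussianVideoModel` is hypothetical, and the sketch assumes that a clip is a list of frames, each frame is a flat list of intensities in [0, 1], and all training clips share the same dimensions.

```python
import random
import statistics
from typing import List

# Hypothetical representation: a clip is a list of frames, and each frame
# is a flat list of pixel intensities in [0, 1].
Clip = List[List[float]]


class ToyGaussianVideoModel:
    """Per-frame, per-pixel Gaussian fitted to training clips (illustrative only,
    not a GAN or VAE)."""

    def fit(self, clips: List[Clip]) -> "ToyGaussianVideoModel":
        # Assumes every training clip has the same number of frames and pixels.
        n_frames = len(clips[0])
        n_pixels = len(clips[0][0])
        self.means: Clip = []
        self.stds: Clip = []
        for f in range(n_frames):
            frame_means, frame_stds = [], []
            for p in range(n_pixels):
                # Collect this pixel's value at frame f across all training clips.
                values = [clip[f][p] for clip in clips]
                frame_means.append(statistics.fmean(values))
                frame_stds.append(statistics.pstdev(values))
            self.means.append(frame_means)
            self.stds.append(frame_stds)
        return self

    def sample(self, rng: random.Random) -> Clip:
        # Draw each pixel independently from its learned Gaussian,
        # then clamp the result to the valid [0, 1] intensity range.
        return [
            [min(1.0, max(0.0, rng.gauss(m, s))) for m, s in zip(fm, fs)]
            for fm, fs in zip(self.means, self.stds)
        ]


# Usage: two tiny training clips, each with 2 frames of 2 pixels.
training = [
    [[0.0, 0.2], [0.4, 0.6]],
    [[0.2, 0.4], [0.6, 0.8]],
]
model = ToyGaussianVideoModel().fit(training)
new_clip = model.sample(random.Random(0))
```

Because each pixel is sampled independently, this toy model cannot capture motion or spatial structure. That limitation is what learned generators such as GANs and VAEs are designed to overcome.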