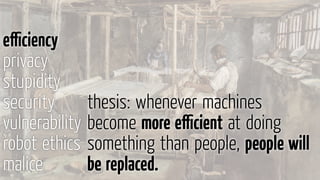

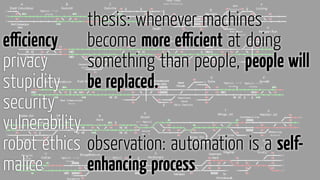

The document discusses issues raised by increasing automation and artificial intelligence, including their impact on jobs, privacy, security, and ethics. It notes that automation has already eliminated most agricultural jobs and is projected to replace humans in other occupations such as driving. Some experts argue that increased automation will replace most jobs, while others believe certain jobs will remain safe from innovation. The document also examines the privacy and surveillance concerns these technologies raise, as well as security issues such as malware infections. It closes with Asimov's Three Laws of Robotics and the challenges of regulating advanced computer systems such as robots and artificial intelligence.

![»Two hundred years ago, 70% of American workers lived on the farm. Today automation has eliminated all but 1% of their jobs, replacing them (and their work animals) with machines.«
Will a robot take your job? [New Yorker]
efficiency, privacy, stupidity, security, vulnerability, robot ethics, malice](https://image.slidesharecdn.com/jetztredeichkurz-150616111118-lva1-app6892/85/Jetzt-rede-ich-kurz-4-320.jpg)

![among others:
› soldier
› journalist
› farmer
› pharmacist
10 jobs robots already do better than you [MarketWatch]](https://image.slidesharecdn.com/jetztredeichkurz-150616111118-lva1-app6892/85/Jetzt-rede-ich-kurz-5-320.jpg)

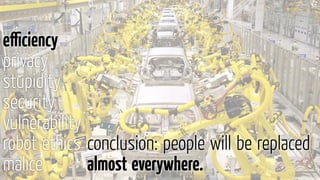

![Robots replacing factory workers at faster pace [LA Times]
Cheaper, better robots will replace human workers in the world's factories at a faster pace over the next decade, pushing labor costs down 16%, a report Tuesday said. […] Robots will cut labor costs by 33% in South Korea, 25% in Japan, 24% in Canada and 22% in the United States and Taiwan.
The cost of owning and operating a robotic spot welder, for instance, has tumbled from $182,000 in 2005 to $133,000 last year, and will drop to $103,000 by 2025](https://image.slidesharecdn.com/jetztredeichkurz-150616111118-lva1-app6892/85/Jetzt-rede-ich-kurz-6-320.jpg)
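The spot-welder figures on this slide are easier to compare as percentage changes. The following is a minimal sketch that only restates the three quoted costs; the helper `percent_drop` and the variable names are illustrative and do not come from the cited report.

```python
def percent_drop(old: float, new: float) -> float:
    """Return the percentage decrease from `old` to `new`."""
    return (old - new) / old * 100


# Costs of owning and operating a robotic spot welder, as quoted on the slide.
cost_2005 = 182_000
cost_last_year = 133_000   # "last year" relative to the cited report
cost_2025 = 103_000        # projection quoted on the slide

drop_so_far = percent_drop(cost_2005, cost_last_year)
drop_projected = percent_drop(cost_last_year, cost_2025)
drop_total = percent_drop(cost_2005, cost_2025)

print(f"2005 to last year: {drop_so_far:.1f}% cheaper")
print(f"last year to 2025: {drop_projected:.1f}% cheaper")
print(f"2005 to 2025:      {drop_total:.1f}% cheaper")
```

Under these figures the cost has already fallen by roughly 27%, and the projection implies a total drop of about 43% between 2005 and 2025.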

![Man vs. Machine: Are Any Jobs Safe from Innovation? [Spiegel]
»›There are approximately 4 million truck, taxi, limousine and bus drivers in the United States, not to mention gas station attendants and traffic policemen,‹ writes Posner, the University of Chicago scholar, in his essay on automation and employment. ›Not all these jobs will be eliminated overnight,‹ he says, ›but they could go quite fast.‹«](https://image.slidesharecdn.com/jetztredeichkurz-150616111118-lva1-app6892/85/Jetzt-rede-ich-kurz-9-320.jpg)

![»›I don't think we have to worry about autonomous cars, because that's sort of like a narrow form of AI. It would be like an elevator. They used to have elevator operators, and then we developed some simple circuitry to have elevators just automatically come to the floor that you're at ... the car is going to be just like that.‹
So what happens when we get there? Musk said that the obvious move is to outlaw driving cars. ›It's too dangerous. You can't have a person driving a two-ton death machine.‹«
Elon Musk: cars you can drive will eventually be outlawed [Verge]](https://image.slidesharecdn.com/jetztredeichkurz-150616111118-lva1-app6892/85/Jetzt-rede-ich-kurz-10-320.jpg)

![»Will Google's own executives buy their self-driving car? Never!« [Bruce Sterling]](https://image.slidesharecdn.com/jetztredeichkurz-150616111118-lva1-app6892/85/Jetzt-rede-ich-kurz-12-320.jpg)

![»Surveillance as a Business Model« [Bruce Schneier]
»It shouldn't come as a surprise that big technology companies are tracking us on the Internet even more aggressively than before.
If [that doesn't] sound particularly beneficial to you, it's because you're not the customer of any of these companies. You're the product, and you're being improved for their actual customers: their advertisers.
Surveillance is the business model of the Internet«](https://image.slidesharecdn.com/jetztredeichkurz-150616111118-lva1-app6892/85/Jetzt-rede-ich-kurz-13-320.jpg)

![»Robots and Privacy« [Ryan Calo]
An extensive literature in communications and psychology demonstrates that humans are hardwired to react to social machines as though a person were really present. […]
People cooperate with sufficiently human-like machines, are polite to them, decline to sustain eye-contact, decline to mistreat or roughhouse with them, and respond positively to their flattery.
There is even a neurological correlation to the reaction; the same ›mirror‹ neurons fire in the presence of real and virtual social agents.](https://image.slidesharecdn.com/jetztredeichkurz-150616111118-lva1-app6892/85/Jetzt-rede-ich-kurz-15-320.jpg)

![malware in personal computers
Report: 48% of 22 million scanned computers infected with malware [ZDNet]
32% of computers around the world are infected with viruses and malware [dotTech]
Malware infects 30% of computers in the U.S. [InfoWorld]](https://image.slidesharecdn.com/jetztredeichkurz-150616111118-lva1-app6892/85/Jetzt-rede-ich-kurz-17-320.jpg)

![»In the world of malware threats, only a few rare examples can truly be considered groundbreaking and almost peerless. What we have seen in Regin is just such a class of malware.
[…] it is one of the main cyberespionage tools used by a nation state
[…] many components of Regin remain undiscovered and additional functionality and versions may exist.« [symantec.com]](https://image.slidesharecdn.com/jetztredeichkurz-150616111118-lva1-app6892/85/Jetzt-rede-ich-kurz-20-320.jpg)

![Three Laws of Robotics [Isaac Asimov]
1 A robot may not injure a human being or, through inaction, allow a human being to come to harm.
2 A robot must obey the orders given it by human beings, except where such orders would conflict with the First Law.
3 A robot must protect its own existence as long as such protection does not conflict with the First or Second Laws.](https://image.slidesharecdn.com/jetztredeichkurz-150616111118-lva1-app6892/85/Jetzt-rede-ich-kurz-23-320.jpg)
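The three laws form a strict precedence: each law applies only where it does not conflict with the ones before it. The toy sketch below shows that ordering and nothing more. The `Action` class, its boolean flags, and the `permitted` function are hypothetical names introduced for illustration. The sketch assumes that harm, orders, and self-endangerment can be reduced to simple flags, and it does not model the "through inaction" clause of the First Law.

```python
from dataclasses import dataclass


@dataclass
class Action:
    """Hypothetical description of something a robot might do."""
    name: str
    harms_human: bool = False       # would injure a human being
    ordered_by_human: bool = False  # a human being has ordered this action
    endangers_robot: bool = False   # puts the robot's own existence at risk


def permitted(action: Action) -> bool:
    """Check an action against the three laws in order of precedence."""
    # First Law: never injure a human; this overrides everything else.
    if action.harms_human:
        return False
    # Second Law: obey orders (any conflict with the First Law was ruled out above).
    if action.ordered_by_human:
        return True
    # Third Law: without an order, avoid actions that endanger the robot.
    return not action.endangers_robot


print(permitted(Action("push bystander", harms_human=True, ordered_by_human=True)))
print(permitted(Action("enter fire on command", ordered_by_human=True, endangers_robot=True)))
print(permitted(Action("wander into fire", endangers_robot=True)))
```

An order to harm a human is refused, an order that endangers only the robot is obeyed, and an unordered action that endangers the robot is avoided.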

![Why it is not possible to regulate robots [Cory Doctorow]
A robot is basically a computer that causes some physical change in the world. We can and do regulate machines, from cars to drills to implanted defibrillators. But the thing that distinguishes a power-drill from a robot-drill is that the robot-drill has a driver: a computer that operates it. Regulating that computer in the way that we regulate other machines – by mandating the characteristics of their manufacture – will be no more effective at preventing undesirable robotic outcomes than the copyright mandates of the past 20 years have been effective at preventing copyright infringement (that is, not at all).](https://image.slidesharecdn.com/jetztredeichkurz-150616111118-lva1-app6892/85/Jetzt-rede-ich-kurz-24-320.jpg)