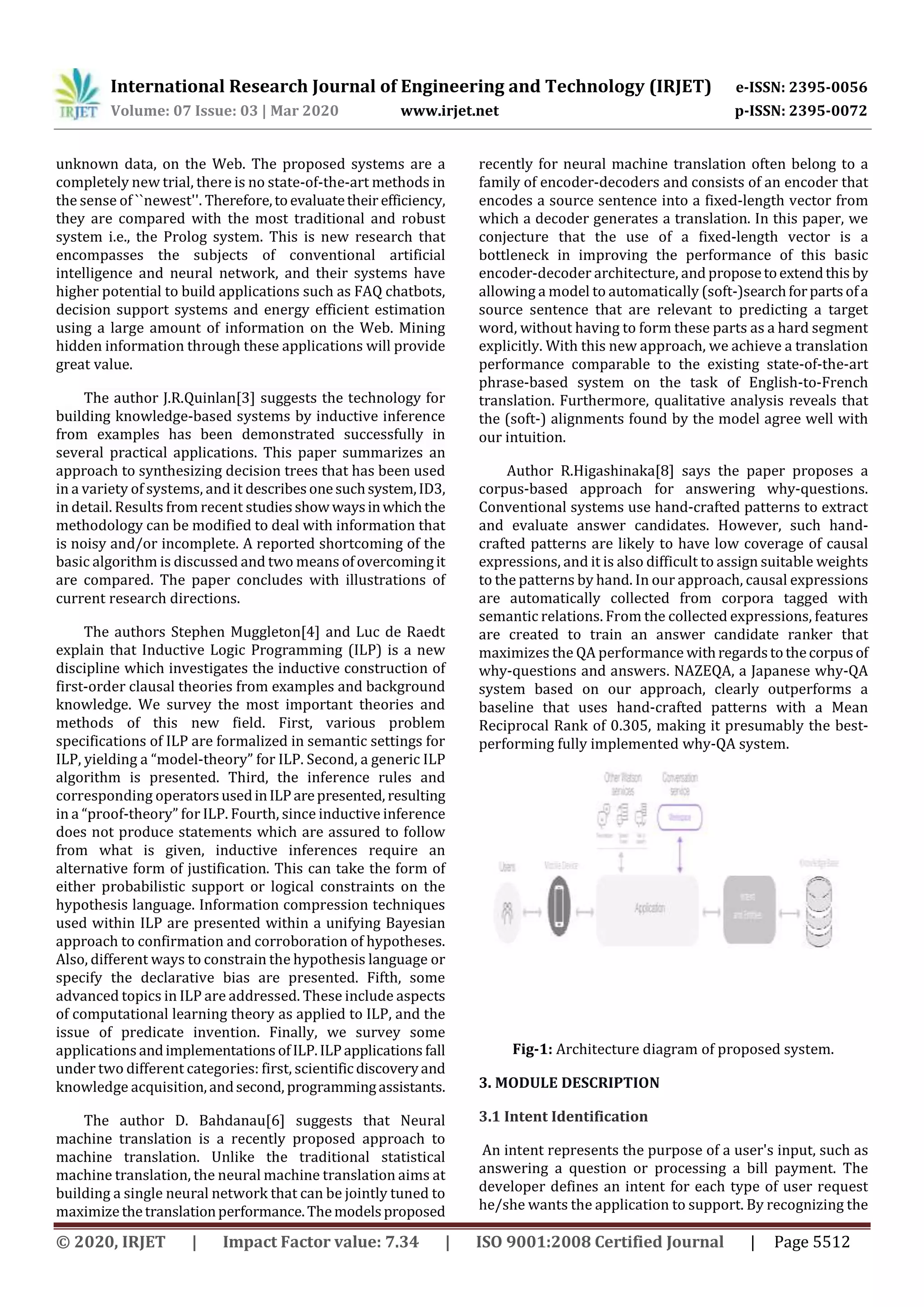

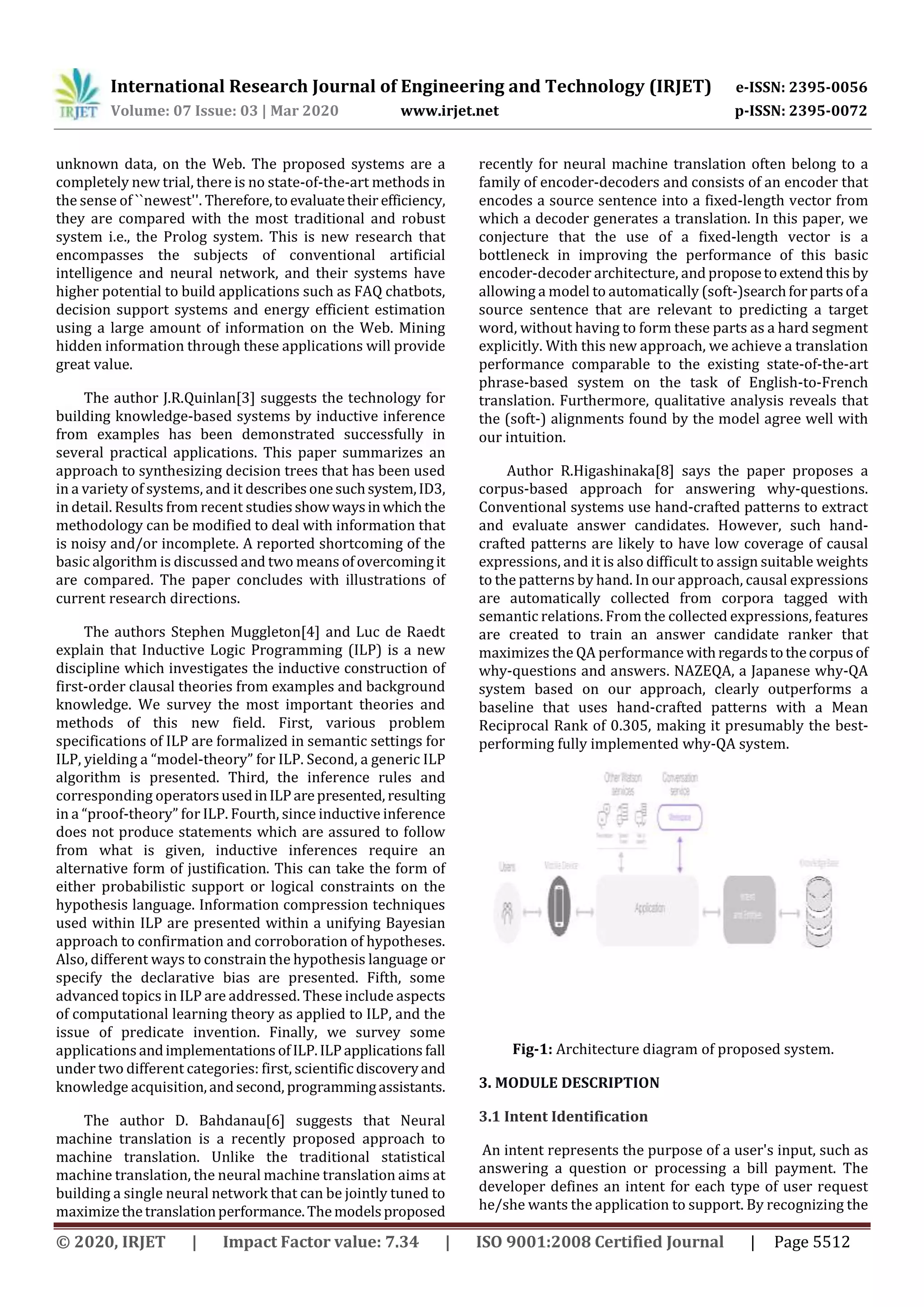

This document summarizes a research paper on a mobile chatbot, built with IBM Watson services, that lets students look up their exam scores. The chatbot uses Watson Assistant for natural language processing and a SQL database as the knowledge base that stores score information. Speech-to-text handles voice input and text-to-speech handles spoken output. The app was built in Android Studio with Java to give users an intuitive mobile interface for interacting with the chatbot.
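The flow described above (a recognized request is resolved against a knowledge base of scores, and the result is turned into a reply) can be sketched in Java as follows. This is a minimal illustration under stated assumptions, not the paper's implementation: the class `ScoreBot`, the intent name `get_score`, and the reply wording are all hypothetical, an in-memory map stands in for the SQL database, and the intent is assumed to have already been detected by the NLP service, so no Watson API is called.

```java
import java.util.HashMap;
import java.util.Locale;
import java.util.Map;
import java.util.Optional;

// Hypothetical sketch: none of these names come from the paper.
public class ScoreBot {
    // Stand-in for the SQL knowledge base: "studentId|course" -> score.
    private final Map<String, Integer> scores = new HashMap<>();

    // Build a case-insensitive lookup key for a student and course.
    private static String key(String studentId, String course) {
        return studentId.trim() + "|" + course.trim().toLowerCase(Locale.ROOT);
    }

    // Store one score, as a row insert would in a real database.
    public void addScore(String studentId, String course, int score) {
        scores.put(key(studentId, course), score);
    }

    // Look up a score; empty if the student or course is unknown.
    public Optional<Integer> lookup(String studentId, String course) {
        return Optional.ofNullable(scores.get(key(studentId, course)));
    }

    // Turn an already-recognized intent and its entities into a reply.
    // "get_score" is an assumed intent name, not one from the paper.
    public String reply(String intent, String studentId, String course) {
        if (!"get_score".equals(intent)) {
            return "Sorry, I can only look up exam scores.";
        }
        return lookup(studentId, course)
                .map(s -> "Your score in " + course + " is " + s + ".")
                .orElse("I could not find a score for " + course + ".");
    }

    public static void main(String[] args) {
        ScoreBot bot = new ScoreBot();
        bot.addScore("S001", "Algebra", 87);
        System.out.println(bot.reply("get_score", "S001", "algebra"));
    }
}
```

In a real app, the reply string would presumably be passed to the text-to-speech step, and `lookup` would run a parameterized SQL query instead of reading a map.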