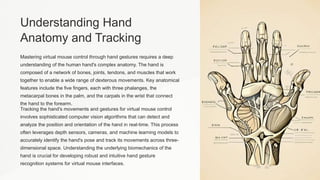

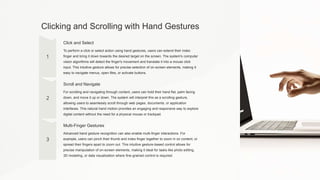

This document introduces virtual mouse technology controlled by hand gestures. It explains how hand tracking and gesture recognition algorithms translate natural hand movements into computer input such as cursor movement, clicks, and scrolling, giving an intuitive interface that leverages the dexterity of the human hand. Topics covered include hand anatomy, gesture recognition techniques, hardware requirements, and the integration of hand tracking with computer input. The document also describes common gestures for cursor control, clicking, scrolling, and multi-finger interactions, and closes with how gesture mappings and sensitivity can be customized to individual user needs and preferences.
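
To make the idea of translating hand movement into input concrete, here is a minimal sketch in Python. It assumes a hand tracker has already produced fingertip positions as normalized `(x, y)` pairs in the range 0 to 1. The function names `to_screen` and `is_pinch`, the `sensitivity` parameter, and the pinch `threshold` value are hypothetical choices made for illustration; they are not taken from the document or from any particular library.

```python
import math


def to_screen(norm_x, norm_y, screen_w, screen_h, sensitivity=1.0):
    """Map a normalized fingertip position (0..1) to pixel coordinates.

    `sensitivity` is an assumed user setting: values above 1.0 amplify
    movement around the screen centre, values below 1.0 dampen it.
    """
    # Scale the offset from the centre, then clamp to the visible area.
    x = min(max(0.5 + (norm_x - 0.5) * sensitivity, 0.0), 1.0)
    y = min(max(0.5 + (norm_y - 0.5) * sensitivity, 0.0), 1.0)
    return int(x * (screen_w - 1)), int(y * (screen_h - 1))


def is_pinch(thumb_tip, index_tip, threshold=0.05):
    """Treat thumb and index fingertips closer than `threshold` as a click.

    The threshold is in normalized units and is an illustrative guess;
    a real system would tune it per user and per camera setup.
    """
    return math.dist(thumb_tip, index_tip) < threshold


if __name__ == "__main__":
    # Hypothetical fingertip positions for one video frame.
    index_tip = (0.52, 0.51)
    thumb_tip = (0.50, 0.50)
    print(to_screen(*index_tip, 1920, 1080, sensitivity=1.5))
    print("click" if is_pinch(thumb_tip, index_tip) else "no click")
```

Keeping the mapping and the click test as separate functions mirrors the customization described above: sensitivity and the pinch threshold can each be adjusted per user without touching the tracking step.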