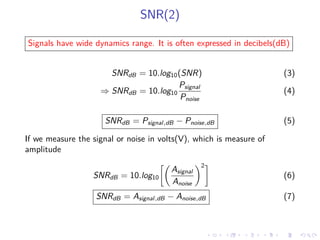

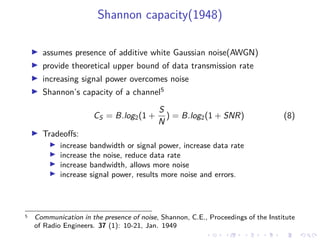

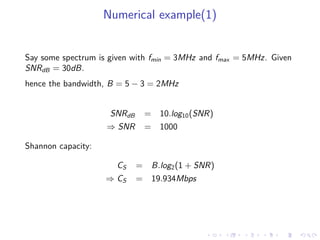

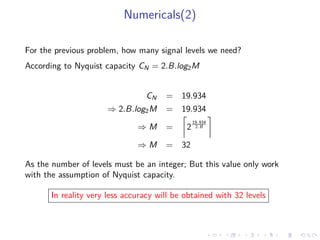

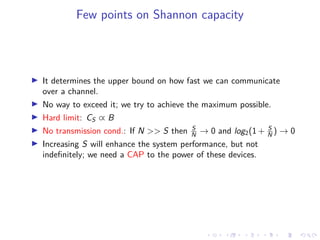

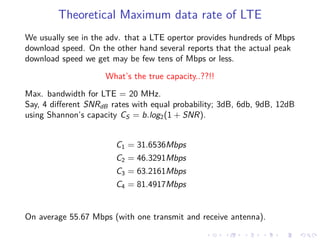

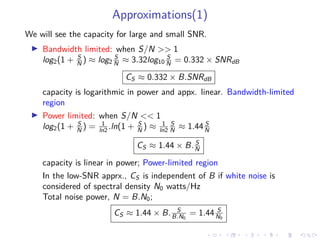

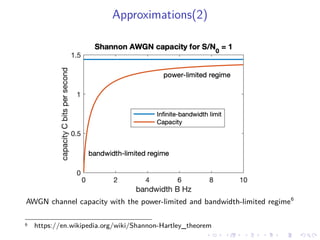

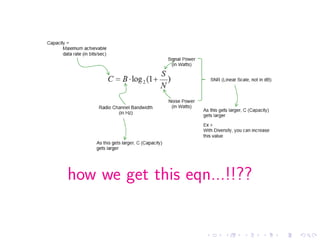

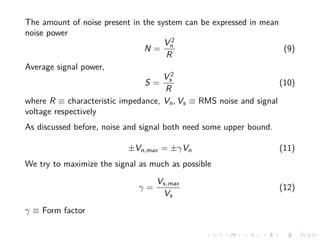

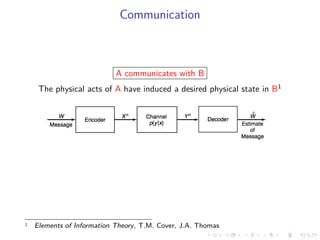

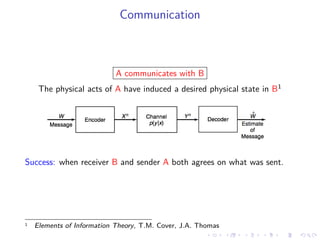

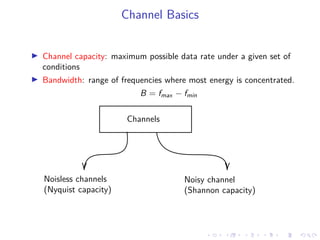

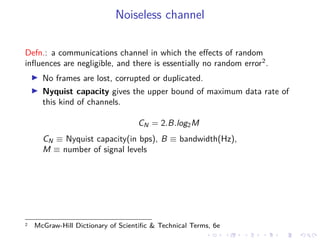

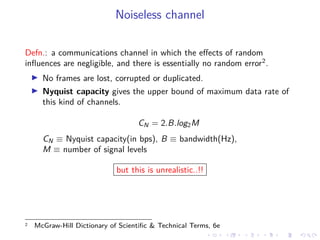

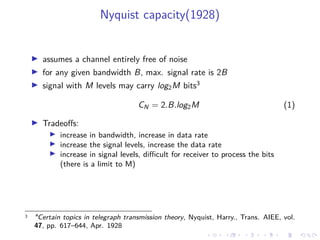

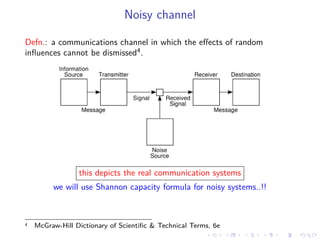

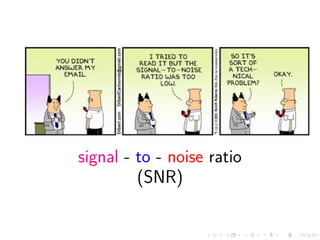

The document discusses channel capacity in communication systems, covering the Nyquist capacity of noiseless channels and the Shannon capacity of noisy channels. It explains how bandwidth and signal-to-noise ratio (SNR) affect data transmission rates, using formulas and numerical examples to illustrate these principles. It also highlights the limitations and trade-offs of increasing bandwidth and signal levels, which constrain the data rates achievable in practice.

![SNR](https://image.slidesharecdn.com/dcnldtalks1channelcapacity-200427082803/85/Introduction-to-Channel-Capacity-DCNIT-LDTalks-1-15-320.jpg)

SNR

Defn.: Ratio of signal power to noise power; a unitless quantity.

$$\mathrm{SNR} = \frac{P_{\text{signal}}}{P_{\text{noise}}} \tag{1}$$

where $P$ is the average power.

For a random signal $S$ with noise $N$,

$$\mathrm{SNR} = \frac{E[S^2]}{E[N^2]}$$

where $E$ is the expectation value, so $E[S^2]$ and $E[N^2]$ are the mean square values of the signal and the noise.

$$\mathrm{SNR} = \frac{P_{\text{signal}}}{P_{\text{noise}}} = \left(\frac{A_{\text{signal}}}{A_{\text{noise}}}\right)^2 \tag{2}$$

where $A$ is the RMS amplitude (voltage).

If SNR < 1, the signal becomes unusable.
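The SNR definitions on this slide can be checked numerically. The following is a minimal sketch, not part of the original slides: the function names are hypothetical, and the decibel conversion is a common convention added here for illustration, not something the slide states.

```python
import math


def snr_from_power(p_signal, p_noise):
    """SNR as the ratio of average signal power to average noise power."""
    return p_signal / p_noise


def snr_from_rms(a_signal, a_noise):
    """SNR from RMS amplitudes (voltages): the square of the amplitude ratio."""
    return (a_signal / a_noise) ** 2


def snr_from_samples(signal, noise):
    """SNR estimated from samples as the ratio of mean square values,
    i.e. a sample estimate of E[S^2] / E[N^2]."""
    ms_signal = sum(s * s for s in signal) / len(signal)
    ms_noise = sum(n * n for n in noise) / len(noise)
    return ms_signal / ms_noise


def snr_db(snr):
    """Assumption: express a power ratio in decibels as 10 * log10(SNR)."""
    return 10 * math.log10(snr)


if __name__ == "__main__":
    print(snr_from_power(10.0, 2.0))                    # power ratio
    print(snr_from_rms(2.0, 1.0))                       # squared amplitude ratio
    print(snr_from_samples([3.0, -3.0], [1.0, -1.0]))   # mean square ratio
    print(snr_db(100.0))                                # same ratio in dB
```

Under this convention an SNR of 1 corresponds to 0 dB, so the slide's "SNR < 1" condition is the same as a negative SNR in decibels.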