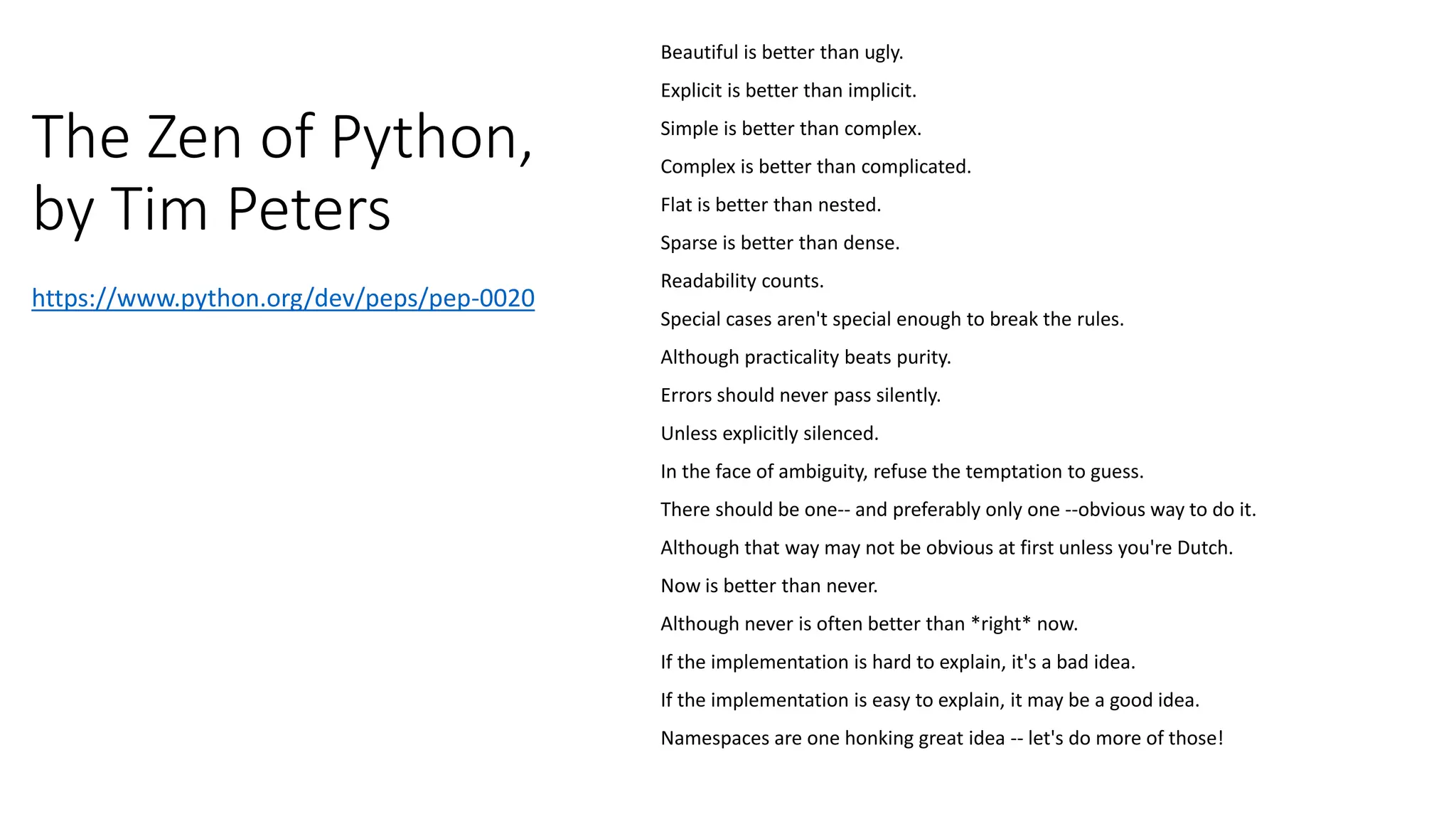

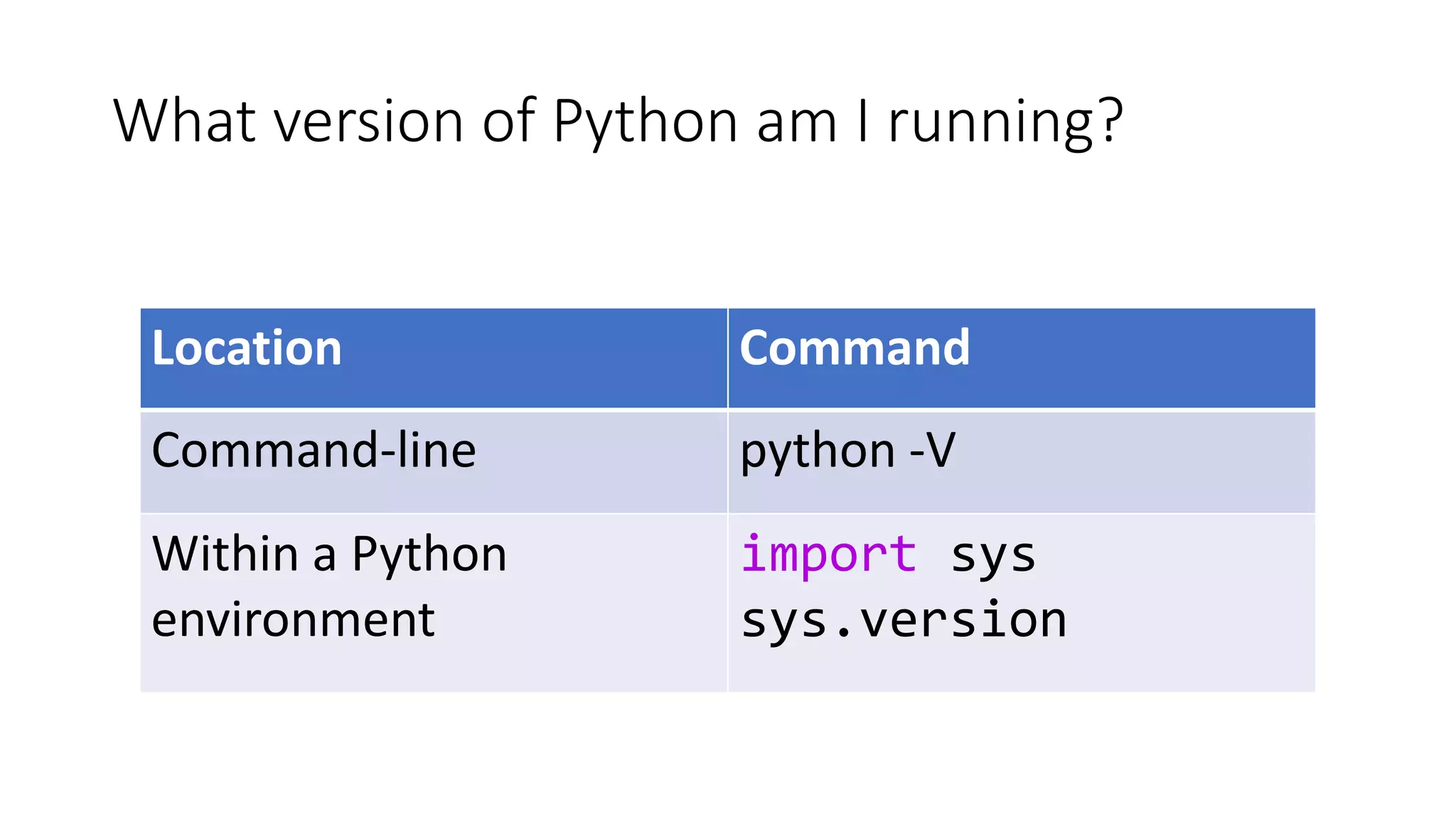

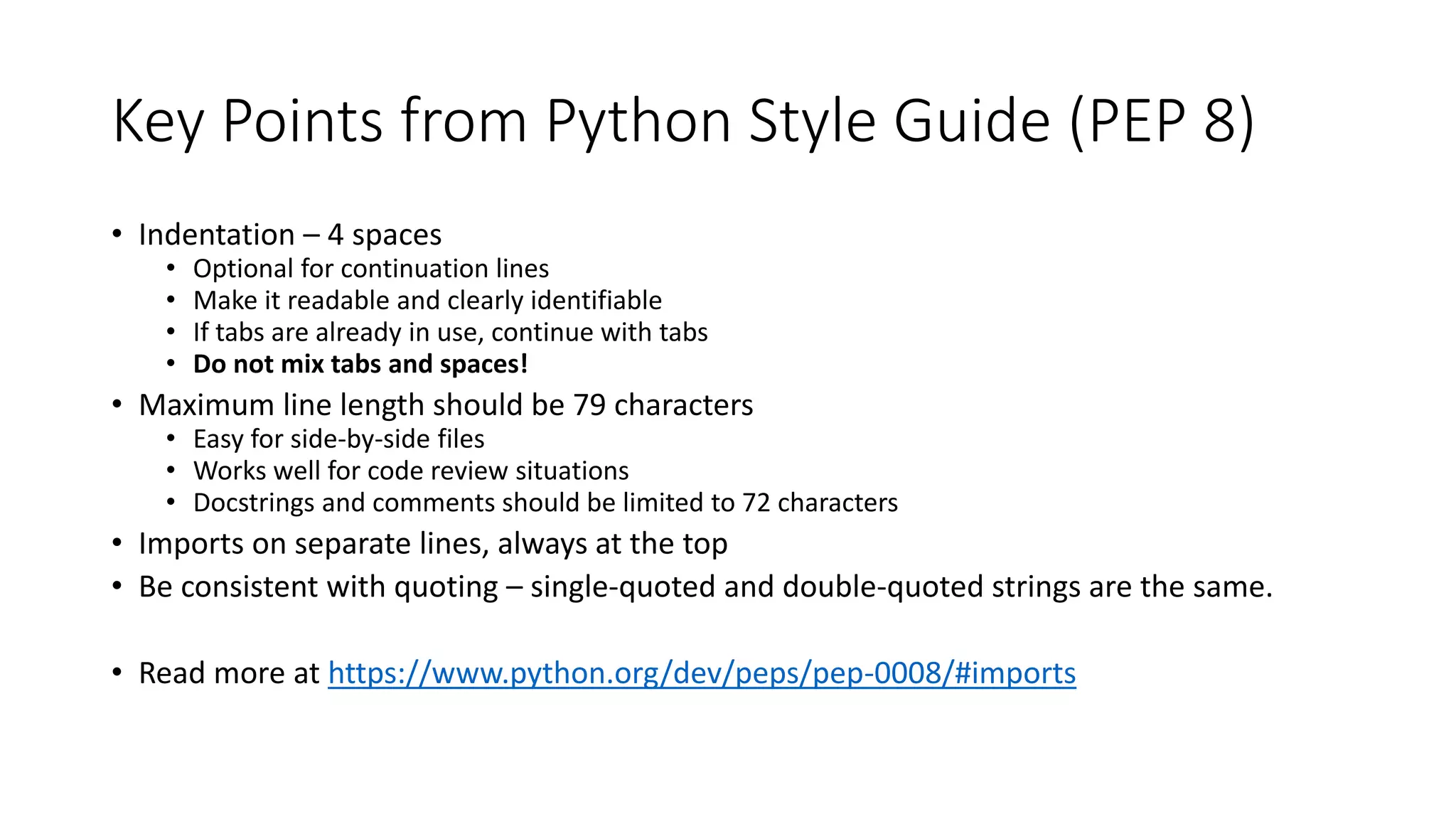

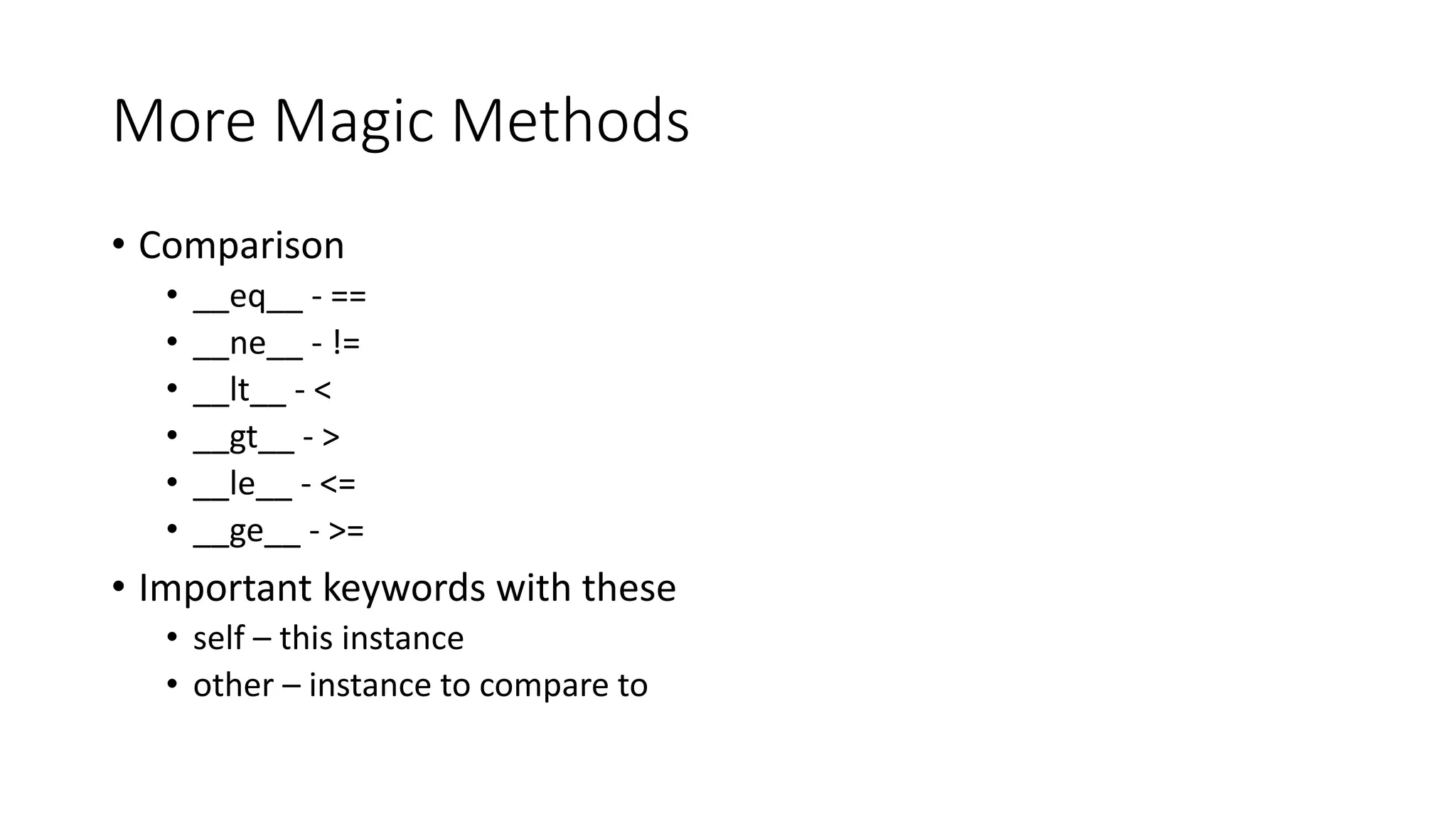

This presentation by Sarah Dutkiewicz covers moving from C# to Python. It highlights the diversity and values of the Python community and recommends Python 3 over Python 2.x because of its improvements. It introduces Python's design philosophy, the areas where Python is used, and a range of development tools and libraries. It also covers object-oriented programming in Python: classes, methods, inheritance, and magic methods.
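The slide code is not reproduced here. The following is a minimal Python 3 sketch, not taken from the presentation, that shows the object-oriented features listed above: a class with an initializer and methods, inheritance with `super()`, and the magic methods `__str__` and `__eq__`. The class names `Shape`, `Rectangle`, and `Square` are hypothetical, and the C# comparisons in the comments are loose analogies rather than exact equivalents.

```python
class Shape:
    """Hypothetical base class used only for illustration."""

    def __init__(self, name):
        # Instance attributes are created in __init__, roughly where a
        # C# constructor would initialize fields.
        self.name = name

    def area(self):
        # Subclasses are expected to override this method.
        raise NotImplementedError

    def __str__(self):
        # Magic method used by str() and print(); similar in role to
        # overriding ToString() in C#.
        return f"{self.name} with area {self.area()}"

    def __eq__(self, other):
        # Magic method behind ==; similar in role to overriding Equals() in C#.
        if not isinstance(other, Shape):
            return NotImplemented
        return self.area() == other.area()


class Rectangle(Shape):
    def __init__(self, width, height):
        # super() calls the base-class initializer, like ": base(...)" in C#.
        super().__init__("Rectangle")
        self.width = width
        self.height = height

    def area(self):
        return self.width * self.height


class Square(Rectangle):
    def __init__(self, side):
        # Reuse Rectangle's initializer, then override the display name.
        super().__init__(side, side)
        self.name = "Square"


r = Rectangle(2, 3)
s = Square(3)
print(r)                     # Rectangle with area 6
print(s)                     # Square with area 9
print(r == Rectangle(3, 2))  # True: equal areas
print(isinstance(s, Shape))  # True: Square inherits from Shape via Rectangle
```

Defining `__eq__` without `__hash__` makes instances unhashable in Python 3, so a class like this could not be used as a dictionary key or set member unless `__hash__` is also defined.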