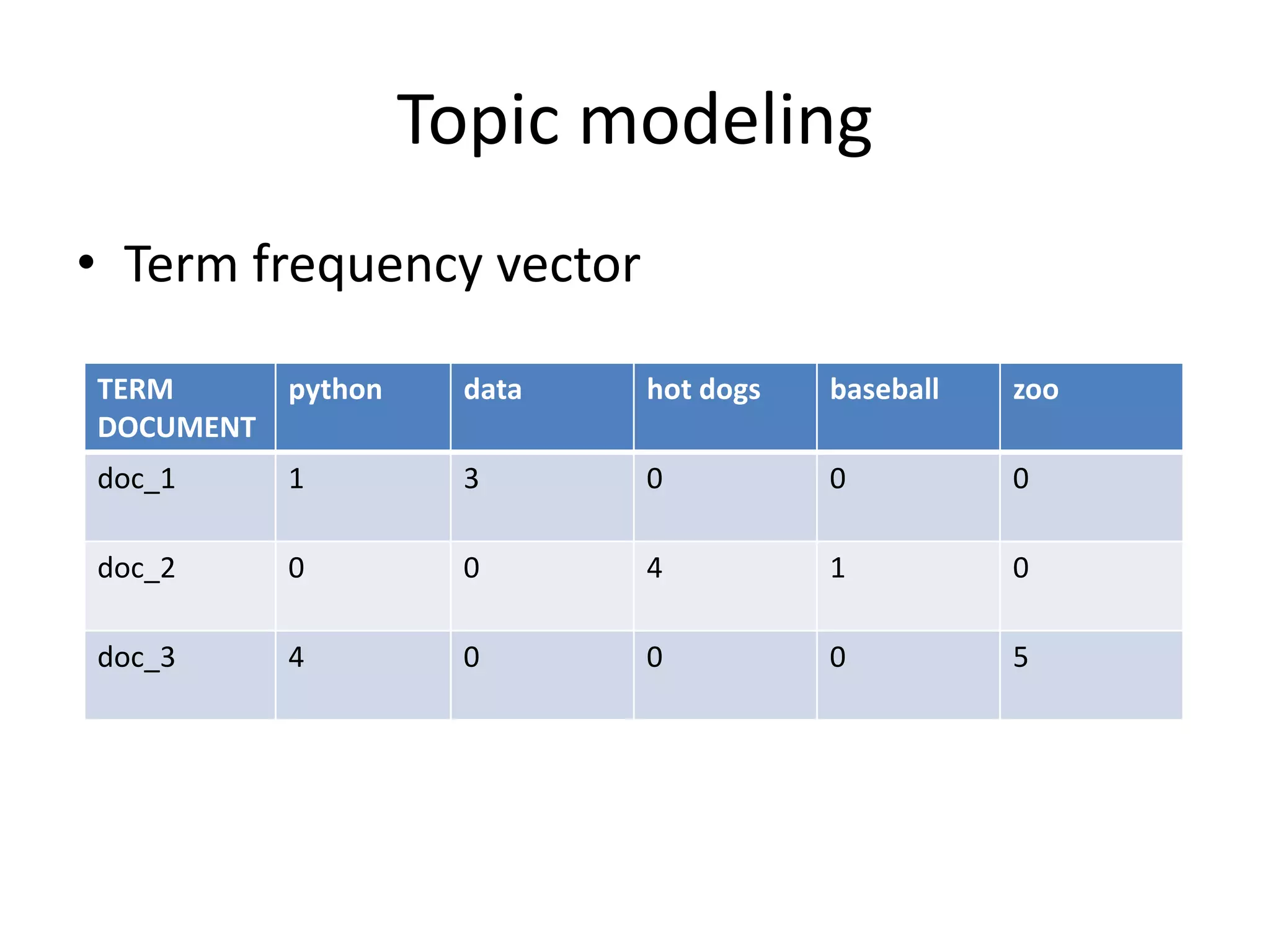

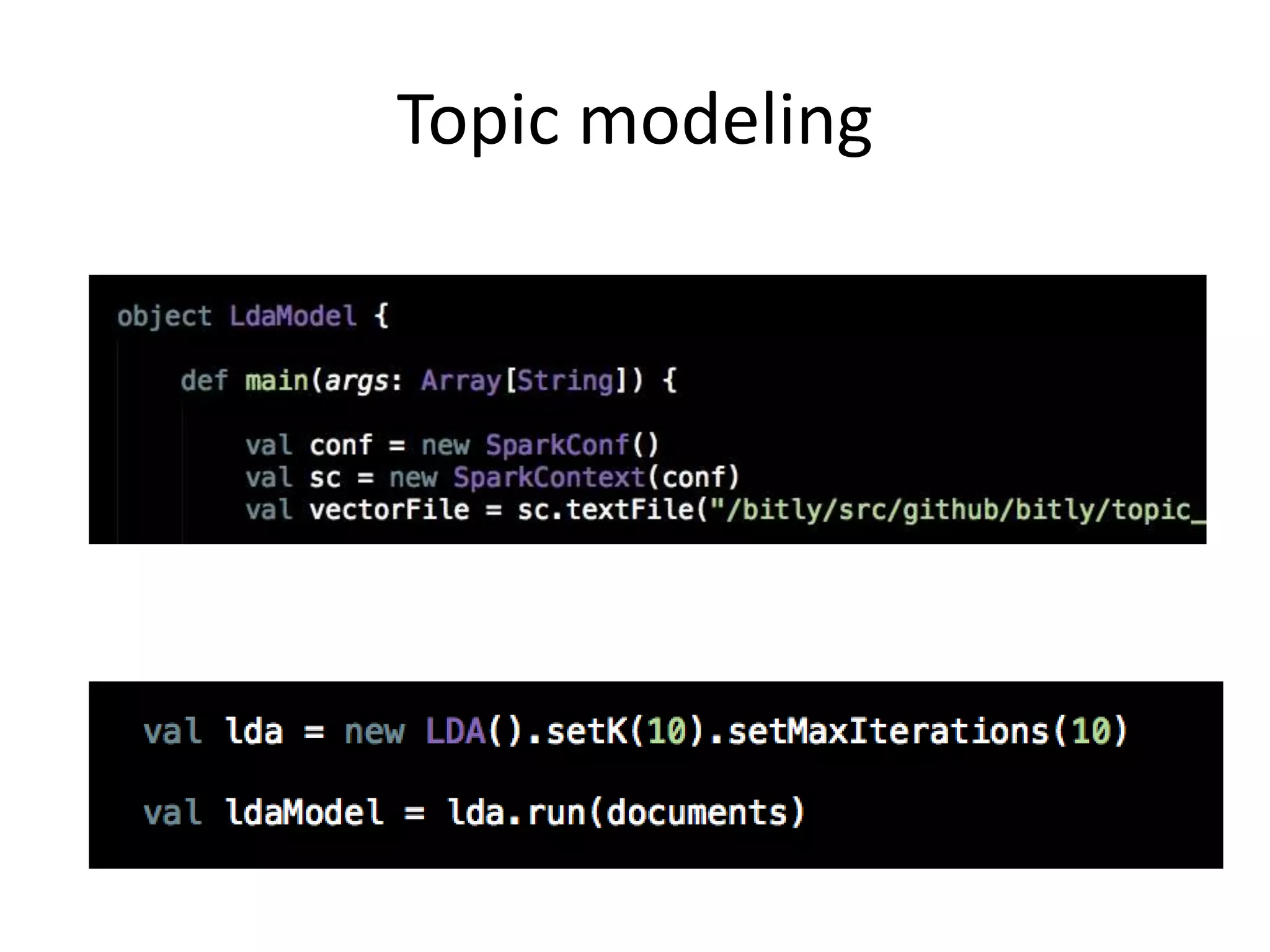

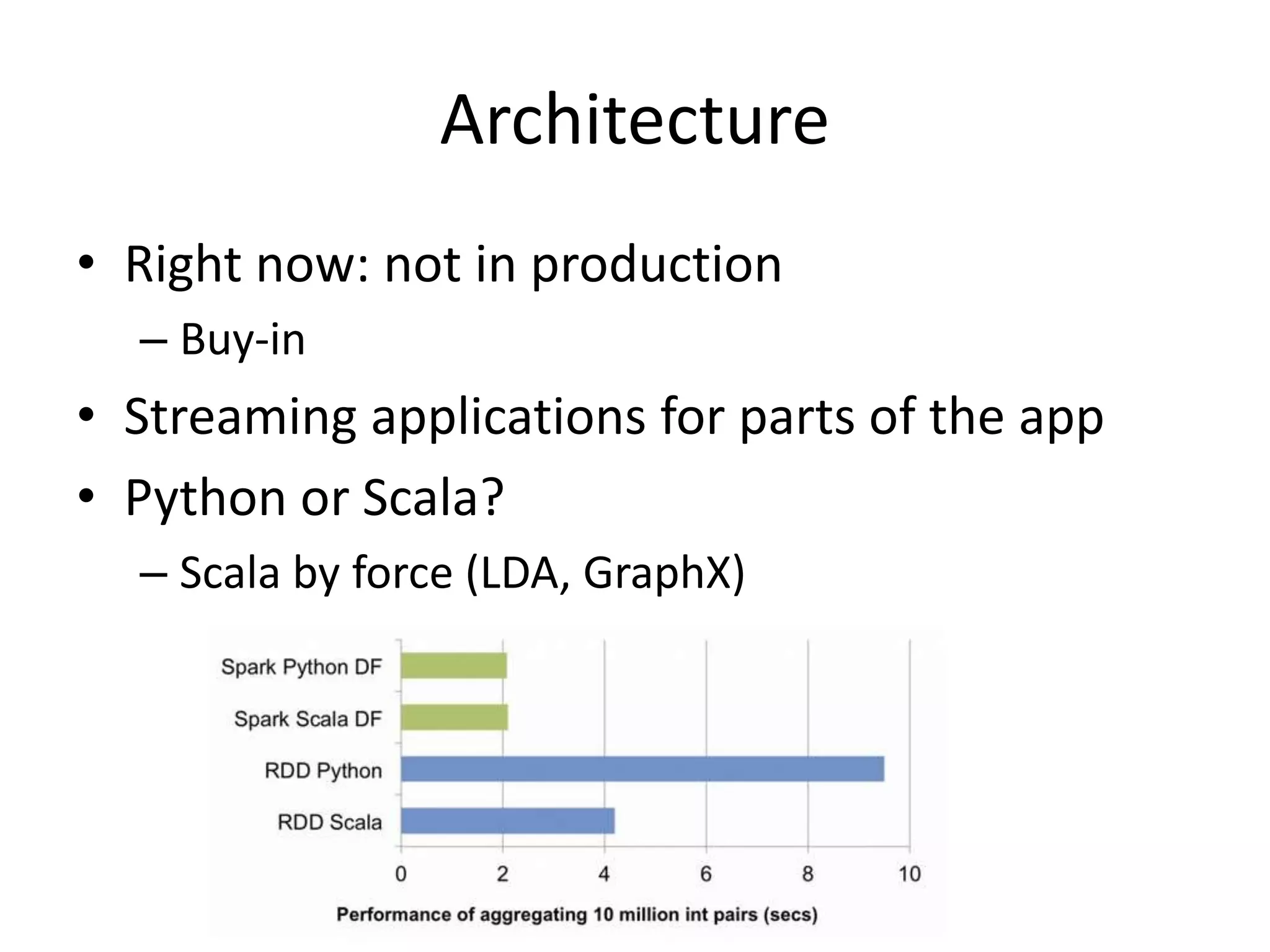

This document outlines Sarah Guido's presentation on using Apache Spark for data science at Bitly, in which she shares her workflow and the Spark tools she uses. It emphasizes the need for efficient big data analysis and explores challenges in data processing, including exploratory data analysis and topic modeling. The talk also touches on future applications of Spark in production and on ongoing projects at Bitly.