1. The document discusses physical database design for data warehousing, including star and snowflake schemas that are commonly used.

2. It provides examples of complex queries against a data warehouse schema that involve joins between large dimension and fact tables to retrieve aggregates and filter on dimension attributes.

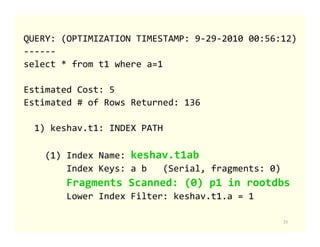

3. The execution plan for a sample query joining multiple large tables in a data warehouse is shown, utilizing index scans, sequential scans, hash joins, and filters to efficiently evaluate the query.

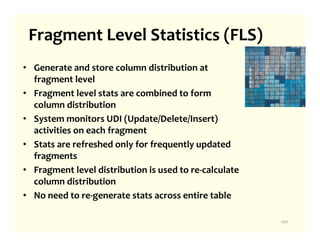

![STATLEVEL property

STATLEVEL defines the granularity or level of statistics created for the

table.

Can be set using CREATE or ALTER TABLE.

STATLEVEL [TABLE | FRAGMENT | AUTO] are the allowed values for

STATLEVEL.

TABLE – entire table dataset is read and table level statistics are

stored in sysdistrib catalog.

FRAGMENT – dataset of each fragment is read an fragment level

statistics are stored in new sysfragdist catalog. This option is only

allowed for fragmented tables.

AUTO – System determines when update statistics is run if TABLE or

FRAGMENT level statistics should be created.

103](https://image.slidesharecdn.com/informixphysicaldatabasedesign-110830121705-phpapp01/85/Informix-physical-database-design-for-data-warehousing-104-320.jpg)

![UPDATE STATISTICS extensions

• UPDATE STATISTICS [AUTO | FORCE];

• UPDATE STATISTICS HIGH FOR TABLE [AUTO |

FORCE];

• UPDATE STATISTICS MEDIUM FOR TABLE tab1

SAMPLING SIZE 0.8 RESOLUTION 1.0 [AUTO | FORCE

];

• Mode specified in UPDATE STATISTICS statement

overrides the AUTO_STAT_MODE session setting.

Session setting overrides the ONCONFIG's

AUTO_STAT_MODE parameter.

104](https://image.slidesharecdn.com/informixphysicaldatabasedesign-110830121705-phpapp01/85/Informix-physical-database-design-for-data-warehousing-105-320.jpg)