InAccel FPGA resource manager and orchestrator

•

0 likes•73 views

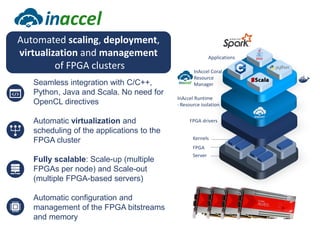

Use InAccel's FPGA resource manager to automatically deploy, scale, virtualize and manage FPGA clusters on cloud or on-prem instantly with zero overhead.

Report

Share

Report

Share

Download to read offline

Recommended

Putting the Spark into Functional Fashion Tech Analystics

Metail uses Apache Spark and a functional programming approach to process and analyze data from its fashion recommendation application. It collects data through various pipelines to understand user journeys and optimize business processes like photography. Metail's data pipeline is influenced by functional paradigms like immutability and uses Spark on AWS to operate on datasets in a distributed, scalable manner. The presentation demonstrated Metail's use of Clojure, Spark, and AWS services to build a functional data pipeline for analytics purposes.

Performance Monitoring with Icinga2, Graphite und Grafana

Talk given by Blerim Sheqa at Icinga Camp San Francisco 2016 - https://www.icinga.org/community/events/archive/2016-archive/icinga-camp-san-francisco/

Getting started with Riak in the Cloud

Getting started with Riak in the Cloud involves provisioning a Riak cluster on Engine Yard and optimizing it for performance. Key steps include choosing instance types like m1.large or m1.xlarge that are EBS-optimized, having at least 5 nodes, setting the ring size to 256, disabling swap, using the Bitcask backend, enabling kernel optimizations, and monitoring and backing up the cluster. Benchmarks show best performance from high I/O instance types like hi1.4xlarge that use SSDs rather than EBS storage.

Creating pools of Virtual Machines - ApacheCon NA 2013

My slides on creating pools of virtual machines for ApacheCon NA 2013 in Portland.

Provisionr Source code:

https://github.com/axemblr/axemblr-provisionr

Apache Incubator proposal:

https://github.com/axemblr/axemblr-provisionr/wiki/Provisionr-Proposal

Biomatters and Amazon Web Services

- The company was founded in 2003 and provides sophisticated visualization and interpretation of genetic data through targeted analysis workflows and actionable results.

- The document discusses the architecture for a genome browser application, including a revised architecture with a VPC across two availability zones with private subnets for security.

- It describes the web stack behind a load balancer with session info stored in Elasticache and autoscaling from 2-6 machines depending on load. The database is a statically scaled MongoDB cluster that cannot use RDS due to local deployment requirements.

Putting the Spark into Functional Fashion Tech Analystics

A talk highlighting the power of functional paradigms for big data pipeline building and Clojure and Apache Spark are a good fit. We cover the lambda architecture and some of the pros and cons that we discovered while using Spark at Metail.

Akanda: Open Source, Production-Ready Network Virtualization for OpenStack

DreamHost has been working on our OpenStack Public Cloud, DreamCompute, for several years. At the onset of the project, we set out with an aggressive set of requirements for our networking functionality, including L2 tenant isolation, IPv6 support from the ground up, and complete support for the then emerging OpenStack Neutron APIs. Our search ended with the realization that there was a gap in OpenStack SDN for L3+ services. Thus, the Akanda project was born.

Akanda is an open source suite of software, services, orchestration, and tools for providing L3+ services in OpenStack. It builds on top of Linux, iptables, and OpenStack Neutron, and is used in production to power DreamCompute's networking capabilities. Using Akanda, an OpenStack provider can provide tenants with a rich, powerful set of L3+ services, including routing, port forwarding, firewalling, and more. This talk will give an introduction to the Akanda project, review the DreamCompute use case, and illustrate how Akanda works under the hood. In addition, we'll discuss future capabilities, operational challenges and tips, and more.

Watch the talk video - https://www.openstack.org/summit/openstack-paris-summit-2014/session-videos/presentation/akanda-layer-3-virtual-networking-services-for-openstack

Neutron scale

In this session, we will discuss the operational issues that Rackspace has encountered during and after implementing Neutron at a large scale. Neutron at scale required a significant amount of development and operations effort, some of which resulted in deviations from upstream code. Finally, our team would like to discuss our solutions and our upstream differences for Neutron and OpenStack that we believe are necessary so that it can be more performant at scale.

Recommended

Putting the Spark into Functional Fashion Tech Analystics

Metail uses Apache Spark and a functional programming approach to process and analyze data from its fashion recommendation application. It collects data through various pipelines to understand user journeys and optimize business processes like photography. Metail's data pipeline is influenced by functional paradigms like immutability and uses Spark on AWS to operate on datasets in a distributed, scalable manner. The presentation demonstrated Metail's use of Clojure, Spark, and AWS services to build a functional data pipeline for analytics purposes.

Performance Monitoring with Icinga2, Graphite und Grafana

Talk given by Blerim Sheqa at Icinga Camp San Francisco 2016 - https://www.icinga.org/community/events/archive/2016-archive/icinga-camp-san-francisco/

Getting started with Riak in the Cloud

Getting started with Riak in the Cloud involves provisioning a Riak cluster on Engine Yard and optimizing it for performance. Key steps include choosing instance types like m1.large or m1.xlarge that are EBS-optimized, having at least 5 nodes, setting the ring size to 256, disabling swap, using the Bitcask backend, enabling kernel optimizations, and monitoring and backing up the cluster. Benchmarks show best performance from high I/O instance types like hi1.4xlarge that use SSDs rather than EBS storage.

Creating pools of Virtual Machines - ApacheCon NA 2013

My slides on creating pools of virtual machines for ApacheCon NA 2013 in Portland.

Provisionr Source code:

https://github.com/axemblr/axemblr-provisionr

Apache Incubator proposal:

https://github.com/axemblr/axemblr-provisionr/wiki/Provisionr-Proposal

Biomatters and Amazon Web Services

- The company was founded in 2003 and provides sophisticated visualization and interpretation of genetic data through targeted analysis workflows and actionable results.

- The document discusses the architecture for a genome browser application, including a revised architecture with a VPC across two availability zones with private subnets for security.

- It describes the web stack behind a load balancer with session info stored in Elasticache and autoscaling from 2-6 machines depending on load. The database is a statically scaled MongoDB cluster that cannot use RDS due to local deployment requirements.

Putting the Spark into Functional Fashion Tech Analystics

A talk highlighting the power of functional paradigms for big data pipeline building and Clojure and Apache Spark are a good fit. We cover the lambda architecture and some of the pros and cons that we discovered while using Spark at Metail.

Akanda: Open Source, Production-Ready Network Virtualization for OpenStack

DreamHost has been working on our OpenStack Public Cloud, DreamCompute, for several years. At the onset of the project, we set out with an aggressive set of requirements for our networking functionality, including L2 tenant isolation, IPv6 support from the ground up, and complete support for the then emerging OpenStack Neutron APIs. Our search ended with the realization that there was a gap in OpenStack SDN for L3+ services. Thus, the Akanda project was born.

Akanda is an open source suite of software, services, orchestration, and tools for providing L3+ services in OpenStack. It builds on top of Linux, iptables, and OpenStack Neutron, and is used in production to power DreamCompute's networking capabilities. Using Akanda, an OpenStack provider can provide tenants with a rich, powerful set of L3+ services, including routing, port forwarding, firewalling, and more. This talk will give an introduction to the Akanda project, review the DreamCompute use case, and illustrate how Akanda works under the hood. In addition, we'll discuss future capabilities, operational challenges and tips, and more.

Watch the talk video - https://www.openstack.org/summit/openstack-paris-summit-2014/session-videos/presentation/akanda-layer-3-virtual-networking-services-for-openstack

Neutron scale

In this session, we will discuss the operational issues that Rackspace has encountered during and after implementing Neutron at a large scale. Neutron at scale required a significant amount of development and operations effort, some of which resulted in deviations from upstream code. Finally, our team would like to discuss our solutions and our upstream differences for Neutron and OpenStack that we believe are necessary so that it can be more performant at scale.

Neutron scaling

Deploying neutron at scale in a lrage enterprise environment. This presentation was made at Openstack Atlanta 2014 summit.

fsharp goodness for everyday work

The document discusses using F# for building a .NET client library for Apache Pulsar. It highlights features of F# like null safety, immutability, and builders that help create concise and safe code. It also presents solutions in F# for common problems like implementing connection state, using identifiers safely, and testing without mocks. Overall it argues that F# is well-suited for enterprise applications due to its functional programming principles.

Monitoring with Icinga2 at Adobe

This document discusses Adobe's use of Icinga2 for infrastructure monitoring. It provides an overview of Adobe's Online Experience Management team and environments, why they chose Icinga2, their current Icinga2 configuration and statistics, and how they design configurations for maintainability. It also covers notifications, performance data collection with Graphite, and using Docker to easily deploy Icinga2.

Understanding and Improving Code Generation

Code generation is integral to Spark’s physical execution engine. When implemented, the Spark engine creates optimized bytecode at runtime improving performance when compared to interpreted execution. Spark has taken the next step with whole-stage codegen which collapses an entire query into a single function.

Sneaking Scala through the Back Door

Is your organization committed to the power of the Java platform but stuck in the Java language? Do you spend your time wishing you could use Scala at work, but don’t see how you can get it approved?

There are good reasons to push for it! Scala is a popular language with Java developers because of its expressiveness and lack of boilerplate code. It allows you to focus on the problem rather than the ceremony required by Java. Yet, businesses are often reluctant to move to Scala, citing concerns about availability of developers and risks of working in a new language.

Learn strategies for bringing Scala into your organization, including using it in non-production code, integrating Scala into a Java application, and easing your fellow programmers into functional programming. See how to use Scala for new development while maintaining your investment in existing Java code. Hear stories of how other organizations have done this and succeeded. You’ll leave knowing how you can move to Scala without risking your job or the success of your projects.

Icinga Camp Amsterdam - Icinga2 and Ansible

The document discusses monitoring options and chooses to focus on deploying Icinga2 through Ansible. It covers monitoring, automation, virtual machines, Icinga2 masters and clients, and concludes by discussing how Ansible can be used to deploy Icinga2 in an automated way across inventory.

グラフデータベース Neptune 使ってみた

This document discusses and compares Neptune and JanusGraph graph databases. It provides an overview of Neptune's features like multi-AZ deployment and storage in S3. It also describes how to access Neptune using Gremlin and SPARQL query languages. The document then introduces JanusGraph and notes some key differences when using Gremlin APIs with Neptune versus JanusGraph. It shares the results of a performance test loading Amazon product graph data into both systems. Finally, it discusses options for loading and querying data between Neptune, Athena, Kinesis and other AWS services.

RESTEasy Reactive: Why should you care? | DevNation Tech Talk

RESTEasy Reactive is a JAX-RS implementation built for Quarkus that is async by default using Vert.x. It provides reactive support with Mutiny and has an ultra-fast architecture using code generation and endpoint pipelining. RESTEasy Reactive makes REST endpoints non-blocking and faster by default while also supporting blocking use cases. The presentation demonstrates how to make endpoints faster using RESTEasy Reactive and encourages feedback on the framework.

Scylla Summit 2018: Cassandra and ScyllaDB at Yahoo! Japan

Yahoo! JAPAN is one of the most successful internet service companies in Japan. Their NoSQL Team's Takahiro Iwase and Murukesh Mohanan have been testing out ScyllaDB, comparing it with Cassandra on multiple parameters: performance (both throughout and latency), reliability and ease of use. They will discuss the motivations behind their search for a successor of Cassandra that can handle exceedingly heavy traffic, and their evaluation of ScyllaDB in this regard.

A Deeper Look Into Reactive Streams with Akka Streams 1.0 and Slick 3.0

A Deeper Look Into Reactive Streams with Akka Streams 1.0 and Slick 3.0Legacy Typesafe (now Lightbend)

Reactive Streams 1.0.0 is now live, and so are our implementations in Akka Streams 1.0 and Slick 3.0.

Reactive Streams is an engineering collaboration between heavy hitters in the area of streaming data on the JVM. With the Reactive Streams Special Interest Group, we set out to standardize a common ground for achieving statically-typed, high-performance, low latency, asynchronous streams of data with built-in non-blocking back pressure—with the goal of creating a vibrant ecosystem of interoperating implementations, and with a vision of one day making it into a future version of Java.

Akka (recent winner of “Most Innovative Open Source Tech in 2015”) is a toolkit for building message-driven applications. With Akka Streams 1.0, Akka has incorporated a graphical DSL for composing data streams, an execution model that decouples the stream’s staged computation—it’s “blueprint”—from its execution (allowing for actor-based, single-threaded and fully distributed and clustered execution), type safe stream composition, an implementation of the Reactive Streaming specification that enables back-pressure, and more than 20 predefined stream “processing stages” that provide common streaming transformations that developers can tap into (for splitting streams, transforming streams, merging streams, and more).

Slick is a relational database query and access library for Scala that enables loose-coupling, minimal configuration requirements and abstraction of the complexities of connecting with relational databases. With Slick 3.0, Slick now supports the Reactive Streams API for providing asynchronous stream processing with non-blocking back-pressure. Slick 3.0 also allows elegant mapping across multiple data types, static verification and type inference for embedded SQL statements, compile-time error discovery, and JDBC support for interoperability with all existing drivers.High availability and fault tolerance of openstack

This document discusses building a fault tolerant and highly available architecture for OpenStack. It proposes:

1. A master-master cluster architecture for MySQL and session-level replication for RabbitMQ to provide high availability for the database and message broker components.

2. Disk-level replication using DBRD for Glance, Swift, and Cinder to provide redundancy at the storage level.

3. Ensuring high availability for networking and the Horizon dashboard.

4. Developing predictive and reactive models to detect failures in Nova, Swift, and compute instances and enable recovery of all components.

The document recommends using Pacemaker for cluster-level management and Corosync for reliable messaging between cluster nodes.

Alpakka - Connecting Kafka and ElasticSearch to Akka Streams

In order to work with Akka streams, we need a mechanism to connect Akka Streams to the existing system components. That is where Alpakka comes into the picture.

The Alpakka project is an open source initiative to implement stream-

aware, reactive, integration pipelines for Java and Scala.

It is built on top of Akka Streams and has been designed from ground

up to understand streaming natively and provide a DSL for reactive

and stream-oriented programming, with built-in support for

back pressure.

Icinga 2011 at Chemnitzer Linuxtage

This document provides an overview and agenda for an Icinga Development Team presentation. It introduces Icinga as an open source monitoring project and discusses the team, project structure, tools, current architecture, planned improvements to distributed monitoring capabilities using a Core API and ABA architecture, new HTTP interface, addons, reporting, and roadmap. The presentation concludes with a live demo and Q&A session.

Deployment topologies for high availability (ha)

The document discusses different deployment topologies for OpenStack high availability configurations. It describes the types of nodes in an OpenStack deployment including endpoint, controller, compute, and cinder volume nodes. It then examines several specific topology examples: one using a hardware load balancer with API services on compute nodes, another with a dedicated endpoint node and API services on controller nodes, and a third with simple controller redundancy and API services on controller nodes. Across all the examples, the key is distributing OpenStack services across nodes in a redundant and highly available manner.

Monitoring Open Source Databases with Icinga

This document summarizes a presentation on monitoring open source databases with Icinga. The presentation introduces Icinga as a scalable and extensible monitoring system. It discusses Icinga 2 architecture including zones for availability and scaling. It provides examples of monitoring checks for databases like MySQL, PostgreSQL and MongoDB. Templates and plugins for database monitoring with Icinga are demonstrated. Metrics collection and the Icinga API are also briefly covered.

Introduction to Akka Streams

With the advent of “big data”, it has become inevitable to analyze huge volumes of data in real-time to make sense out of it. For this to happen seamlessly, the streaming of that data is necessary. This is where Reactive Streams step in.

Akka Streams is built on top of the Reactive Streams interface. This webinar will be an introduction to Akka Streams and how it simplifies the aspect of back-pressure in real-time streaming.

Here’s an outline of the webinar -

~ Introduction to the problem set

~ How do Akka Streams help simplify the problem of back-pressure?

~ Basic terminologies of Akka Streams

~ Live demo of a real-life problem being solved with Akka Streams

How Scylla Manager Handles Backups

No matter how resilient your database infrastructure is, backups are still needed to defend against catastrophic failures. Be it the unlikely hardware failure of all data centers, or the more likely and all-too-human user error. Acknowledging the importance of good backup procedures, the Scylla Manager now natively supports backup and restore operations. In this talk, we will learn more about how that works and the guarantees provided, as well as how to set it up to guarantee maximum resiliency to your cluster.

Global Azure Bootcamp 2016 - Azure Automation Invades Your Data Centre

Azure Automation wants you to automate everything, everywhere. Hybrid Workers allow Azure Automation to reach new places within your infrastructure, allowing for more automation and less complexity. Learn how to deploy Hybrid Workers, balance automation workloads across groups of workers, trigger jobs off via web hooks, monitor jobs, remove scheduled tasks and much more.

Salt Air 19 - Intro to SaltStack RAET (reliable asyncronous event transport)

Thomas Hatch, SaltStack CTO, and Sam Smith, SaltStack director of product development, introduce SaltStack RAET as a new alternative transport medium developed specifically with SaltStack infrastructure automation and configuration management in mind. SaltStack built RAET for customers needing substantial speed and scale to automate management of massive data center infrastructure environments.

SaltStack RAET is primarily an async communication layer over truly async connections, defaulting to UDP. The SaltStack RAET system uses CurveCP encryption by default. SaltStack users can now leverage substantial flexibility via either Salt SSH, ØMQ, or RAET to best address numerous use cases.

Building machine learning applications locally with Spark — Joel Pinho Lucas ...

In times of huge amounts of heterogeneous data available, processing and extracting knowledge requires more and more efforts on building complex software architectures. In this context, Apache Spark provides a powerful and efficient approach for large-scale data processing. This talk will briefly introduce a powerful machine learning library (MLlib) along with a general overview of the Spark framework, describing how to launch applications within a cluster. In this way, a demo will show how to simulate a Spark cluster in a local machine using images available on a Docker Hub public repository. In the end, another demo will show how to save time using unit tests for validating jobs before running them in a cluster.

Instant scaling and deployment of Vitis Libraries on Alveo clusters using InA...

Instant scaling and deployment of Vitis Libraries on Alveo clusters using InA...Christoforos Kachris

This document discusses InAccel's FPGA orchestration platform. It provides instant and scalable deployment of FPGA applications through a unified API. The platform allows easy sharing of FPGA resources across multiple users and applications. It demonstrates scaling ResNet50 and Keras models on multiple Alveo cards with no code changes. The platform is integrated with Kubernetes and compatible with Xilinx tools like Vitis.InAccel FPGA resource manager

Coral is a framework that allows the distributed acceleration of large data sets across clusters of FPGA resources using simple programming models. It is designed to scale up from single devices to multiple FPGAs, each offering local computation and storage. Rather than rely on hardware to deliver high-availability, the library itself is designed to detect and handle failures at the application layer, so delivering a highly-available service on top of a cluster of FPGAs, each of which may be prone to failures.

Coral abstracts FPGA resources (device, memory), enabling fault-tolerant heterogeneous distributed systems to easily be built and run effectively.

It allows:

- instant scalability to multiple FPGAs

- seamless virtualization of the FPGA cluster

More Related Content

What's hot

Neutron scaling

Deploying neutron at scale in a lrage enterprise environment. This presentation was made at Openstack Atlanta 2014 summit.

fsharp goodness for everyday work

The document discusses using F# for building a .NET client library for Apache Pulsar. It highlights features of F# like null safety, immutability, and builders that help create concise and safe code. It also presents solutions in F# for common problems like implementing connection state, using identifiers safely, and testing without mocks. Overall it argues that F# is well-suited for enterprise applications due to its functional programming principles.

Monitoring with Icinga2 at Adobe

This document discusses Adobe's use of Icinga2 for infrastructure monitoring. It provides an overview of Adobe's Online Experience Management team and environments, why they chose Icinga2, their current Icinga2 configuration and statistics, and how they design configurations for maintainability. It also covers notifications, performance data collection with Graphite, and using Docker to easily deploy Icinga2.

Understanding and Improving Code Generation

Code generation is integral to Spark’s physical execution engine. When implemented, the Spark engine creates optimized bytecode at runtime improving performance when compared to interpreted execution. Spark has taken the next step with whole-stage codegen which collapses an entire query into a single function.

Sneaking Scala through the Back Door

Is your organization committed to the power of the Java platform but stuck in the Java language? Do you spend your time wishing you could use Scala at work, but don’t see how you can get it approved?

There are good reasons to push for it! Scala is a popular language with Java developers because of its expressiveness and lack of boilerplate code. It allows you to focus on the problem rather than the ceremony required by Java. Yet, businesses are often reluctant to move to Scala, citing concerns about availability of developers and risks of working in a new language.

Learn strategies for bringing Scala into your organization, including using it in non-production code, integrating Scala into a Java application, and easing your fellow programmers into functional programming. See how to use Scala for new development while maintaining your investment in existing Java code. Hear stories of how other organizations have done this and succeeded. You’ll leave knowing how you can move to Scala without risking your job or the success of your projects.

Icinga Camp Amsterdam - Icinga2 and Ansible

The document discusses monitoring options and chooses to focus on deploying Icinga2 through Ansible. It covers monitoring, automation, virtual machines, Icinga2 masters and clients, and concludes by discussing how Ansible can be used to deploy Icinga2 in an automated way across inventory.

グラフデータベース Neptune 使ってみた

This document discusses and compares Neptune and JanusGraph graph databases. It provides an overview of Neptune's features like multi-AZ deployment and storage in S3. It also describes how to access Neptune using Gremlin and SPARQL query languages. The document then introduces JanusGraph and notes some key differences when using Gremlin APIs with Neptune versus JanusGraph. It shares the results of a performance test loading Amazon product graph data into both systems. Finally, it discusses options for loading and querying data between Neptune, Athena, Kinesis and other AWS services.

RESTEasy Reactive: Why should you care? | DevNation Tech Talk

RESTEasy Reactive is a JAX-RS implementation built for Quarkus that is async by default using Vert.x. It provides reactive support with Mutiny and has an ultra-fast architecture using code generation and endpoint pipelining. RESTEasy Reactive makes REST endpoints non-blocking and faster by default while also supporting blocking use cases. The presentation demonstrates how to make endpoints faster using RESTEasy Reactive and encourages feedback on the framework.

Scylla Summit 2018: Cassandra and ScyllaDB at Yahoo! Japan

Yahoo! JAPAN is one of the most successful internet service companies in Japan. Their NoSQL Team's Takahiro Iwase and Murukesh Mohanan have been testing out ScyllaDB, comparing it with Cassandra on multiple parameters: performance (both throughout and latency), reliability and ease of use. They will discuss the motivations behind their search for a successor of Cassandra that can handle exceedingly heavy traffic, and their evaluation of ScyllaDB in this regard.

A Deeper Look Into Reactive Streams with Akka Streams 1.0 and Slick 3.0

A Deeper Look Into Reactive Streams with Akka Streams 1.0 and Slick 3.0Legacy Typesafe (now Lightbend)

Reactive Streams 1.0.0 is now live, and so are our implementations in Akka Streams 1.0 and Slick 3.0.

Reactive Streams is an engineering collaboration between heavy hitters in the area of streaming data on the JVM. With the Reactive Streams Special Interest Group, we set out to standardize a common ground for achieving statically-typed, high-performance, low latency, asynchronous streams of data with built-in non-blocking back pressure—with the goal of creating a vibrant ecosystem of interoperating implementations, and with a vision of one day making it into a future version of Java.

Akka (recent winner of “Most Innovative Open Source Tech in 2015”) is a toolkit for building message-driven applications. With Akka Streams 1.0, Akka has incorporated a graphical DSL for composing data streams, an execution model that decouples the stream’s staged computation—it’s “blueprint”—from its execution (allowing for actor-based, single-threaded and fully distributed and clustered execution), type safe stream composition, an implementation of the Reactive Streaming specification that enables back-pressure, and more than 20 predefined stream “processing stages” that provide common streaming transformations that developers can tap into (for splitting streams, transforming streams, merging streams, and more).

Slick is a relational database query and access library for Scala that enables loose-coupling, minimal configuration requirements and abstraction of the complexities of connecting with relational databases. With Slick 3.0, Slick now supports the Reactive Streams API for providing asynchronous stream processing with non-blocking back-pressure. Slick 3.0 also allows elegant mapping across multiple data types, static verification and type inference for embedded SQL statements, compile-time error discovery, and JDBC support for interoperability with all existing drivers.High availability and fault tolerance of openstack

This document discusses building a fault tolerant and highly available architecture for OpenStack. It proposes:

1. A master-master cluster architecture for MySQL and session-level replication for RabbitMQ to provide high availability for the database and message broker components.

2. Disk-level replication using DBRD for Glance, Swift, and Cinder to provide redundancy at the storage level.

3. Ensuring high availability for networking and the Horizon dashboard.

4. Developing predictive and reactive models to detect failures in Nova, Swift, and compute instances and enable recovery of all components.

The document recommends using Pacemaker for cluster-level management and Corosync for reliable messaging between cluster nodes.

Alpakka - Connecting Kafka and ElasticSearch to Akka Streams

In order to work with Akka streams, we need a mechanism to connect Akka Streams to the existing system components. That is where Alpakka comes into the picture.

The Alpakka project is an open source initiative to implement stream-

aware, reactive, integration pipelines for Java and Scala.

It is built on top of Akka Streams and has been designed from ground

up to understand streaming natively and provide a DSL for reactive

and stream-oriented programming, with built-in support for

back pressure.

Icinga 2011 at Chemnitzer Linuxtage

This document provides an overview and agenda for an Icinga Development Team presentation. It introduces Icinga as an open source monitoring project and discusses the team, project structure, tools, current architecture, planned improvements to distributed monitoring capabilities using a Core API and ABA architecture, new HTTP interface, addons, reporting, and roadmap. The presentation concludes with a live demo and Q&A session.

Deployment topologies for high availability (ha)

The document discusses different deployment topologies for OpenStack high availability configurations. It describes the types of nodes in an OpenStack deployment including endpoint, controller, compute, and cinder volume nodes. It then examines several specific topology examples: one using a hardware load balancer with API services on compute nodes, another with a dedicated endpoint node and API services on controller nodes, and a third with simple controller redundancy and API services on controller nodes. Across all the examples, the key is distributing OpenStack services across nodes in a redundant and highly available manner.

Monitoring Open Source Databases with Icinga

This document summarizes a presentation on monitoring open source databases with Icinga. The presentation introduces Icinga as a scalable and extensible monitoring system. It discusses Icinga 2 architecture including zones for availability and scaling. It provides examples of monitoring checks for databases like MySQL, PostgreSQL and MongoDB. Templates and plugins for database monitoring with Icinga are demonstrated. Metrics collection and the Icinga API are also briefly covered.

Introduction to Akka Streams

With the advent of “big data”, it has become inevitable to analyze huge volumes of data in real-time to make sense out of it. For this to happen seamlessly, the streaming of that data is necessary. This is where Reactive Streams step in.

Akka Streams is built on top of the Reactive Streams interface. This webinar will be an introduction to Akka Streams and how it simplifies the aspect of back-pressure in real-time streaming.

Here’s an outline of the webinar -

~ Introduction to the problem set

~ How do Akka Streams help simplify the problem of back-pressure?

~ Basic terminologies of Akka Streams

~ Live demo of a real-life problem being solved with Akka Streams

How Scylla Manager Handles Backups

No matter how resilient your database infrastructure is, backups are still needed to defend against catastrophic failures. Be it the unlikely hardware failure of all data centers, or the more likely and all-too-human user error. Acknowledging the importance of good backup procedures, the Scylla Manager now natively supports backup and restore operations. In this talk, we will learn more about how that works and the guarantees provided, as well as how to set it up to guarantee maximum resiliency to your cluster.

Global Azure Bootcamp 2016 - Azure Automation Invades Your Data Centre

Azure Automation wants you to automate everything, everywhere. Hybrid Workers allow Azure Automation to reach new places within your infrastructure, allowing for more automation and less complexity. Learn how to deploy Hybrid Workers, balance automation workloads across groups of workers, trigger jobs off via web hooks, monitor jobs, remove scheduled tasks and much more.

Salt Air 19 - Intro to SaltStack RAET (reliable asyncronous event transport)

Thomas Hatch, SaltStack CTO, and Sam Smith, SaltStack director of product development, introduce SaltStack RAET as a new alternative transport medium developed specifically with SaltStack infrastructure automation and configuration management in mind. SaltStack built RAET for customers needing substantial speed and scale to automate management of massive data center infrastructure environments.

SaltStack RAET is primarily an async communication layer over truly async connections, defaulting to UDP. The SaltStack RAET system uses CurveCP encryption by default. SaltStack users can now leverage substantial flexibility via either Salt SSH, ØMQ, or RAET to best address numerous use cases.

Building machine learning applications locally with Spark — Joel Pinho Lucas ...

In times of huge amounts of heterogeneous data available, processing and extracting knowledge requires more and more efforts on building complex software architectures. In this context, Apache Spark provides a powerful and efficient approach for large-scale data processing. This talk will briefly introduce a powerful machine learning library (MLlib) along with a general overview of the Spark framework, describing how to launch applications within a cluster. In this way, a demo will show how to simulate a Spark cluster in a local machine using images available on a Docker Hub public repository. In the end, another demo will show how to save time using unit tests for validating jobs before running them in a cluster.

What's hot (20)

RESTEasy Reactive: Why should you care? | DevNation Tech Talk

RESTEasy Reactive: Why should you care? | DevNation Tech Talk

Scylla Summit 2018: Cassandra and ScyllaDB at Yahoo! Japan

Scylla Summit 2018: Cassandra and ScyllaDB at Yahoo! Japan

A Deeper Look Into Reactive Streams with Akka Streams 1.0 and Slick 3.0

A Deeper Look Into Reactive Streams with Akka Streams 1.0 and Slick 3.0

High availability and fault tolerance of openstack

High availability and fault tolerance of openstack

Alpakka - Connecting Kafka and ElasticSearch to Akka Streams

Alpakka - Connecting Kafka and ElasticSearch to Akka Streams

Global Azure Bootcamp 2016 - Azure Automation Invades Your Data Centre

Global Azure Bootcamp 2016 - Azure Automation Invades Your Data Centre

Salt Air 19 - Intro to SaltStack RAET (reliable asyncronous event transport)

Salt Air 19 - Intro to SaltStack RAET (reliable asyncronous event transport)

Building machine learning applications locally with Spark — Joel Pinho Lucas ...

Building machine learning applications locally with Spark — Joel Pinho Lucas ...

Similar to InAccel FPGA resource manager and orchestrator

Instant scaling and deployment of Vitis Libraries on Alveo clusters using InA...

Instant scaling and deployment of Vitis Libraries on Alveo clusters using InA...Christoforos Kachris

This document discusses InAccel's FPGA orchestration platform. It provides instant and scalable deployment of FPGA applications through a unified API. The platform allows easy sharing of FPGA resources across multiple users and applications. It demonstrates scaling ResNet50 and Keras models on multiple Alveo cards with no code changes. The platform is integrated with Kubernetes and compatible with Xilinx tools like Vitis.InAccel FPGA resource manager

Coral is a framework that allows the distributed acceleration of large data sets across clusters of FPGA resources using simple programming models. It is designed to scale up from single devices to multiple FPGAs, each offering local computation and storage. Rather than rely on hardware to deliver high-availability, the library itself is designed to detect and handle failures at the application layer, so delivering a highly-available service on top of a cluster of FPGAs, each of which may be prone to failures.

Coral abstracts FPGA resources (device, memory), enabling fault-tolerant heterogeneous distributed systems to easily be built and run effectively.

It allows:

- instant scalability to multiple FPGAs

- seamless virtualization of the FPGA cluster

InAccel FPGA Operator for instant Cloud deployment

The InAccel FPGA operator manages FPGA resources in a Kubernetes cluster and automates tasks related to bootstrapping FPGA nodes. Since the FPGA is a special resource in the cluster, it requires a few components to be installed before application workloads can be deployed onto the FPGA. These components include the FPGA drivers, Kubernetes device plugin, container runtime and others such as automatic node labelling, monitoring and more.

A Helm chart is provided for easily deploying the FPGA operator in a cluster to provision the InAccel software on FPGA-enabled nodes.

FPGA-Based Acceleration Architecture for Spark SQL Qi Xie and Quanfu Wang

In this session we will present a Configurable FPGA-Based Spark SQL Acceleration Architecture. It is target to leverage FPGA highly parallel computing capability to accelerate Spark SQL Query and for FPGA’s higher power efficiency than CPU we can lower the power consumption at the same time. The Architecture consists of SQL query decomposition algorithms, fine-grained FPGA based Engine Units which perform basic computation of sub string, arithmetic and logic operations. Using SQL query decomposition algorithm, we are able to decompose a complex SQL query into basic operations and according to their patterns each is fed into an Engine Unit. SQL Engine Units are highly configurable and can be chained together to perform complex Spark SQL queries, finally one SQL query is transformed into a Hardware Pipeline. We will present the performance benchmark results comparing the queries with FGPA-Based Spark SQL Acceleration Architecture on XEON E5 and FPGA to the ones with Spark SQL Query on XEON E5 with 10X ~ 100X improvement and we will demonstrate one SQL query workload from a real customer.

00 opencapi acceleration framework yonglu_ver2

This document discusses OpenCAPI acceleration using the OpenCAPI Acceleration Framework (oc-accel). It provides an overview of the oc-accel components and workflow, benchmarks the OC-Accel bandwidth and latency, and provides examples of how to fully utilize OC-Accel capabilities to accelerate functions on an FPGA. The document also outlines the OC-Accel development process and previews upcoming features like support for ODMA to port existing PCIe accelerators to OpenCAPI.

Using FPGA in Embedded Devices

This presentation is dedicated to Field Programmable Gate Array (FPGA), its interaction with CPU, distrbuted resources, use of FPGA for prototyping chips, and OpenCL in FPGA.

This presentation by Andriy Smolskyy, GlobalLogic Engineering Consultant, was delivered at a GlobalLogic Embedded TechTalk in Lviv on March 29, 2017.

SCFE 2020 OpenCAPI presentation as part of OpenPWOER Tutorial

This document introduces hardware acceleration using FPGAs with OpenCAPI. It discusses how classic FPGA acceleration has issues like slow CPU-managed memory access and lack of data coherency. OpenCAPI allows FPGAs to directly access host memory, providing faster memory access and data coherency. It also introduces the OC-Accel framework that allows programming FPGAs using C/C++ instead of HDL languages, addressing issues like long development times. Example applications demonstrated significant performance improvements using this approach over CPU-only or classic FPGA acceleration methods.

FPGAs in the cloud? (October 2017)

The case for non-CPU architectures

What is an FPGA?

Using FPGAs on AWS

Demo: running an FPGA image on AWS

FPGAs and Deep Learning

Resources

Introduction to EDA Tools

This document provides an introduction to electronic design automation (EDA) tools and discusses different types of programmable logic devices including field programmable gate arrays (FPGAs) and complex programmable logic devices (CPLDs). It describes the basic architecture of FPGAs including logic blocks, interconnects, and input/output blocks. The advantages of FPGAs such as shorter development time and flexibility are also summarized.

Announcing Amazon EC2 F1 Instances with Custom FPGAs

Amazon EC2 F1 is a new compute instance with programmable hardware for application acceleration. With F1, you can directly access custom FPGA hardware on the instance in a few clicks.

Learning Objectives:

• Learn about the capabilities, features, and benefits of the new F1 instances

• Develop your FPGA using the F1 Hardware Developer Kit and FPGA Developer AMI

• Deploy your FPGA acceleration code using F1 instances

• Use F1 instances for hardware acceleration in your applications

• Learn how to offer pre-packaged Amazon FPGA Machine Images (AFIs) to your customers through the AWS Marketplace

Making the most out of Heterogeneous Chips with CPU, GPU and FPGA

Heterogeneous computing is seen as a path forward to deliver the energy and performance improvements needed over the next decade. That way, heterogeneous systems feature GPUs (Graphics Processing Units) or FPGAs (Field Programmable Gate Arrays) that excel at accelerating complex tasks while consuming less energy. There are also heterogeneous architectures on-chip, like the processors developed for mobile devices (laptops, tablets and smartphones) comprised of multiple cores and a GPU.

This talk covers hardware and software aspects of this kind of heterogeneous architectures. Regarding the HW, we briefly discuss the underlying architecture of some heterogeneous chips composed of multicores+GPU and multicores+FPGA, delving into the differences between both kind of accelerators and how to measure the energy they consume. We also address the different solutions to get a coherent view of the memory shared between the cores and the GPU or between the cores and the FPGA.

Accelerating Spark SQL Workloads to 50X Performance with Apache Arrow-Based F...

In Big Data field, Spark SQL is important data processing module for Apache Spark to work with structured row-based data in a majority of operators. Field-programmable gate array(FPGA) with highly customized intellectual property(IP) can not only bring better performance but also lower power consumption to accelerate CPU-intensive segments for an application.

Data science online camp using the flipn stack for edge ai (flink, nifi, pu...

Data science online camp using the flipn stack for edge ai (flink, nifi, pulsar)

Dec 3, 2021

Apache NiFi

Apache Flink

Apache Pulsar

Edge AI

Cloud Native Made Easy

StreamNative

CAPI and OpenCAPI Hardware acceleration enablement

The document describes an IBM workshop on CAPI and OpenCAPI technologies. It provides an overview of FPGA acceleration using SNAP, including how SNAP simplifies FPGA programming using a C/C++ based approach. Examples of use cases for FPGA acceleration like video processing and machine learning inference are also presented.

Programmable logic device (PLD)

This document discusses programmable logic devices (PLDs) like field programmable gate arrays (FPGAs). It provides details on FPGA types and vendors like Xilinx. FPGAs offer efficient resource utilization and flexibility. Xilinx is a major FPGA vendor and the document describes Xilinx FPGA families and features. It also includes an example VHDL code for a half adder circuit implemented on an FPGA.

Make Accelerator Pluggable for Container Engine

This document proposes making hardware accelerators like GPUs and FPGAs pluggable for container engines. It discusses identifying accelerator requirements, preparing runtimes, and managing resources. A pluggable accelerator interface is suggested to integrate different plugins for devices like GPUs, DPDK, and FPGAs in a standardized way and manage their lifecycles together with containers. This would allow containers to easily leverage various hardware accelerators.

Java 40 versions_sgp

The document provides an overview of Java and its evolution over the past 40 versions. It discusses key features and enhancements in recent versions of Java including Java 9-14. It also outlines some of Oracle's major Java projects including Valhalla, Loom, Panama, Amber, and ZGC which are focused on improving performance, concurrency, and developer productivity. The document contains several copyright notices and is intended for informational purposes only.

A Primer on FPGAs - Field Programmable Gate Arrays

A focus on the use of FPGAs by cloud service providers. Includes Microsoft Azure Catapult, Google Tensor Processors, and Amazon EC2 F1 instances. Also includes background info on how to get started with FPGAs

SDAccel Design Contest: SDAccel and F1 Instances

The document discusses Amazon EC2 F1 instances which provide customizable FPGAs for hardware acceleration in the cloud. It describes various FPGA-based cloud infrastructures from companies like Amazon, IBM, Microsoft, and Ryft. It also provides details on the Vivado/SDAccel design contest including dates, team size limits, and prizes for winners. Finally, it discusses the SDAccel development environment for designing FPGA accelerators and deploying them on F1 instances.

nios.ppt

This document provides an introduction to FPGA and SOPC development boards. It discusses the architecture of programmable logic devices including PLDs, CPLDs, and FPGAs. Examples are given of Altera MAX7000 CPLD and Stratix series FPGA architectures. The benefits of FPGAs are outlined compared to ASICs. The document then reviews the FPGA design flow and different design entry methods like VHDL and block diagrams. It provides examples of the Altera Stratix Nios development board and UP2 development board. Finally, it introduces the Altera Quartus II design software used for FPGA development.

Similar to InAccel FPGA resource manager and orchestrator (20)

Instant scaling and deployment of Vitis Libraries on Alveo clusters using InA...

Instant scaling and deployment of Vitis Libraries on Alveo clusters using InA...

InAccel FPGA Operator for instant Cloud deployment

InAccel FPGA Operator for instant Cloud deployment

FPGA-Based Acceleration Architecture for Spark SQL Qi Xie and Quanfu Wang

FPGA-Based Acceleration Architecture for Spark SQL Qi Xie and Quanfu Wang

SCFE 2020 OpenCAPI presentation as part of OpenPWOER Tutorial

SCFE 2020 OpenCAPI presentation as part of OpenPWOER Tutorial

Announcing Amazon EC2 F1 Instances with Custom FPGAs

Announcing Amazon EC2 F1 Instances with Custom FPGAs

Making the most out of Heterogeneous Chips with CPU, GPU and FPGA

Making the most out of Heterogeneous Chips with CPU, GPU and FPGA

Accelerating Spark SQL Workloads to 50X Performance with Apache Arrow-Based F...

Accelerating Spark SQL Workloads to 50X Performance with Apache Arrow-Based F...

Data science online camp using the flipn stack for edge ai (flink, nifi, pu...

Data science online camp using the flipn stack for edge ai (flink, nifi, pu...

CAPI and OpenCAPI Hardware acceleration enablement

CAPI and OpenCAPI Hardware acceleration enablement

A Primer on FPGAs - Field Programmable Gate Arrays

A Primer on FPGAs - Field Programmable Gate Arrays

Recently uploaded

Driving Business Innovation: Latest Generative AI Advancements & Success Story

Are you ready to revolutionize how you handle data? Join us for a webinar where we’ll bring you up to speed with the latest advancements in Generative AI technology and discover how leveraging FME with tools from giants like Google Gemini, Amazon, and Microsoft OpenAI can supercharge your workflow efficiency.

During the hour, we’ll take you through:

Guest Speaker Segment with Hannah Barrington: Dive into the world of dynamic real estate marketing with Hannah, the Marketing Manager at Workspace Group. Hear firsthand how their team generates engaging descriptions for thousands of office units by integrating diverse data sources—from PDF floorplans to web pages—using FME transformers, like OpenAIVisionConnector and AnthropicVisionConnector. This use case will show you how GenAI can streamline content creation for marketing across the board.

Ollama Use Case: Learn how Scenario Specialist Dmitri Bagh has utilized Ollama within FME to input data, create custom models, and enhance security protocols. This segment will include demos to illustrate the full capabilities of FME in AI-driven processes.

Custom AI Models: Discover how to leverage FME to build personalized AI models using your data. Whether it’s populating a model with local data for added security or integrating public AI tools, find out how FME facilitates a versatile and secure approach to AI.

We’ll wrap up with a live Q&A session where you can engage with our experts on your specific use cases, and learn more about optimizing your data workflows with AI.

This webinar is ideal for professionals seeking to harness the power of AI within their data management systems while ensuring high levels of customization and security. Whether you're a novice or an expert, gain actionable insights and strategies to elevate your data processes. Join us to see how FME and AI can revolutionize how you work with data!

HCL Notes and Domino License Cost Reduction in the World of DLAU

Webinar Recording: https://www.panagenda.com/webinars/hcl-notes-and-domino-license-cost-reduction-in-the-world-of-dlau/

The introduction of DLAU and the CCB & CCX licensing model caused quite a stir in the HCL community. As a Notes and Domino customer, you may have faced challenges with unexpected user counts and license costs. You probably have questions on how this new licensing approach works and how to benefit from it. Most importantly, you likely have budget constraints and want to save money where possible. Don’t worry, we can help with all of this!

We’ll show you how to fix common misconfigurations that cause higher-than-expected user counts, and how to identify accounts which you can deactivate to save money. There are also frequent patterns that can cause unnecessary cost, like using a person document instead of a mail-in for shared mailboxes. We’ll provide examples and solutions for those as well. And naturally we’ll explain the new licensing model.

Join HCL Ambassador Marc Thomas in this webinar with a special guest appearance from Franz Walder. It will give you the tools and know-how to stay on top of what is going on with Domino licensing. You will be able lower your cost through an optimized configuration and keep it low going forward.

These topics will be covered

- Reducing license cost by finding and fixing misconfigurations and superfluous accounts

- How do CCB and CCX licenses really work?

- Understanding the DLAU tool and how to best utilize it

- Tips for common problem areas, like team mailboxes, functional/test users, etc

- Practical examples and best practices to implement right away

Building Production Ready Search Pipelines with Spark and Milvus

Spark is the widely used ETL tool for processing, indexing and ingesting data to serving stack for search. Milvus is the production-ready open-source vector database. In this talk we will show how to use Spark to process unstructured data to extract vector representations, and push the vectors to Milvus vector database for search serving.

Artificial Intelligence for XMLDevelopment

In the rapidly evolving landscape of technologies, XML continues to play a vital role in structuring, storing, and transporting data across diverse systems. The recent advancements in artificial intelligence (AI) present new methodologies for enhancing XML development workflows, introducing efficiency, automation, and intelligent capabilities. This presentation will outline the scope and perspective of utilizing AI in XML development. The potential benefits and the possible pitfalls will be highlighted, providing a balanced view of the subject.

We will explore the capabilities of AI in understanding XML markup languages and autonomously creating structured XML content. Additionally, we will examine the capacity of AI to enrich plain text with appropriate XML markup. Practical examples and methodological guidelines will be provided to elucidate how AI can be effectively prompted to interpret and generate accurate XML markup.

Further emphasis will be placed on the role of AI in developing XSLT, or schemas such as XSD and Schematron. We will address the techniques and strategies adopted to create prompts for generating code, explaining code, or refactoring the code, and the results achieved.

The discussion will extend to how AI can be used to transform XML content. In particular, the focus will be on the use of AI XPath extension functions in XSLT, Schematron, Schematron Quick Fixes, or for XML content refactoring.

The presentation aims to deliver a comprehensive overview of AI usage in XML development, providing attendees with the necessary knowledge to make informed decisions. Whether you’re at the early stages of adopting AI or considering integrating it in advanced XML development, this presentation will cover all levels of expertise.

By highlighting the potential advantages and challenges of integrating AI with XML development tools and languages, the presentation seeks to inspire thoughtful conversation around the future of XML development. We’ll not only delve into the technical aspects of AI-powered XML development but also discuss practical implications and possible future directions.

Removing Uninteresting Bytes in Software Fuzzing

Imagine a world where software fuzzing, the process of mutating bytes in test seeds to uncover hidden and erroneous program behaviors, becomes faster and more effective. A lot depends on the initial seeds, which can significantly dictate the trajectory of a fuzzing campaign, particularly in terms of how long it takes to uncover interesting behaviour in your code. We introduce DIAR, a technique designed to speedup fuzzing campaigns by pinpointing and eliminating those uninteresting bytes in the seeds. Picture this: instead of wasting valuable resources on meaningless mutations in large, bloated seeds, DIAR removes the unnecessary bytes, streamlining the entire process.

In this work, we equipped AFL, a popular fuzzer, with DIAR and examined two critical Linux libraries -- Libxml's xmllint, a tool for parsing xml documents, and Binutil's readelf, an essential debugging and security analysis command-line tool used to display detailed information about ELF (Executable and Linkable Format). Our preliminary results show that AFL+DIAR does not only discover new paths more quickly but also achieves higher coverage overall. This work thus showcases how starting with lean and optimized seeds can lead to faster, more comprehensive fuzzing campaigns -- and DIAR helps you find such seeds.

- These are slides of the talk given at IEEE International Conference on Software Testing Verification and Validation Workshop, ICSTW 2022.

Ocean lotus Threat actors project by John Sitima 2024 (1).pptx

Ocean Lotus cyber threat actors represent a sophisticated, persistent, and politically motivated group that poses a significant risk to organizations and individuals in the Southeast Asian region. Their continuous evolution and adaptability underscore the need for robust cybersecurity measures and international cooperation to identify and mitigate the threats posed by such advanced persistent threat groups.

TrustArc Webinar - 2024 Global Privacy Survey

How does your privacy program stack up against your peers? What challenges are privacy teams tackling and prioritizing in 2024?

In the fifth annual Global Privacy Benchmarks Survey, we asked over 1,800 global privacy professionals and business executives to share their perspectives on the current state of privacy inside and outside of their organizations. This year’s report focused on emerging areas of importance for privacy and compliance professionals, including considerations and implications of Artificial Intelligence (AI) technologies, building brand trust, and different approaches for achieving higher privacy competence scores.

See how organizational priorities and strategic approaches to data security and privacy are evolving around the globe.

This webinar will review:

- The top 10 privacy insights from the fifth annual Global Privacy Benchmarks Survey

- The top challenges for privacy leaders, practitioners, and organizations in 2024

- Key themes to consider in developing and maintaining your privacy program

CAKE: Sharing Slices of Confidential Data on Blockchain

Presented at the CAiSE 2024 Forum, Intelligent Information Systems, June 6th, Limassol, Cyprus.

Synopsis: Cooperative information systems typically involve various entities in a collaborative process within a distributed environment. Blockchain technology offers a mechanism for automating such processes, even when only partial trust exists among participants. The data stored on the blockchain is replicated across all nodes in the network, ensuring accessibility to all participants. While this aspect facilitates traceability, integrity, and persistence, it poses challenges for adopting public blockchains in enterprise settings due to confidentiality issues. In this paper, we present a software tool named Control Access via Key Encryption (CAKE), designed to ensure data confidentiality in scenarios involving public blockchains. After outlining its core components and functionalities, we showcase the application of CAKE in the context of a real-world cyber-security project within the logistics domain.

Paper: https://doi.org/10.1007/978-3-031-61000-4_16

Your One-Stop Shop for Python Success: Top 10 US Python Development Providers

Simplify your search for a reliable Python development partner! This list presents the top 10 trusted US providers offering comprehensive Python development services, ensuring your project's success from conception to completion.

20240607 QFM018 Elixir Reading List May 2024

Everything I found interesting about the Elixir programming ecosystem in May 2024

AI-Powered Food Delivery Transforming App Development in Saudi Arabia.pdf

In this blog post, we'll delve into the intersection of AI and app development in Saudi Arabia, focusing on the food delivery sector. We'll explore how AI is revolutionizing the way Saudi consumers order food, how restaurants manage their operations, and how delivery partners navigate the bustling streets of cities like Riyadh, Jeddah, and Dammam. Through real-world case studies, we'll showcase how leading Saudi food delivery apps are leveraging AI to redefine convenience, personalization, and efficiency.

OpenID AuthZEN Interop Read Out - Authorization

During Identiverse 2024 and EIC 2024, members of the OpenID AuthZEN WG got together and demoed their authorization endpoints conforming to the AuthZEN API

Generating privacy-protected synthetic data using Secludy and Milvus

During this demo, the founders of Secludy will demonstrate how their system utilizes Milvus to store and manipulate embeddings for generating privacy-protected synthetic data. Their approach not only maintains the confidentiality of the original data but also enhances the utility and scalability of LLMs under privacy constraints. Attendees, including machine learning engineers, data scientists, and data managers, will witness first-hand how Secludy's integration with Milvus empowers organizations to harness the power of LLMs securely and efficiently.

Essentials of Automations: The Art of Triggers and Actions in FME

In this second installment of our Essentials of Automations webinar series, we’ll explore the landscape of triggers and actions, guiding you through the nuances of authoring and adapting workspaces for seamless automations. Gain an understanding of the full spectrum of triggers and actions available in FME, empowering you to enhance your workspaces for efficient automation.

We’ll kick things off by showcasing the most commonly used event-based triggers, introducing you to various automation workflows like manual triggers, schedules, directory watchers, and more. Plus, see how these elements play out in real scenarios.

Whether you’re tweaking your current setup or building from the ground up, this session will arm you with the tools and insights needed to transform your FME usage into a powerhouse of productivity. Join us to discover effective strategies that simplify complex processes, enhancing your productivity and transforming your data management practices with FME. Let’s turn complexity into clarity and make your workspaces work wonders!

National Security Agency - NSA mobile device best practices

Threats to mobile devices are more prevalent and increasing in scope and complexity. Users of mobile devices desire to take full advantage of the features

available on those devices, but many of the features provide convenience and capability but sacrifice security. This best practices guide outlines steps the users can take to better protect personal devices and information.

Cosa hanno in comune un mattoncino Lego e la backdoor XZ?

ABSTRACT: A prima vista, un mattoncino Lego e la backdoor XZ potrebbero avere in comune il fatto di essere entrambi blocchi di costruzione, o dipendenze di progetti creativi e software. La realtà è che un mattoncino Lego e il caso della backdoor XZ hanno molto di più di tutto ciò in comune.

Partecipate alla presentazione per immergervi in una storia di interoperabilità, standard e formati aperti, per poi discutere del ruolo importante che i contributori hanno in una comunità open source sostenibile.

BIO: Sostenitrice del software libero e dei formati standard e aperti. È stata un membro attivo dei progetti Fedora e openSUSE e ha co-fondato l'Associazione LibreItalia dove è stata coinvolta in diversi eventi, migrazioni e formazione relativi a LibreOffice. In precedenza ha lavorato a migrazioni e corsi di formazione su LibreOffice per diverse amministrazioni pubbliche e privati. Da gennaio 2020 lavora in SUSE come Software Release Engineer per Uyuni e SUSE Manager e quando non segue la sua passione per i computer e per Geeko coltiva la sua curiosità per l'astronomia (da cui deriva il suo nickname deneb_alpha).

How to Get CNIC Information System with Paksim Ga.pptx

Pakdata Cf is a groundbreaking system designed to streamline and facilitate access to CNIC information. This innovative platform leverages advanced technology to provide users with efficient and secure access to their CNIC details.

Recently uploaded (20)

Driving Business Innovation: Latest Generative AI Advancements & Success Story

Driving Business Innovation: Latest Generative AI Advancements & Success Story

HCL Notes and Domino License Cost Reduction in the World of DLAU

HCL Notes and Domino License Cost Reduction in the World of DLAU

Building Production Ready Search Pipelines with Spark and Milvus

Building Production Ready Search Pipelines with Spark and Milvus

Ocean lotus Threat actors project by John Sitima 2024 (1).pptx

Ocean lotus Threat actors project by John Sitima 2024 (1).pptx

CAKE: Sharing Slices of Confidential Data on Blockchain

CAKE: Sharing Slices of Confidential Data on Blockchain

Your One-Stop Shop for Python Success: Top 10 US Python Development Providers

Your One-Stop Shop for Python Success: Top 10 US Python Development Providers

AI-Powered Food Delivery Transforming App Development in Saudi Arabia.pdf

AI-Powered Food Delivery Transforming App Development in Saudi Arabia.pdf

Generating privacy-protected synthetic data using Secludy and Milvus

Generating privacy-protected synthetic data using Secludy and Milvus

Essentials of Automations: The Art of Triggers and Actions in FME

Essentials of Automations: The Art of Triggers and Actions in FME

National Security Agency - NSA mobile device best practices

National Security Agency - NSA mobile device best practices

Cosa hanno in comune un mattoncino Lego e la backdoor XZ?

Cosa hanno in comune un mattoncino Lego e la backdoor XZ?

How to Get CNIC Information System with Paksim Ga.pptx

How to Get CNIC Information System with Paksim Ga.pptx

InAccel FPGA resource manager and orchestrator

- 1. Seamless integration with C/C++, Python, Java and Scala. No need for OpenCL directives Automatic virtualization and scheduling of the applications to the FPGA cluster Fully scalable: Scale-up (multiple FPGAs per node) and Scale-out (multiple FPGA-based servers) Automatic configuration and management of the FPGA bitstreams and memory InAccel Coral Resource Manager InAccel Runtime - Resource isolation Applications FPGA drivers Server FPGA Kernels Automated scaling, deployment, virtualization and management of FPGA clusters

- 2. • 30x simpler code • Software-alike function invoking • No need for OpenCL directives FPGA deployment with software-alike function invoking, No need for OpenCL

- 3. Instant distribution of accelerated functions to the FPGA cluster Instant Scalability inaccel start --fpga= board:0, board:1, board:2

- 4. Automated sharing of the FPGA resources Automated resource sharing from multiple applications or multi-thread applications

- 6. Easy FPGA management with integrated Bitstream artifactory

- 7. https://inaccel.com/coral-fpga-resource-manager/ Learn more and try now for free at: Automated scaling, deployment, virtualization and management of FPGA clusters