This document presents a novel method for improving word alignments in bilingual corpora, specifically focusing on Bulgarian and Russian languages. The authors leverage web resources and various linguistic techniques to enhance translation quality, demonstrating significant improvements through evaluations on a parallel corpus. Key methodologies include modified minimum edit distance, competitive linking, and a combined similarity measure approach, resulting in a more effective translation alignment model.

![Improved Word Alignments Using the Web as a Corpus

Preslav Nakov Svetlin Nakov Elena Paskaleva

UC Berkeley Sofia University Bulgarian Academy of Sciences

EECS, CS division 5 James Boucher Blvd. 25A Acad. G. Bonchev Str.

Berkeley, CA 94720 Sofia, Bulgaria Sofia, Bulgaria

nakov @cs.berkeley.edu nakov @fmi.uni-sofia.bg hellen@lml.bas.bg

Abstract fact, there are more papers published yearly on word

We propose a novel method for improving word alignments than on any other SMT subproblem.

alignments in a parallel sentence-aligned bilin- In the present paper, we describe a novel method for

gual corpus based on the idea that if two words improving word alignments using the Web as a corpus,

are translations of each other then so should a glossary of known word translations (dynamically

be many words in their local contexts. The

idea is formalised using the Web as a corpus,

augmented from the Web using bootstrapping), the

a glossary of known word translations (dynami- vector space model, weighted minimum edit distance,

cally augmented from the Web using bootstrap- competitive linking, and the IBM models. The poten-

ping), the vector space model, linguistically mo- tial of the method is demonstrated on a Bulgarian-

tivated weighted minimum edit distance, com- Russian bilingual corpus.

petitive linking, and the IBM models. Evalua- The rest of the paper is organised as follows: section

tion results on a Bulgarian-Russian corpus show 2 explains the method in detail, section 3 describes

a sizable improvement both in word alignment the corpus and the resources used, section 4 contains

and in translation quality. the evaluation, section 5 points to important related

research, and section 6 concludes with some possible

directions for future work.

Keywords

Machine translation, word alignments, competitive linking, Web

as a corpus, string similarity, edit distance. 2 Method

Our method combines two similarity measures which

1 Introduction make use of different information sources. First, we

define a language-specific modified minimum edit dis-

The beginning of modern Statistical Machine Transla- tance, based on linguistically-motivated rules target-

tion (SMT) can be traced back to 1988, when Brown et ing Bulgarian-Russian cognate pairs. Second, we

al. [5] from IBM published a formalised mathematical define a distributional semantic similarity measure,

formulation of the translation problem and proposed based on the idea that if two words represent a transla-

five word alignment models – IBM models 1, 2, 3, 4 and tions pair, then the most frequently co-occurring words

5. Starting with a bilingual parallel sentence-aligned in their local contexts should be translations of each

corpus, the IBM models learn how to translate individ- other as well. This intuition is formalised using the

ual words and the probabilities of these translations. Web as a corpus, a bilingual glossary of word trans-

Later, decoders like the ISI ReWrite Decoder [9] lation pairs used as “bridges”, and the vector space

became available, which made it possible to quickly model. The two measures are combined with com-

build SMT systems with decent quality. petitive linking [19] in order to obtain high quality

An important shift happened in 2004, when the word translation pairs, which are then appended to

Pharaoh model [11] has been proposed, which uses the bilingual sentence-aligned corpus in order to bias

whole phrases (typically of length up to 7, not neces- the subsequent training of the IBM word alignment

sarily representing linguistic units), rather than just models [5].

words. This led to a significant improvement in trans-

lation quality, since phrases can encode local gen-

der/number agreement, facilitate choosing the correct 2.1 Orthographic Similarity

sense for ambiguous words, and naturally handle fixed

phrases and idioms. While methods have been pro- We use an orthographic similarity measure, which is

posed for learning translation phrases directly [17], based on the minimum edit distance (med) or Leven-

the most popular alignment template approach [23] re- shtein distance [16]. med calculates the distance be-

quires bi-directional word alignments at the sentence tween two strings s1 and s2 as the minimum number of

level from which phrases consistent with those align- edit operations – insert, replace, delete – needed

ments are extracted. Since better word alignments can to transform s1 into s2 . For example, the med be-

lead to better phrases1 , improving word alignments re- tween r. pervyi (Russian, ‘first’) and b. p rvi t

mains one of the primary research problems in SMT: in

quality is indirect; improving the former does not necessarily

1 The dependency between word alignments and translation improve the latter.

1](https://image.slidesharecdn.com/nakov-nakov-paskaleva-ranlp-2007-improved-word-alignments-090830190228-phpapp01/75/Improved-Word-Alignments-Using-the-Web-as-a-Corpus-1-2048.jpg)

![MMED(s1 ,s2 )

(Bulgarian, ‘the first’) is 4: three replace operations MMEDR(s1 , s2 ) = 1 − max(|s1 |,|s2 |)

(e → , y → i, i → ) and one insert (of t).

We modify the classic med in two ways. First, we Below we compare mmedr with minimum edit dis-

normalise the two strings, taking into account some tance ratio (medr):

general graphemic correlations between the phonetico- MED(s1 ,s2 )

graphemic systems of the two closely-related Slavonic MEDR(s1 , s2 ) = 1 − max(|s1 |,|s2 |)

languages – Bulgarian and Russian:

and longest common subsequence ratio (lcsr) [18]:

• For Russian words, we remove the letters and |LCS(s1 ,s2 )|

, as their graphemic collocations are excluded LCSR(s1 , s2 ) = max(|s1 |,|s2 |)

in Bulgarian, e.g. between two consonants (r.

sil no ↔ b. silno, strongly), following a In the last definition, lcs(s1 , s2 ) refers to the

consonant (r. ob vlenie ↔ b. ob vlenie, longest common subsequence of s1 and s2 , e.g.

an announcement), etc. lcs(pervyi, p rvi t) = prv, and therefore

• For Russian words, we remove the ending i, mmedr(pervyi, p rvi t) = 3/7 ≈ 0.43

which is the typical nominative adjective ending

in Russian, but not in Bulgarian, e.g. r. detskii We obtain the same score using mmed:

↔ b. detski (children’s).

mmed(pervyi, p rvi t) = 1 − 4/7 ≈ 0.43

• For Bulgarian words, we remove the definite arti-

cle, e.g. b. gorski t (the forestal) → b. gorski while with mmedr we have:

(forestal). The definite article is the only aggluti-

native morpheme in Bulgarian and has no coun- mmedr(pervyi, p rvi t) = 1 − 0.5/7 ≈ 0.93

terpart in Russian: Bulgarian has definite, but

not indefinite article, and there are no articles in

Russian.

2.2 Semantic Similarity

• We transliterate the Russian-specific letters

The second basic similarity measure we use is web-

(missing in the Bulgarian alphabet) or letter com-

only, which measures the semantic similarity between

binations in a regular way: y ↔ i, ↔ e, and

a Russian word wru and a Bulgarian word wbg us-

xt ↔ w, e.g. r. lektron ↔ b. elektron (an

ing the Web as a corpus and a glossary G of known

electron), r. vyl ↔ b. vil (past participle of to

Bulgarian-Russian translation pairs used as “bridges”.

howl), r. xtab ↔ b. wab (mil. a staff), etc.

The basic idea is that if two words are translations of

• Finally, we remove all double letters in both lan- each other then many of the words in their respective

guages (e.g. nn → n; ss → s): While consonant local contexts should be mutual translations as well.

and vowel doubling is very rare in Bulgarian (ex- First, we issue a query to Google for wru or wbg ,

cept at morpheme boundaries for a limited num- limiting the language to Russian or Bulgarian, and we

ber of morphemes), it is more common in Russian, collect the text from the resulting 1,000 snippets. We

e.g. in case of words of foreign origin: r. assam- then extract the words from the local context (two

ble → b. asamble (an assembly) words on either side of the target word), we remove

the stopwords (prepositions, pronouns, conjunctions,

Second, we use different letter-pair specific costs for interjections and some adverbs), we lemmatise the re-

replace. We use 0.5 for all vowel to vowel substitu- maining words, and we filter out the words that are

tions, e.g. o ↔ e as in r. lico ↔ b. lice (a face). not in G. We further replace each Russian word with

We also use 0.5 for some consonant-consonant replace- its Bulgarian counter-part in G. As a result, we end

ments, e.g. s ↔ z. Such regular phonetic changes are up with two Bulgarian frequency vectors, correspond-

reflected in different ways in the orthographic systems ing to wru and wbg , respectively. Finally, we tf.idf-

of the two languages, Bulgarian being more conser- weight the vector coordinates [31] and we calculate the

vative and sticking to morphological principles. For semantic similarity between wbg and wru as the cosine

example, in Bulgarian the final z in prefixes like iz- between their corresponding vectors.

and raz- never change to s, while in Russian they

sometimes do, e.g. r. issledovatel ↔ b. izsle- 2.3 Combined Similarity Measures

dovatel (an explorer), r. rasskaz ↔ b. razkaz (a

story), etc. In our experiments (see below), we have found that

We use a cost of 1 for all other replacements. mmedr yields a better precision, while web-only has

It is easy to see that this modified minimum edit a better recall. Therefore we tried to combine the two

distance (mmed) is more adequate than med – it is similarity measures in different ways:

only 0.5 for r. pervyi and b. p rvi t: we first

• web-avg: average of web-only and mmedr;

normalise them to pervi and p rvi, and then we

do a single vowel-vowel replace with the cost of 0.5. • web-max: maximum of web-only and mmedr;

We transform mmed into a similarity measure, mod-

ified minimum edit distance ratio (mmedr) using the • web-cut: The value of web-cut(s1 , s2 ) is 1, if

following formula (|s| is the number of letters in s be- mmedr(s1 , s2 ) ≥ α (0 < α < 1), and is equal to

fore the normalisation): web-only(s1 , s2 ), otherwise.

2](https://image.slidesharecdn.com/nakov-nakov-paskaleva-ranlp-2007-improved-word-alignments-090830190228-phpapp01/75/Improved-Word-Alignments-Using-the-Web-as-a-Corpus-2-2048.jpg)

![2.4 Competitive Linking sian word with each Bulgarian one – due to poly-

semy/homonymy some words had multiple transla-

The above similarity measures are used in combina- tions. As a result, we obtained a glossary G of 3,794

tion with competitive linking [19], which assumes that word translation pairs.

a source word is either translated with a single target Due to the modest glossary size, in our initial ex-

word or is not translated at all. Given a sentence pair, periments, we were lacking translations for many of

the similarity between all Bulgarian-Russian word the most frequent context words. For example, when

pairs is calculated2 , which induces a fully-connected comparing r. plat e (a dress) and b. rokl (a

weighted bipartite graph. Then a greedy approxima- dress), we find adjectives like r. svadebnoe (wed-

tion to the maximum weighted bipartite matching in ding) and r. veqernee (evening) among the most fre-

that graph is extracted as follows: First, the most sim- quent Russian context words, and b. svatbena and

ilar pair of unaligned words is aligned and both words b. veqerna among the most frequent Bulgarian con-

are discarded from further consideration. Then the text words. While missing in our bilingual glossary,

next most similar pair of unaligned words is aligned it is easy to see that they are orthographically similar

and the two words are discarded, and so forth. The and thus likely cognates. Therefore, we automatically

process is repeated until there are no unaligned words extended G with possible cognate pairs. For the pur-

left or until the maximal word pair similarity falls be- pose, we collected the most frequent 30 non-stopwords

low a pre-specified threshold θ (0 ≤ θ ≤ 1), which RU30 and BG30 from the local contexts of wru and

could leave some words unaligned. wbg , respectively, that were missing in our glossary.

We then calculated the mmedr for every word pair

(r, b) ∈ (RU30 , BG30 ), and we added to G all pairs for

3 Resources which the value was above 0.90. As a result, we man-

aged to extend G with 6,289 additional high-quality

3.1 Parallel Corpus translation pairs.

We use a parallel sentence-aligned Bulgarian-Russian

corpus: the Russian novel Lord of the World3 by 4 Evaluation

Alexander Beliaev and its Bulgarian translation4 . The

text has been sentence aligned automatically using the We evaluate the similarity measures in four different

alignment tool MARK ALISTeR [26], which is based ways: manual analysis of web-cut, alignment quality

on the Gale-Church algorithm [8]. As a result, we ob- of competitive linking, alignment quality of the IBM

tained 5,827 parallel sentences, which we divided into models for a corpus augmented with word translations

training (4,827 sentences), tuning (500 sentences), and from competitive linking, and translation quality of a

testing set (500 sentences). phrase-based SMT trained on that corpus.

3.2 Grammatical Resources 4.1 Manual Evaluation of web-cut

We use monolingual dictionaries for lemmatisation. Recall that by definition web-cut(s1 , s2 ) is 1, if

For Bulgarian, we use a large morphological dictionary, mmedr(s1 , s2 ) ≥ α, and is equal to web-only(s1 , s2 ),

containing about 1,000,000 wordforms and 70,000 lem- otherwise. To find the best value for α, we tried all

mata [25], created at the Linguistic Modeling De- values α ∈ {0.01, 0.02, 0.03, . . . , 0.99}. For each value,

partment, Institute for Parallel Processing, Bulgar- we word-aligned the training sentences from the par-

ian Academy of Sciences. The dictionary is in DE- allel corpus using competitive linking and web-cut,

LAF format [30]: each entry consists of a wordform, a and we extracted a list of the distinct aligned word

corresponding lemma, followed by morphological and pairs, which we added twice as additional “sentence”

grammatical information. There can be multiple en- pairs to the training corpus. We then calculated the

tries for the same wordform, in case of multiple homo- perplexity of IBM model 4 for that augmented corpus.

graphs. We also use a large grammatical dictionary This procedure was repeated for all candidate values

of Russian in the same format, consisting of 1,500,000 of α, and finally α = 0.62 was selected as it yielded

wordforms and 100,000 lemmata, based on the Gram- the lowest perplexity.6

matical Dictionary of A. Zaliznjak [33]. Its electronic The last author, a native speaker of Bulgarian who

version was supplied by the Computerised fund of Rus- is fluent in Russian, manually examined and anno-

sian language, Institute of Russian language, Russian tated as correct, rough or wrong the 14,246 distinct

Academy of Sciences. aligned Bulgarian-Russian word type pairs, obtained

with competitive linking and web-cut for α = 0.62.

The following groups naturally emerge:

3.3 Bilingual Glossary

We built a bilingual glossary from an online Bulgarian- 1. “Identical” word pairs (mmedr(s1 , s2 ) = 1):

Russian dictionary5 . First, we removed all multi- 1,309 or 9% of all pairs. 70% of them are com-

word expressions. Then we combined each Rus- pletely identical, e.g. skoro (soon) is spelled the

same way in both Bulgarian and Russian. The re-

2 Due to their special distribution, stopwords and short words maining 30% exhibit regular graphemic changes,

(one or two letters) are not used in competitive linking. which are recognised by mmedr (See section 2.1.)

3 http://www.lib.ru

4 http://borislav.free.fr/mylib 6 This value is close to 0.58, which has been found to perform

5 http://www.bgru.net/intr/dictionary/ best for lcsr on Western-European languages [15].

3](https://image.slidesharecdn.com/nakov-nakov-paskaleva-ranlp-2007-improved-word-alignments-090830190228-phpapp01/75/Improved-Word-Alignments-Using-the-Web-as-a-Corpus-3-2048.jpg)

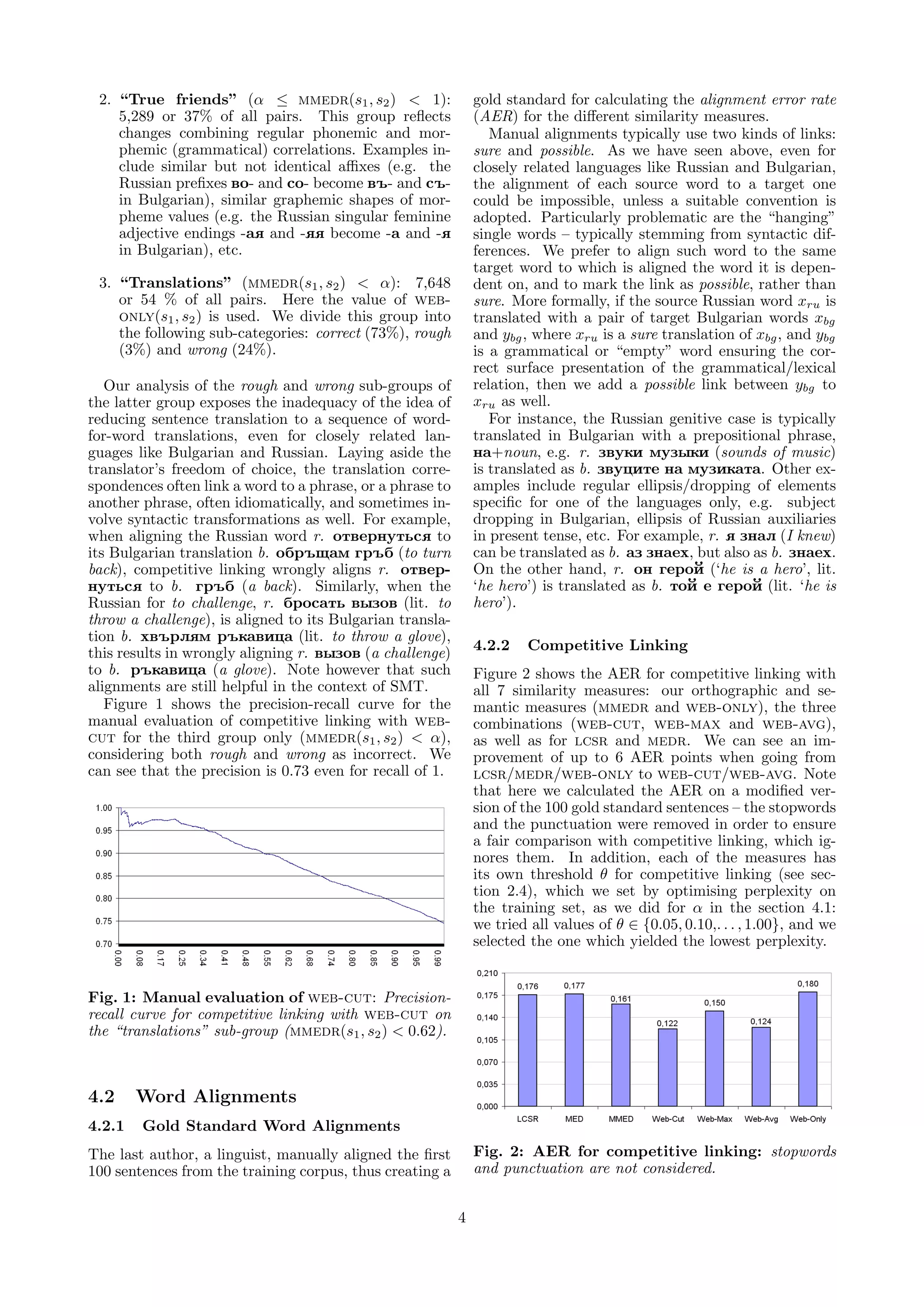

![4.2.3 IBM Models

In our next experiment, we first extracted a list of

the distinct word pairs aligned with competitive link-

ing, and we added them twice as additional “sen-

tence” pairs to the training corpus, as in section 4.1.

We then generated two directed IBM model 4 word

alignments (Bulgarian → Russian, Russian → Bulgar-

ian) for the new corpus, and we combined them using

the interect+grow heuristic [22]. Table 3 shows the

AER for these combined alignments. We can see that

while training on the augmented corpus lowers AER Fig. 4: Translation quality: Bleu score.

by about 4 points compared to the baseline (which is

trained on the original corpus), there is little difference

between the similarity measures.

Fig. 5: Translation quality: NIST score.

Fig. 3: AER for IBM model 4: intersect+grow. Al-Onaizan & al. [1] create improved Czech-English

word alignments using probable cognates extracted

with one of the variations of lcsr [18] described in

[32]. They tried to constrain the co-occurrences, to

4.3 Machine Translation seed the parameters of IBM model 1, but their best

As we said in the introduction, word alignments are results were achieved by simply adding the cognates

an important first step in the process of building a to the training corpus as additional “sentences”. Us-

phrase-based SMT. However, as many researchers have ing a variation of that technique, Kondrak, Marcu and

reported, better AER does not necessarily mean im- Knight [15] demonstrated improved translation qual-

proved machine translation quality [2]. Therefore, we ity for nine European languages. We extend this work,

built a full Russian → Bulgarian SMT system in order by adding competitive linking [19], language-specific

to assess the actual impact of the corpus augmentation weights, and a Web-based semantic similarity mea-

(as described in the previous section) on the transla- sure.

tion quality. Koehn & Knight [12] describe several techniques for

Starting with the symmetrised word alignments de- inducing translation lexicons. Starting with unrelated

scribed in the previous section, we extracted phrase- German and English corpora, they look for (1) identi-

level translation pairs using the alignment template ap- cal words, (2) cognates, (3) words with similar frequen-

proach [13]. We then trained a log-linear model with cies, (4) words with similar meanings, and (5) words

the standard feature functions: language model prob- with similar contexts. This is a bootstrapping process,

ability, word penalty, distortion cost, forward phrase where new translation pairs are added to the lexicon

translation probability, backward phrase translation at each iteration.

probability, forward lexical weight, backward lexical Rapp [27] describes a correlation between the co-

weight, and phrase penalty. The feature weights, were occurrences of words that are translations of each

set by maximising Bleu [24] on the development set other. In particular, he shows that if in a text in

using minimum error rate training [21]. one language two words A and B co-occur more of-

Tables 4 and 5 show the evaluation on the test set ten than expected by chance, then in a text in an-

in terms of Bleu and NIST scores. We can see a siz- other language the translations of A and B are also

able difference between the different similarity mea- likely to co-occur frequently. Based on this observa-

sures: the combined measures (web-cut, web-max tion, he proposes a model for finding the most accurate

and web-avg) clearly outperforming lcsr and medr. cross-linguistic mapping between German and English

mmedr outperforms them as well, but the difference words using non-parallel corpora. His approach differs

from lcsr is negligible. from ours in the similarity measure, the text source,

and the addressed problem. In later work on the same

problem, Rapp [28] represents the context of the target

5 Related Work word with four vectors: one for the words immediately

preceding the target, another one for the ones immedi-

Many researchers have exploited the intuition that ately following the target, and two more for the words

words in two different languages with similar or identi- one more word before/after the target.

cal spelling are likely to be translations of each other. Fung and Yee [7] extract word-level translations

5](https://image.slidesharecdn.com/nakov-nakov-paskaleva-ranlp-2007-improved-word-alignments-090830190228-phpapp01/75/Improved-Word-Alignments-Using-the-Web-as-a-Corpus-5-2048.jpg)

![from non-parallel corpora. They count the number of [8] W. Gale and K. Church. A program for aligning sentences in

bilingual corpora. Computational Linguistics, 19(1):75–102,

sentence-level co-occurrences of the target word with 1993.

a fixed set of “seed” words in order to rank the candi-

[9] U. Germann, M. Jahr, K. Knight, D. Marcu, and K. Yamada.

dates in a vector-space model using different similarity Fast decoding and optimal decoding for machine translation.

measures, after normalisation and tf.idf-weighting In Proceedings of ACL, pages 228–235, 2001.

[31]. The process starts with a small initial set of [10] A. Klementiev and D. Roth. Named entity transliteration and

seed words, which are dynamically augmented as new discovery from multilingual comparable corpora. In Proceed-

ings of HLT-NAACL, pages 82–88, June 2006.

translation pairs are identified. We do not have a

fixed set of seed words, but generate it dynamically, [11] P. Koehn. Pharaoh: a beam search decoder for phrase-based

statistical machine translation models. In Proceedings of

since finding the number of co-occurrences of the tar- AMTA, pages 115–124, 2004.

get word with each of the seed words would require

[12] P. Koehn and K. Knight. Learning a translation lexicon from

prohibitively many search engine queries. monolingual corpora. In Proceedings of ACL workshop on

Diab & Finch [6] propose a statistical word-level Unsupervised Lexical Acquisition, pages 9–16, 2002.

translation model for comparable corpora, which finds [13] P. Koehn, F. J. Och, and D. Marcu. Statistical phrase-based

a cross-linguistic mapping between the words in the translation. In Proceedings of NAACL, pages 48–54, 2003.

two corpora such that the source language word-level [14] G. Kondrak. Cognates and word alignment in bitexts. In Pro-

co-occurrences are preserved as closely as possible. ceedings of the 10th Machine Translation Summit, pages 305–

312, 2005.

Finally, there is a lot of research on string sim-

ilarity which has been or potentially could be ap- [15] G. Kondrak, D. Marcu, and K. Knight. Cognates can improve

statistical translation models. In Proceedings of HLT-NAACL

plied to cognate identification: Ristad&Yianilos’98 2003 (companion volume), pages 44–48, 2003.

[29] learn the med weights using a stochastic trans- [16] V. Levenshtein. Binary codes capable of correcting deletions,

ducer. Tiedemann’99 [32] and Mulloni&Pekar’06 [20] insertions, and reversals. Soviet Physics Doklady, (10):707–

learn spelling changes between two languages for lcsr 710, 1966.

and for nedr respectively. Kondrak’05 [14] pro- [17] D. Marcu and W. Wong. A phrase-based, joint probability

poses longest common prefix ratio, and longest com- model for statistical machine translation. In Proceedings of

EMNLP, pages 133–139, 2002.

mon subsequence formula, which counters lcsr’s pref-

erence for short words. Klementiev&Roth’06 [10] [18] D. Melamed. Automatic evaluation and uniform filter cascades

for inducing N-best translation lexicons. In Proceedings of the

and Bergsma&Kondrak’07 [3] propose a discrimina- Third Workshop on Very Large Corpora, pages 184–198, 1995.

tive frameworks for string similarity. Brill&Moore’00 [19] D. Melamed. Models of translational equivalence among words.

[4] learn string-level substitutions. Computational Linguistics, 26(2):221–249, 2000.

[20] A. Mulloni and V. Pekar. Automatic detection of orthographic

cues for cognate recognition. In Proceedings of LREC, pages

6 Conclusion and Future Work 2387–2390, 2006.

[21] F. J. Och. Minimum error rate training in statistical machine

We have proposed and demonstrated the potential of translation. In Proceedings of ACL, 2003.

a novel method for improving word alignments using [22] F. J. Och and H. Ney. A systematic comparison of various sta-

linguistic knowledge and the Web as a corpus. tistical alignment models. Computational Linguistics, 29(1),

2003.

There are many things we plan to do in the future.

First, we would like to replace competitive linking with [23] F. J. Och and H. Ney. The alignment template approach to

statistical machine translation. Computational Linguistics,

maximum weight bipartite matching. We also want to 30(4):417–449, 2004.

improve mmed by adding more linguistically knowl- [24] K. Papineni, S. Roukos, T. Ward, and W.-J. Zhu. Bleu: a

edge or by learning the nedr or lcsr weights auto- method for automatic evaluation of machine translation. In

matically as described in [20, 29, 32]. Even better re- Proceedings of ACL, pages 311–318, 2002.

sults could be achieved with string-level substitutions [25] E. Paskaleva. Compilation and validation of morphological re-

[4] or a discriminative approach [3, 10] . sources. In Workshop on Balkan Language Resources and

Tools (Balkan Conference on Informatics), pages 68–74, 2003.

[26] E. Paskaleva and S. Mihov. Second language acquisition from

References aligned corpora. In Proceedings of Language Technology and

Language Teaching, pages 43–52, 1998.

[1] Y. Al-Onaizan, J. Curin, M. Jahr, K. Knight, J. Lafferty,

D. Melamed, F. Och, D. Purdy, N. Smith, and D. Yarowsky. [27] R. Rapp. Identifying word translations in non-parallel texts.

Statistical machine translation. Technical report, 1999. In Proceedings of ACL, pages 320–322, 1995.

[2] N. Ayan and B. Dorr. Going beyond AER: an extensive analy- [28] R. Rapp. Automatic identification of word translations from

sis of word alignments and their impact on MT. In Proceedings unrelated english and german corpora. In Proceedings of ACL,

of ACL, pages 9–16, 2006. pages 519–526, 1999.

[3] S. Bergsma and G. Kondrak. Alignment-based discriminative [29] E. Ristad and P. Yianilos. Learning string-edit distance. IEEE

string similarity. In Proceedings of ACL, pages 656–663, 2007. Trans. Pattern Anal. Mach. Intell., 20(5):522–532, 1998.

[4] E. Brill and R. C. Moore. An improved error model for noisy [30] M. Silberztein. Dictionnaires ´lectroniques et analyse au-

e

channel spelling correction. In Proceedings of ACL, pages 286– tomatique de textes: le syst`me INTEX. Masson, Paris, 1993.

e

293, 2000.

[31] K. Sparck-Jones. A statistical interpretation of term specificity

[5] P. Brown, J. Cocke, S. D. Pietra, V. D. Pietra, F. Jelinek, and its application in retrieval. Journal of Documentation,

R. Mercer, and P. Roossin. A statistical approach to language 28:11–21, 1972.

translation. In Proceedings of the 12th conference on Com-

putational linguistics, pages 71–76, 1988. [32] J. Tiedemann. Automatic construction of weighted string sim-

ilarity measures. In Proceedings of EMNLP-VLC, pages 213–

[6] M. Diab and S. Finch. A statistical word-level translation 219, 1999.

model for comparable corpora. In Proceedings of RIAO, 2000.

[33] A. Zaliznyak. Grammatical Dictionary of Russian. Russky

[7] P. Fung and L. Yee. An IR approach for translating from non- yazyk, Moscow, 1977 (A. Zalizn k, Grammatiqeskii slo-

parallel, comparable texts. In Proceedings of ACL, volume 1, var russkogo zyka. “Russkii zyk”, Moskva, 1977).

pages 414–420, 1998.

6](https://image.slidesharecdn.com/nakov-nakov-paskaleva-ranlp-2007-improved-word-alignments-090830190228-phpapp01/75/Improved-Word-Alignments-Using-the-Web-as-a-Corpus-6-2048.jpg)