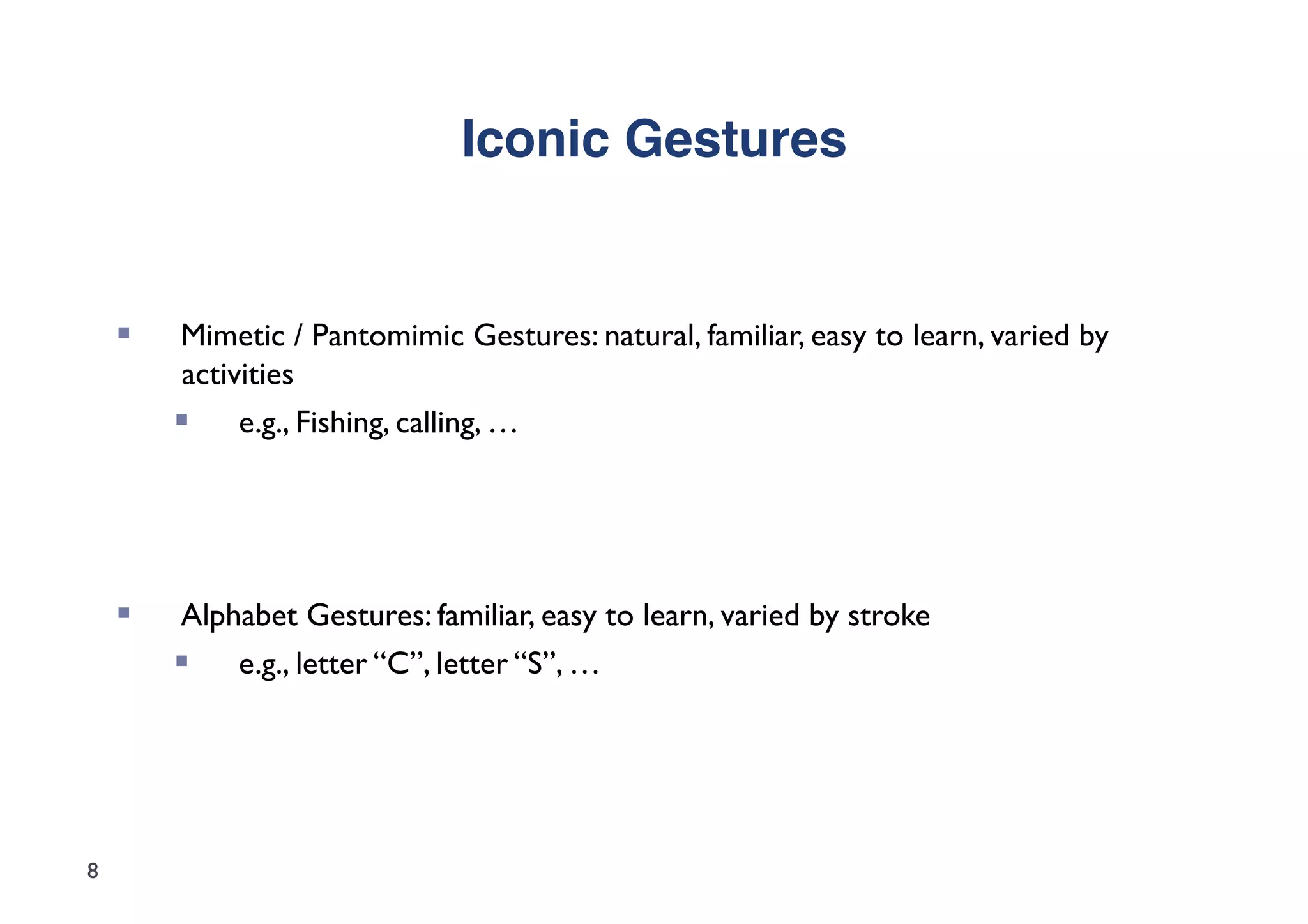

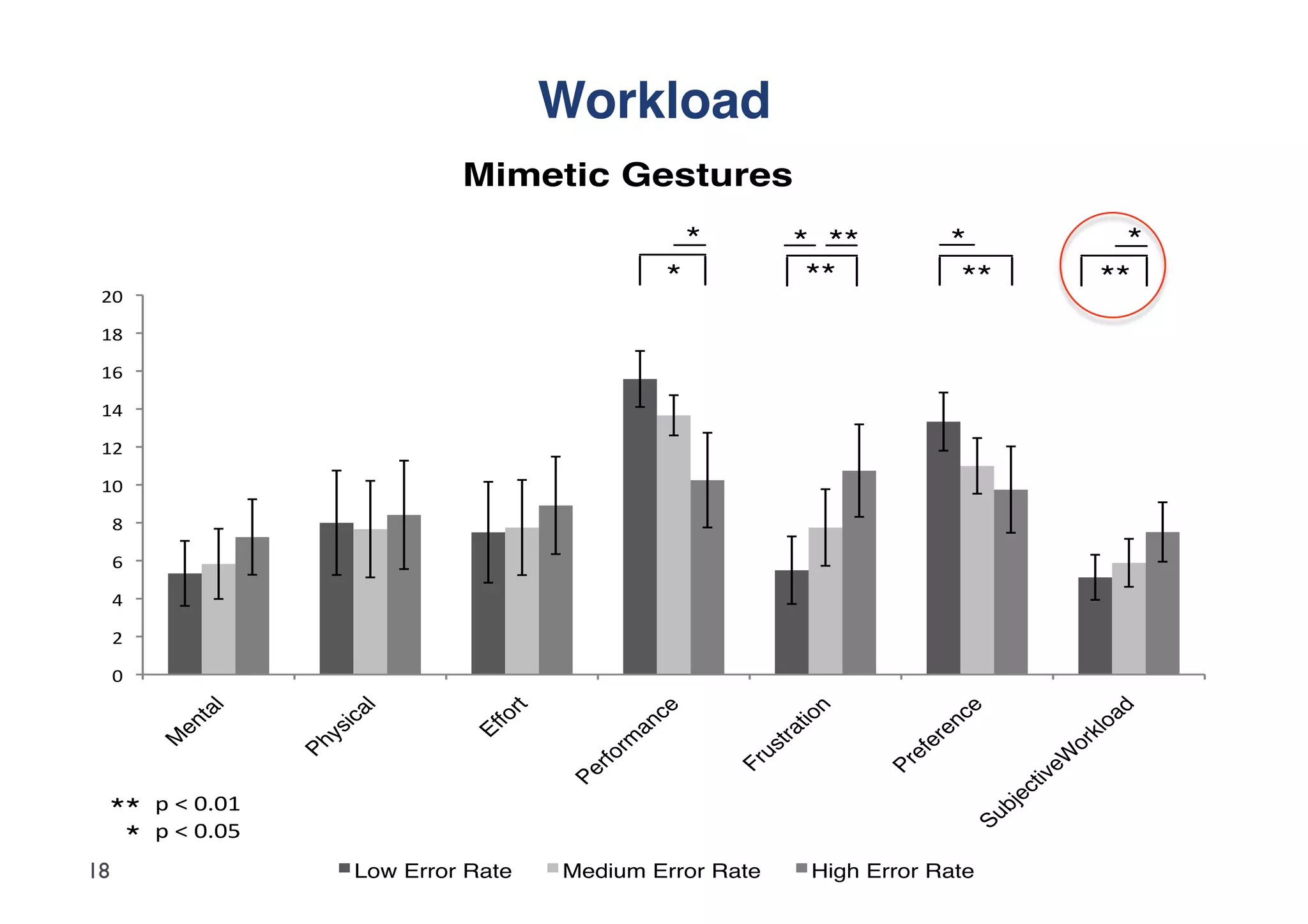

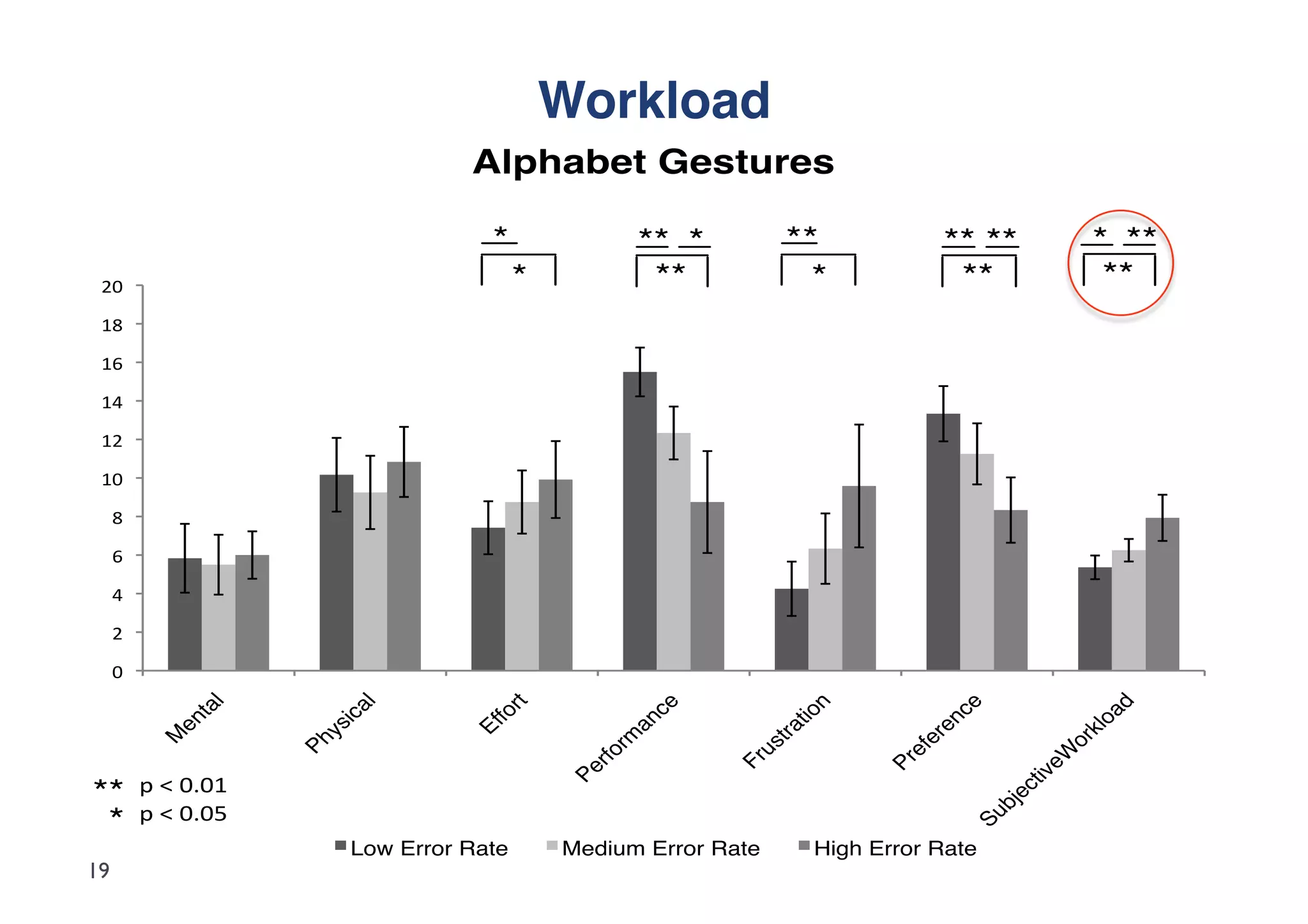

The document investigates how well users tolerate recognition errors during device-based gesture interaction, comparing mimetic and alphabet gestures under varying error rates. Results indicate that mimetic gestures are more robust and are tolerated at error rates of up to 40%, while alphabet gestures fare worse, with more rigid responses leading to greater user frustration. The study draws implications for gesture recognition technologies and interaction design, suggesting that mimetic gestures offer the better user experience even when recognition fails.

![Study Design"

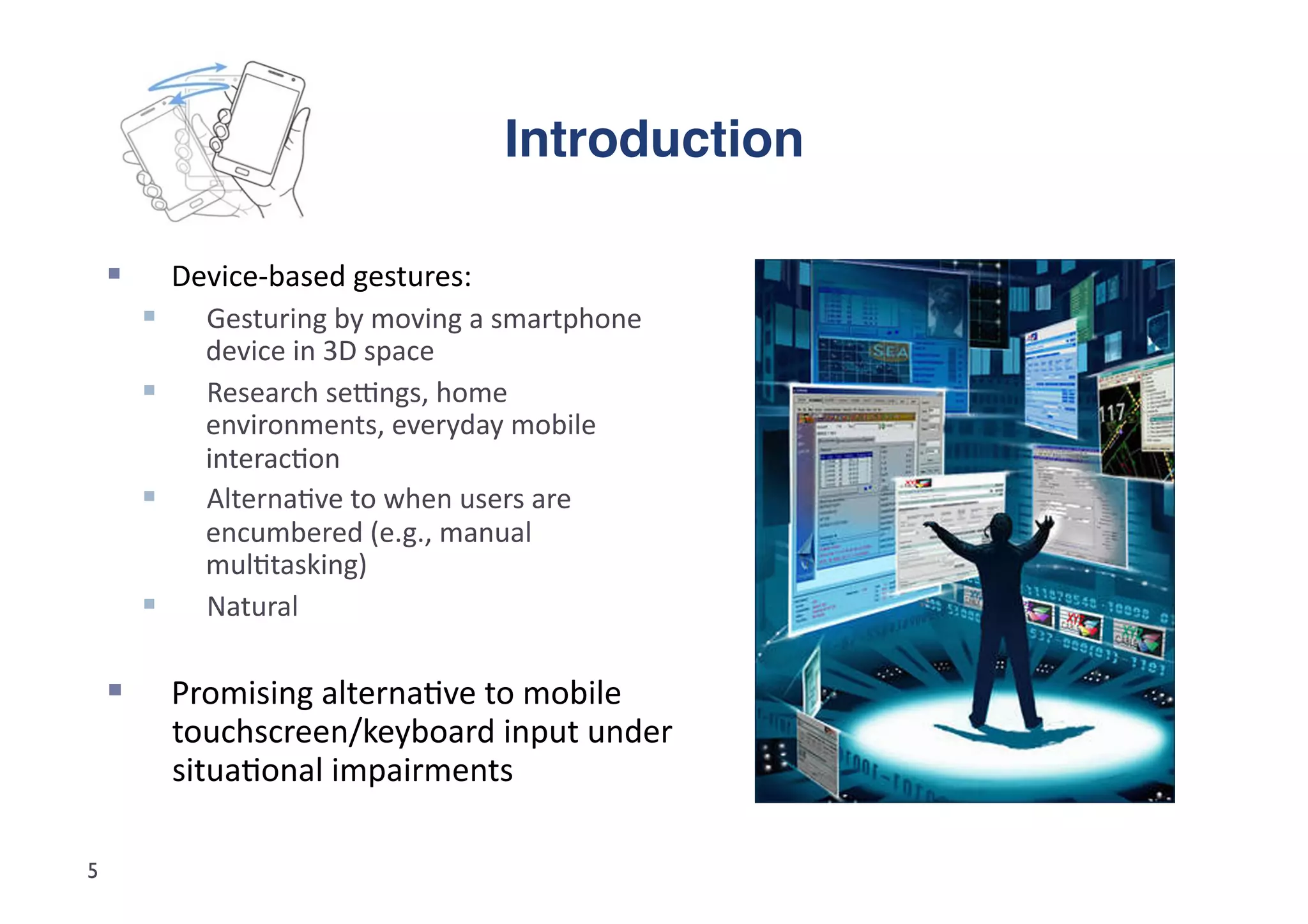

Qualita5ve

Study;

Automated

Wizard-‐of-‐Oz

method

(Fabbrizio

et

al.,

2005)

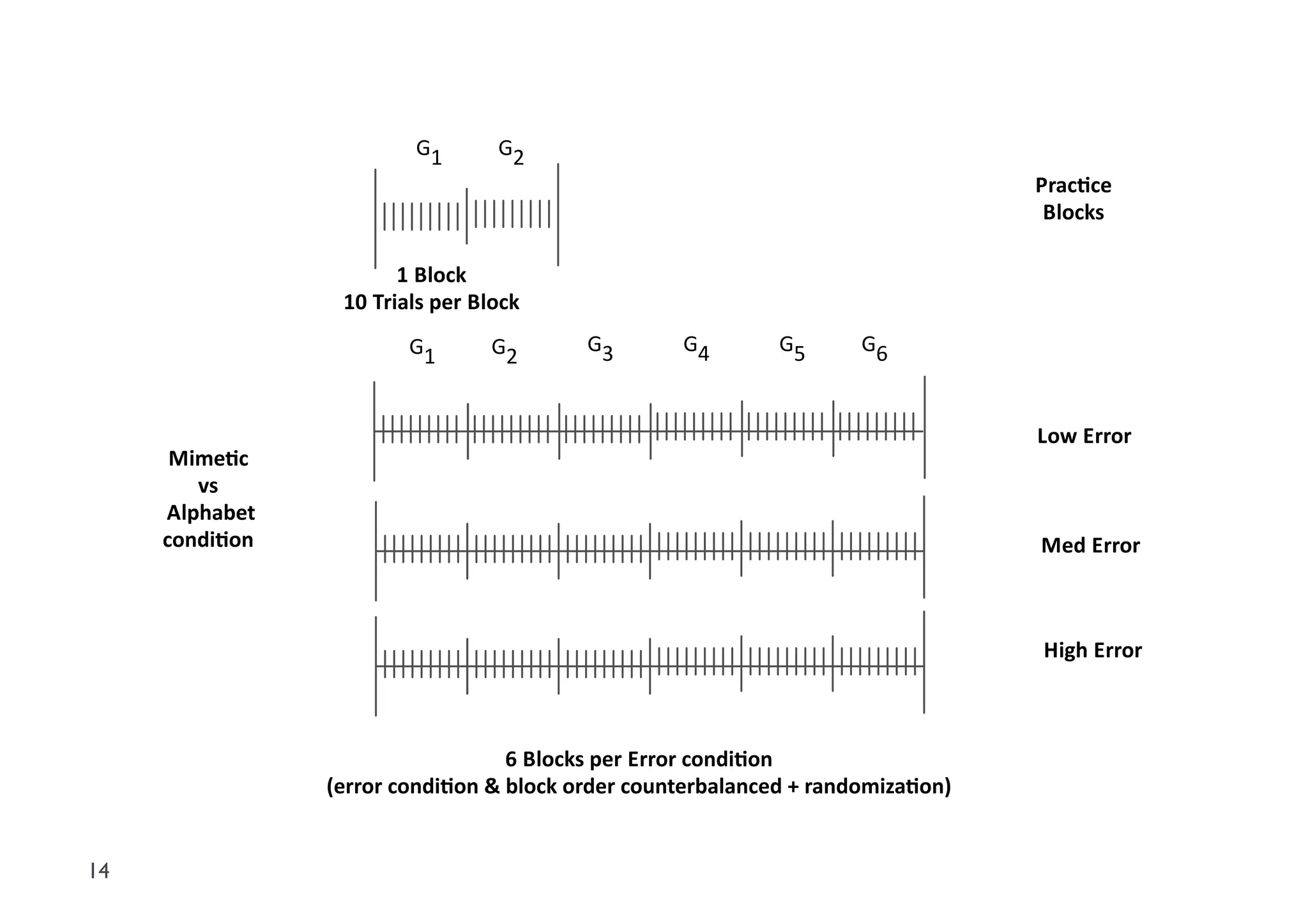

24

subjects

(16

male,

8

female)

aged

between

22-‐41

(M=

29.6,

SD=

4.5)

Mixed

between-‐

and

within

subject

factorial

design:

2

(gesture

type:

mime5c

vs.

alphabet)

x

3

(error

rate:

low

vs.

med

vs.

high)

Experiment

in

Presenta5on®,

Wii

Remote®

interac5on

using

GlovePie™

Random

error

distribu5on

across

trials

(20

prac5ce,

180

test)

Tutorial

&

videos

given

of

how

to

'properly'

perform

each

gesture

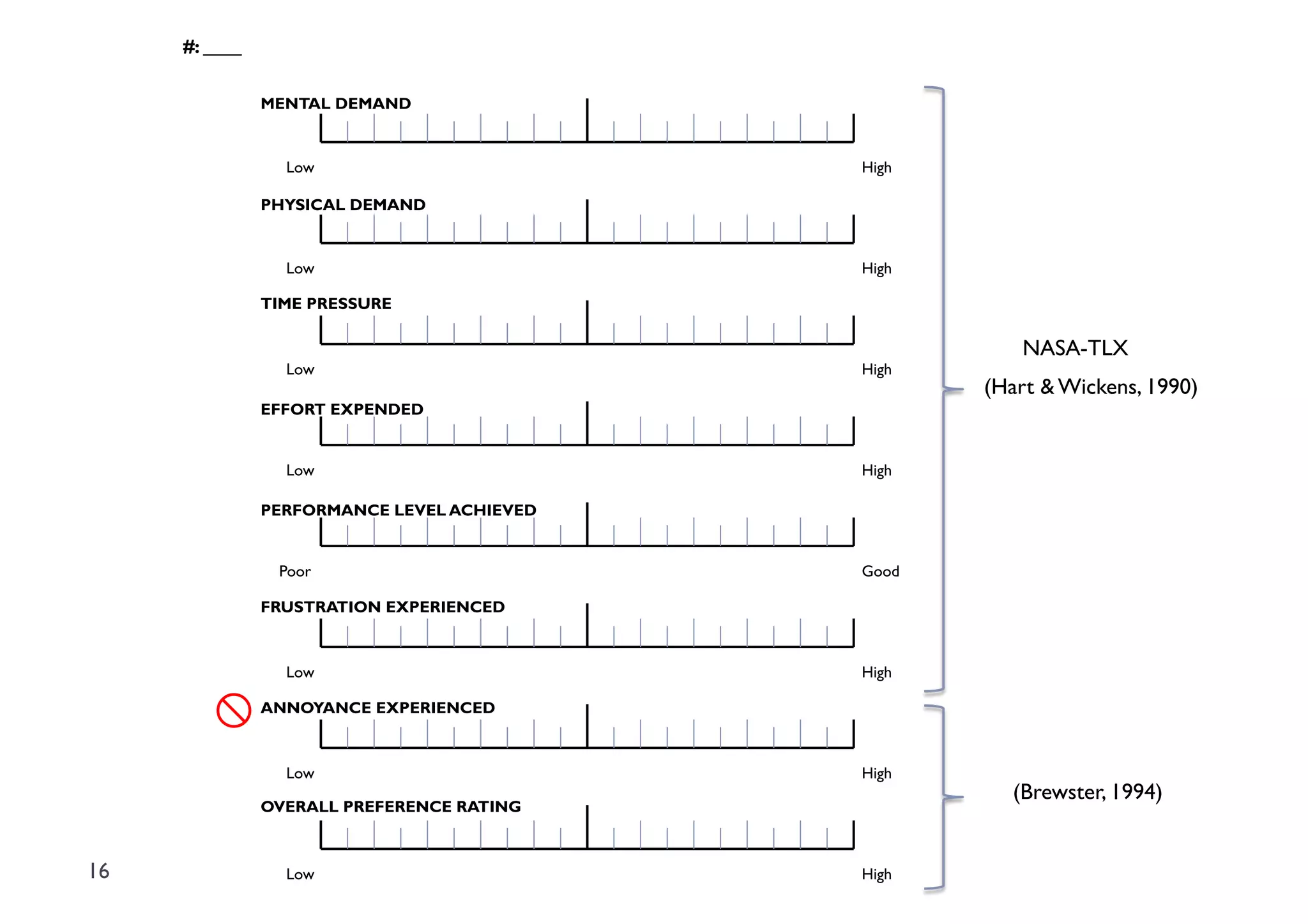

Data

collected:

1. Modified

NASA-‐TLX

workload

[0-‐20

range]

ques5onnaire

data

(Hart

&

Wickens,

1990;

Brewster,

1994)

2. Experiment

logs

3. Video

recordings

of

subjects’

gesture

interac5on

13 4. Post-‐experiment

interviews](https://image.slidesharecdn.com/imci12presentation-121029081013-phpapp02/75/Fishing-or-a-Z-Investigating-the-Effects-of-Error-on-Mimetic-and-Alphabet-Device-based-Gesture-Interaction-13-2048.jpg)
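The slide states only that errors were distributed randomly across trials; it does not say how the schedule was generated. As a minimal sketch of one way an automated Wizard-of-Oz setup could script its "recognition failures", the example below fixes the number of failing trials from a target error rate and shuffles their positions. The function name `make_error_schedule`, the 60-trial block size, and the 30% rate are all hypothetical values chosen for illustration, not figures from the study.

```python
import random


def make_error_schedule(n_trials, error_rate, seed=None):
    """Return a list of booleans, one per trial.

    True means the trial is scripted to be reported as a recognition
    failure, regardless of how the participant actually gestured.
    (Hypothetical helper; not taken from the original experiment.)
    """
    if not 0.0 <= error_rate <= 1.0:
        raise ValueError("error_rate must be between 0 and 1")
    # Fix the exact number of failures so every block hits its target rate.
    n_errors = round(n_trials * error_rate)
    schedule = [True] * n_errors + [False] * (n_trials - n_errors)
    # Shuffle with a dedicated generator so a seed makes the order reproducible.
    rng = random.Random(seed)
    rng.shuffle(schedule)
    return schedule


# Illustrative values only: 60 trials at a 30% scripted error rate.
schedule = make_error_schedule(n_trials=60, error_rate=0.3, seed=1)
print(sum(schedule), "scripted errors out of", len(schedule))
# prints "18 scripted errors out of 60"
```

Fixing the failure count and shuffling, rather than drawing each trial independently, keeps the realised error rate of a block equal to its nominal rate, which matters when blocks are compared by error condition.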

![Setup & Procedure](https://image.slidesharecdn.com/imci12presentation-121029081013-phpapp02/75/Fishing-or-a-Z-Investigating-the-Effects-of-Error-on-Mimetic-and-Alphabet-Device-based-Gesture-Interaction-15-2048.jpg)

Figure: timeline of the experiment session (≈1 hr). Stage labels: Personal Information Form; Instructions & Tutorial; Practice Block; Automated Wizard-of-Oz Test [...] Block; NASA-TLX Questionnaire; x3; Exit Interview; Reward. Example on-screen prompt: "Perform Fishing Gesture".

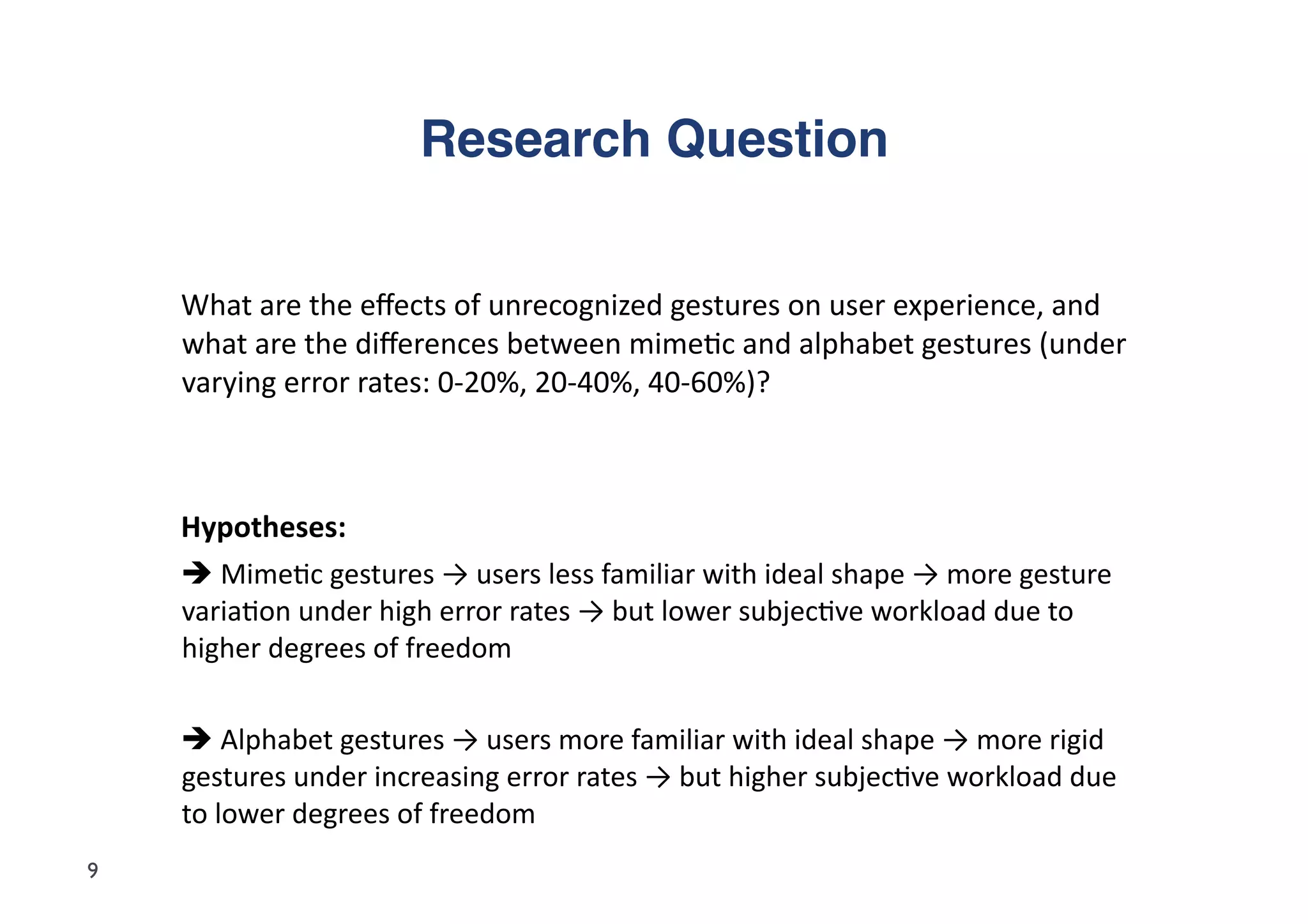

![User Feedback](https://image.slidesharecdn.com/imci12presentation-121029081013-phpapp02/75/Fishing-or-a-Z-Investigating-the-Effects-of-Error-on-Mimetic-and-Alphabet-Device-based-Gesture-Interaction-22-2048.jpg)

Perceived Canonical Variations

S9: “The shaking, that was the hardest one because you couldn’t just shake freely [gestures in hand], it had to be more precise shaking [swing to the left, swing to the right] so not just any sort of shaking [shakes hand in many dimensions]”

Cultural and Individual differences

S10: “For the glass filling, there are many ways to do it. Sometimes very fast, sometimes slow like beer.”

Perceived Performance

Ad hoc explanations (e.g., fatigue) given why there were more errors in some blocks

S18: “[Performance] between the first and second blocks [baseline and low error rate conditions], it was the same... 10-15%.”

Social Acceptability

Alphabet gestures less socially acceptable when they fail

S16: “When it doesn’t take your C, you keep doing it, and it looks ridiculous.”