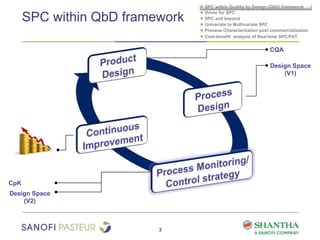

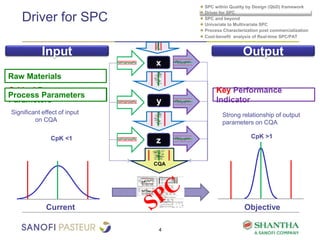

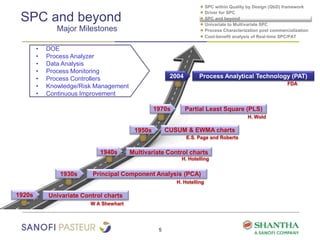

1) The document discusses strategies for statistical process control (SPC) within a quality by design (QbD) framework for efficient manufacturing.

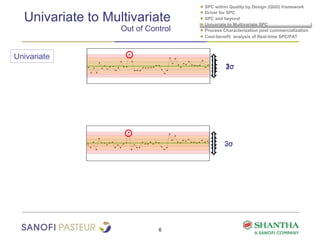

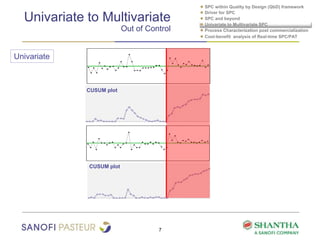

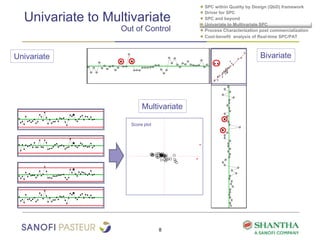

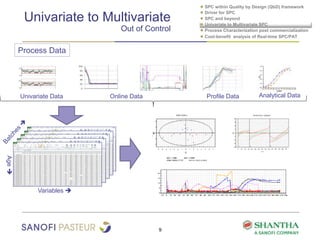

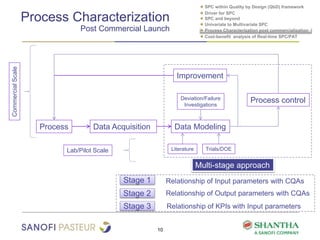

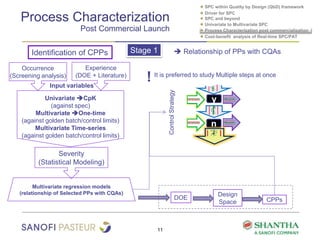

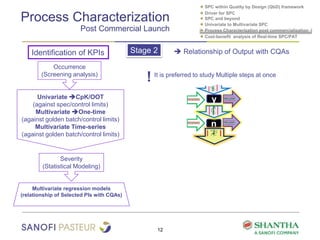

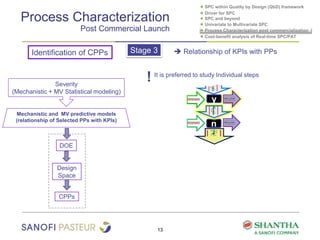

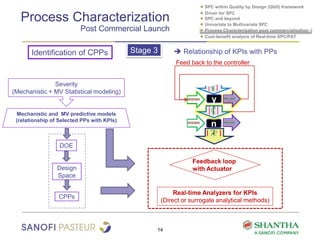

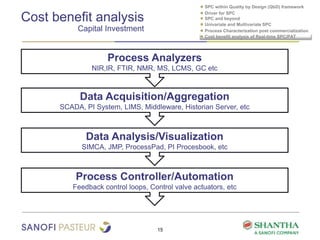

2) It covers univariate and multivariate SPC, a multi-stage approach to process characterization after commercialization, and a cost-benefit analysis of real-time SPC and process analytical technology (PAT).

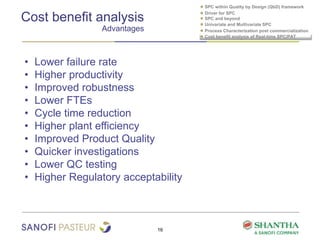

3) The benefits of real-time SPC/PAT include lower failure rates, higher productivity, improved robustness, lower costs, quicker investigations, and higher regulatory acceptability.
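The univariate and multivariate SPC mentioned in point 2 can be sketched as follows. The document does not say which charts or statistics it uses, so this is only an illustration under stated assumptions. The univariate part assumes Shewhart-style limits of center ± 3 sample standard deviations, estimated from an in-control reference data set. The multivariate part assumes Hotelling's T² statistic, computed from the reference mean vector and sample covariance matrix. The function names (`shewhart_limits`, `out_of_control`, `hotelling_t2`) are hypothetical, and the F-distribution-based T² control limit is deliberately left out so the example needs only the standard library.

```python
from statistics import mean, stdev


def shewhart_limits(reference, k=3.0):
    """Assumed univariate chart: (LCL, center, UCL) = center -/+ k * sample SD."""
    center = mean(reference)
    sigma = stdev(reference)
    return center - k * sigma, center, center + k * sigma


def out_of_control(values, limits):
    """Return indices of values falling outside the (LCL, center, UCL) limits."""
    lcl, _, ucl = limits
    return [i for i, v in enumerate(values) if v < lcl or v > ucl]


def _solve(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("covariance matrix is singular")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def hotelling_t2(reference, observation):
    """Assumed multivariate statistic: T^2 = (x - xbar)^T S^-1 (x - xbar).

    xbar and S (sample covariance, divisor n - 1) come from the reference rows.
    """
    n, p = len(reference), len(reference[0])
    xbar = [mean(row[j] for row in reference) for j in range(p)]
    cov = [
        [sum((row[i] - xbar[i]) * (row[j] - xbar[j]) for row in reference) / (n - 1)
         for j in range(p)]
        for i in range(p)
    ]
    d = [observation[j] - xbar[j] for j in range(p)]
    y = _solve(cov, d)  # y = S^-1 d, without forming the inverse explicitly
    return sum(di * yi for di, yi in zip(d, y))


# Usage with made-up illustrative numbers (not data from the document):
limits = shewhart_limits([10.0, 10.2, 9.8, 10.1, 9.9])
print(out_of_control([10.0, 10.6, 9.4], limits))
```

The T² statistic uses the covariance between variables. As a result, an observation can sit inside each variable's own univariate limits and still give a large T² when it breaks the correlation seen in the reference data. That is the usual reason for adding multivariate SPC to per-variable charts.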