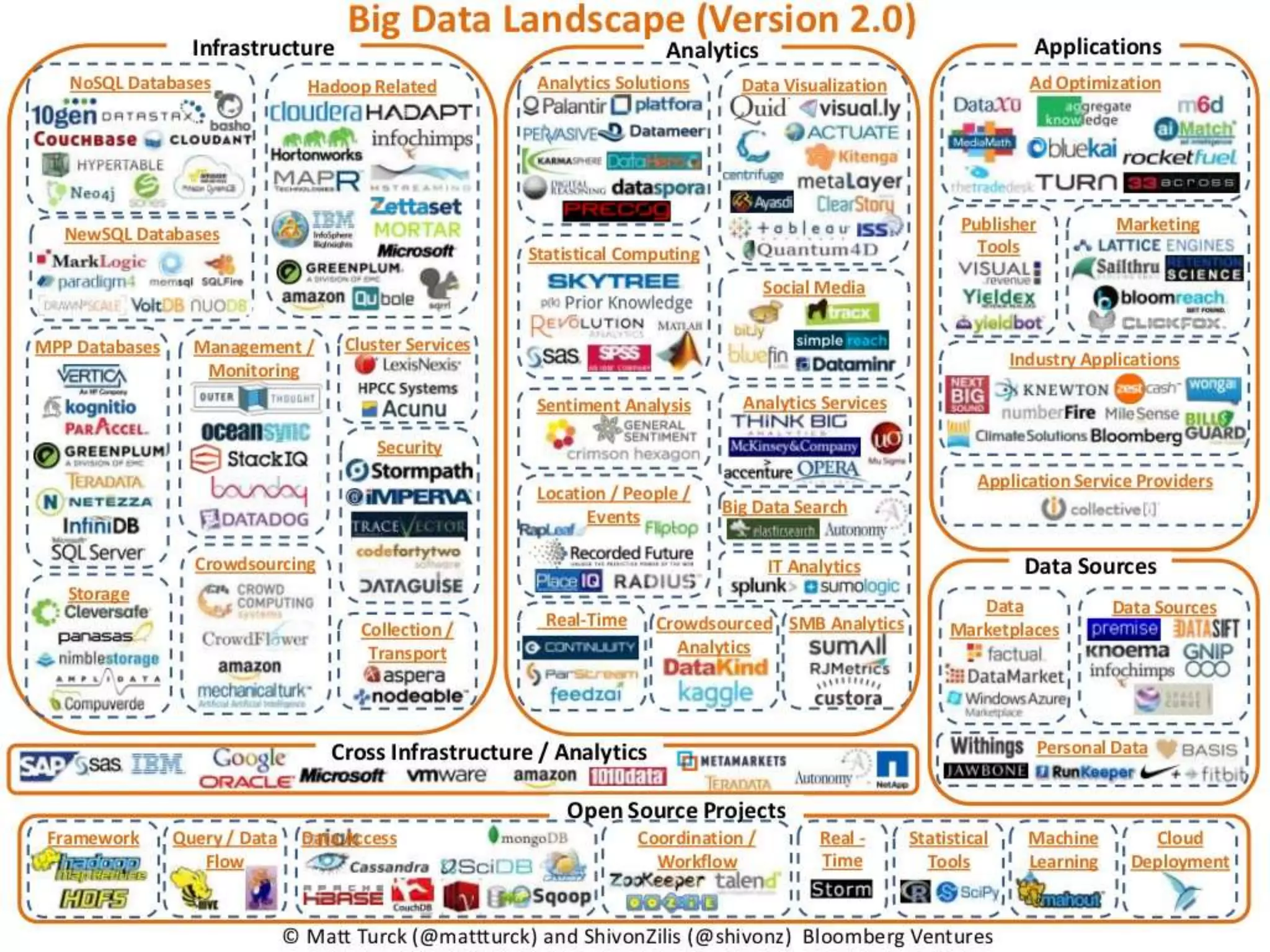

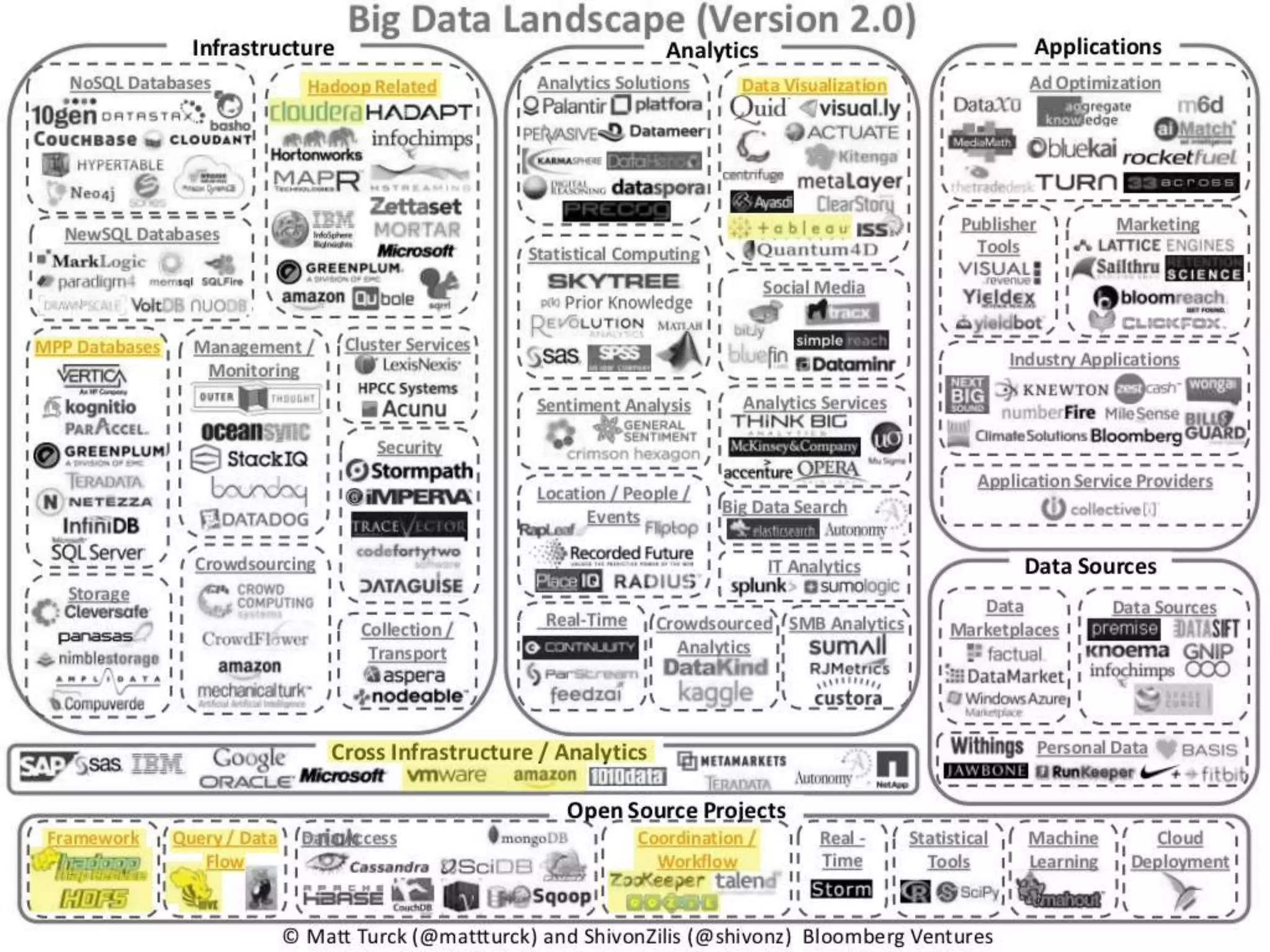

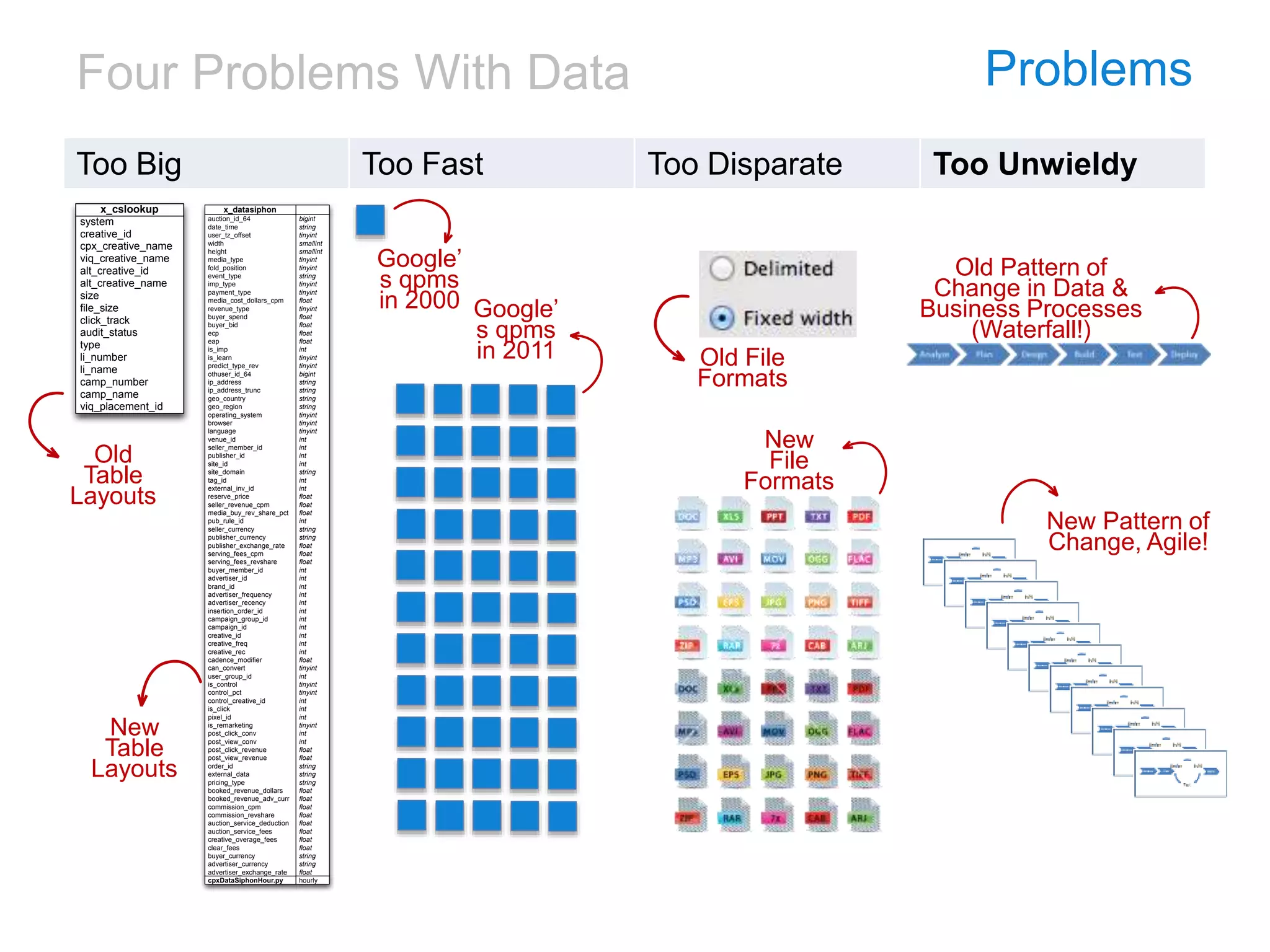

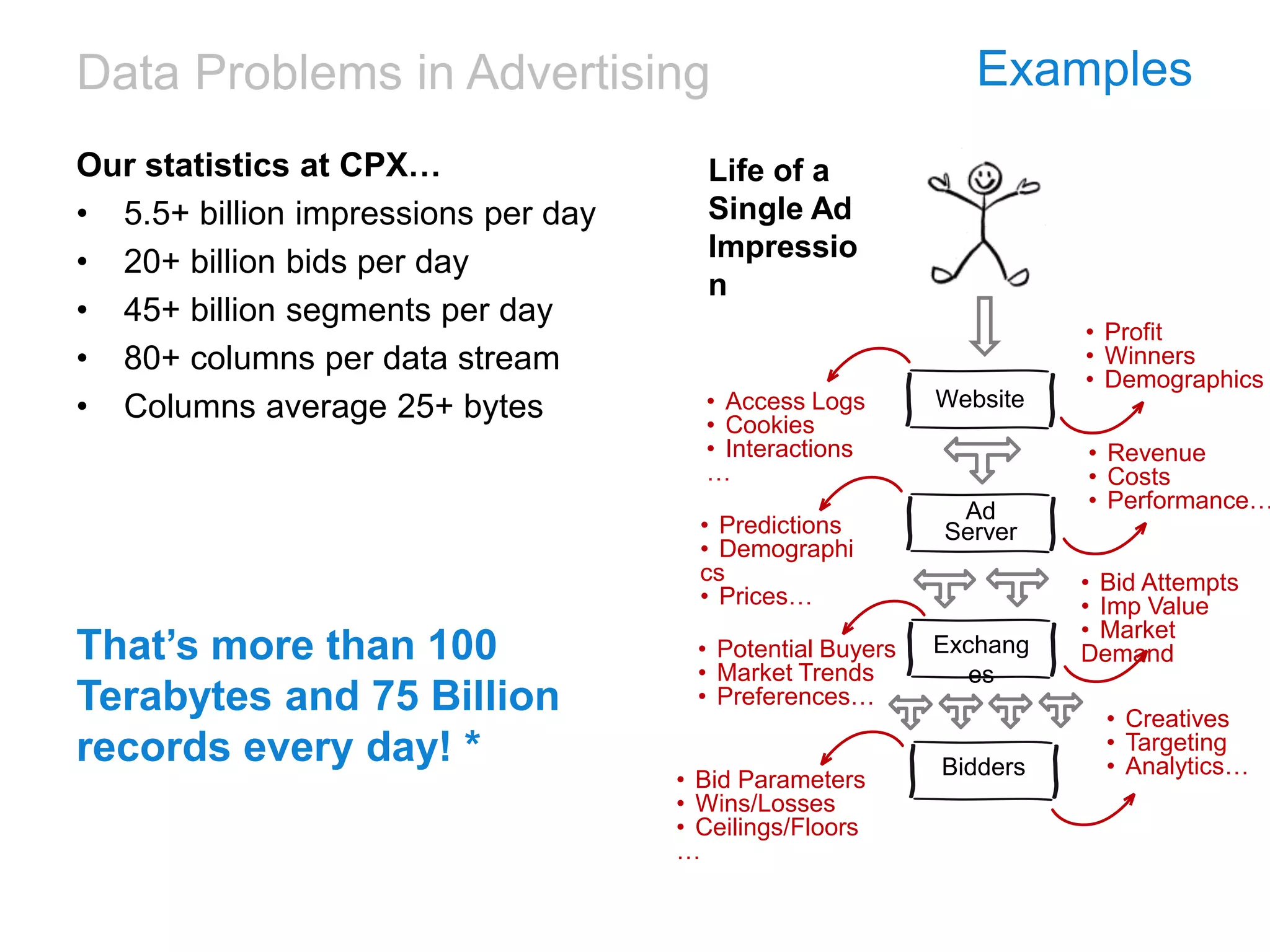

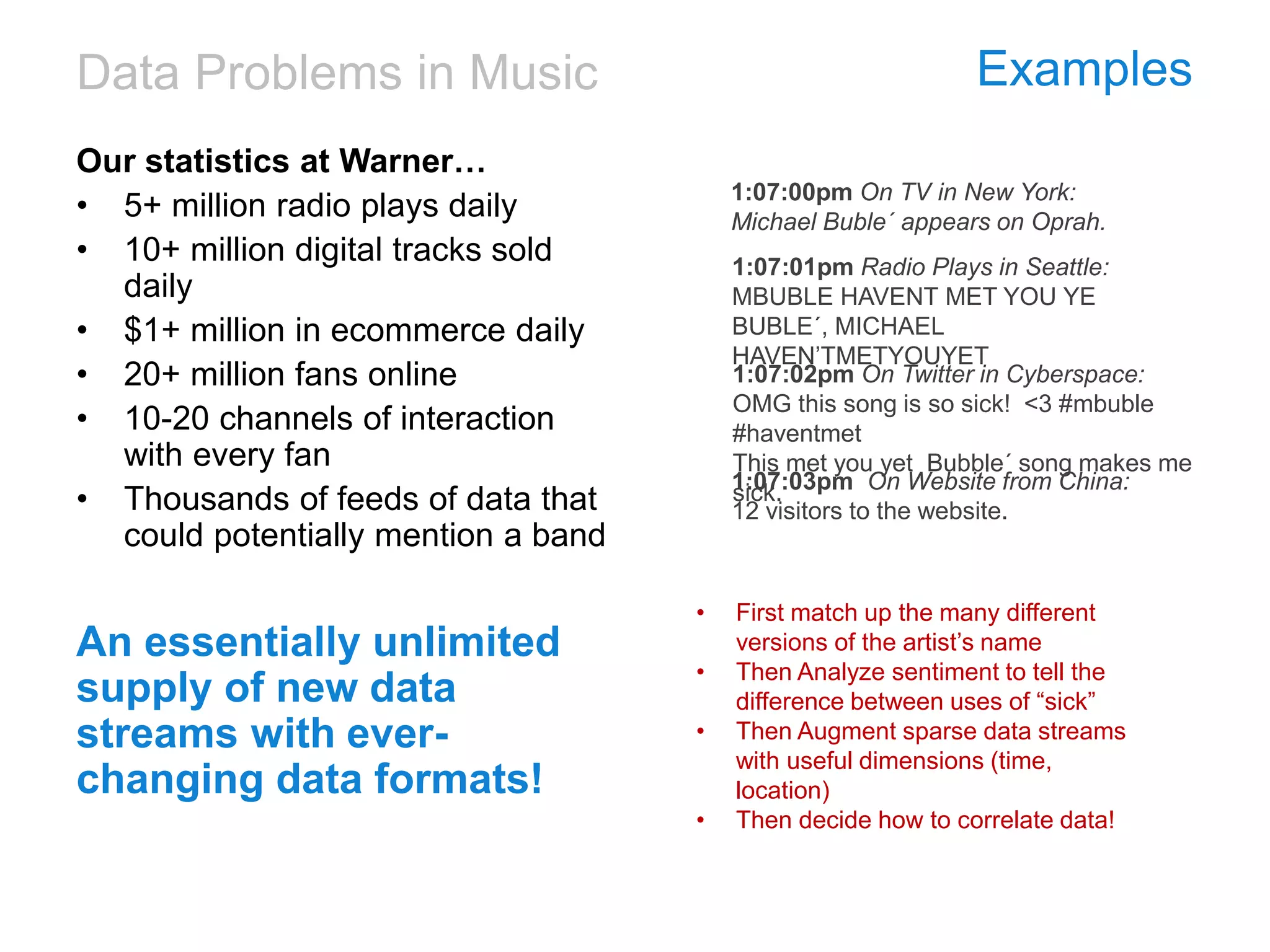

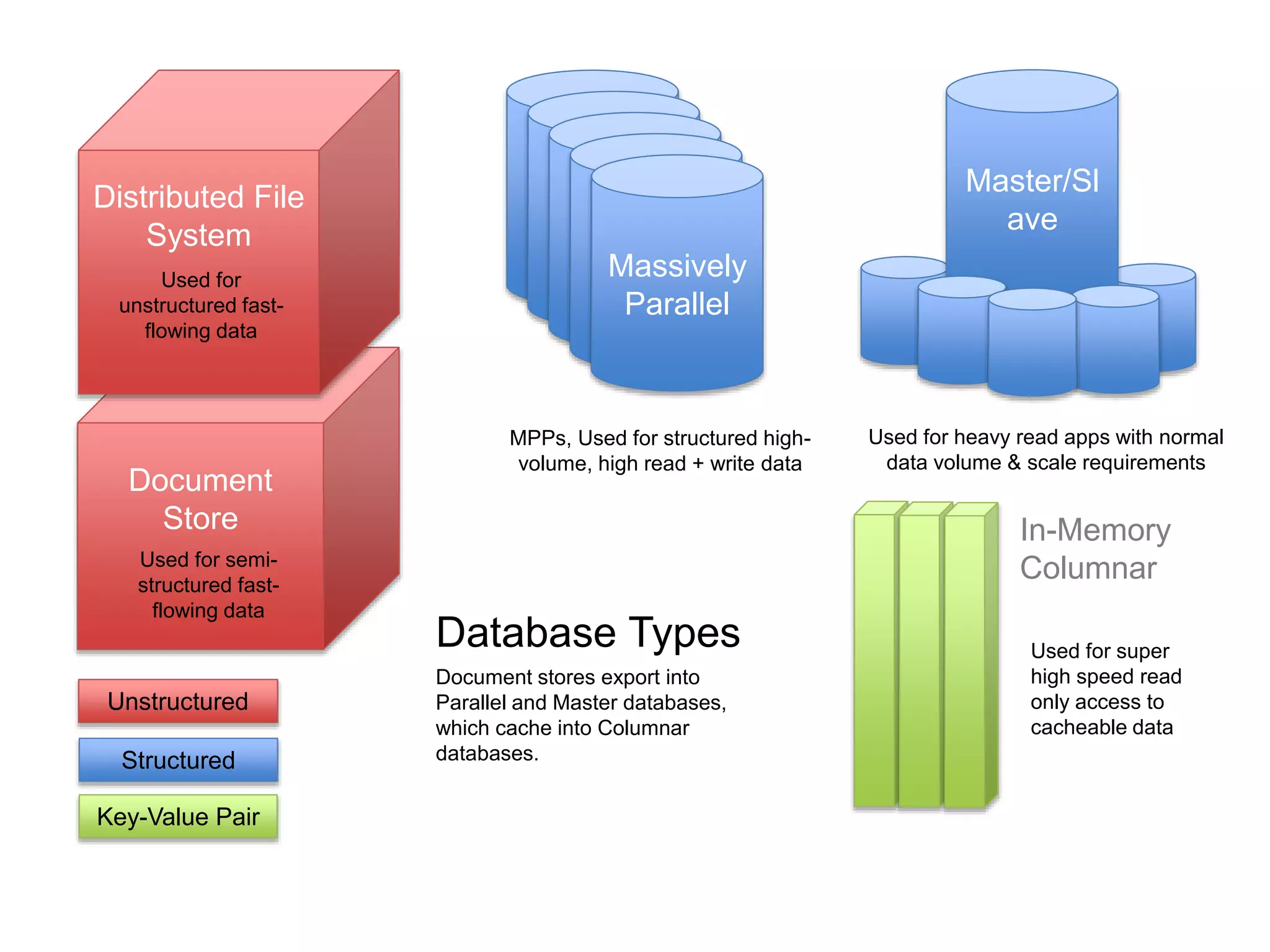

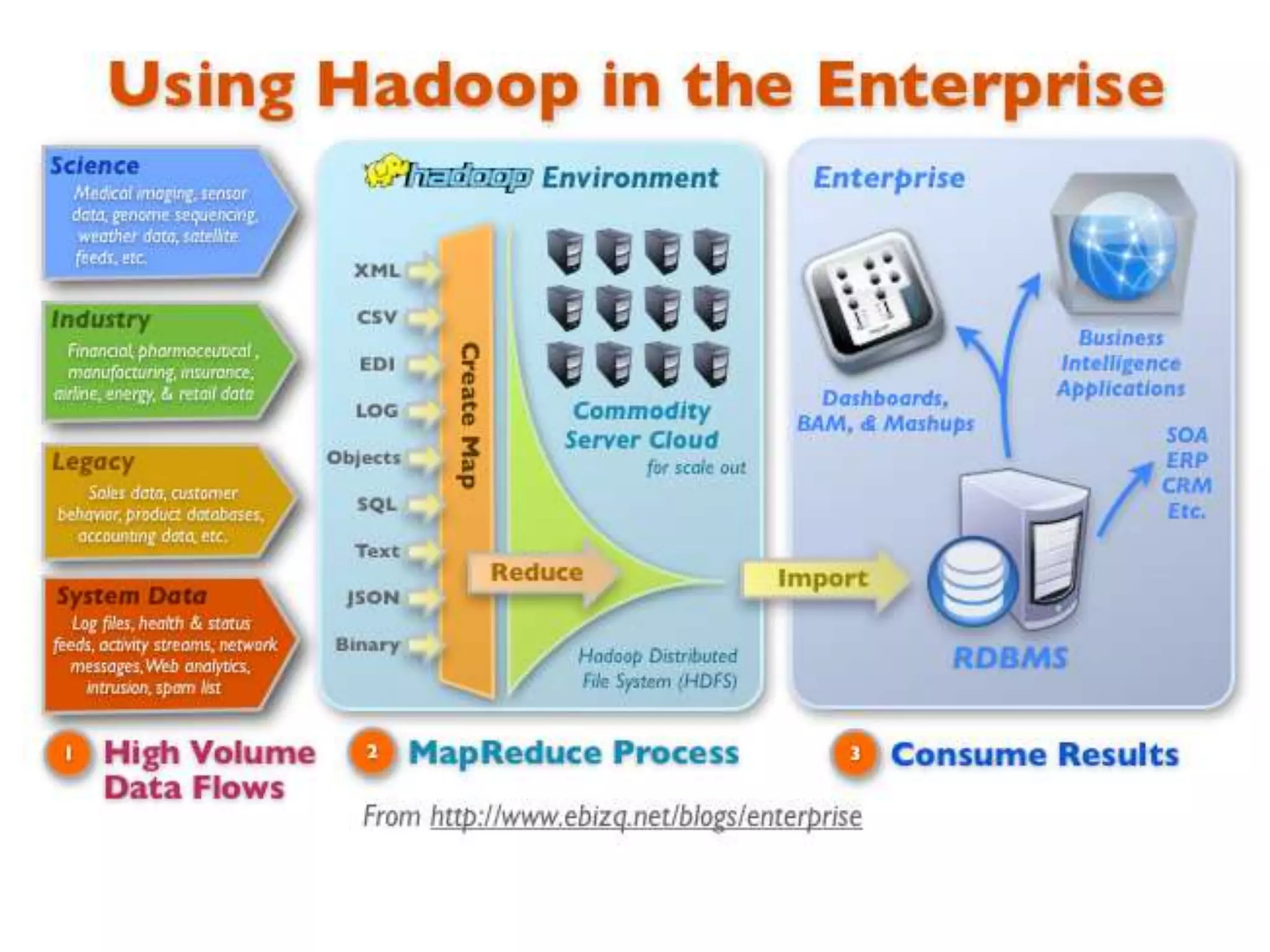

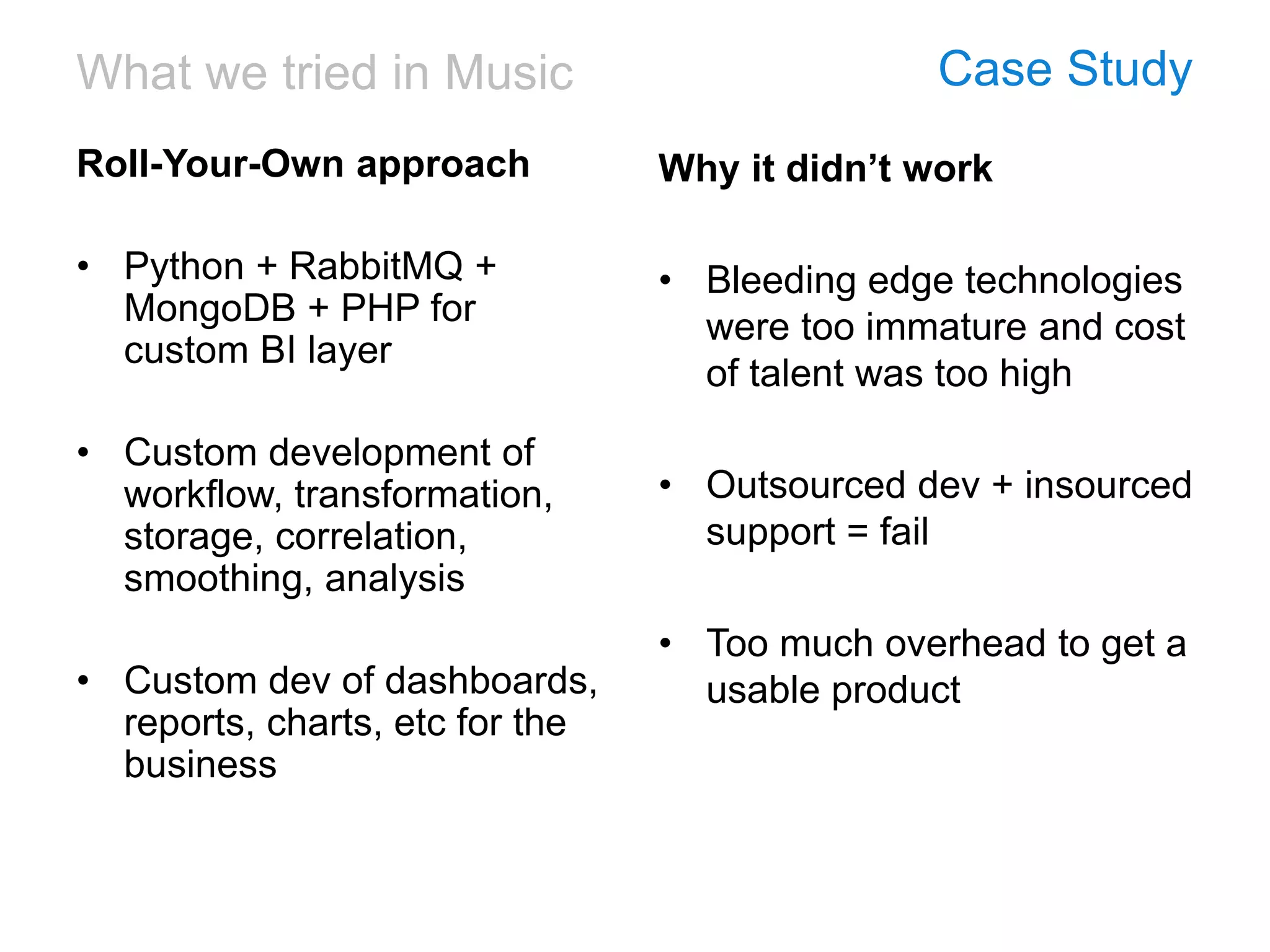

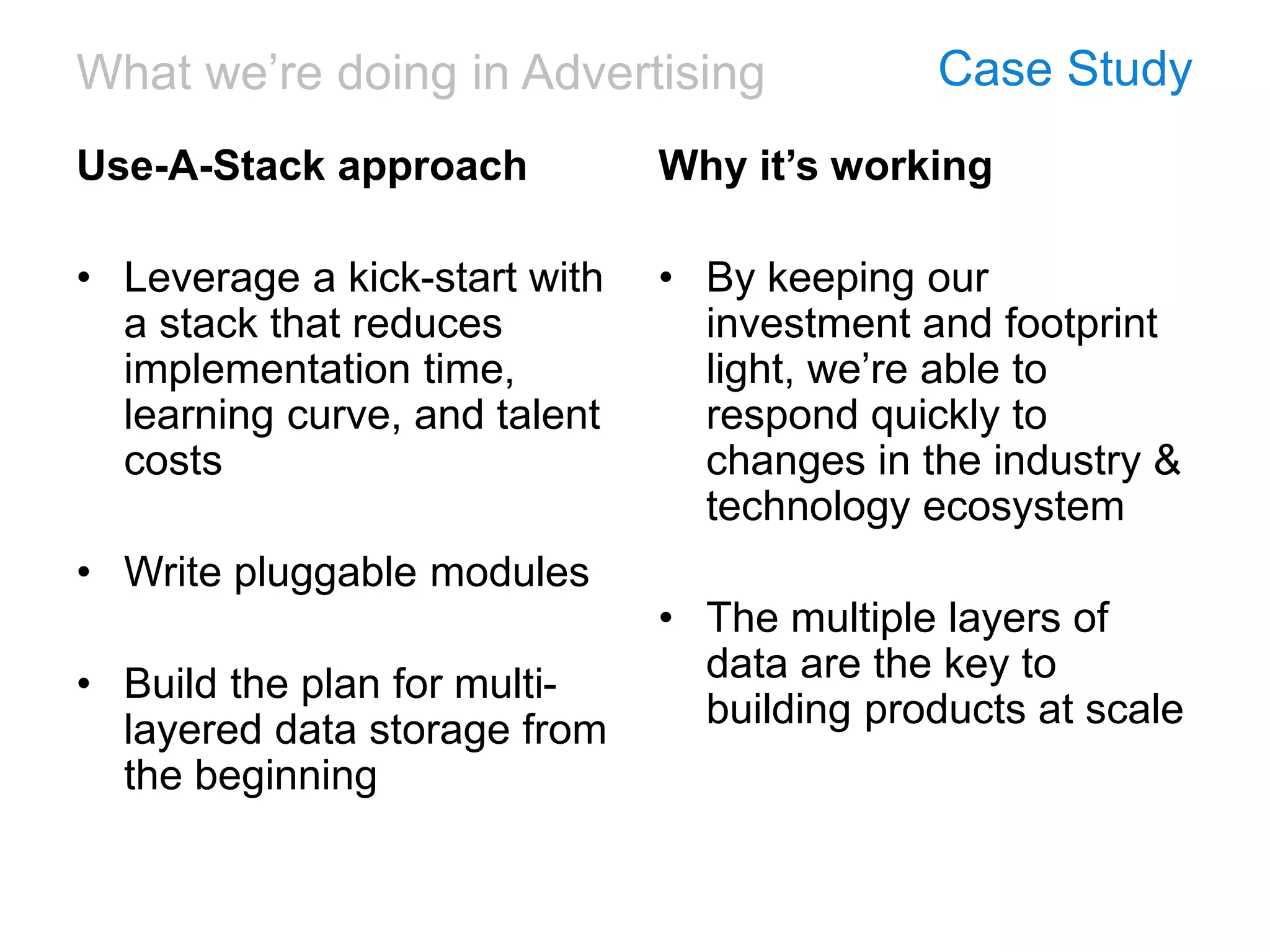

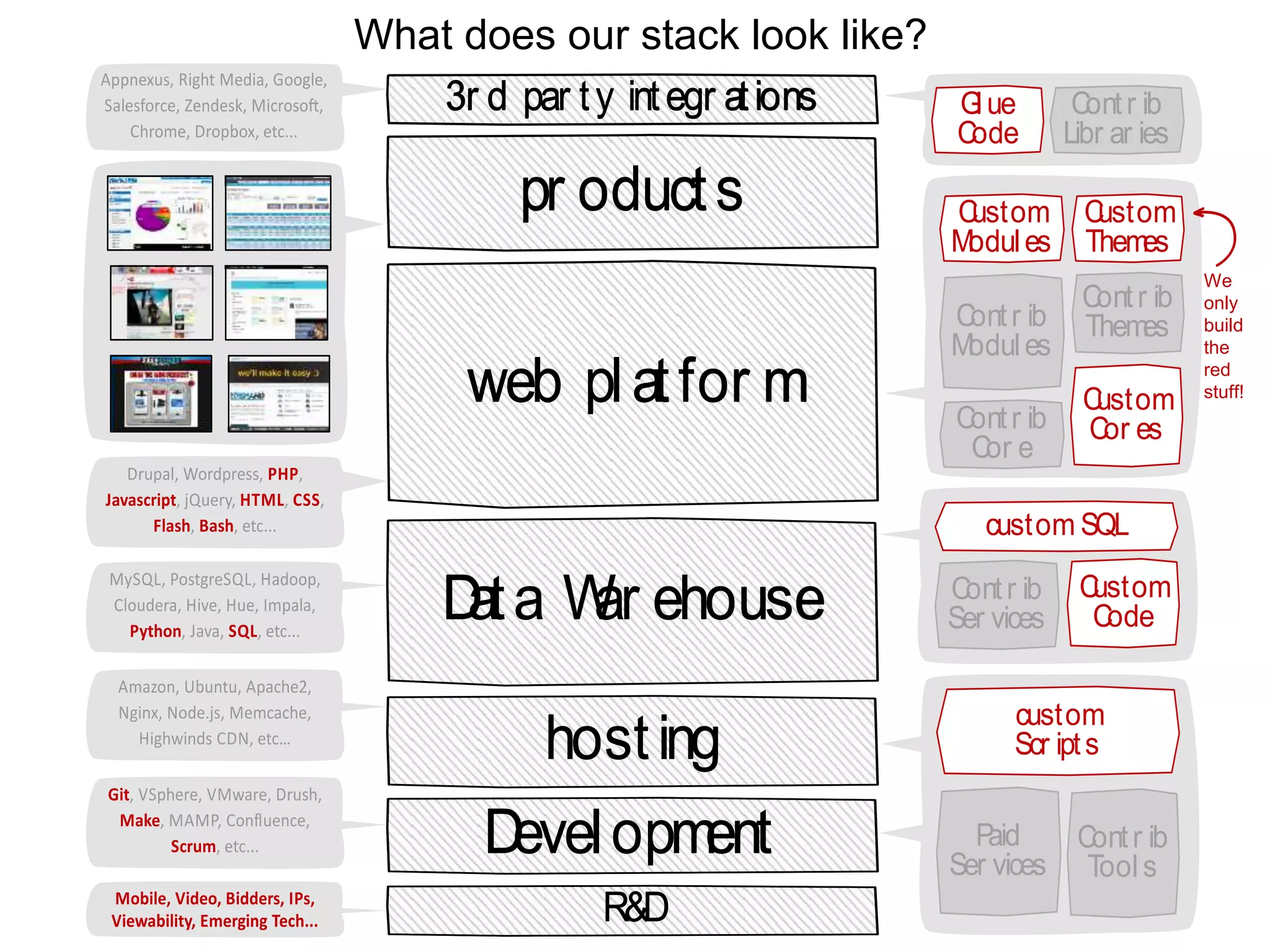

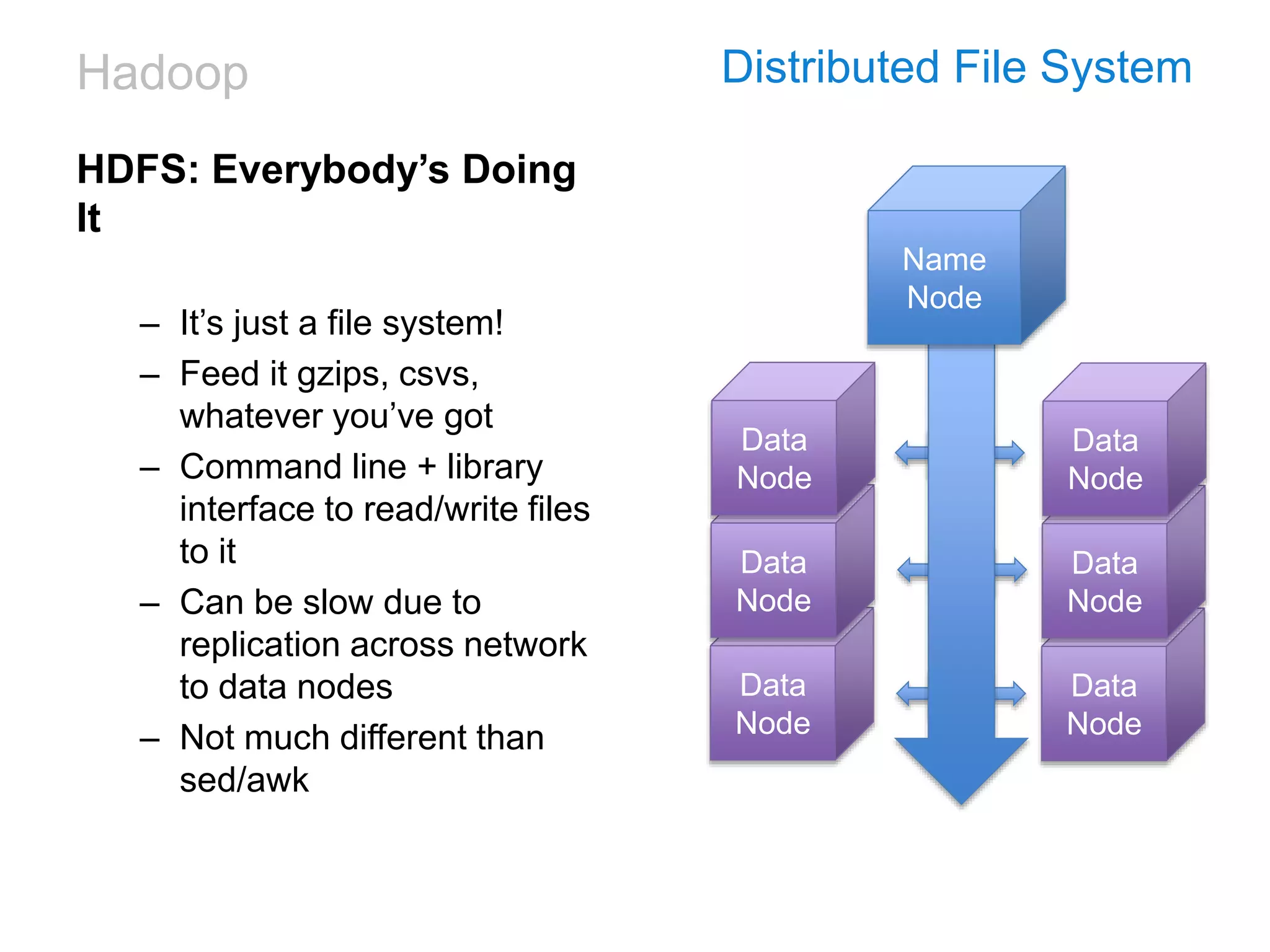

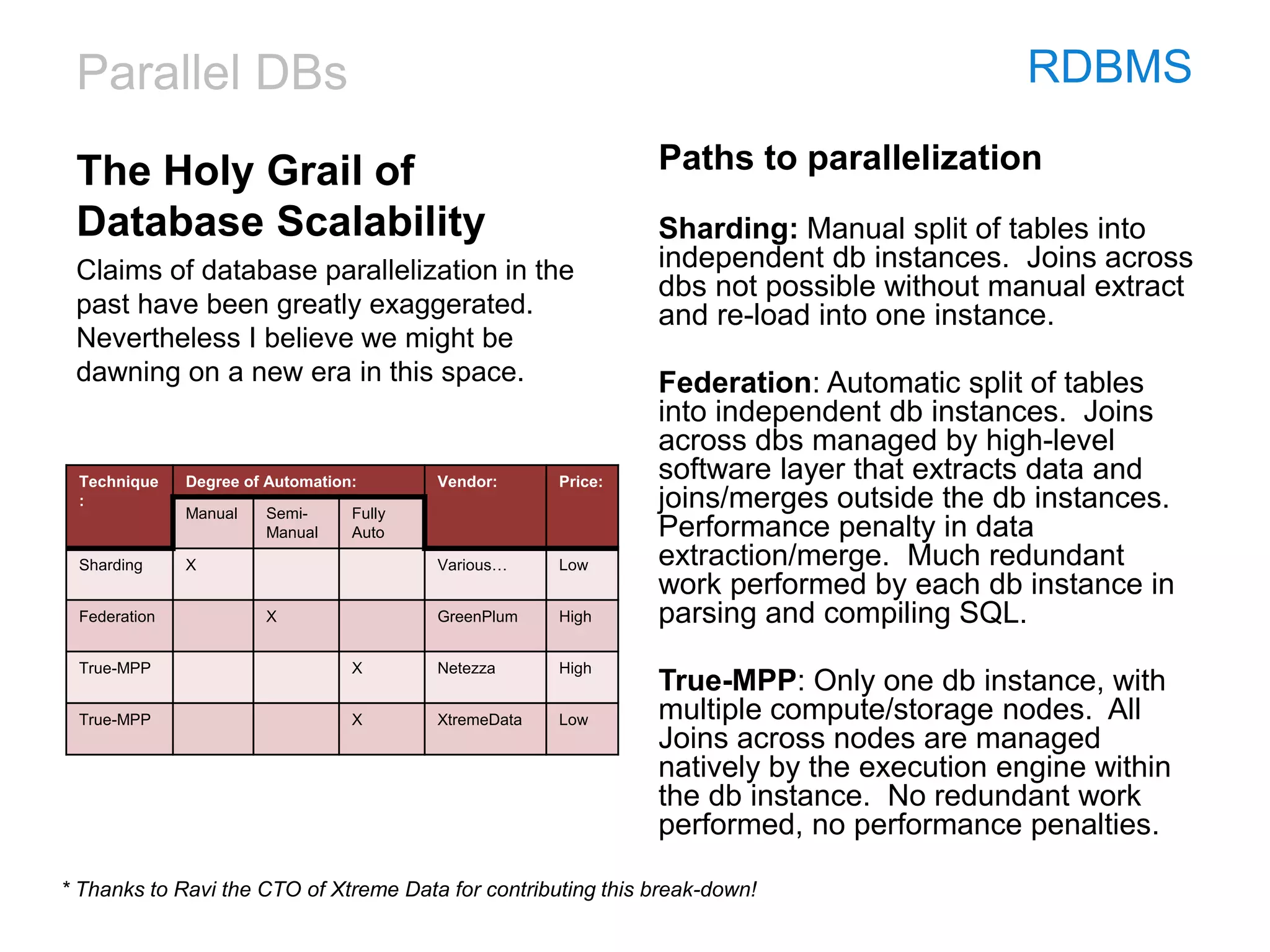

This document discusses the challenges and technologies associated with big data in business, particularly in the advertising and music industries. It emphasizes issues such as data volume, speed, and variety, as well as the need for agile, efficient solutions. The author shares personal experiences and insights on data management tools and strategies, highlighting the importance of aligning technology with user needs and business objectives.