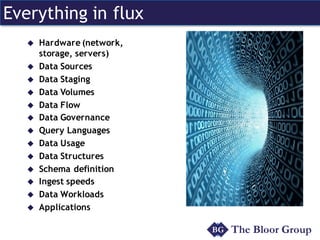

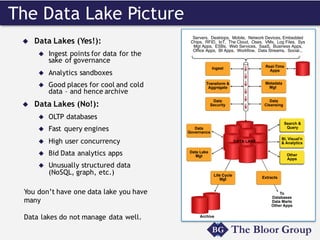

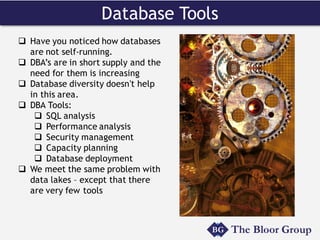

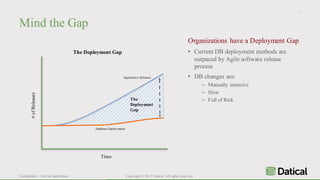

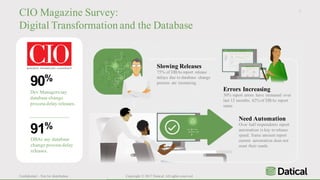

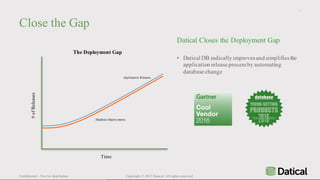

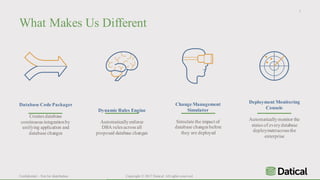

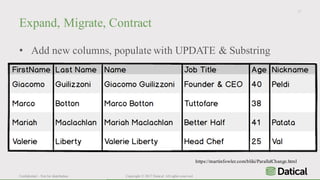

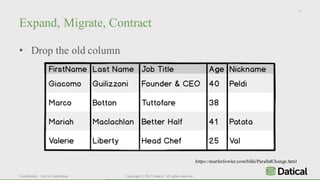

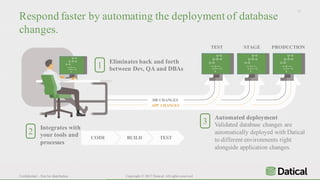

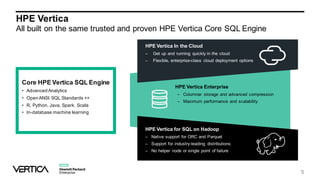

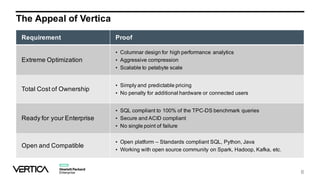

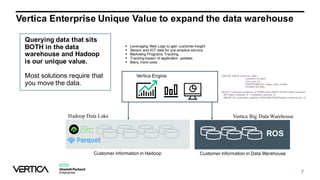

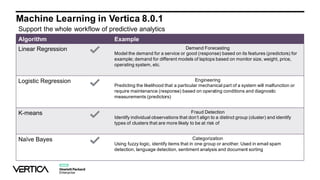

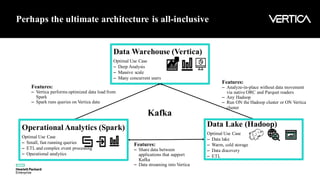

The document compares data lakes with traditional databases, emphasizing the complexity of data management and the need to automate database deployments in response to increasing release delays and errors. It presents Datical's solutions for automating database changes to close the deployment gap and improve application release processes. It also highlights HPE Vertica's capabilities in data analytics, scalability, and integration with various data sources for optimized performance.