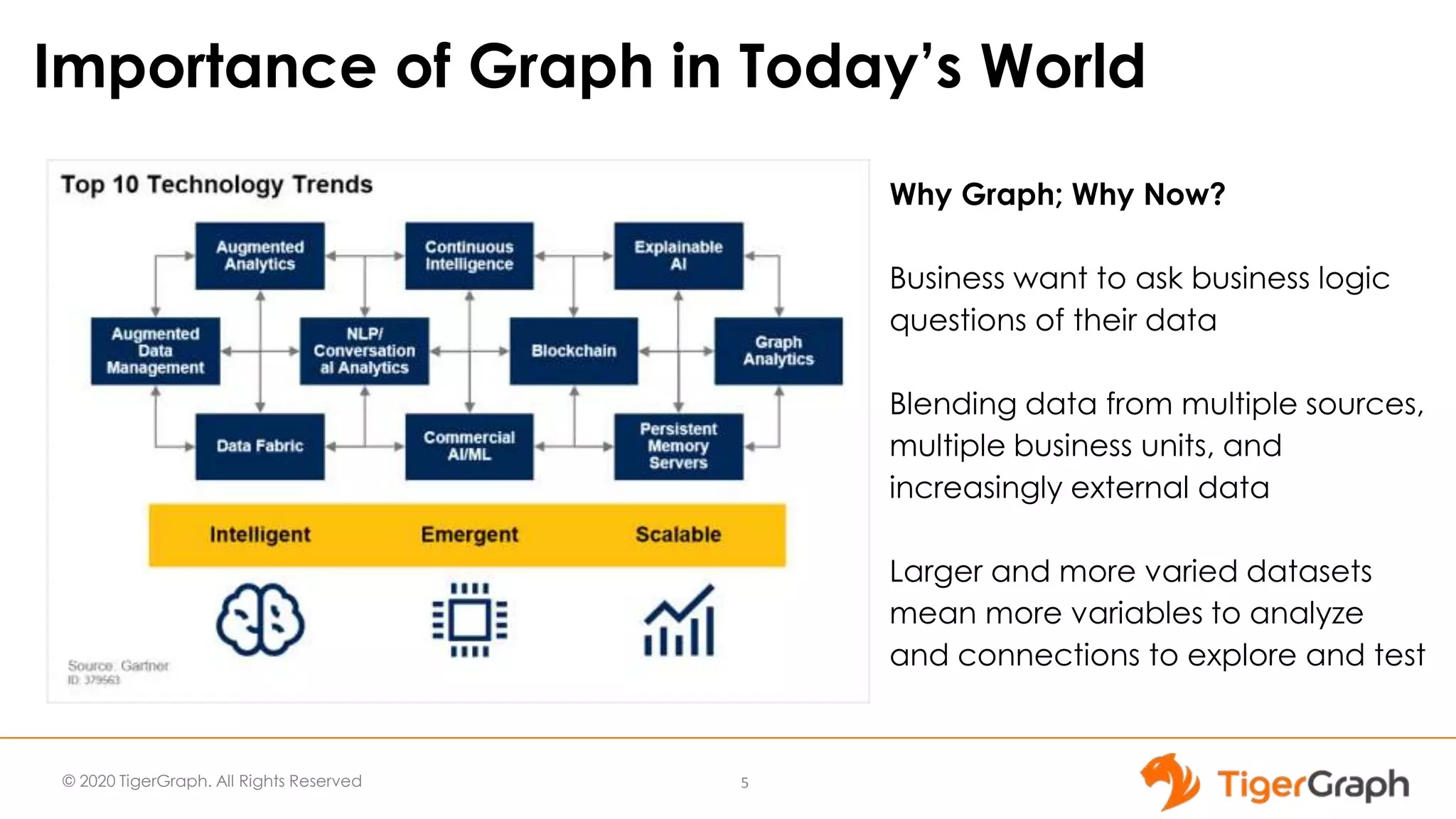

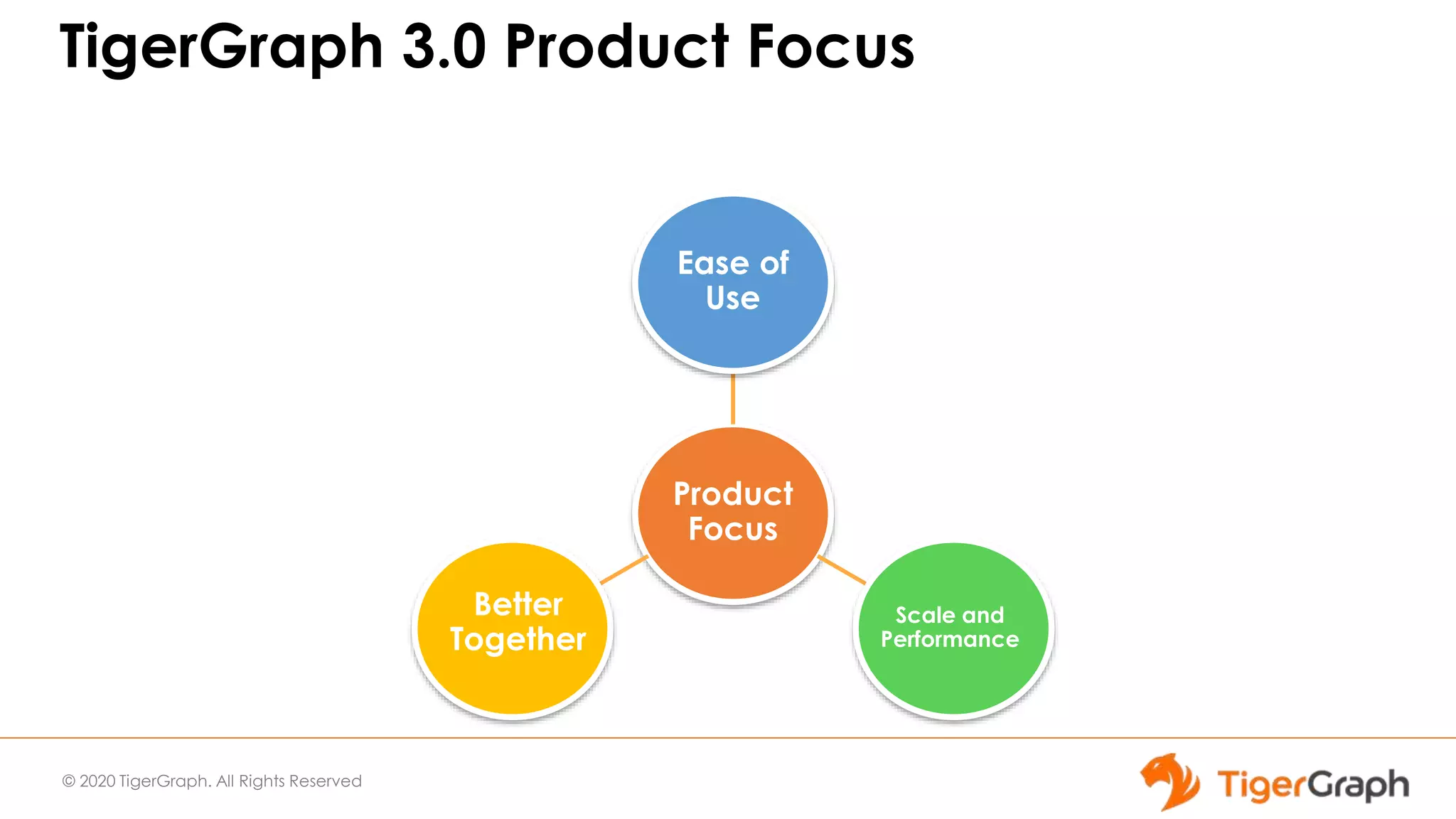

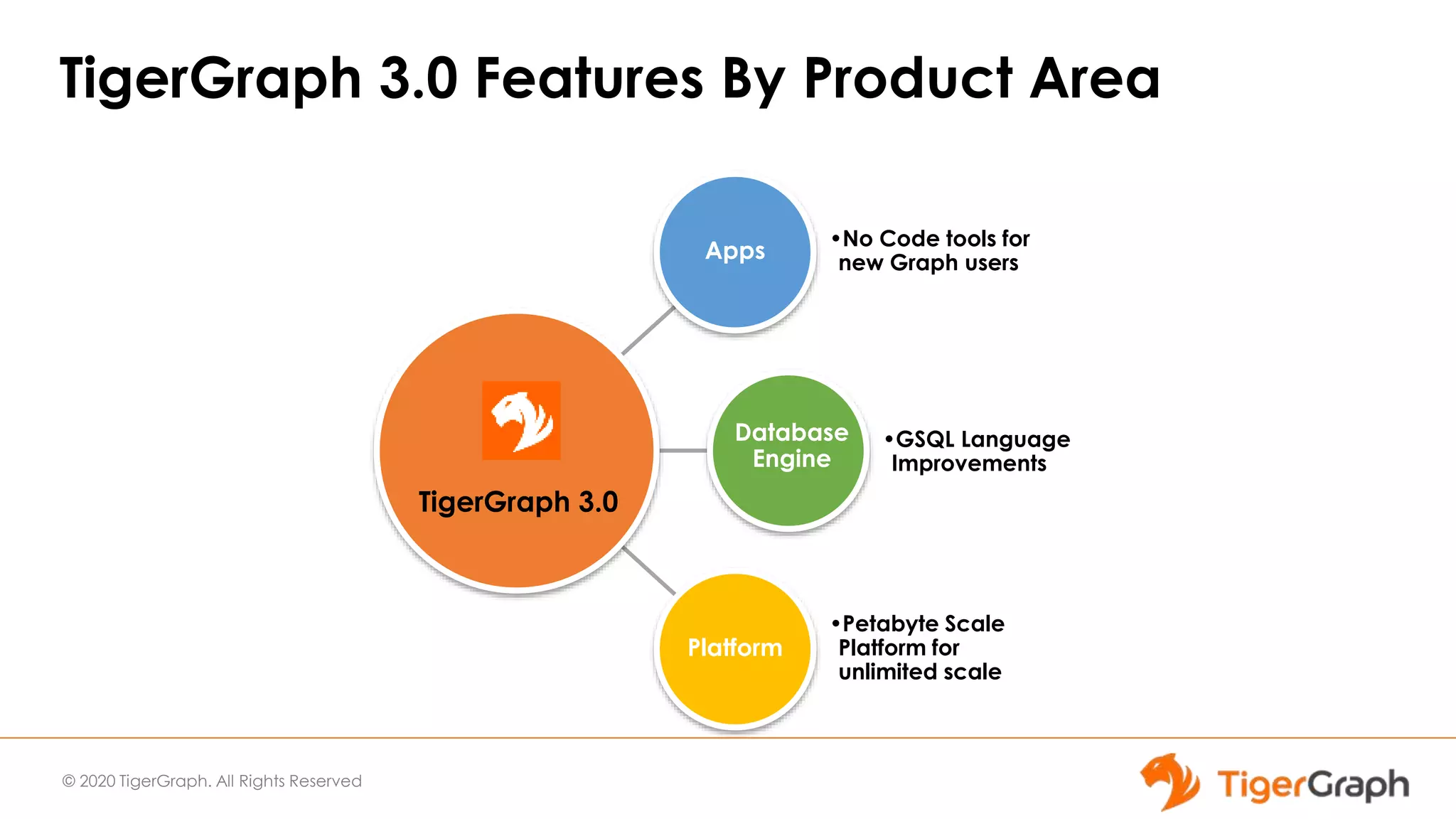

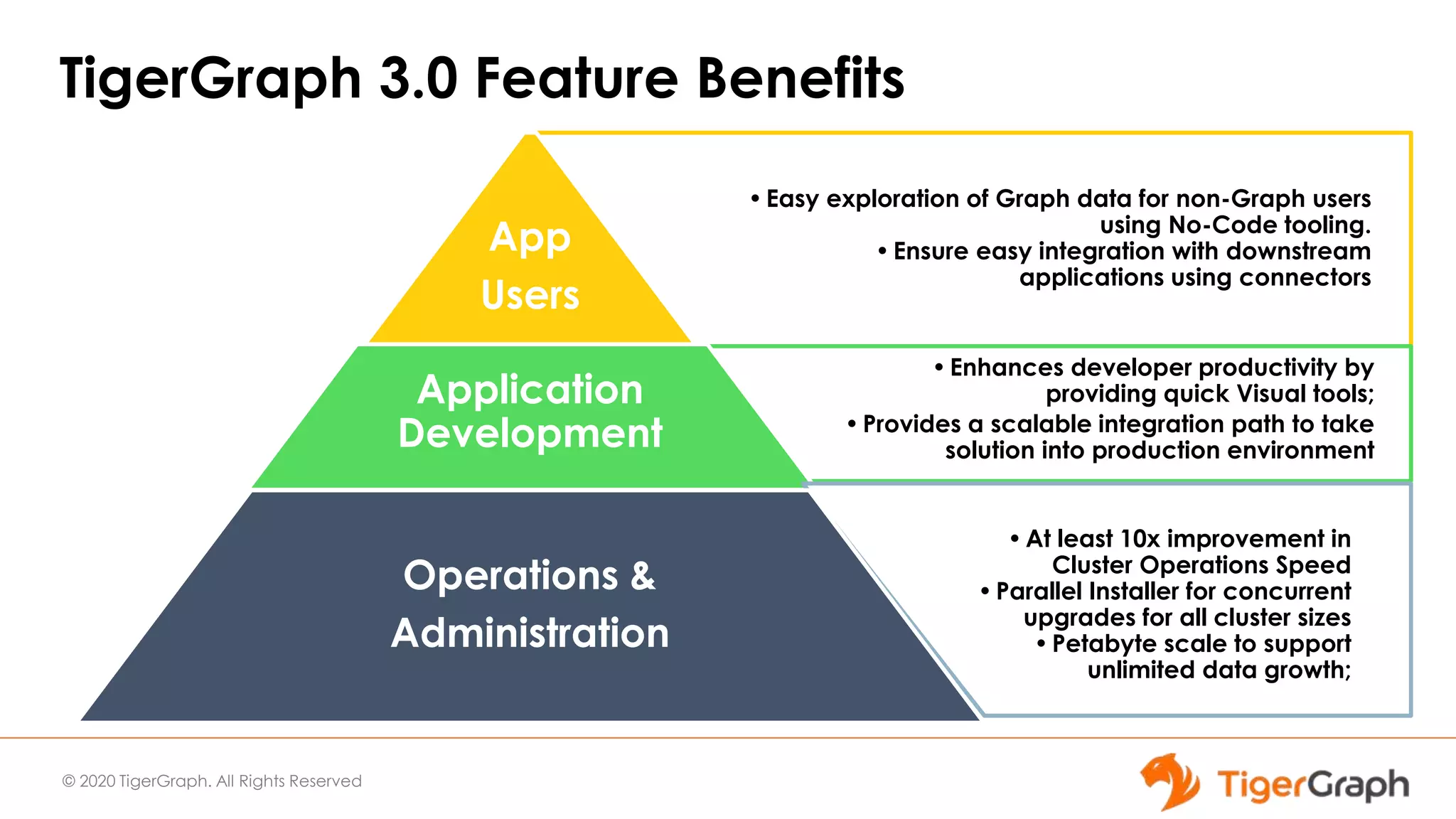

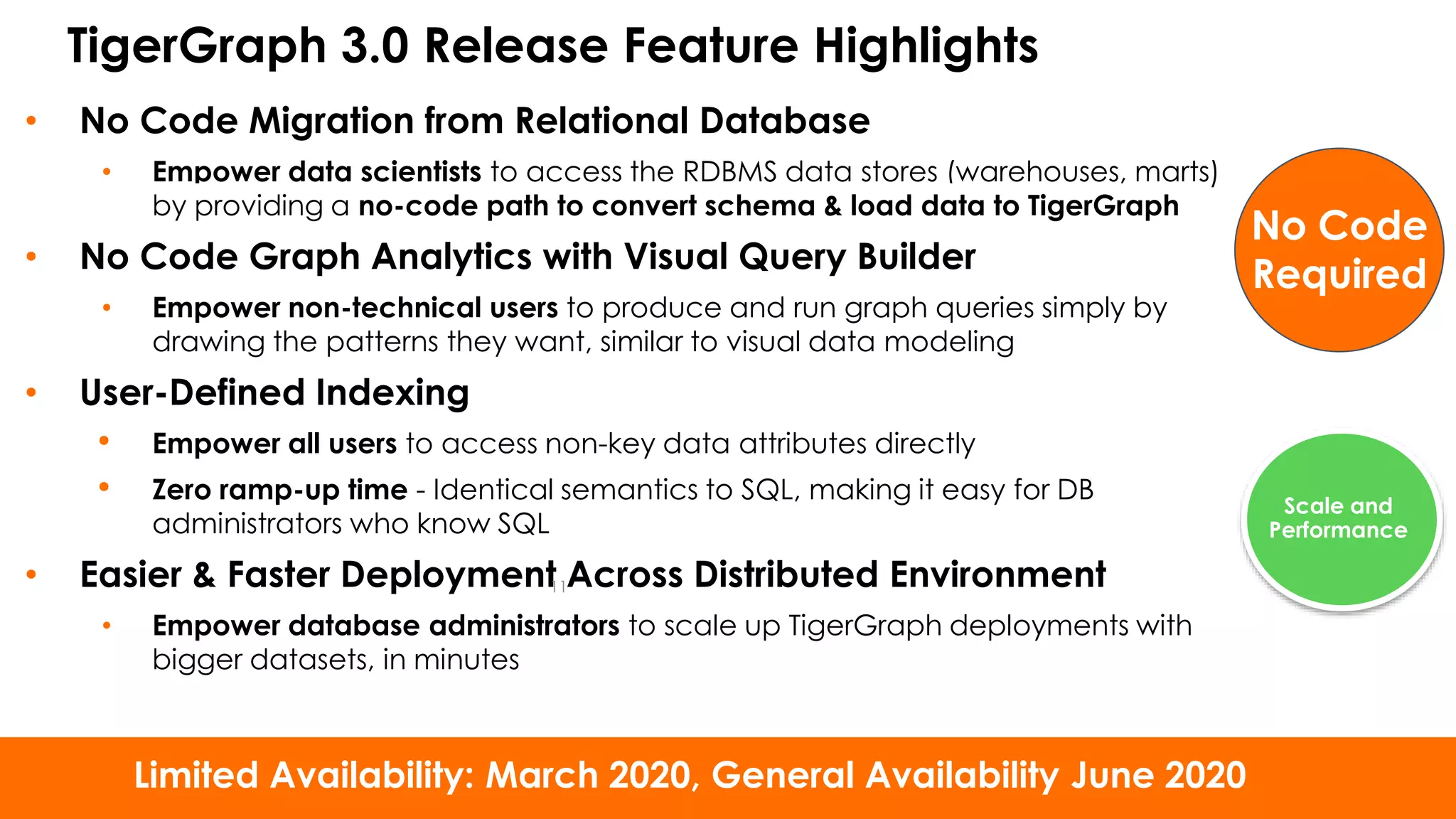

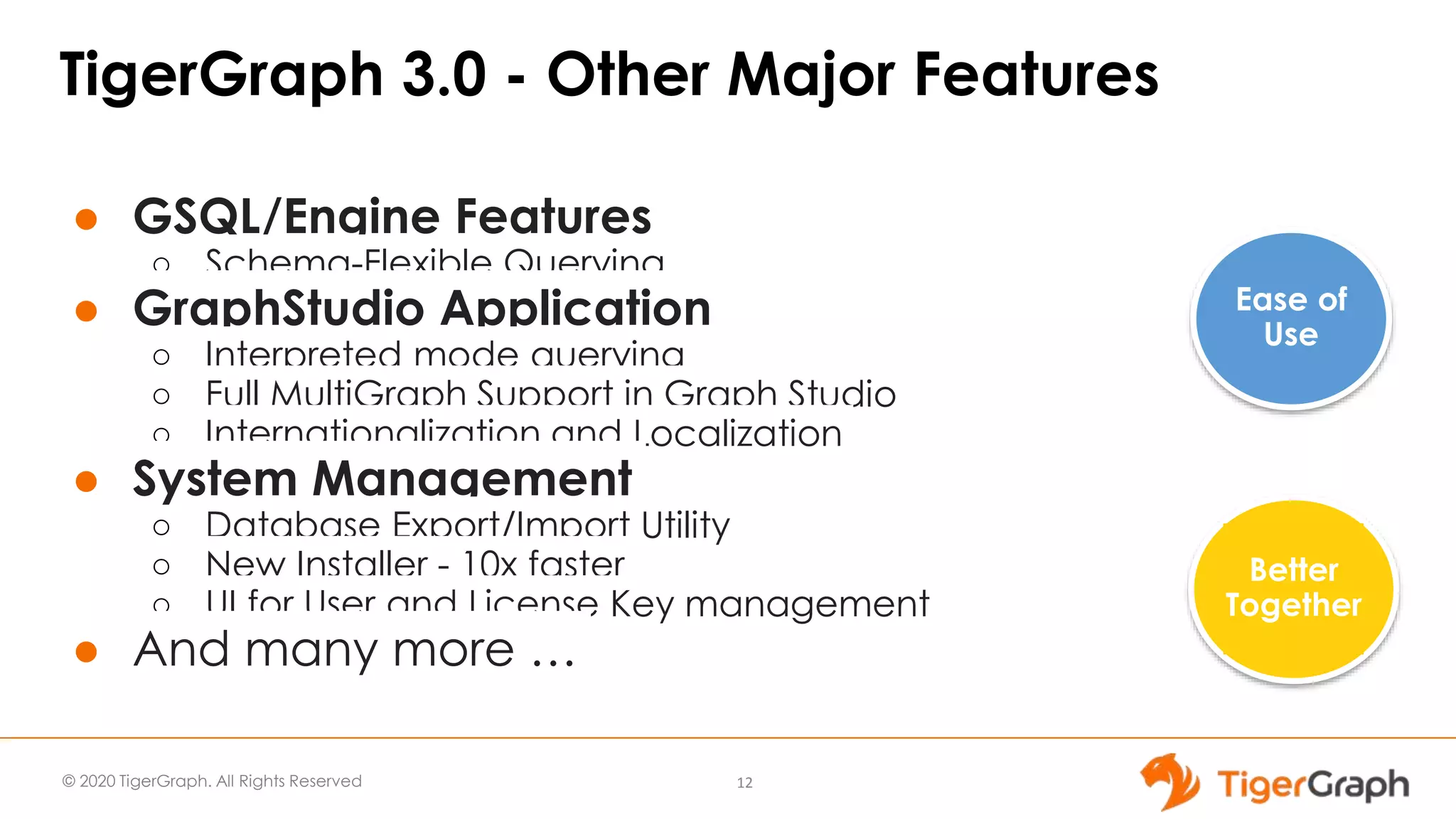

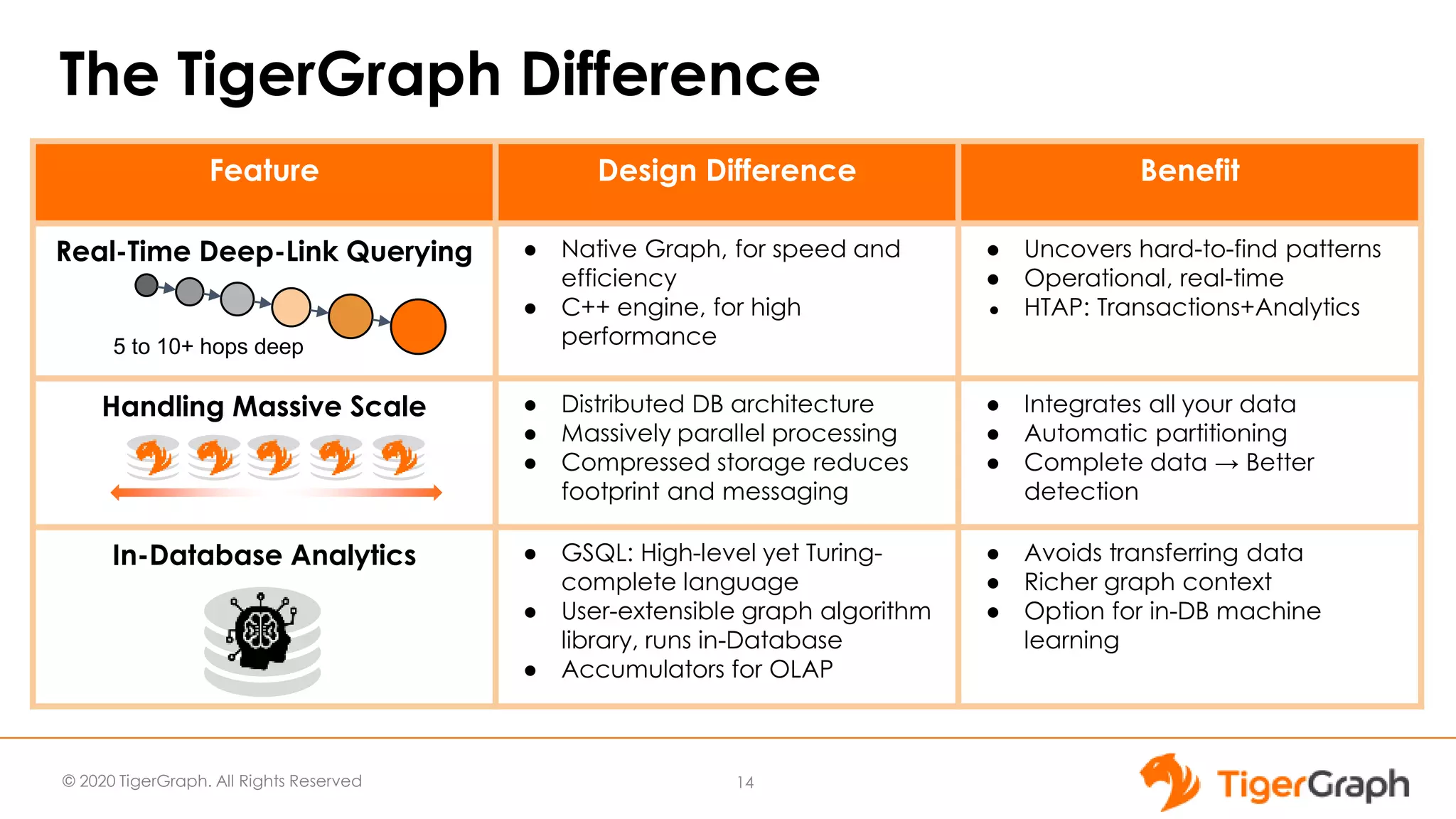

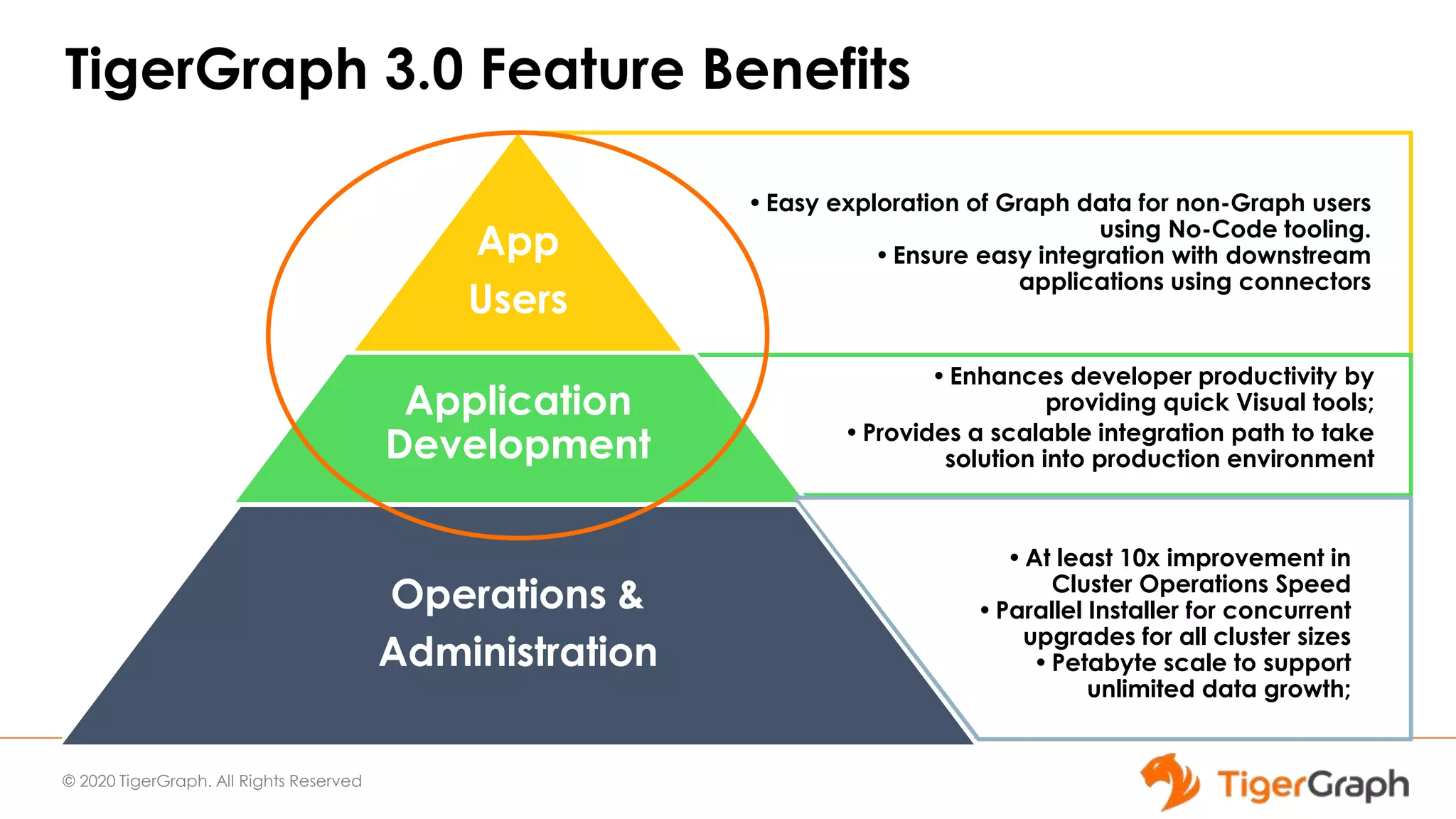

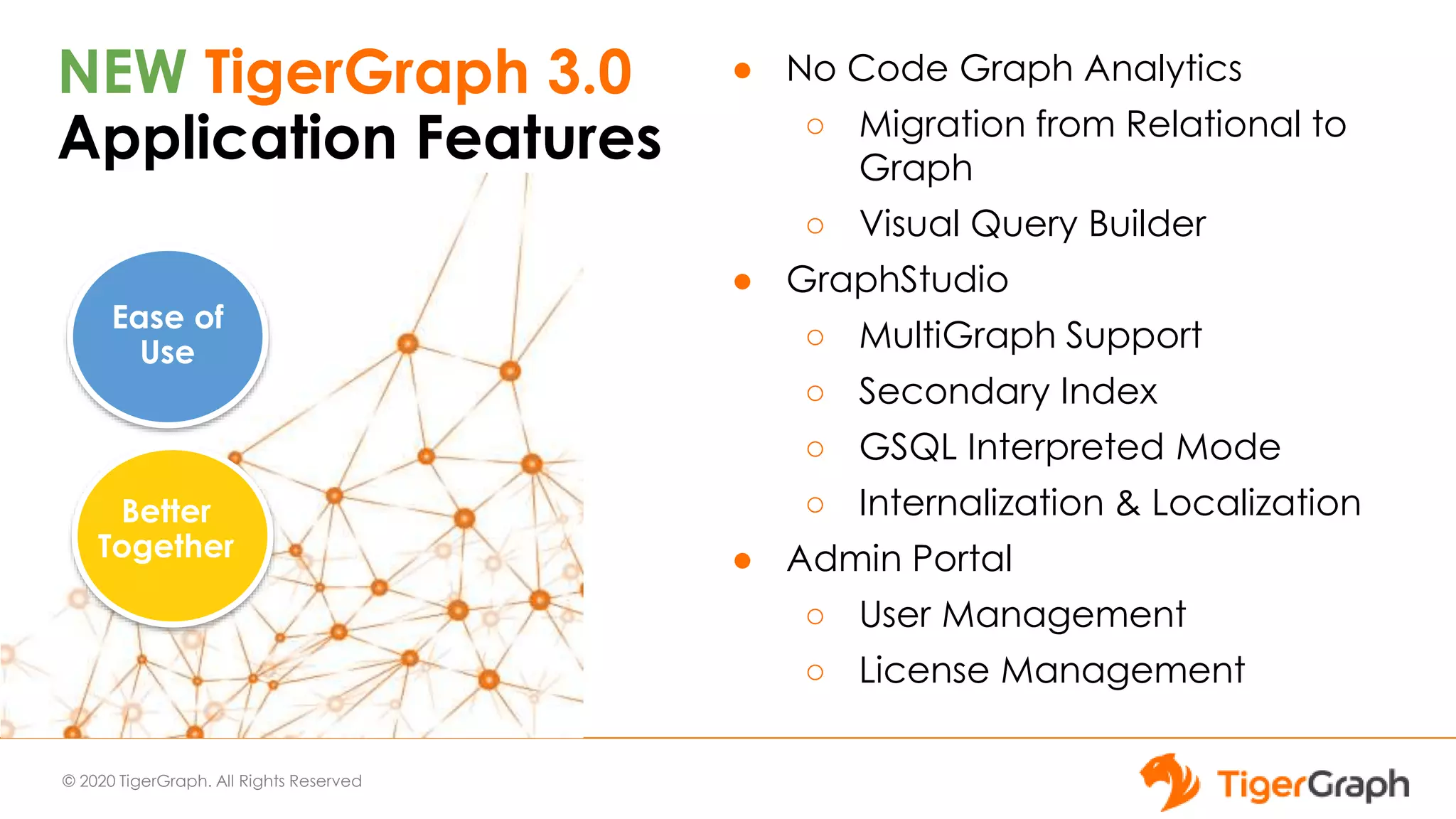

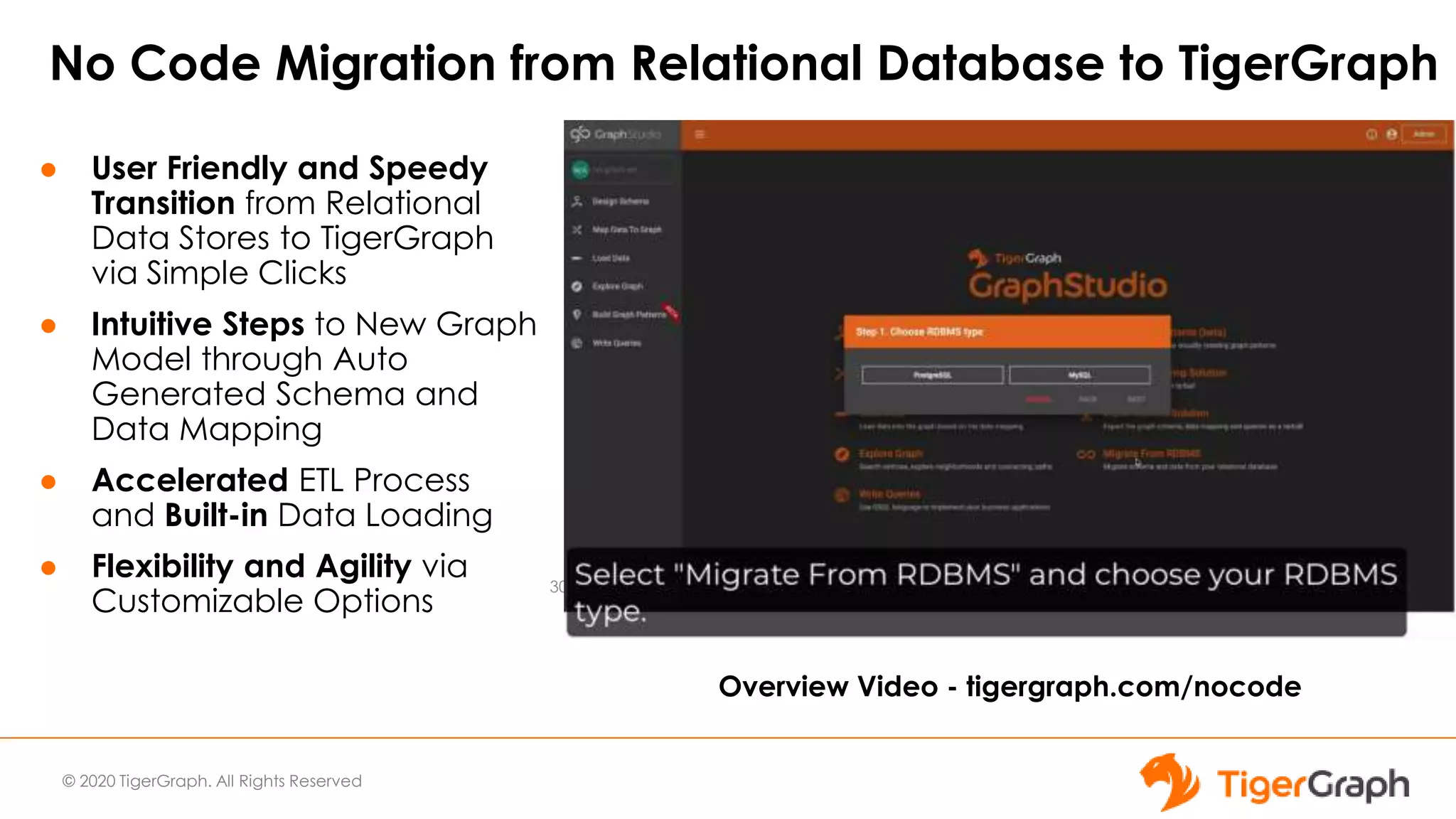

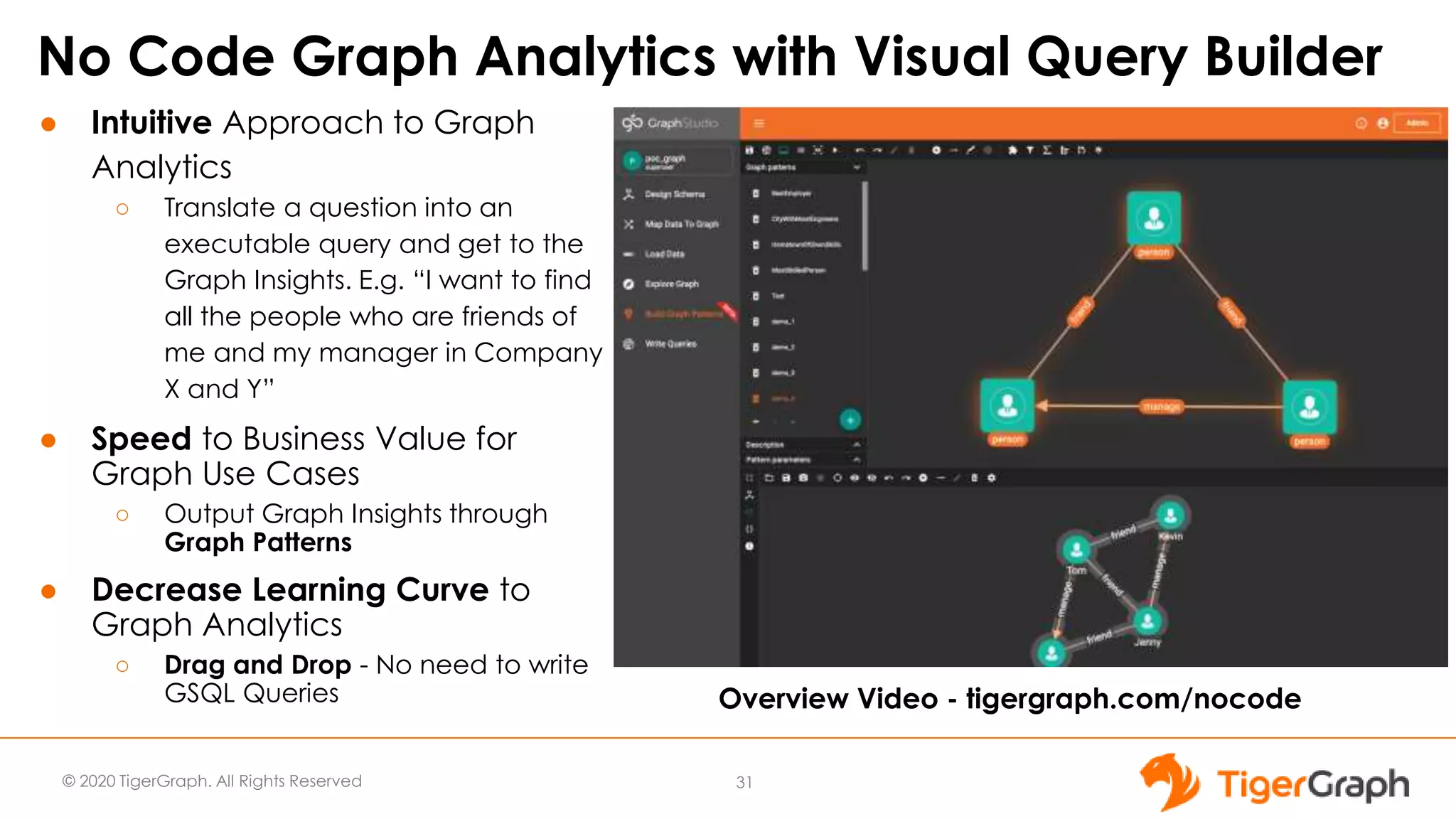

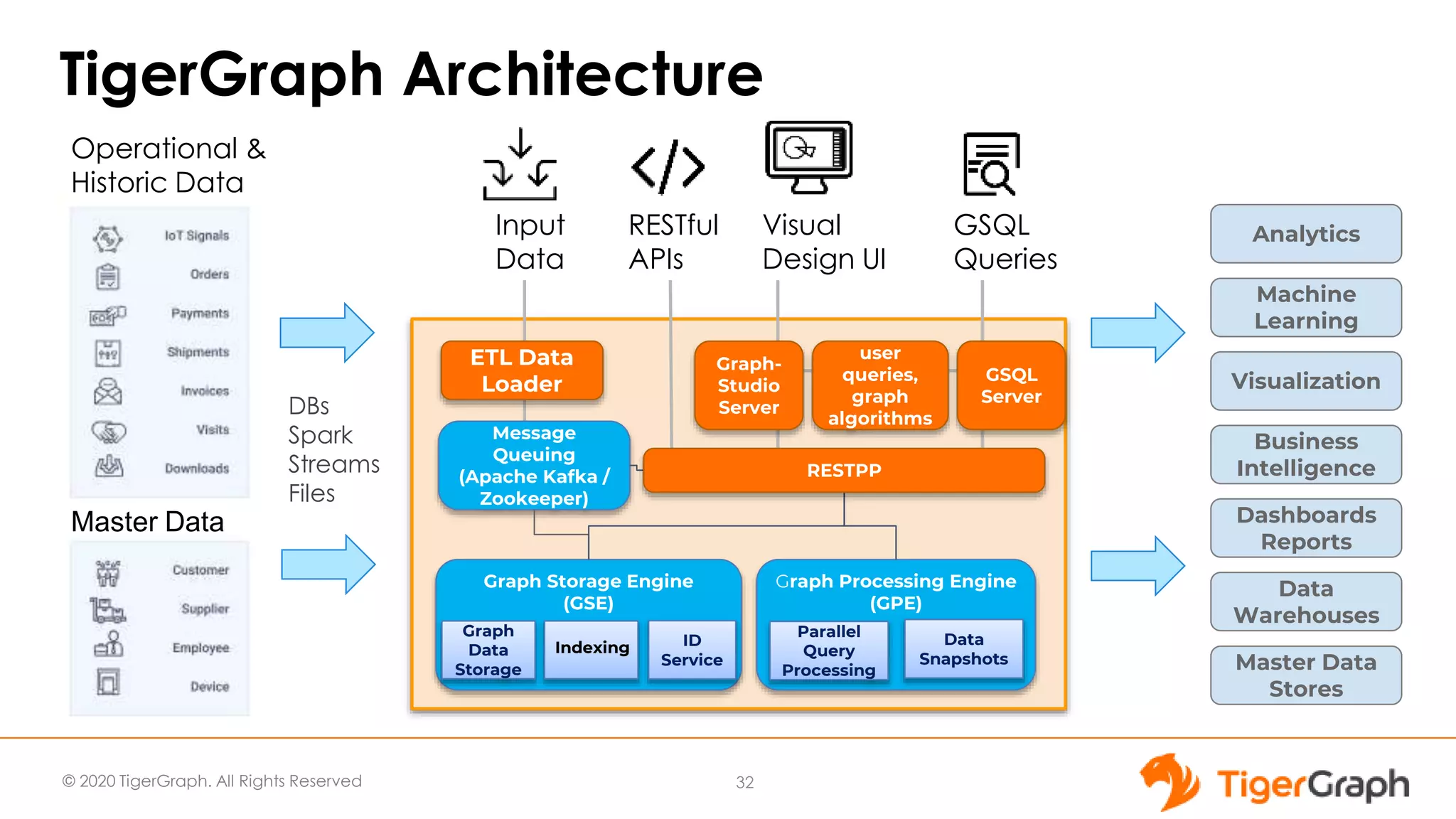

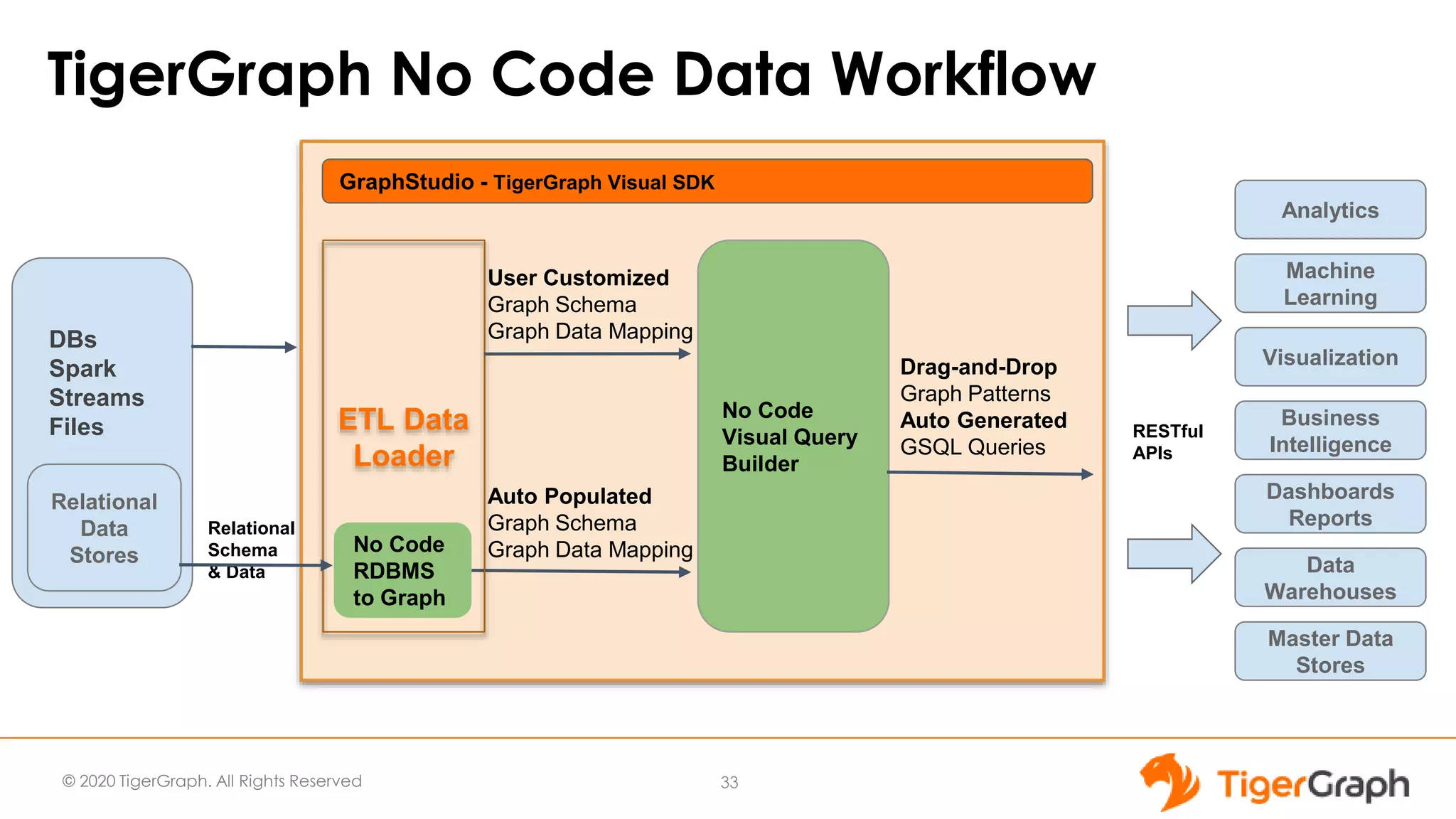

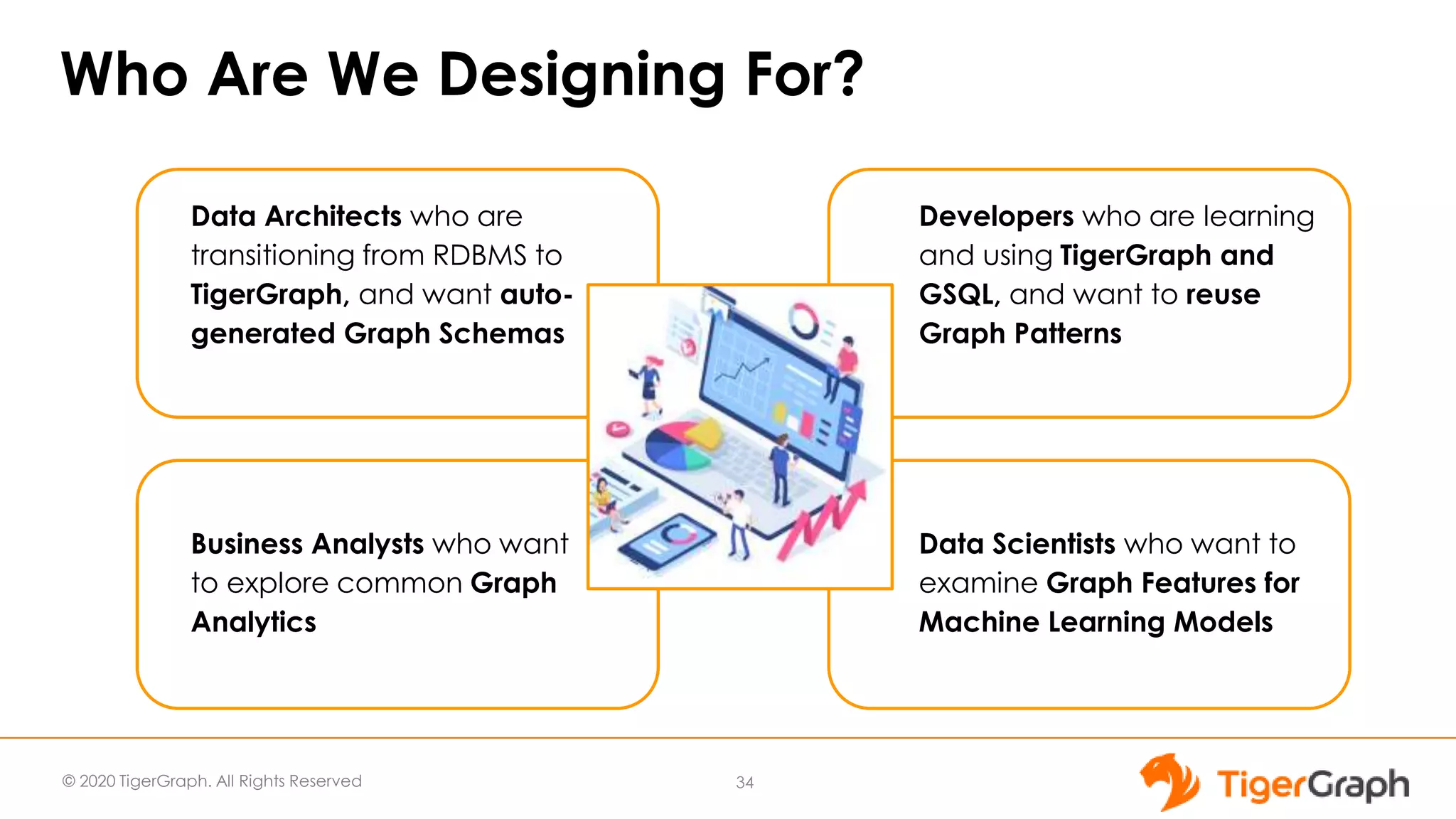

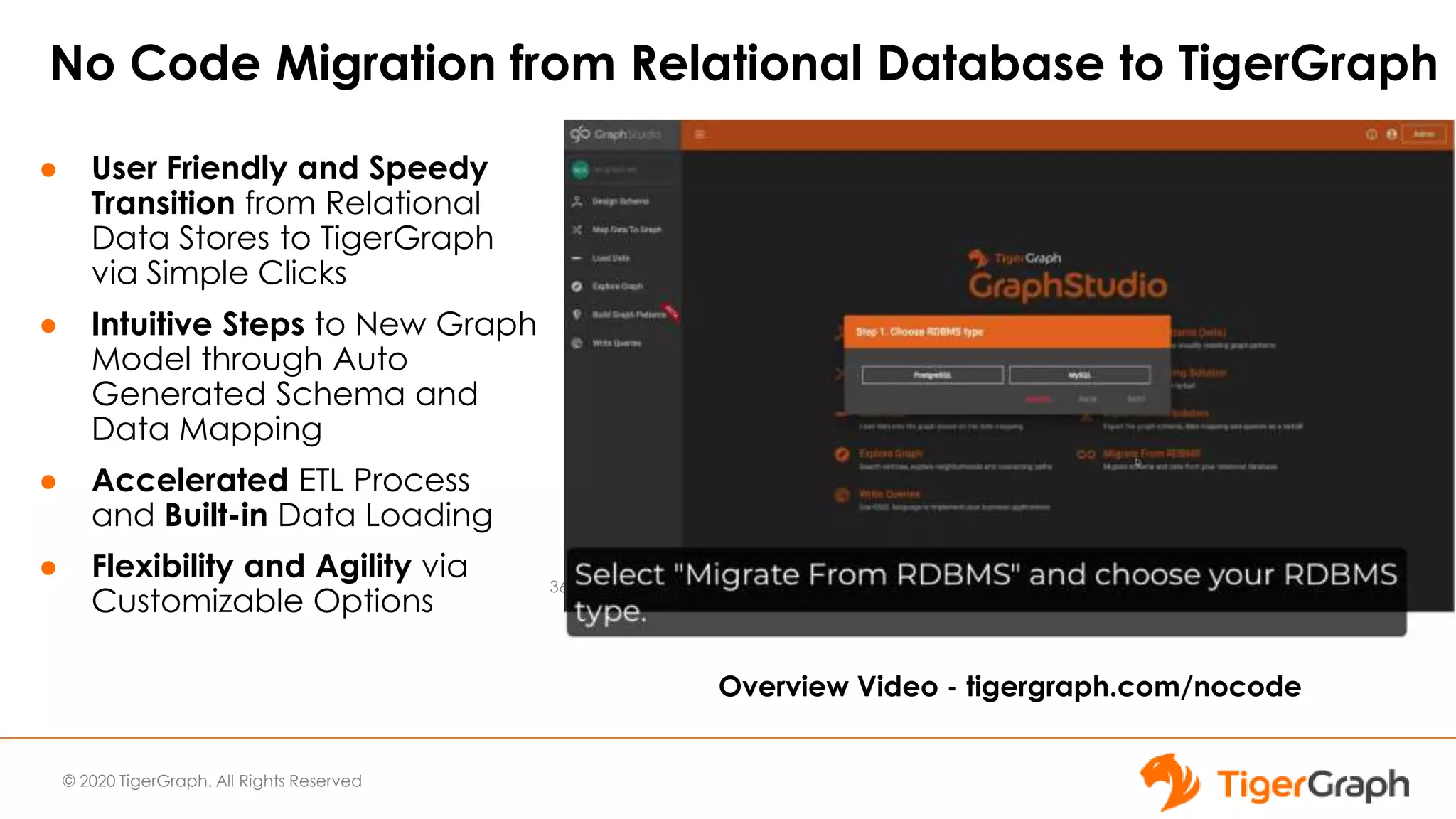

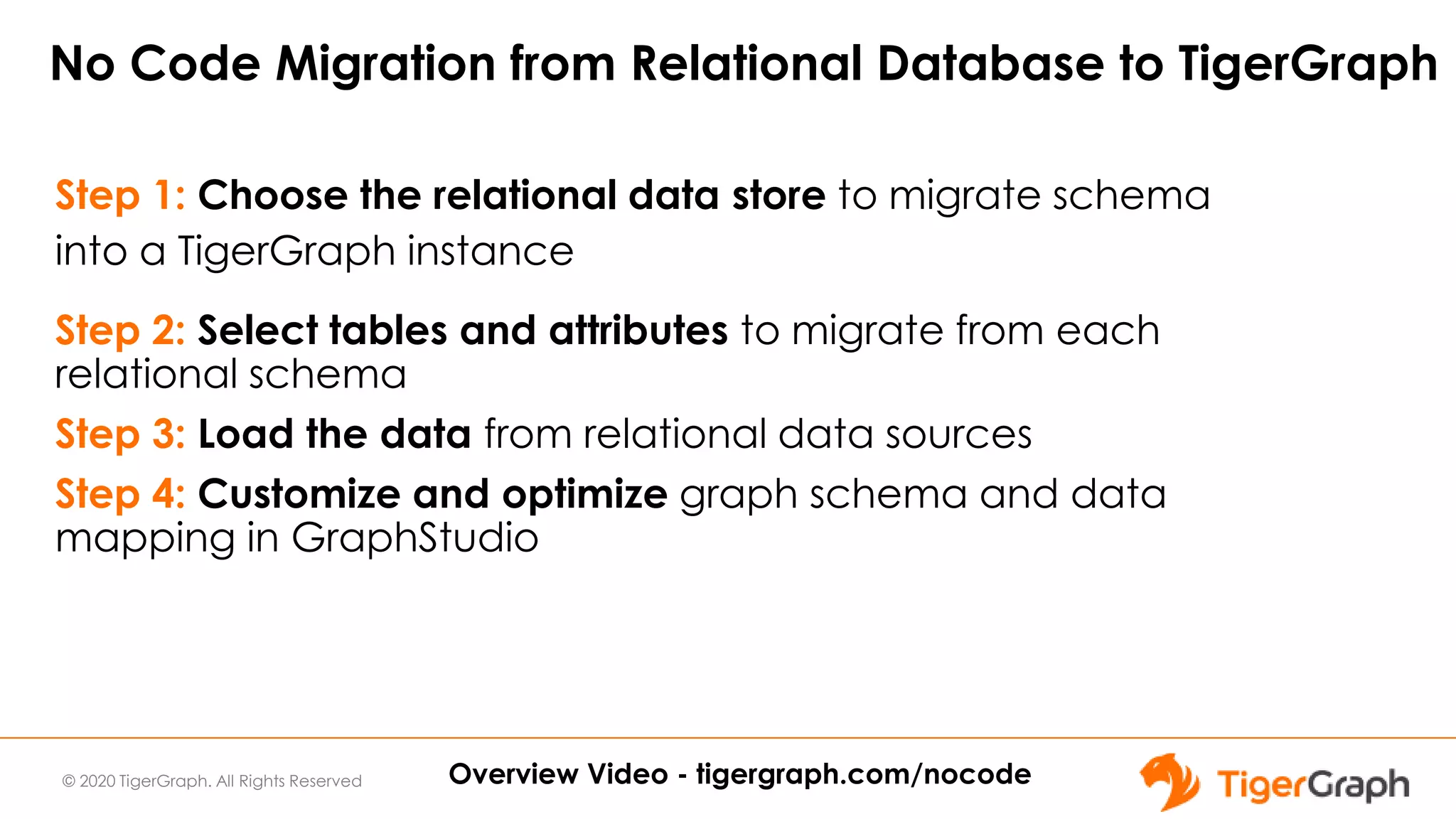

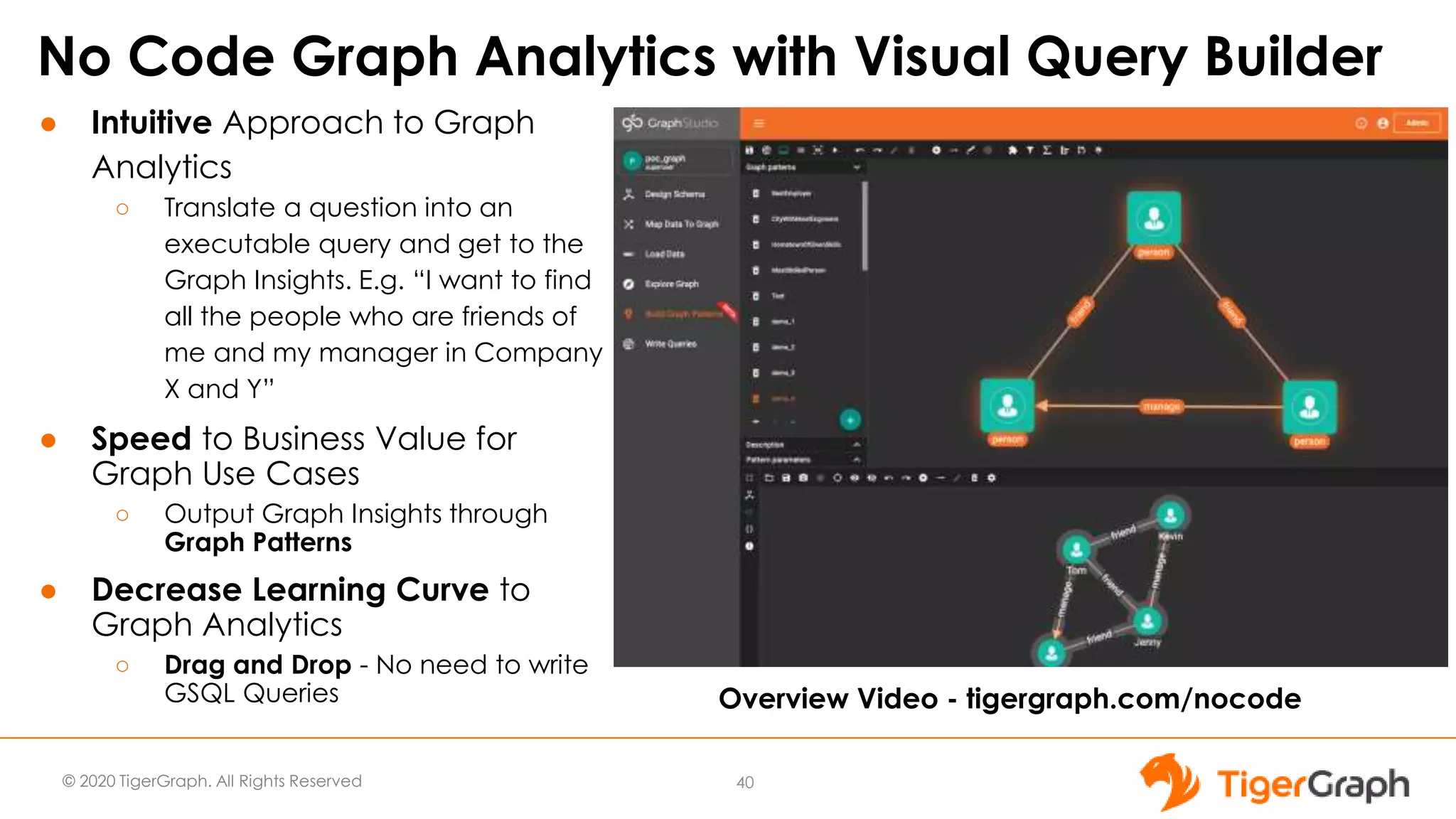

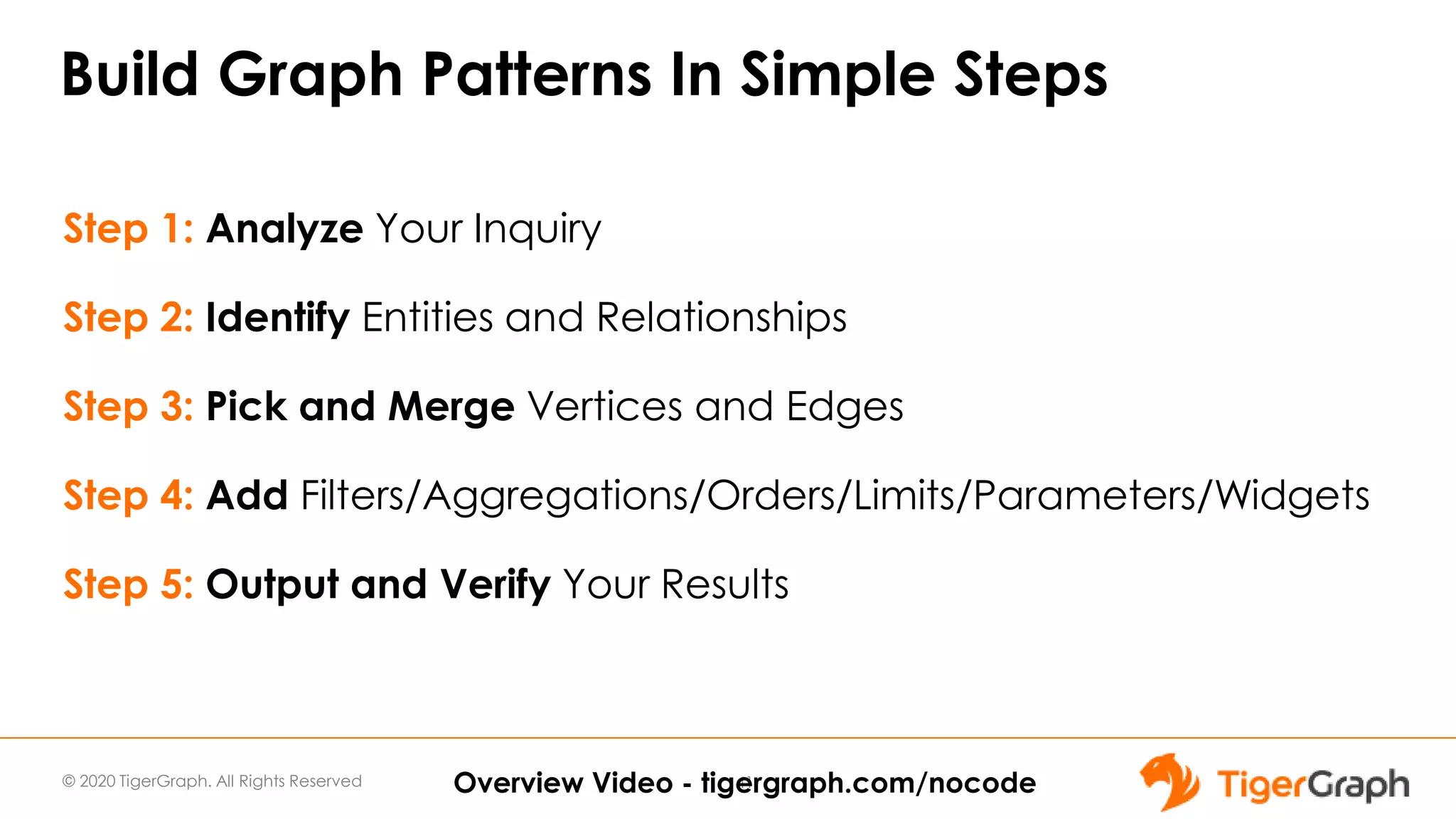

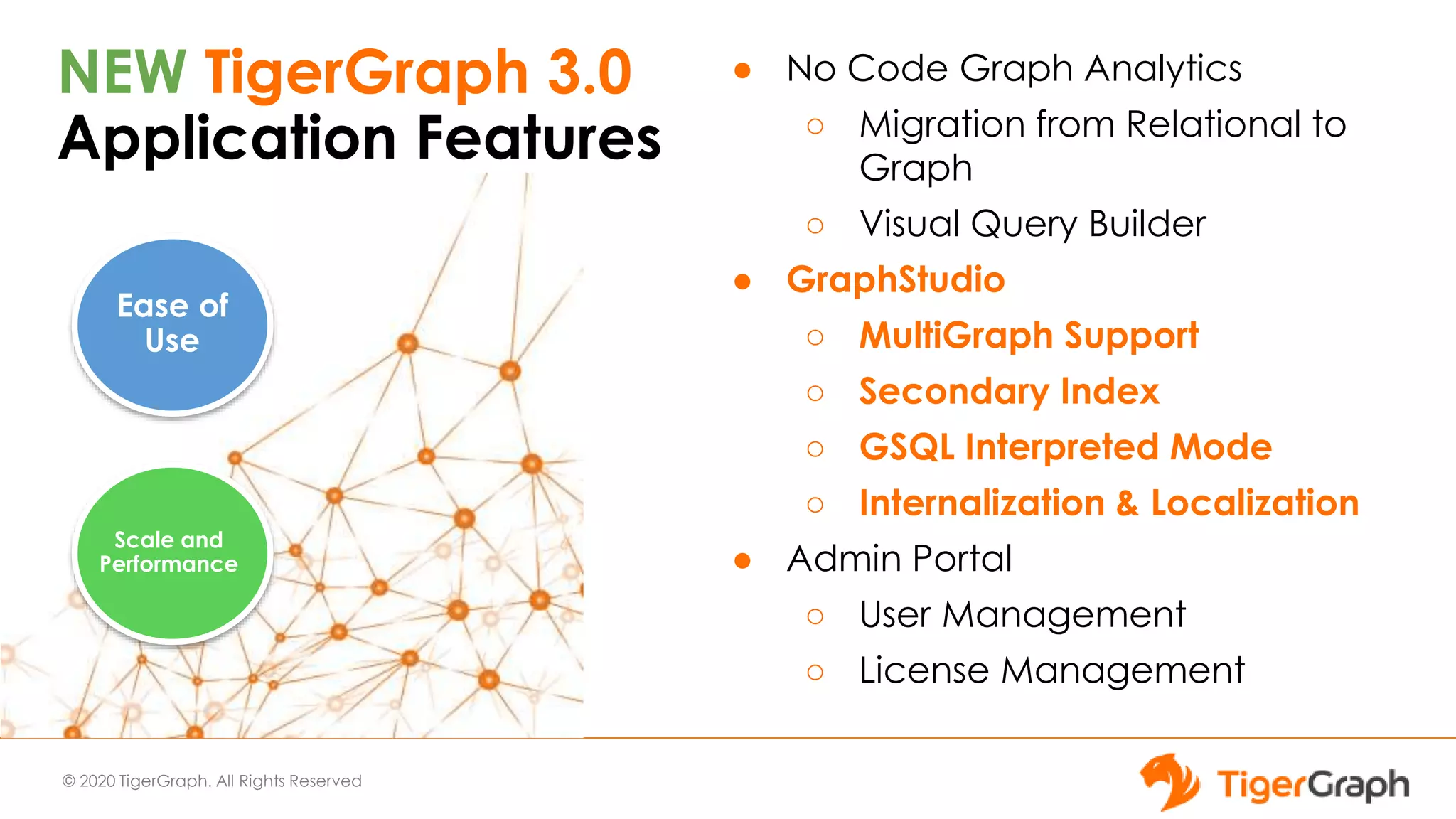

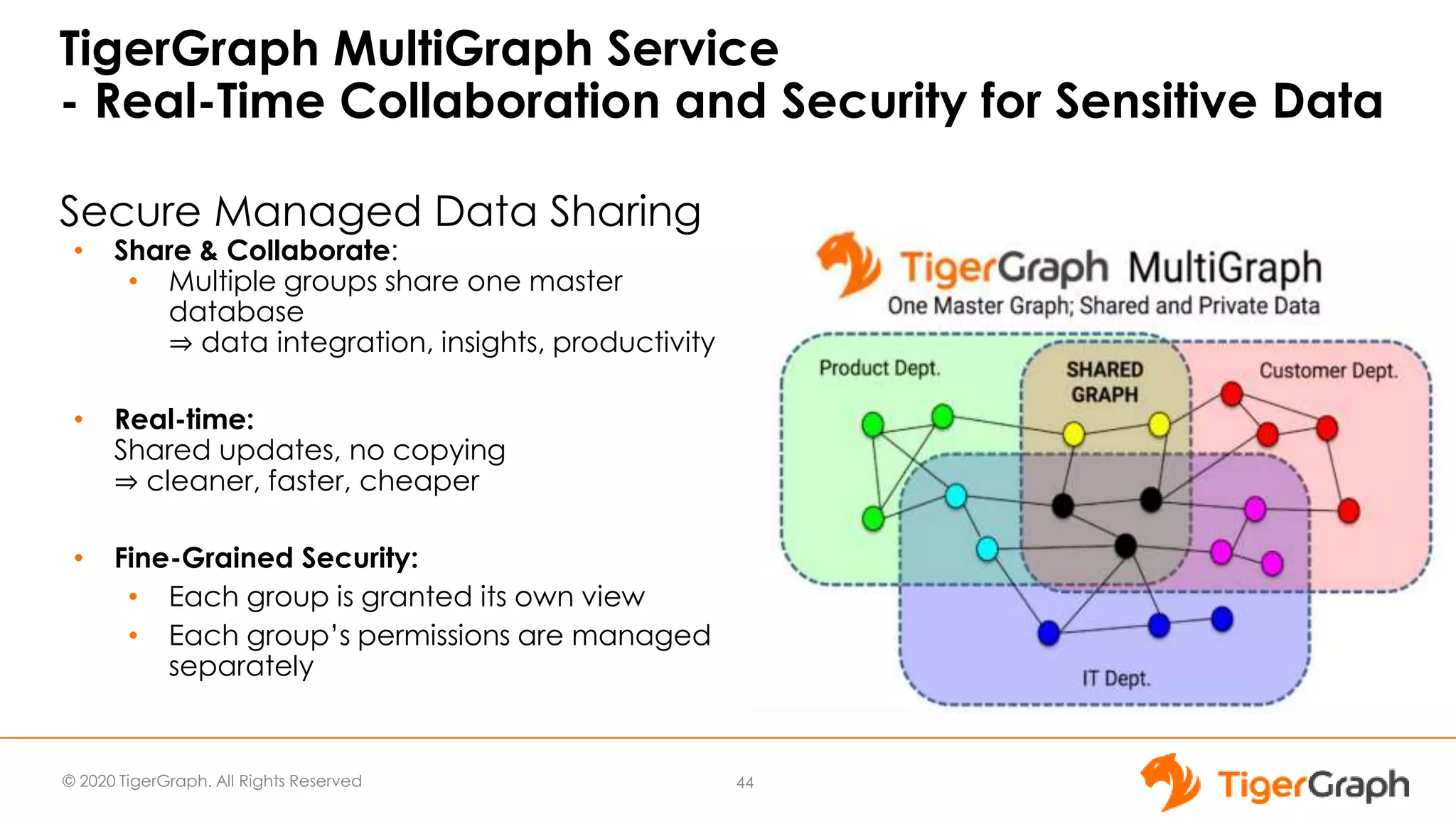

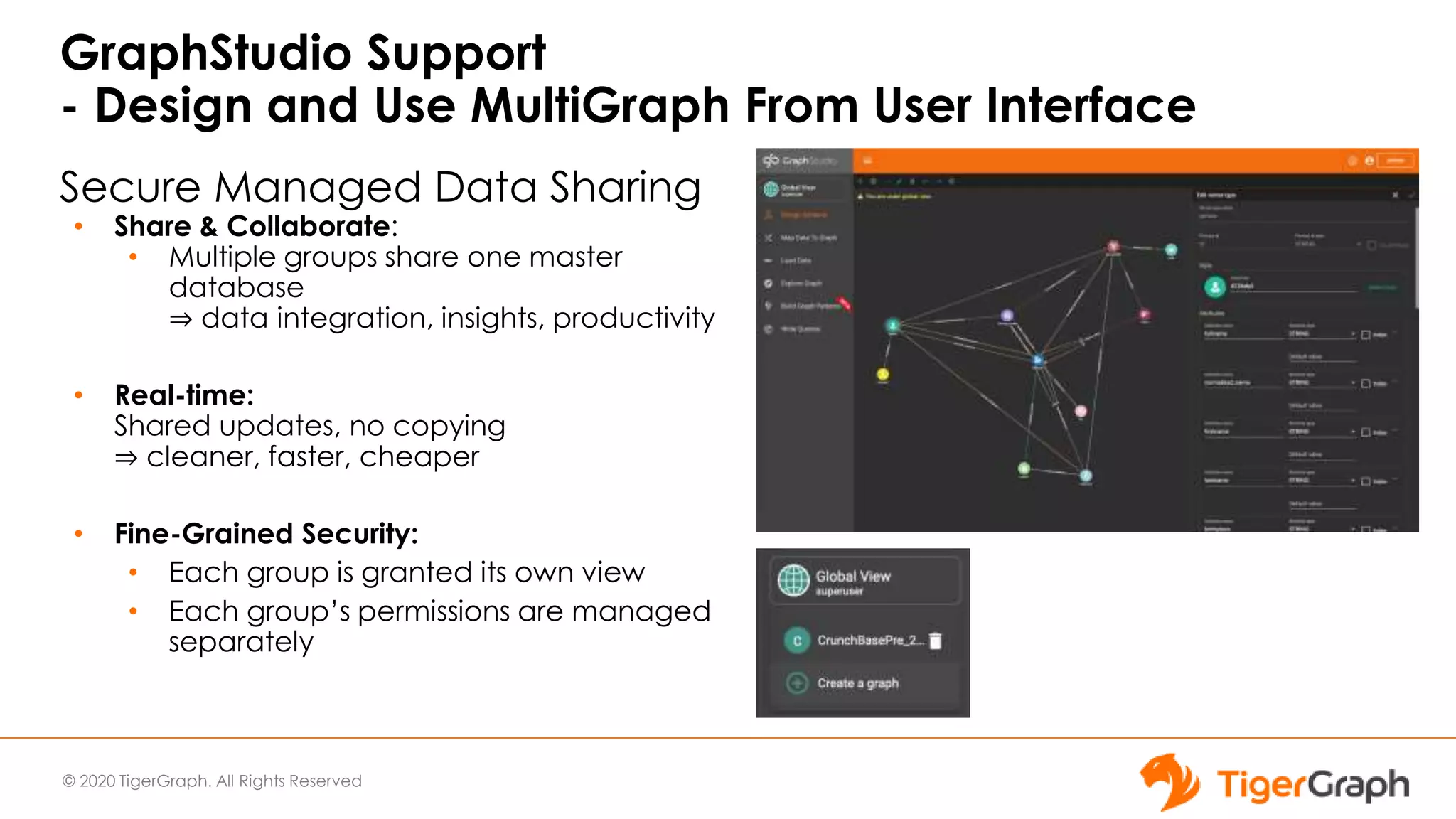

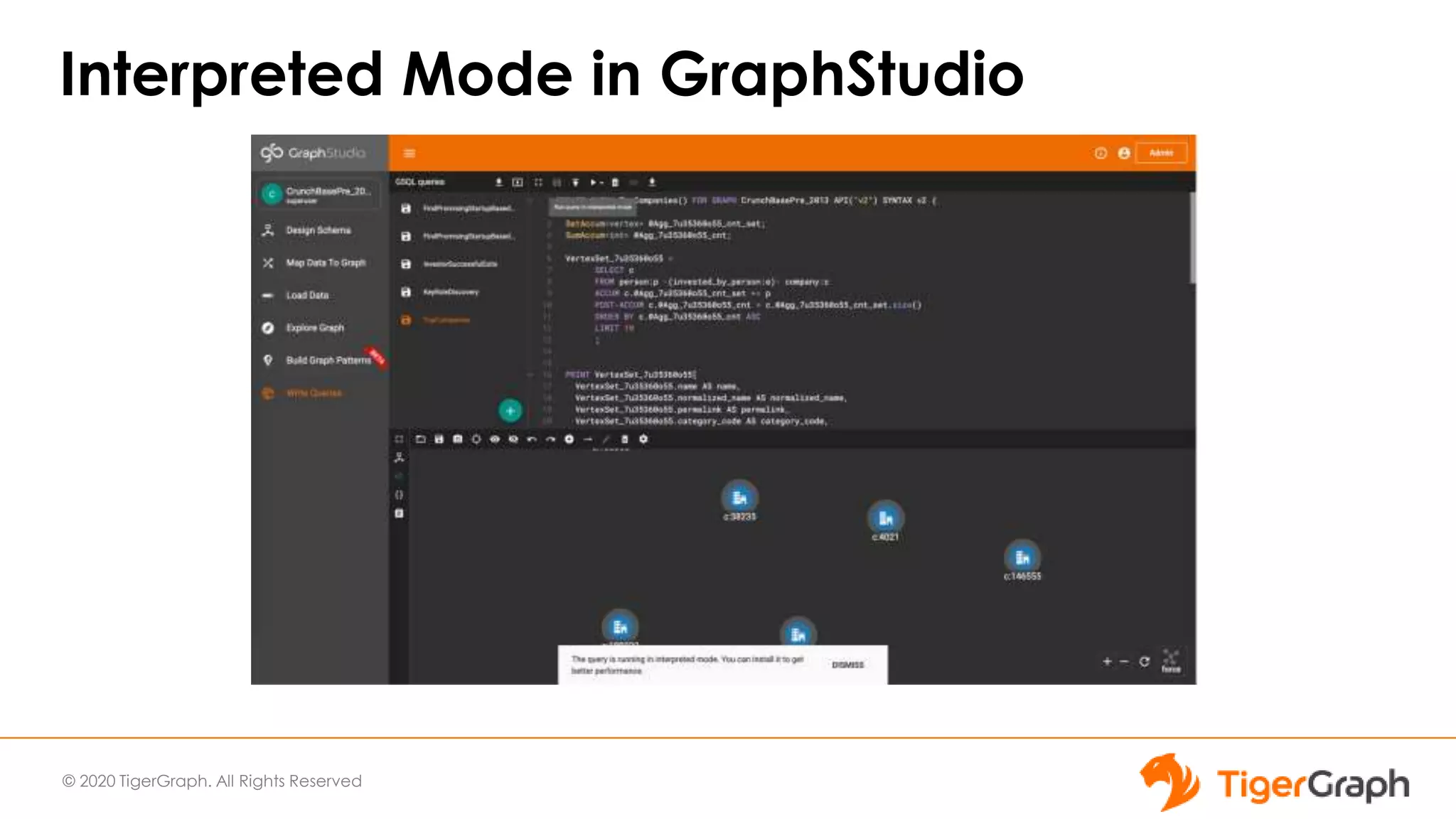

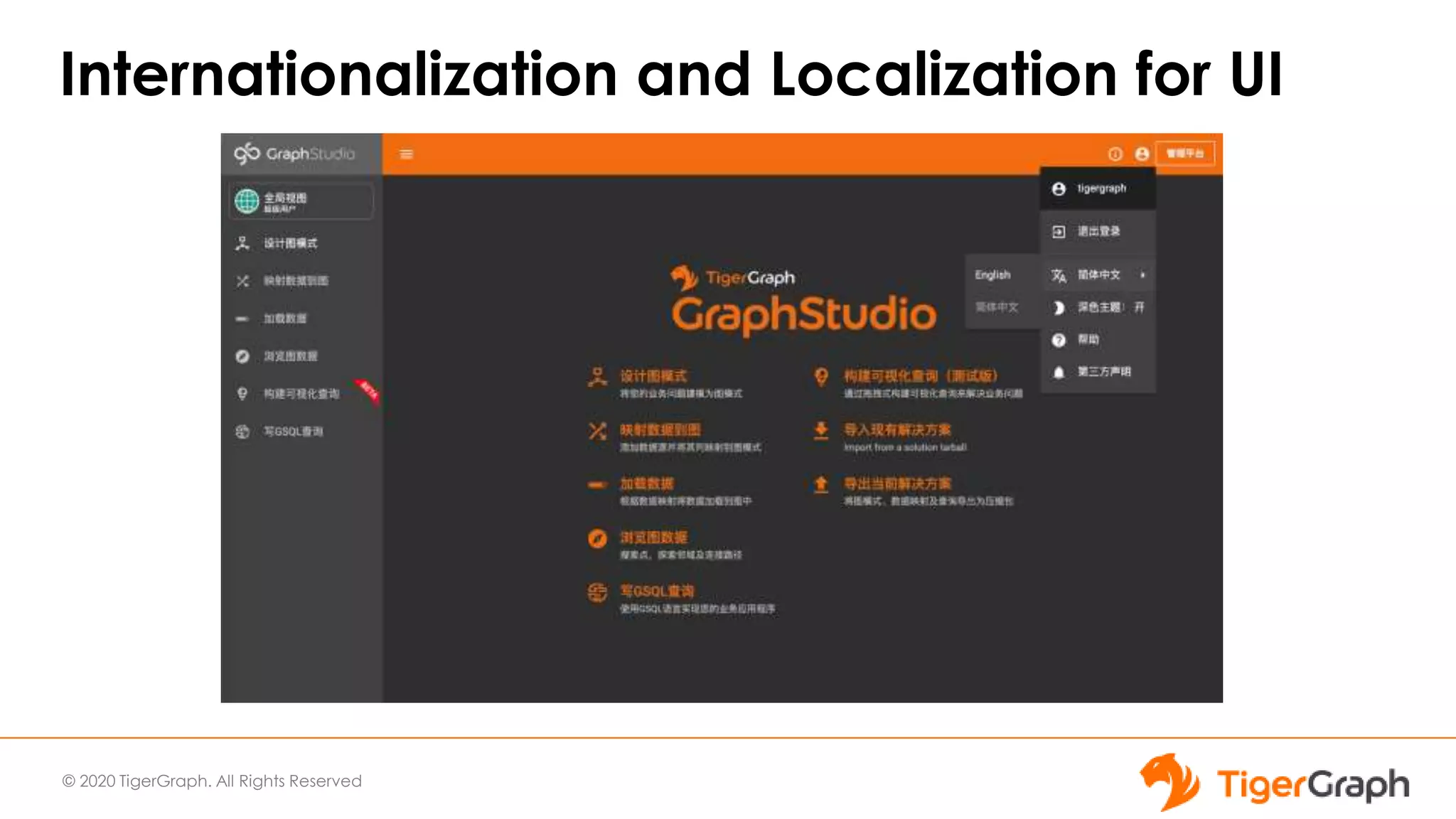

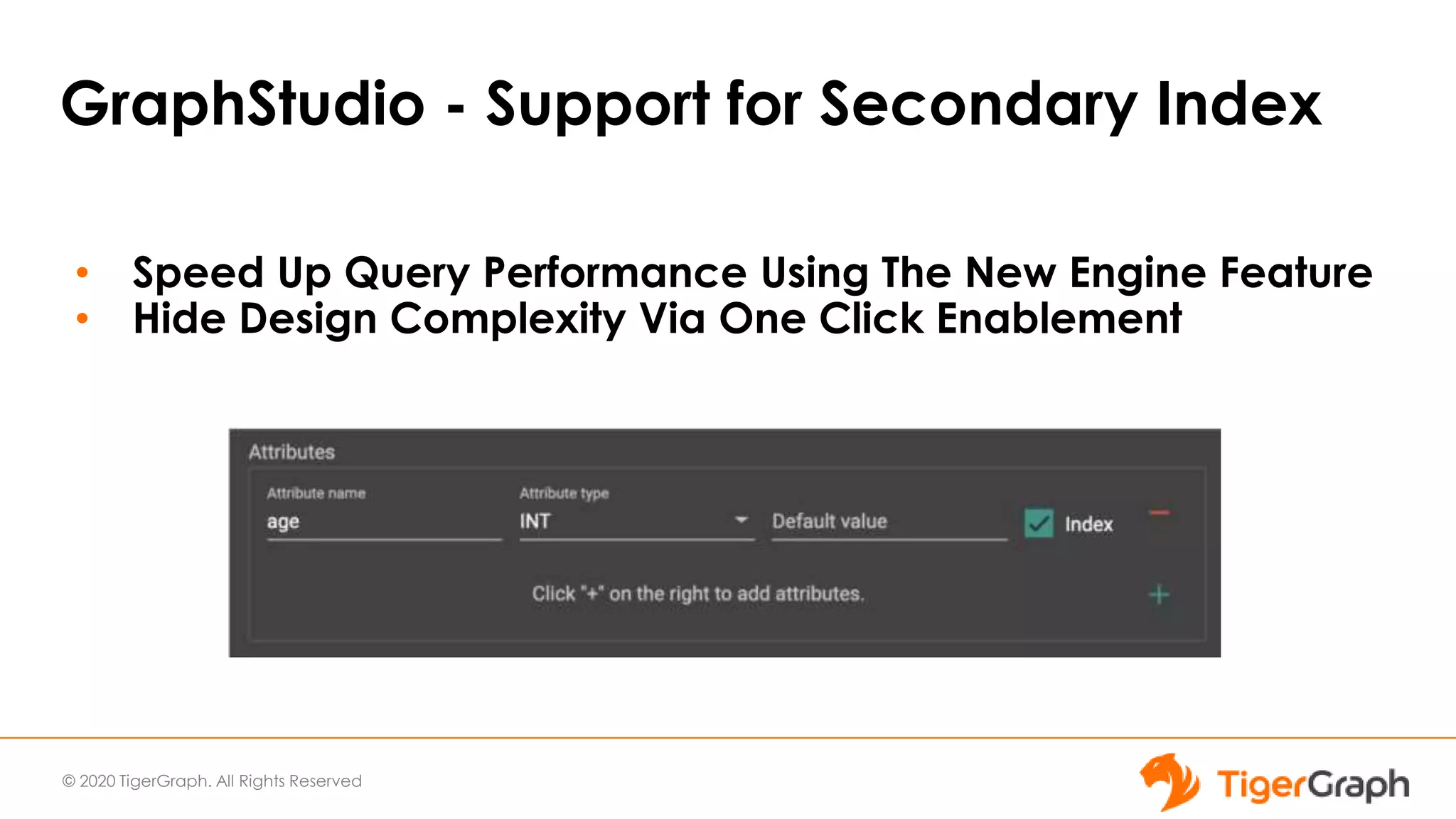

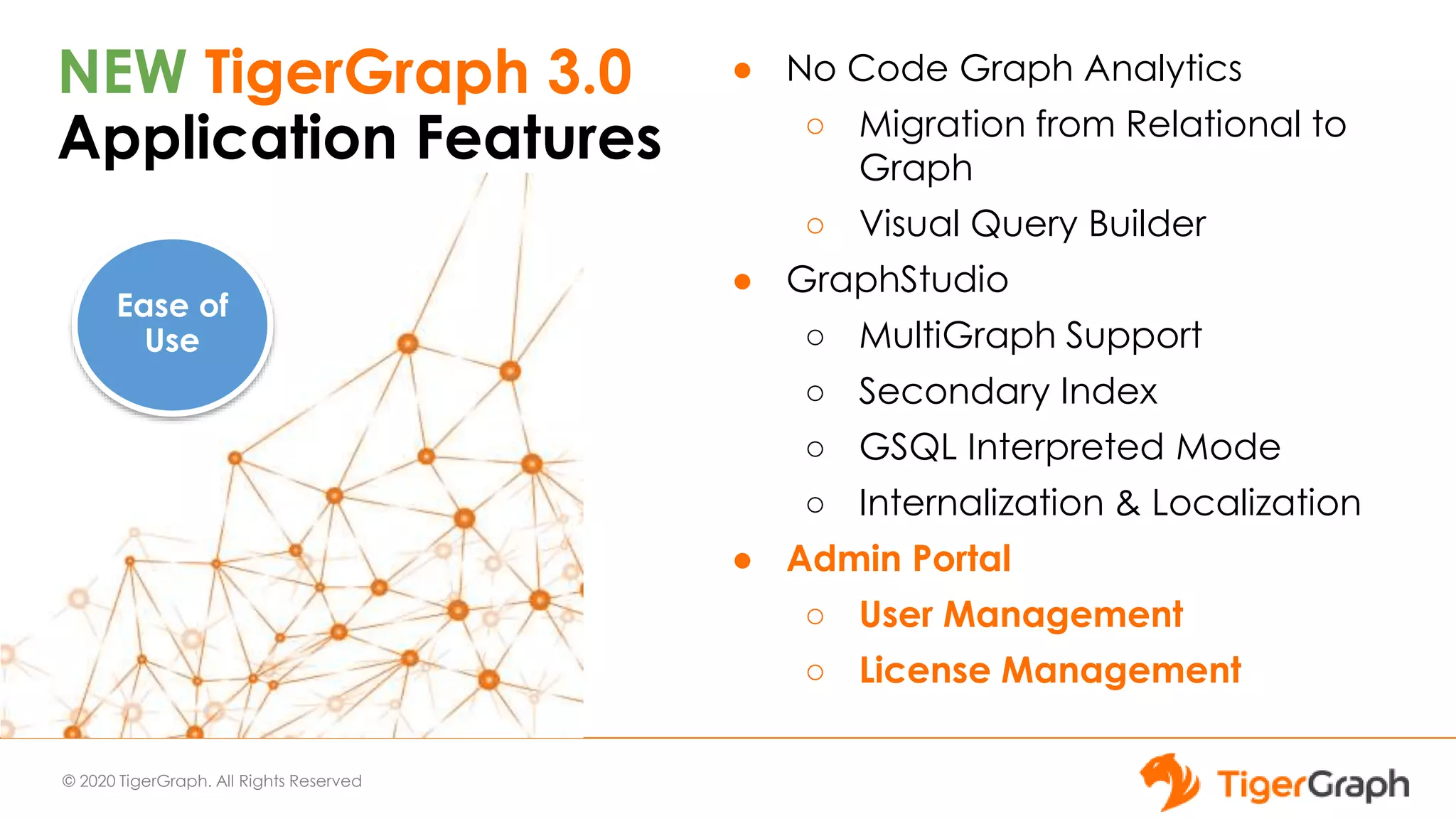

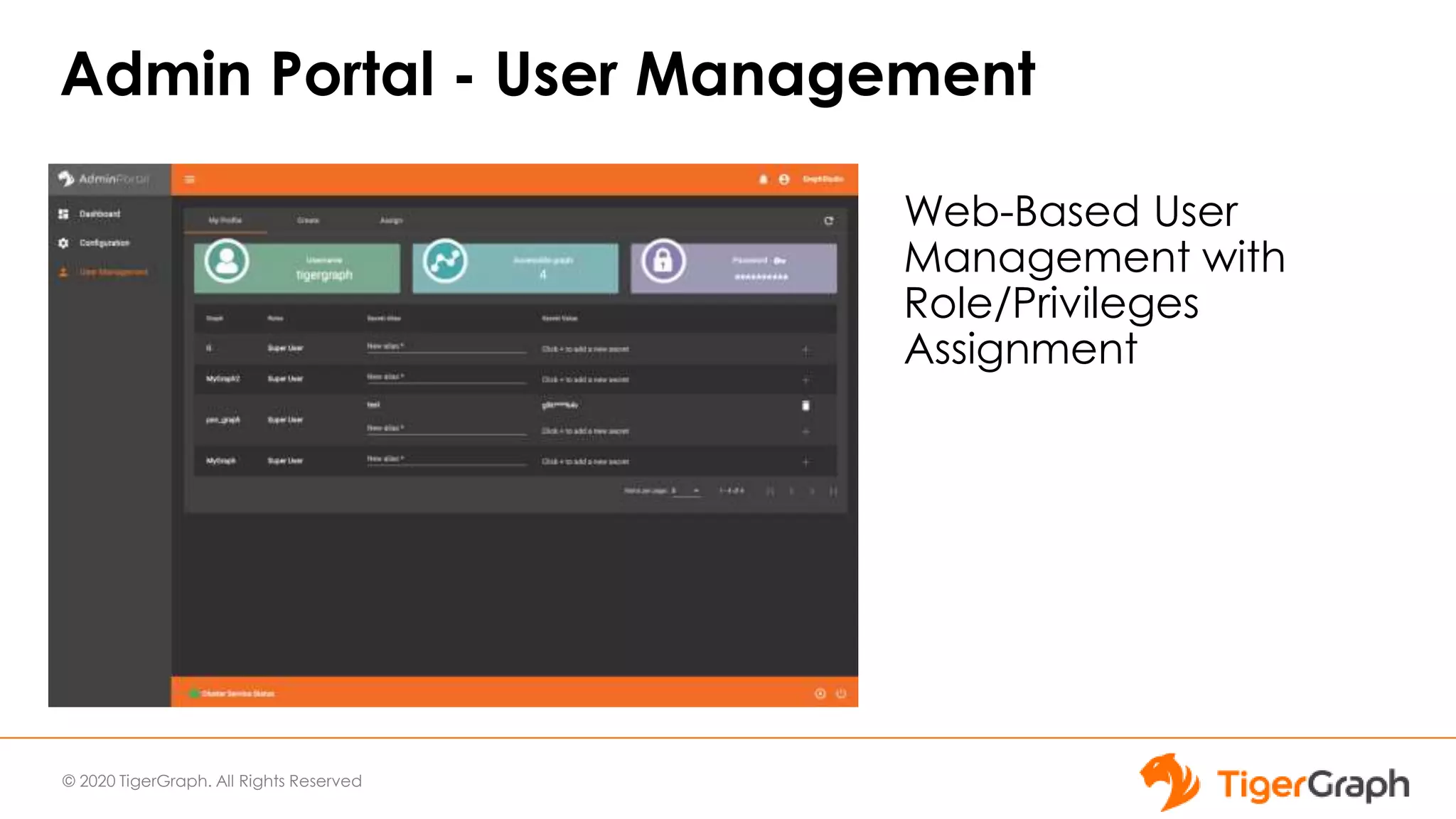

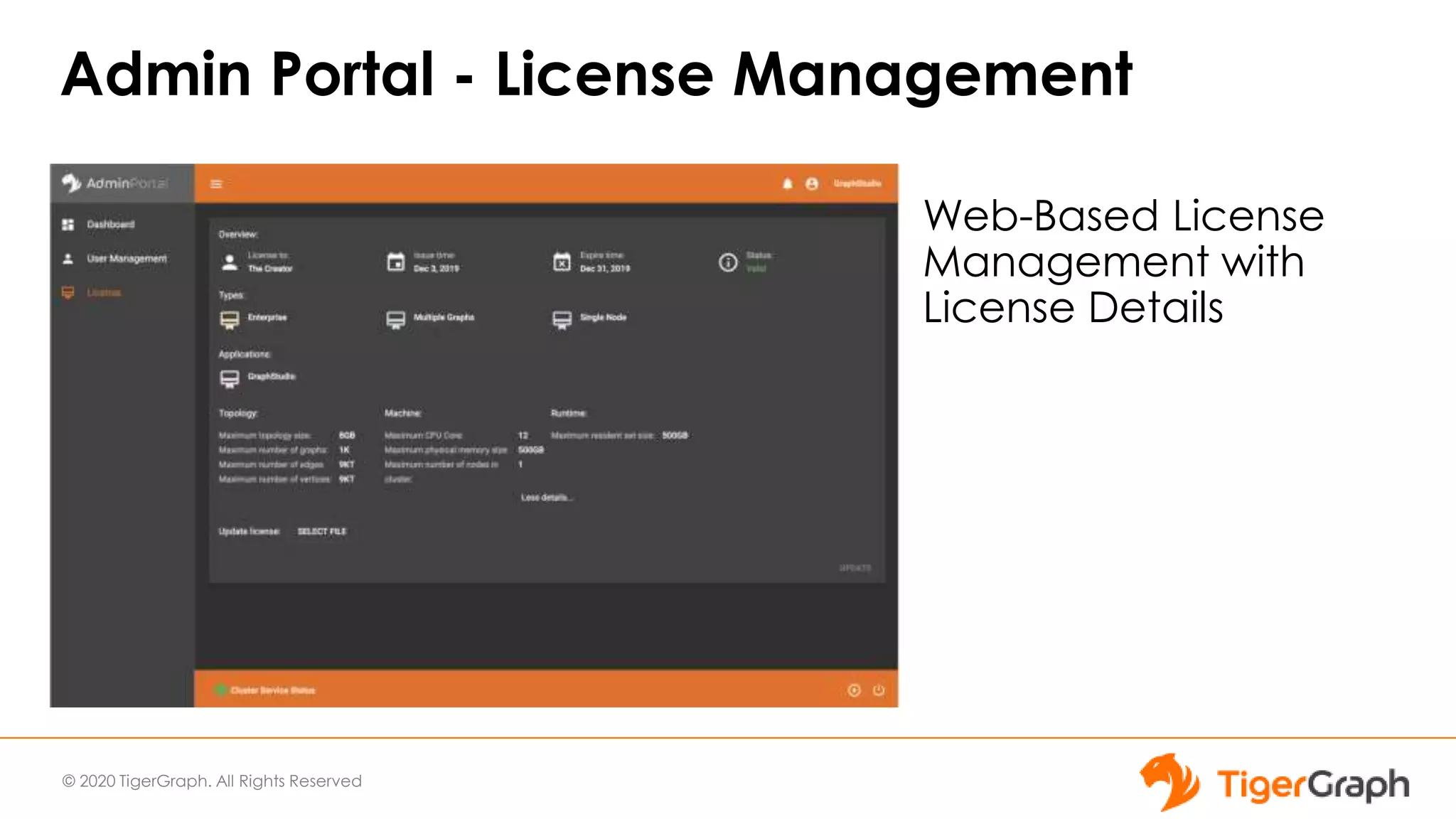

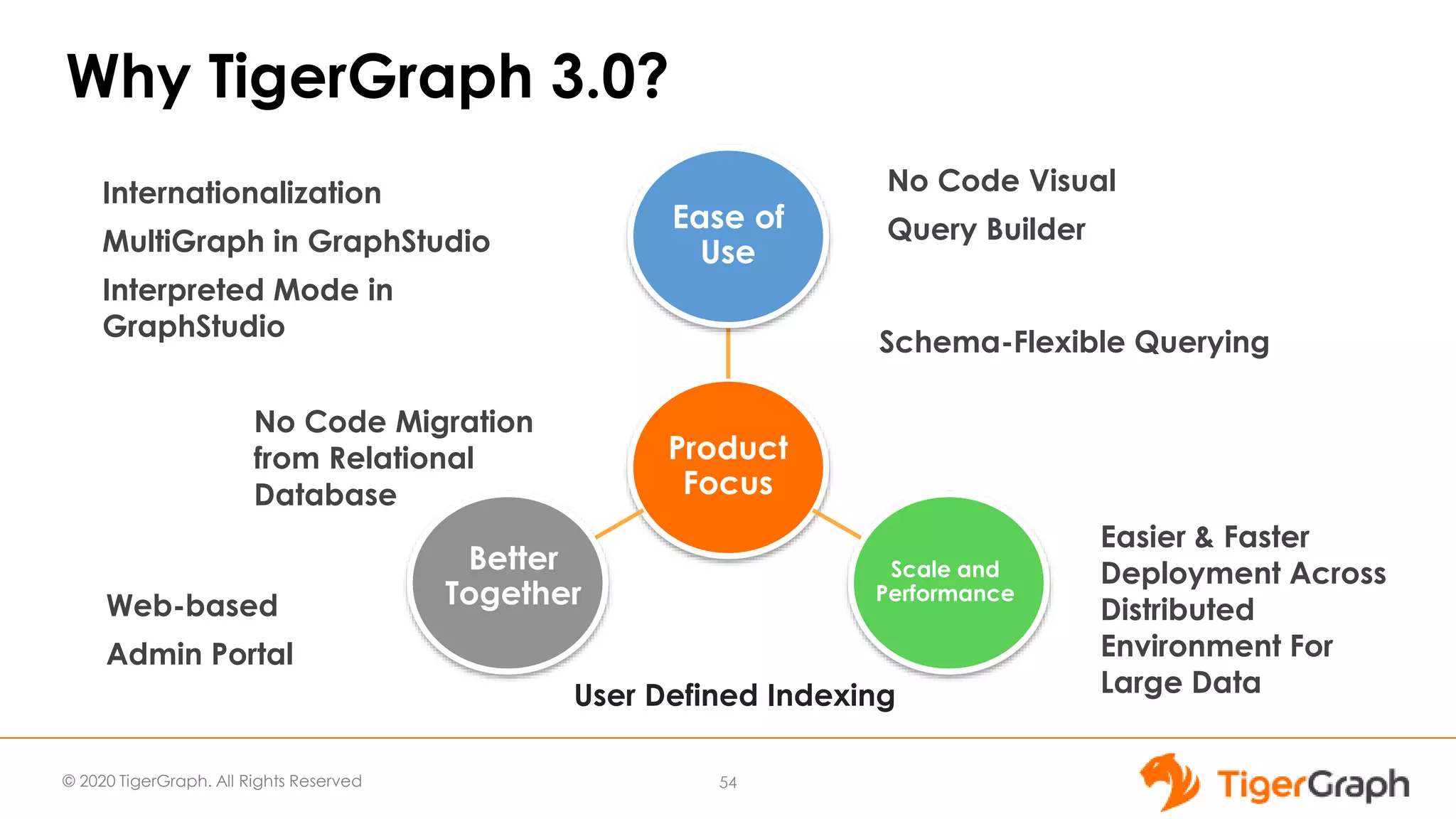

The document introduces TigerGraph 3.0, highlighting no-code graph analytics capabilities designed to make the product easier to use and accessible to non-technical users. Key features include improved integration with other applications, a parallel installer for faster upgrades, and significant enhancements to query performance and database management. It also emphasizes the advantages of migrating from relational databases to a graph structure to support large datasets and complex data relationships.