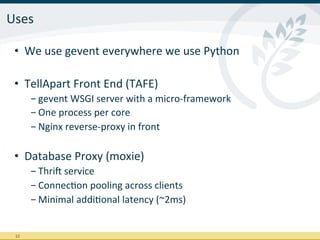

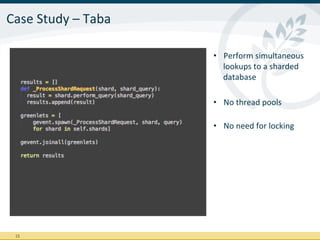

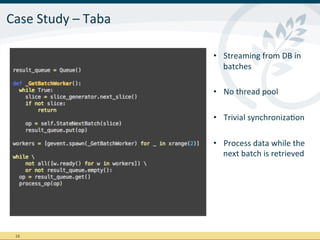

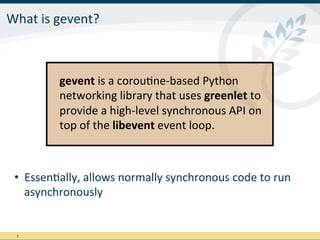

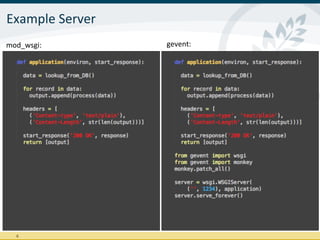

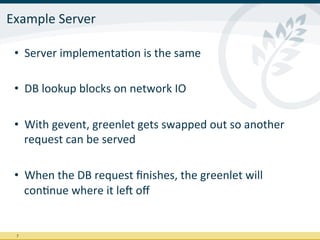

TellApart uses the gevent library to build highly concurrent and asynchronous Python applications. Gevent allows synchronous Python code to run asynchronously by using greenlets. It monkey-patches blocking libraries so that when blocking calls occur, other greenlets can run instead of the entire process blocking. This allows TellApart to build applications like their front-end server and database proxy that can handle millions of requests per day with low latency using a single process per core.

![What

is

gevent?

lib·∙e·∙vent

(ˈlib-‐i-‐ˈvent):

efficient

cross-‐pla]orm

library

for

execuIng

callbacks

when

specific

events

occur

or

a

Imeout

has

been

reached.

Includes

several

networking

libraries

(e.g.

DNS,

HTTP)

green·∙let

(ˈgrēn-‐lət):

lightweight

co-‐rouInes

for

in-‐process

concurrent

programming.

Ported

from

Stackless

Python

as

a

library

for

the

CPython

interpreter

4](https://image.slidesharecdn.com/geventpresentation-120919153746-phpapp02/85/Gevent-at-TellApart-4-320.jpg)

![Advantages

(conInued)

• gevent

is

fast

- Very

thorough

set

of

benchmarks

by

Nicholas

Piël

hrp://nichol.as/benchmark-‐of-‐python-‐web-‐servers

And

then

there

is

Gevent

[...]

[…]

if

you

want

to

dive

into

high

performance

websockets

with

lots

of

concurrent

connecIons

you

really

have

to

go

with

an

asynchronous

framework.

Gevent

seems

like

the

perfect

companion

for

that,

at

least

that

is

what

we

are

going

to

use.

9](https://image.slidesharecdn.com/geventpresentation-120919153746-phpapp02/85/Gevent-at-TellApart-9-320.jpg)