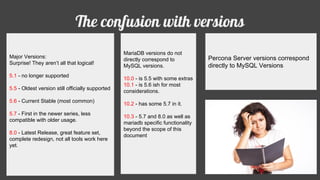

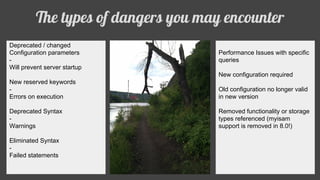

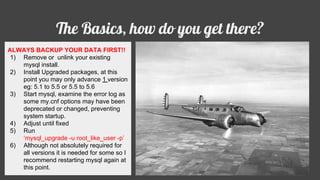

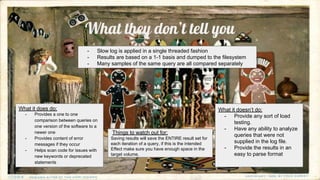

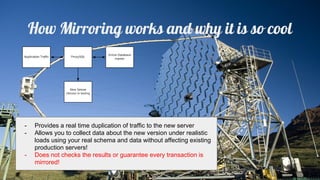

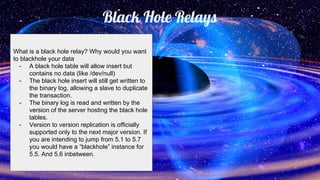

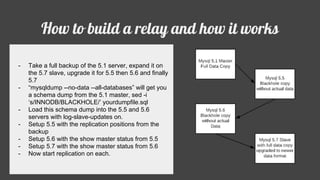

Jakob Lorberblatt is an open source database consultant who loves to talk about software and MySQL. The document discusses the confusion around MySQL versions, the problems an upgrade can surface, such as deprecated parameters or changed syntax, and strategies for upgrading safely: backing up data, testing on a clone, and using tools like Percona Toolkit to analyze differences between versions. It also covers techniques for moving to a newer version gradually, such as real-time mirroring with ProxySQL or black hole relays for multi-version replication.
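One way to spot deprecated or removed parameters before an upgrade is to compare the server variables of the current server with those of a test clone running the newer version. The following is a minimal sketch of that idea, not a method taken from the slides. It assumes each server's variables were dumped as tab-separated `name<TAB>value` lines (for example with `mysql -N -B -e "SHOW GLOBAL VARIABLES"`); the function names and the sample values are hypothetical and only illustrate the comparison.

```python
def parse_variables(dump: str) -> dict[str, str]:
    """Parse tab-separated 'name<TAB>value' lines into a dict."""
    variables: dict[str, str] = {}
    for line in dump.splitlines():
        if not line.strip():
            continue  # skip blank lines
        name, _, value = line.partition("\t")
        variables[name.strip()] = value.strip()
    return variables


def compare_variables(old: dict[str, str], new: dict[str, str]):
    """Return (removed, added, changed) between two variable sets.

    removed: names present only on the old server
    added:   names present only on the new server
    changed: names on both servers whose values differ, as (old, new) pairs
    """
    removed = sorted(set(old) - set(new))
    added = sorted(set(new) - set(old))
    changed = {
        name: (old[name], new[name])
        for name in sorted(set(old) & set(new))
        if old[name] != new[name]
    }
    return removed, added, changed


if __name__ == "__main__":
    # Illustrative sample data, not output captured from real servers.
    old_dump = "query_cache_size\t1048576\nmax_connections\t151\n"
    new_dump = "max_connections\t200\nexample_new_setting\tON\n"

    removed, added, changed = compare_variables(
        parse_variables(old_dump), parse_variables(new_dump)
    )
    print("Only on old server:", removed)
    print("Only on new server:", added)
    print("Different values:", changed)
```

Any name reported as present only on the old server is a candidate to check against the configuration file before the upgrade, since a setting the new version no longer recognizes can prevent the server from starting.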