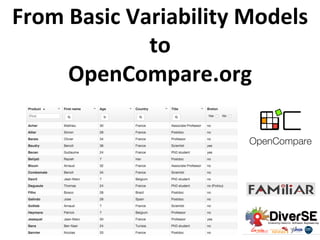

From Basic Variability Models to OpenCompare.org

- 1. From Basic Variability Models to OpenCompare.org

- 3. Architectural/Component Models, Dependencies Files, Web Configurators, Source Code, Regulatory Requirements, Video Variants, Language Units, Informal Product Descriptions (e.g., product overviews), EMF/UML Models

- 4. Variability Models (feature models): Or, Xor, Mandatory, Optional; not, and, or, implies. Variants of code (e.g., Java or C), variants of user interfaces, variants of video sequences, variants of models (e.g., UML or SysML), variants of « things » (3D models), … Mining/Extracting, Encoding/Formalizing, Synthesising

- 6. Agenda (the journey) from basic variability models to opencompare.org • Mining Variability • From Feature Models to Product Comparison Matrices • Synthesis of Feature Models • Synthesis of Attributed Feature Models • Mining Product Comparison Matrices • OpenCompare.org

- 11. « Extraction and Evolution of Architectural Variability Models in Plugin-based Systems » Mathieu Acher, Anthony Cleve, Philippe Collet, Philippe Merle, Laurence Duchien, Philippe Lahire. ECSA/SoSyM’14. Figure: variability model FM1 of FraSCAti (features: SCAParser, Java Compiler, JDK6, JDT, Assembly Factory, rest, http, Binding, Component Factory, Metamodel, MMFrascati, MMTuscany; constraints: rest requires MMFrascati, http requires MMTuscany; legend: Optional, Mandatory, Alternative-Group, Or-Group)

- 12. « Feature Model Extraction from Large Collections of Informal Product Descriptions » Jean-Marc Davril, Edouard Delfosse, Negar Hariri, Mathieu Acher, Jane Cleland-Huang, Patrick Heymans (ESEC/FSE’13)

- 13. “Reverse Engineering Web Configurators” Ebrahim Khalil Abbasi, Mathieu Acher, Patrick Heymans, and Anthony Cleve. In 17th European Conference on Software Maintenance and Reengineering (CSMR'14)

- 14. Guillaume Bécan, Mathieu Acher, Jean-Marc Jézéquel, and Thomas Menguy. On the Variability Secrets of an Online Video Generator (2015). In VaMoS'15. "sq": ["dwlcjv", "1y60t3z", "1lymk", "wqzv0y", "1xxivi2", "1oxnvtu", "lolbe9", "wvo06o", "1u6y5t2", "1eqb8bw", "1j9aij7", "nr7jom", "1jmv11y", "1qgn9dh", "1bv7rka", "19ykyyw", "5znrg7", "116hv1k"]

- 16. Feature Models (de facto standard for modeling variability). Hierarchy: rooted tree. Variability: • mandatory • optional • groups: exclusive or inclusive features • cross-tree constraints. Legend: Optional, Mandatory, Xor-Group, Or-Group

- 17. Hierarchy + Variability = set of valid configurations. Configuration = set of features selected, e.g. {CarEquipment, Comfort, DrivingAndSafety, Healthing, AirConditioning, FrontFogLights}. Legend: Optional, Mandatory, Xor-Group, Or-Group

- 18. Hierarchy + Variability = set of valid configurations. Configuration = set of features selected, e.g. {CarEquipment, Comfort, DrivingAndSafety, Healthing, AirConditioning}. Legend: Optional, Mandatory, Xor-Group, Or-Group

- 19. Hierarchy + Variability = set of valid configurations. Configuration = set of features selected: {CarEquipment, Comfort, DrivingAndSafety, Healthing, AirConditioning, AutomaticHeadLights} ✓ ✓ ✓ ✓ ✓ ✓. Legend: Optional, Mandatory, Xor-Group, Or-Group

- 20. Hierarchy + Variability = set of valid configurations. {AirConditioning, FrontFogLights} {AutomaticHeadLights, AirConditioning, FrontFogLights} {AutomaticHeadLights, FrontFogLights, AirConditioningFrontAndRear} {AirConditioningFrontAndRear} {AirConditioning} {AirConditioningFrontAndRear, FrontFogLights} {CarEquipment, Comfort, DrivingAndSafety, Healthing} X. Legend: Optional, Mandatory, Xor-Group, Or-Group
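
The idea that a feature model denotes a set of valid configurations can be sketched in a few lines of Python. The feature names come from the slides; the constraints (four features always selected, an Xor-group over the two air-conditioning features, and `AutomaticHeadLights` implying `FrontFogLights`) are assumptions chosen so that the enumeration matches the six feature combinations listed on slide 20, read as extensions of {CarEquipment, Comfort, DrivingAndSafety, Healthing}. The original diagram may differ.

```python
from itertools import product

# Feature names are taken from the slides; the constraints are assumptions.
CORE = ["CarEquipment", "Comfort", "DrivingAndSafety", "Healthing"]
VARIABLE = ["AirConditioning", "AirConditioningFrontAndRear",
            "FrontFogLights", "AutomaticHeadLights"]


def is_valid(cfg: frozenset) -> bool:
    """Check a configuration (set of selected features) against the assumed model."""
    # Assumed mandatory part: the root and three mandatory children.
    if not all(f in cfg for f in CORE):
        return False
    # Assumed Xor-group: exactly one of the two air-conditioning features.
    if ("AirConditioning" in cfg) == ("AirConditioningFrontAndRear" in cfg):
        return False
    # Assumed cross-tree constraint: AutomaticHeadLights implies FrontFogLights.
    if "AutomaticHeadLights" in cfg and "FrontFogLights" not in cfg:
        return False
    return True


def valid_configurations() -> set:
    """Enumerate every subset of the variable features and keep the valid ones."""
    configs = set()
    for bits in product([False, True], repeat=len(VARIABLE)):
        cfg = frozenset(CORE) | {f for f, b in zip(VARIABLE, bits) if b}
        if is_valid(cfg):
            configs.add(cfg)
    return configs


if __name__ == "__main__":
    for cfg in sorted(valid_configurations(), key=lambda c: (len(c), sorted(c))):
        print(sorted(cfg - set(CORE)))
```

Brute-force enumeration is only workable for toy models; tools such as FAMILIAR rely on solvers instead.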

- 25. FAMILIAR (FeAture Model scrIpt Language for manIpulation and Automatic Reasoning): importing, exporting, composing, decomposing, editing, configuring, reverse engineering, computing "diffs", refactoring, testing, and reasoning about (multiple) variability models. not, and, or, implies; φ; TVL; DIMACS. http://familiar-project.github.com/ Mathieu Acher, Philippe Collet, Philippe Lahire, Robert B. France « A Domain-Specific Language for Large-Scale Management of Feature Models » Science of Computer Programming (SCP), 2013

- 26. φ (Boolean) Feature Models (Boolean) Product Comparison Matrix (Boolean) Formula
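
The correspondence between a set of configurations, a Boolean product comparison matrix, and a Boolean formula can be illustrated with a small sketch. The feature names `A`, `B`, `C` and the two configurations are hypothetical; the formula is emitted in disjunctive normal form, which is one simple encoding among several possible ones.

```python
def to_boolean_pcm(configs, features):
    """One row per configuration (product), one Boolean cell per feature."""
    return [[f in cfg for f in features] for cfg in configs]


def to_dnf(matrix, features):
    """Each row becomes a conjunction of literals; rows are joined by 'or'."""
    clauses = []
    for row in matrix:
        literals = [f if cell else f"not {f}" for f, cell in zip(features, row)]
        clauses.append("(" + " and ".join(literals) + ")")
    return " or ".join(clauses)


# Hypothetical example data.
features = ["A", "B", "C"]
configs = [{"A", "B"}, {"A", "C"}]

pcm = to_boolean_pcm(configs, features)
formula = to_dnf(pcm, features)

if __name__ == "__main__":
    print(pcm)
    print(formula)
```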

- 28. Variability Models (feature models): Or, Xor, Mandatory, Optional; not, and, or, implies. Mining/Extracting, Encoding/Formalizing, Synthesising

- 29. φ

- 30. For a given configuration set, there are many (maximal) feature diagrams with different ontological semantics [She et al. ICSE’11, Andersen et al. SPLC’12, Acher et al. VaMoS’13]

- 32. Importance of ontological semantics (1)

- 33. Importance of ontological semantics (2)

- 34. Importance of ontological semantics (3): Communication, Comprehension, Forward engineering (e.g., generation)

- 35. Importance of ontological semantics (4): Two product configurators generated from two FMs with the same configuration semantics but different ontological semantics. Good FM: good configuration interface. Bad FM: bad configuration interface.

- 36. Most of the existing approaches neglect either configuration or ontological semantics. We want both!

- 37. Fundamental Problem: Selecting a Spanning Tree of the Binary Implication Graph (BIG)

- 38. Fundamental Problem: Selecting a Spanning Tree of the Binary Implication Graph (BIG). #0 Optimum branching (Tarjan): weighting edges

- 39. Fundamental Problem: Selecting a Spanning Tree of the Binary Implication Graph (BIG). #1 Ranking lists: best parent candidates for each feature

- 40. Fundamental Problem: Selecting a Spanning Tree of the Binary Implication Graph (BIG). #2 Clusters: ~possible siblings

- 41. Fundamental Problem: Selecting a Spanning Tree of the Binary Implication Graph (BIG). #3 Cliques: ~bi-implications

- 42. Fundamental Problem: Selecting a Spanning Tree of the Binary Implication Graph (BIG). #4 Small BIG

- 43. Fundamental Problem: Selecting a Spanning Tree of the Binary Implication Graph (BIG). #4 Reduced BIG: incomplete, but dramatically reduces the problem
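
As a rough illustration of the notions above, the binary implication graph can be computed directly from a set of configurations: an edge from `f` to `g` means that every configuration selecting `f` also selects `g`. The sketch below uses hypothetical features (`Root`, `A`, `B`, `C`) and only derives the graph, the parent candidates, and the bi-implication cliques; it does not implement optimum branching or the BIG reduction mentioned on the slides.

```python
from itertools import permutations


def binary_implication_graph(configs, features):
    """Edge (f, g): every configuration that selects f also selects g.

    Note: a feature that is never selected implies everything (vacuous truth).
    """
    edges = set()
    for f, g in permutations(features, 2):
        if all(g in cfg for cfg in configs if f in cfg):
            edges.add((f, g))
    return edges


def parent_candidates(edges, feature):
    """Features implied by `feature`, i.e. its possible parents in a feature diagram."""
    return sorted(g for f, g in edges if f == feature)


def cliques(edges):
    """Pairs of features that imply each other (bi-implications)."""
    return {frozenset((f, g)) for f, g in edges if (g, f) in edges}


# Hypothetical example data.
features = ["Root", "A", "B", "C"]
configs = [{"Root", "A"}, {"Root", "A", "B"}, {"Root", "A", "B", "C"}]
big = binary_implication_graph(configs, features)

if __name__ == "__main__":
    print(sorted(big))
    print(parent_candidates(big, "C"))
    print(cliques(big))
```

Any spanning tree of this graph rooted at `Root` is a candidate hierarchy, which is why a heuristic is needed to pick a parent among the candidates of each feature.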

- 44. Ontological Heuristics • For optimum branching, computing ranking lists and clusters – ~ « closeness » of features based on their names • Syntactical heuristics – Smith-Waterman (SW) and Levenshtein (L) • WordNet – PathLength (PL) and Wu&Palmer (WP) • Wikipedia Miner offers an API to browse Wikipedia's articles and compute their relatedness (40 GB!) – Wiktionary (Wikt). Milne, D.N., Witten, I.H.: An open-source toolkit for mining Wikipedia. Artif. Intell. 194, 222–239 (2013)
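
A syntactical heuristic of this kind can be sketched as follows: compute the Levenshtein distance between feature names, normalize it to a similarity in [0, 1], and sort parent candidates by decreasing similarity. The normalization and the function names are assumptions made for illustration, not the implementation used in the study.

```python
def levenshtein(a: str, b: str) -> int:
    """Classic dynamic-programming edit distance (insert, delete, substitute)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution
        prev = curr
    return prev[-1]


def similarity(a: str, b: str) -> float:
    """Assumed normalization: 1 minus distance divided by the longer length."""
    a, b = a.lower(), b.lower()
    longest = max(len(a), len(b))
    return 1.0 if longest == 0 else 1.0 - levenshtein(a, b) / longest


def rank_parents(feature: str, candidates: list) -> list:
    """Ranking list: most name-similar parent candidates first."""
    return sorted(candidates, key=lambda c: similarity(feature, c), reverse=True)


if __name__ == "__main__":
    print(rank_parents("AirConditioningFrontAndRear",
                       ["CarEquipment", "AirConditioning", "Comfort"]))
```

Name-based similarity is cheap but blind to meaning, which is presumably why the slides also list WordNet and Wikipedia-based measures.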

- 45. WebFML

- 46. Support and Empirical Study (1). Goal: evidence and empirical insights of what heuristics are effective and what support is needed in WebFML • Dataset – 120+ feature models of SPLOT – 30+ product comparison matrices from Wikipedia (see Becan et al. ASE’14 and ASE’13) – Ground truths are known • Effectiveness of techniques (reduced BIG + ontological heuristics) – One-shot synthesis – Quality of the ranking lists (top 2), clusters – Comparison with randomized and existing techniques

- 47. Support and Empirical Study (2). Default heuristics/support has been determined through an empirical study • Dataset – 120+ feature models of SPLOT – 30+ product comparison matrices from Wikipedia (see Becan et al. ASE’14 and ASE’13) – Ground truths are known • Effectiveness of techniques (reduced BIG + ontological heuristics) – One-shot synthesis – Quality of the ranking lists (top 2), clusters – Comparison with randomized and existing techniques

- 48. Support and Empirical Study (3). Default heuristics/support has been determined through an empirical study • One-step synthesis is far from the ground truth – despite state-of-the-art techniques we have developed – interactive support is thus crucial • State-of-the-art heuristics for ranking lists and clusters • Empirical insights on « cliques » and BIG reduction – e.g., support for unfolding of cliques

- 49. Support and Empirical Study (3). http://tinyurl.com/OntoFMExperiments https://hal.inria.fr/hal-01096969

- 50. Guillaume Bécan, Mathieu Acher, Benoit Baudry, and Sana Ben Nasr. Breathing Ontological Knowledge Into Feature Model Synthesis: An Empirical Study (2015). In Empirical Software Engineering (ESE). WebFML: Synthesizing Feature Models Everywhere (SPLC’14)

- 53. Guillaume Bécan, Razieh Behjati, Arnaud Gotlieb, and Mathieu Acher. Synthesis of Attributed Feature Models From Product Descriptions (2015). In 19th International Software Product Line Conference (SPLC'15) (research track, long paper)

- 58. Jean-Marc Davril, Mathieu Acher, Guillaume Bécan, and Patrick Heymans. Towards Breaking The Curse of Dimensionality in Reverse Engineering Feature Models (2015). In Configuration Workshop. Data Mining, Fuzzy Logic, …

- 63. Choose your BEST laptop... Values? Differences? Commonalities, Alternatives? Features?

- 64. Manual case-by-case review of each product ☹ Manually identifying features

- 65. MatrixMiner: A Red Pill to Architect Informal Product Descriptions in the Matrix (Ben Nasr et al., ESEC/FSE’15 tool) http://matrix-miner.variability.io/

- 66. Guillaume Bécan, Nicolas Sannier, Mathieu Acher, Olivier Barais, Arnaud Blouin, and Benoit Baudry. Automating the Formalization of Product Comparison Matrices (2014). In 29th IEEE/ACM International Conference on Automated Software Engineering (ASE'14)

- 67. Product Comparison Matrix (PCM) • Initial points: VaMoS’12 (VariCell), ASE’13 (we coined the term), VaMoS’14 (comparators) • Metamodel for encoding PCMs and developing services (e.g., editors), cross-validated by users. Guillaume Bécan, Nicolas Sannier, Mathieu Acher, Olivier Barais, Arnaud Blouin, and Benoit Baudry. Automating the Formalization of Product Comparison Matrices (2014). In 29th IEEE/ACM International Conference on Automated Software Engineering (ASE'14)

- 68. Evaluation (Automating the Formalization of Product Comparison Matrices). Experimental settings: • 75 Wikipedia pages • Headers specified manually • Automated extraction of information. Figure: pipeline from a PCM through parsing, preprocessing, and extracting information (each step producing a PCM model, with a PCM metamodel) to exploiting the result in services; annotated with RQ1, RQ2, RQ3

- 69. Evaluation. Experimental settings: • Evaluated by 20 persons (researchers and engineers) • Online editor

- 70. Evaluation. RQ1: How to formalize data contained in PCMs? 95.72% of cells are valid or corrected with concepts from the metamodel. 4.28% are invalid and the evaluators proposed a new concept: • Dates • Dimensions and units • Versions. Solution: • Add corresponding data types to the metamodel • Create new rules for interpreting cells

- 71. Evaluation. RQ2: How to automate the formalization of PCMs? 93.11% of the cells are correctly formalized. The rest can be manually formalized with an editor. Formalization errors arise from 4 main areas: • Overlapping concepts (e.g. what does an empty cell mean?) • Missing concepts (e.g. dates, versions…) • Missing interpretation rules • Bad rules
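
Rule-based interpretation of cells can be pictured as an ordered list of patterns, each mapping raw cell text to a data type. The rule set below is a hypothetical sketch (the type names and regular expressions are assumptions, not the rules of the ASE'14 tooling); it also shows why rule order matters when concepts overlap.

```python
import re

# Hypothetical interpretation rules, tried in order; the first match wins.
RULES = [
    ("empty",   re.compile(r"^$")),
    ("boolean", re.compile(r"^(yes|no)$", re.IGNORECASE)),
    ("date",    re.compile(r"^\d{4}-\d{2}-\d{2}$")),
    # A version needs a 'v' prefix or at least two dots, so "1.5" stays a number.
    ("version", re.compile(r"^(v\d+(\.\d+)*|\d+(\.\d+){2,})$", re.IGNORECASE)),
    ("number",  re.compile(r"^-?\d+(\.\d+)?$")),
]


def interpret(cell: str) -> str:
    """Return the name of the first rule matching the cell, or 'text' as a fallback."""
    text = cell.strip()
    for name, pattern in RULES:
        if pattern.match(text):
            return name
    return "text"


if __name__ == "__main__":
    for raw in ["Yes", "2014-09-15", "2.4.1", "76", "", "GPL"]:
        print(repr(raw), "->", interpret(raw))
```

A missing rule sends the cell to the `text` fallback, and an overly broad rule captures cells meant for another type; these correspond to the "missing interpretation rules" and "bad rules" categories listed on the slide.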

- 72. Evaluation. RQ3: What kind of tools and services can be built on top of formalized PCMs? Editing and formalizing PCMs

- 73. Evaluation. RQ3: What kind of tools and services can be built on top of formalized PCMs? Providing guidance during editing or refactoring: detect inconsistent cells to provide warnings
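
One simple way to detect inconsistent cells, assuming each cell has already been given a type, is to flag the cells whose type differs from the majority type of their column. The `kind` classifier and the majority rule below are hypothetical simplifications for illustration, not the editor's actual logic.

```python
from collections import Counter


def kind(cell: str) -> str:
    """Very rough, hypothetical cell classifier: boolean, number, or text."""
    text = cell.strip().lower()
    if text in ("yes", "no"):
        return "boolean"
    try:
        float(text)
        return "number"
    except ValueError:
        return "text"


def inconsistent_cells(column: list) -> list:
    """Return (row index, cell) pairs whose kind differs from the column's majority kind."""
    if not column:
        return []
    kinds = [kind(c) for c in column]
    majority, _ = Counter(kinds).most_common(1)[0]
    return [(i, c) for i, (c, k) in enumerate(zip(column, kinds)) if k != majority]


if __name__ == "__main__":
    print(inconsistent_cells(["yes", "no", "76", "yes"]))
```

An editor could surface each returned pair as a warning next to the cell rather than rejecting the edit.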

- 74. Evaluation. RQ3: What kind of tools and services can be built on top of formalized PCMs? Comparing products. Translating PCMs to variability models. Metamodel: • Feature/product oriented • Clear semantics

- 75. Let us do it seriously ;-)

- 77. OpenCompare.org: standard format, collaborative editing, import/export of numerous formats, embeddable editor, open source, open data, innovative services (e.g., for visualizing, configuring, filtering and « playing » with comparisons; ways to share, collaborate, and easily create comparisons) (https://github.com/gbecan/OpenCompare) (opencompare.org/api/get/ID)

- 78. Current case study: Wikipedia. Import, Export, Edit. |- ! {{rh}} | [[KickassTorrents]] | {{yes|None}} | {{no}} | {{yes}} | {{no}}<ref>{{cite web|url=http://kickass.so/dmca/|title=KAT DMCA|work=kickasstorrents}}</ref> | {{sort|0000076|76}}<ref>[http://www.alexa.com/siteinfo/kickass.so KickassTorrents] at Alexa</ref> |-
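
To give a feel for what importing such a row involves, the sketch below interprets individual wikitext cells like the ones shown on the slide: it drops `<ref>` footnotes, reads `{{yes}}`/`{{no}}` templates as Booleans, takes the displayed value of `{{sort|key|value}}`, and unwraps `[[links]]`. The output dictionary shape and the function name `parse_cell` are assumptions for illustration; this is not the OpenCompare importer.

```python
import re

REF = re.compile(r"<ref[^>]*>.*?</ref>", re.DOTALL)
TEMPLATE = re.compile(r"^\{\{(\w+)((?:\|[^{}|]*)*)\}\}$")
LINK = re.compile(r"^\[\[([^\]|]+)(?:\|([^\]]+))?\]\]$")


def parse_cell(raw: str) -> dict:
    """Interpret one wikitext table cell (hypothetical, simplified rules)."""
    text = REF.sub("", raw).strip()  # footnotes carry no cell value
    m = TEMPLATE.match(text)
    if m:
        name = m.group(1).lower()
        args = m.group(2).split("|")[1:]  # arguments after the template name
        if name in ("yes", "no"):
            return {"type": "boolean", "value": name == "yes",
                    "note": args[0] if args else None}
        if name == "sort" and len(args) == 2:
            shown = args[1]  # first argument is only the sort key
            if shown.isdigit():
                return {"type": "number", "value": int(shown), "note": None}
            return {"type": "text", "value": shown, "note": None}
    m = LINK.match(text)
    if m:
        return {"type": "text", "value": m.group(2) or m.group(1), "note": None}
    return {"type": "text", "value": text, "note": None}


if __name__ == "__main__":
    cells = [
        "[[KickassTorrents]]",
        "{{yes|None}}",
        "{{no}}<ref>{{cite web|url=http://kickass.so/dmca/|title=KAT DMCA|work=kickasstorrents}}</ref>",
        "{{sort|0000076|76}}<ref>[http://www.alexa.com/siteinfo/kickass.so KickassTorrents] at Alexa</ref>",
    ]
    for cell in cells:
        print(parse_cell(cell))
```

Splitting a whole row into cells is deliberately left out: a naive split on `|` would cut through template arguments such as `{{yes|None}}`.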

- 81. Key Contributions • Mining Variability (see ESEC/FSE’13, SPLC’14, SoSyM’14, VaMoS’12/13/14/15) • From Feature Models to Product Comparison Matrices – Syntax and semantics • Synthesis of Feature Models – Becan et al. (ESE journal, 2015) • Synthesis of Attributed Feature Models – Becan et al. (SPLC’15) • Mining Product Comparison Matrices – Ben Nasr et al. (ESEC/FSE’15, MatrixMiner) – Becan et al. (ASE’14) • OpenCompare.org

- 82. Future Work • Synthesis of Feature Models – scalability, expressiveness, readability, usefulness • Comparison Matrices – Mining everything – Synthesis – Reasoning • The link between the two • OpenCompare.org