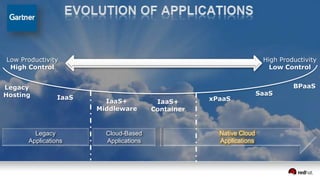

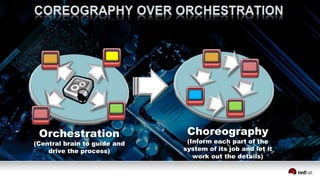

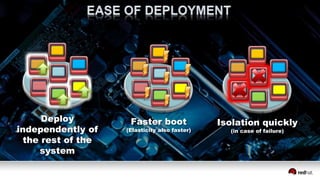

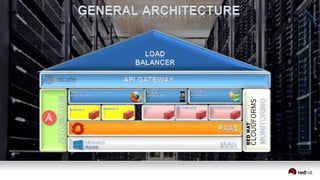

The document discusses the challenges and benefits of developing native cloud applications (NCAs), emphasizing that applications must be designed specifically for cloud environments. It highlights the importance of redundancy, of splitting tasks into separate services, and of fostering a culture of automation and cross-functional collaboration. It also outlines deployment and orchestration strategies that improve the scalability and resilience of application designs.