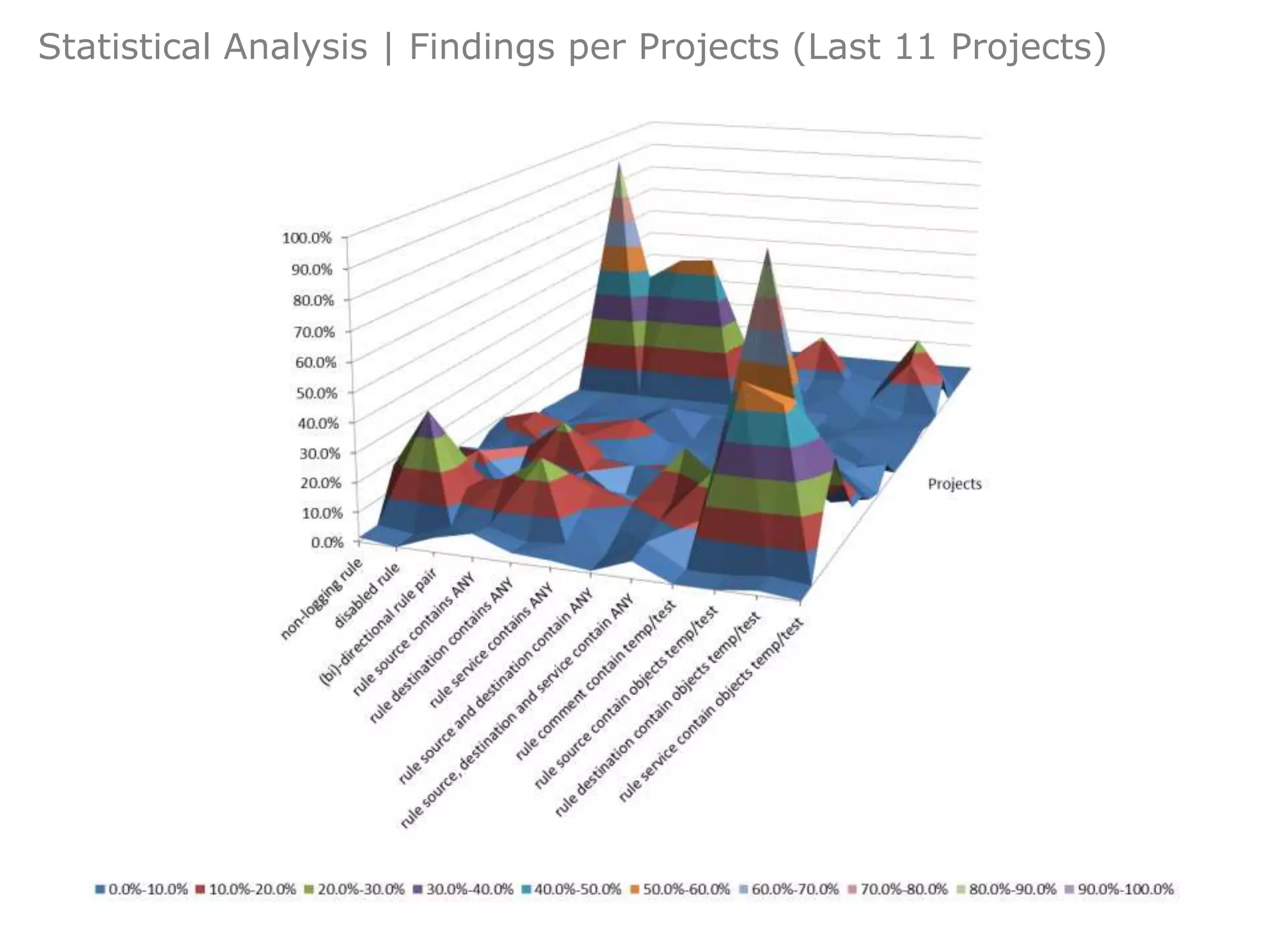

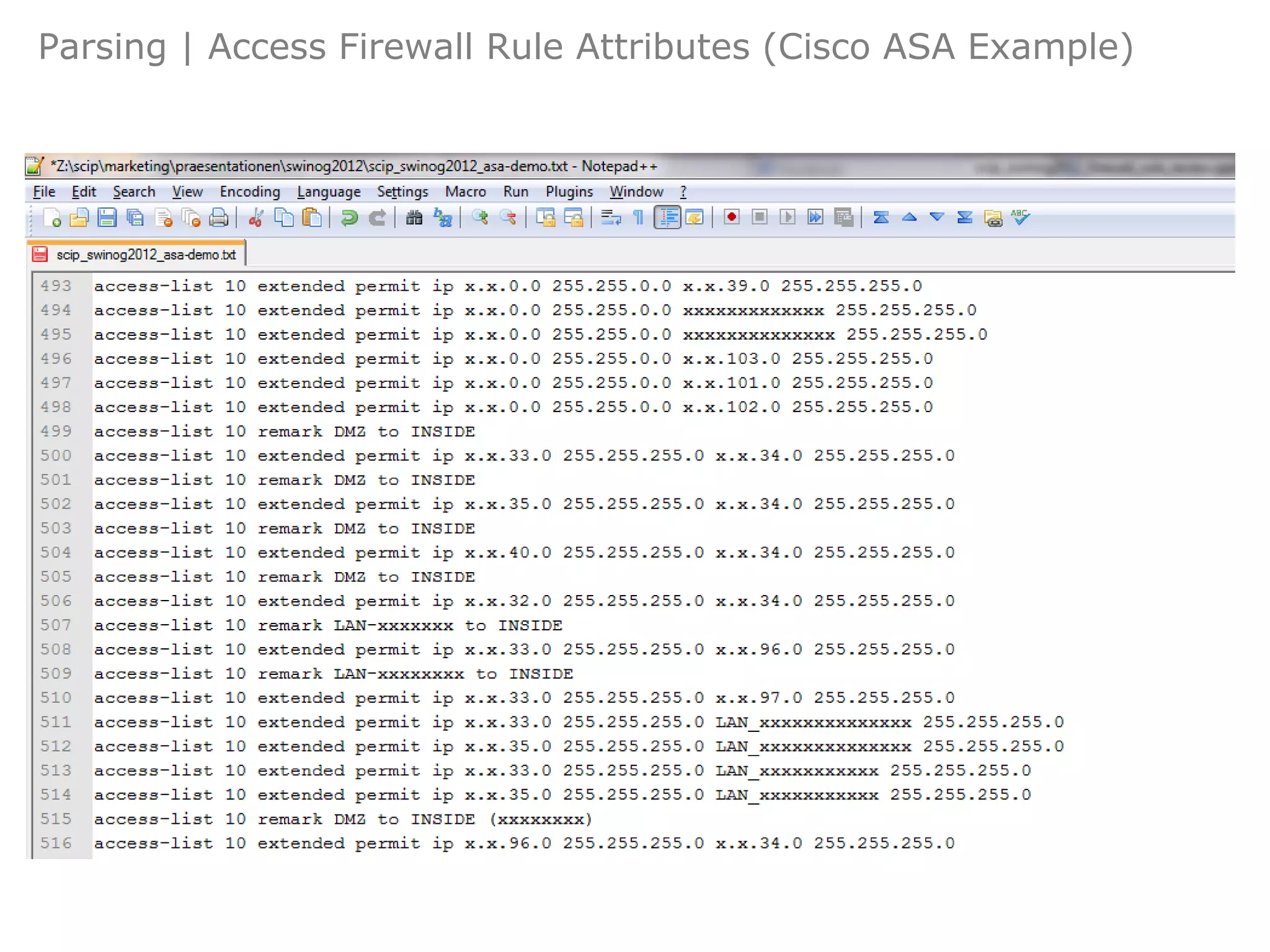

The document discusses firewall rule modeling and review, highlighting approaches for extracting, parsing, and dissecting firewall rulesets to identify weaknesses. It outlines various types of insecure, obsolete, and inefficient rules that can compromise security, along with statistical analysis methods. The overall goal is to improve firewall configuration and security through systematic analysis and review of rulesets.

![Dissection | Access Rule Attributes (SwiNOG 24 11/28)](https://image.slidesharecdn.com/scipswinog2012firewallrulereviewslideshare-120514014303-phpapp02/75/Firewall-Rule-Review-and-Modelling-11-2048.jpg)

- A packet filter rule consists of at least:
  - Source Host/Net [10.0.0.0/8]
  - Source Port [>1023]
  - Destination Host/Net [192.168.0.10/32]
  - Destination Port [80]
  - Protocol [TCP]
  - Action [ALLOW]
- Additional rule attributes might be:
  - ID [42]
  - Active [enabled]
  - Timeframe [01/01/2012 – 12/31/2012]
  - User [testuser2012]
  - Logging [disabled]
  - Priority (QoS) [bandwidth percent 30]
  - ...
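To make the attribute list concrete, here is a minimal Python sketch of how such a rule might be modelled for automated review. The class names (`PortSpec`, `Rule`), the field names and the port-notation parser are assumptions made for illustration; they are not taken from any particular firewall's export format, and only some of the optional attributes are modelled.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network
from typing import Optional


@dataclass
class PortSpec:
    """Inclusive port range; low=None means "any port" (the '*' wildcard)."""
    low: Optional[int] = None
    high: Optional[int] = None

    @classmethod
    def parse(cls, text: str) -> "PortSpec":
        """Parse the notations used on the slides: '*', '>1023', '1024-50000', '80'."""
        text = text.strip()
        if text == "*":
            return cls()
        if text.startswith(">"):
            return cls(int(text[1:]) + 1, 65535)  # '>1023' means 1024..65535
        if "-" in text:
            low, high = text.split("-", 1)
            return cls(int(low), int(high))
        port = int(text)
        return cls(port, port)

    def matches(self, port: int) -> bool:
        return self.low is None or self.low <= port <= self.high


def _in_net(ip: str, net: str) -> bool:
    """True if ip lies in net; '*' matches every address."""
    return net == "*" or ip_address(ip) in ip_network(net, strict=False)


@dataclass
class Rule:
    # Minimum attributes of a packet filter rule
    src_net: str
    src_port: PortSpec
    dst_net: str
    dst_port: PortSpec
    protocol: str
    action: str
    # A subset of the optional attributes (timeframe, user, QoS omitted)
    rule_id: Optional[int] = None
    active: bool = True
    logging: bool = False

    def matches(self, src_ip: str, src_port: int,
                dst_ip: str, dst_port: int, protocol: str) -> bool:
        """Return True if this rule is active and applies to the given packet."""
        return (self.active
                and protocol.upper() == self.protocol.upper()
                and _in_net(src_ip, self.src_net)
                and self.src_port.matches(src_port)
                and _in_net(dst_ip, self.dst_net)
                and self.dst_port.matches(dst_port))


# The example rule from the slide: ID 42, 10.0.0.0/8:>1023 -> 192.168.0.10/32:80/TCP
rule = Rule("10.0.0.0/8", PortSpec.parse(">1023"),
            "192.168.0.10/32", PortSpec.parse("80"),
            "TCP", "ALLOW", rule_id=42, active=True, logging=False)
```

Storing ports as numeric ranges rather than raw strings turns later review checks, such as spotting `*` wildcards or overly wide ranges, into simple comparisons.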

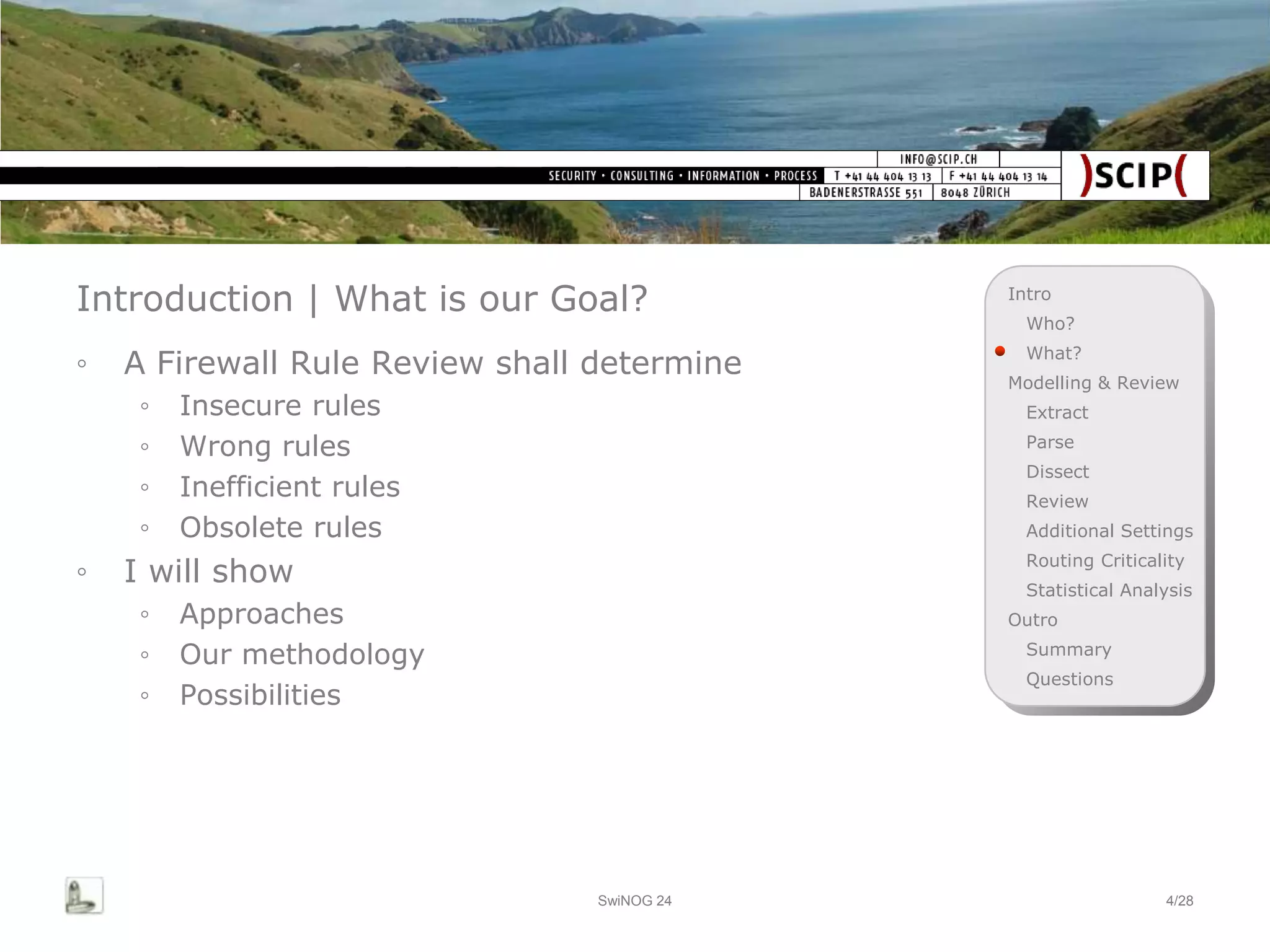

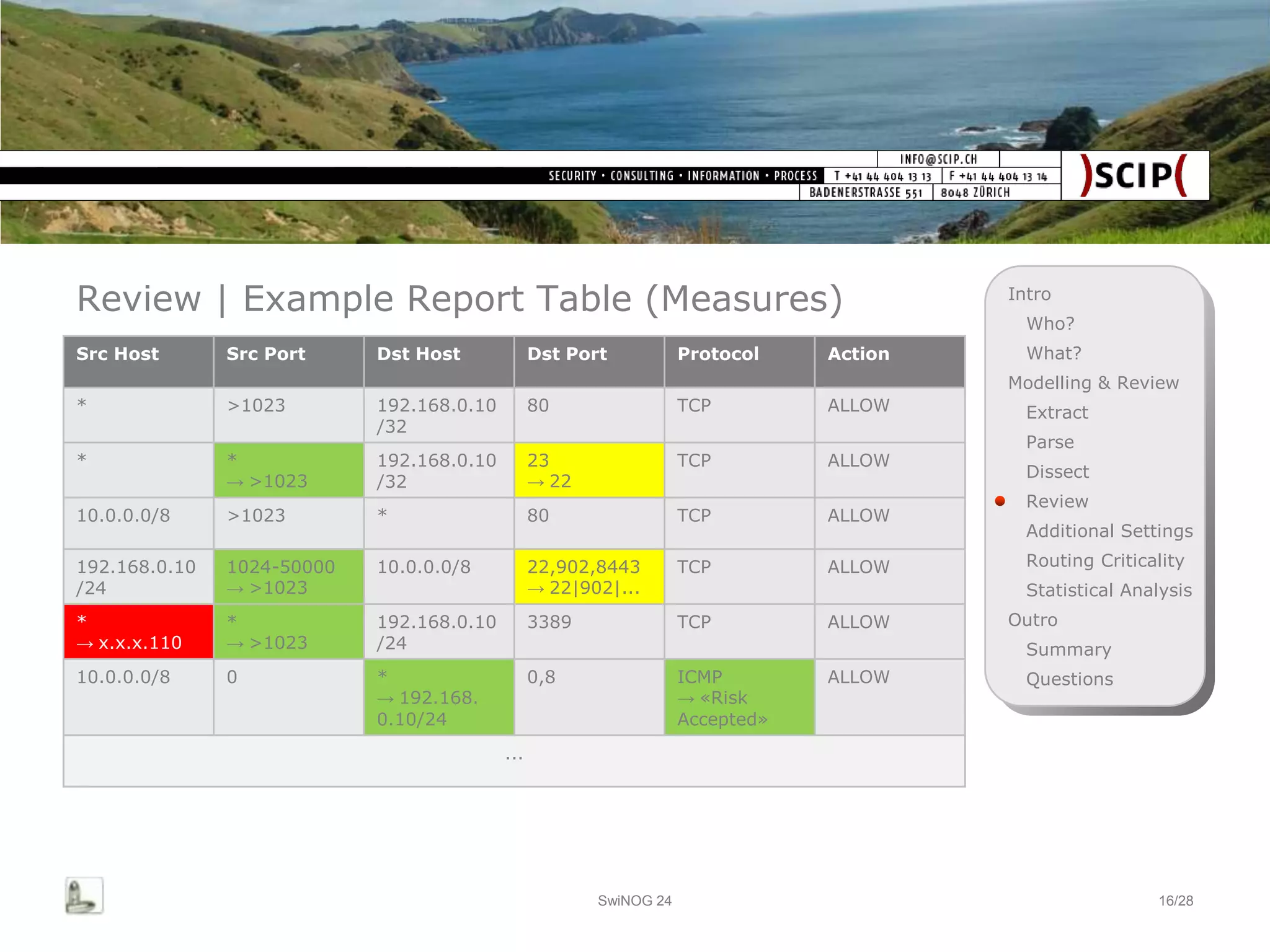

![Review | Example Report Table (Findings)](https://image.slidesharecdn.com/scipswinog2012firewallrulereviewslideshare-120514014303-phpapp02/75/Firewall-Rule-Review-and-Modelling-15-2048.jpg)

| Src Host | Src Port | Dst Host | Dst Port | Protocol | Action | Findings |
|---|---|---|---|---|---|---|
| * | >1023 | 192.168.0.10/32 | 80 | TCP | ALLOW | |
| * | * | 192.168.0.10/32 | 23 | TCP | ALLOW | [ANY Rule] [Insecure] |
| 10.0.0.0/8 | >1023 | * | 80 | TCP | ALLOW | |
| 192.168.0.10/24 | 1024-50000 | 10.0.0.0/8 | 22,902,8443 | TCP | ALLOW | [Inadequate] [Mash-Up] |
| * | * | 192.168.0.10/24 | 3389 | TCP | ALLOW | [ANY Rule] [ANY Rule] |
| 10.0.0.0/8 | 0 | * | 0,8 | ICMP | ALLOW | [ANY Rule] [Insecure] |
| ... | | | | | | |
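Finding tags like those in the example report can be produced automatically. The Python sketch below flags rules using hypothetical heuristics: the slide does not define the tags, so the criteria here ([ANY Rule] = a `*` wildcard in an address or port field, [Insecure] = an allowed cleartext service from a hypothetical `INSECURE_SERVICES` list, [Mash-Up] = several destination ports bundled into one TCP/UDP rule) are assumptions. The sketch does not reproduce every tag in the table; [Inadequate], for instance, is not modelled.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical list of cleartext services treated as [Insecure];
# a real review would use the organisation's own policy.
INSECURE_SERVICES = {("TCP", "21"): "FTP", ("TCP", "23"): "Telnet"}


@dataclass
class RuleRow:
    """One row of the report table, kept as the strings shown there."""
    src_host: str
    src_port: str
    dst_host: str
    dst_port: str
    protocol: str
    action: str


def findings(row: RuleRow) -> List[str]:
    """Return the finding tags for one rule (assumed heuristics)."""
    tags = []
    # [ANY Rule]: a '*' wildcard in any address or port field (assumption)
    if "*" in (row.src_host, row.src_port, row.dst_host, row.dst_port):
        tags.append("ANY Rule")
    ports = [p.strip() for p in row.dst_port.split(",")]
    # [Insecure]: an ALLOW rule for a listed cleartext service (assumption)
    if row.action == "ALLOW" and any((row.protocol, p) in INSECURE_SERVICES for p in ports):
        tags.append("Insecure")
    # [Mash-Up]: several destination ports bundled in one TCP/UDP rule (assumption)
    if row.protocol in ("TCP", "UDP") and len(ports) > 1:
        tags.append("Mash-Up")
    return tags


if __name__ == "__main__":
    rows = [
        RuleRow("*", "*", "192.168.0.10/32", "23", "TCP", "ALLOW"),
        RuleRow("192.168.0.10/24", "1024-50000", "10.0.0.0/8", "22,902,8443", "TCP", "ALLOW"),
    ]
    for r in rows:
        tags = ", ".join(f"[{t}]" for t in findings(r)) or "-"
        print(r.src_host, r.src_port, r.dst_host, r.dst_port, r.protocol, r.action, tags)
```

Keeping each heuristic as a separate, commented check makes it easy to swap in an organisation's own definitions of what counts as insecure or bundled.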

![Additional Settings | Global Settings](https://image.slidesharecdn.com/scipswinog2012firewallrulereviewslideshare-120514014303-phpapp02/75/Firewall-Rule-Review-and-Modelling-18-2048.jpg)

- Some firewalls, especially proxies, introduce additional (global) settings that might affect the rules. Example from McAfee Web Gateway:
  - Antivirus
    - Enabled [1=enabled]
    - HeuristicWWScan [0=disabled]
    - AutoUpdate [0=disabled]
  - Caching
    - Enabled [1=enabled]
    - CacheSize [536870912]
    - MaxObjectSize [8192]
  - HTTP Proxy Settings
    - Enabled [1=enabled]
    - AddViaHeader [1=enabled]
    - ClientIpHeader ['X-Forwarded-For']
  - ...
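Global settings can be reviewed the same way as rules, by comparing each value against an expected baseline. The sketch below copies the McAfee Web Gateway values from the slide into a nested Python dict; this layout is an assumed representation, not the product's actual export format, and the expected values in the hypothetical `EXPECTED` list are an illustrative review policy rather than a vendor recommendation.

```python
from typing import Dict, List, Tuple, Union

Value = Union[int, str]

# Values as shown on the slide, in an assumed nested-dict layout.
SETTINGS: Dict[str, Dict[str, Value]] = {
    "Antivirus": {"Enabled": 1, "HeuristicWWScan": 0, "AutoUpdate": 0},
    "Caching": {"Enabled": 1, "CacheSize": 536870912, "MaxObjectSize": 8192},
    "HTTP Proxy Settings": {"Enabled": 1, "AddViaHeader": 1,
                            "ClientIpHeader": "X-Forwarded-For"},
}

# Hypothetical review policy: (section, key, expected value, reason).
EXPECTED: List[Tuple[str, str, Value, str]] = [
    ("Antivirus", "Enabled", 1, "malware scanning would be off"),
    ("Antivirus", "AutoUpdate", 1, "signatures are not updated automatically"),
    ("Antivirus", "HeuristicWWScan", 1, "heuristic scanning is off"),
    ("HTTP Proxy Settings", "AddViaHeader", 0, "Via header discloses the proxy to remote servers"),
]


def review(settings: Dict[str, Dict[str, Value]],
           expected: List[Tuple[str, str, Value, str]]) -> List[str]:
    """Return one message per setting that deviates from the expected value."""
    issues = []
    for section, key, want, reason in expected:
        have = settings.get(section, {}).get(key)  # None if the setting is missing
        if have != want:
            issues.append(f"{section}.{key} = {have!r} (expected {want!r}): {reason}")
    return issues


if __name__ == "__main__":
    for issue in review(SETTINGS, EXPECTED):
        print(issue)
```

A missing setting is reported as `None` rather than silently skipped, so gaps in an export show up in the review output as well.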