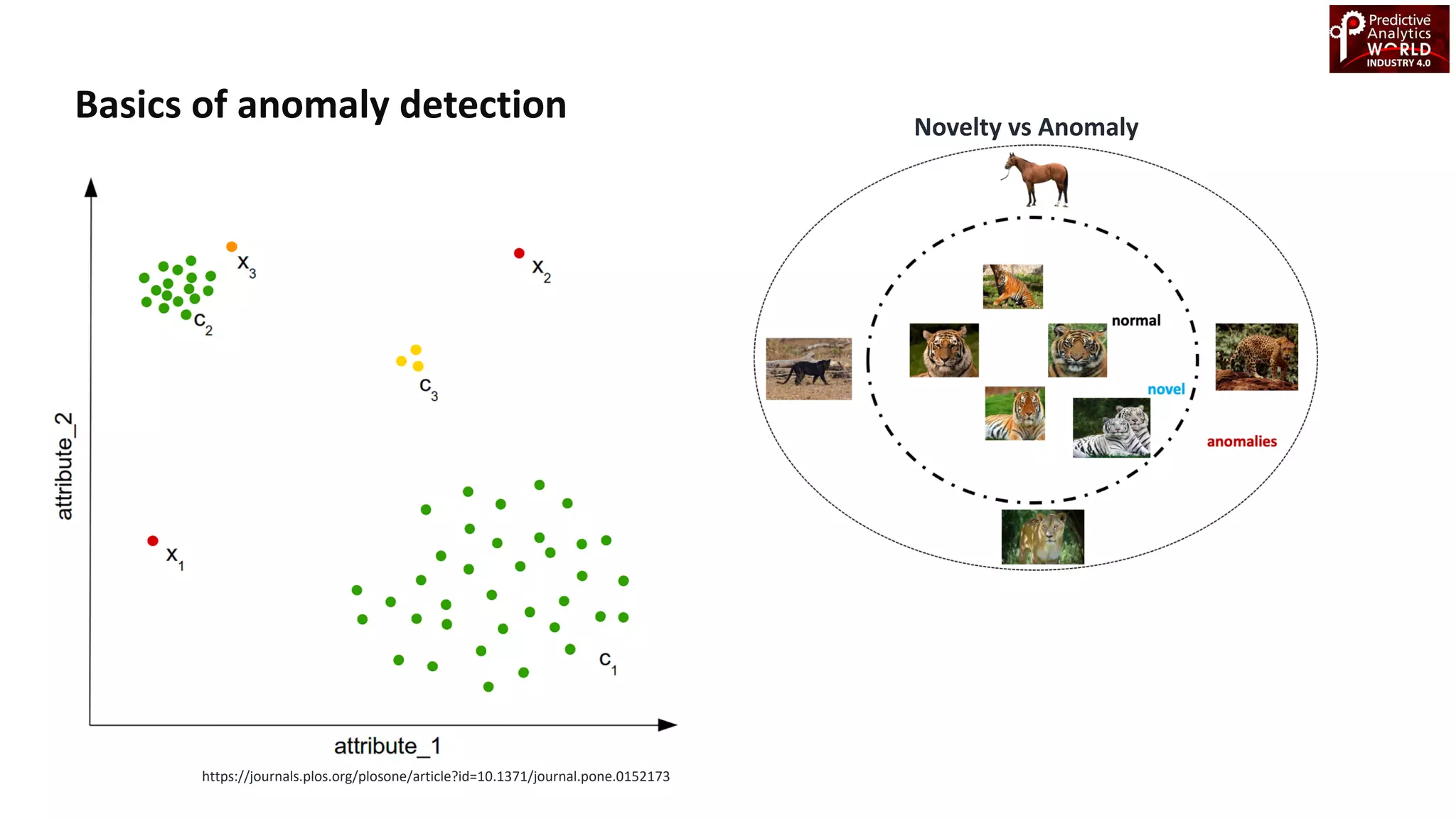

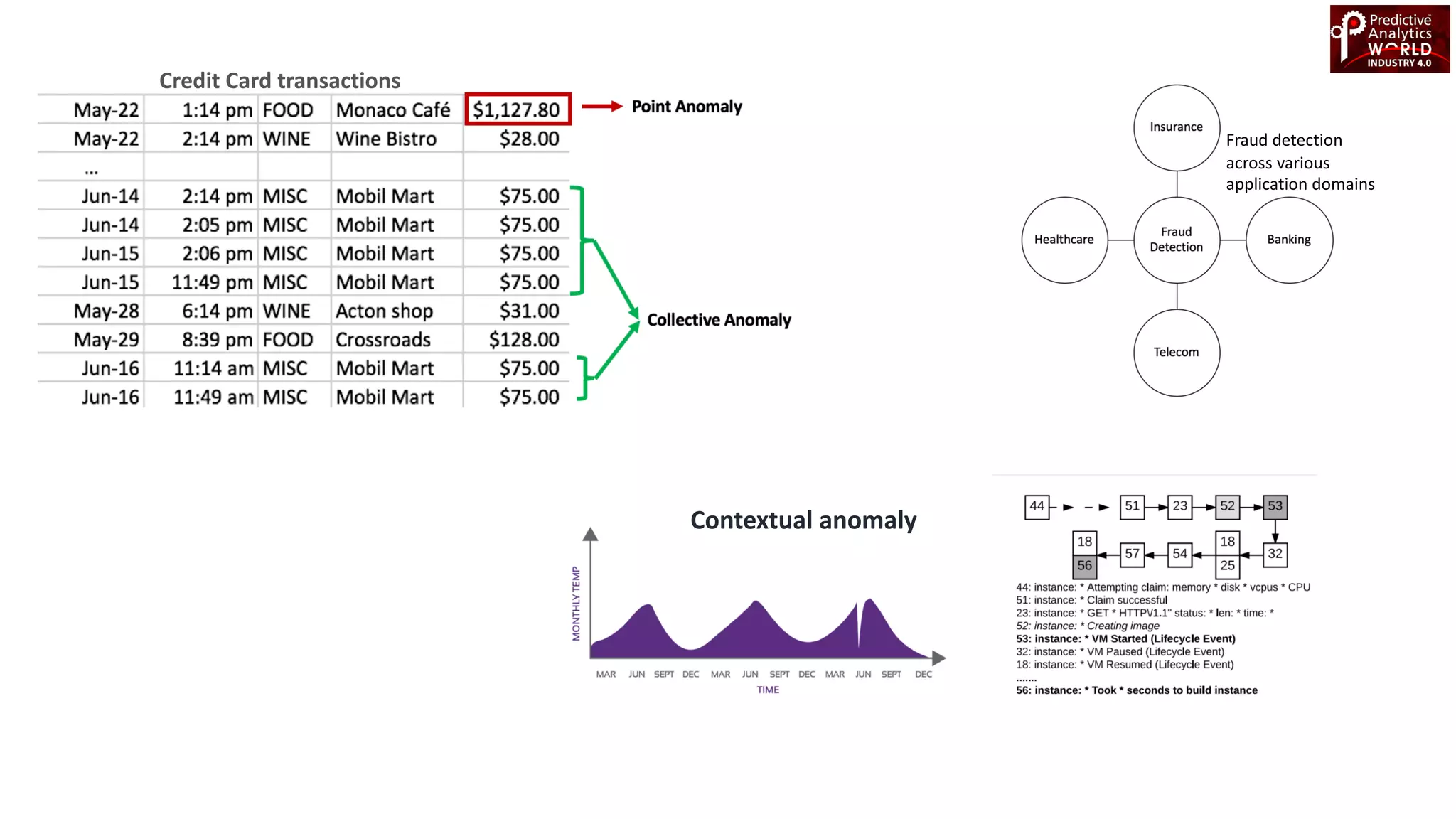

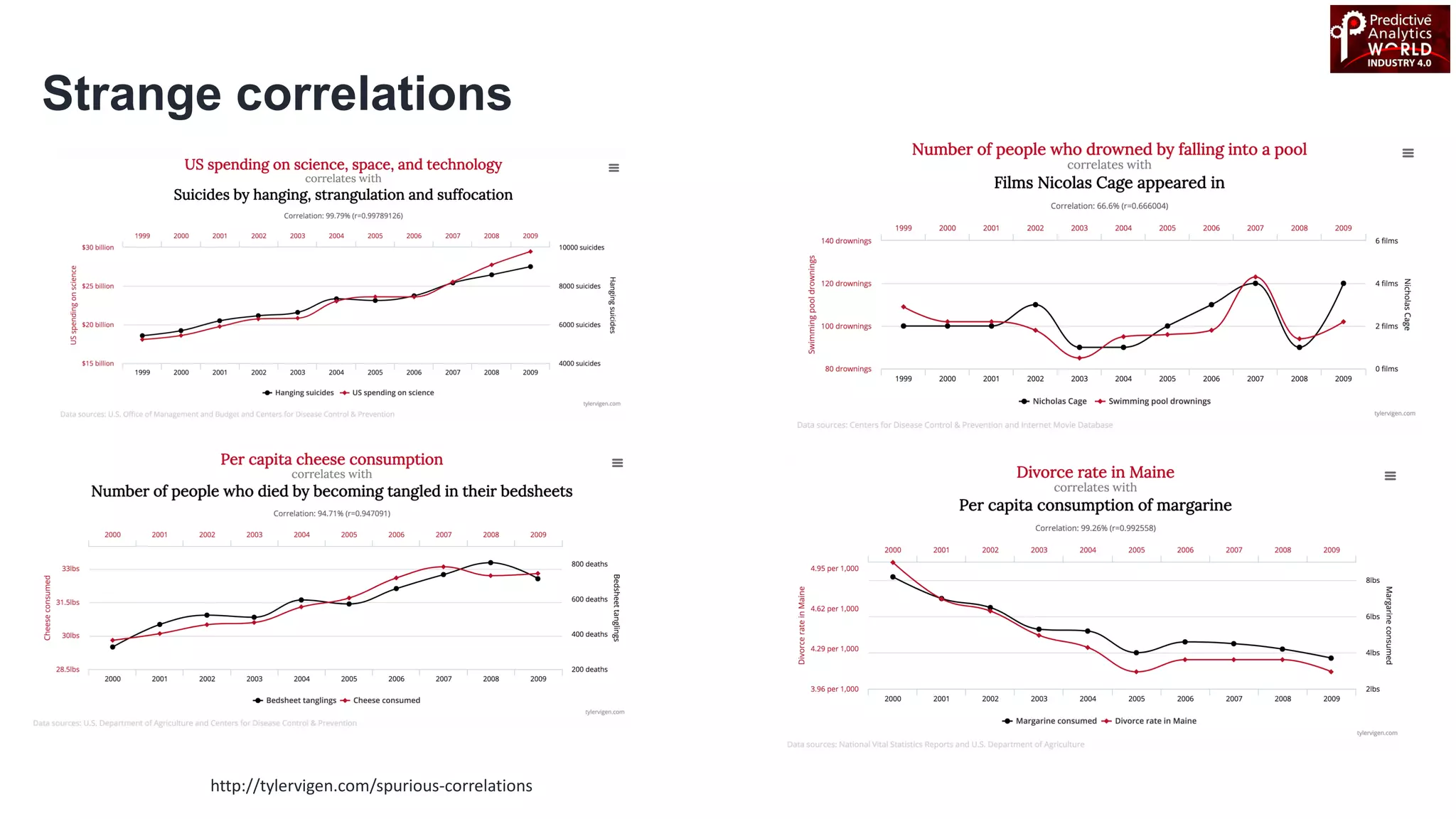

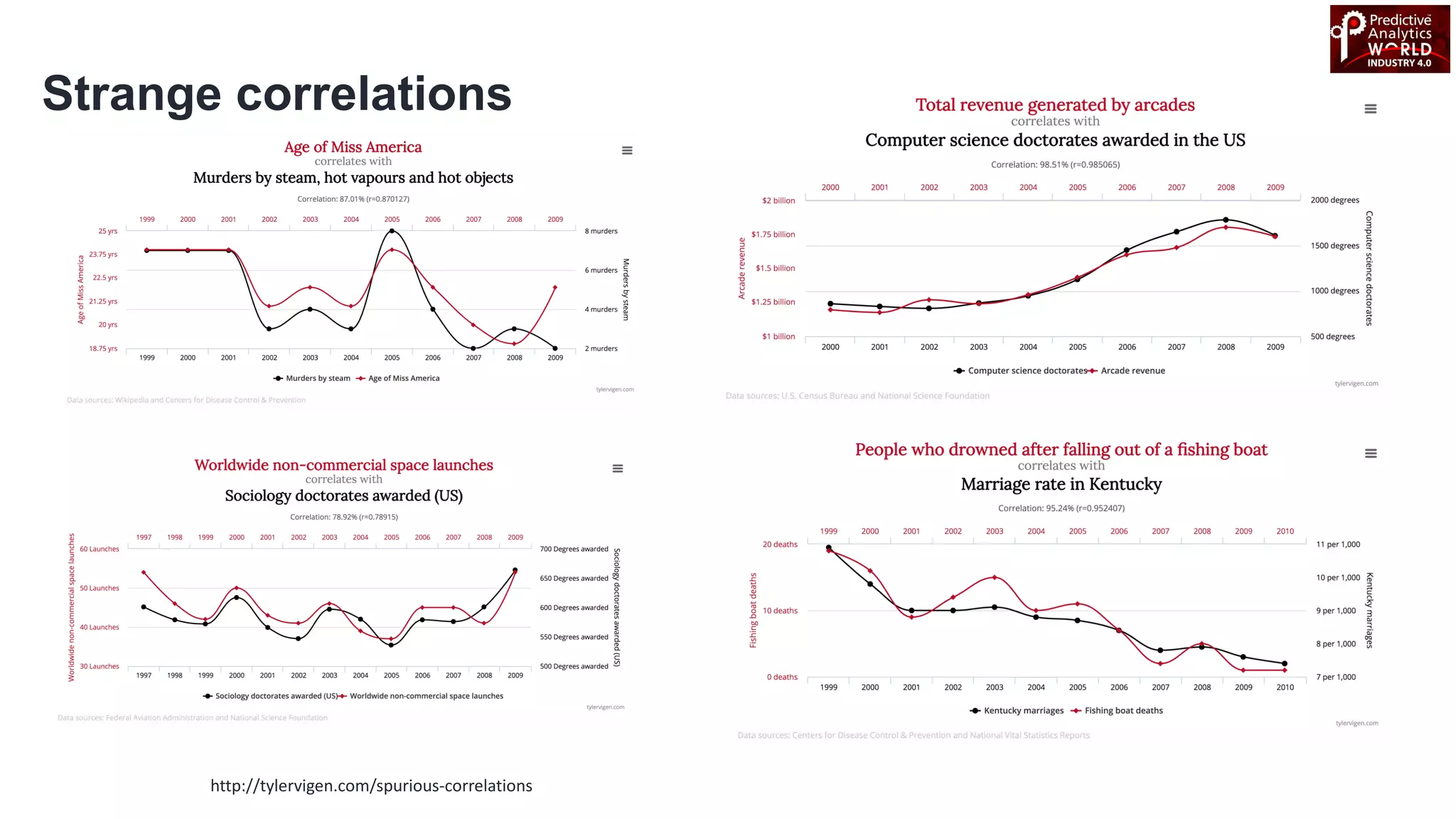

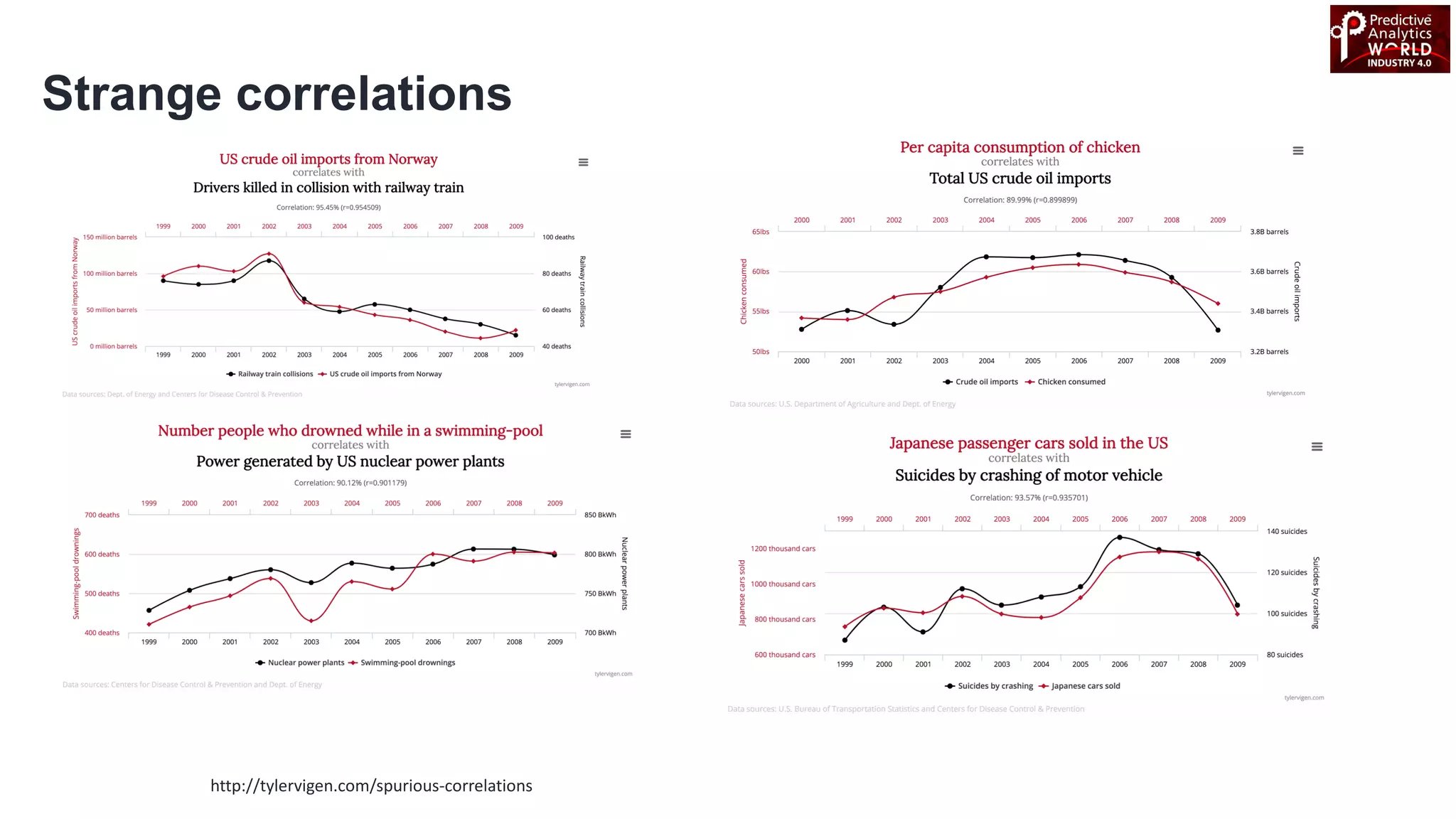

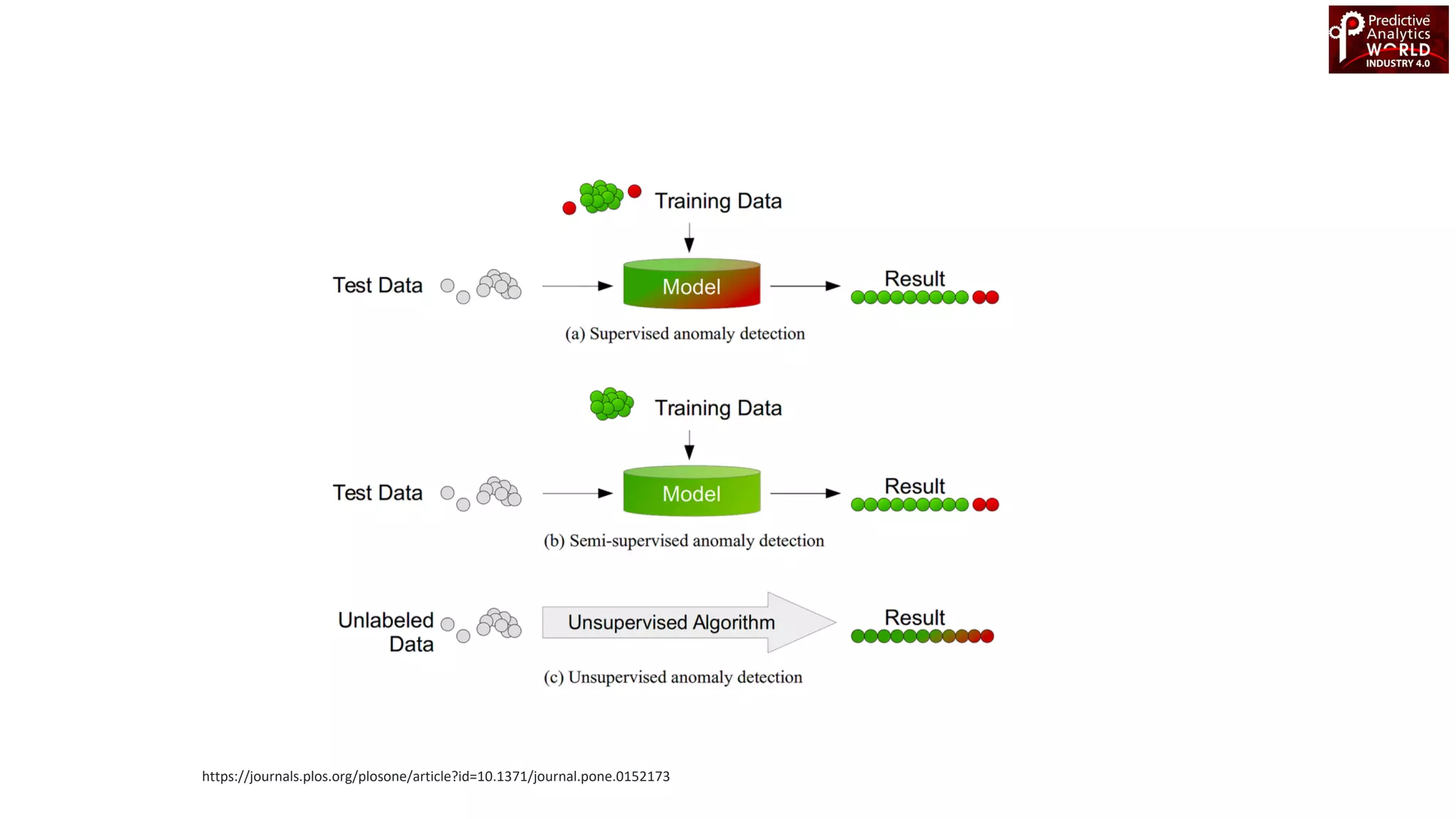

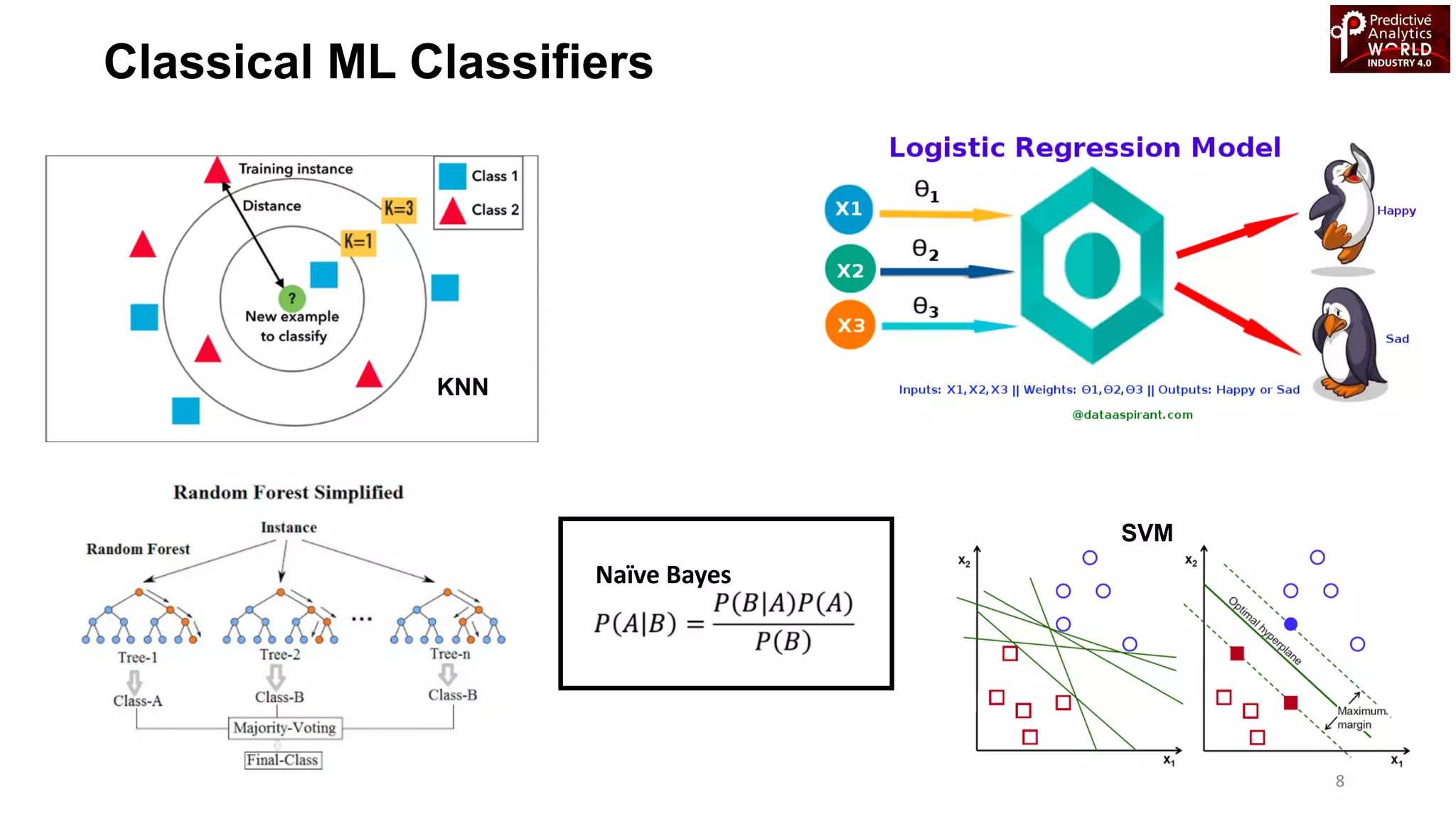

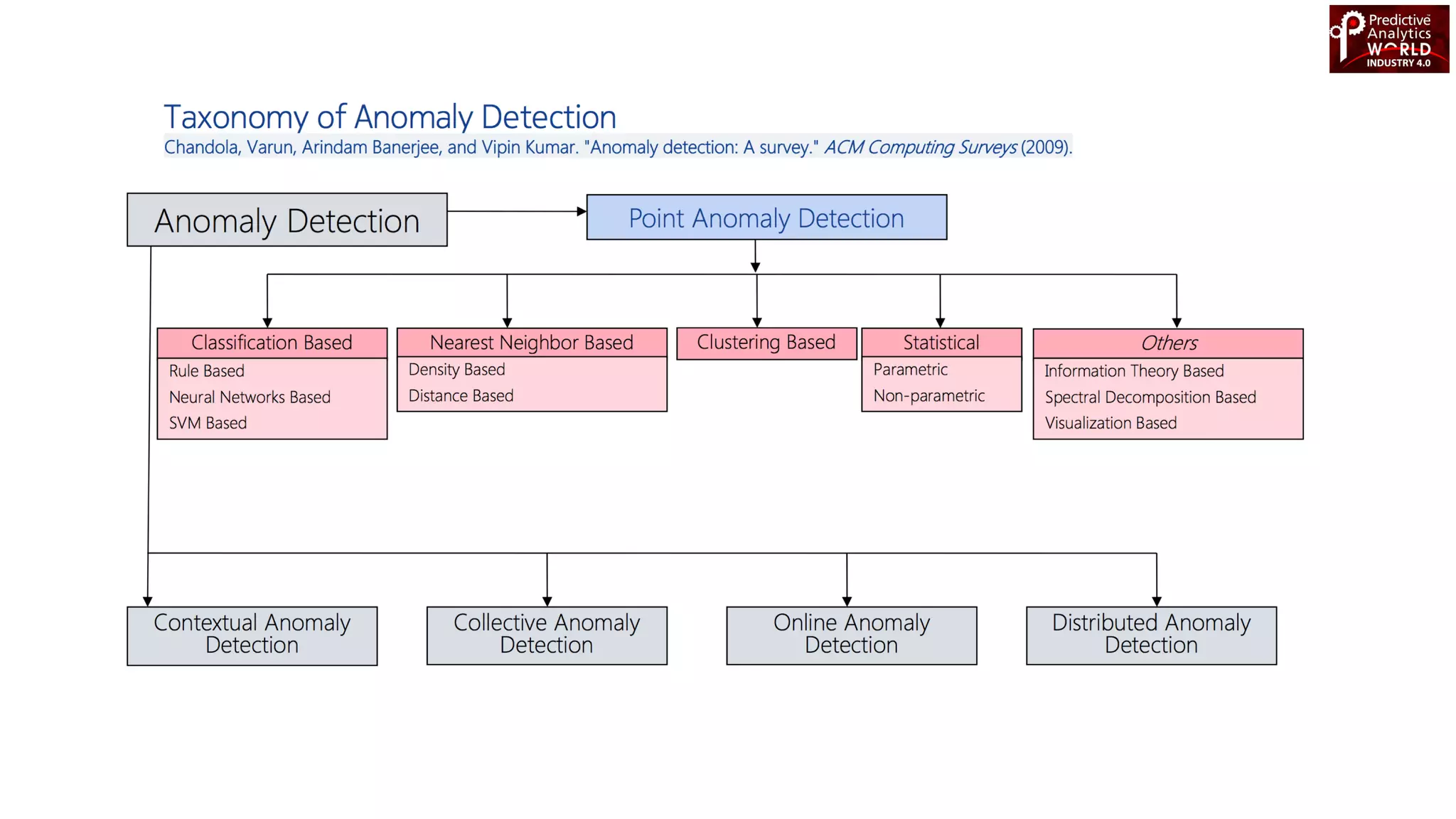

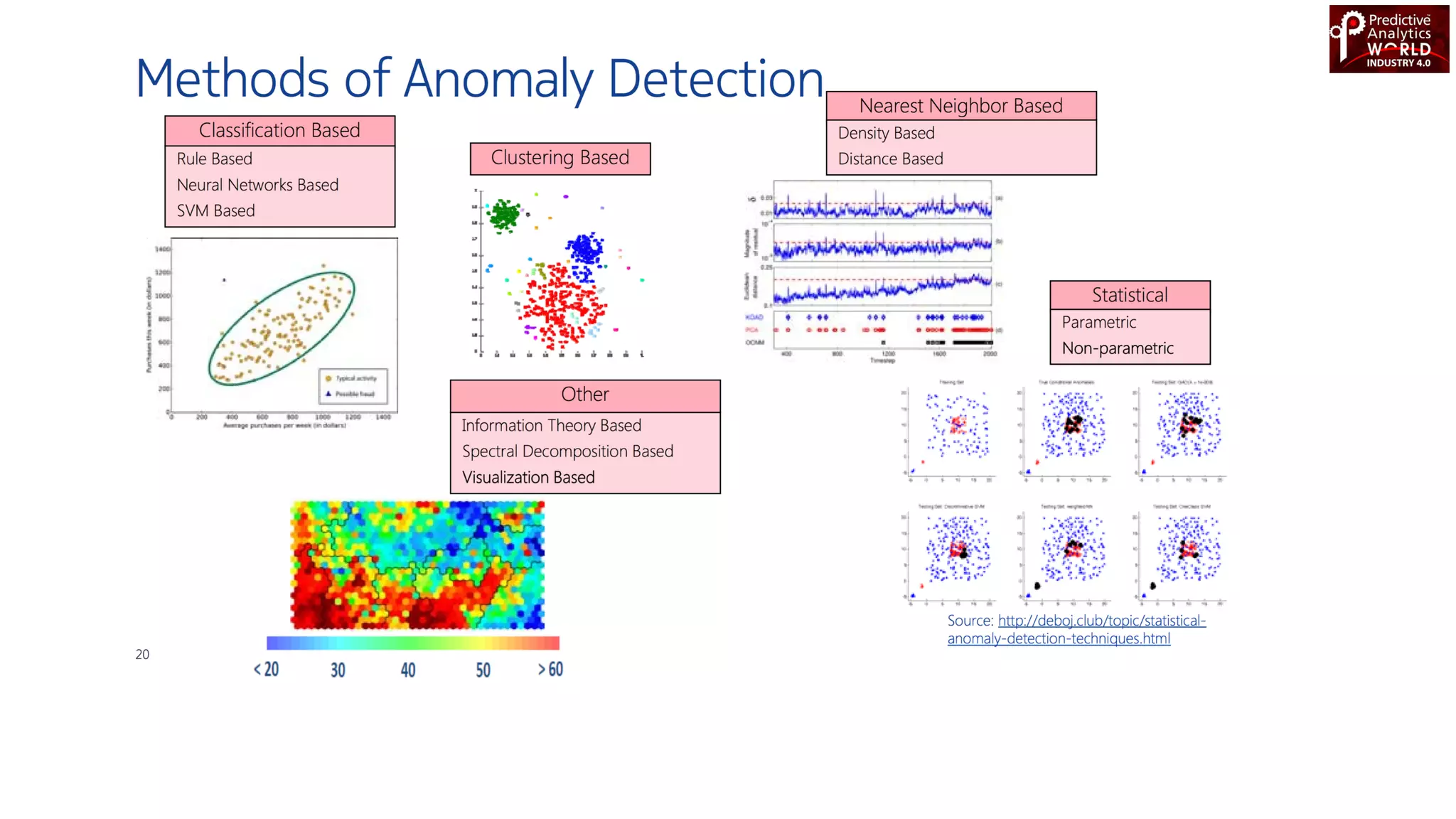

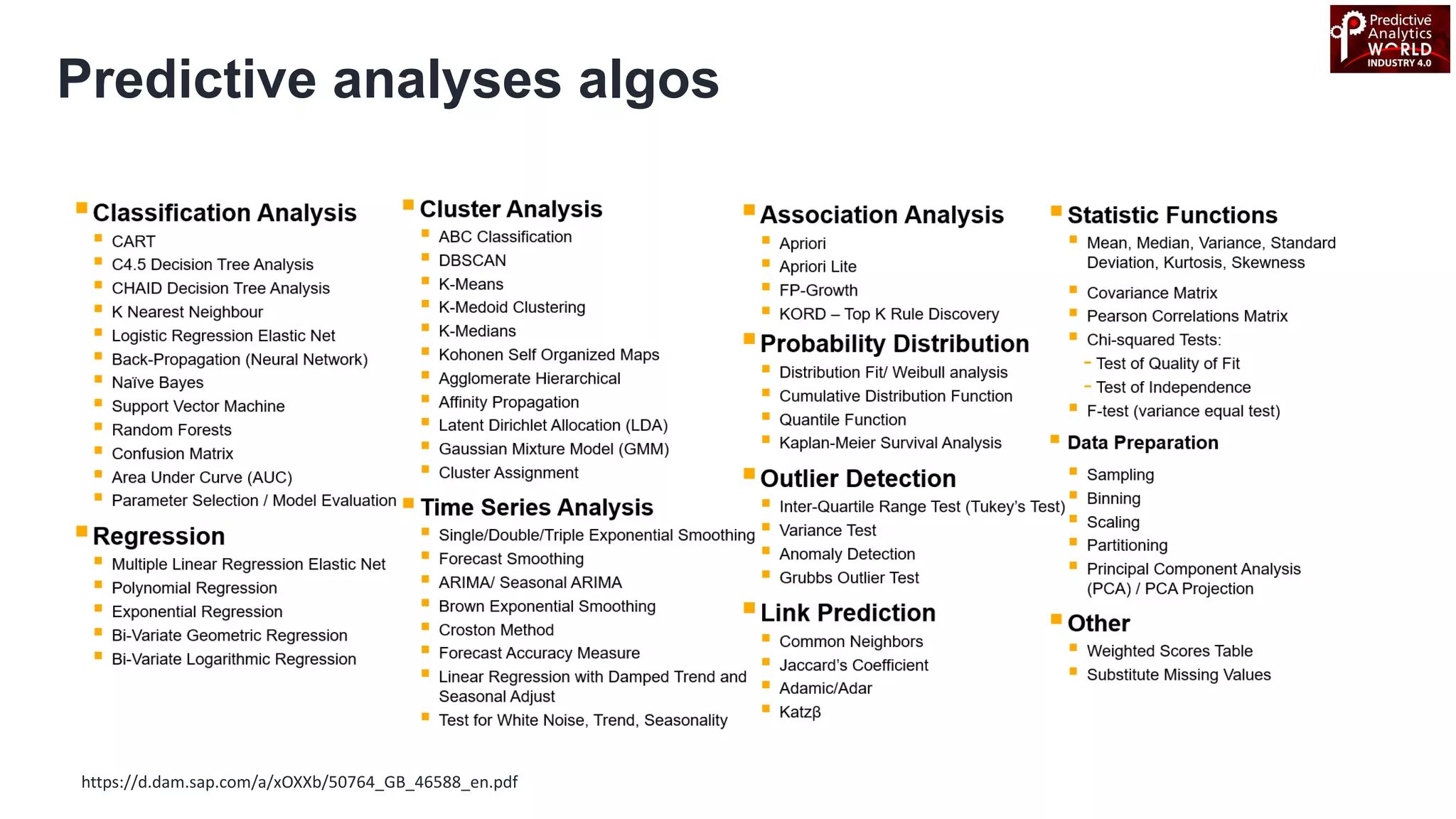

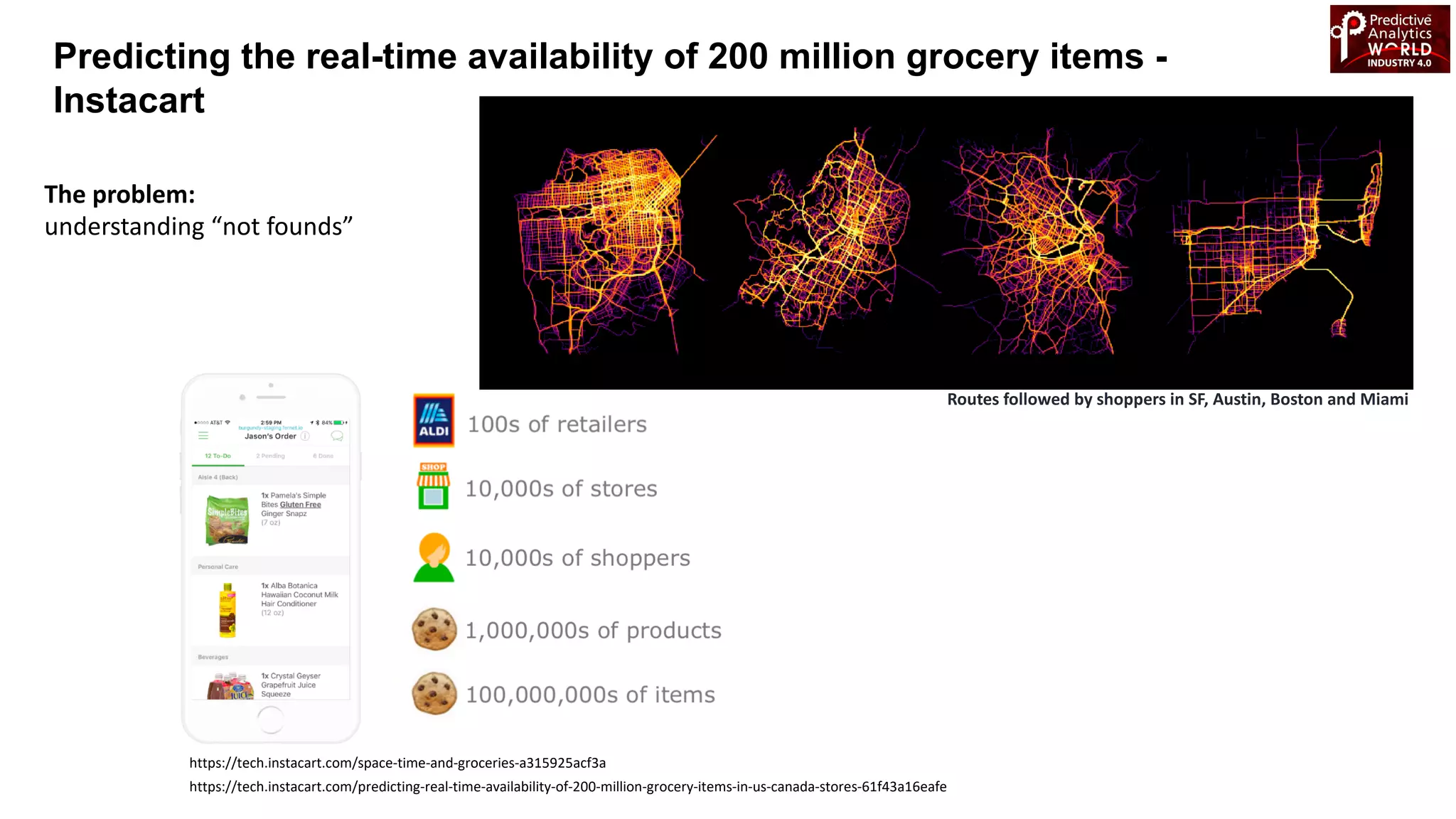

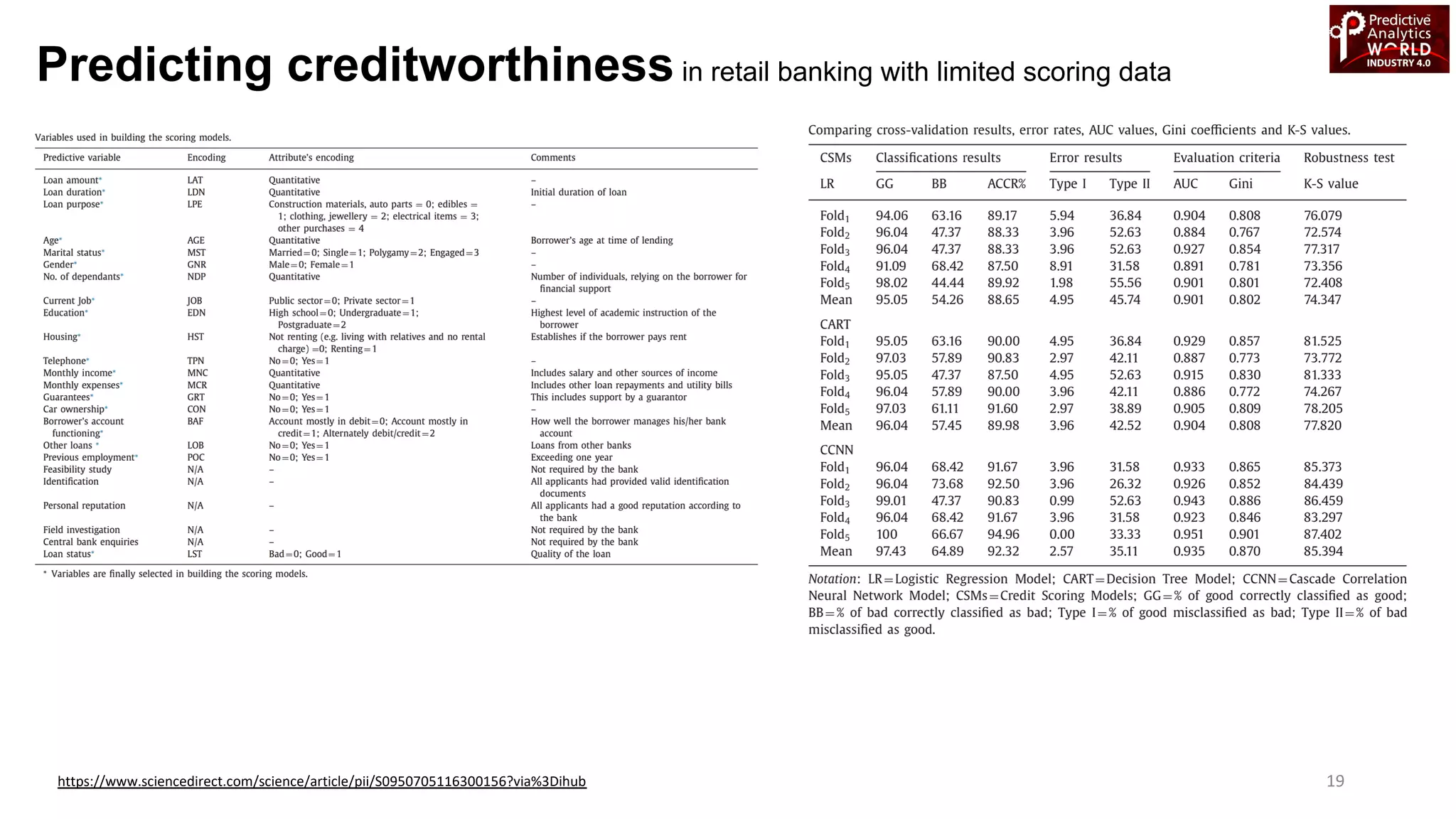

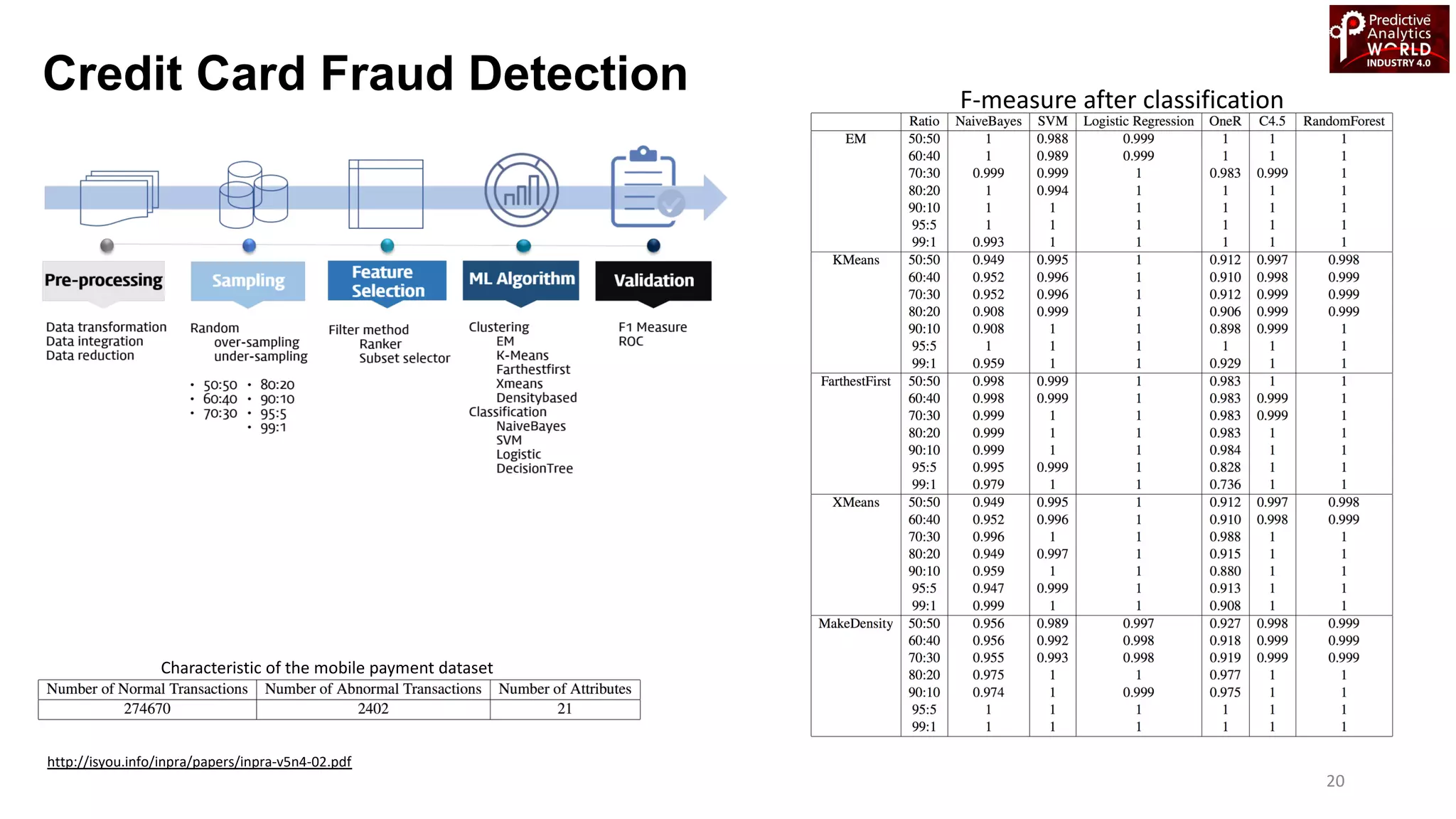

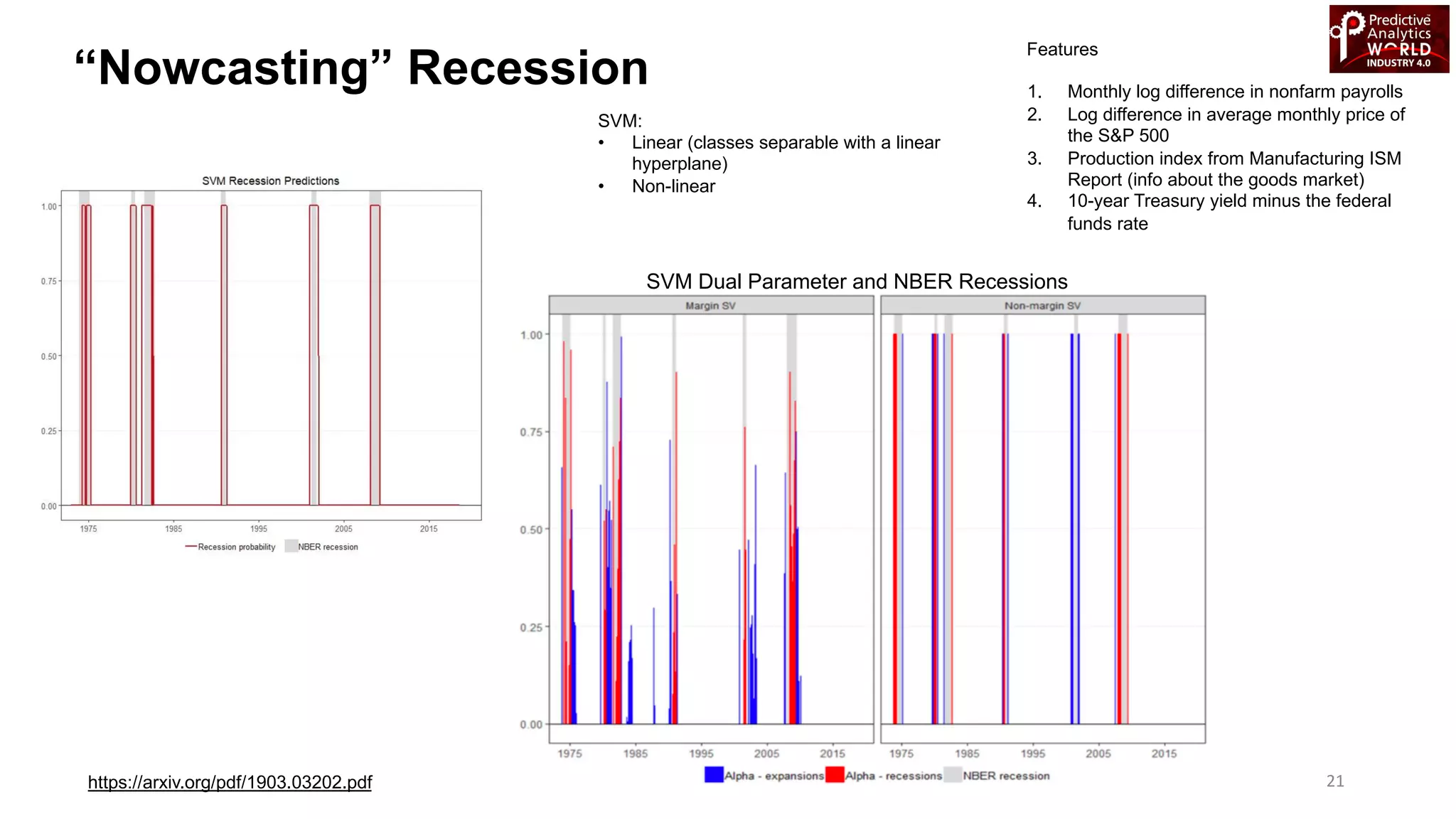

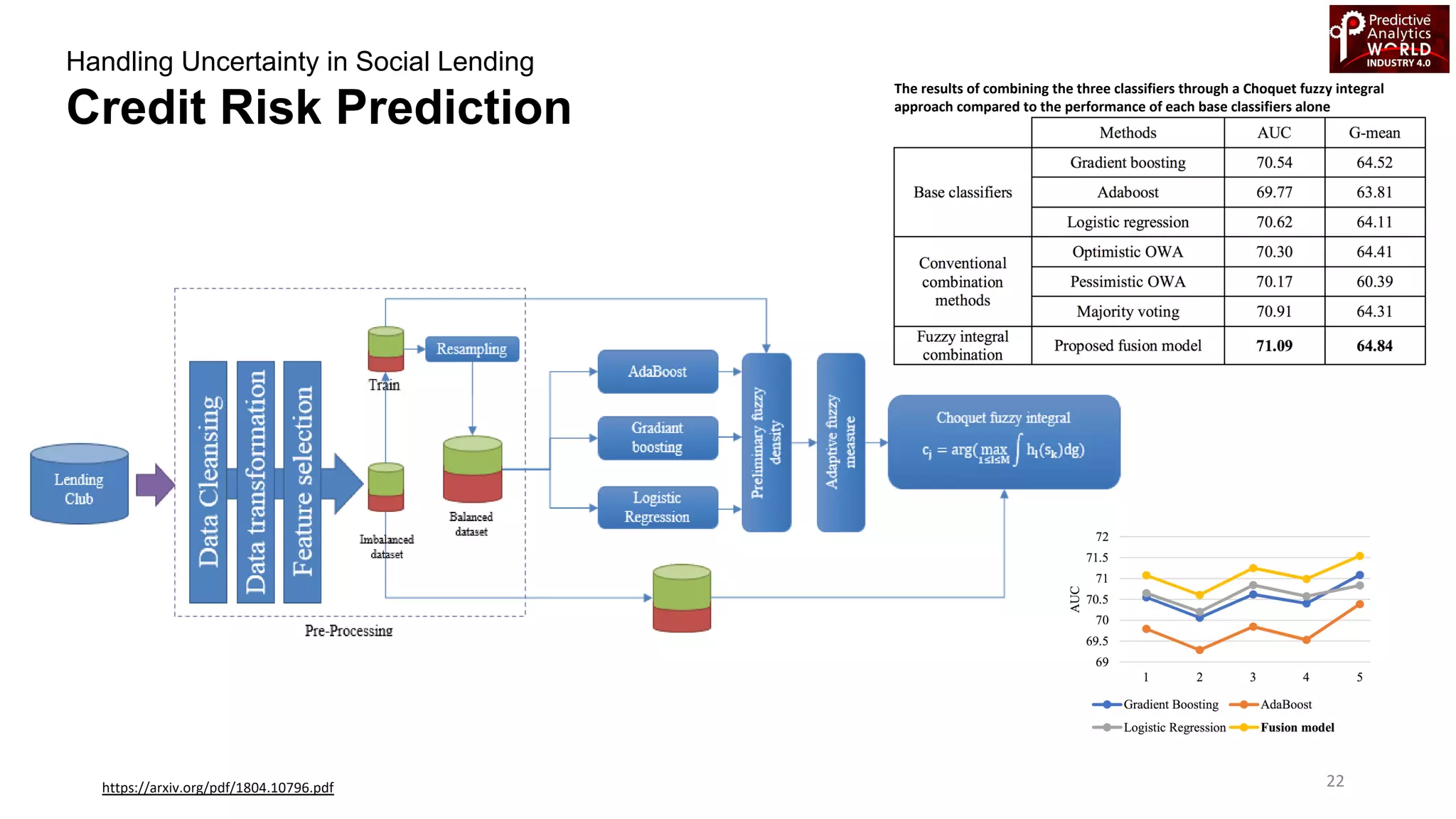

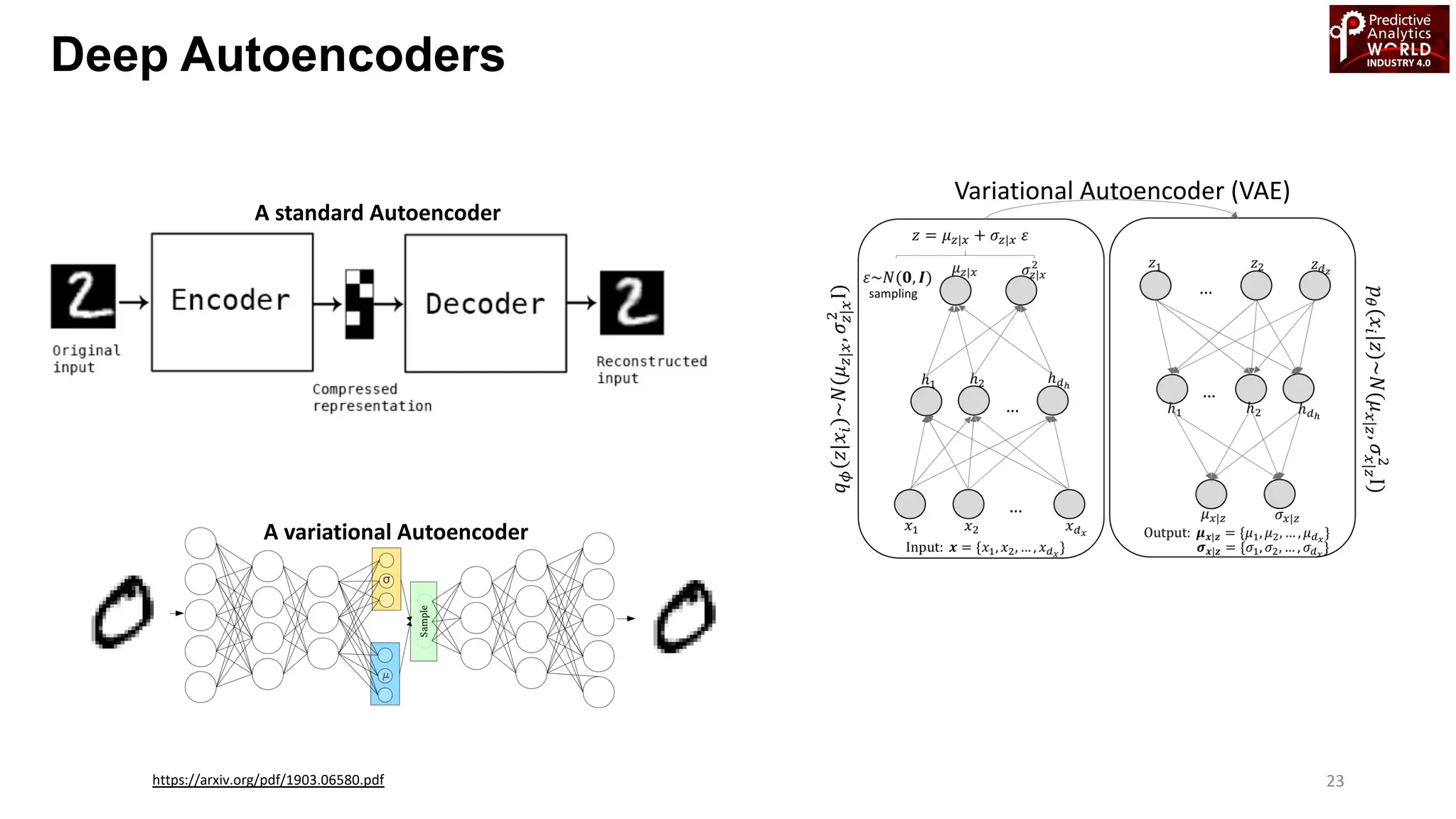

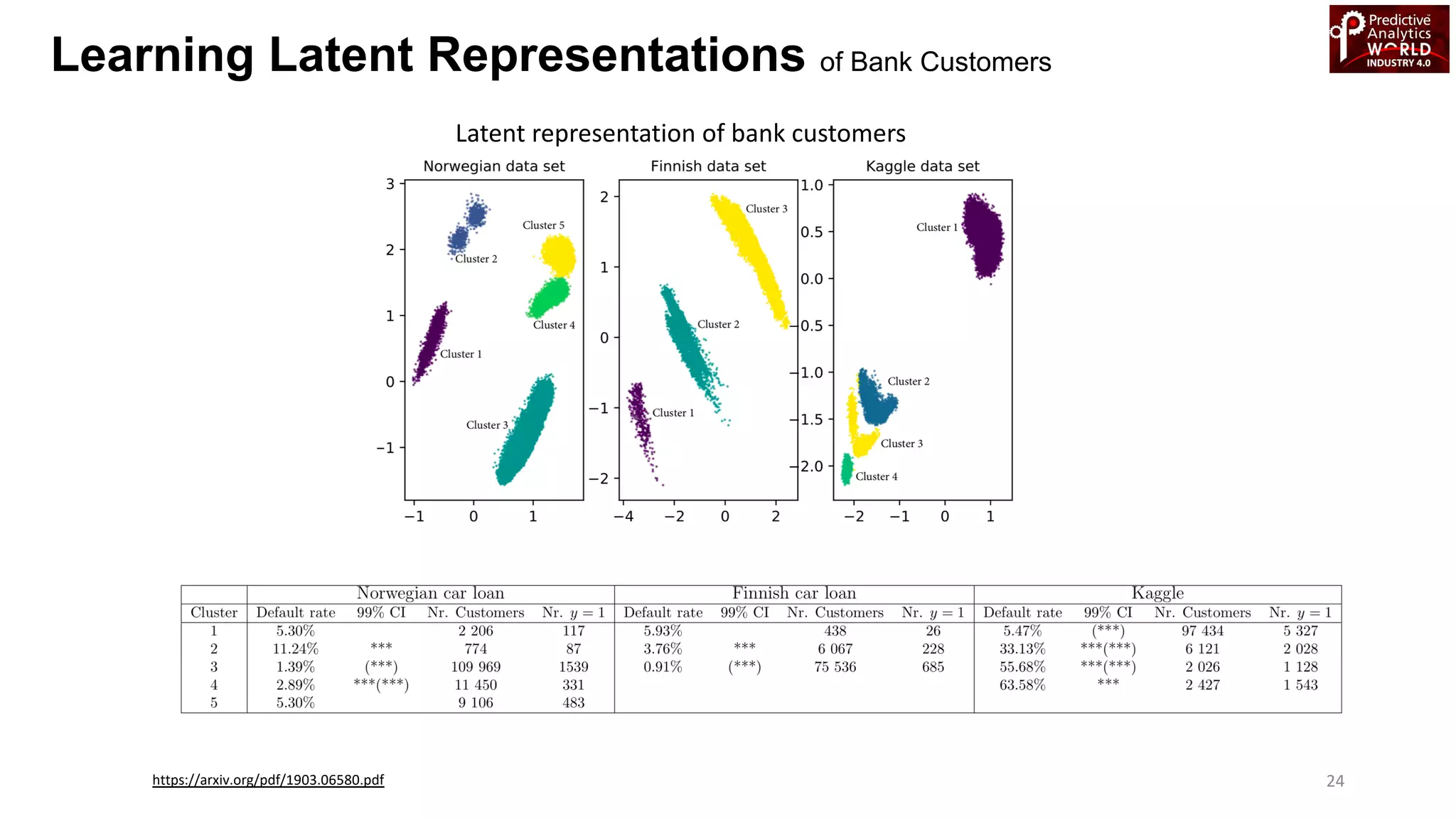

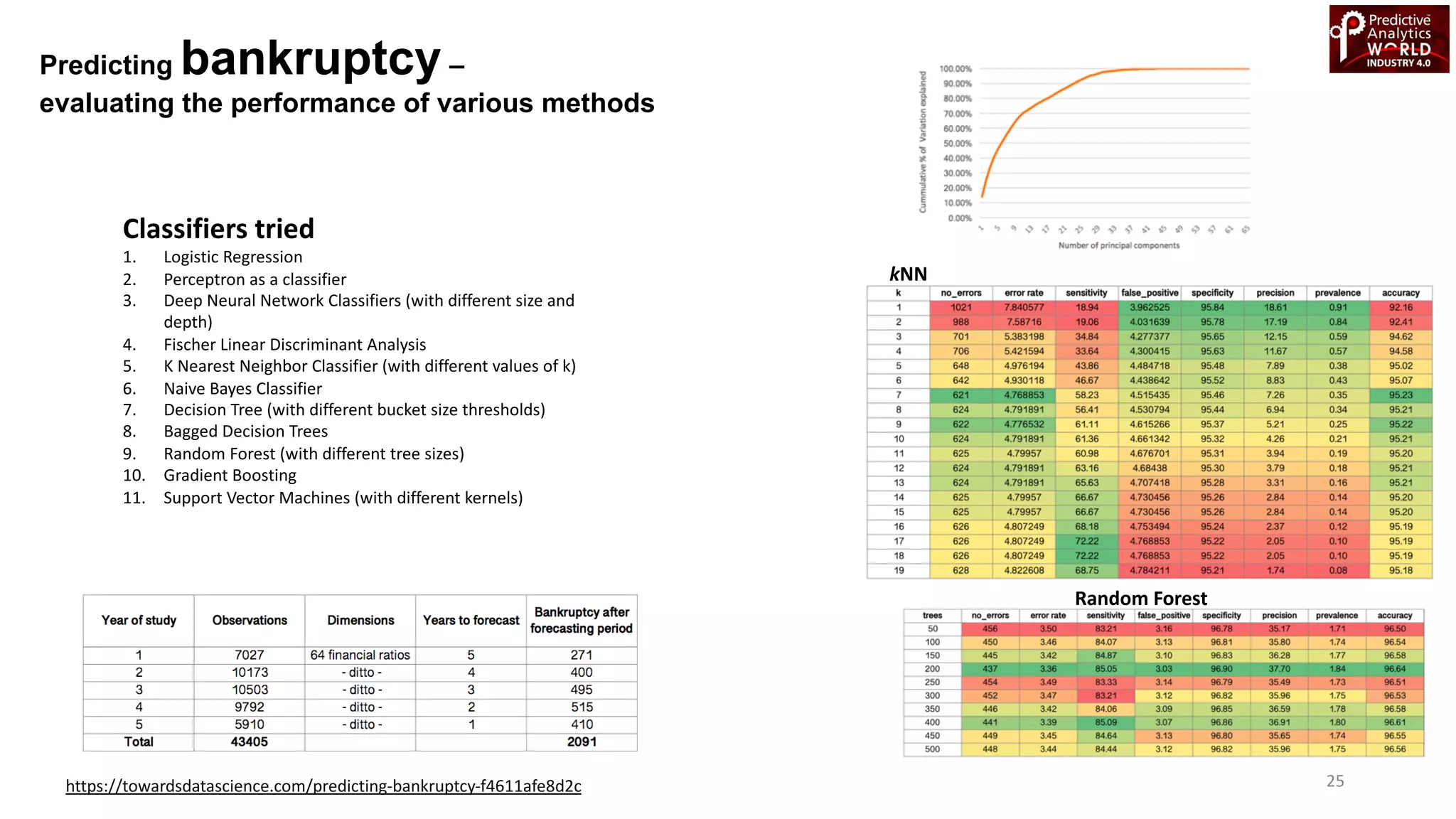

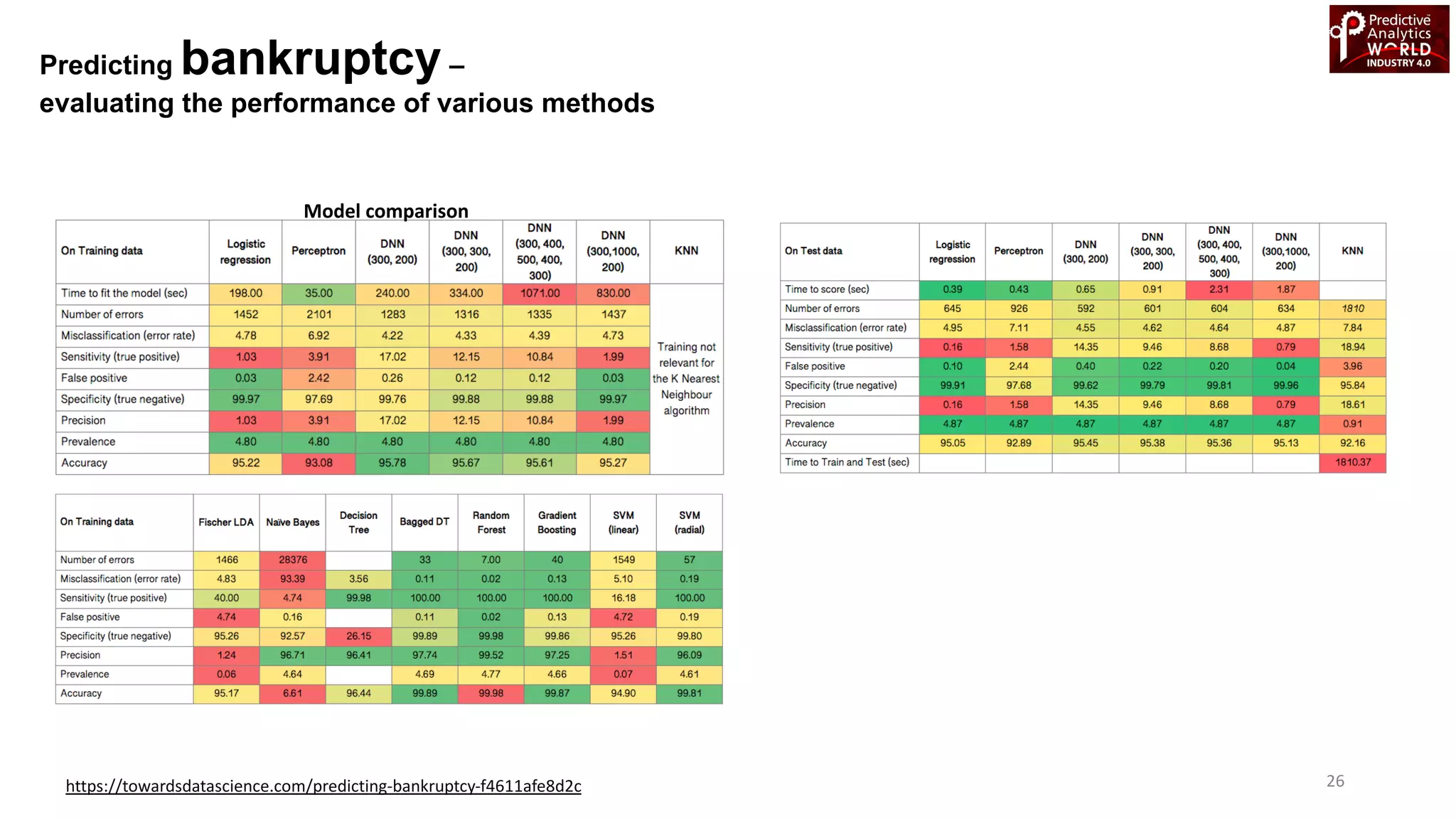

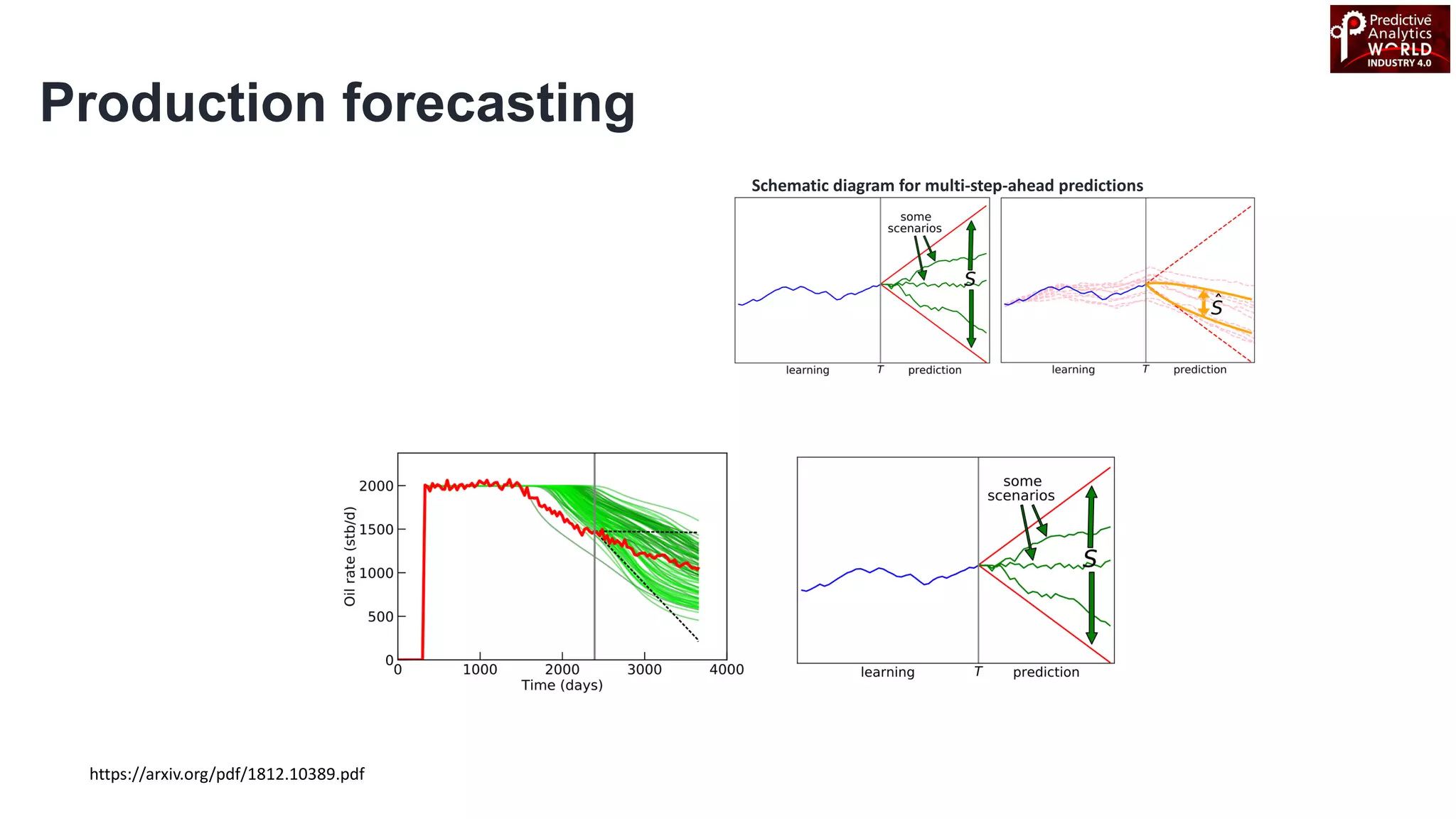

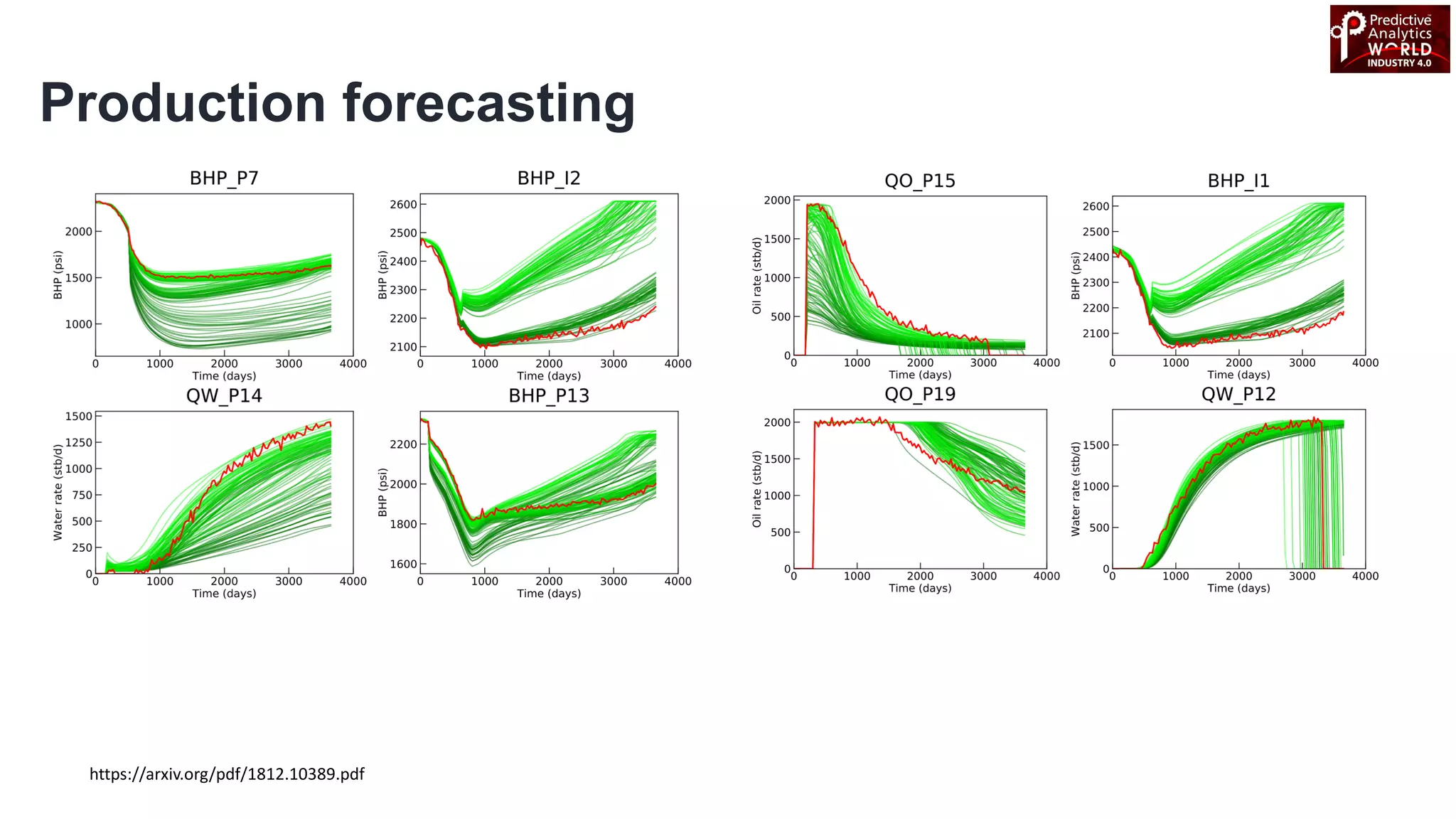

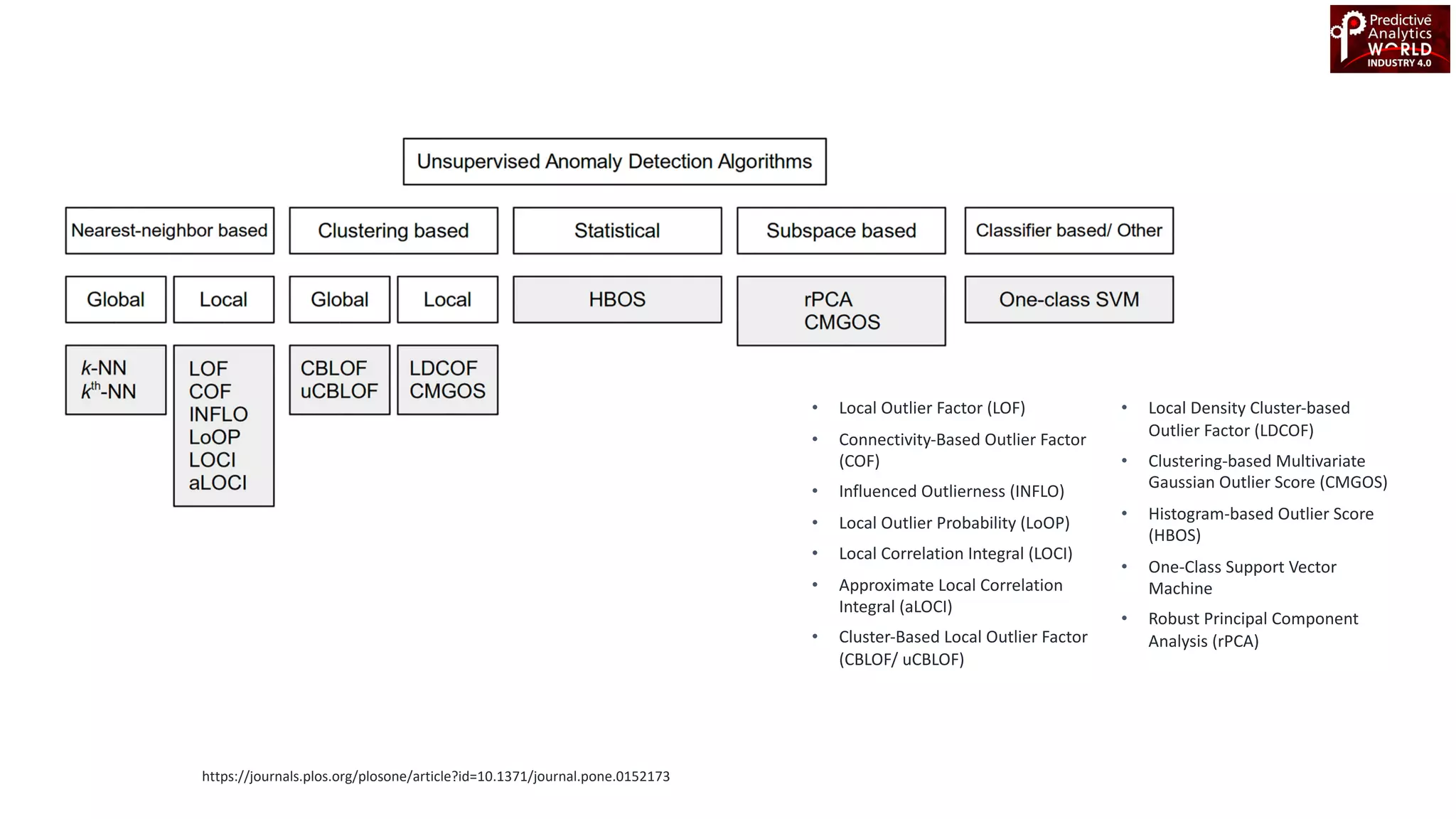

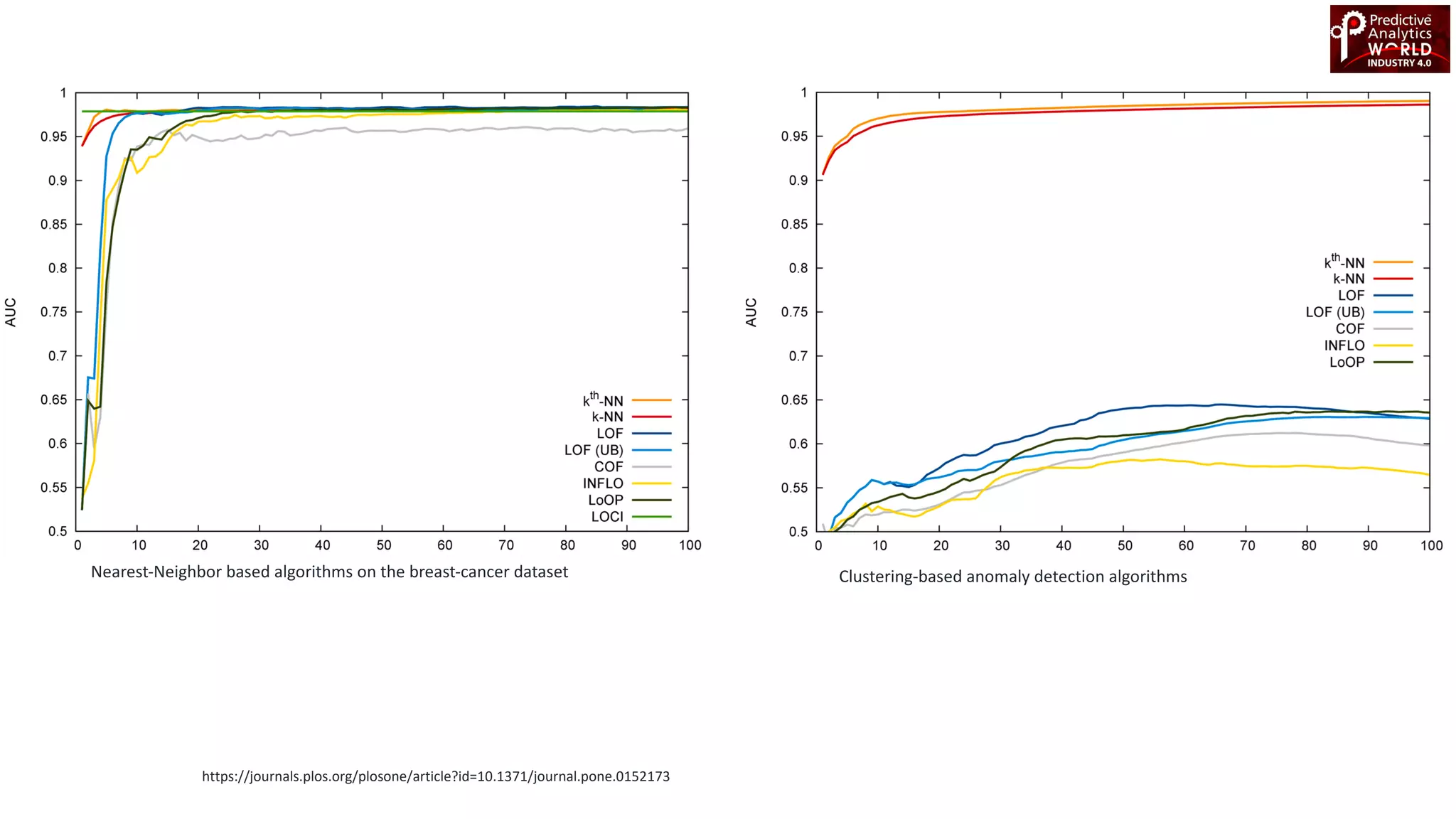

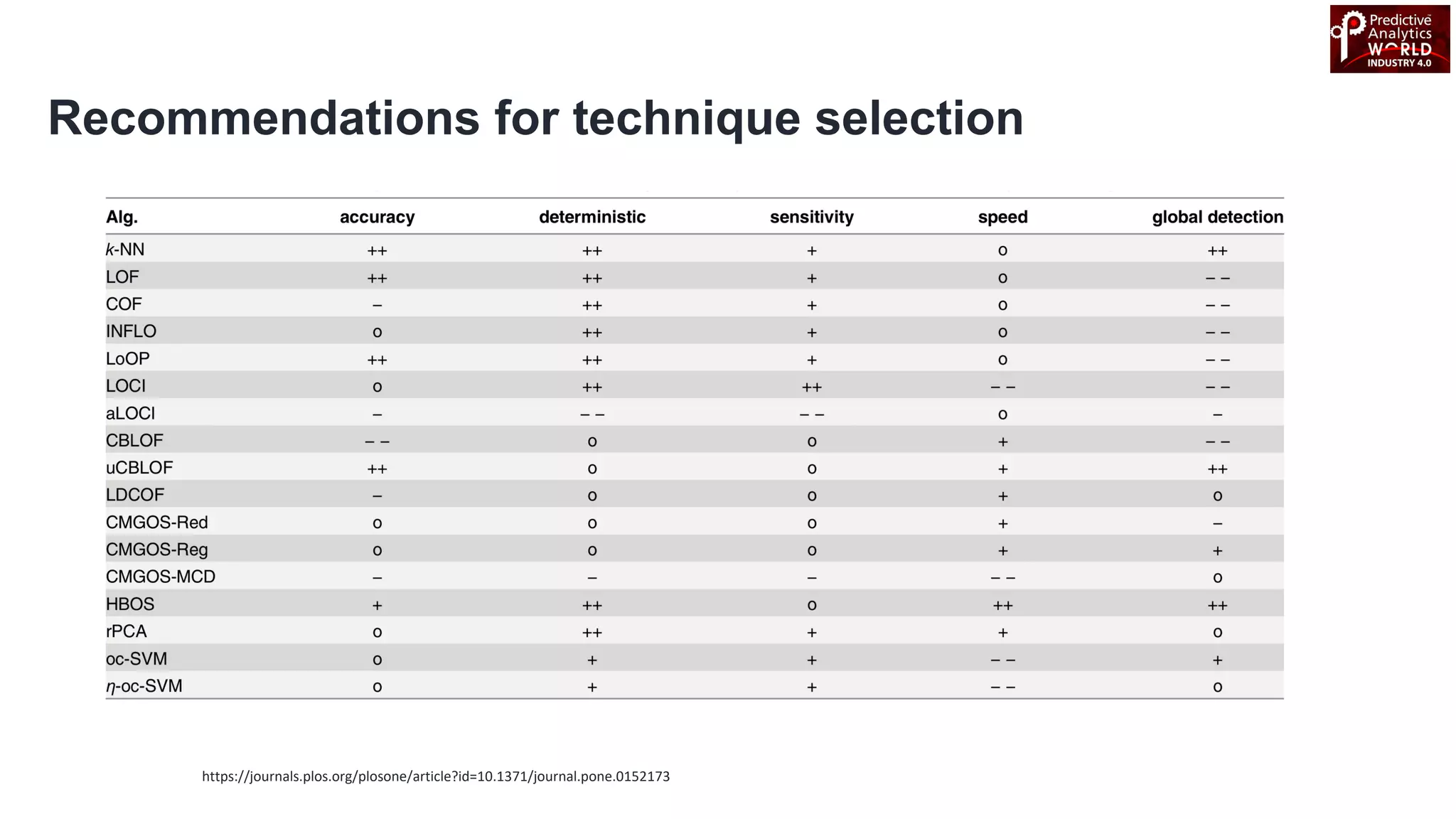

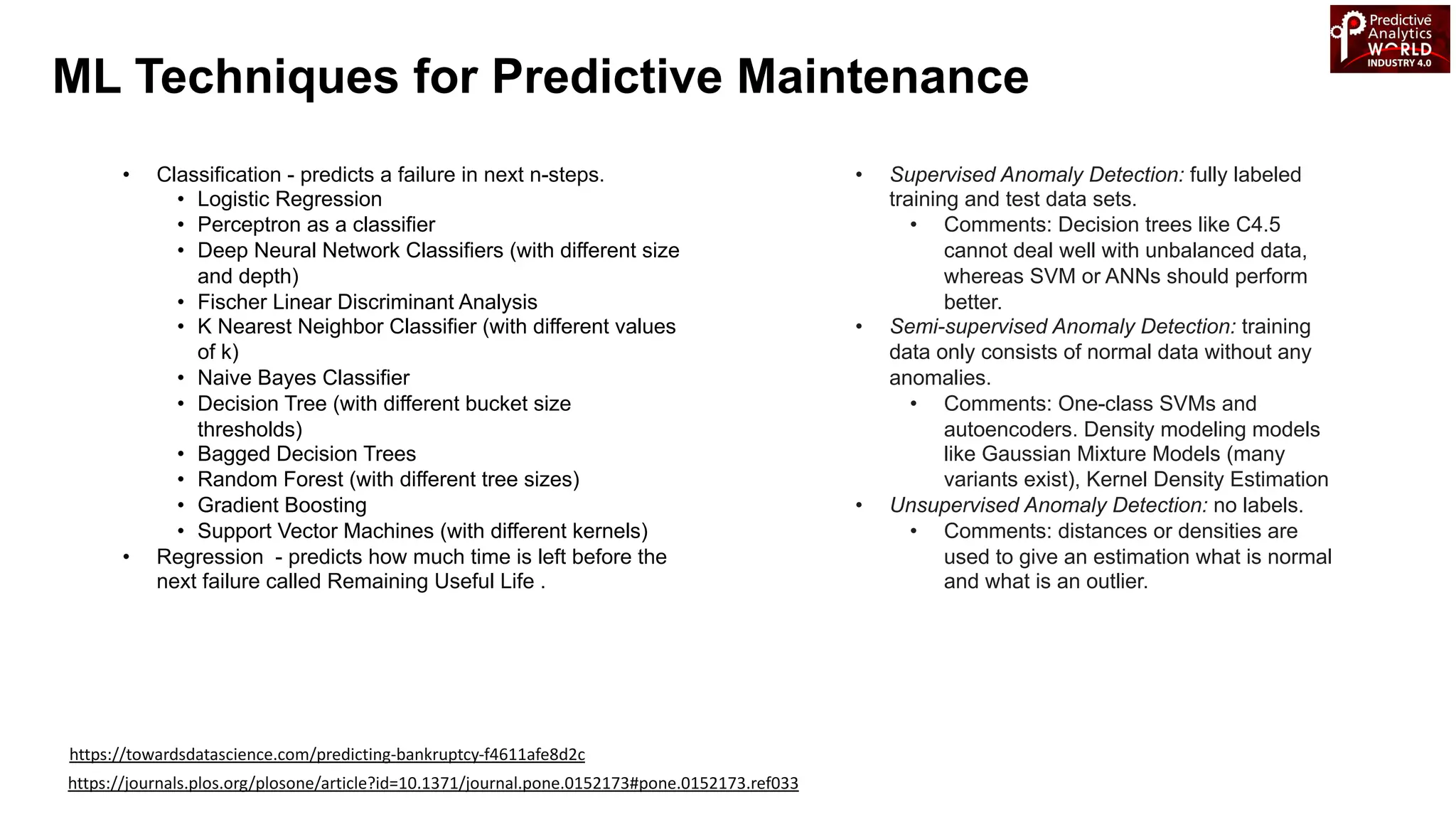

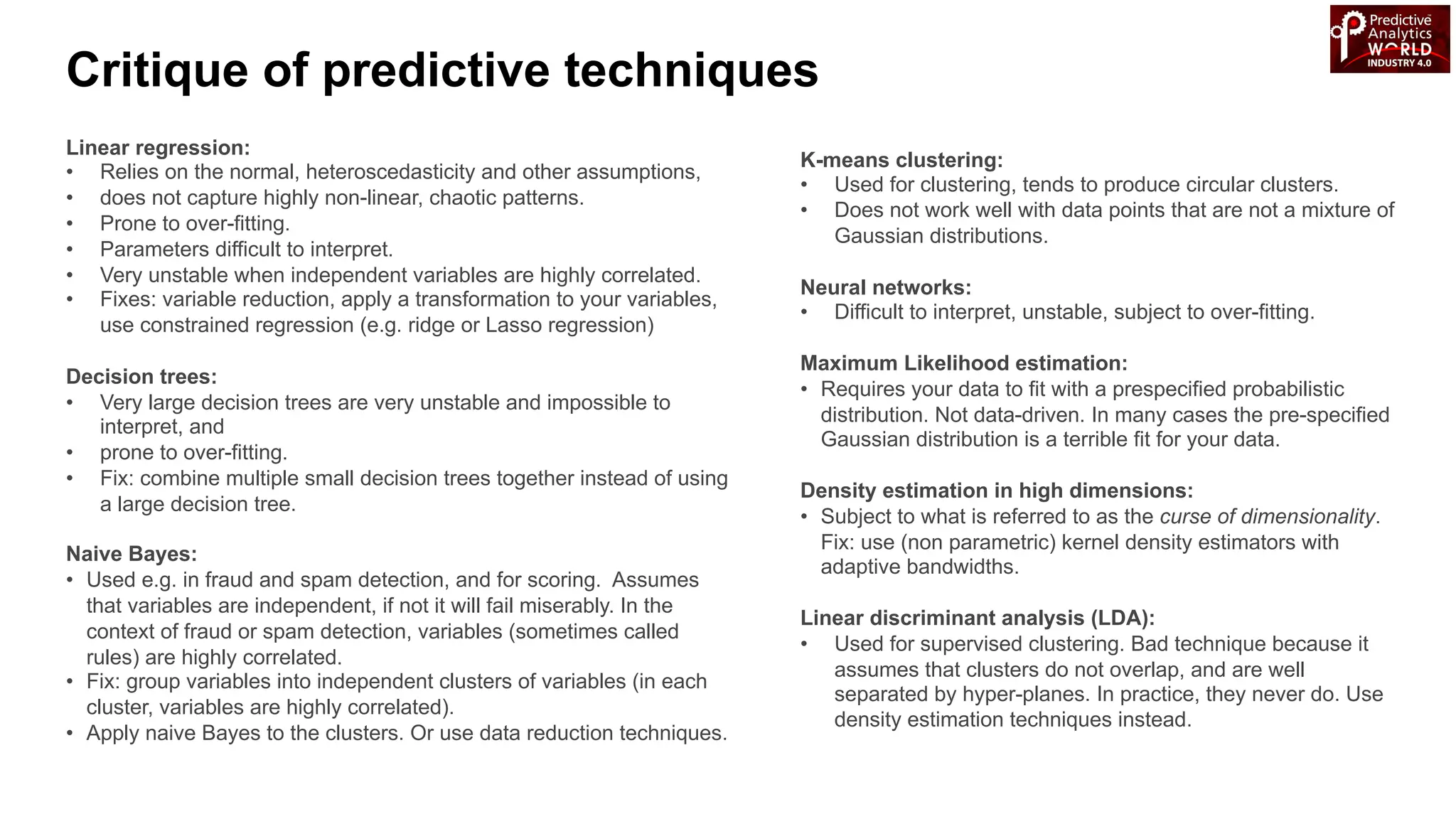

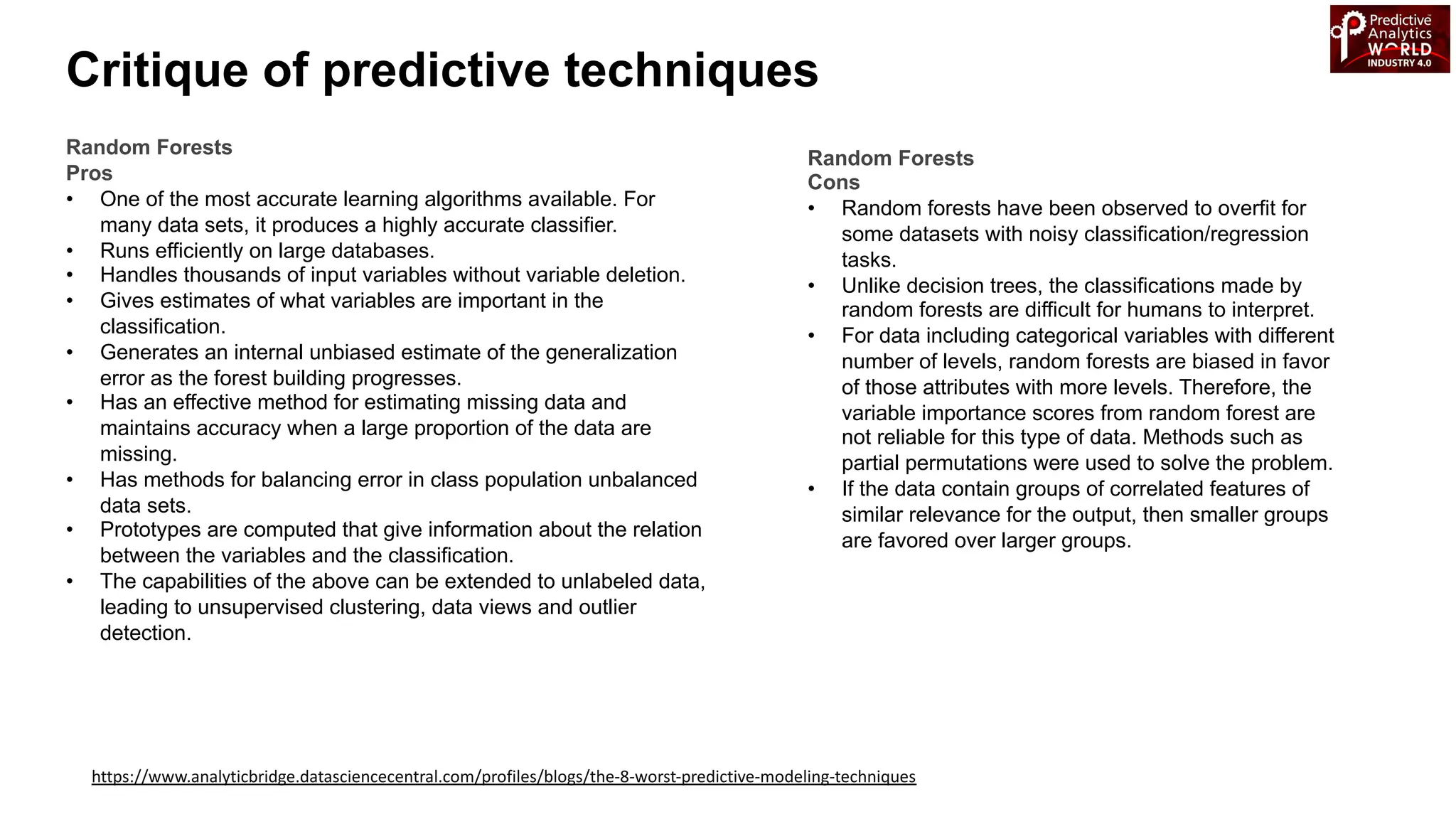

The document reviews machine learning techniques for predictive modeling in domains including fraud detection, credit risk prediction, and bankruptcy forecasting. It discusses feature engineering and anomaly detection algorithms, and compares the effectiveness of classifiers such as random forests, support vector machines (SVMs), and neural networks. It also emphasizes choosing a method to fit the specific use case and the characteristics of the data.