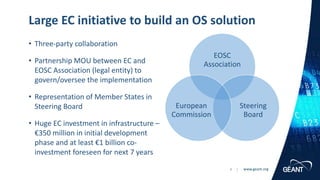

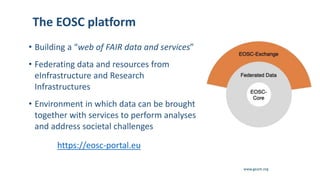

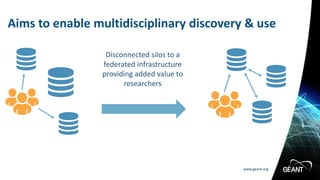

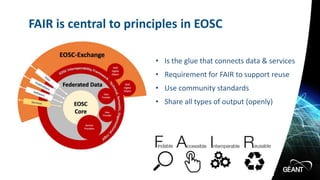

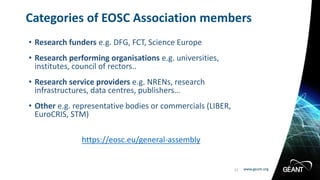

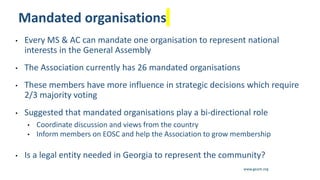

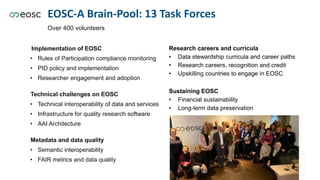

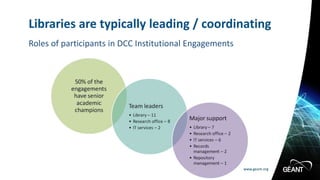

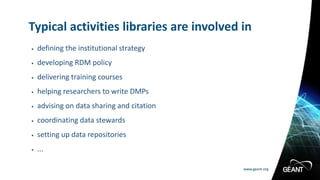

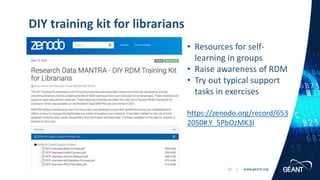

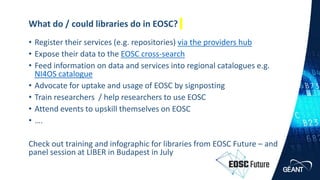

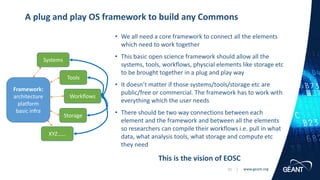

The document outlines the European Open Science Cloud (EOSC) initiative, which aims to create a federated infrastructure for open science that makes research data easier to discover and use across disciplines. It highlights the role libraries play in supporting the initiative: establishing research data management services, developing policies, and training researchers to use EOSC resources. The EOSC Association serves as the governance body that advocates for the community and promotes alignment with EU research policies. Its membership includes funders, universities, and service providers.