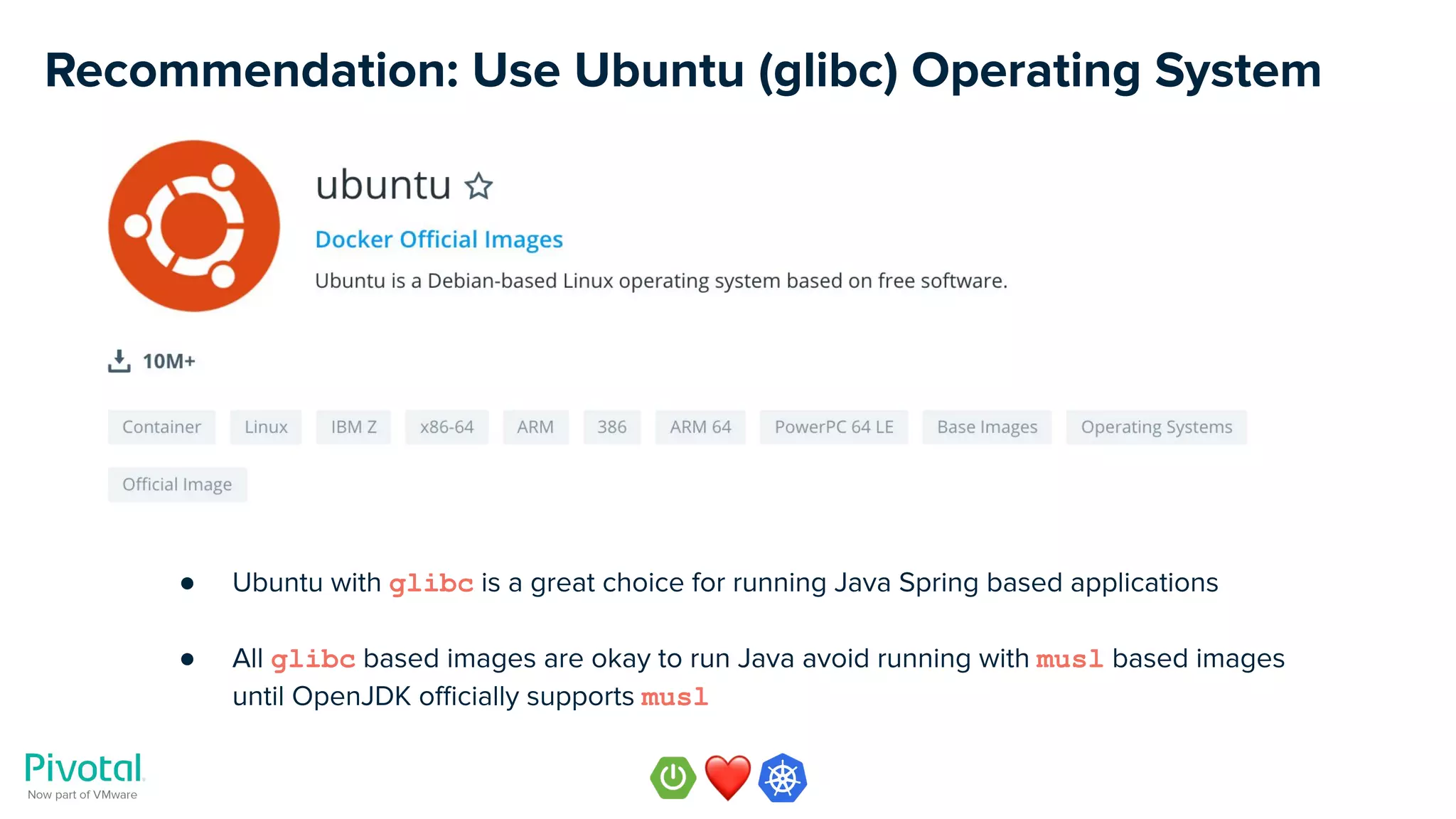

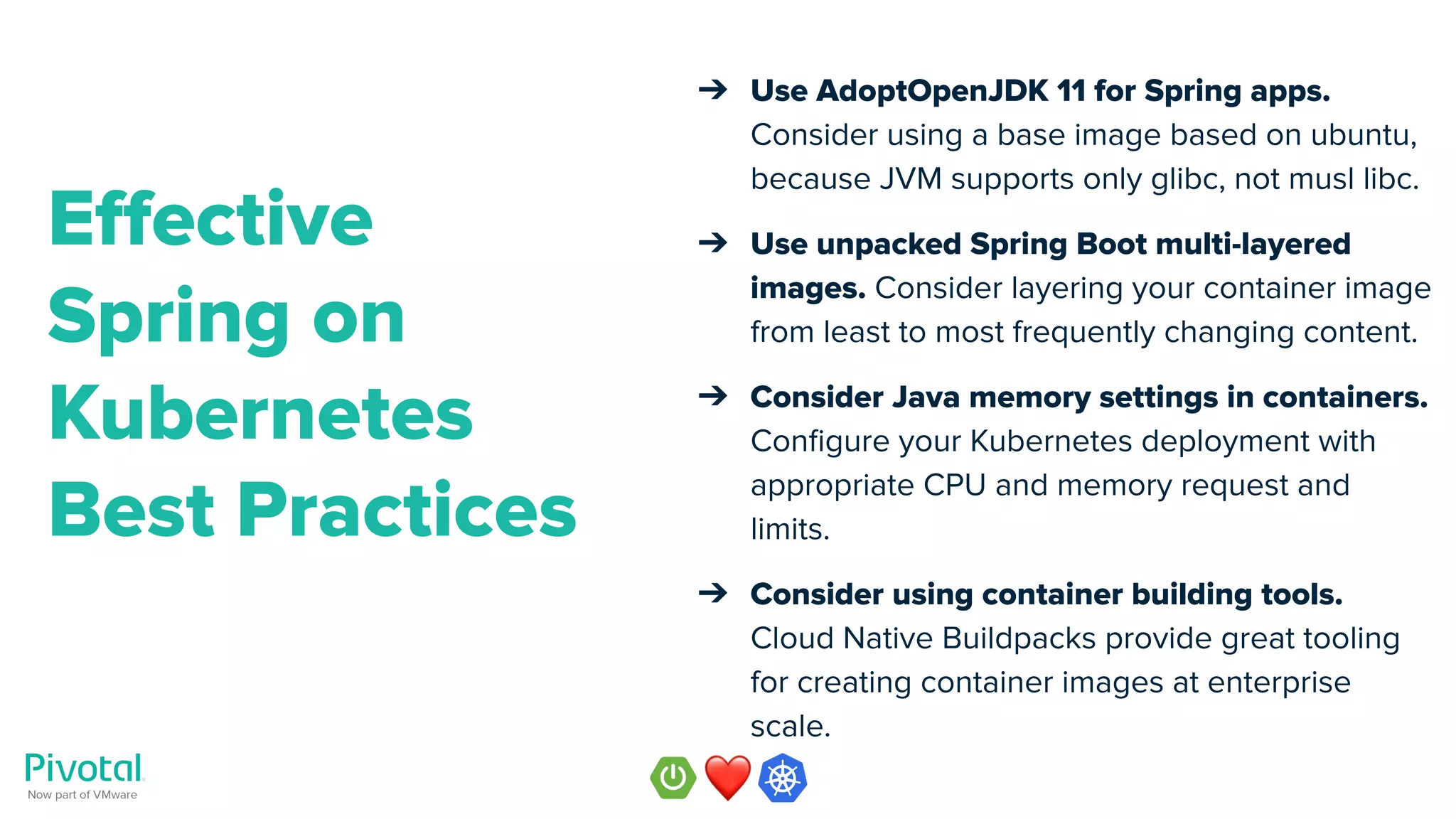

The document outlines best practices for containerizing and running Spring Boot applications on Kubernetes, emphasizing the importance of using the appropriate operating system and Java distribution. Recommendations include using Ubuntu with glibc and AdoptOpenJDK for building Docker images, as well as optimizing Dockerfiles for multi-layered builds to enhance efficiency. It also covers JVM configuration for improved performance within containerized environments.

![Musl libc vs. GNU libc

● “musl is a new general-purpose

implementation of the C library. It is

lightweight, fast, simple, free, and aims to

be correct in the sense of

standards-conformance and safety.”

● Used by Alpine Linux as its C Library

● Smaller than GNU libc (glibc)

● Has functional differences to glibc [1] [2]

● glibc (GNU C library) is the de facto

standard C library

● Used by Ubuntu, Debian, CentOS, RHEL,

SUSE

● Much larger than musl C library

● Large C/C++ code bases might depend on

the behaviour of GNU libc implementation

(bugs in glibc that can’t be fixed due to

backward compatibility - become features)](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-12-2048.jpg)

![OpenJDK does not officially support musl libc

Source: https://openjdk.java.net/jeps/8229469 [3]](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-13-2048.jpg)

![OpenJDK Docker Image Incident

Source: https://www.infoq.com/news/2019/06/docker-vulnerable-java [4]](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-17-2048.jpg)

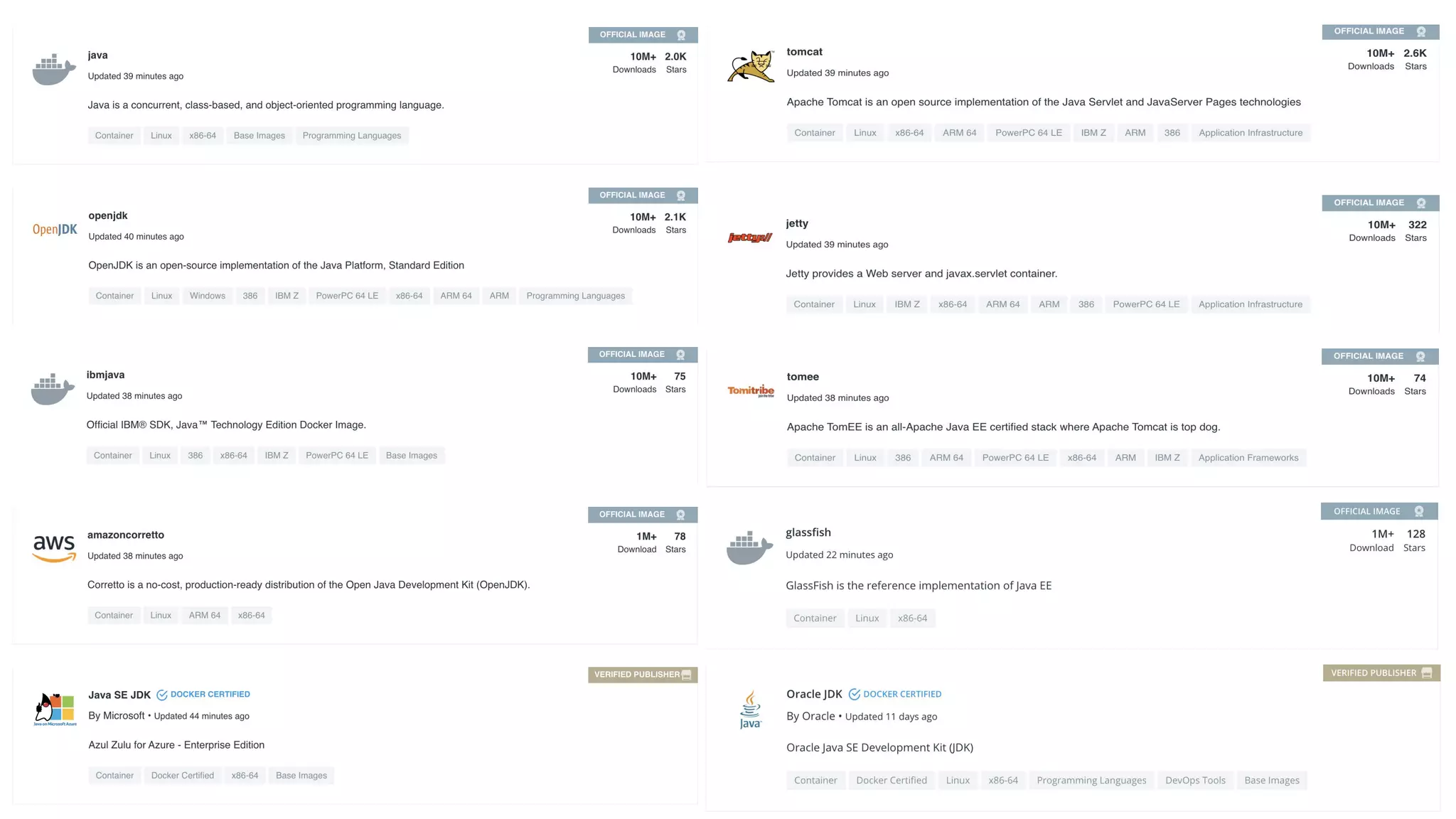

![Docker Official Images* and Verified Publishers

● Docker Official Images are a curated set of Docker repositories, hosted on Docker Hub,

reviewed and published by the Docker team [5]

● All images in the Official Images repository are scanned for vulnerabilities

● Docker Verified Publisher images are a curated set of Docker repositories from ISVs that are

verified publishers in Docker's Technology Partner Program [6]](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-18-2048.jpg)

![OpenJDK vs AdoptOpenJDK Java distributions

● OpenJDK means a few different things

○ The OpenJDK project, with the repository holding the Java source code [7]

○ The OpenJDK Java distribution of binaries maintained by Oracle [8]

● AdoptOpenJDK distributions [9] are built and maintained by the community

● The AdoptOpenJDK distribution provides many benefits, such as:

○ Java versions are supported for a longer time (especially LTS versions)

○ Favourable licensing terms

○ Supported by many big vendors (e.g. Amazon, Azul, GoDaddy, IBM, Microsoft, Pivotal)

● See Matt Raible’s [10] great blog post [11] on the topic of Java SDK choices

● AdoptOpenJDK has also been added as a Docker repository (i.e. Docker Official Images) [12]](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-19-2048.jpg)

![Recommendation: Use AdoptOpenJDK

● Use the official AdoptOpenJDK image repository at

https://hub.docker.com/_/adoptopenjdk [13]

● Upstream build of OpenJDK with no modifications

● Binaries available for many operating systems, CPU

architectures, and JDK versions

● Offers a hassle-free API for downloading binaries

● Open Quality Assurance systems (AQuA) [14]](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-20-2048.jpg)

![AdoptOpenJDK Image Variants

● Many official and non-official image variants of AdoptOpenJDK, see details [15]

● Slim builds [16] are stripped-down JDK builds that remove functionality not typically needed when

running in the cloud: applets, fonts, debug symbols, additional charsets, Java source, etc.

● Alpine-based AdoptOpenJDK images use glibc for Java [17], [18]

● https://hub.docker.com/u/adoptopenjdk [19] has a variety of AdoptOpenJDK

images for non-official variants](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-21-2048.jpg)

![Java Version History

● New Java version every 6 months

● Long Term Support (LTS) Java version

● Current LTS is Java SE 11

● Next LTS is Java SE 17 (Sep 2021)

Source: https://en.wikipedia.org/wiki/Java_version_history [20]](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-24-2048.jpg)

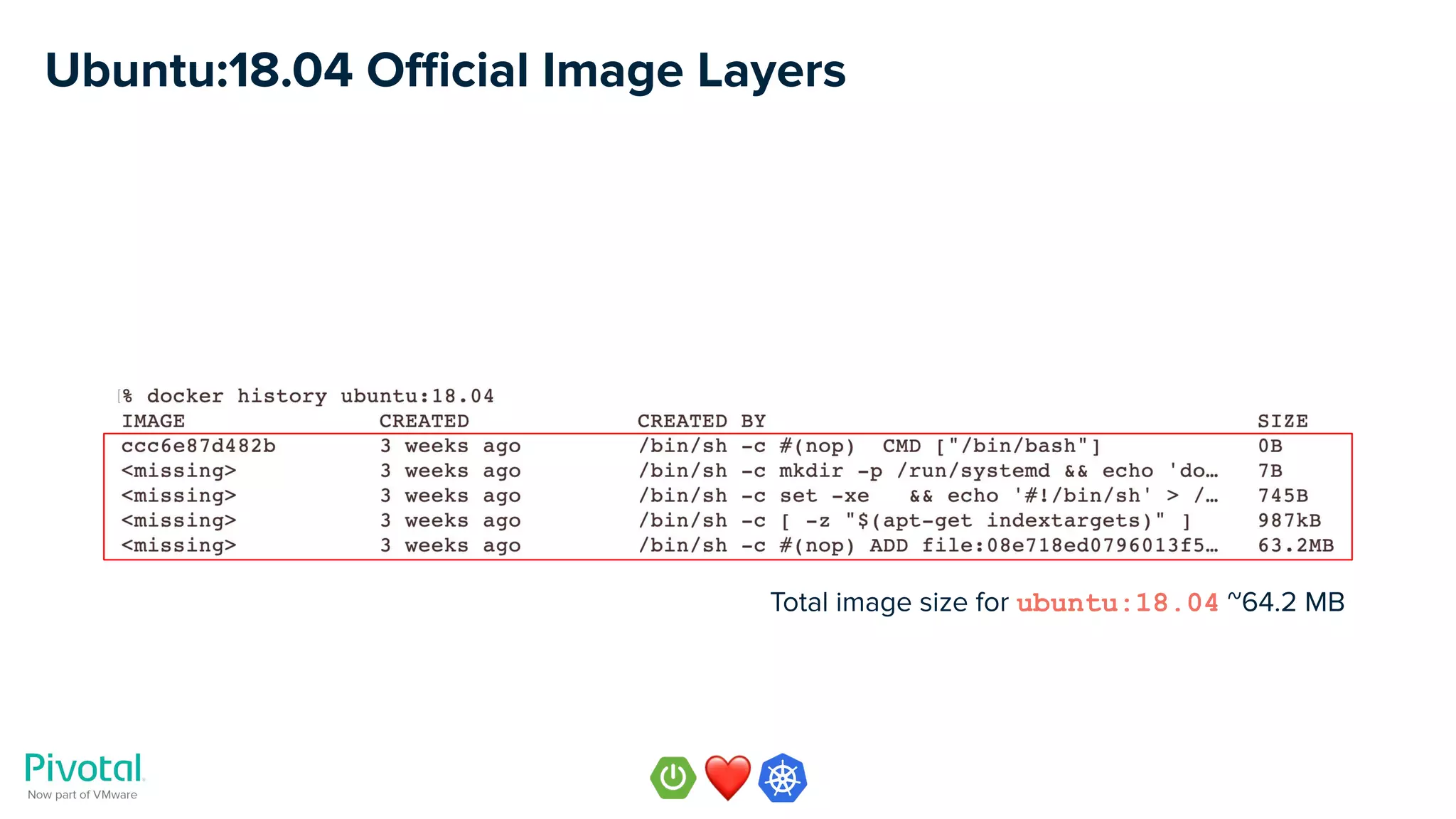

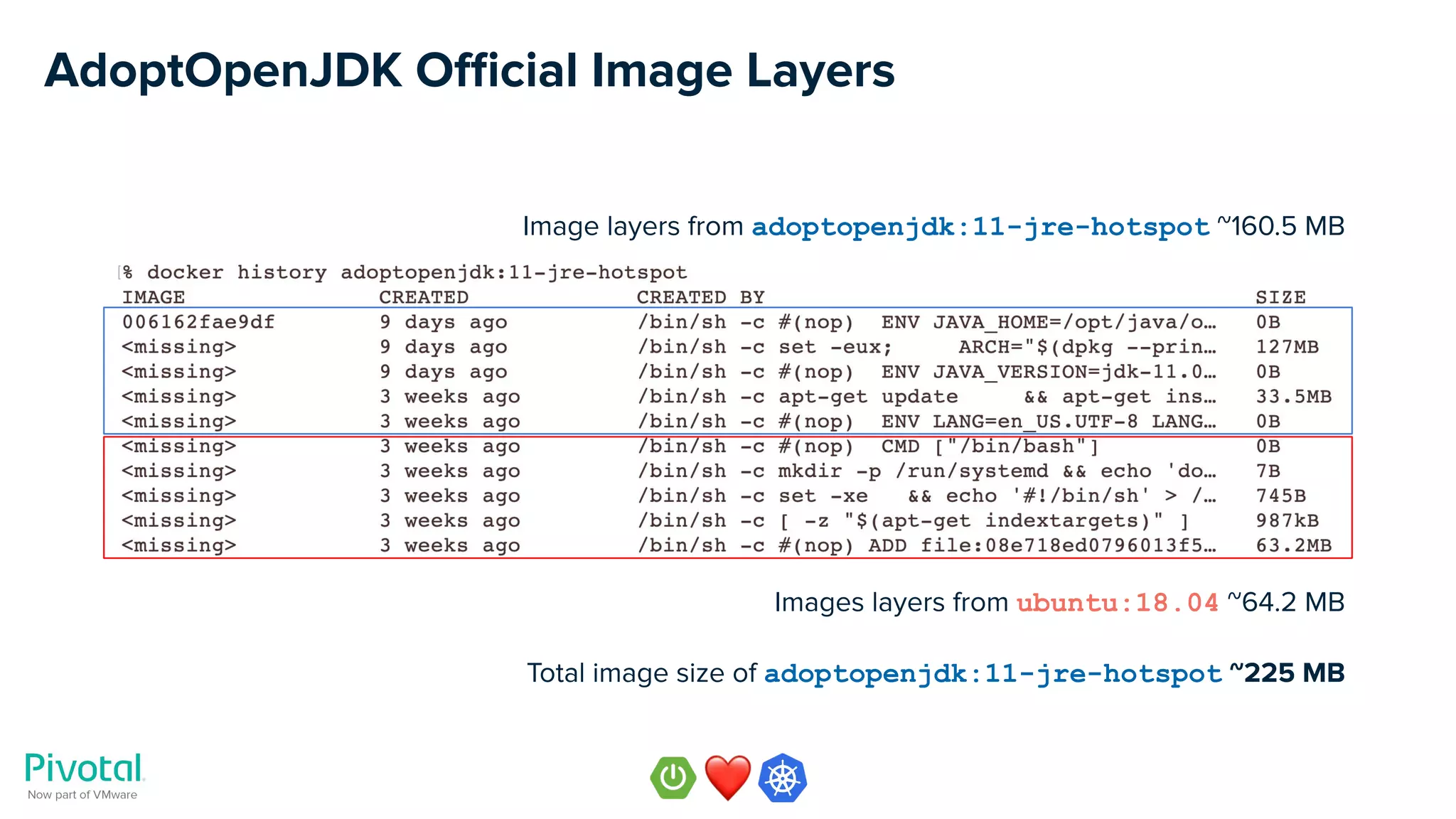

![Item 1: Use AdoptOpenJDK 11 for Spring apps

● Official AdoptOpenJDK images at https://hub.docker.com/_/adoptopenjdk [21] based

on ubuntu

○ adoptopenjdk:11-jdk-hotspot ~423 MB for builds

○ adoptopenjdk:11-jre-hotspot ~225 MB for running applications

○ Based on ubuntu:18.04 official base image

● Leverage variants from https://hub.docker.com/r/adoptopenjdk/openjdk11 [22] if you

are concerned about image size or want a non-Ubuntu-based image (e.g. Alpine)](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-27-2048.jpg)

![Software & Support for OpenJDK, Spring, and Tomcat [23]

Pivotal’s Java™ Experts Support 24/7 Simple & Fair Pricing](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-28-2048.jpg)

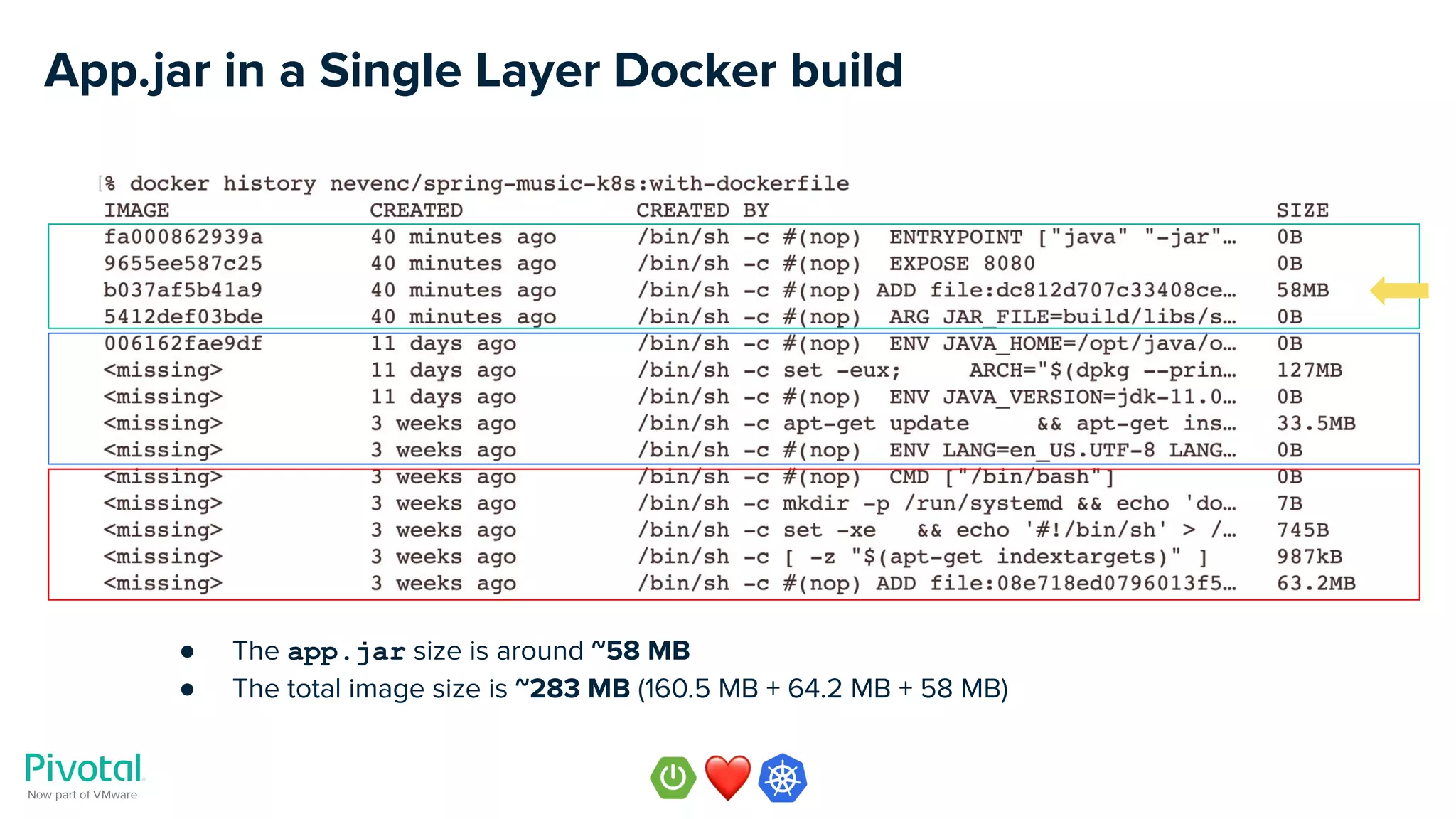

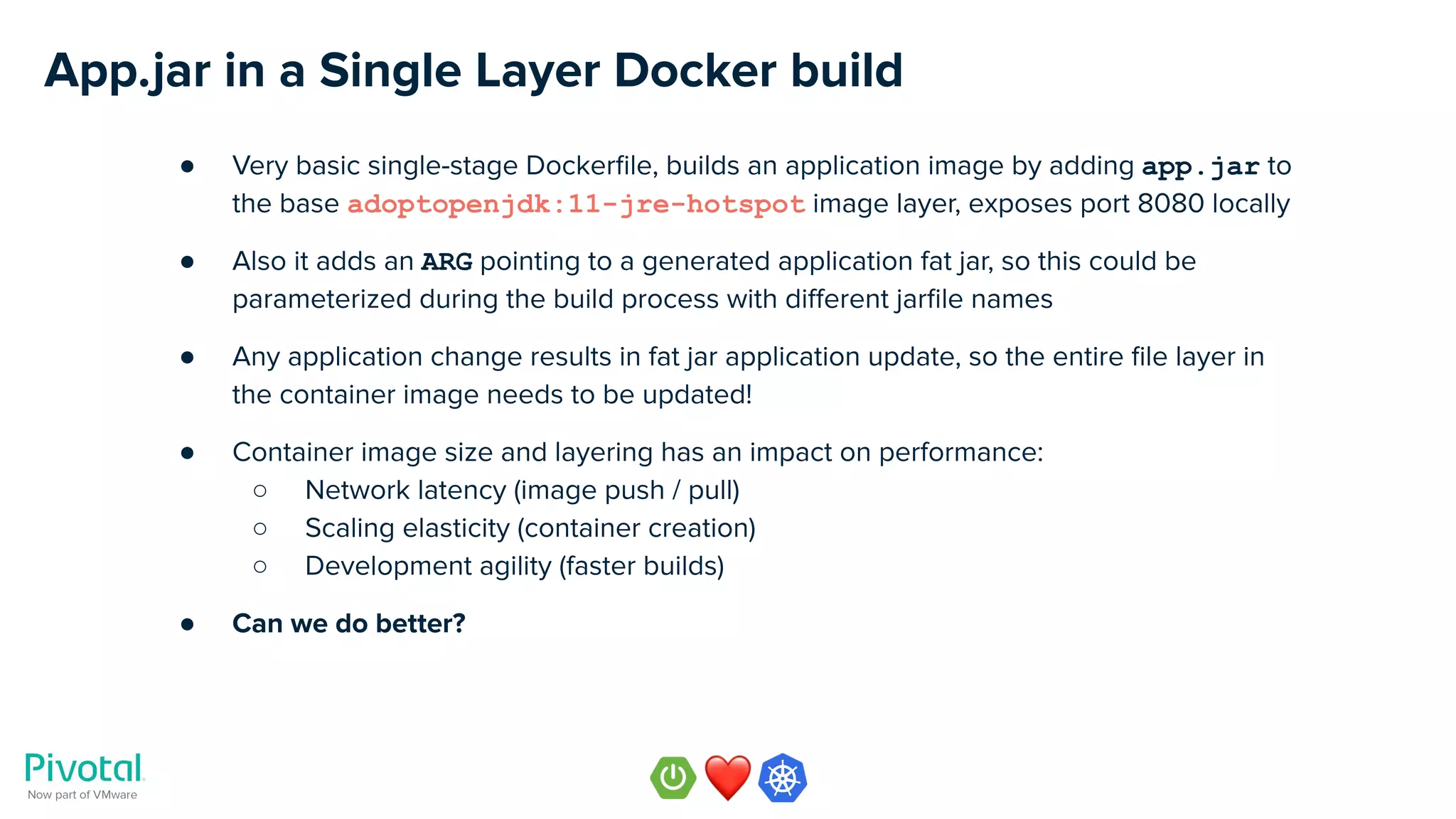

![App.jar in a Single Layer Docker build

FROM adoptopenjdk:11-jre-hotspot

ARG JAR_FILE=build/libs/*.jar

ADD ${JAR_FILE} app.jar

EXPOSE 8080

ENTRYPOINT ["java","-jar","/app.jar"]](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-32-2048.jpg)

![App.jar in a Single Layer Docker build

● Build the application binary

./gradlew build

● Build the container image

docker build -t nevenc/spring-music-k8s:with-dockerfile -f Dockerfile .

● Build the image with a JAR_FILE argument

docker build -t nevenc/spring-music-k8s:with-dockerfile \
  --build-arg JAR_FILE=build/libs/spring-music-1.0.jar -f Dockerfile .

● Run the image locally

docker run -it -p8080:8080 nevenc/spring-music-k8s:with-dockerfile

● Run the image on Kubernetes

kubectl create deployment spring-music \
  --image=nevenc/spring-music-k8s:with-dockerfile

kubectl expose deployment spring-music --port=8080 --type=NodePort

● Example code

https://github.com/nevenc/spring-music-k8s [24] [25]

HANDS-ON EXAMPLE](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-35-2048.jpg)

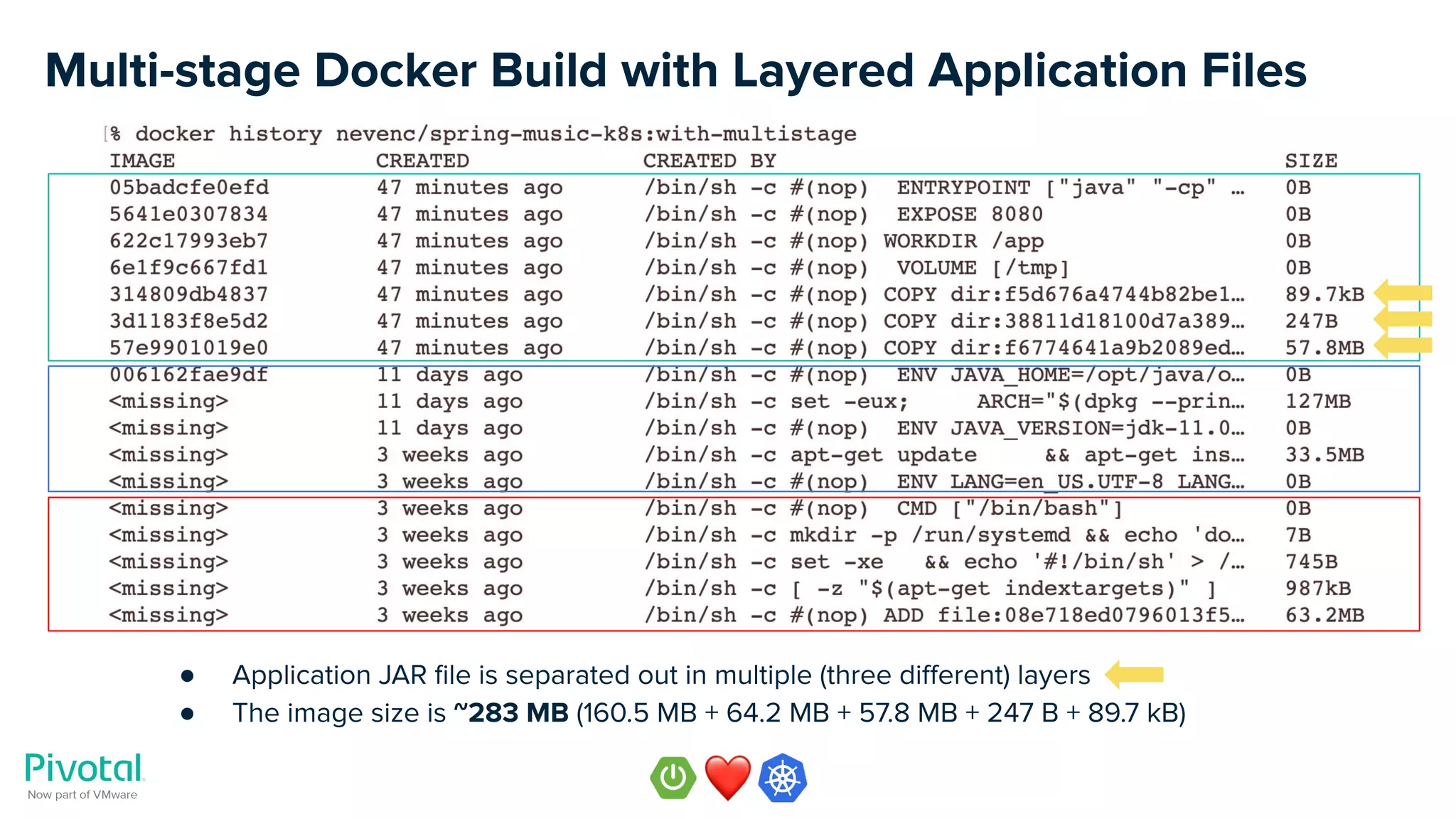

![Multi-stage Docker Build with Layered Application Files

# Stage 1: Extract layers of the app

FROM adoptopenjdk:11-jdk-hotspot AS build

ARG JAR_FILE=build/libs/*.jar

ADD ${JAR_FILE} app.jar

RUN mkdir /app \
 && cd /app \
 && jar xf /app.jar

# Stage 2: Build layered container image

FROM adoptopenjdk:11-jre-hotspot

COPY --from=build /app/BOOT-INF/lib /app/lib

COPY --from=build /app/META-INF /app/META-INF

COPY --from=build /app/BOOT-INF/classes /app

VOLUME /tmp

WORKDIR /app

EXPOSE 8080

ENTRYPOINT ["java","-cp","/app:/app/lib/*","org.cloudfoundry.samples.music.Application"]](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-37-2048.jpg)

![Multi-stage Docker Build with Layered Application Files

● Build the application binary

./gradlew build

● Build the image with

docker build -f Dockerfile.multistage -t nevenc/spring-music-k8s:with-multistage .

● Run the image locally

docker run -it -p8080:8080 nevenc/spring-music-k8s:with-multistage

● Run the image on Kubernetes

kubectl create deployment spring-music \
  --image=nevenc/spring-music-k8s:with-multistage

kubectl expose deployment spring-music --port=8080 --type=NodePort

● Example code

https://github.com/nevenc/spring-music-k8s [26] [27]

HANDS-ON EXAMPLE](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-40-2048.jpg)

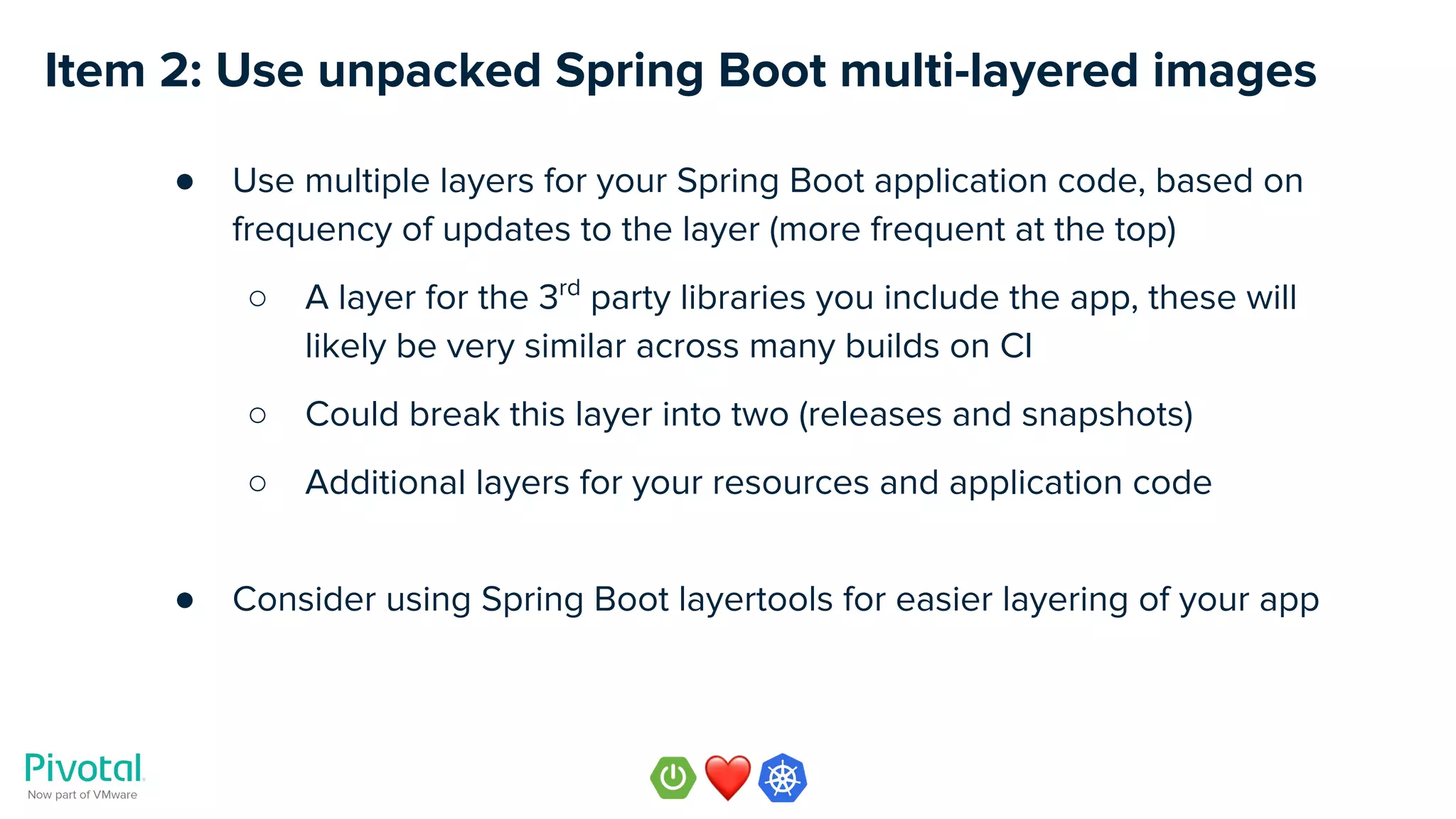

![Spring Boot Layertools

● Spring Boot team has been actively adding new features to support cloud-native and

container-friendly tools, see release notes [28] for Spring Boot 2.3.0 M1

● Support for building jar files with contents separated into layers has been added to

both Maven and Gradle plugins

● The layering separates the JAR’s contents based on how frequently they change

● This enables more efficient Docker images, with the more frequently changing layers on top

● Layertools provides built-in tools for listing and extracting layers](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-41-2048.jpg)

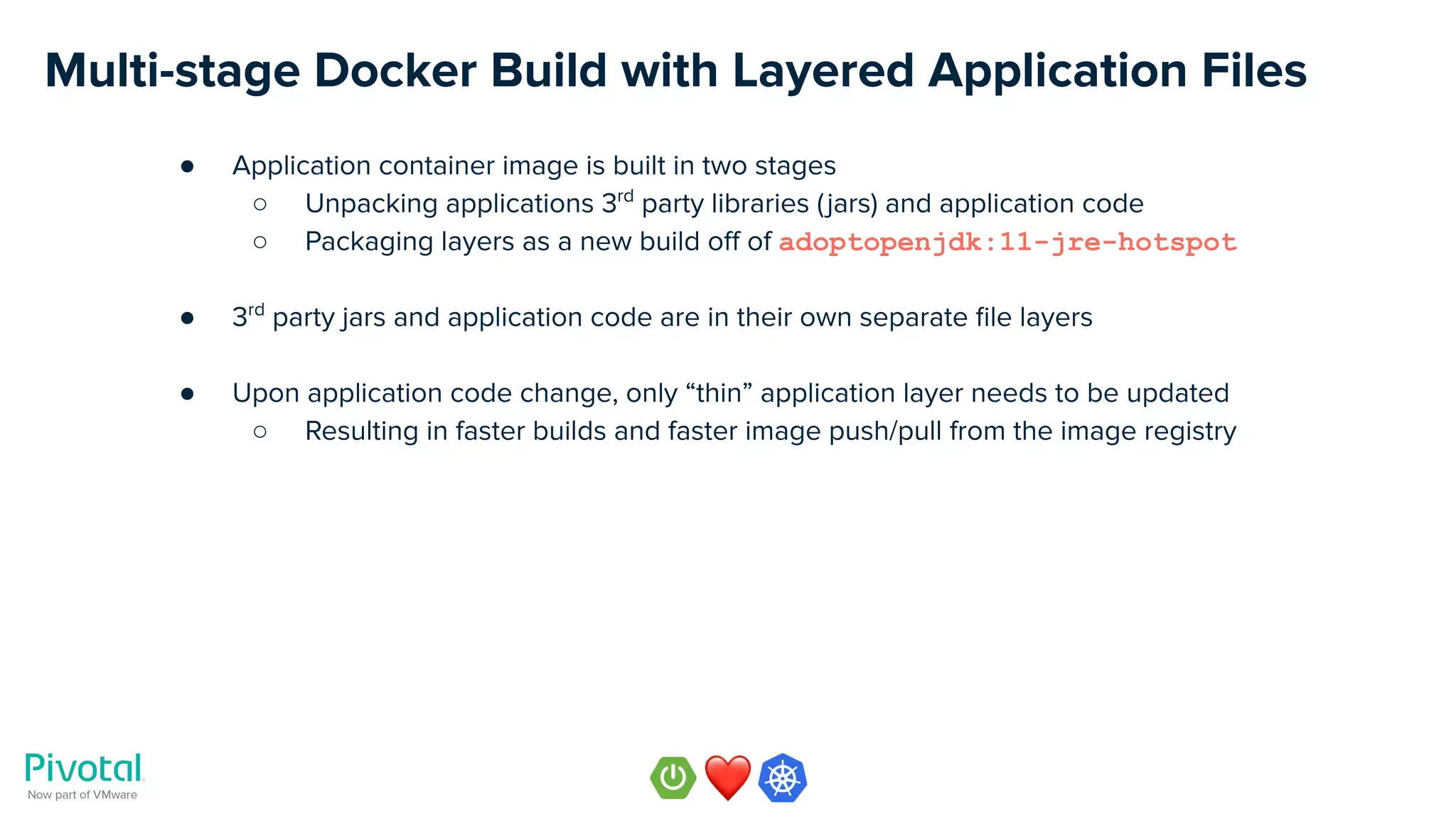

![Multi-stage Docker Build with Layertools

# Stage 1: Extract layers of the app

FROM adoptopenjdk:11-jdk-hotspot AS build

WORKDIR application

ARG JAR_FILE=build/libs/*.jar

ADD ${JAR_FILE} app.jar

RUN java -Djarmode=layertools -jar app.jar extract

# Stage 2: Build layered container image

FROM adoptopenjdk:11-jre-hotspot

WORKDIR application

COPY --from=build application/dependencies/ ./

COPY --from=build application/snapshot-dependencies/ ./

COPY --from=build application/resources/ ./

COPY --from=build application/application/ ./

EXPOSE 8080

ENTRYPOINT ["java","org.springframework.boot.loader.JarLauncher"]](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-42-2048.jpg)

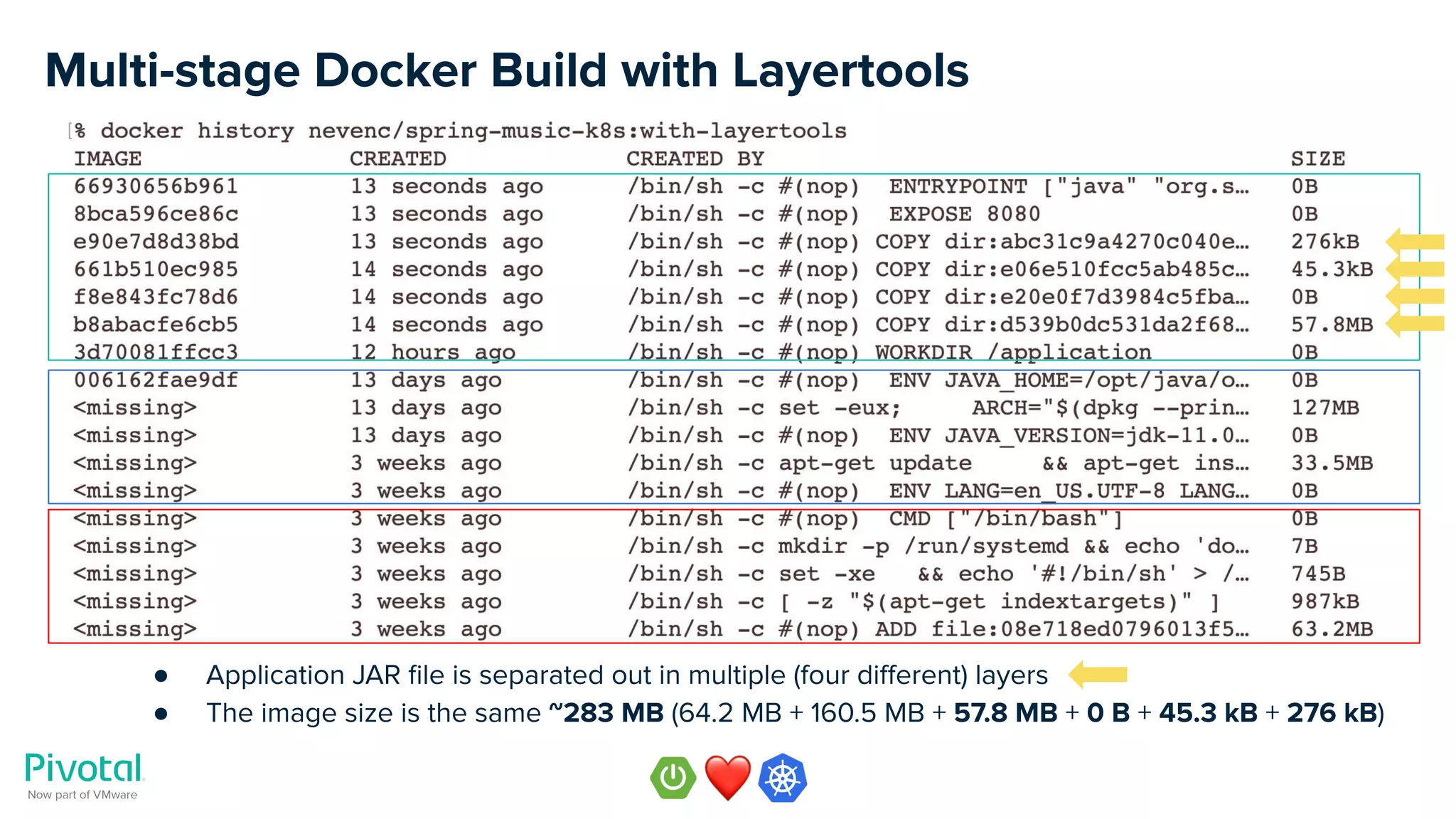

![Multi-stage Docker Build with Layertools

● The application container image is built in two stages, as before

○ Unpacking application using layertools

○ Packaging layers as a new build off of adoptopenjdk:11-jre-hotspot

● Layers are separated out based on how frequently they typically change, e.g.

○ dependencies

○ snapshot-dependencies

○ resources

○ application

● This results in even better optimization of Docker image layering for efficiency

● More details and examples in Phil Webb’s [29] blog post [30]

● Please try these new features and provide your feedback to the Spring Boot team!](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-43-2048.jpg)
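
The reason for ordering layers by change frequency can be sketched in a few lines of Java. This is only an illustration of Docker's build-cache behaviour (once one instruction's cache is invalidated, every later instruction is rebuilt), not part of Spring Boot or layertools; the class and method names are hypothetical, and the layer names are the four listed on the slide.

```java
import java.util.List;

public class LayerCacheSketch {

    // Layer order from the slide: least frequently changing first.
    static final List<String> LAYERS =
            List.of("dependencies", "snapshot-dependencies", "resources", "application");

    /**
     * Returns the layers that have to be rebuilt (and pushed again) when
     * the given layer changes: that layer and every layer copied after it.
     */
    public static List<String> rebuilt(String changedLayer) {
        int index = LAYERS.indexOf(changedLayer);
        if (index < 0) {
            throw new IllegalArgumentException("unknown layer: " + changedLayer);
        }
        return LAYERS.subList(index, LAYERS.size());
    }

    public static void main(String[] args) {
        // A code change only touches the top layer.
        System.out.println(rebuilt("application"));   // [application]
        // A dependency upgrade invalidates everything above it as well.
        System.out.println(rebuilt("dependencies"));
    }
}
```

A routine code change therefore reuses the cached dependency layers, which are usually the largest part of the image.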

![Multi-stage Docker Build with Layertools

● Rebuild the application binary (with appropriate layered bootJar)

./gradlew -b build.gradle.layertools build

● List image layers

java -Djarmode=layertools -jar build/libs/spring-music-k8s-1.0.jar list

● Build the image

docker build -f Dockerfile.layertools -t nevenc/spring-music-k8s:with-layertools .

● Run the image locally

docker run -it -p8080:8080 nevenc/spring-music-k8s:with-layertools

● Run the image on Kubernetes

kubectl create deployment spring-music --image=nevenc/spring-music-k8s:with-layertools

kubectl expose deployment spring-music --port=8080 --type=NodePort

● Example code

https://github.com/nevenc/spring-music-k8s [31] [32]

HANDS-ON EXAMPLE](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-45-2048.jpg)

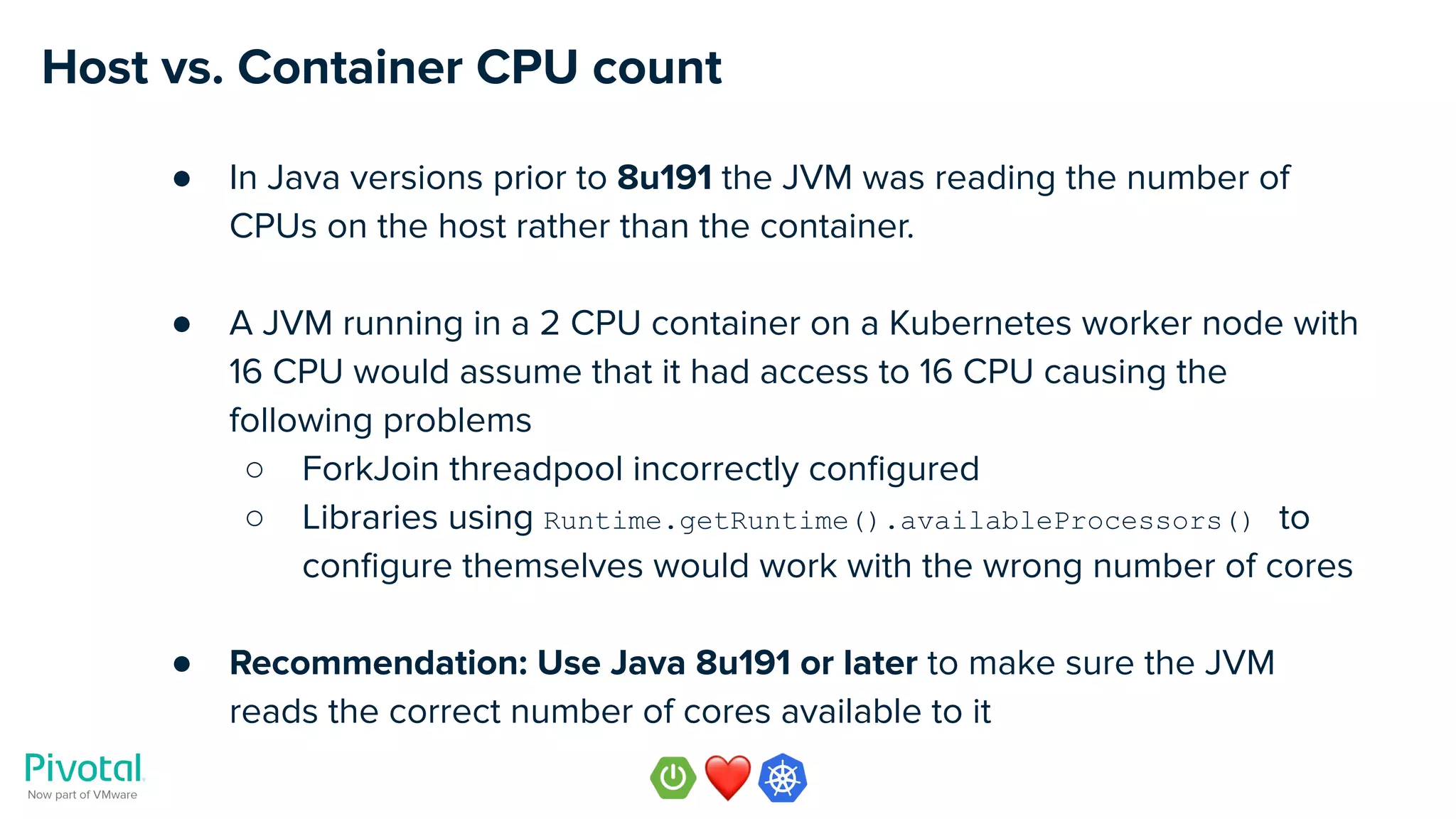

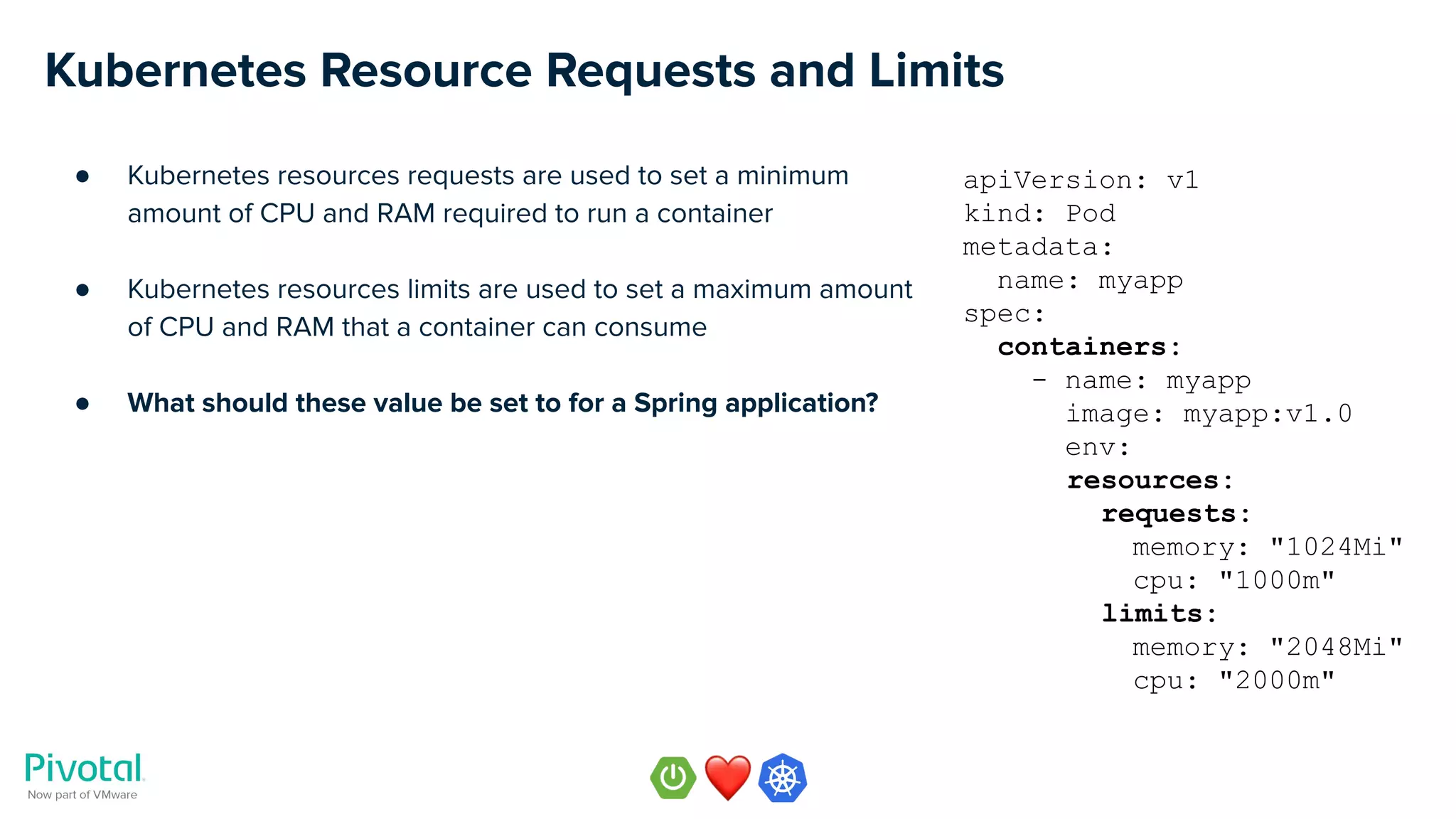

![CPU Requests vs. Limits

● CPU requests are measured in millicores; one core (1000m) is

○ 1 vCPU on GCP / Azure / AWS

○ 1 hyperthread on your own hardware

● Requests are the minimum guaranteed amount of CPU millicores

allocated to the container

● Limits are the maximum amount of CPU millicores that the

container is allowed to consume

● Kubernetes defines three quality of service classes

○ Guaranteed → requests == limits

○ Burstable → requests < limits

○ Best Effort → requests and limits not set

● For more details refer to Kubernetes documentation [33] [34]

apiVersion: v1
kind: Pod
metadata:
  name: myapp
spec:
  containers:
  - name: myapp
    image: myapp:v1.0
    env:
    resources:
      requests:
        memory: "1024Mi"
        cpu: "1000m"
      limits:
        memory: "2048Mi"
        cpu: "2000m"](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-51-2048.jpg)
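
The three quality of service classes can be sketched as a small Java function. This is a simplified illustration, not the Kubernetes implementation: it assumes a pod with a single container, takes CPU in millicores and memory in MiB as plain integers (null meaning "not set"), and uses hypothetical class and method names. It also assumes the Kubernetes behaviour that a request left unset defaults to the limit when a limit is given.

```java
public class QosClassSketch {

    /**
     * Classifies a single-container pod. A null argument means the
     * corresponding request or limit is not set in the pod spec.
     */
    public static String classify(Integer cpuRequestMillis, Integer cpuLimitMillis,
                                  Integer memRequestMiB, Integer memLimitMiB) {
        if (cpuRequestMillis == null && cpuLimitMillis == null
                && memRequestMiB == null && memLimitMiB == null) {
            return "BestEffort";
        }
        // A resource is "pinned" when it has a limit and the request,
        // if given at all, is equal to that limit.
        boolean cpuPinned = cpuLimitMillis != null
                && (cpuRequestMillis == null || cpuRequestMillis.equals(cpuLimitMillis));
        boolean memPinned = memLimitMiB != null
                && (memRequestMiB == null || memRequestMiB.equals(memLimitMiB));
        return (cpuPinned && memPinned) ? "Guaranteed" : "Burstable";
    }

    public static void main(String[] args) {
        // Values from the slide's pod spec: requests are lower than limits.
        System.out.println(classify(1000, 2000, 1024, 2048)); // Burstable
        // Requests equal to limits for both CPU and memory.
        System.out.println(classify(1000, 1000, 2048, 2048)); // Guaranteed
        // Nothing set at all.
        System.out.println(classify(null, null, null, null)); // BestEffort
    }
}
```

Under these assumptions the pod spec shown on the slide lands in the Burstable class, because both requests are lower than the corresponding limits.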

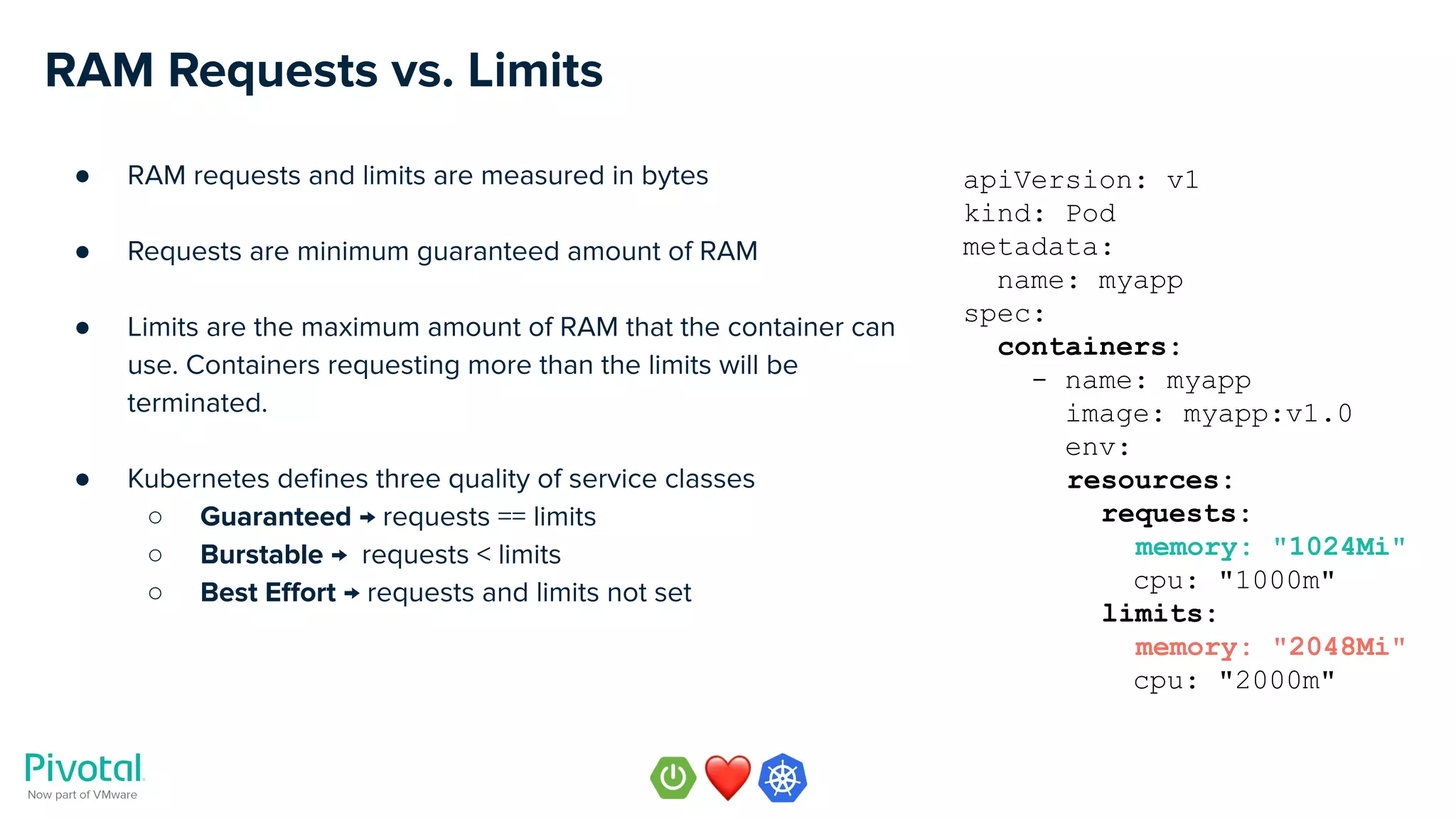

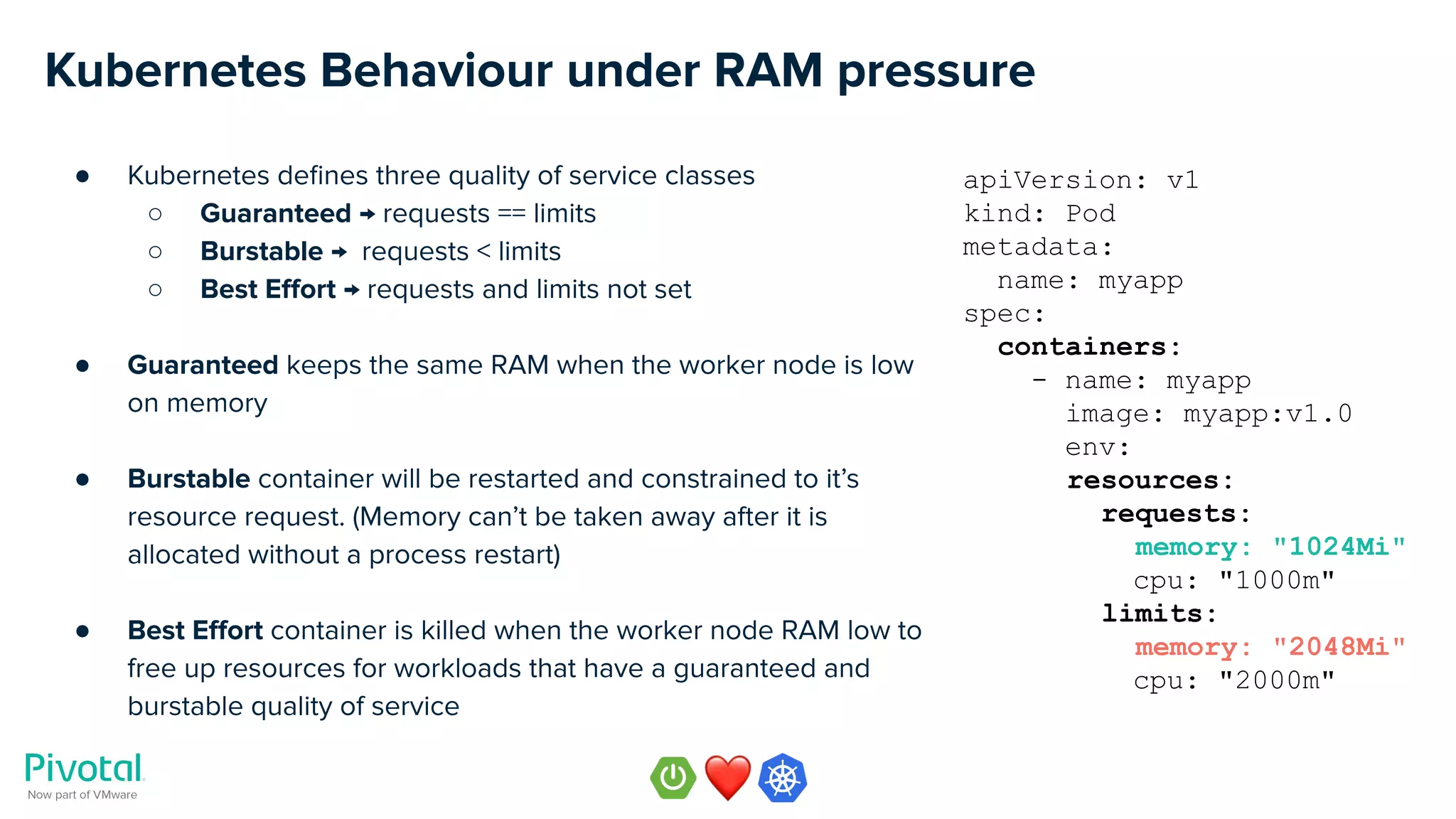

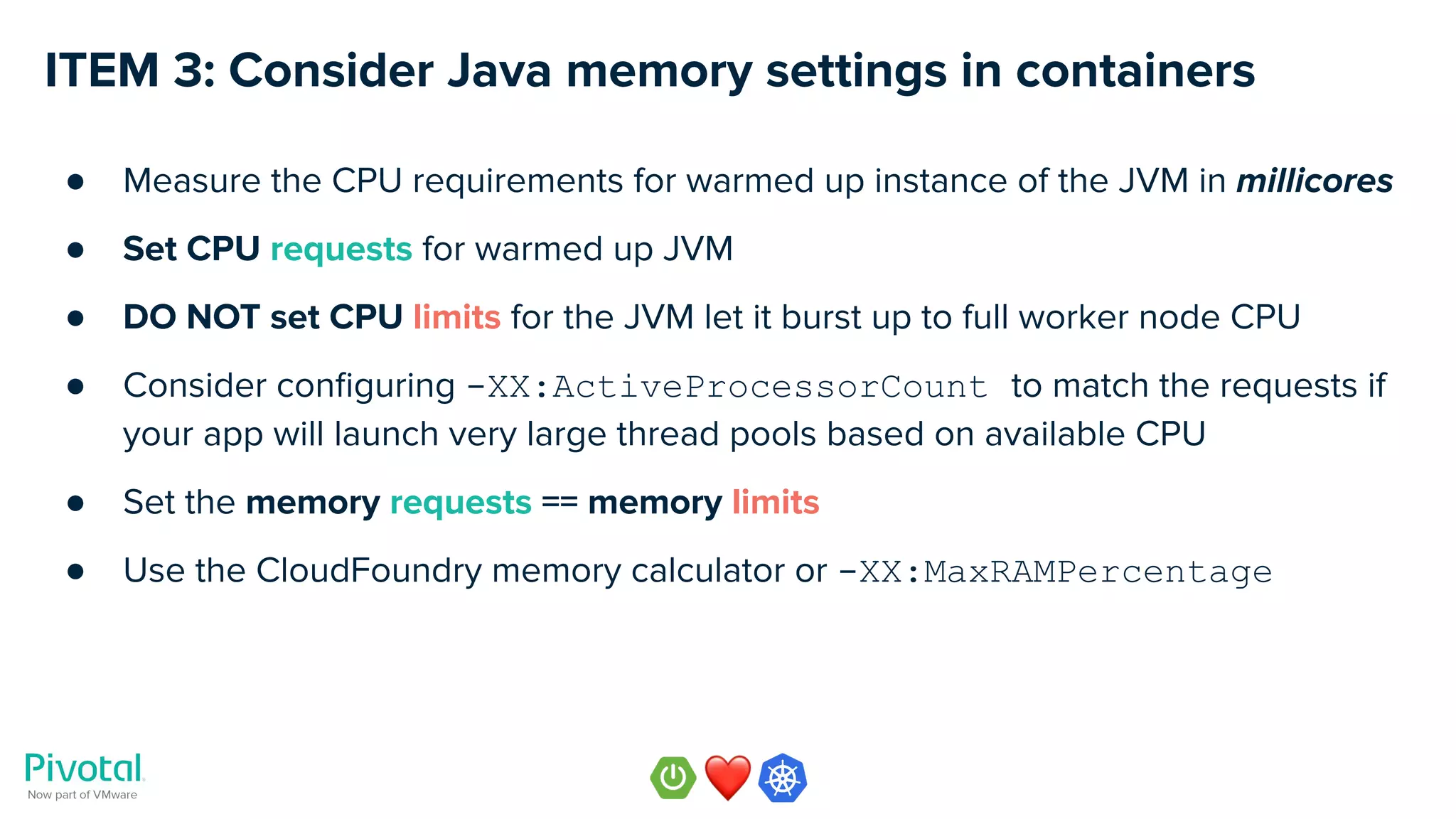

![JVM & Kubernetes RAM Requests & Limits

● Set the memory requests == memory limits

● JVM must be configured so that it does not consume more RAM than the limit across

all memory used by the JVM

○ Metaspace

○ Code cache

○ Heap

○ … etc

● Two choices to configure JVM memory consumption

○ -XX:MaxRAMPercentage=75.0 (Java 8u191 or later, Java 11)

○ Use the CloudFoundry memory calculator

https://github.com/cloudfoundry/java-buildpack-memory-calculator [35]](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-57-2048.jpg)
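
As a rough worked example of the first option, the Java sketch below computes the heap budget that `-XX:MaxRAMPercentage` implies for a given container memory limit. The class and method names are hypothetical, and the 2048 MiB limit is only an illustrative value. The flag sizes the heap only, so Metaspace, code cache, and thread stacks have to fit into whatever remains; the JVM may also round the real figure slightly.

```java
public class HeapBudgetSketch {

    /** Approximate max heap, in MiB, for a container limit and a MaxRAMPercentage value. */
    public static long maxHeapMiB(long containerLimitMiB, double maxRamPercentage) {
        return (long) (containerLimitMiB * maxRamPercentage / 100.0);
    }

    public static void main(String[] args) {
        long limitMiB = 2048;                       // illustrative container memory limit
        long heapMiB = maxHeapMiB(limitMiB, 75.0);  // -XX:MaxRAMPercentage=75.0

        System.out.println("Heap budget (MiB): " + heapMiB);                  // 1536
        System.out.println("Left for non-heap (MiB): " + (limitMiB - heapMiB)); // 512

        // What the running JVM actually chose; inside a container this should be
        // close to the computed budget when the flag is set.
        long actualMiB = Runtime.getRuntime().maxMemory() / (1024 * 1024);
        System.out.println("Actual max heap (MiB): " + actualMiB);
    }
}
```

Running the same class inside the container with `java -XX:MaxRAMPercentage=75.0 HeapBudgetSketch` is a quick way to compare the computed budget with what the JVM reports.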

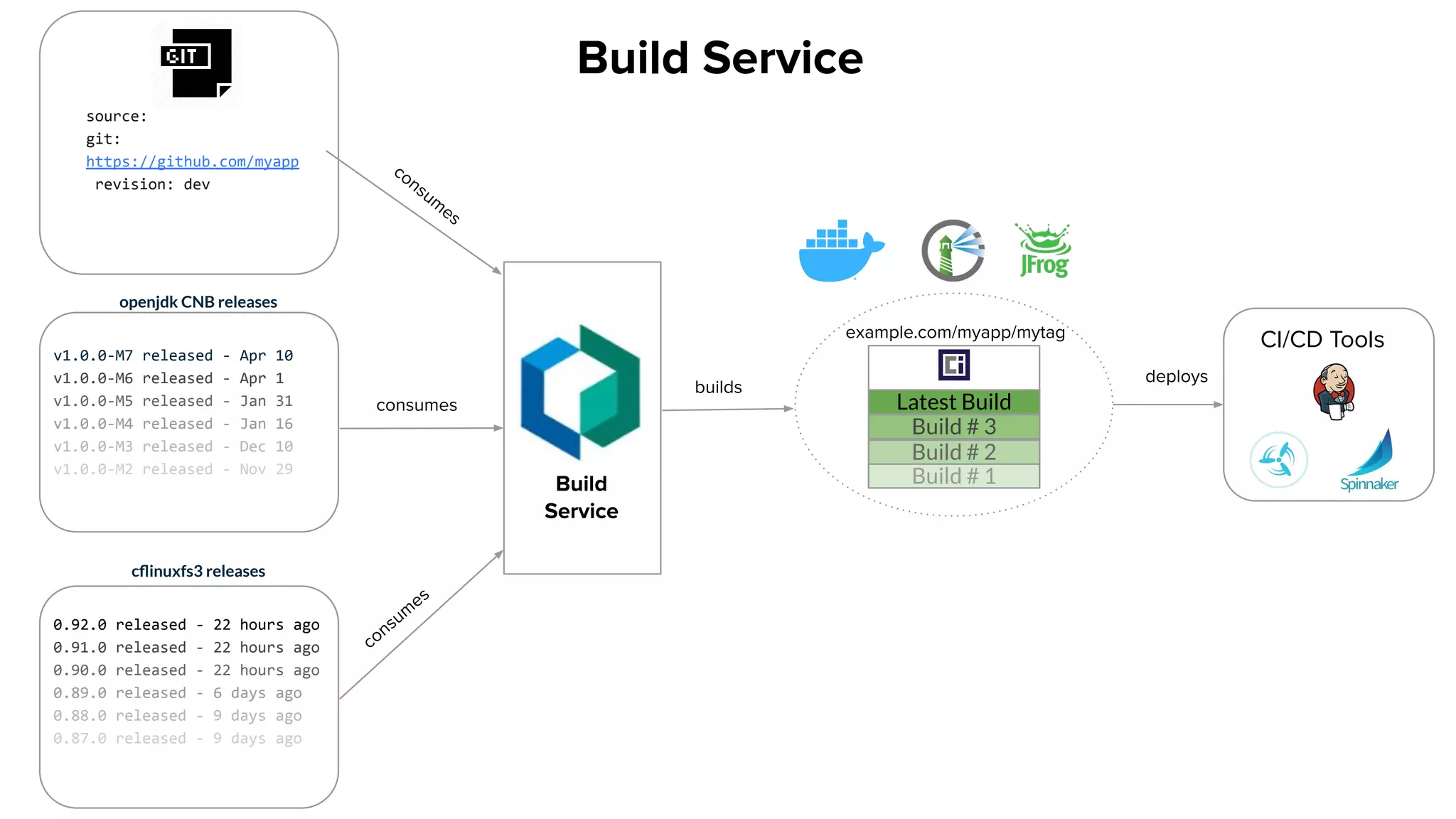

![ITEM 4: Consider using container building automation

● Consider different ways of building a container image for your Spring app

○ Your own multi-stage Dockerfile containing separate file layers

○ Cloud Native Buildpacks [36]

○ Other container building tools, e.g. jib [37]

● Cloud Native Buildpacks are supported in Spring Boot 2.3.x](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-60-2048.jpg)

![Multi-stage Docker Build with Cloud Native Buildpacks

● Rebuild the application binary

./gradlew -b build.gradle.cnb build

● Build the image using cloud native buildpacks

./gradlew -b build.gradle.cnb bootBuildImage

● Run the image locally

docker run -it -p8080:8080 nevenc/spring-music-k8s:with-cnb

● Run the image on Kubernetes

kubectl create deployment spring-music --image=nevenc/spring-music-k8s:with-cnb

kubectl expose deployment spring-music --port=8080 --type=NodePort

● Example code

https://github.com/nevenc/spring-music-k8s [38] [39]

HANDS-ON EXAMPLE](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-61-2048.jpg)

![Multi-stage Docker Build with JIB

● Rebuild the application binary

./gradlew -b build.gradle.jib build

● Build the image using jib

./gradlew -b build.gradle.jib jib

● Run the image locally

docker run -it -p8080:8080 nevenc/spring-music-k8s:with-jib

● Run the image on Kubernetes

kubectl create deployment spring-music --image=nevenc/spring-music-k8s:with-jib

kubectl expose deployment spring-music --port=8080 --type=NodePort

● Example code

https://github.com/nevenc/spring-music-k8s [40] [41]

HANDS-ON EXAMPLE](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-62-2048.jpg)

![Cloud Native Buildpacks (CNB) Bring Developer Productivity to K8s

Pluggable, modular tools that

translate source code into OCI

images.

● Portability via the OCI [42] standard

● Greater modularity

● Faster builds

● Run in local dev environments for faster

troubleshooting

● Developed in partnership with Heroku [43]

● CNCF project [44]](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-64-2048.jpg)

![Cloud Native Buildpacks (CNB) Concepts

● Cloud Native Buildpacks (CNB) [45]

○ pack

○ buildpack

○ builder

○ stack

○ lifecycle](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-65-2048.jpg)

![Learn more about Cloud Native Buildpacks

● Additional resources (videos and articles)

○ Cloud Native Buildpacks on Heroku Blog [46]

○ “CNB: Industry standard build process for kubernetes and beyond” [47]

- by Emily Casey, Pivotal now part of VMware

○ “Pack to the Future: Cloud-Native Buildpacks on k8s” - [48] [49]

- by Joe Kutner, Heroku and Emily Casey, Pivotal now part of VMware

○ “Introducing kpack - a Kubernetes Native Container Build Service” [50]

- by Matthew McNew, Pivotal now part of VMware

● Start exploring Cloud Native Buildpacks and provide your feedback](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-66-2048.jpg)

![Cloud Native Buildpacks Demo

● b2b app is a modular app with three app components (all built in Spring Boot)

○ b2b-accounts, b2b-confirmation, b2b-payments

● They rely on two backing services

○ redis, rabbitmq

● The build system includes

○ Concourse for driving pipelines, Kpack build system, Github Repo, Docker registry

● We will look at two use cases

○ CASE 1: Updating a component (e.g. b2b-accounts UI change)

○ CASE 2: Updating a builder with a more recent Java runtime, patching all app images

● Example code

https://github.com/turbots/b2b [51]

HANDS-ON EXAMPLE](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-69-2048.jpg)

![Two additional videos not to miss!

“Spring Cloud on Kubernetes” [52]

by Ryan Baxter [53] and Alexandre Roman [54],

Platform Architects at Pivotal, now part of VMware

“Best Practices to Spring to Kubernetes

Easier and Faster” [55]

by Ray Tsang [56], Developer Advocate, Google](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-71-2048.jpg)

![Reference Links (1)

[1] https://wiki.musl-libc.org/functional-differences-from-glibc.html

[2] http://www.etalabs.net/compare_libcs.html

[3] https://openjdk.java.net/jeps/8229469

[4] https://www.infoq.com/news/2019/06/docker-vulnerable-java/

[5] https://docs.docker.com/docker-hub/official_images/

[6] https://www.docker.com/sites/default/files/d8/2018-12/Docker-Technology-Partner-Program-Guide-120418.pdf

[7] http://hg.openjdk.java.net

[8] http://jdk.java.net/

[9] https://adoptopenjdk.net/

[10] https://twitter.com/mraible

[11] https://developer.okta.com/blog/2019/01/16/which-java-sdk

[12] https://github.com/docker-library/official-images/pull/5710#issuecomment-483219593

[13] https://hub.docker.com/_/adoptopenjdk

[14] https://blog.adoptopenjdk.net/category/test

[15] https://github.com/AdoptOpenJDK/openjdk-docker

[16] https://github.com/AdoptOpenJDK/openjdk-docker/blob/master/slim-java.sh

[17] https://github.com/AdoptOpenJDK/openjdk-docker/blob/master/11/jre/alpine/Dockerfile.hotspot.releases.full

[18] https://github.com/sgerrand/alpine-pkg-glibc/

[19] https://hub.docker.com/u/adoptopenjdk

[20] https://en.wikipedia.org/wiki/Java_version_history](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-72-2048.jpg)

![Reference Links (2)

[21] https://hub.docker.com/_/adoptopenjdk

[22] https://hub.docker.com/r/adoptopenjdk/openjdk11

[23] https://pivotal.io/pivotal-spring-runtime

[24] https://github.com/nevenc/spring-music-k8s

[25] https://asciinema.org/a/YYtaKSboDzRIPi97gjMLN79vv

[26] https://github.com/nevenc/spring-music-k8s

[27] https://asciinema.org/a/WFndMwtbhl7yQQ2w1bQp1XbZZ

[28] https://github.com/spring-projects/spring-boot/wiki/Spring-Boot-2.3.0-M1-Release-Notes

[29] https://spring.io/team/pwebb

[30] https://spring.io/blog/2020/01/27/creating-docker-images-with-spring-boot-2-3-0-m1

[31] https://github.com/nevenc/spring-music-k8s

[32] https://asciinema.org/a/z2UxCHespgtdZyeJ7KRmWisPl

[33] https://kubernetes.io/docs/concepts/configuration/manage-compute-resources-container/

[34] https://kubernetes.io/docs/tasks/configure-pod-container/assign-cpu-resource/

[35] https://github.com/cloudfoundry/java-buildpack-memory-calculator

[36] https://buildpacks.io/#learn-more

[37] https://github.com/GoogleContainerTools/jib

[38] https://github.com/nevenc/spring-music-k8s

[39] https://asciinema.org/a/dzoxgoqMEEt9Y8AP406kBPKxk

[40] https://github.com/nevenc/spring-music-k8s](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-73-2048.jpg)

![Reference Links (3)

[41] https://asciinema.org/a/spE1JEtoC1TimQak1yI2OSs5A

[42] https://github.com/opencontainers/image-spec

[43] https://twitter.com/PivotalPlatform/status/1113426937685446657

[44] https://landscape.cncf.io/selected=buildpacks

[45] https://buildpacks.io/docs/concepts/

[46] https://blog.heroku.com/docker-images-with-buildpacks

[47] https://content.pivotal.io/blog/cloud-native-buildpacks-for-kubernetes-and-beyond

[48] https://www.youtube.com/watch?v=J2SXkmOo8iQ

[49] https://www.slideshare.net/SpringCentral/pack-to-the-future-cloudnative-buildpacks-on-k8s

[50] https://content.pivotal.io/blog/introducing-kpack-a-kubernetes-native-container-build-service

[51] https://github.com/turbots/b2b

[52] https://youtu.be/pYpruogcb6w

[53] https://twitter.com/ryanjbaxter

[54] https://twitter.com/alexandre_roman

[55] https://youtu.be/YTPUNesUIbI

[56] https://twitter.com/saturnism](https://image.slidesharecdn.com/webinar-effectivespringonkubernetes-200212004707/75/Effective-Spring-on-Kubernetes-74-2048.jpg)