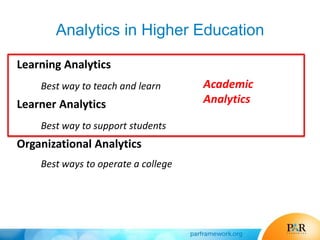

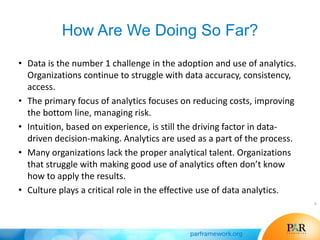

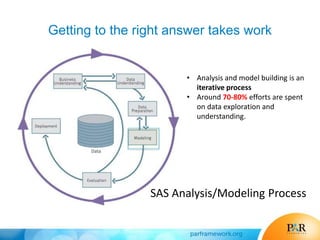

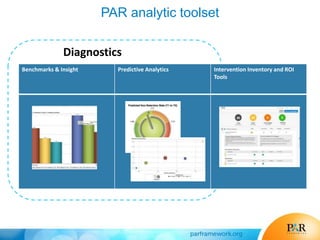

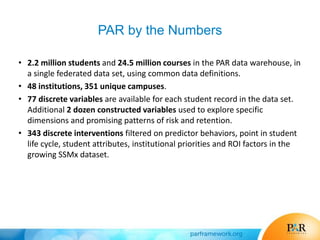

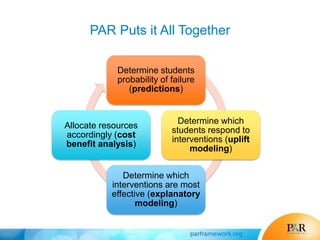

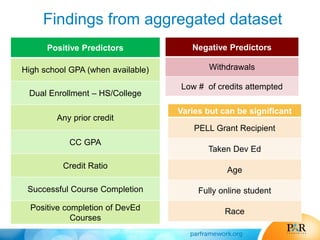

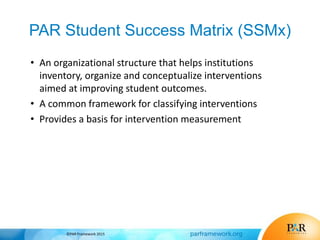

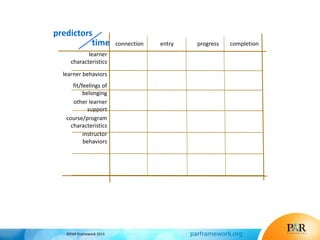

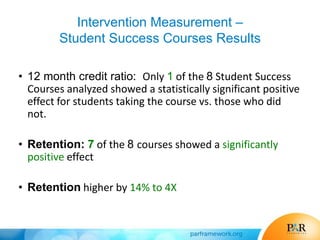

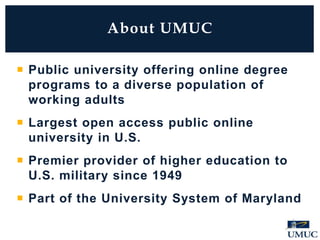

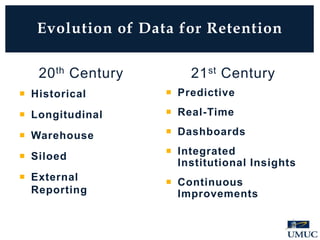

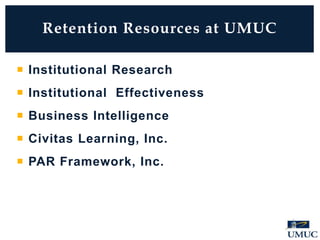

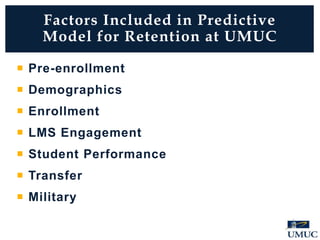

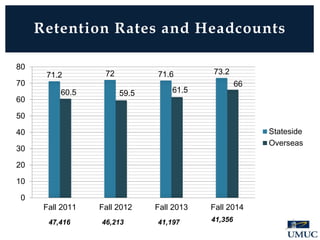

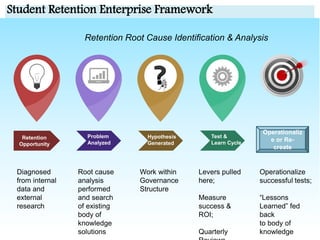

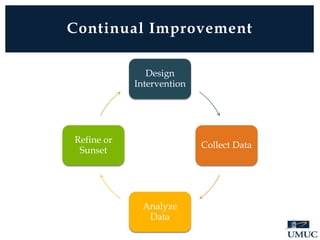

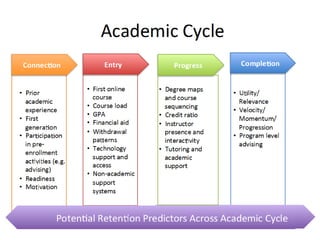

This document discusses how analytics can be used to improve student success. It opens with a session description: the session shows how analytics identify opportunities to improve student success, and participants learn how to connect predictions of risk to the interventions most likely to work under different conditions. The document then discusses how data is changing education and how analytics can be applied in areas such as enrollment management, student services, and program design. It gives examples of institutions that have used predictive analytics to improve retention, successful course completion, and graduation rates. It emphasizes linking predictions of risk to specific interventions and measuring the impact and ROI of different interventions.