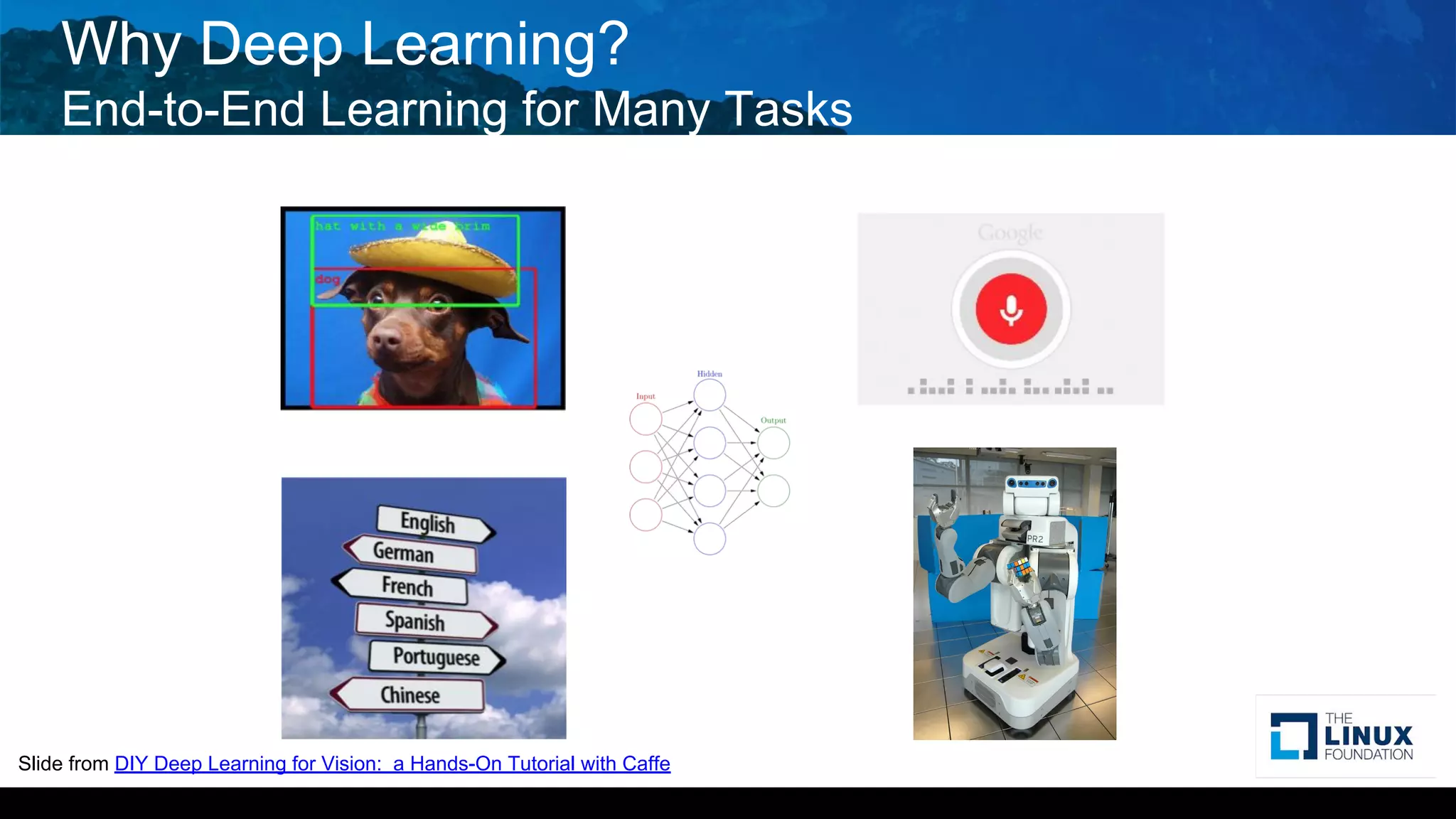

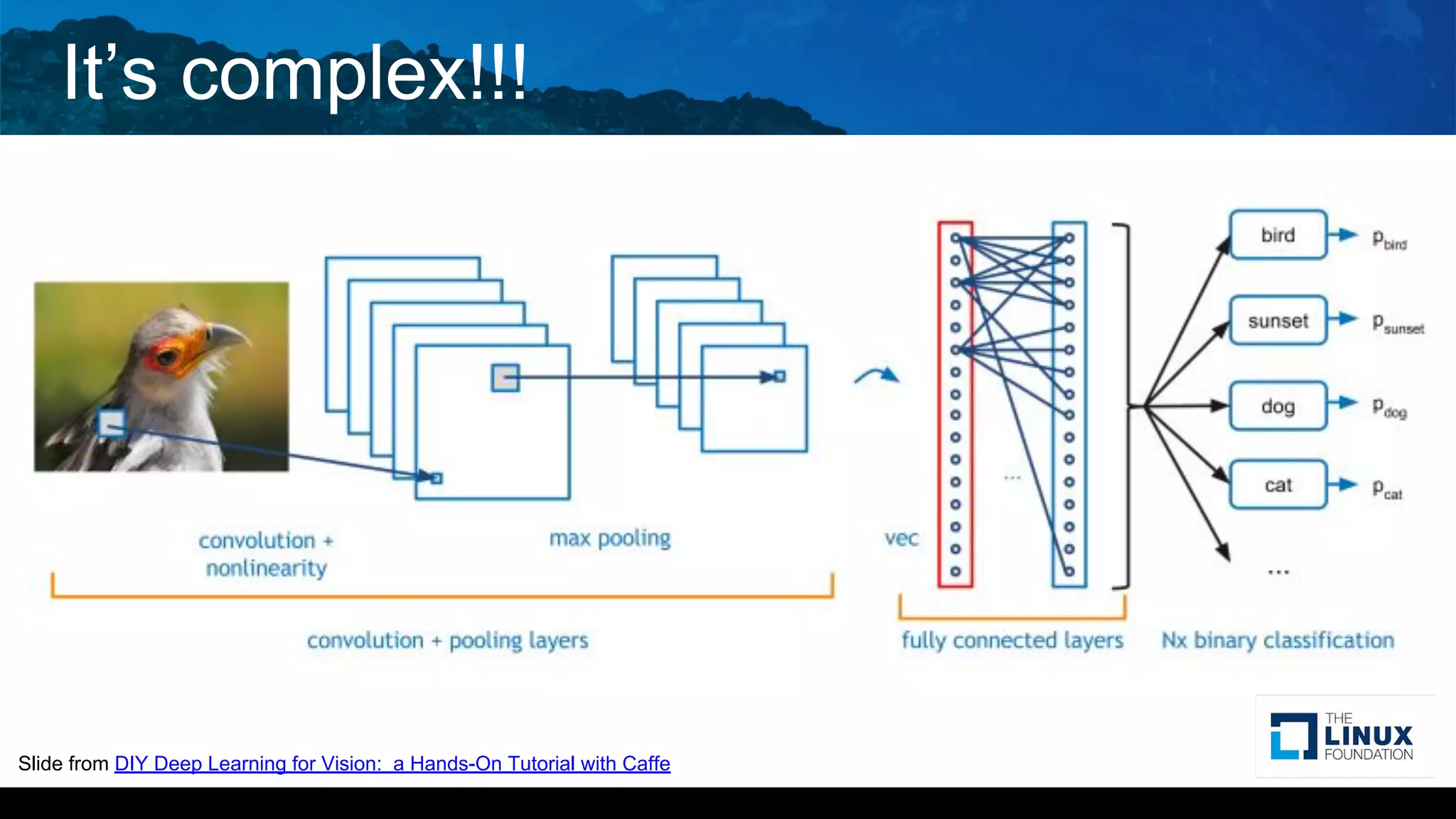

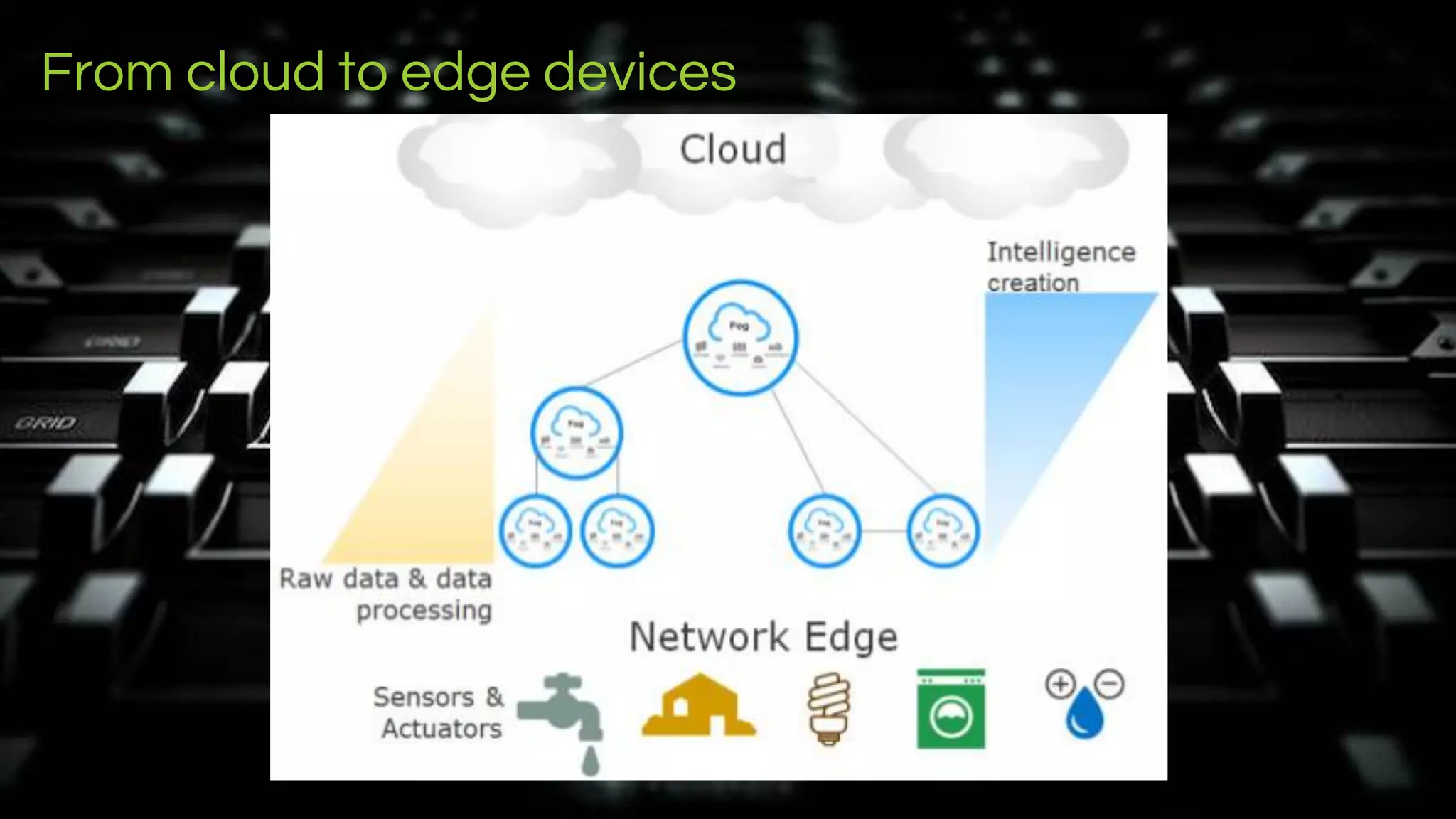

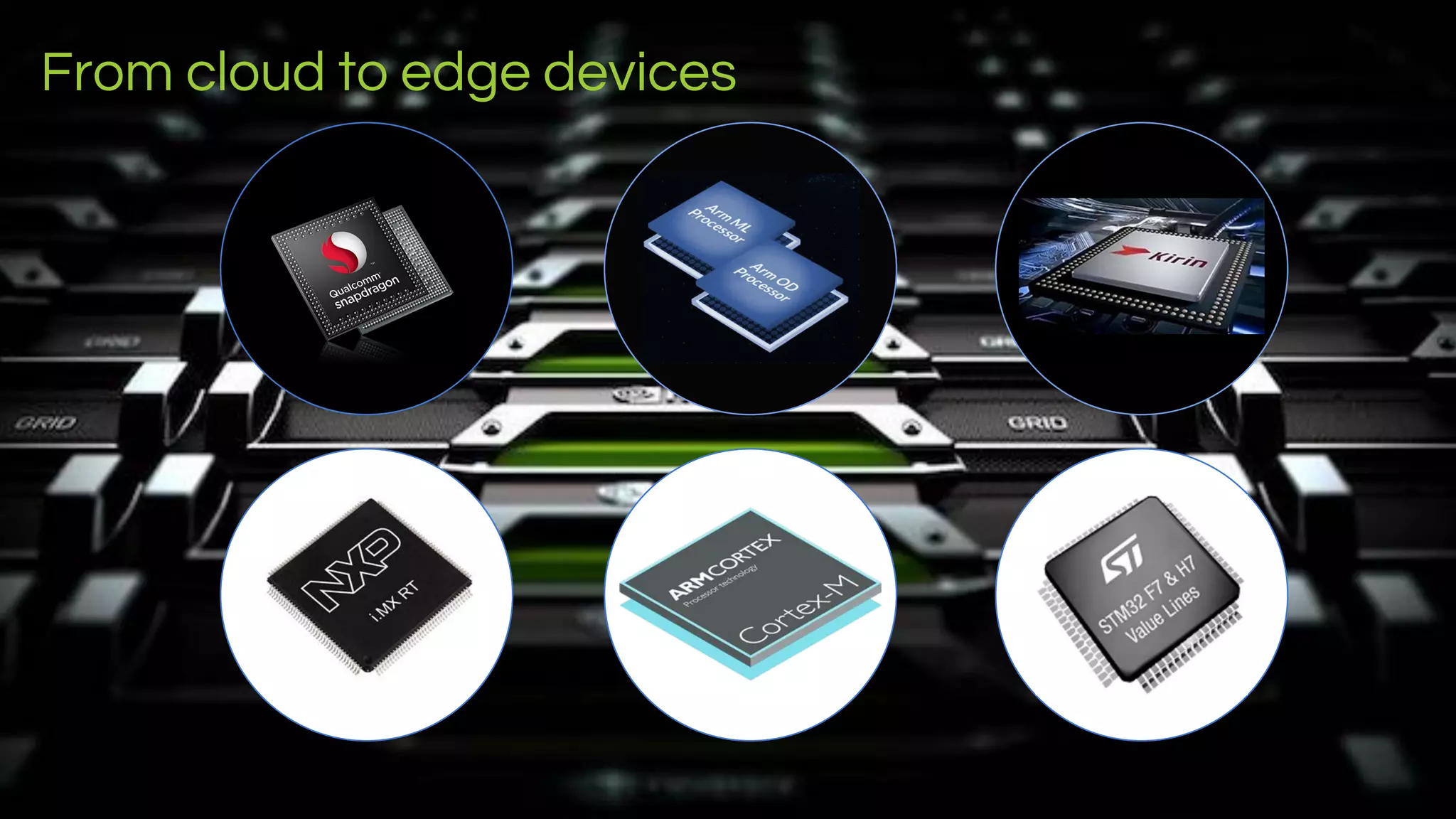

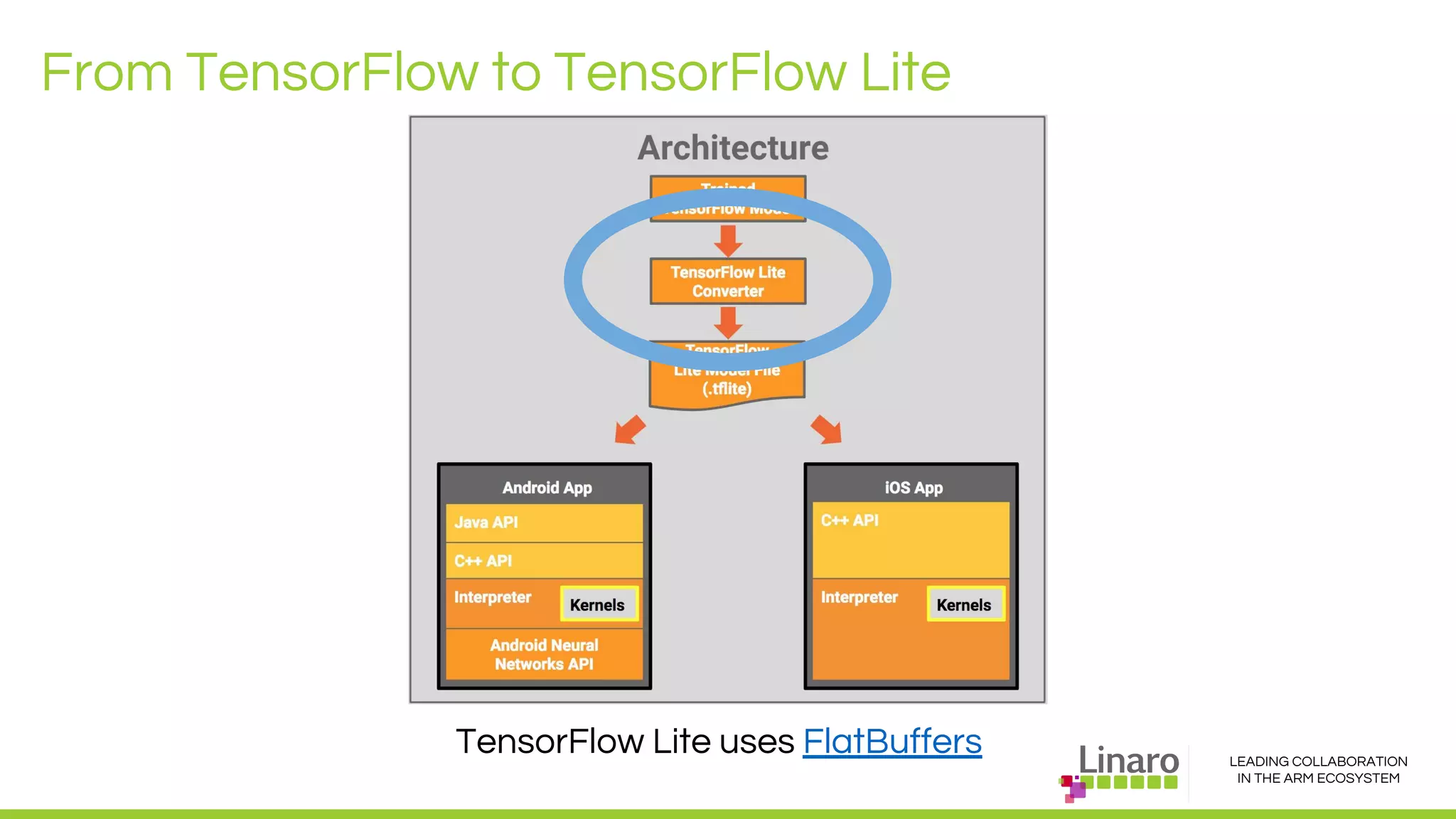

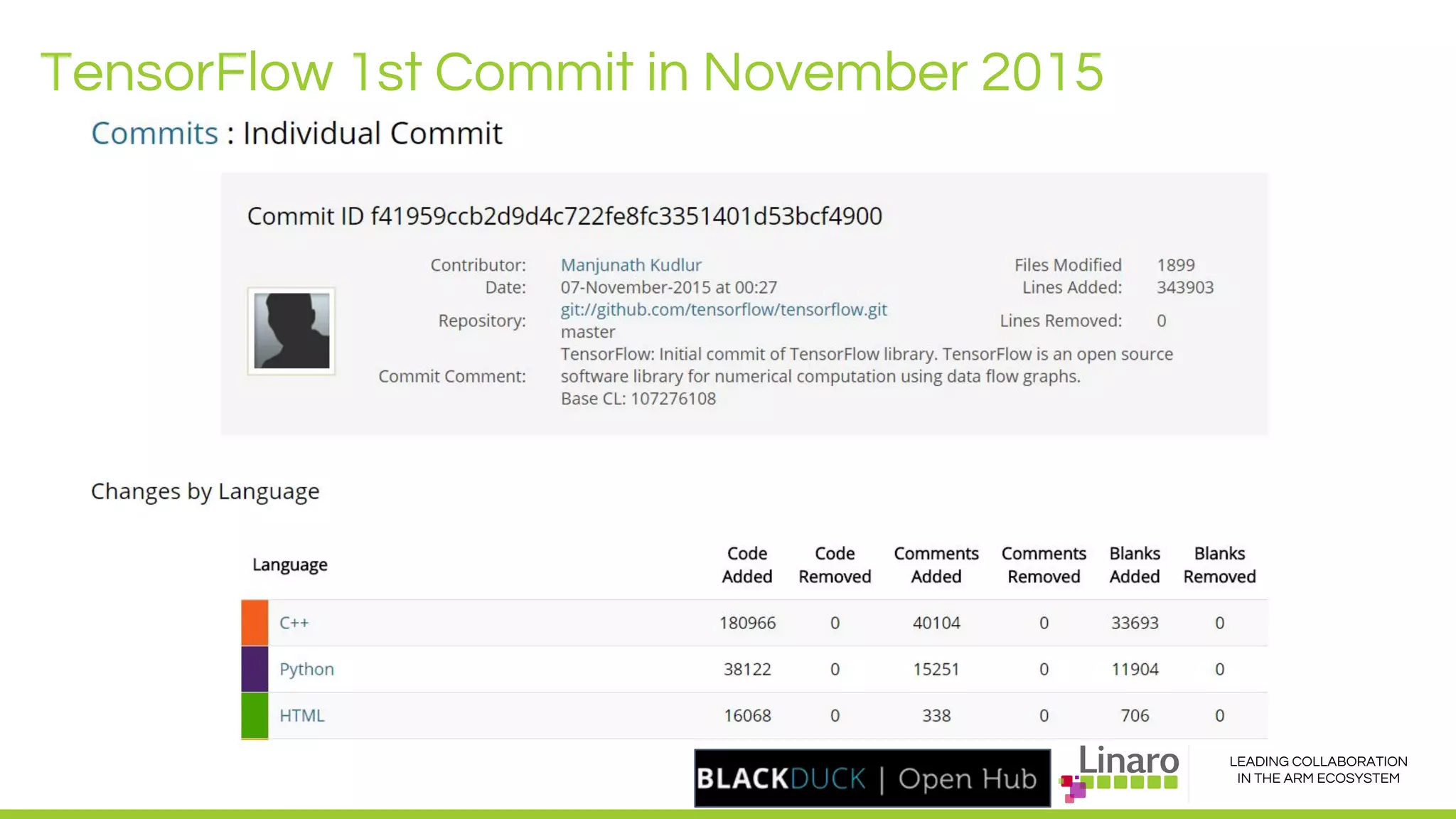

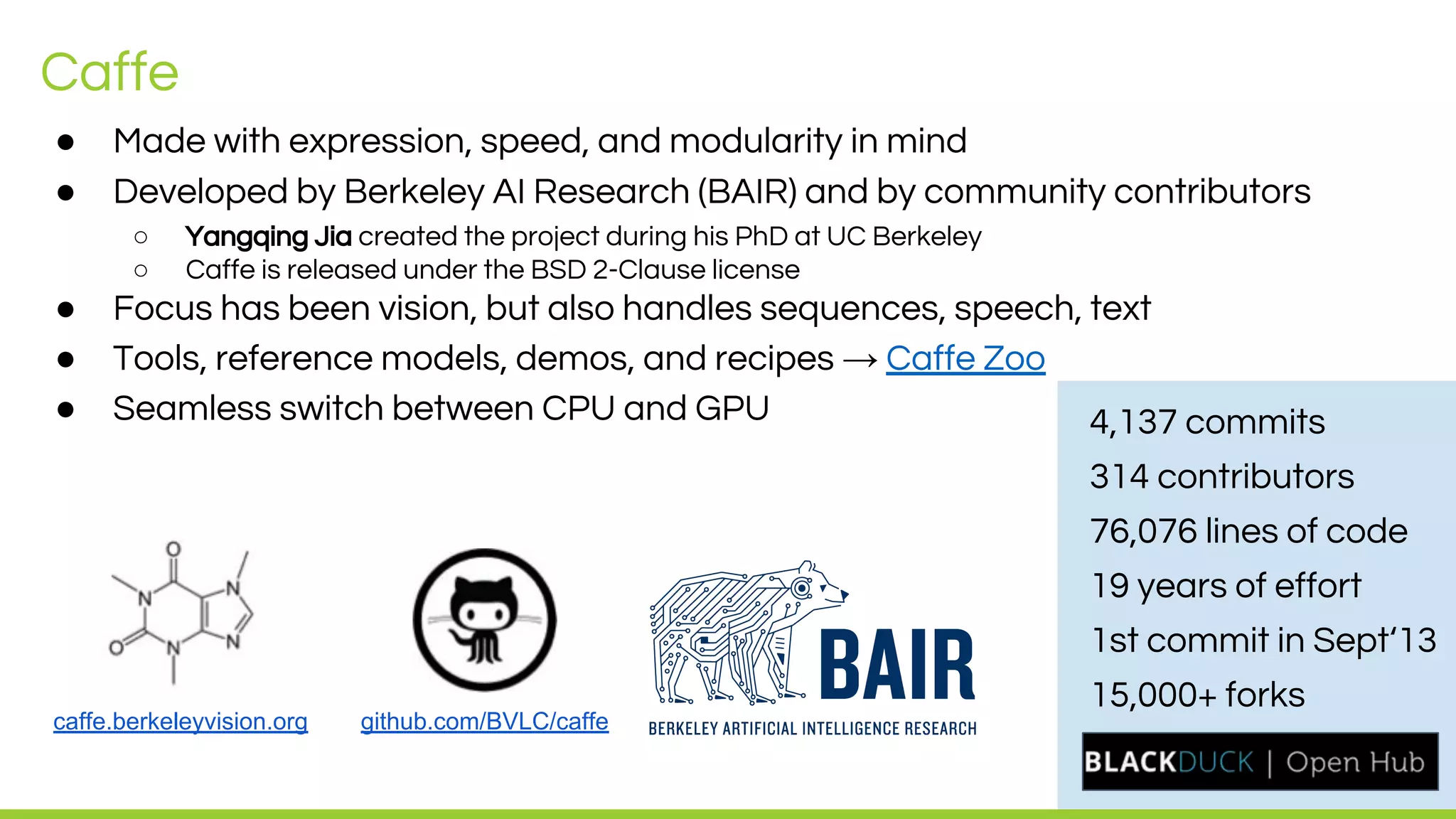

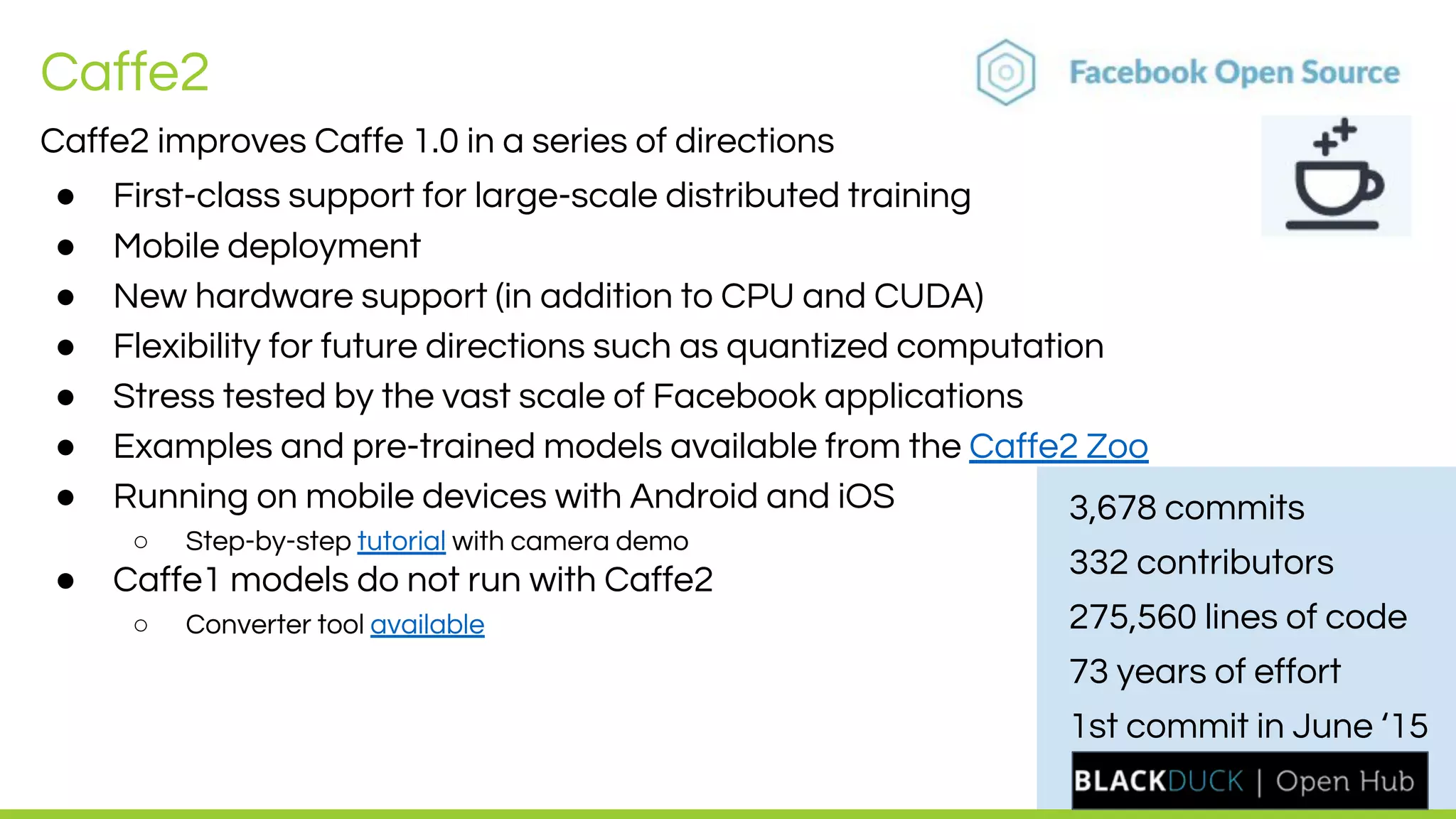

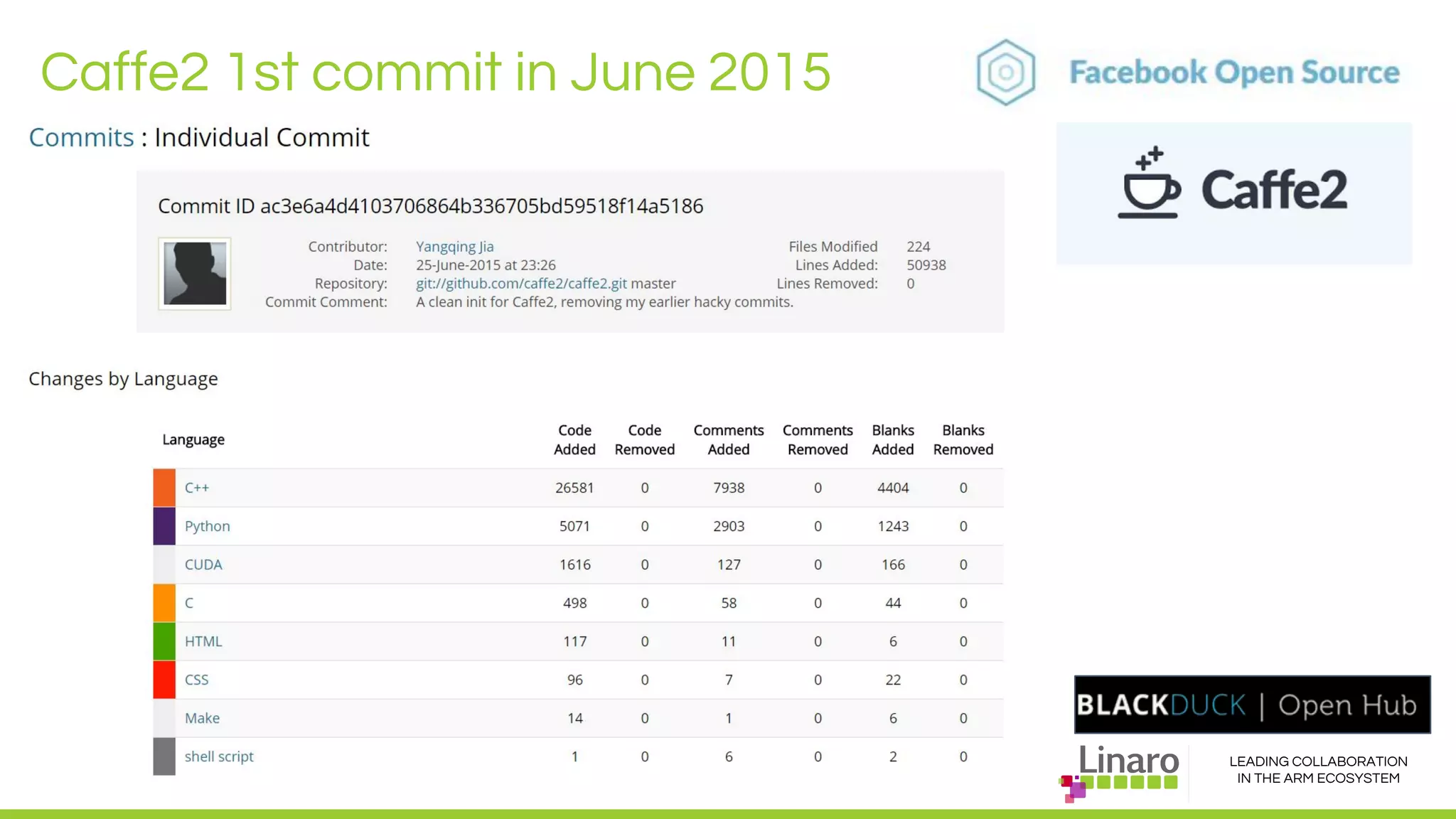

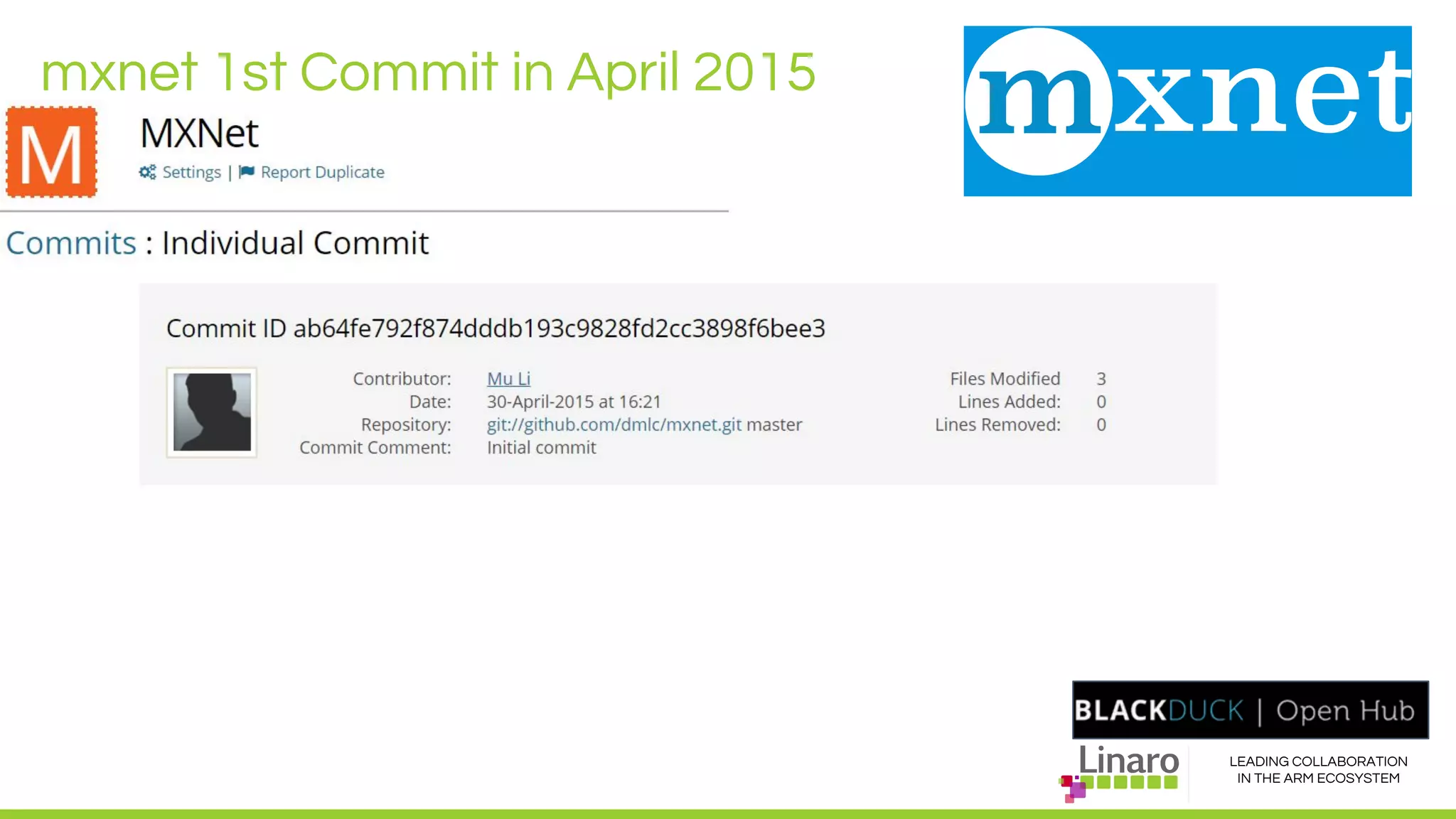

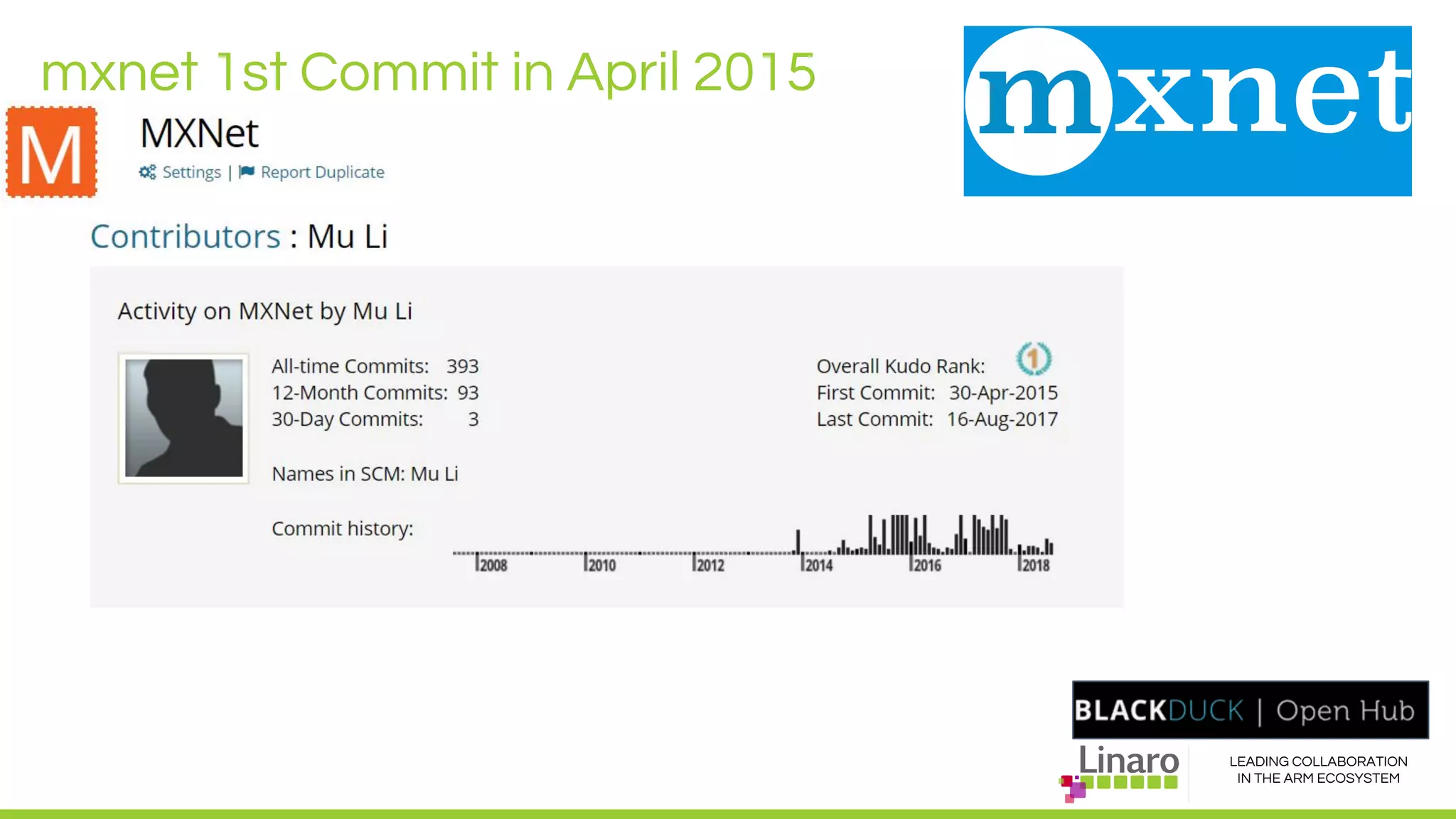

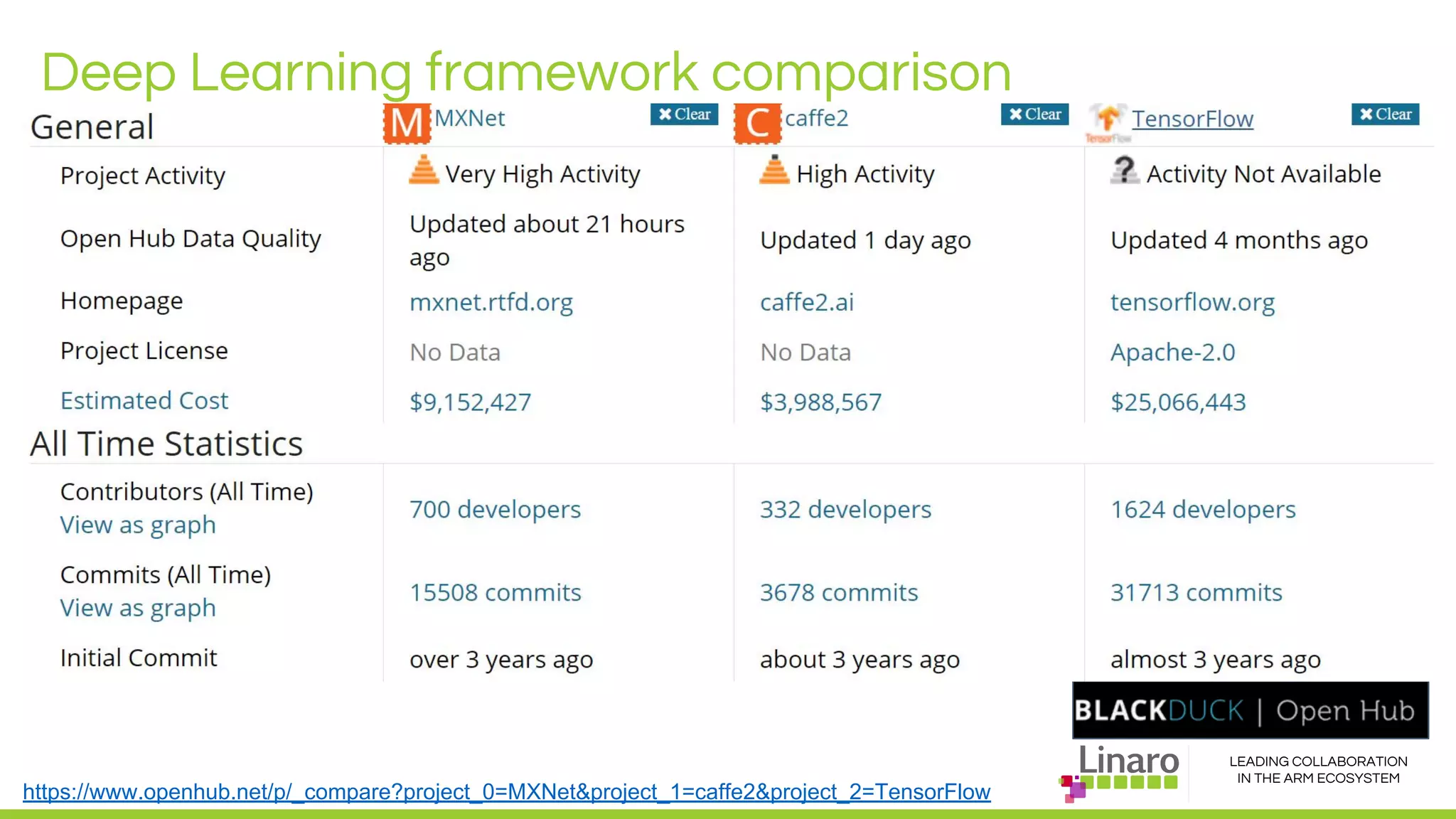

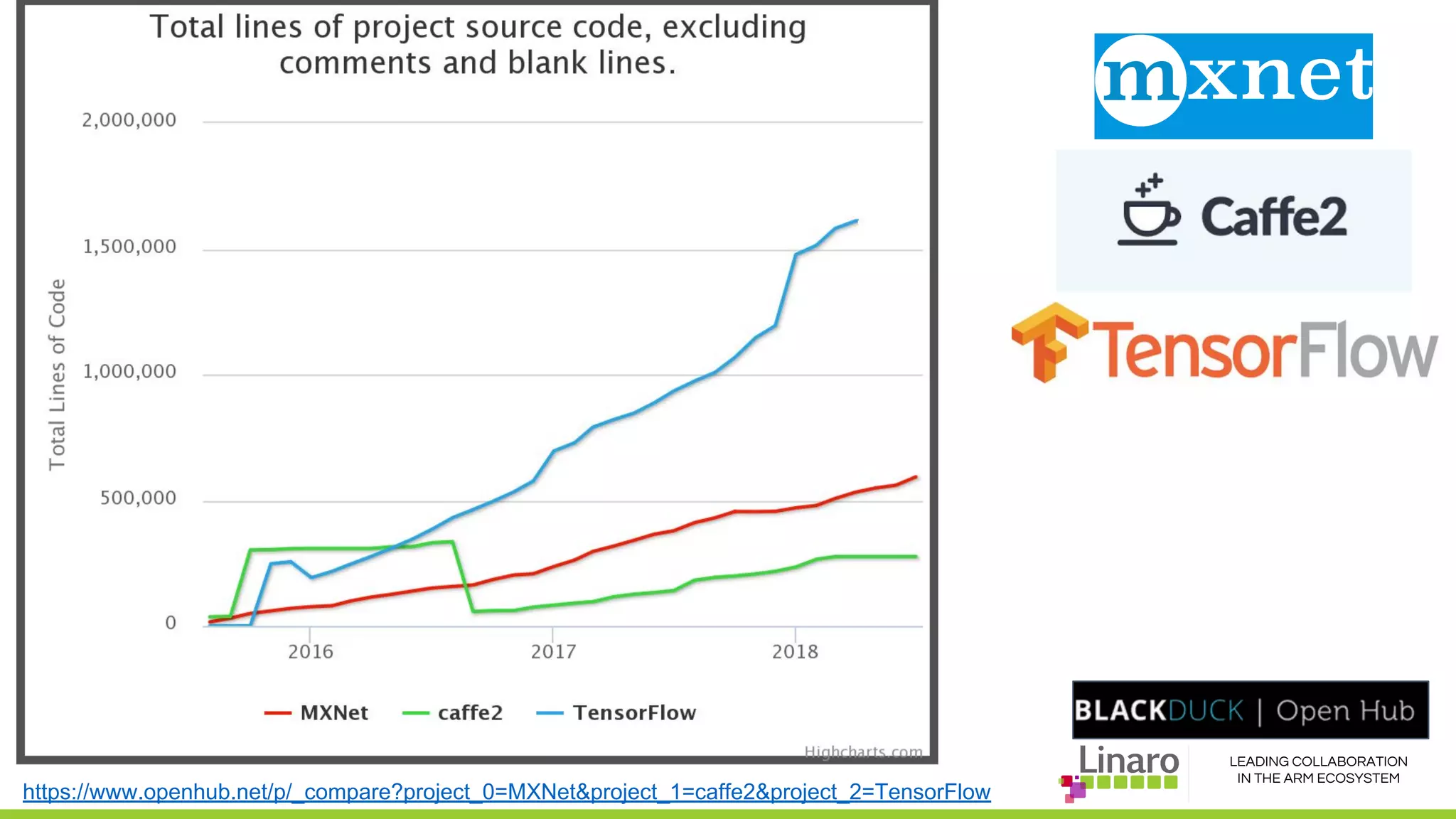

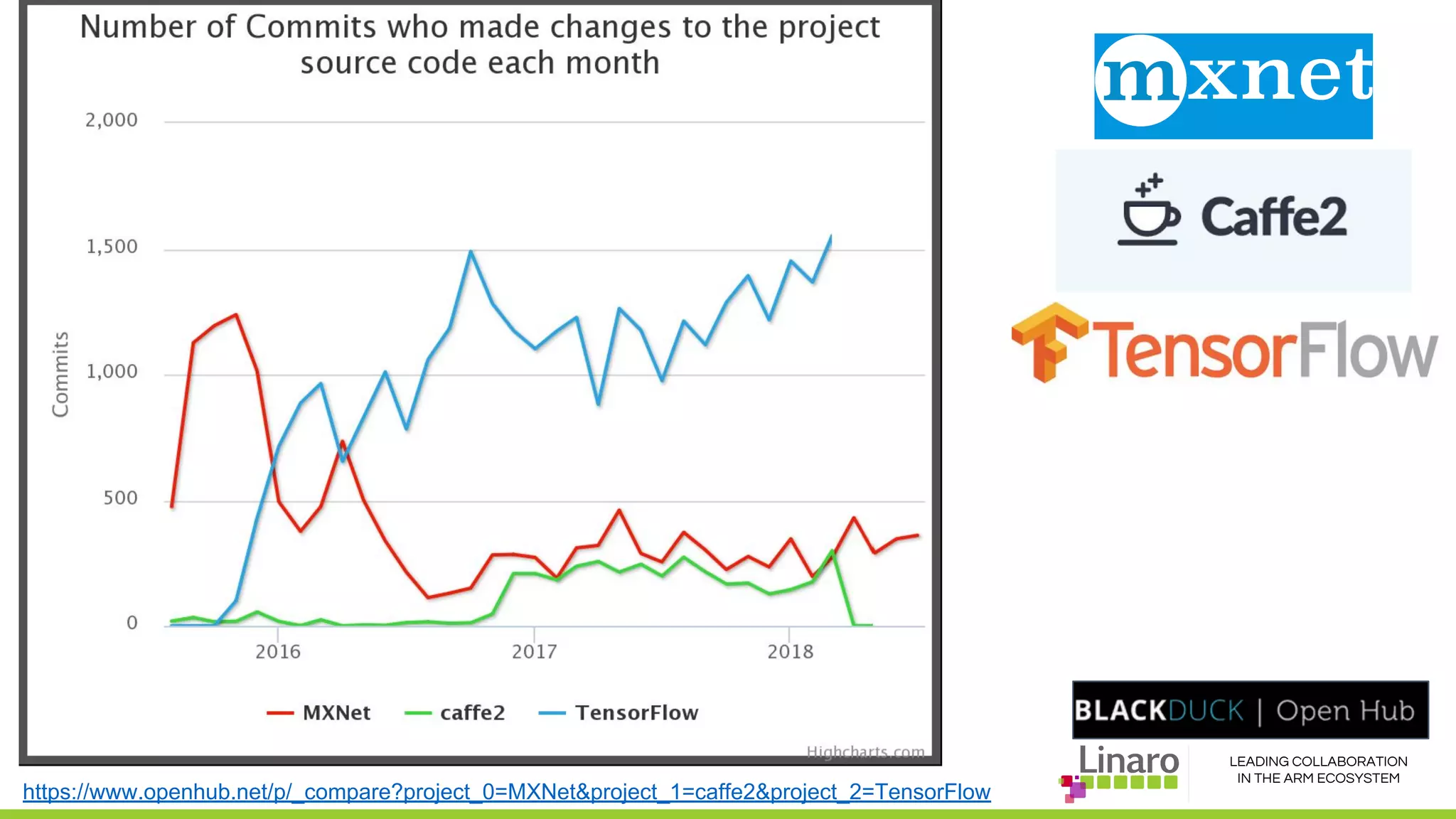

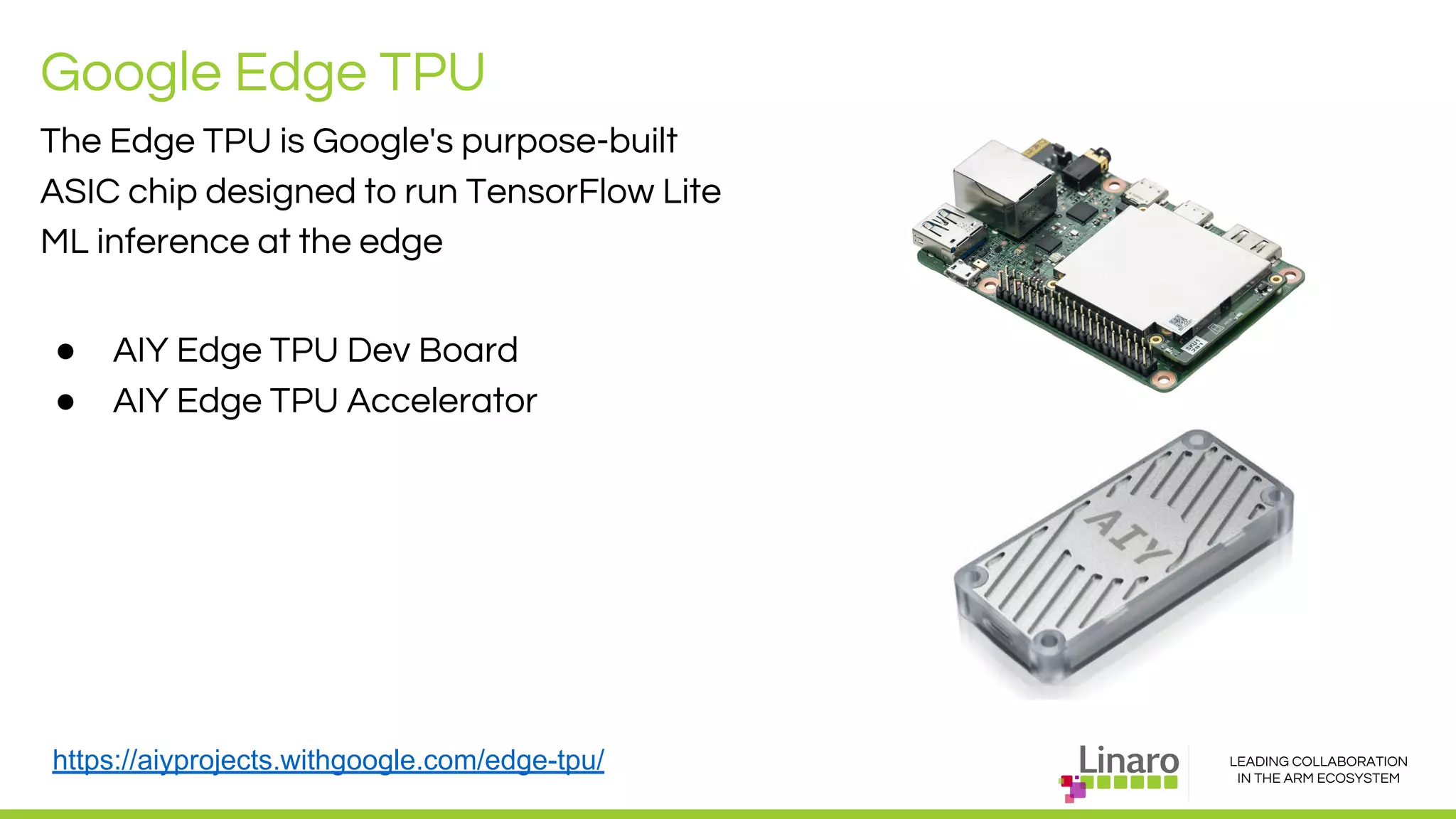

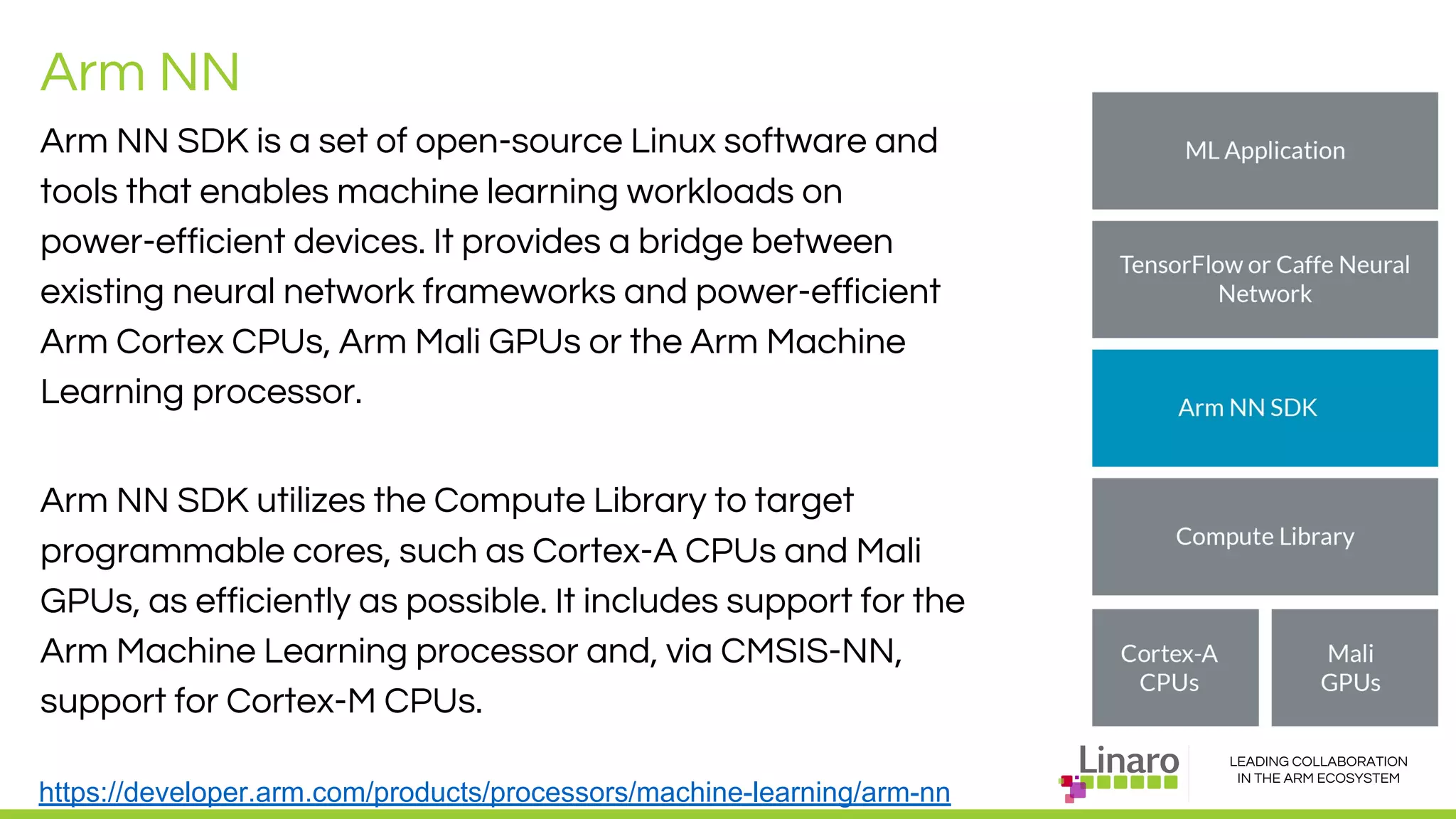

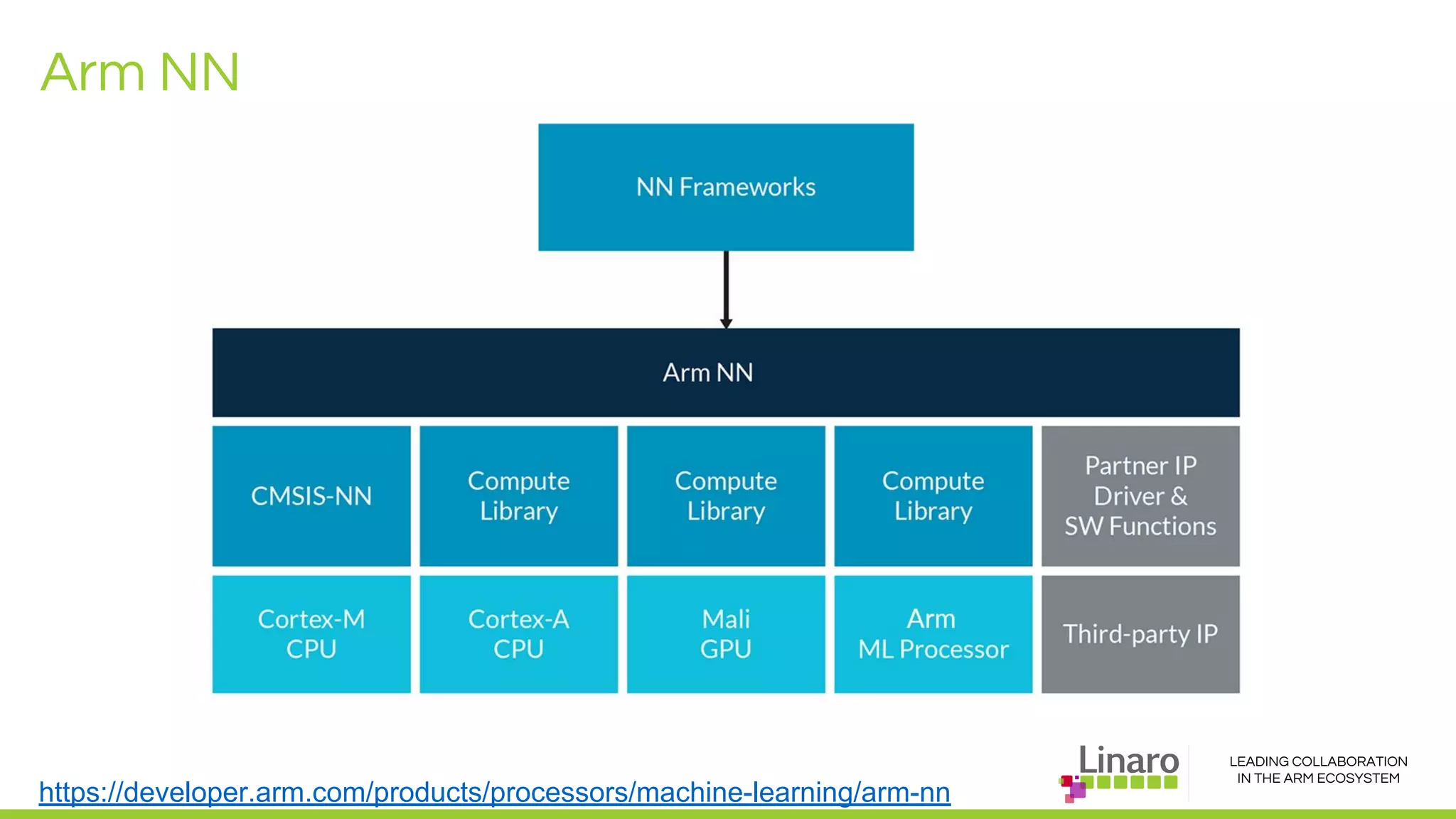

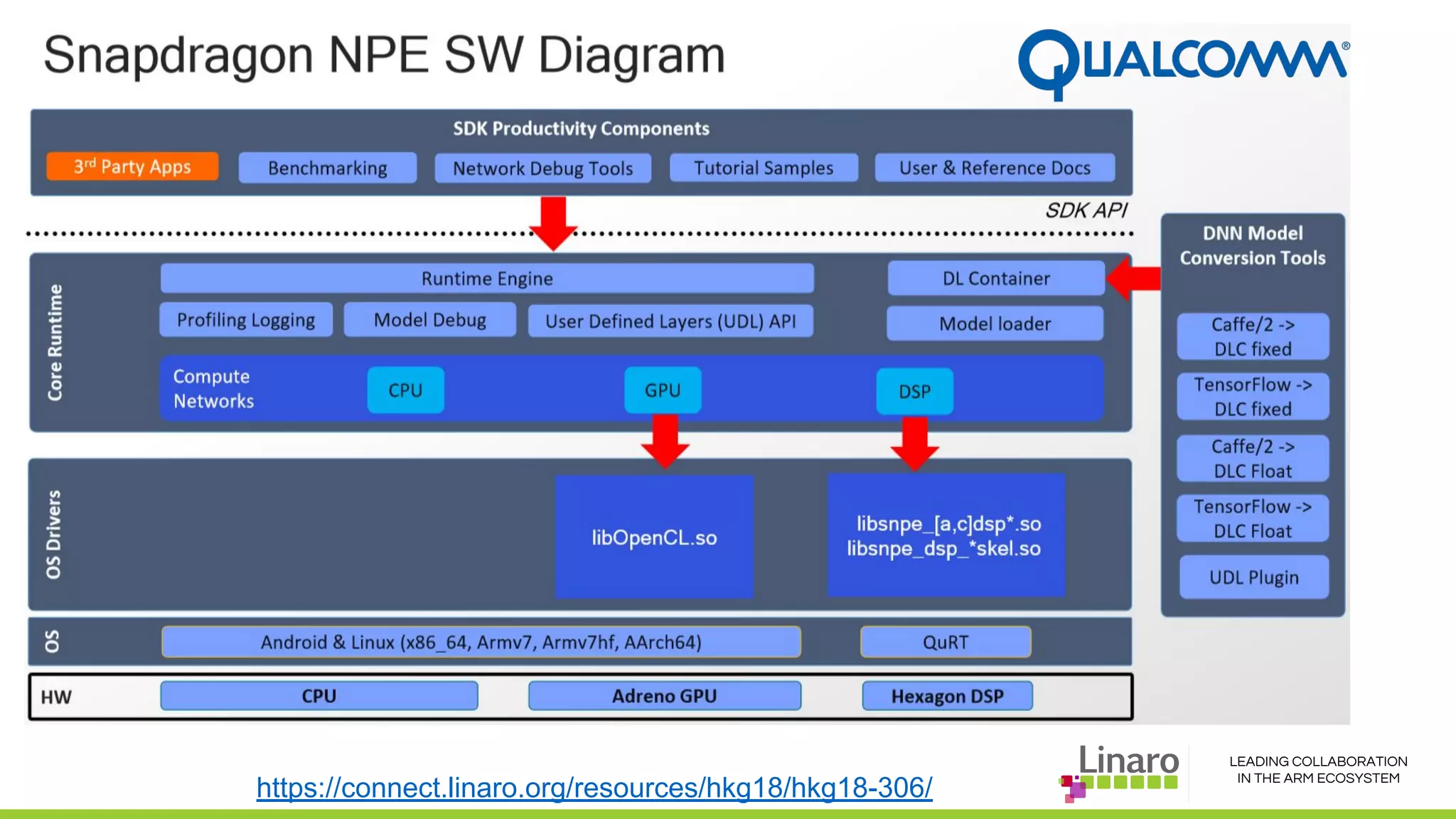

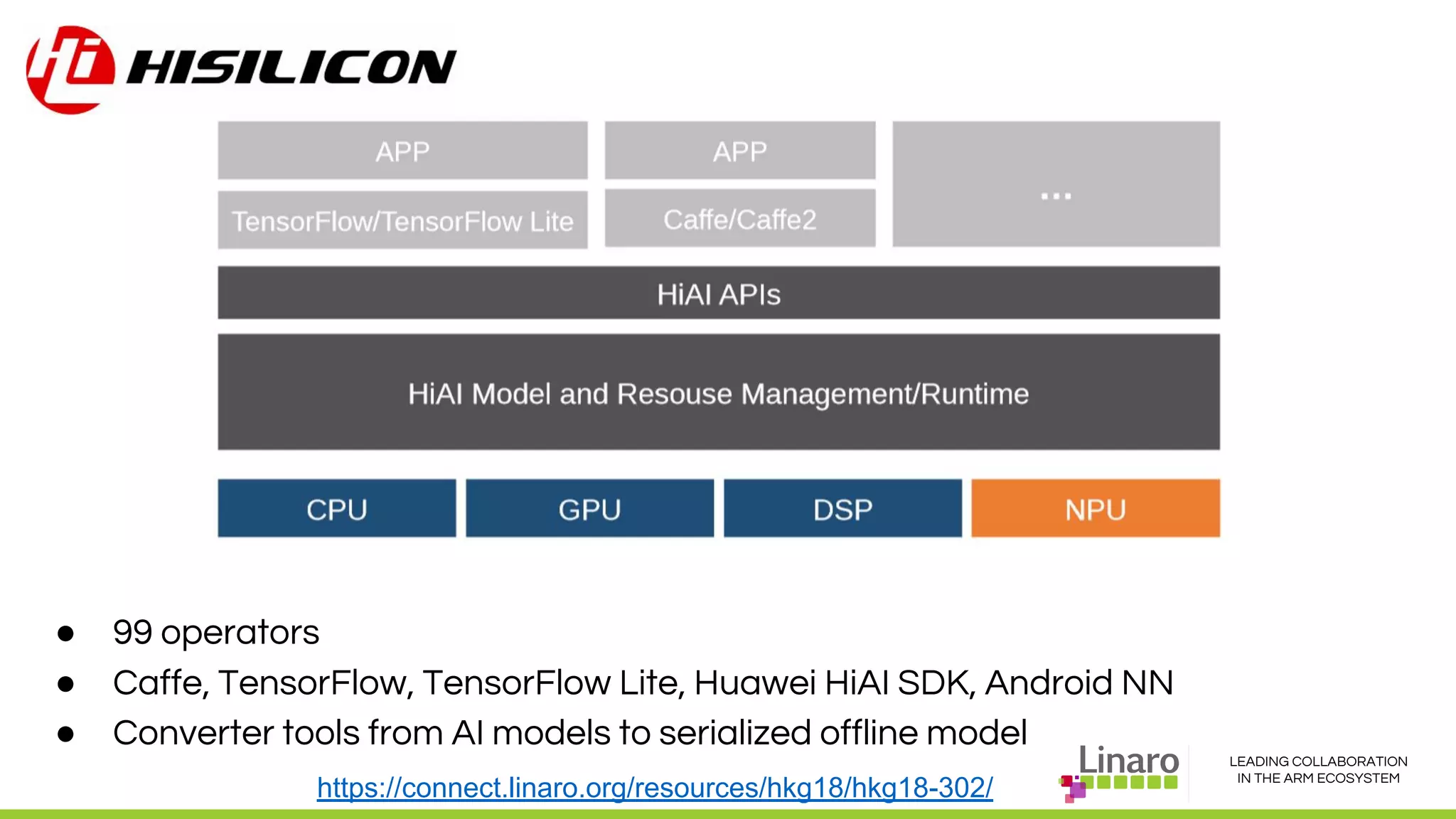

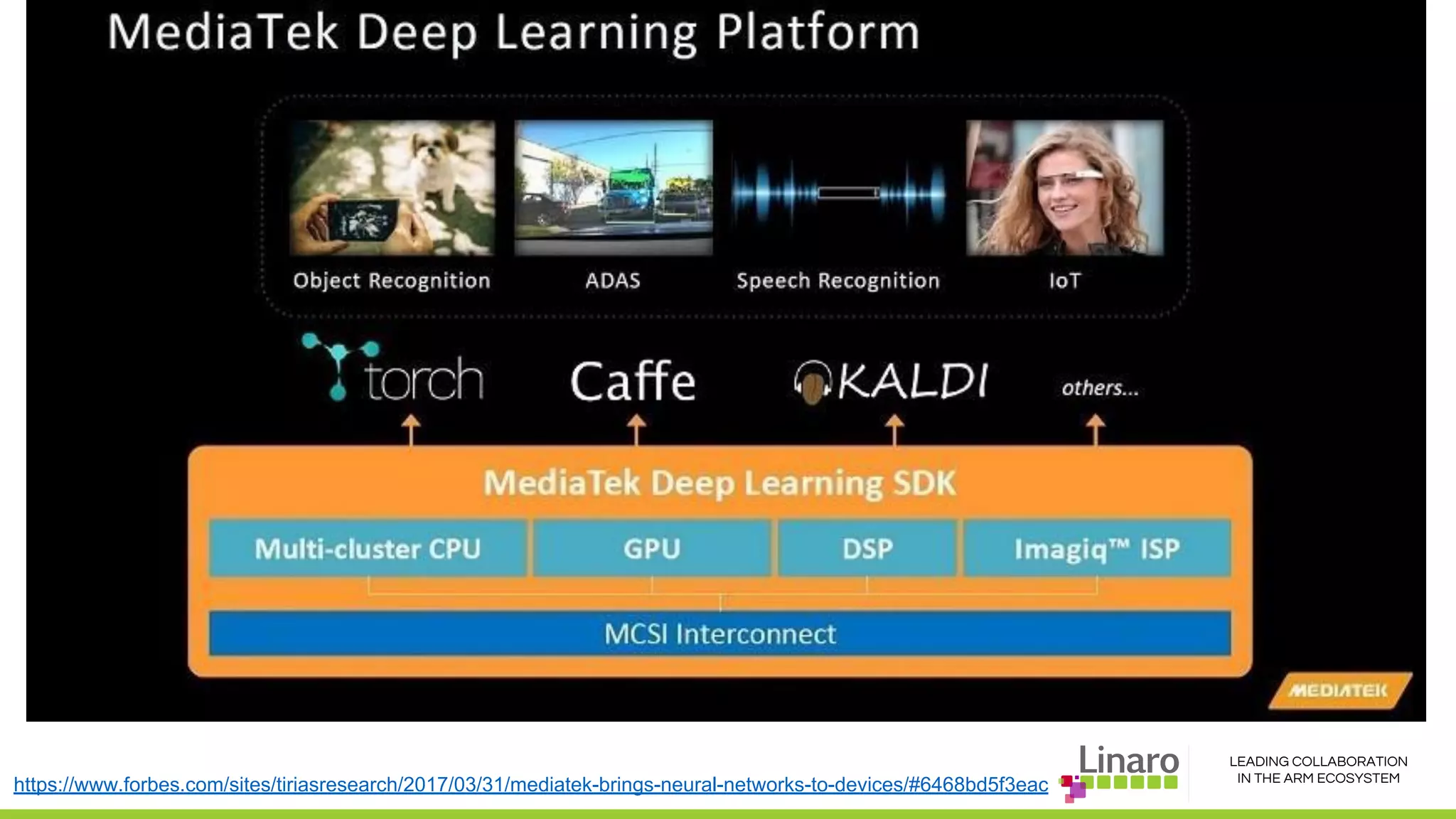

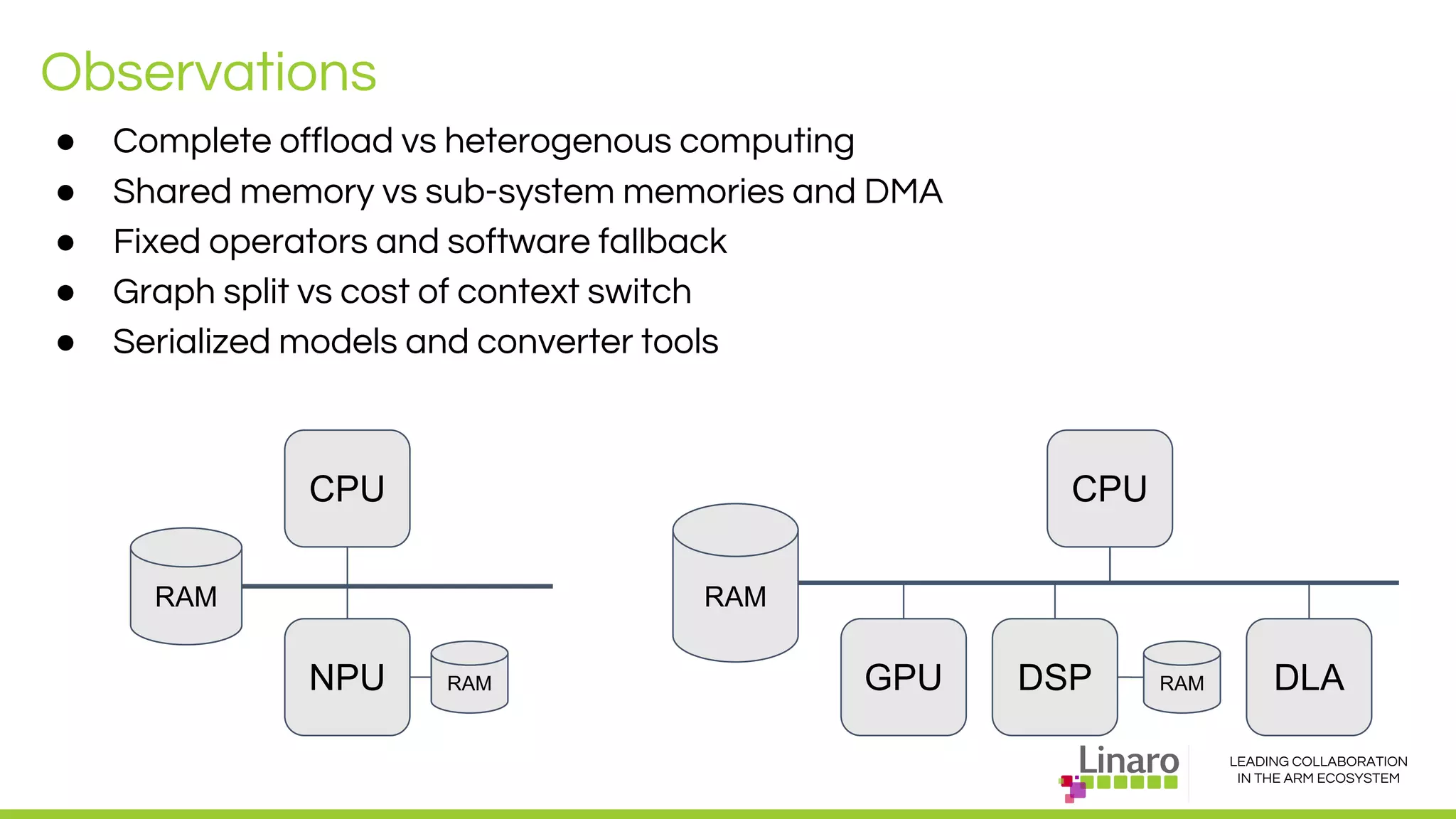

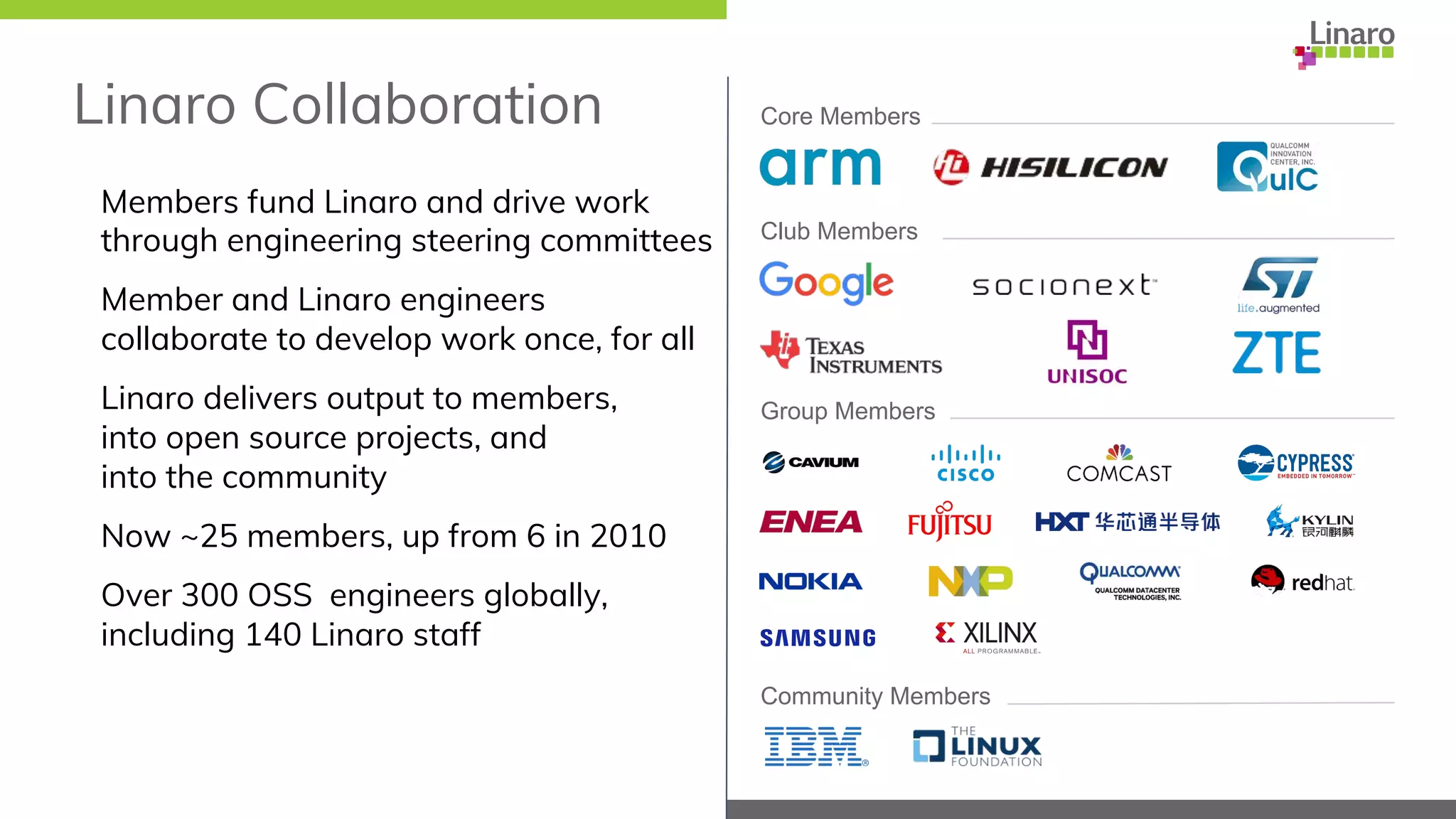

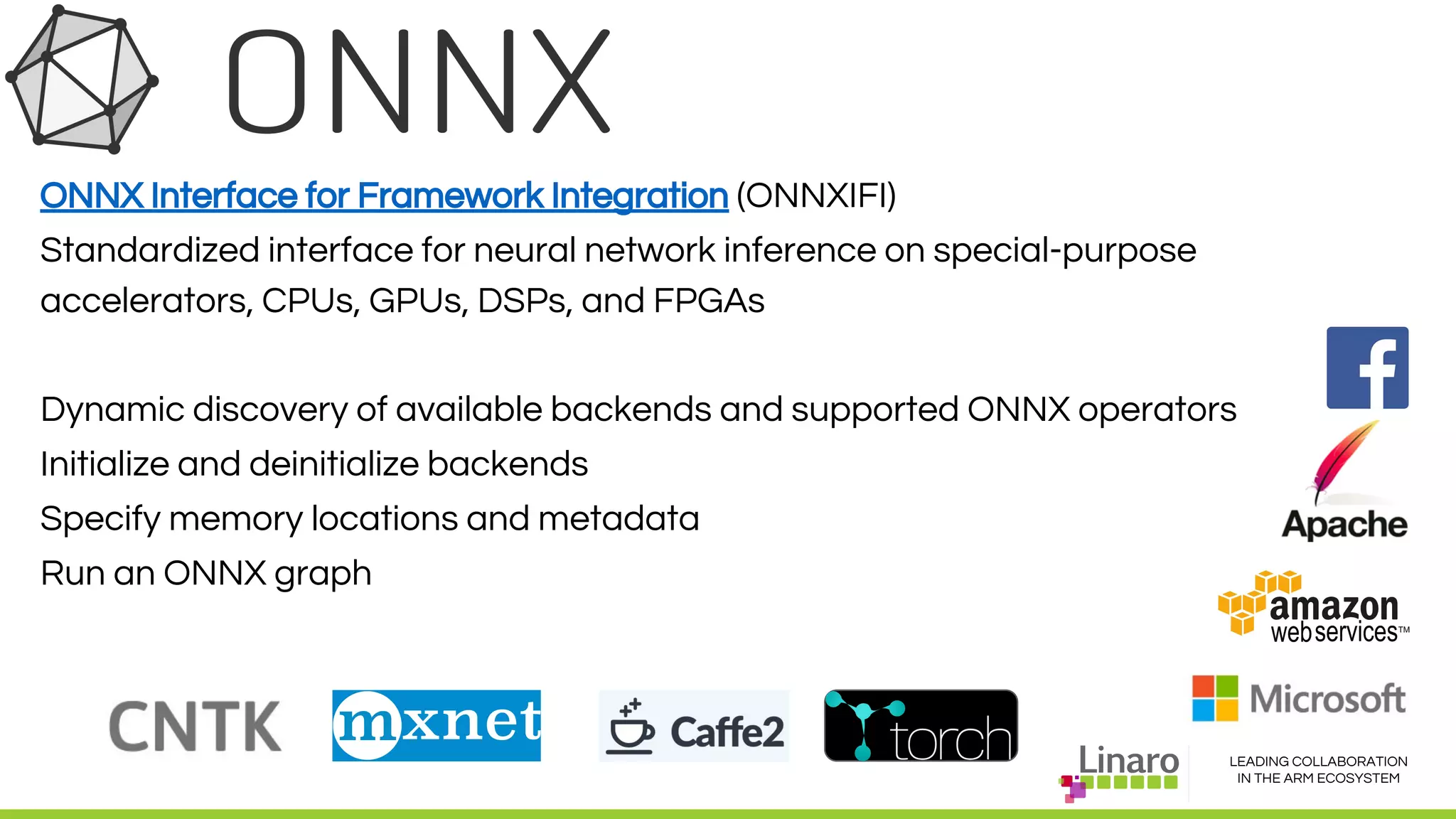

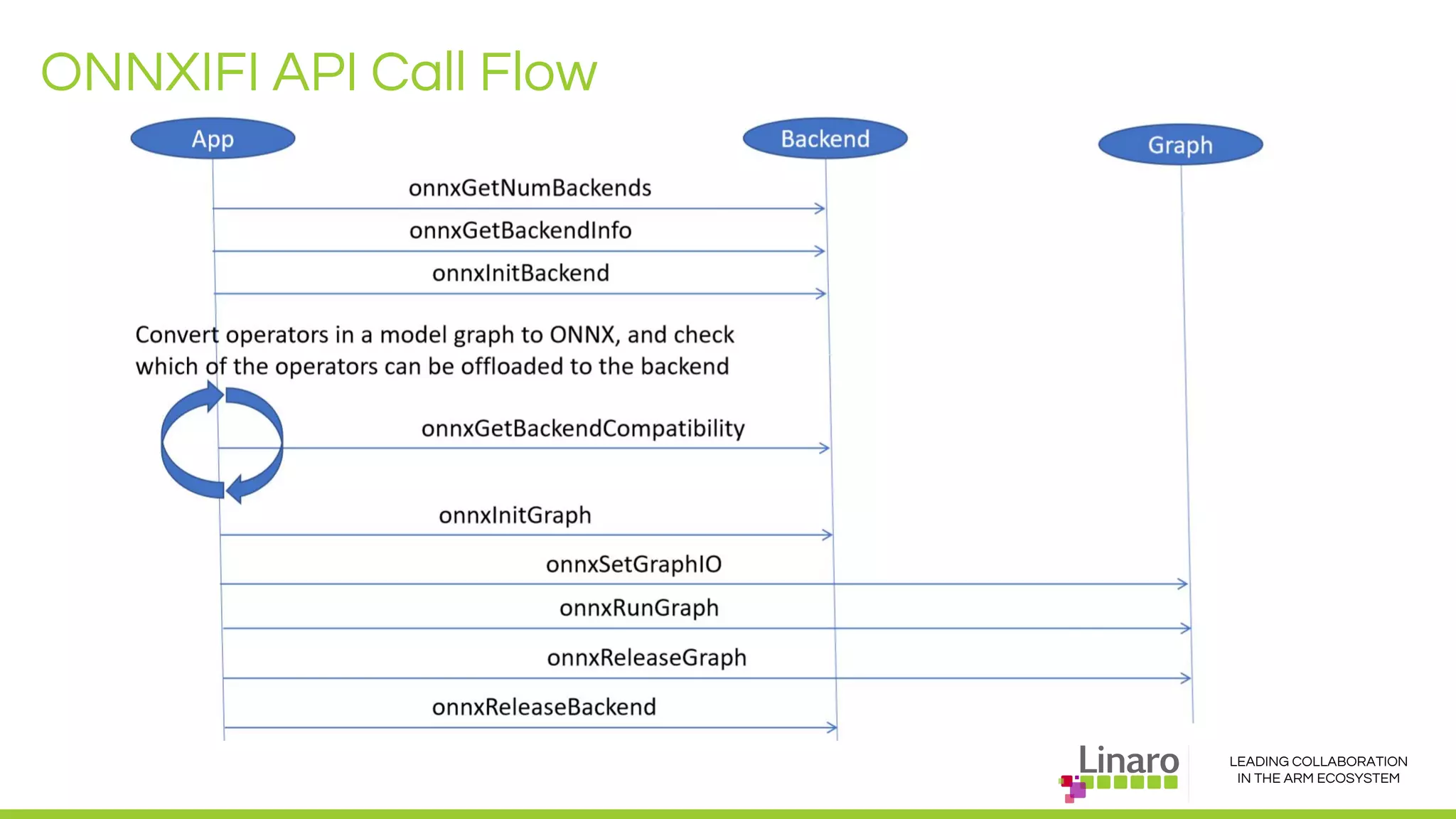

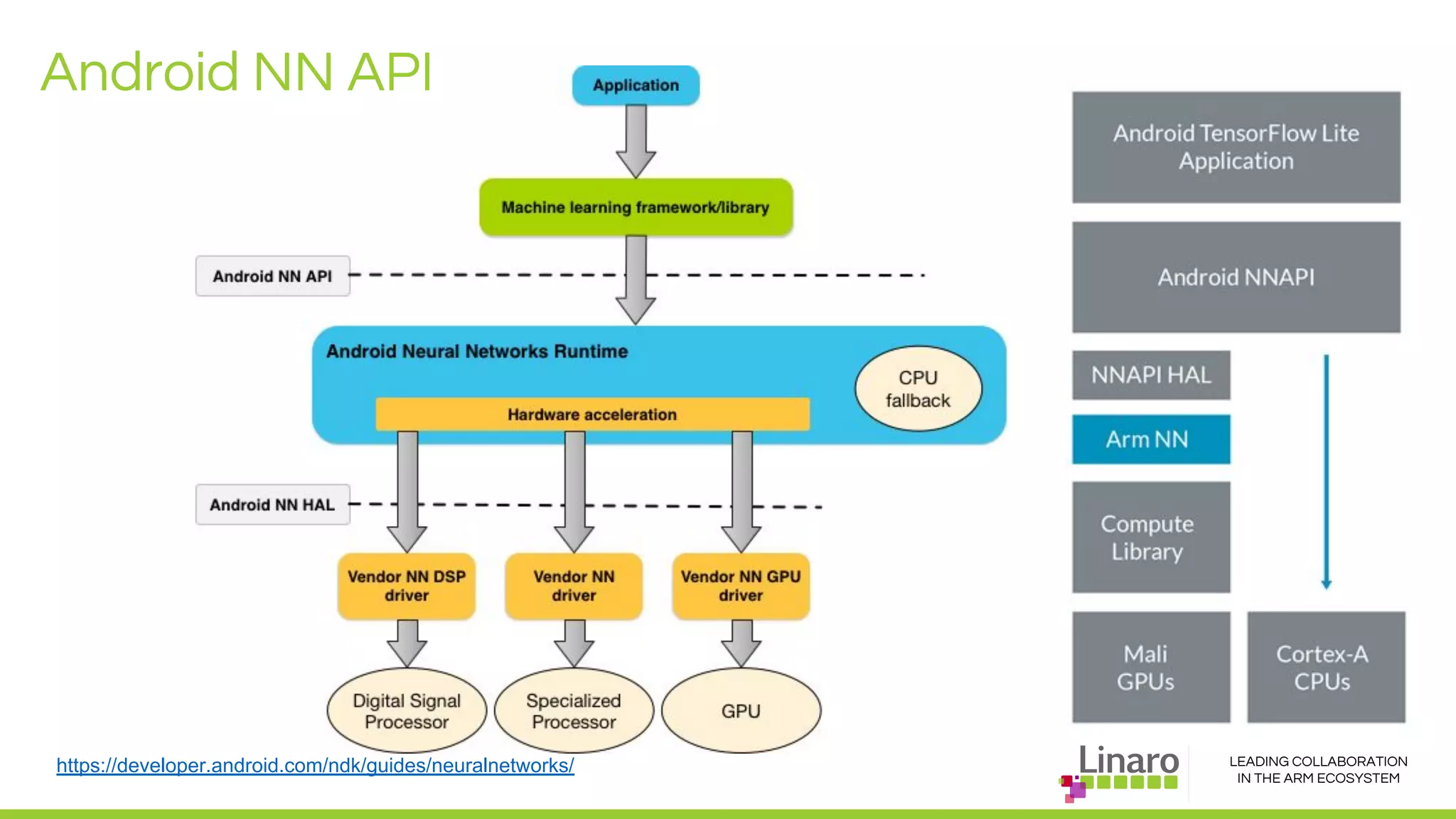

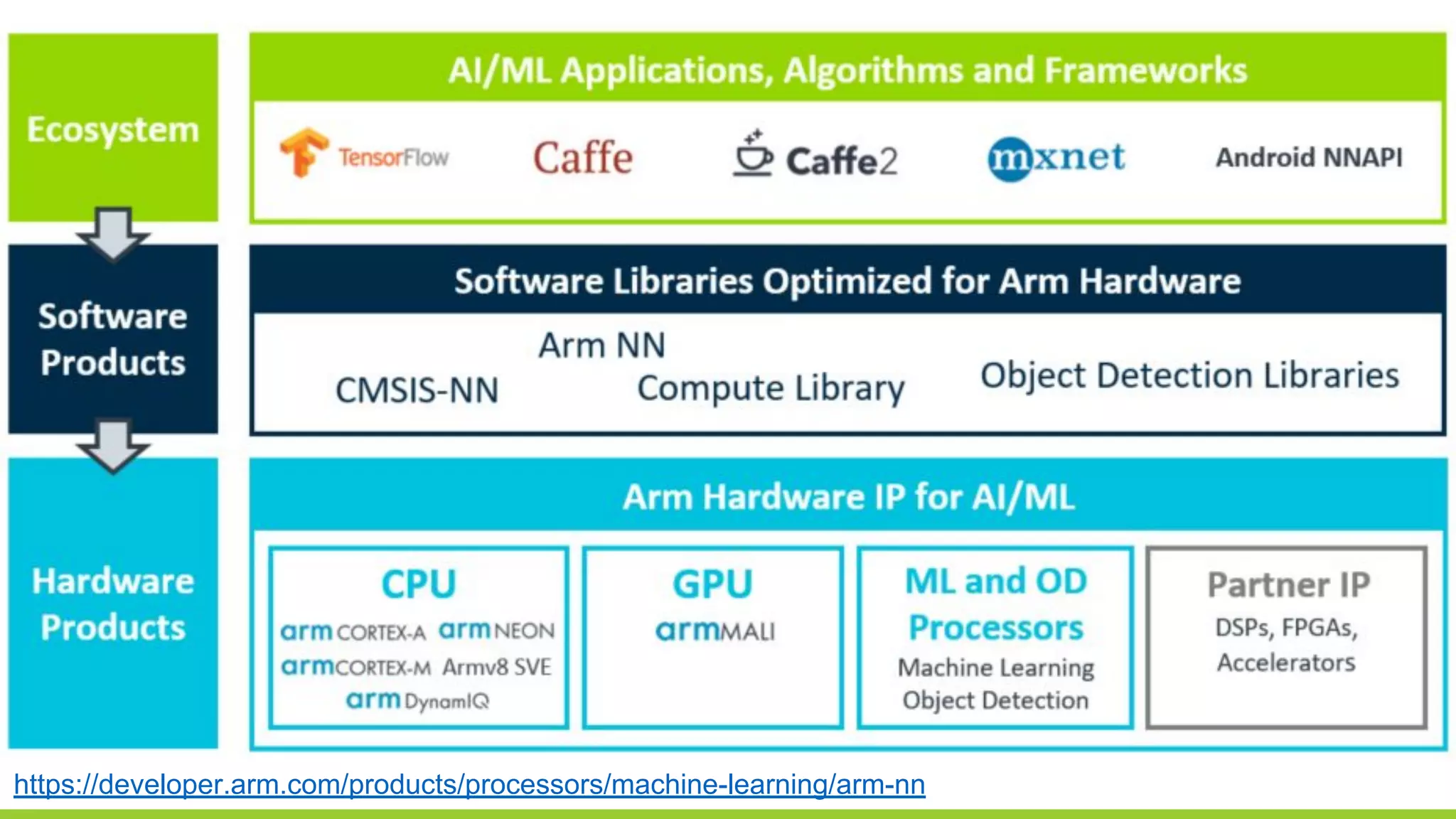

The document discusses accelerating deep learning neural networks at the edge. It surveys AI and ML frameworks such as TensorFlow, Caffe, and MXNet, covering their evolution and capabilities. It highlights the importance of collaboration within the ARM ecosystem and addresses privacy, bandwidth, and latency issues when deploying deep learning at the edge. It also outlines hardware solutions and architectures aimed at improving performance for AI tasks, and encourages Linaro members to collaborate on open-source projects to drive innovation.