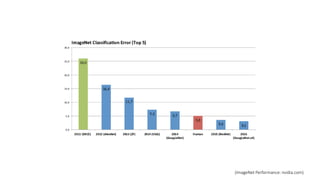

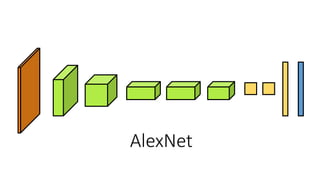

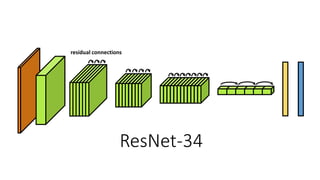

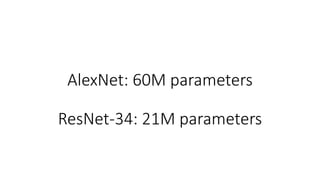

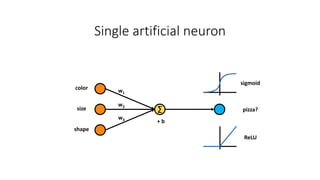

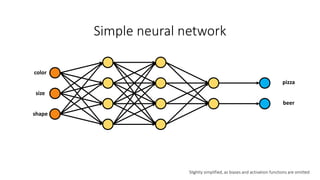

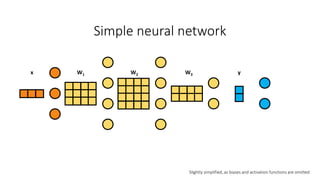

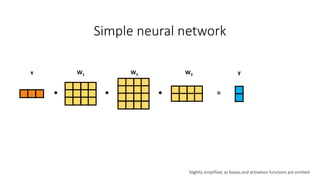

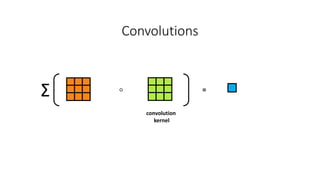

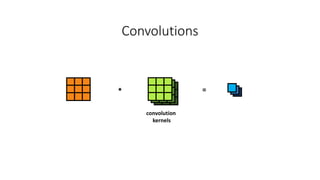

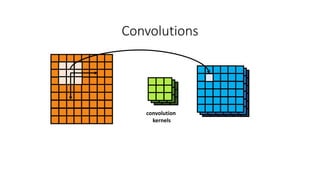

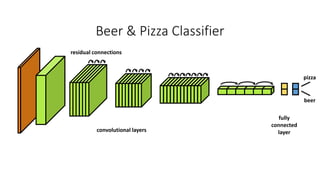

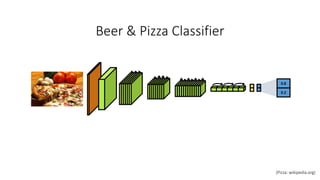

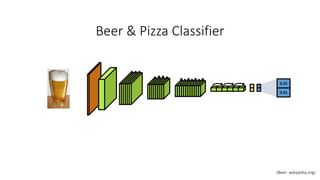

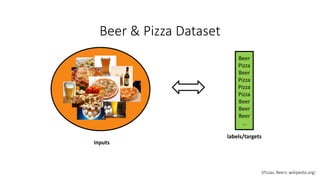

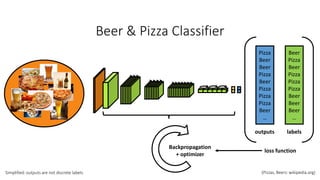

The document is a hands-on introduction to image classification with deep learning, centered on neural networks and datasets such as ImageNet. It covers neural network architecture, including convolutional layers and residual connections, and explains why pre-trained networks matter for transfer learning. A practical example, classifying images of beers and pizzas, shows these concepts in use.
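
The transfer-learning idea mentioned above can be sketched in a few lines: keep a feature extractor frozen and train only a small new classifier head on top of it. The sketch below is a toy illustration in pure Python, not the method used in the document. `frozen_backbone` is a hypothetical stand-in for a real pre-trained network, and `make_image` generates synthetic data in which "beer" images are dark and "pizza" images are bright; both are assumptions made only for this example.

```python
import math
import random


def frozen_backbone(pixels):
    """Hypothetical stand-in for a pre-trained network.

    It maps raw pixels to a short feature vector and is never updated
    during training, which is what "frozen" means here.
    """
    mean = sum(pixels) / len(pixels)
    spread = max(pixels) - min(pixels)
    return [mean, spread]


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train_head(features, labels, epochs=200, lr=0.5):
    """Train only the new head (logistic regression) with plain SGD."""
    w = [0.0] * len(features[0])
    b = 0.0
    for _ in range(epochs):
        for x, y in zip(features, labels):
            p = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)
            err = p - y  # gradient of the log loss w.r.t. the logit
            w = [wi - lr * err * xi for wi, xi in zip(w, x)]
            b -= lr * err
    return w, b


def predict(w, b, x):
    """Return 1 ("pizza") or 0 ("beer") for one feature vector."""
    return 1 if sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) >= 0.5 else 0


def make_image(label, rng, n_pixels=16):
    """Synthetic toy data (assumption): label 0 is dark, label 1 is bright."""
    low, high = (0.6, 1.0) if label == 1 else (0.0, 0.4)
    return [rng.uniform(low, high) for _ in range(n_pixels)]


rng = random.Random(0)

# Small labeled training set: extract features once, then fit the head.
train_labels = [i % 2 for i in range(40)]
train_features = [frozen_backbone(make_image(y, rng)) for y in train_labels]
w, b = train_head(train_features, train_labels)

# Held-out images drawn from the same synthetic distribution.
test_labels = [i % 2 for i in range(20)]
test_features = [frozen_backbone(make_image(y, rng)) for y in test_labels]
correct = sum(predict(w, b, x) == y for x, y in zip(test_features, test_labels))
acc = correct / len(test_labels)
print(f"held-out accuracy: {acc:.2f}")
```

With a real pre-trained network the structure stays the same: the backbone's weights are left untouched and only the small head is fitted to the new classes, which is why a modest number of labeled images can be enough.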