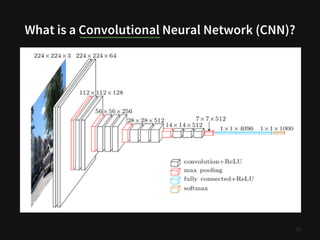

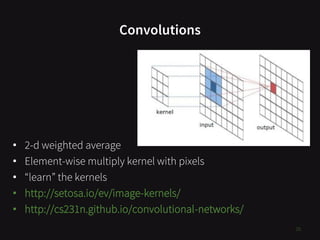

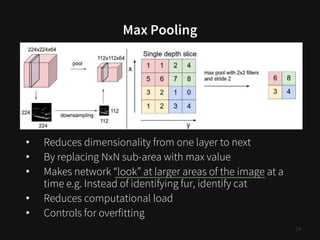

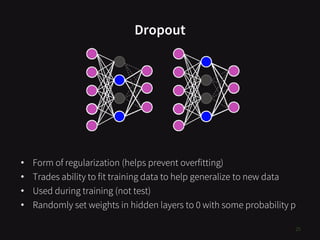

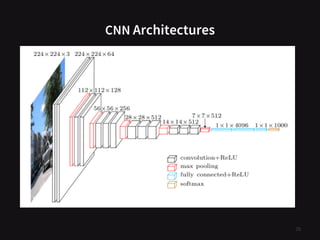

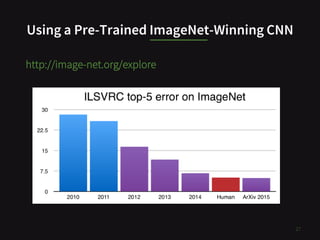

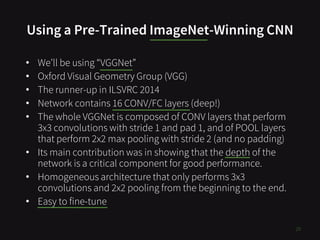

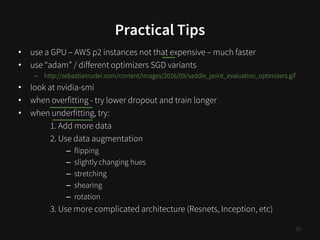

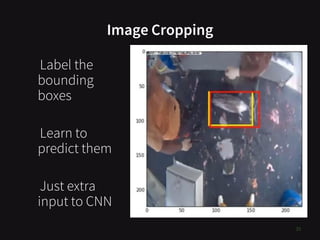

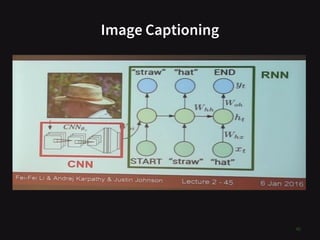

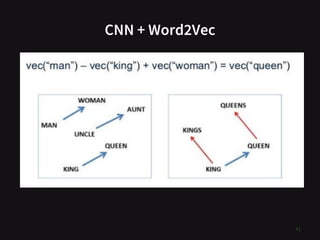

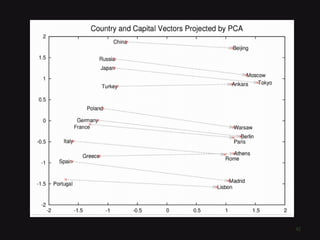

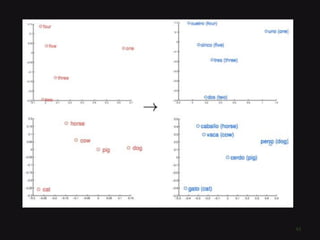

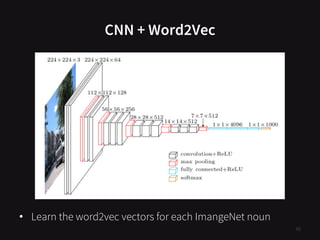

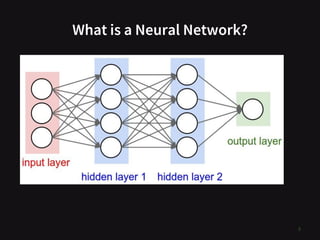

The document presents an overview of convolutional neural networks (CNNs) for image classification, detailing their structure and function, including key concepts like convolutions, max pooling, and dropout. It emphasizes fine-tuning pre-trained CNNs, such as VGGNet, for new tasks and provides practical tips for optimization. It also covers related topics such as image cropping, image captioning, and combining CNNs with word2vec.

![What is an Image?](https://image.slidesharecdn.com/ctdlcnntalk20170620-170621151937/85/Convolutional-Neural-Networks-for-Image-Classification-Cape-Town-Deep-Learning-Meet-up-20170620-13-320.jpg)

• Pixel = 3 colour channels (R, G, B)

• Pixel intensity = number in [0,255]

• Image has width w and height h

• Therefore image is w x h x 3 numbers
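
The slide's point that an image is just w x h x 3 numbers can be made concrete with a small array. The following is a minimal sketch, assuming NumPy (the slides do not name a library); the helper `make_blank_image` and the 4 x 2 size are hypothetical, chosen only for illustration. Note that NumPy conventionally stores images as (height, width, channels), so the count of numbers is the same but the axis order differs from the "w x h x 3" wording.

```python
import numpy as np


def make_blank_image(width, height):
    """Return an all-black RGB image (hypothetical helper for illustration).

    Each pixel holds 3 channel intensities (R, G, B), each an integer
    in [0, 255], so uint8 is the natural dtype.
    """
    # Assumption: the common (height, width, channels) axis order.
    return np.zeros((height, width, 3), dtype=np.uint8)


image = make_blank_image(width=4, height=2)

# Set the pixel in row 0, column 1 to pure red: (R, G, B) = (255, 0, 0).
image[0, 1] = (255, 0, 0)

# Total count of numbers in the image: w * h * 3.
num_values = image.size

print(image.shape)   # (2, 4, 3)
print(num_values)    # 24
```

Seen this way, a classifier's input is nothing more than this block of integers; a CNN typically rescales them to floating point before the first convolution.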