
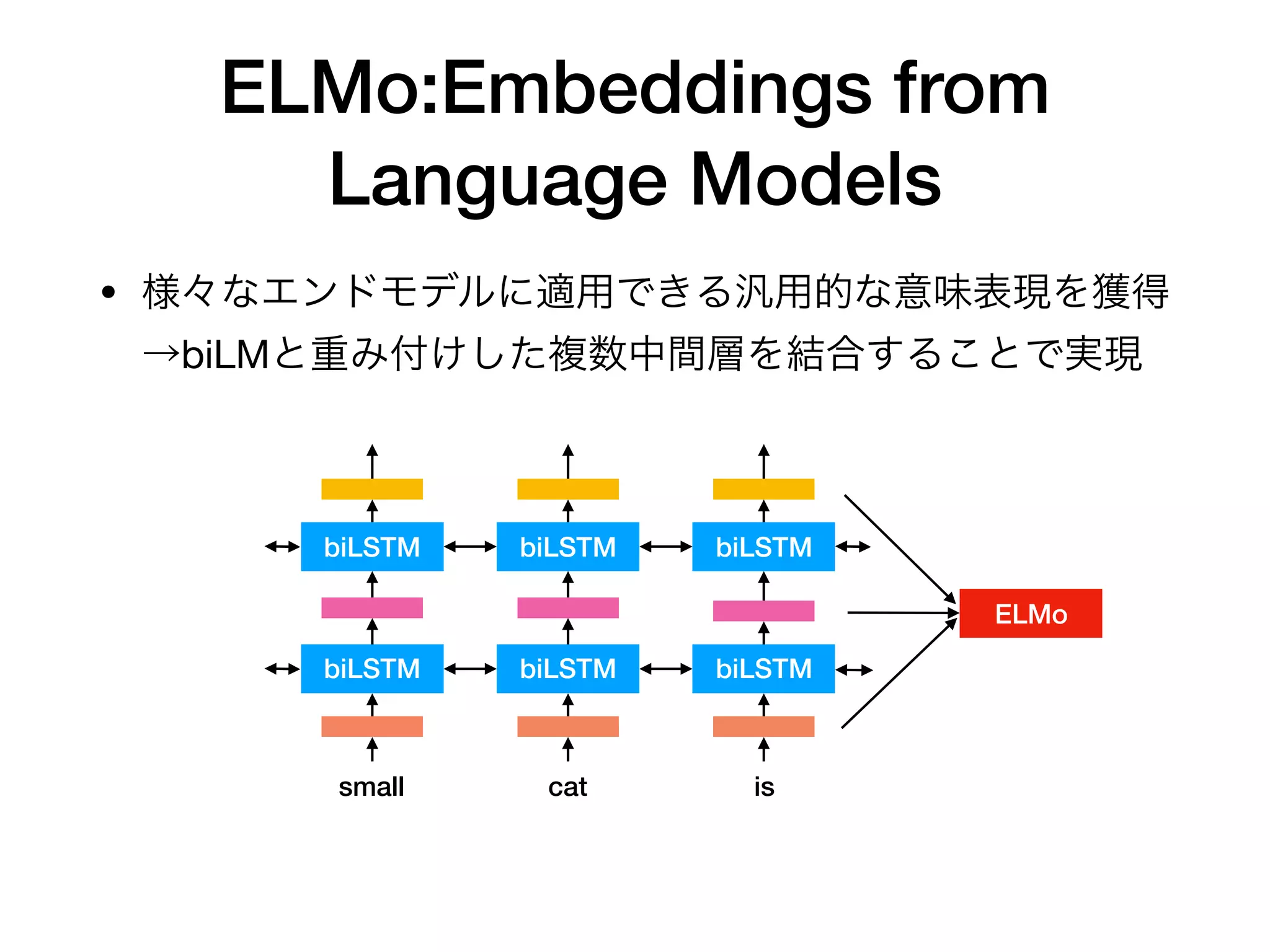

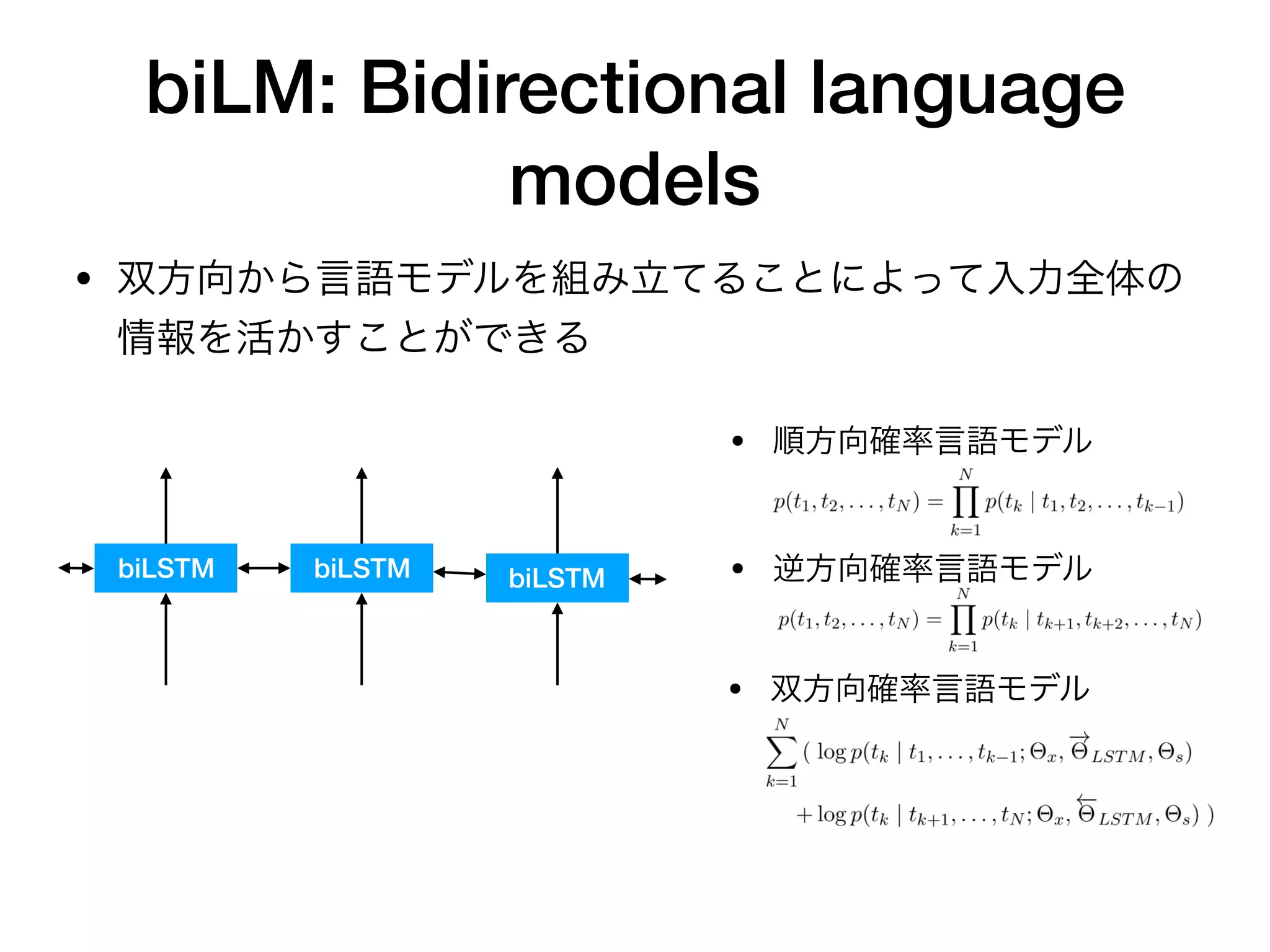

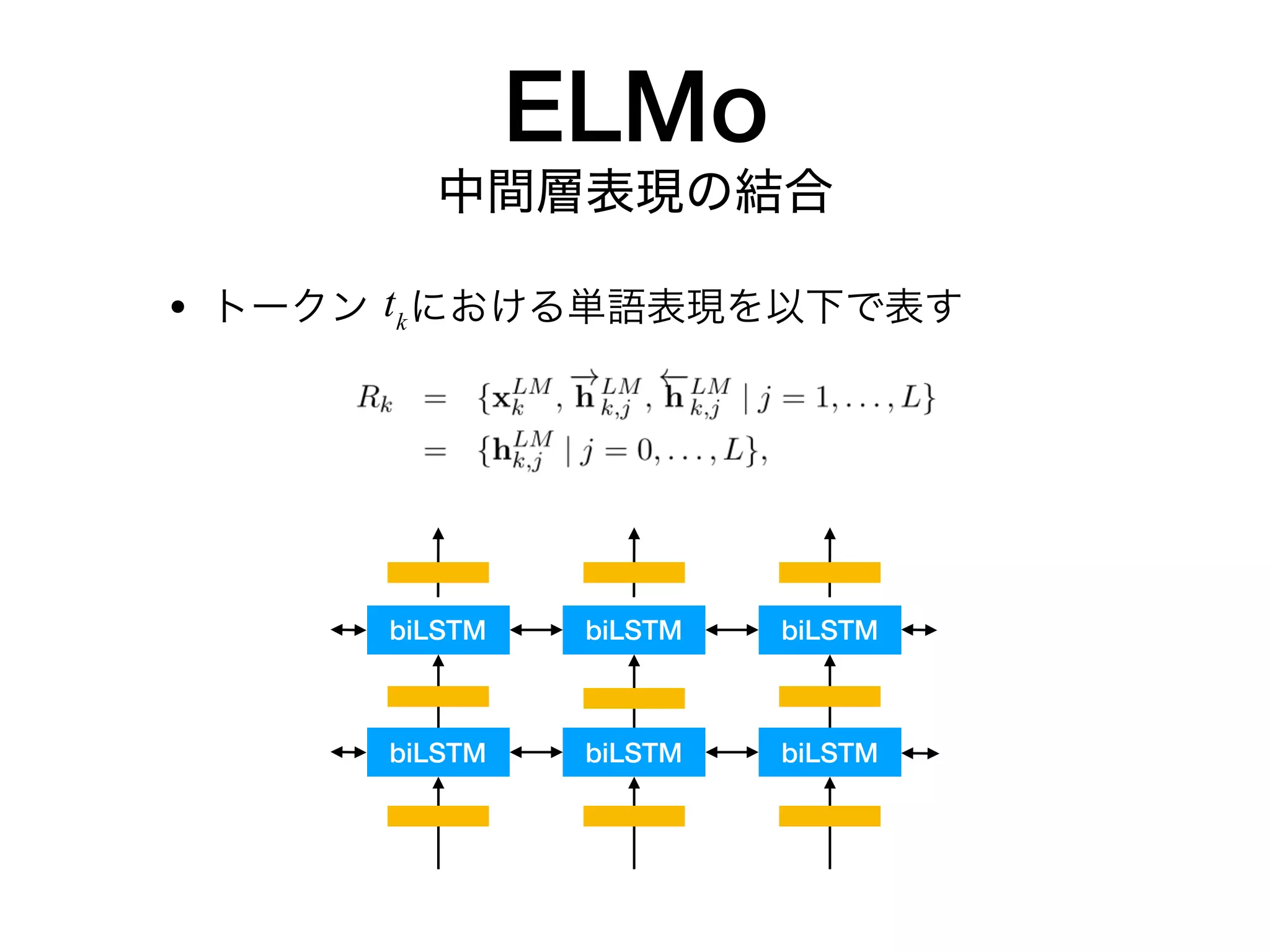

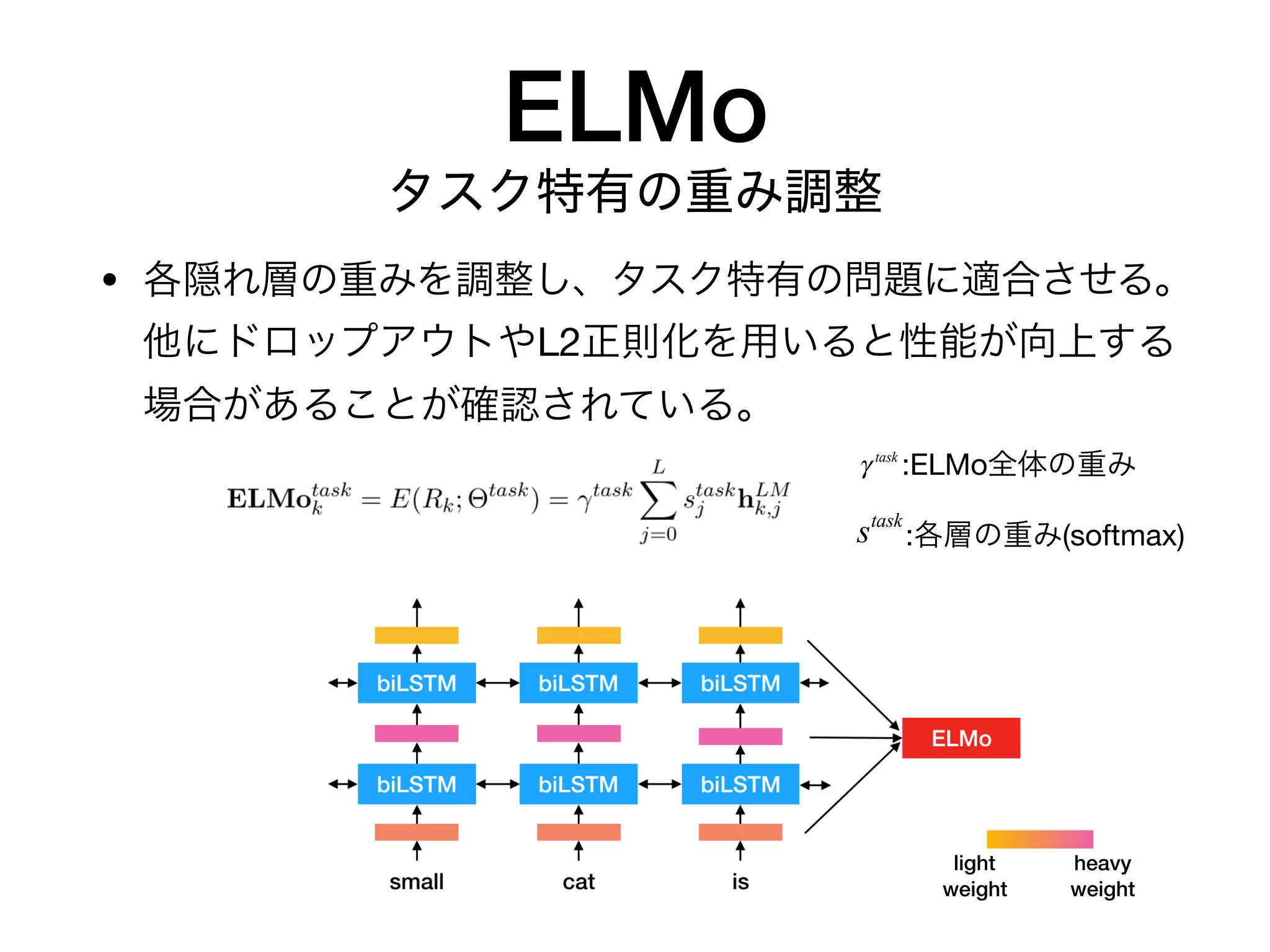

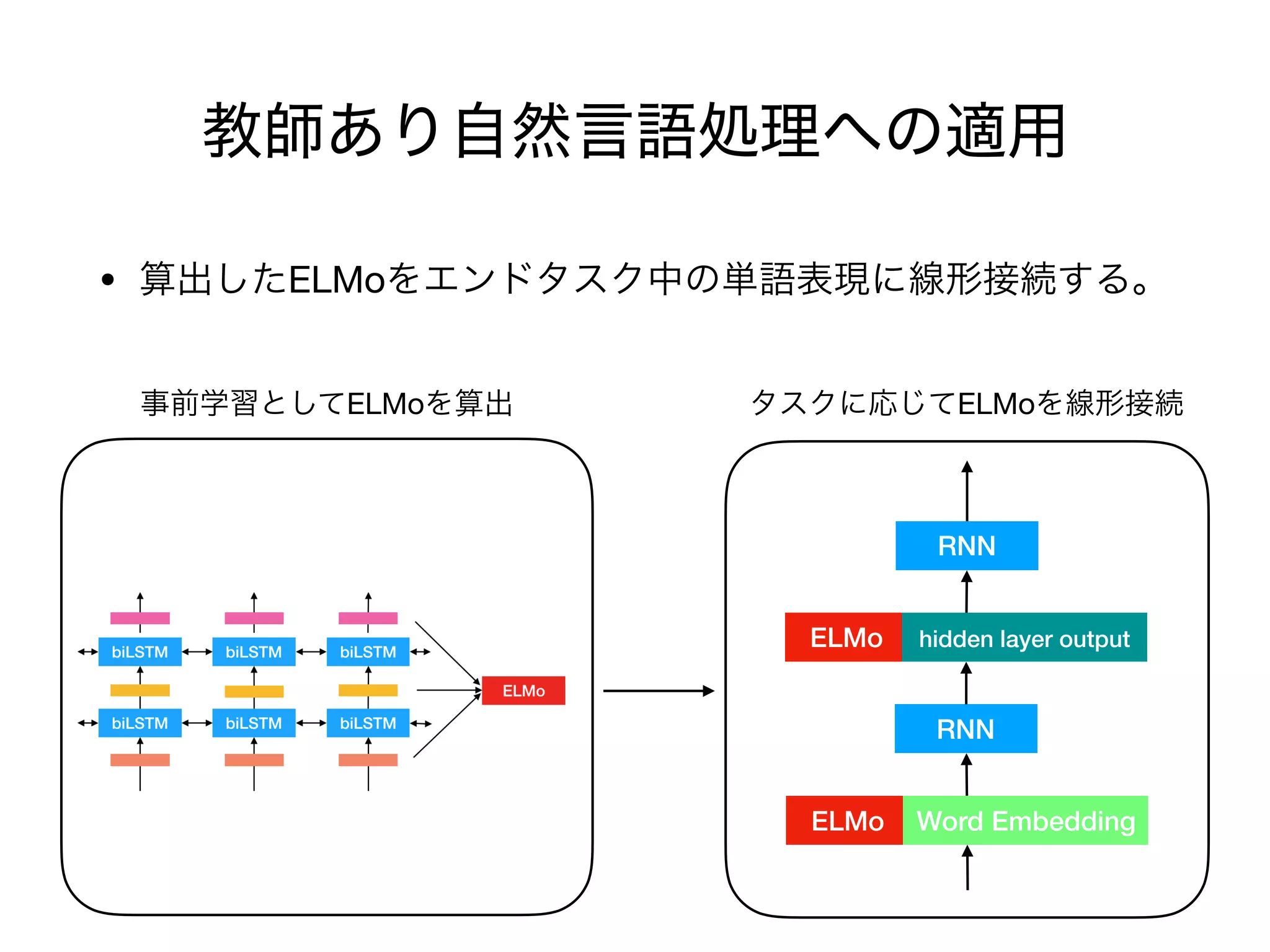

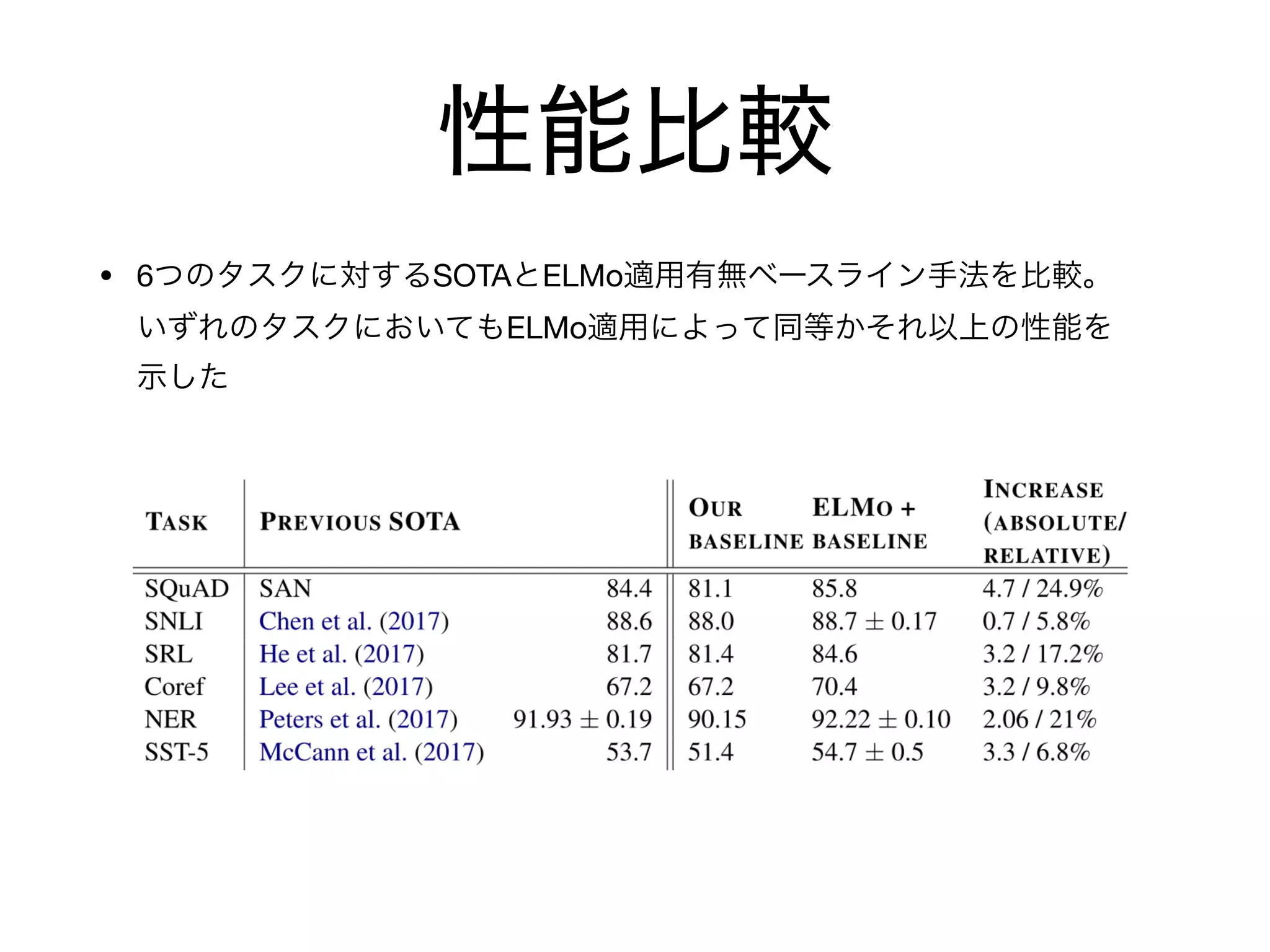

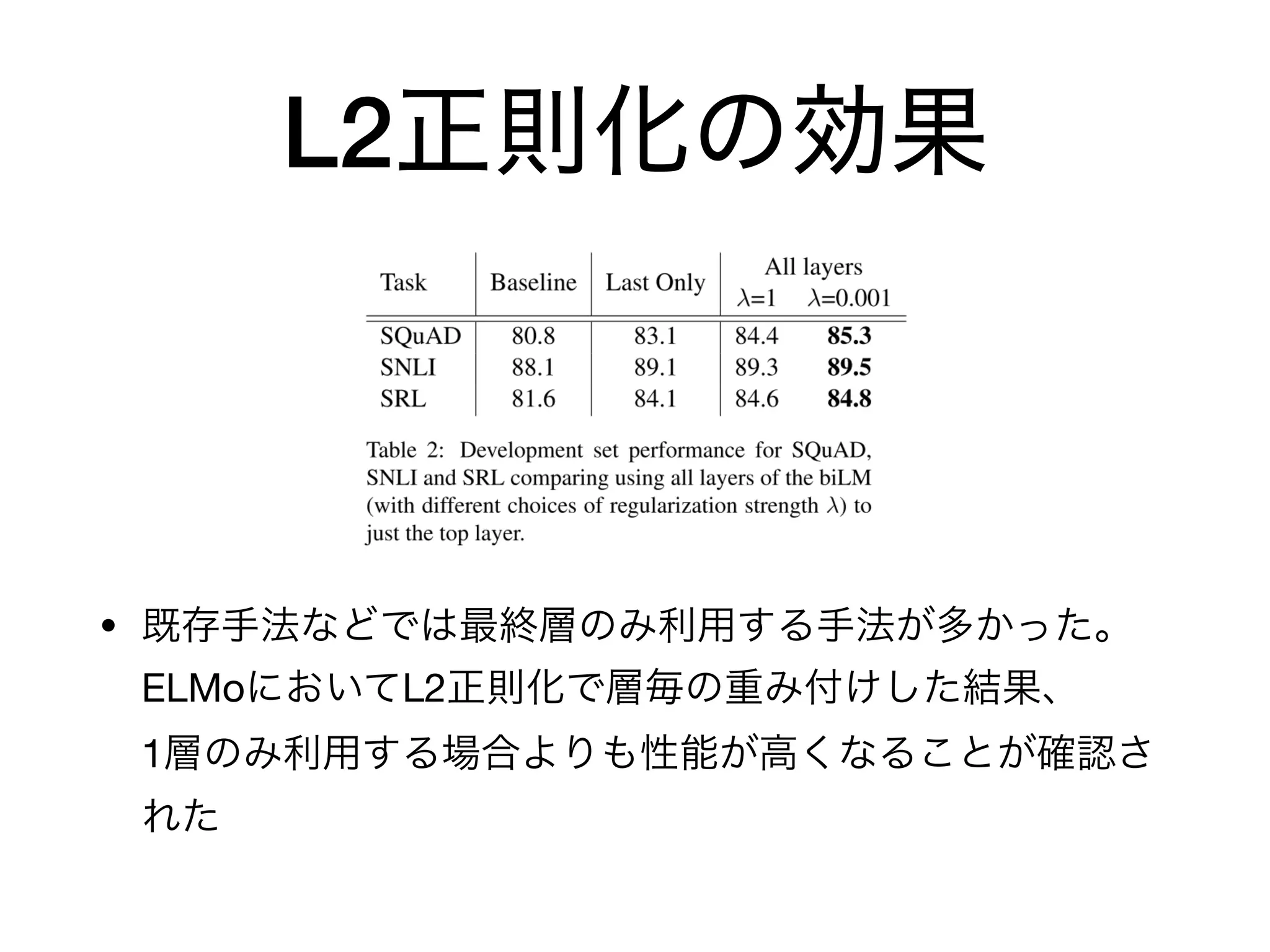

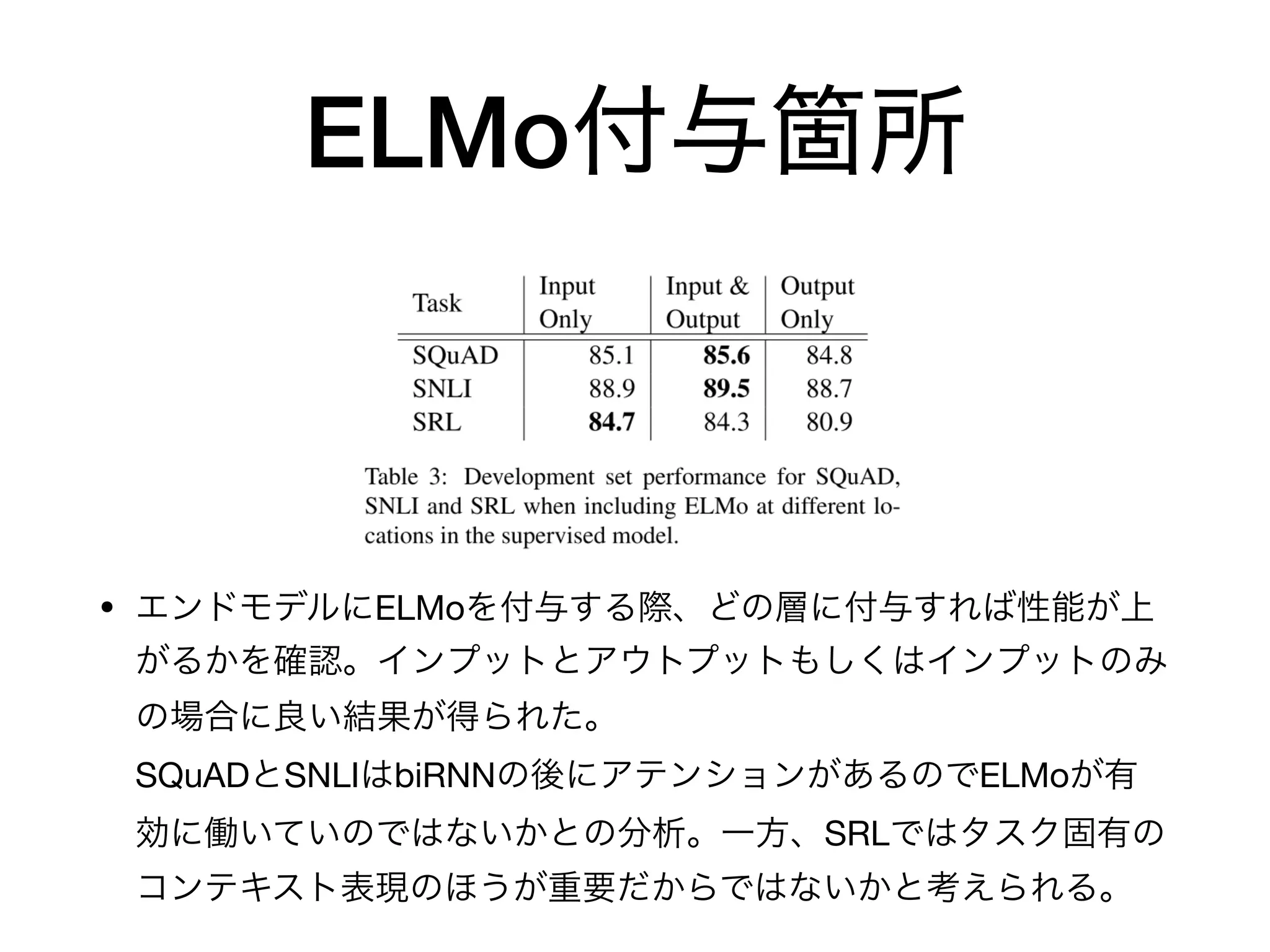

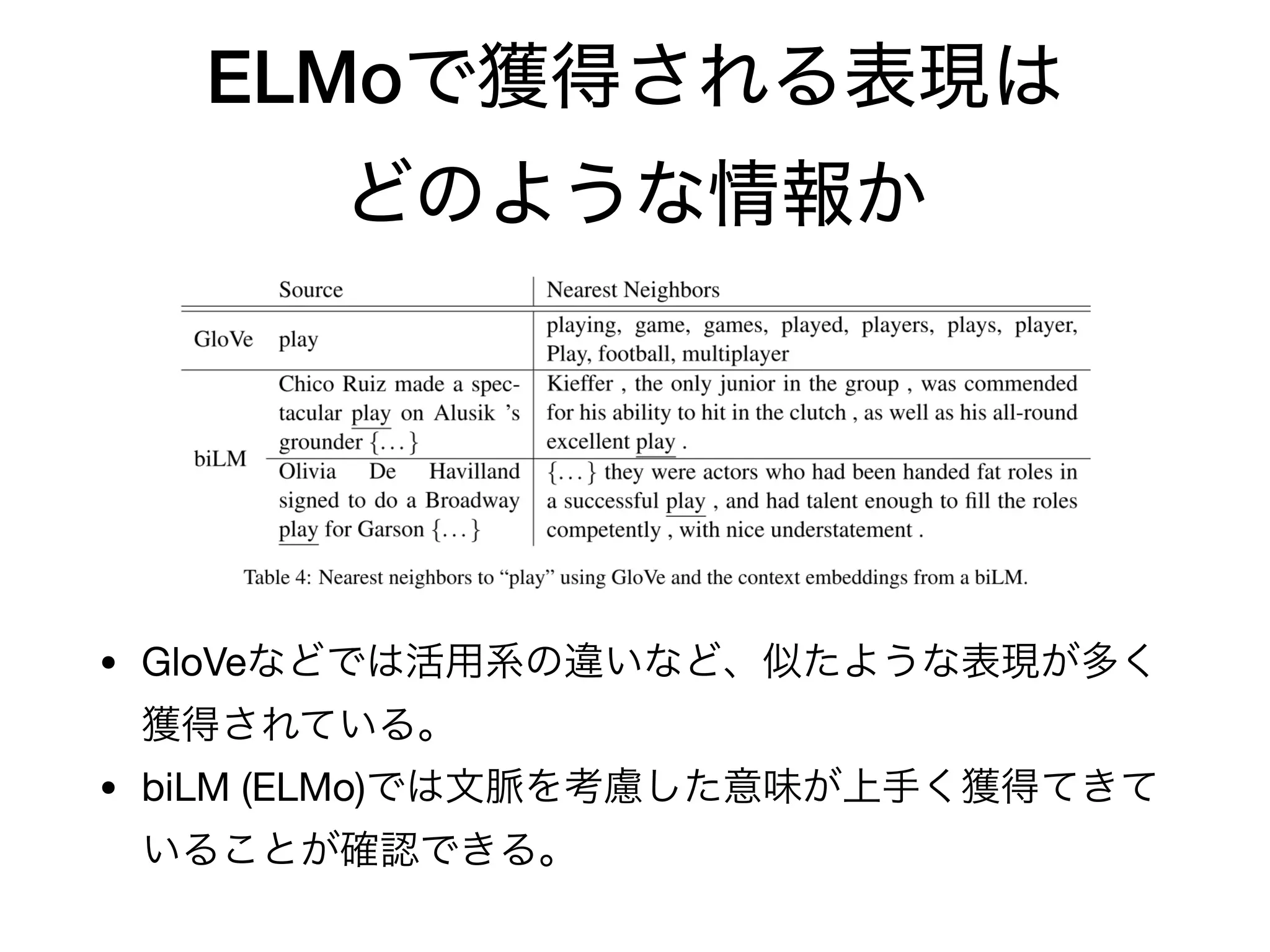

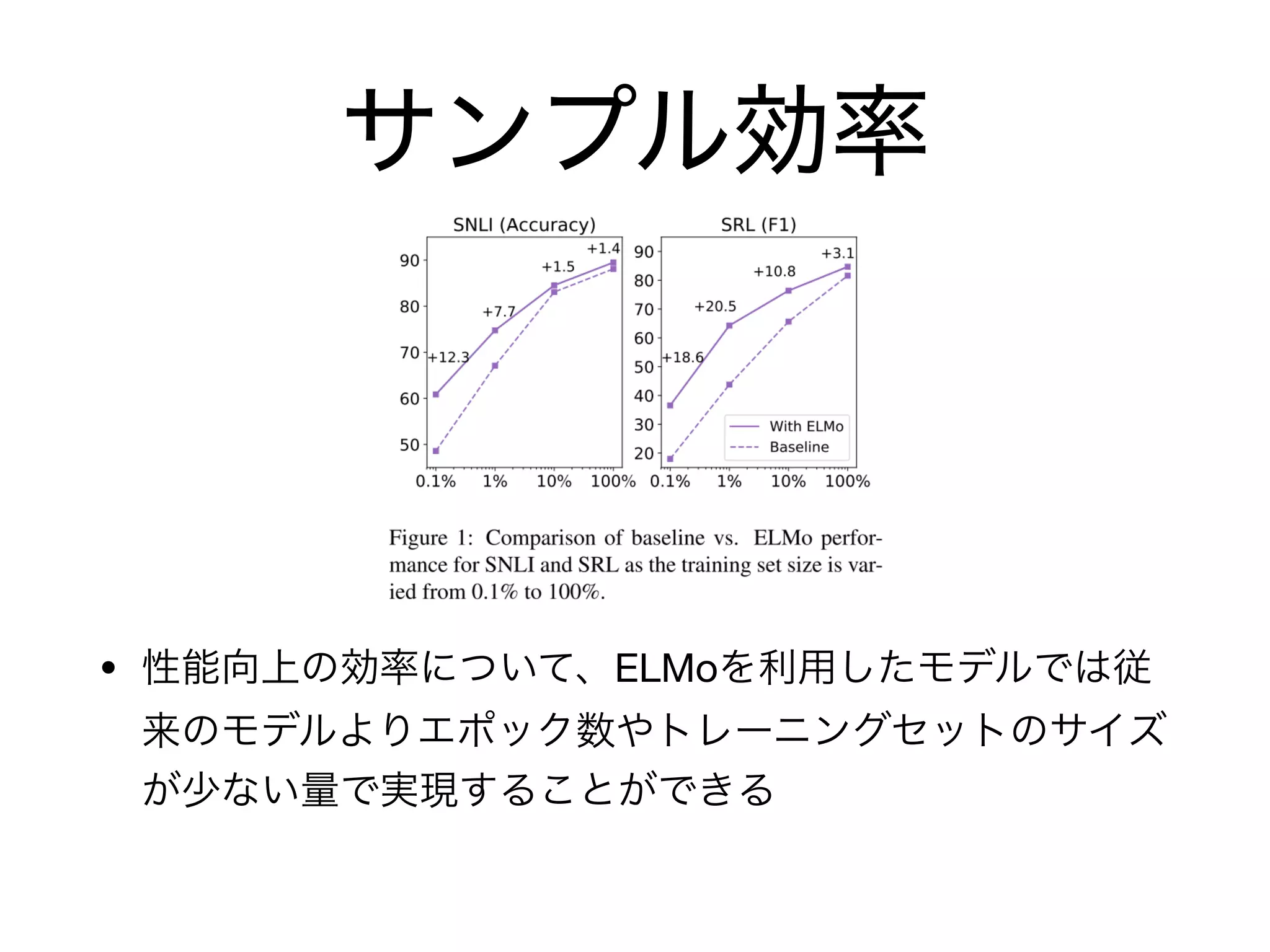

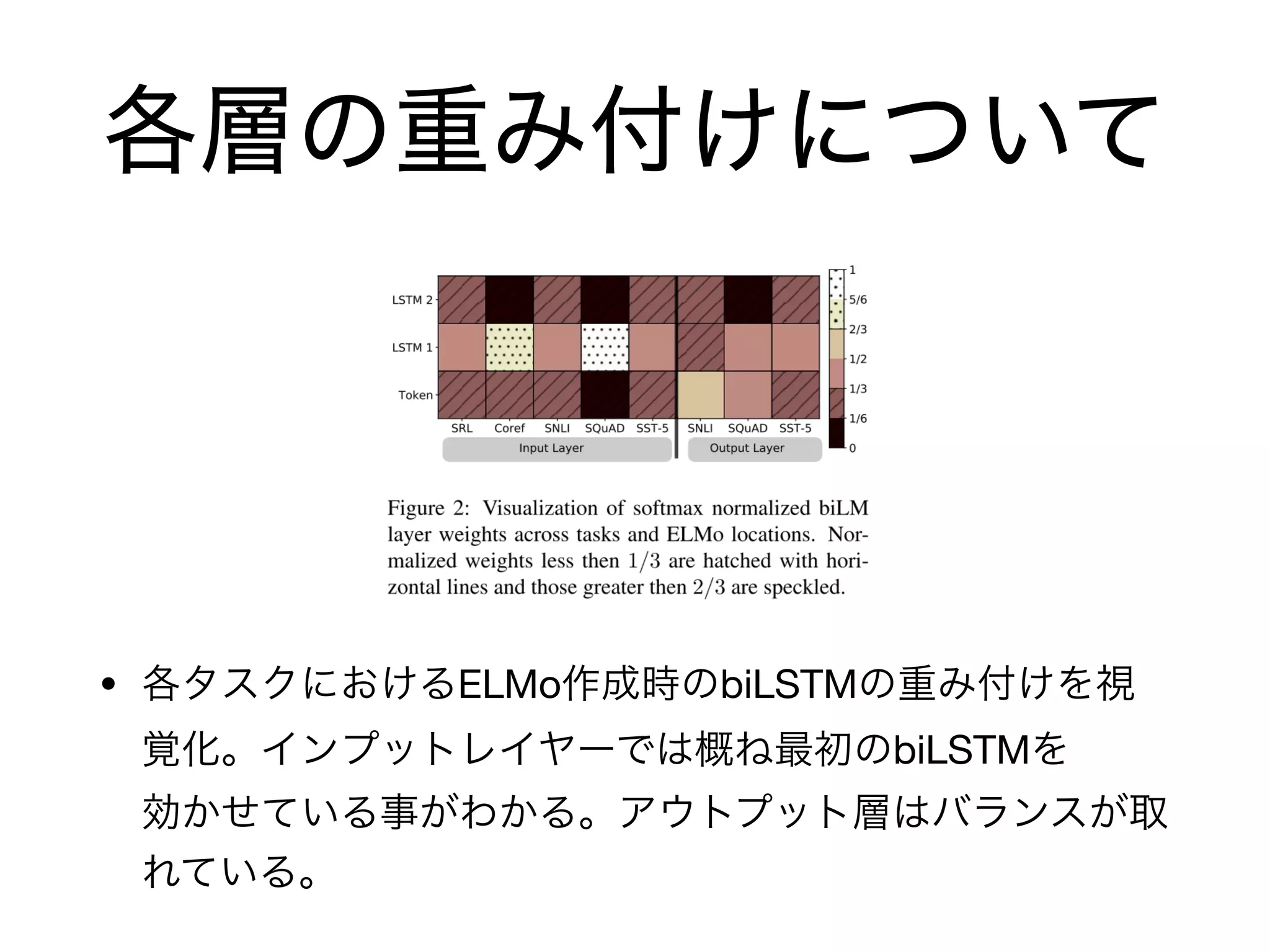

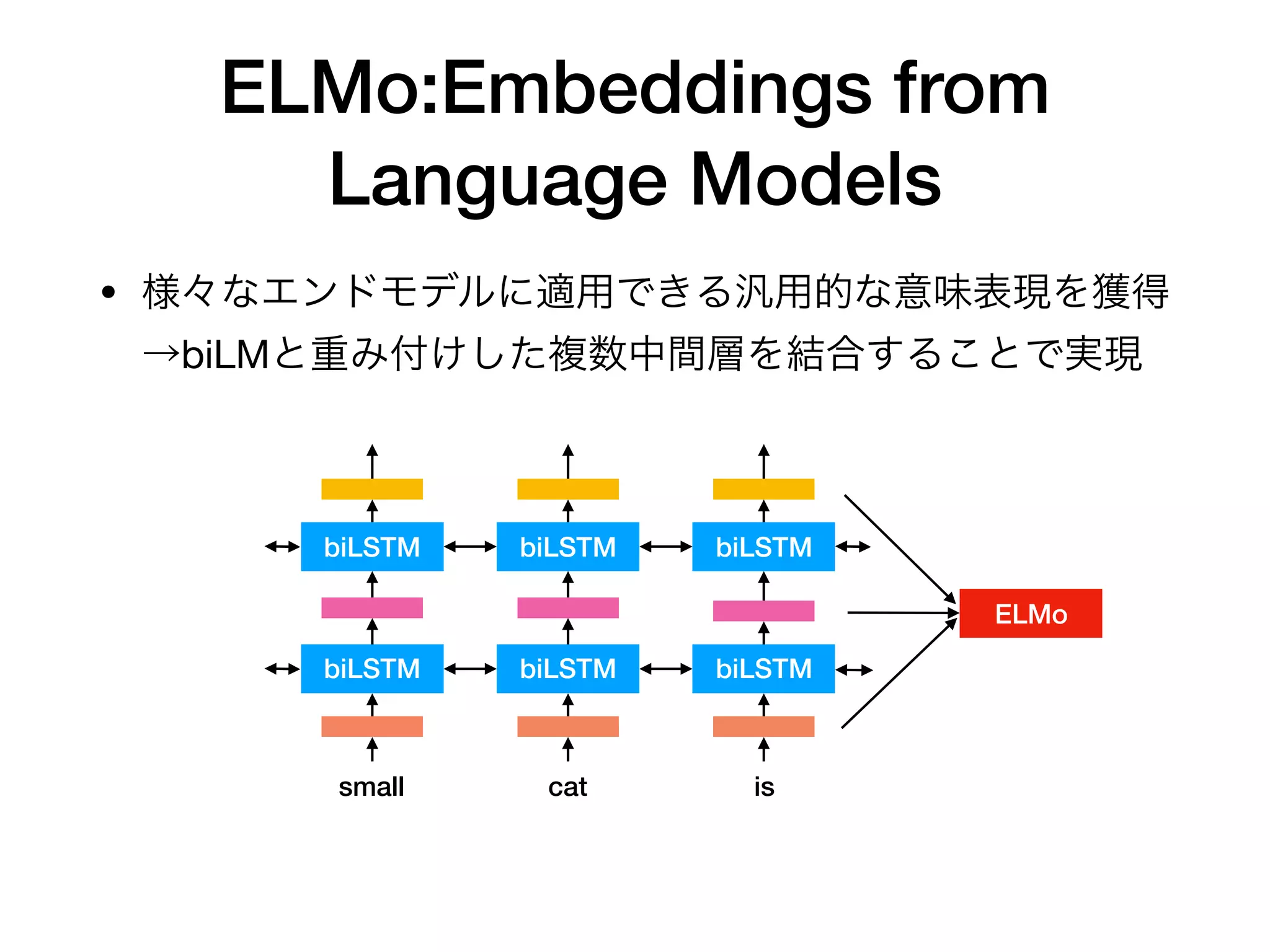

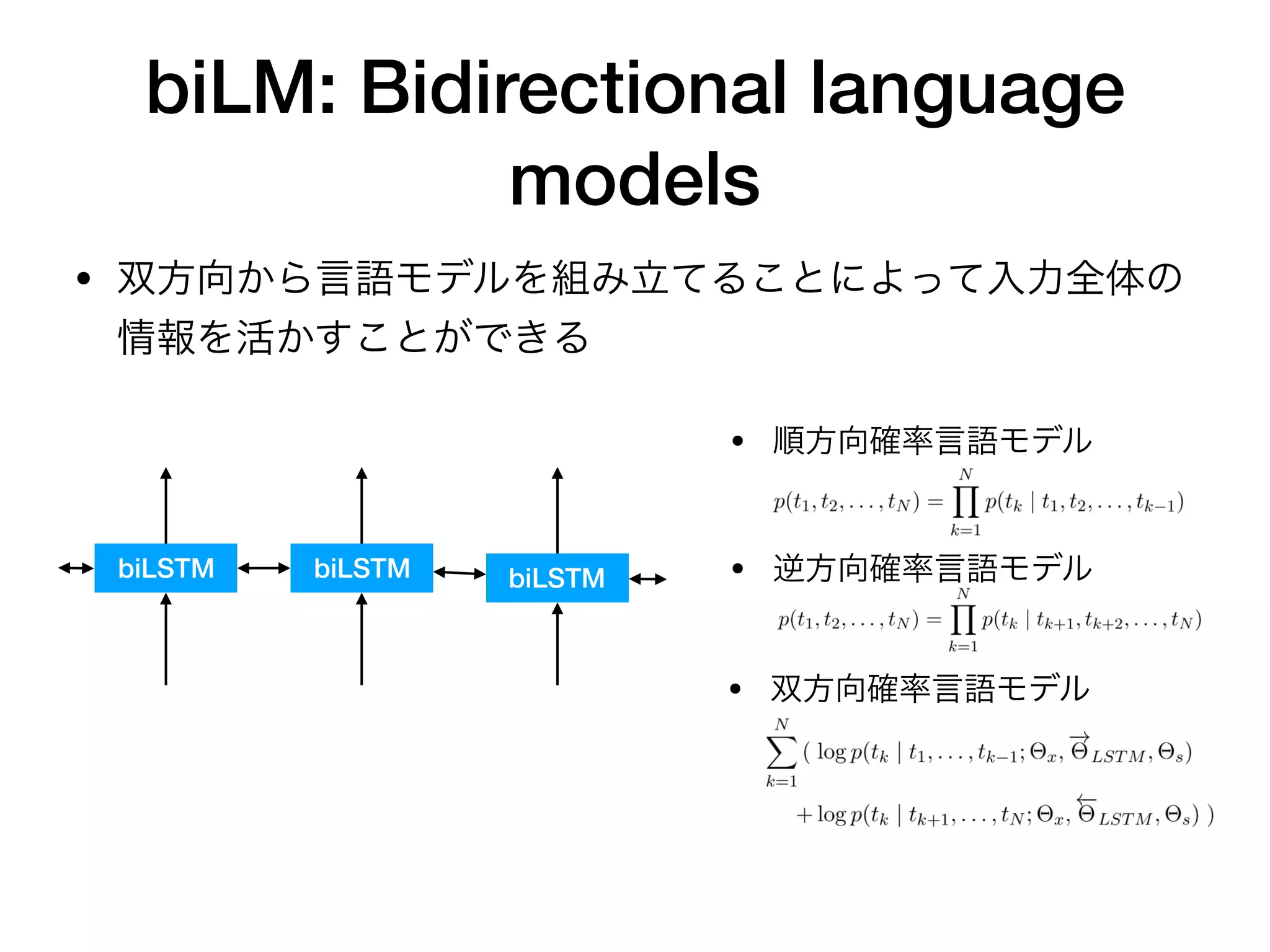

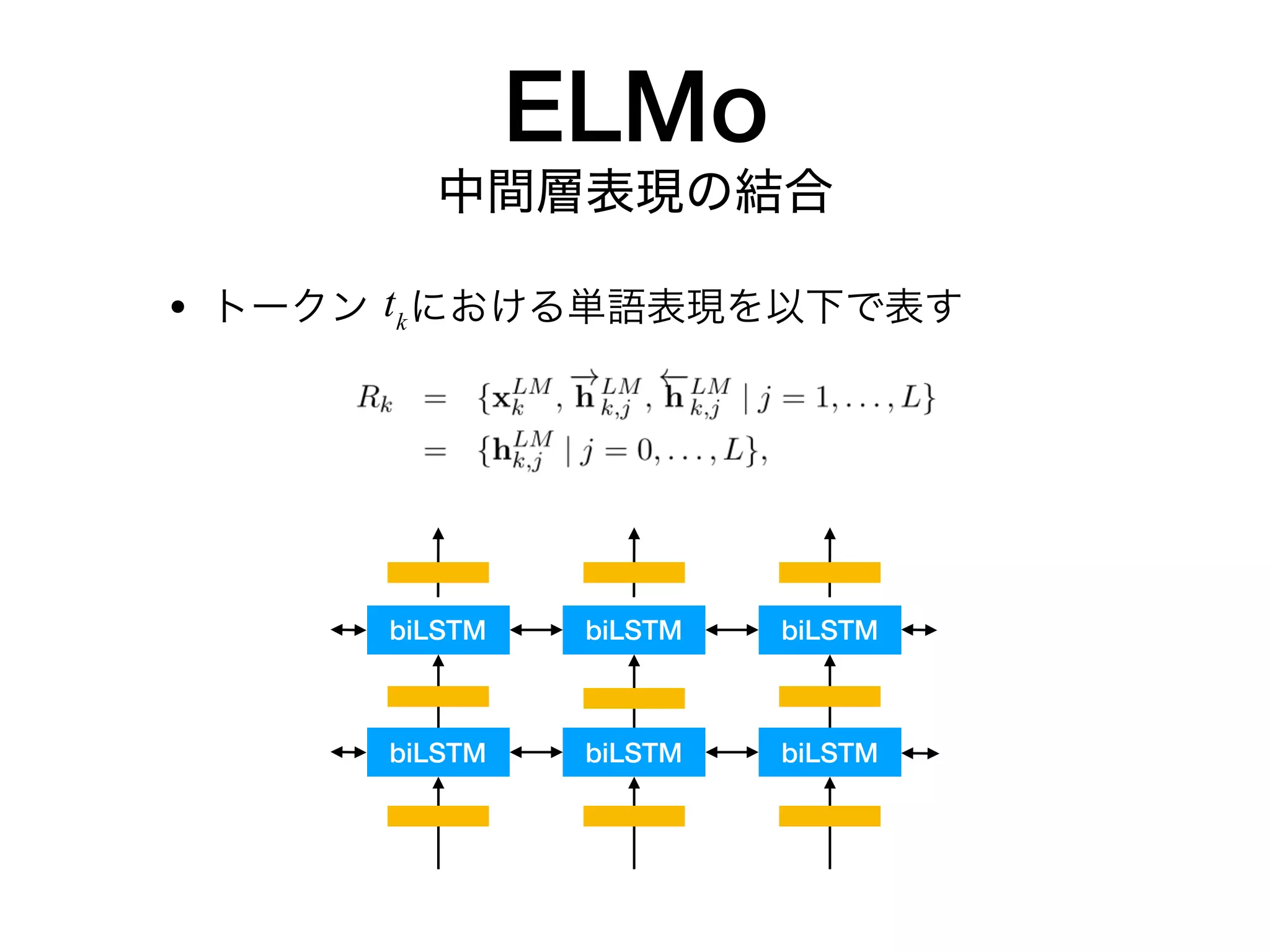

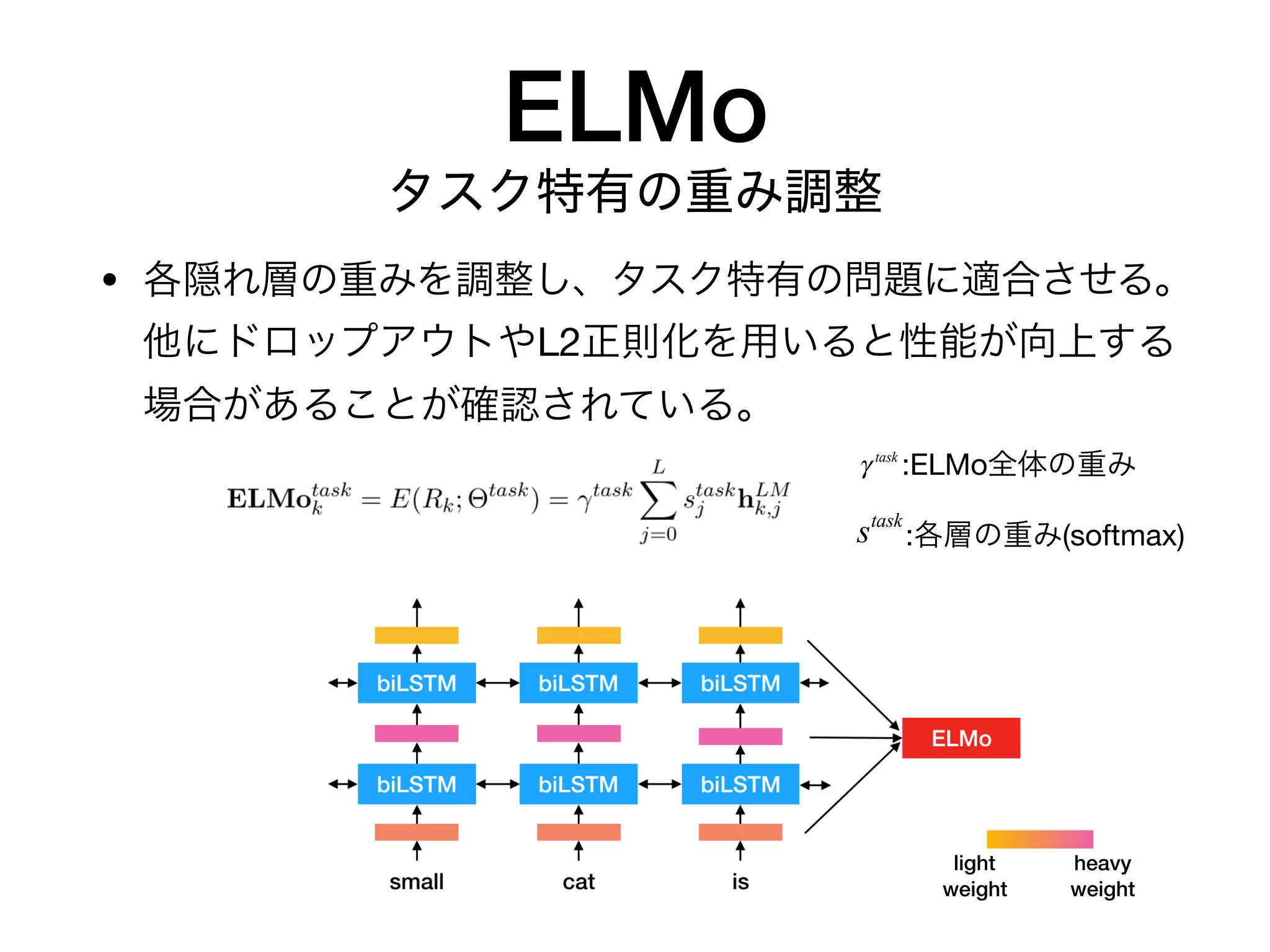

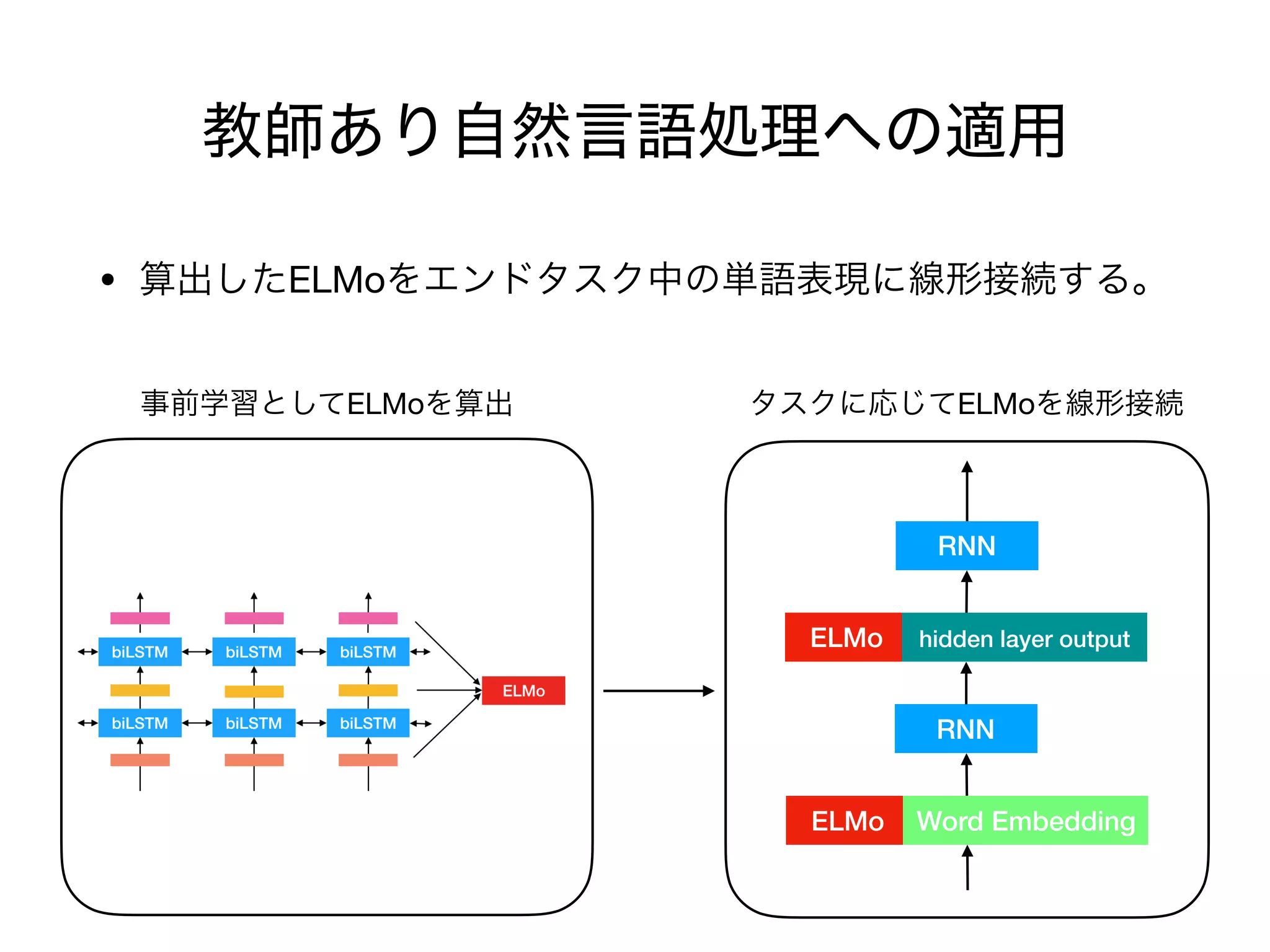

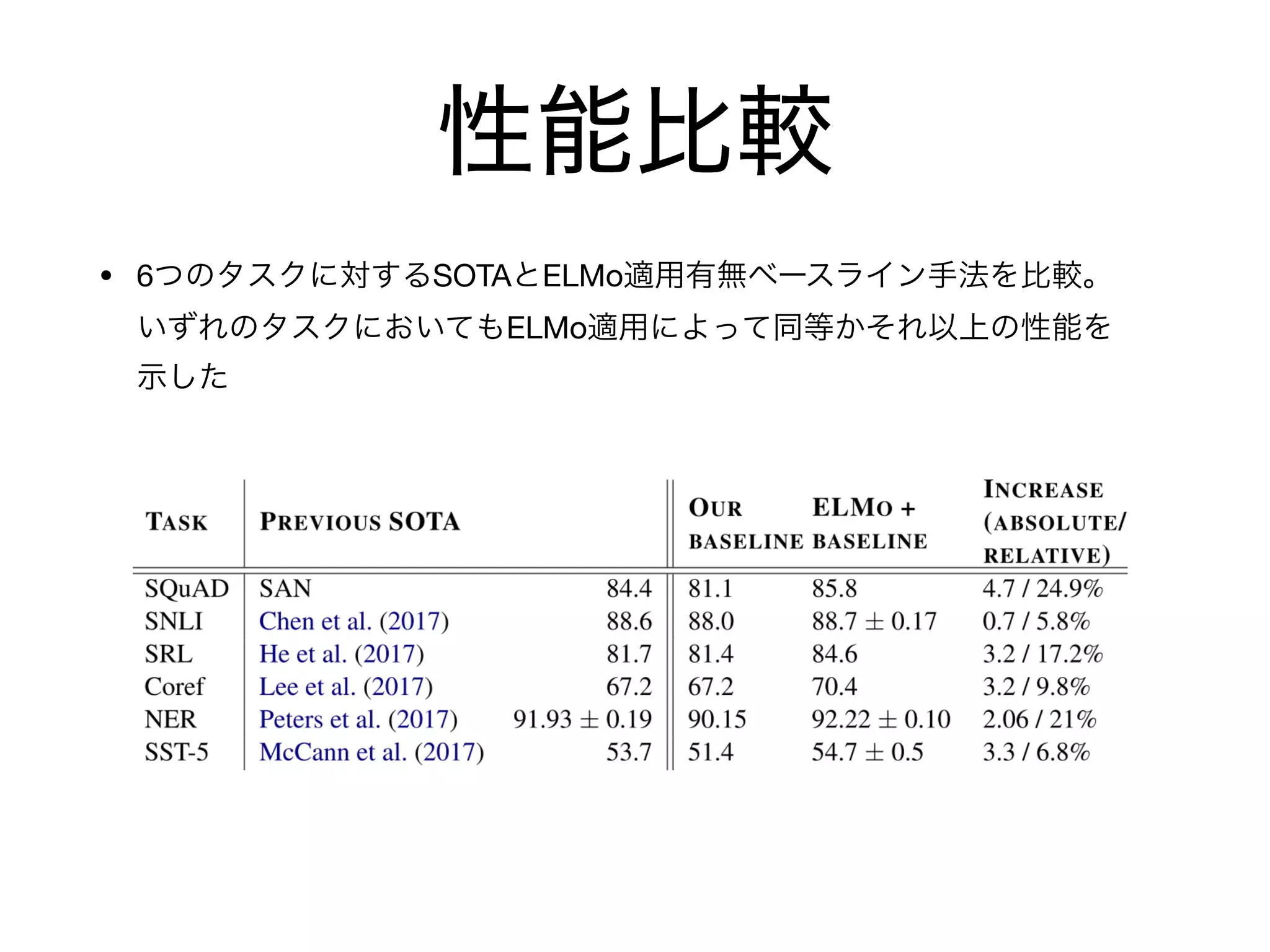

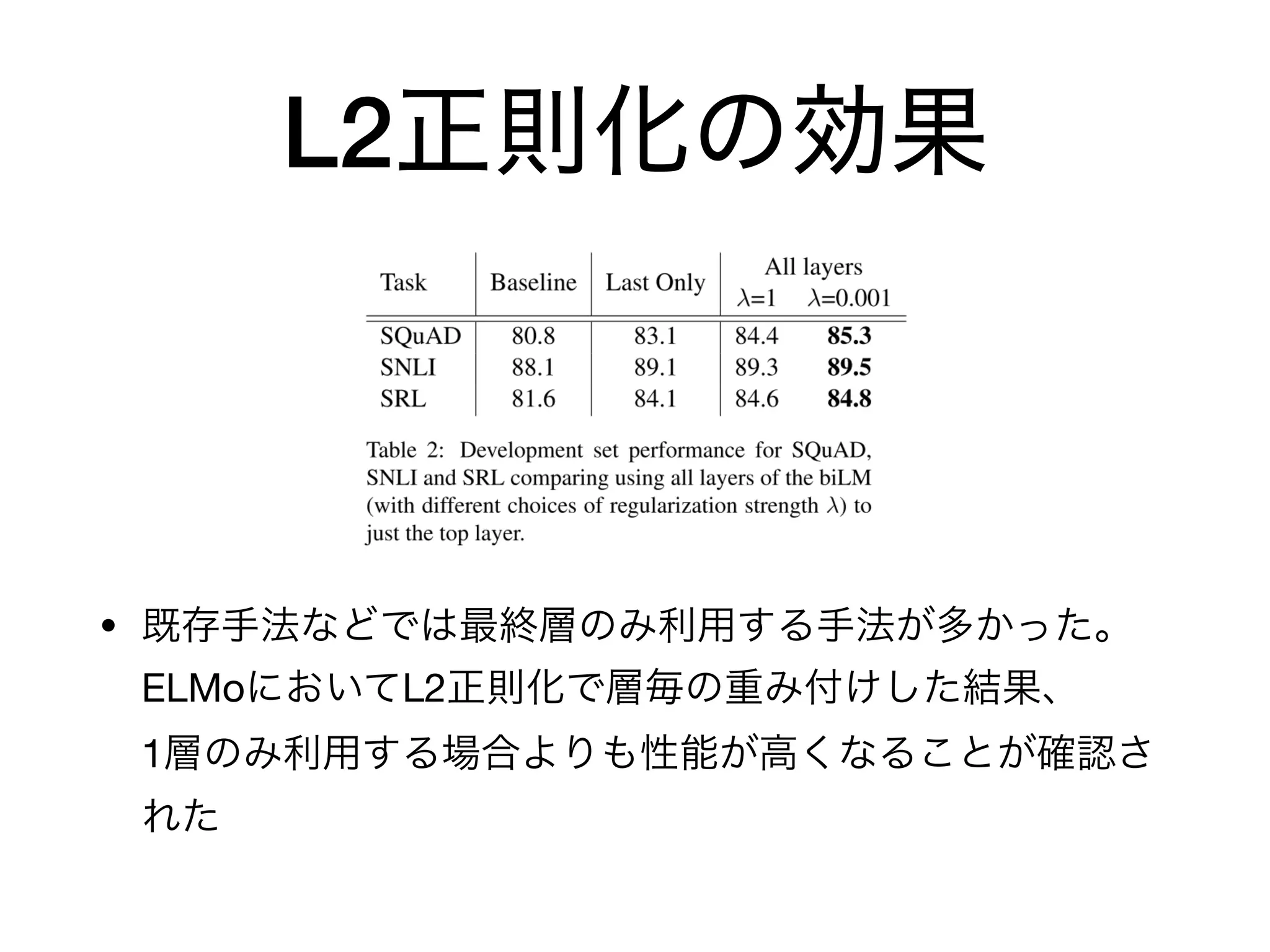

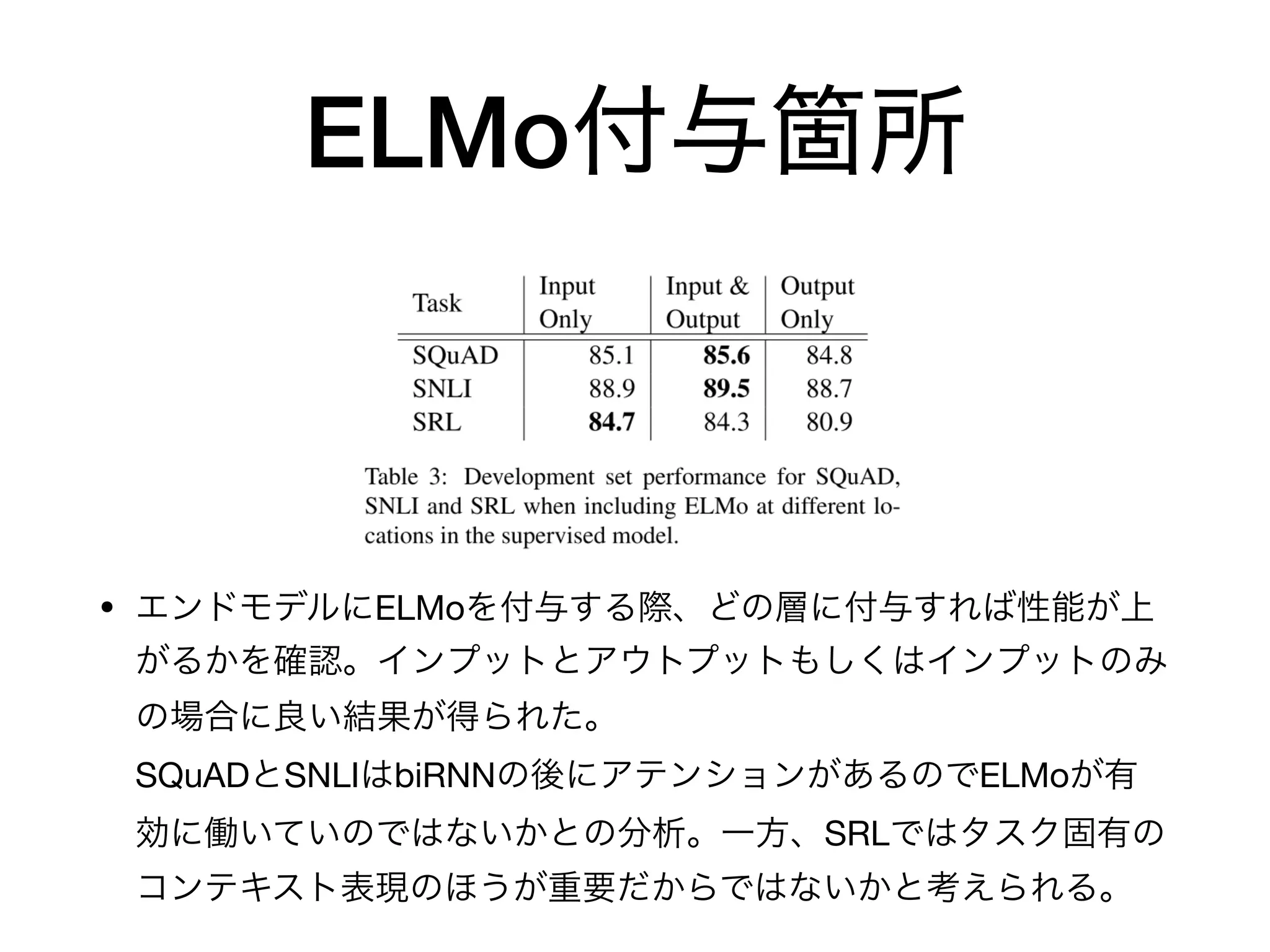

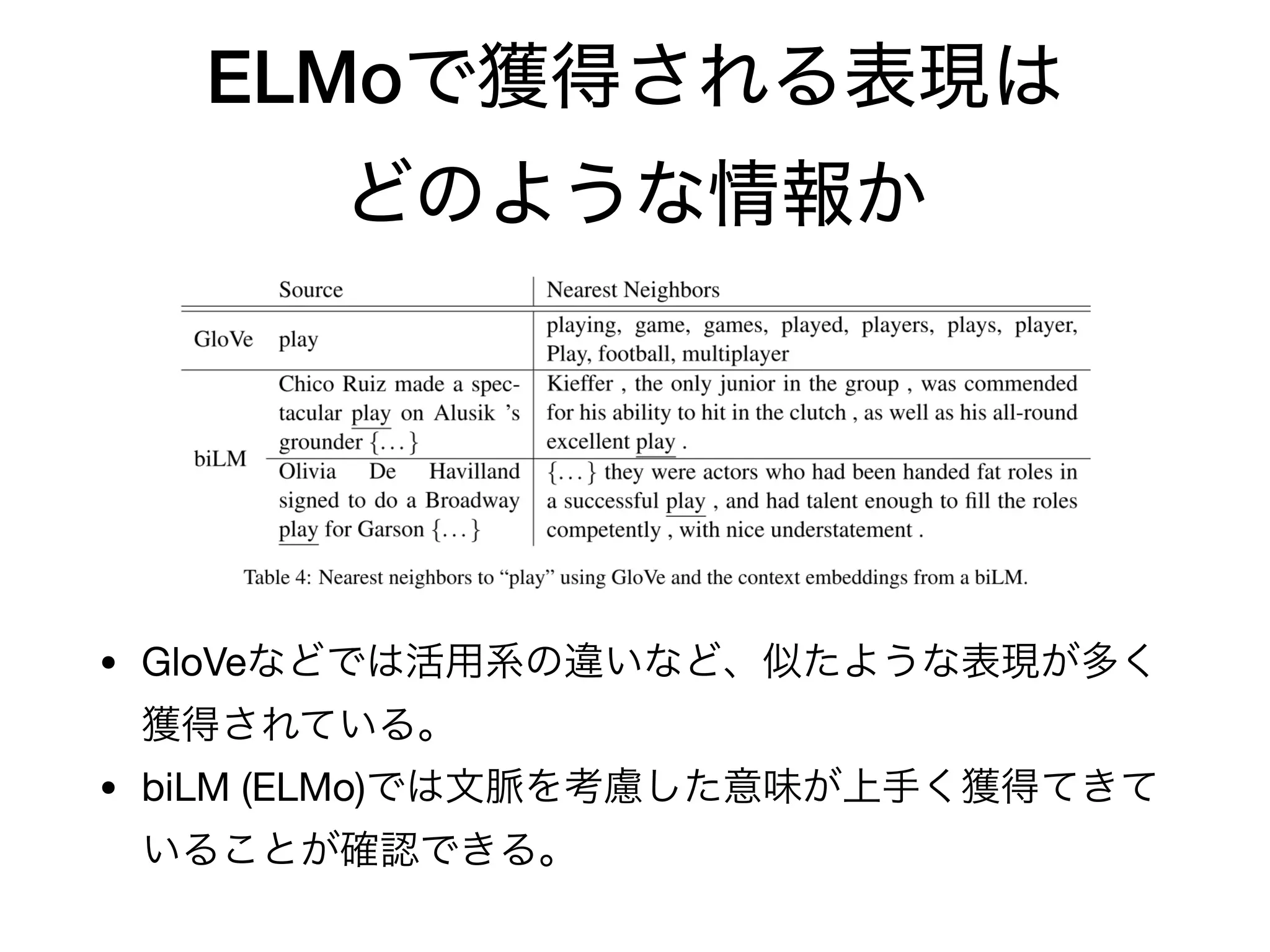

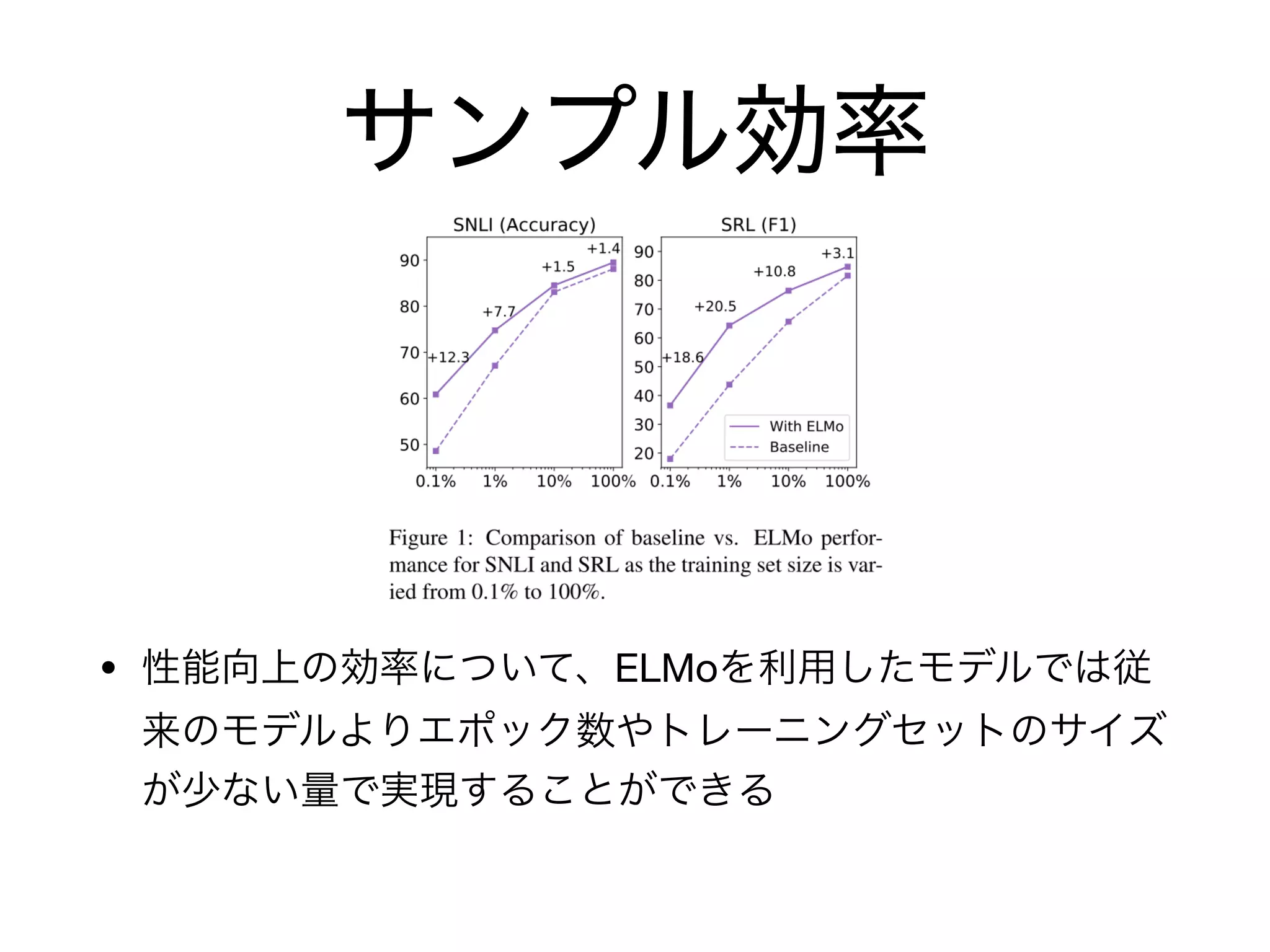

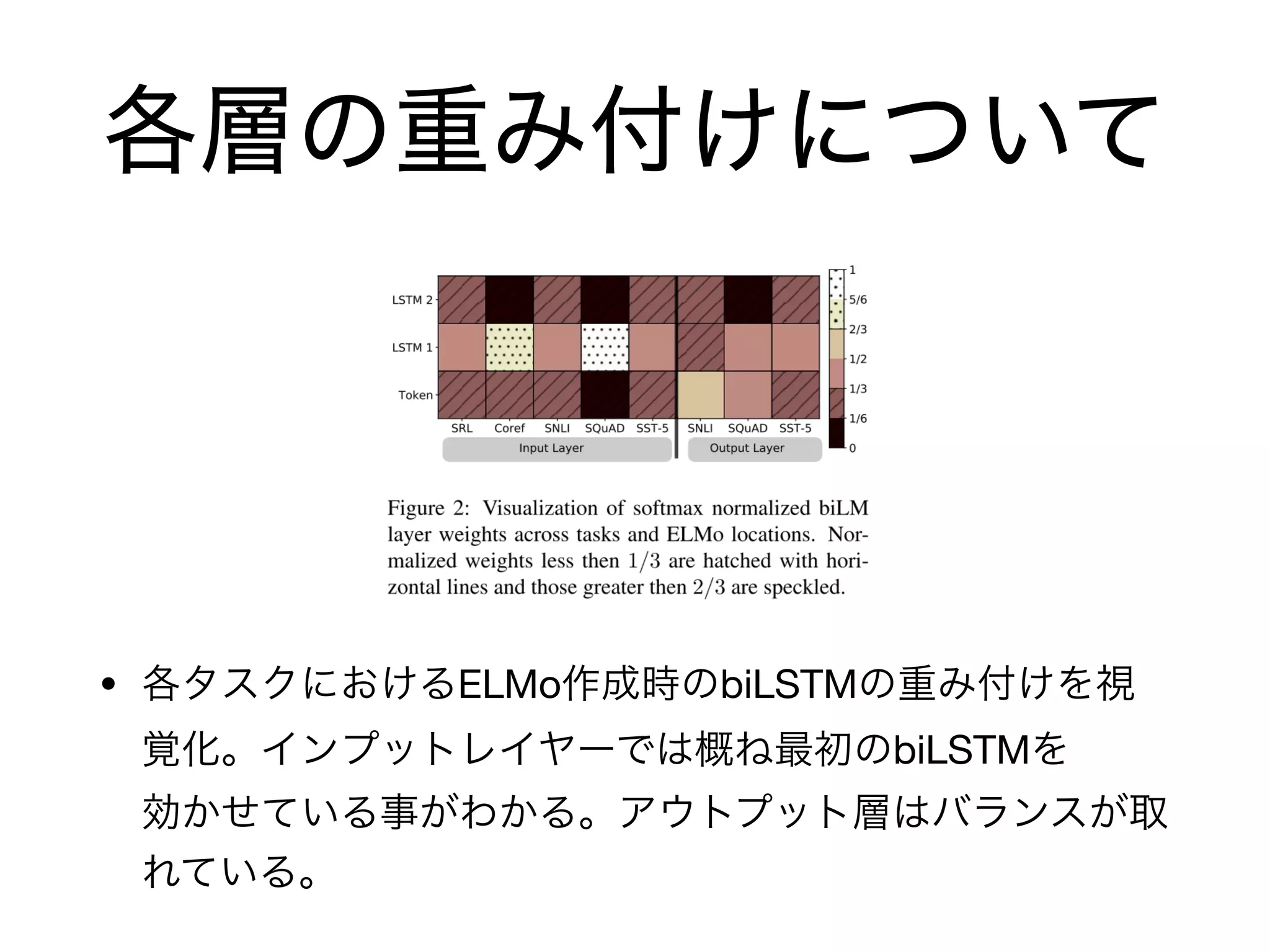

This document discusses ELMo (Embeddings from Language Models): deep contextualized word representations taken from a bidirectional language model (biLM) pre-trained on a large text corpus. ELMo builds word vectors with character convolutions followed by biLSTMs. Because each vector depends on the whole input sentence, the representations model both syntax and semantics and capture polysemy, so the same word can receive different vectors in different contexts. It then describes how ELMo vectors can be used as general-purpose features for many NLP tasks, such as question answering and semantic role labeling, where they achieve state-of-the-art results on several benchmarks.
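The summary does not spell out how the layers of the biLM become a single word vector. As described in the original ELMo paper, a downstream task learns softmax-normalized weights $s_j$ over the biLM layers and a scalar $\gamma$, giving $\mathrm{ELMo}_k = \gamma \sum_{j} s_j \, \mathbf{h}_{k,j}$ for token $k$. The sketch below illustrates only that weighted combination for one token. It assumes the per-layer vectors (layer 0 from the character-CNN token layer, then one per biLSTM layer) are already computed. The function names `softmax` and `elmo_scalar_mix` are hypothetical, and this is not the API of any ELMo library.

```python
import math
from typing import List


def softmax(xs: List[float]) -> List[float]:
    """Numerically stable softmax: turns raw layer weights into s_j that sum to 1."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def elmo_scalar_mix(layers: List[List[float]], w: List[float], gamma: float) -> List[float]:
    """Hypothetical sketch: collapse one token's biLM layer vectors into a single vector.

    layers: one vector per biLM layer (assumed: layer 0 = token layer, then each biLSTM layer)
    w:      unnormalized task-specific layer weights, softmax-normalized into s_j
    gamma:  task-specific scalar that rescales the mixed vector
    """
    if len(layers) != len(w):
        raise ValueError("need exactly one weight per layer")
    s = softmax(w)
    dim = len(layers[0])
    mixed = [0.0] * dim
    # Weighted sum over layers: sum_j s_j * h_j
    for s_j, h_j in zip(s, layers):
        for i in range(dim):
            mixed[i] += s_j * h_j[i]
    # Scale the whole vector by gamma
    return [gamma * v for v in mixed]


# Usage: three toy 2-dimensional layers with equal raw weights, so each s_j is 1/3.
vec = elmo_scalar_mix([[3.0, 0.0], [0.0, 3.0], [3.0, 3.0]], [0.0, 0.0, 0.0], 0.5)
print(vec)
```

Because the weights pass through a softmax, a task can emphasize lower layers (closer to syntax) or higher layers (closer to semantics) while keeping the mix a convex combination. The scalar $\gamma$ then lets the task adjust the overall magnitude of the vector.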