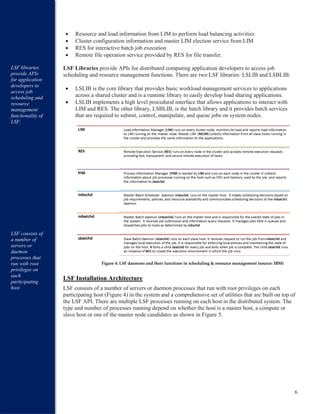

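IBM Platform LSF schedules workloads across technical computing clusters. Its modular design keeps scheduling policies separate from resource management.

LSF uses a master-host model. A master host collects load information from the hosts in the cluster. It then dispatches each job to a server host whose available resources, such as free job slots, memory, and CPU load, satisfy that job's requirements.

Several core daemons support this model:

- The Load Information Manager (LIM) collects load and configuration information on each host.
- The Process Information Manager (PIM) tracks the resource usage of running job processes.
- The Remote Execution Server (RES) runs tasks on remote hosts.

Together, these daemons let LSF distribute work across heterogeneous resources. On top of them, the LSF scheduler can apply several policies at once, such as fairshare, preemption, and backfill, so that scheduling decisions reflect business priorities. The result is higher utilization of shared resources and, in turn, higher user productivity.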
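The following Python sketch makes the placement idea concrete. It models the two-step decision the master host makes: first filter out hosts that cannot run the job, then rank the hosts that can.

This is a toy model under stated assumptions, not LSF's scheduler:

- The `HostLoad` and `Job` classes, the `select_host` and `dispatch` functions, and the host names are all hypothetical.
- Real LSF also weighs queue priority, fairshare, preemption, and other policies, which this sketch omits.

The two steps loosely mirror the `select[]` and `order[]` sections of an LSF resource requirement string, for example `bsub -R "select[mem>4000] order[ut]"`. Here `ut` is the CPU-utilization load index that LIM reports.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class HostLoad:
    """Hypothetical snapshot of one server host, standing in for LIM load data."""
    name: str
    free_slots: int  # job slots not yet occupied
    mem_mb: int      # available memory in MB
    ut: float        # CPU utilization, 0.0 to 1.0


@dataclass
class Job:
    """Hypothetical, simplified job resource requirement."""
    job_id: int
    slots: int
    mem_mb: int


def select_host(job: Job, hosts: list[HostLoad]) -> Optional[HostLoad]:
    # Filter step (cf. select[]): keep hosts that can satisfy the requirement.
    eligible = [h for h in hosts
                if h.free_slots >= job.slots and h.mem_mb >= job.mem_mb]
    if not eligible:
        return None  # no host fits; the job stays pending
    # Rank step (cf. order[ut]): least-utilized host first, then most free memory.
    return min(eligible, key=lambda h: (h.ut, -h.mem_mb))


def dispatch(jobs: list[Job], hosts: list[HostLoad]) -> dict[int, Optional[str]]:
    """Place jobs in submission order, booking slots and memory as we go."""
    placements: dict[int, Optional[str]] = {}
    for job in jobs:
        host = select_host(job, hosts)
        if host is None:
            placements[job.job_id] = None
            continue
        # Book the resources so later jobs see the reduced capacity.
        # (ut is left unchanged; a real cluster would reflect it in the next load report.)
        host.free_slots -= job.slots
        host.mem_mb -= job.mem_mb
        placements[job.job_id] = host.name
    return placements


if __name__ == "__main__":
    hosts = [
        HostLoad("hostA", free_slots=2, mem_mb=4000, ut=0.75),
        HostLoad("hostB", free_slots=8, mem_mb=32000, ut=0.20),
        HostLoad("hostC", free_slots=4, mem_mb=2000, ut=0.05),
    ]
    jobs = [Job(1, slots=4, mem_mb=8000), Job(2, slots=2, mem_mb=1000),
            Job(3, slots=16, mem_mb=1000)]
    print(dispatch(jobs, hosts))  # {1: 'hostB', 2: 'hostC', 3: None}
```

Job 1 fits only on `hostB`. Job 2 fits everywhere, so it lands on the least-loaded host, `hostC`. Job 3 requests more slots than any host has free, so it stays pending until resources become available.

Handling jobs strictly in submission order is a first-come, first-served simplification. Scheduling policies such as fairshare would change which job is considered first.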