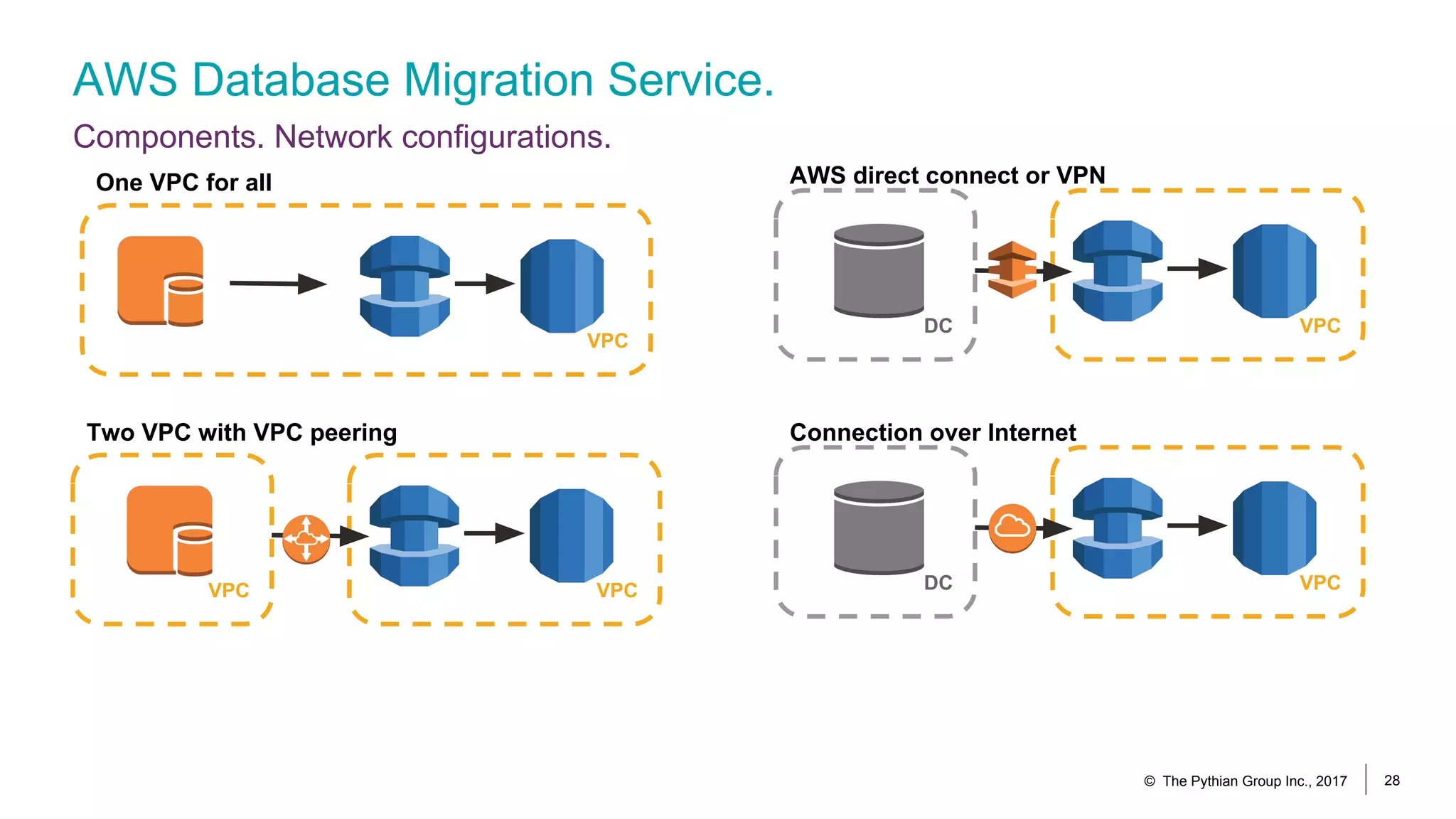

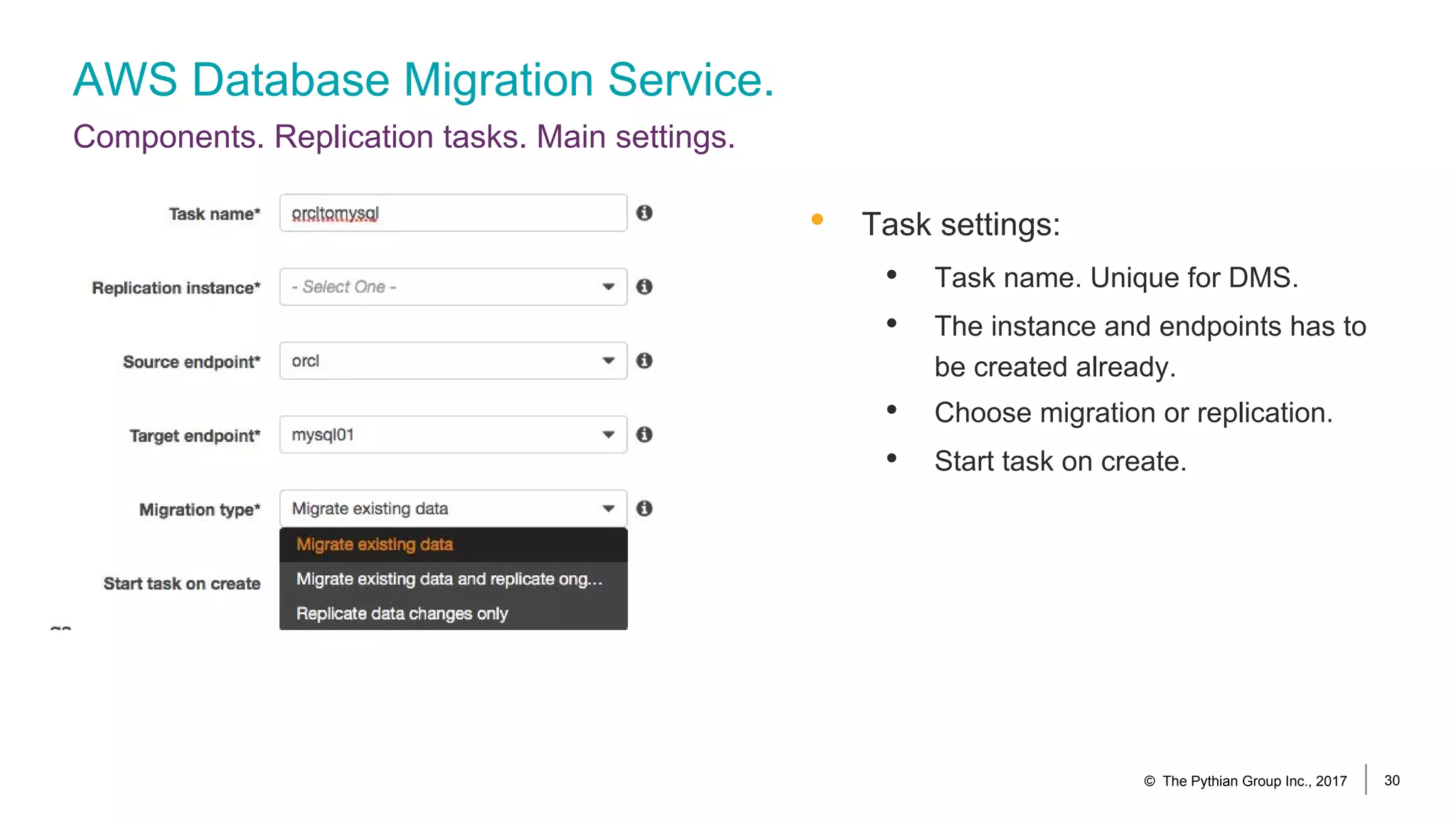

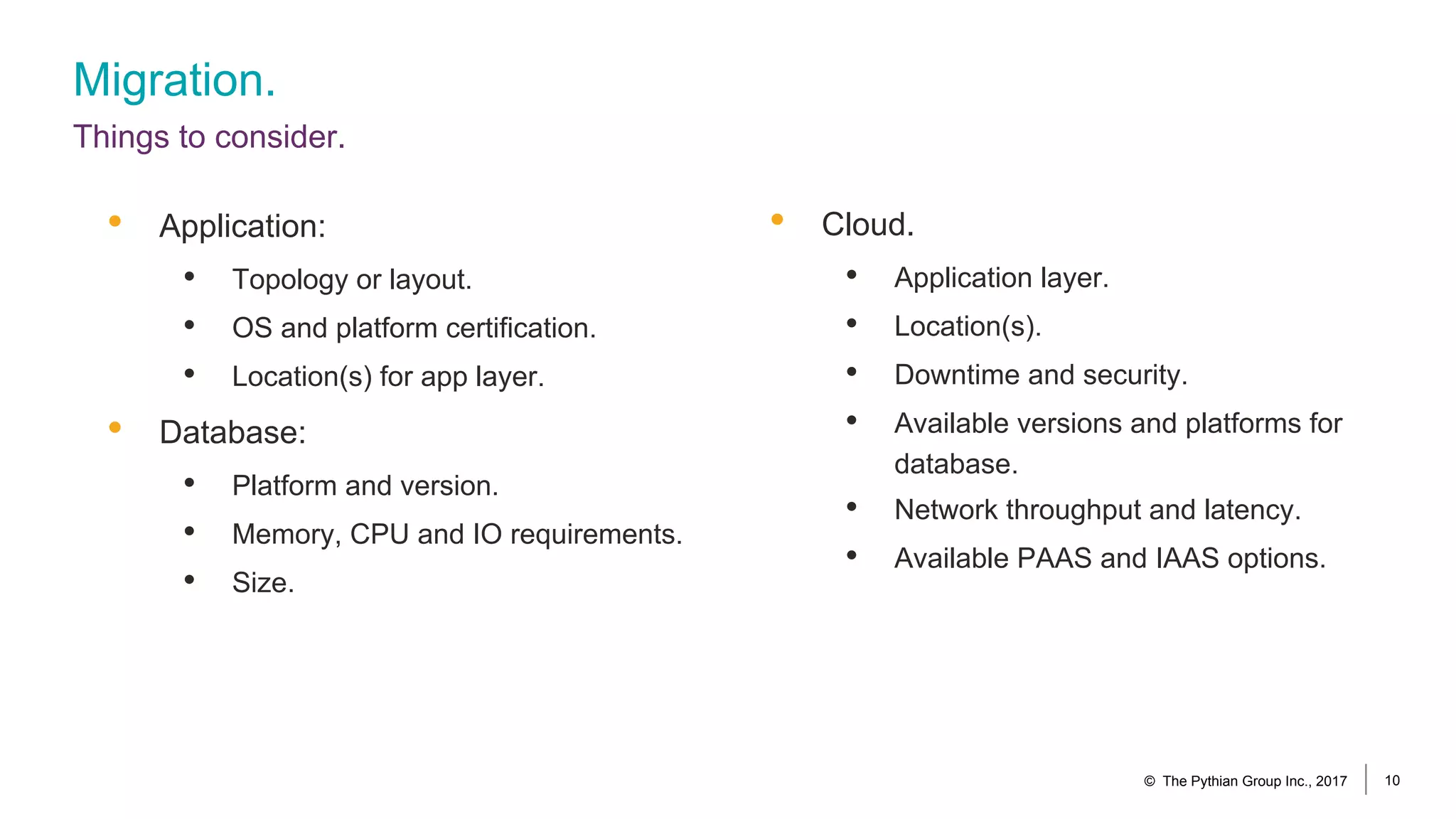

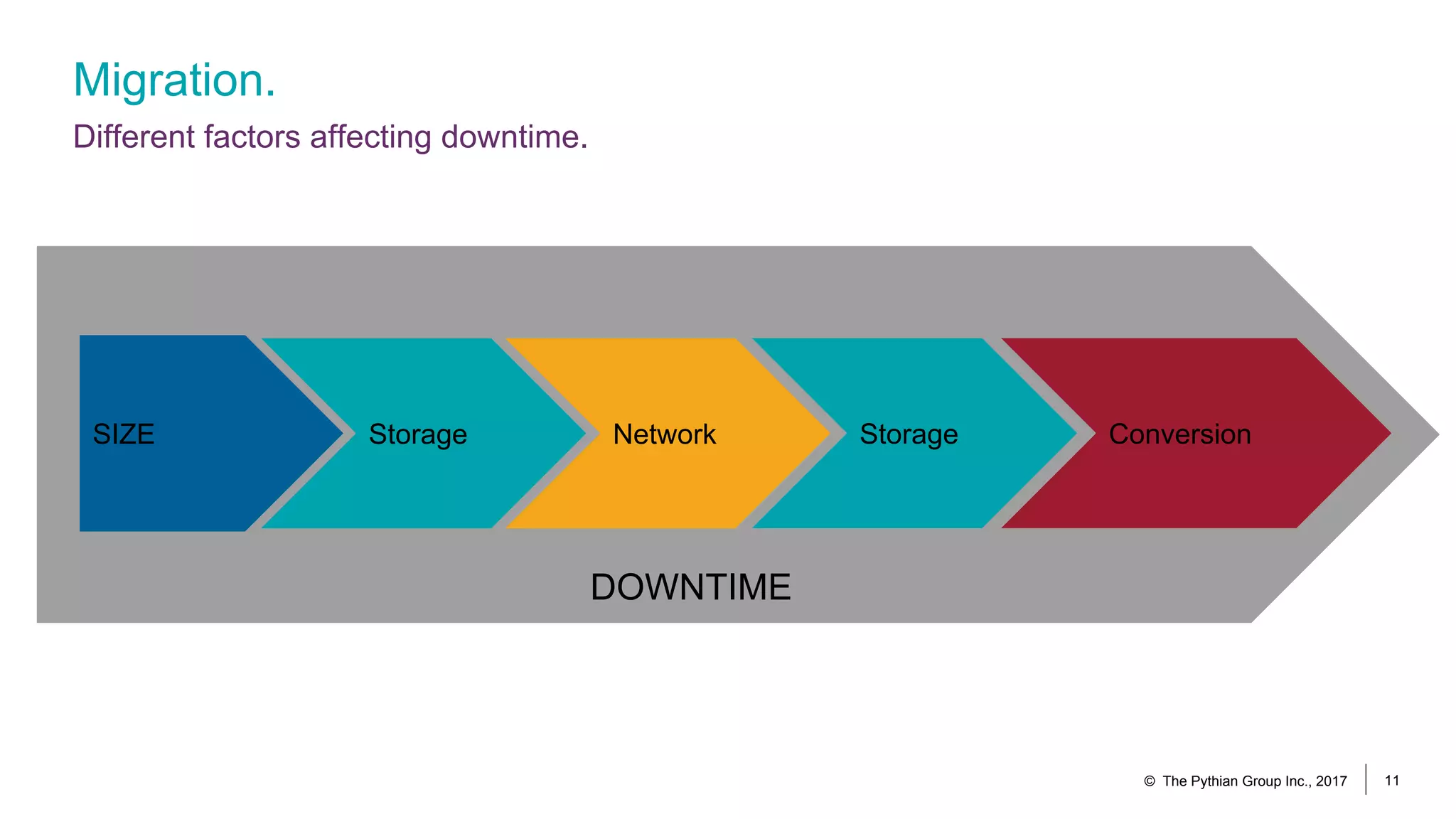

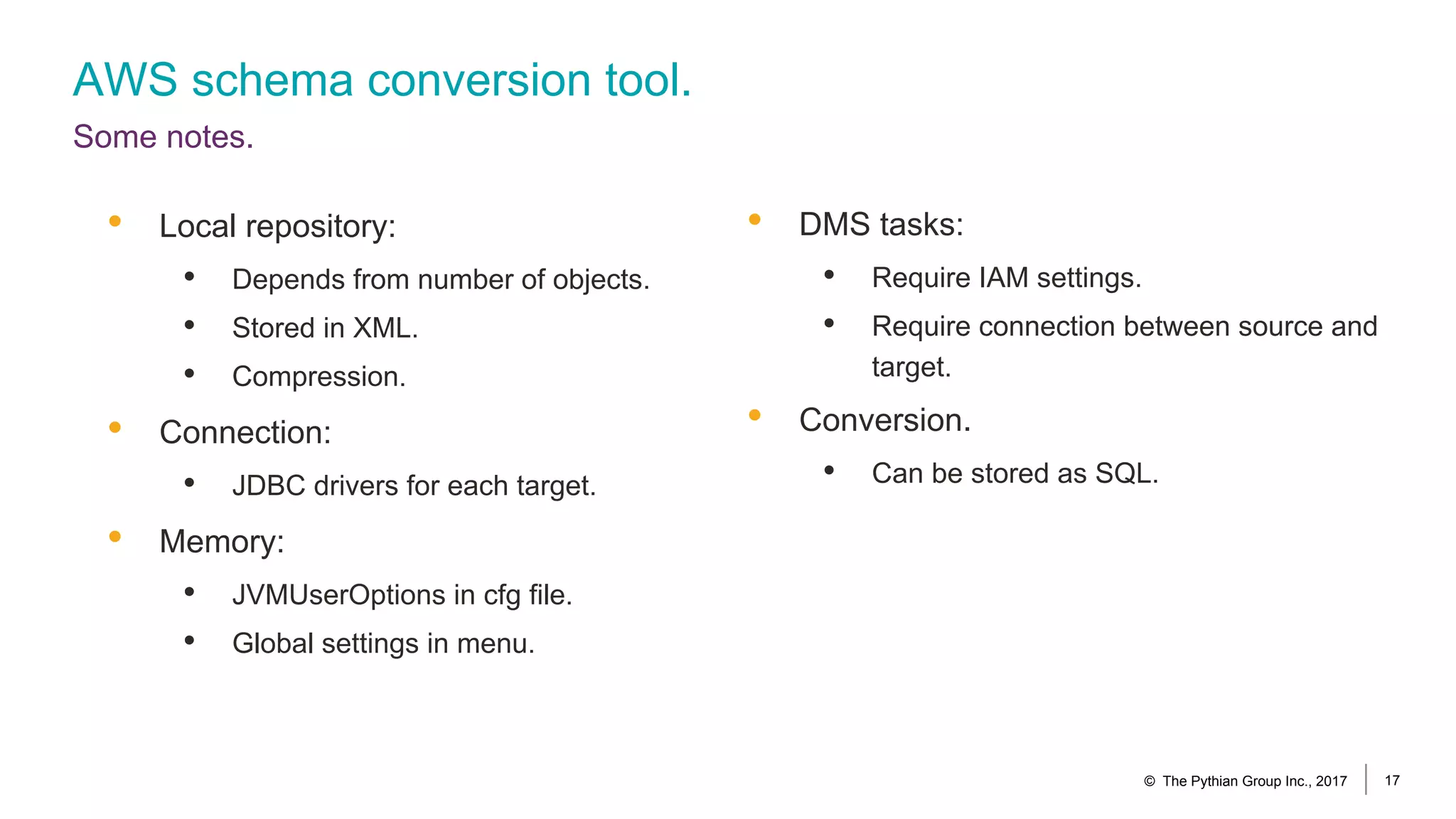

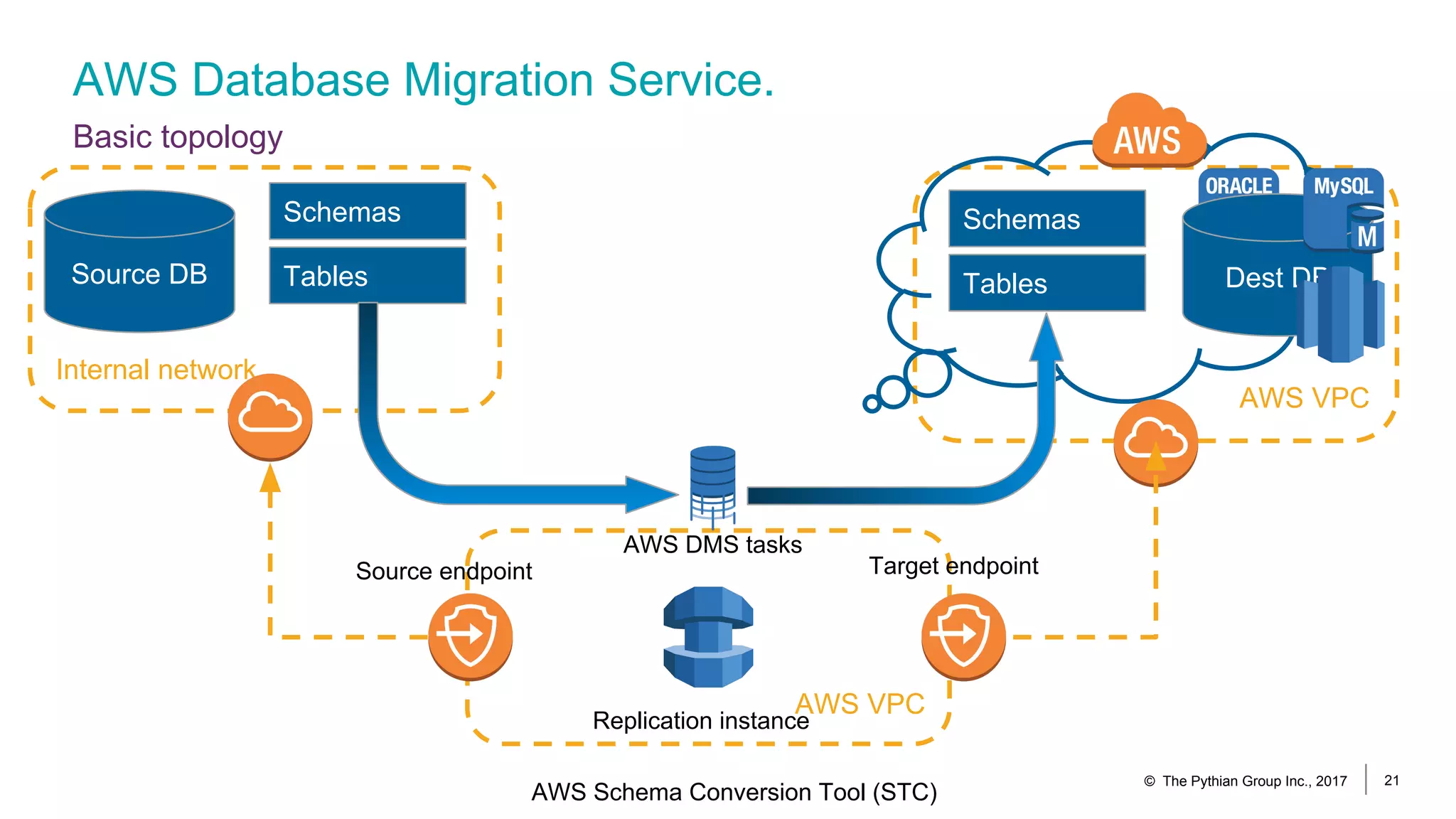

The document outlines the use of AWS Database Migration Service (DMS) and the AWS Schema Conversion Tool for cloud migrations, detailing the migration goals and considerations, including downtime reduction strategies. It highlights the various components of DMS, such as replication instances and network configurations, while also discussing alternative migration tools like Oracle Data Guard and GoldenGate. Additionally, the document emphasizes the importance of planning and the potential obstacles companies may face during the migration process.

![AWS Database Migration Service.

Components. Replication instance.

© The Pythian Group Inc., 2017 26

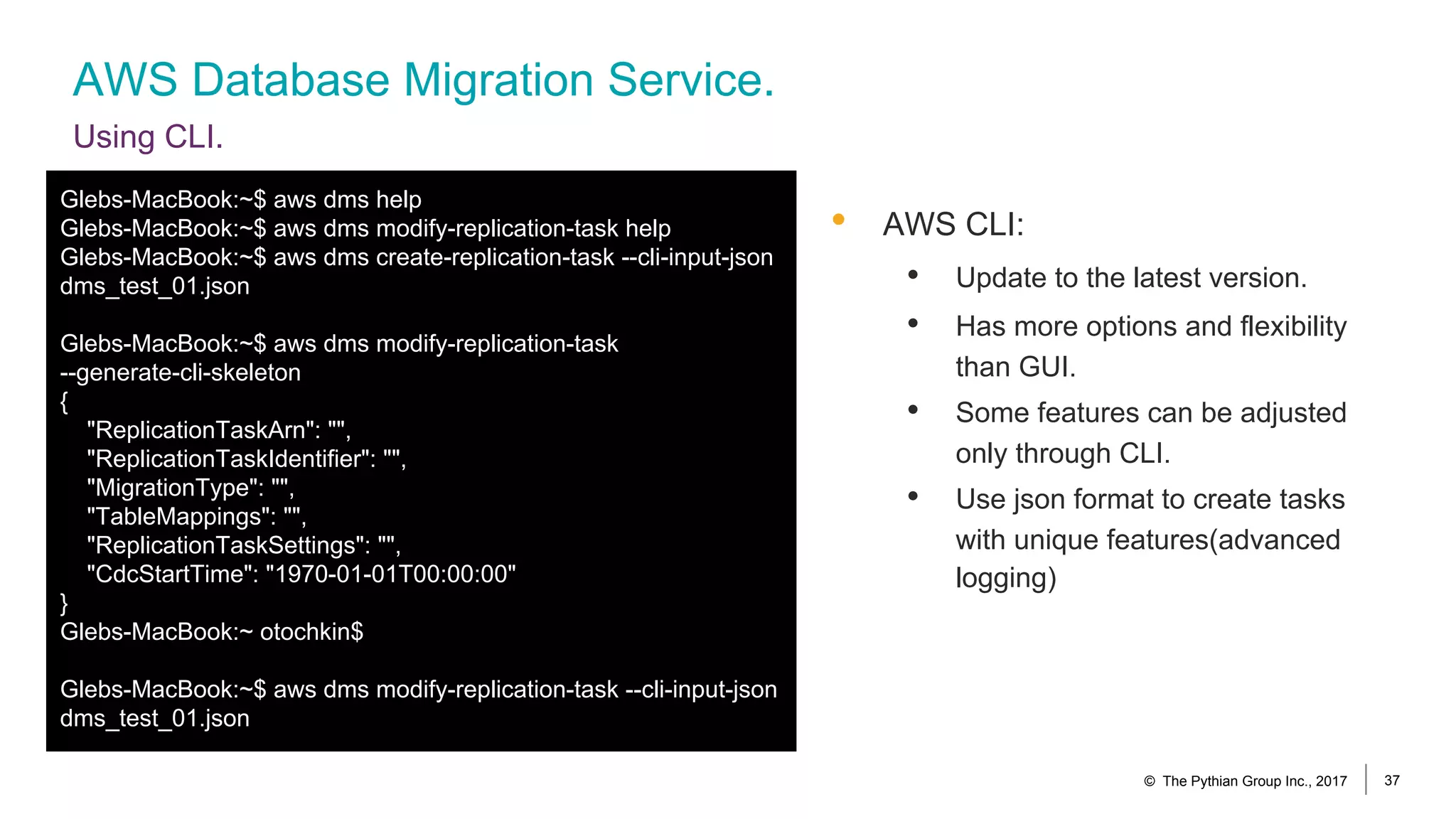

Glebs-MacBook:~$ aws dms describe-replication-instances --query

'ReplicationInstances[*].{ARN:ReplicationInstanceArn,MaintenanceWindow:PreferredMaintenanceWindow}'

[

{

"MaintenanceWindow": "fri:10:46-fri:11:16",

"ARN": "arn:aws:dms:us-east-1:2221234262:rep:ZXUE23B45HRVGF6ZNHN723GP6E"

}

]

Glebs-MacBook:~$ aws dms modify-replication-instance --replication-instance-arn

arn:aws:dms:us-east-1:2221234262:rep:ZXUE23B45HRVGF6ZNHN723GP6E --preferred-maintenance-window

"sun:10:46-sun:11:16"

…….](https://image.slidesharecdn.com/howtousedmsinawsmigrations-170911061230/75/db-tech-showcase-Tokyo-2017-C24-Taking-off-to-the-clouds-How-to-use-DMS-in-AWS-migrations-by-The-Pythian-Group-Inc-Gleb-Otochkin-26-2048.jpg)
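The maintenance-window change on the slide can be wrapped in a small guard so that a mistyped window is caught before anything is sent to AWS. The following is only a minimal sketch: `is_valid_window`, `set_maintenance_window` and the `DRY_RUN` variable are hypothetical names introduced here, not part of the AWS CLI, and accepting day names in any letter case is an assumption. It checks only the `ddd:hh:mm-ddd:hh:mm` shape seen in the slide output and leaves any further constraints to the service.

```shell
# Hypothetical helper (not an AWS CLI feature): succeed only if the argument
# has the ddd:hh:mm-ddd:hh:mm shape shown in the slide output.
# Assumption: day names are accepted in any letter case.
is_valid_window() {
  day='(mon|tue|wed|thu|fri|sat|sun)'
  hhmm='([01][0-9]|2[0-3]):[0-5][0-9]'
  printf '%s\n' "$1" | tr '[:upper:]' '[:lower:]' |
    grep -Eq "^${day}:${hhmm}-${day}:${hhmm}\$"
}

# Hypothetical wrapper around the command from the slide.
# With DRY_RUN=1 it prints the command instead of calling AWS.
set_maintenance_window() {
  arn=$1
  window=$2
  if ! is_valid_window "$window"; then
    echo "invalid maintenance window: $window" >&2
    return 1
  fi
  if [ "${DRY_RUN:-0}" = 1 ]; then
    echo "aws dms modify-replication-instance --replication-instance-arn $arn --preferred-maintenance-window $window"
  else
    aws dms modify-replication-instance \
      --replication-instance-arn "$arn" \
      --preferred-maintenance-window "$window"
  fi
}
```

Calling `DRY_RUN=1 set_maintenance_window <arn> "sun:10:46-sun:11:16"` prints the command for review; without `DRY_RUN` the same call needs the AWS CLI installed and configured with credentials.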