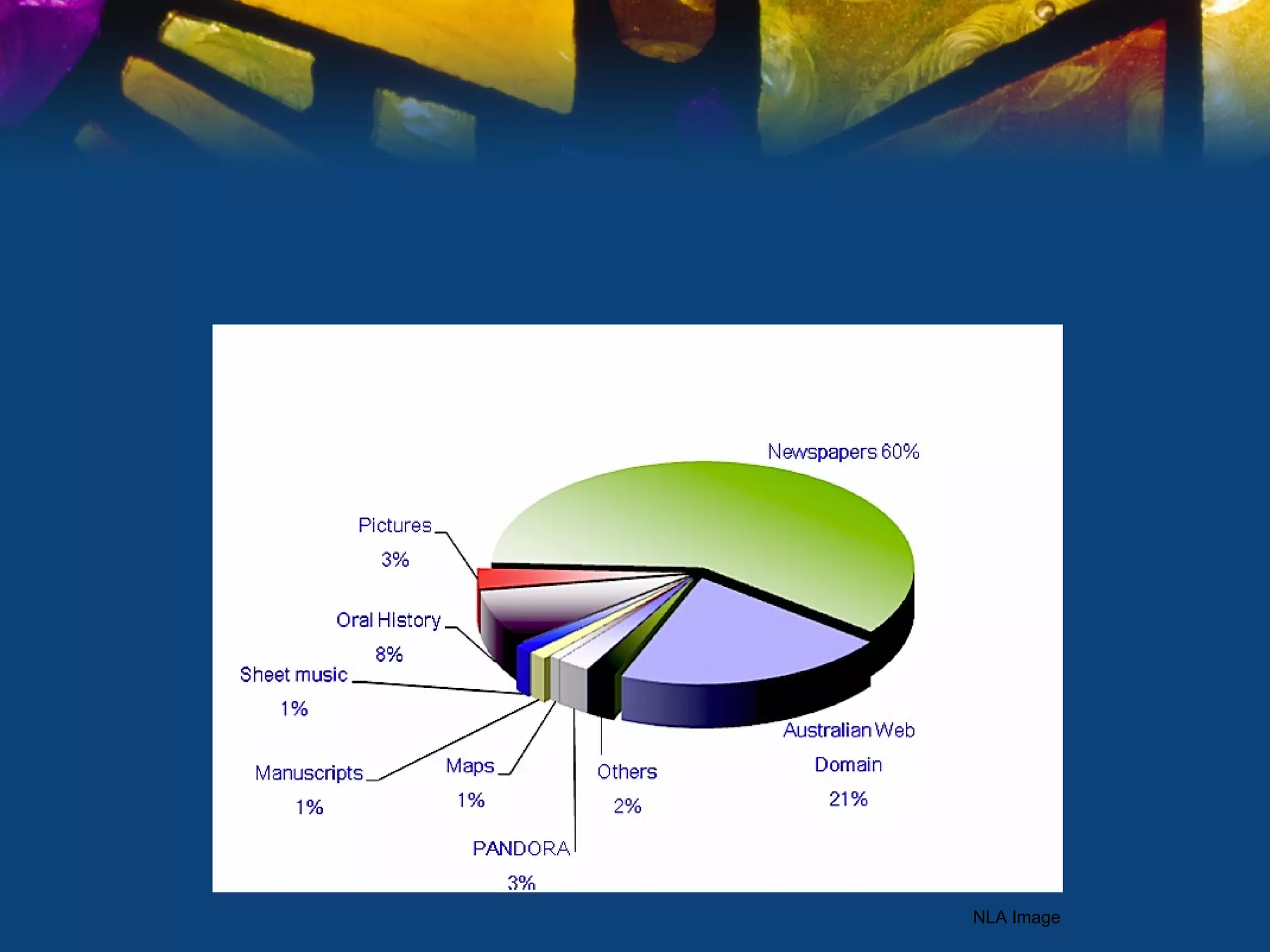

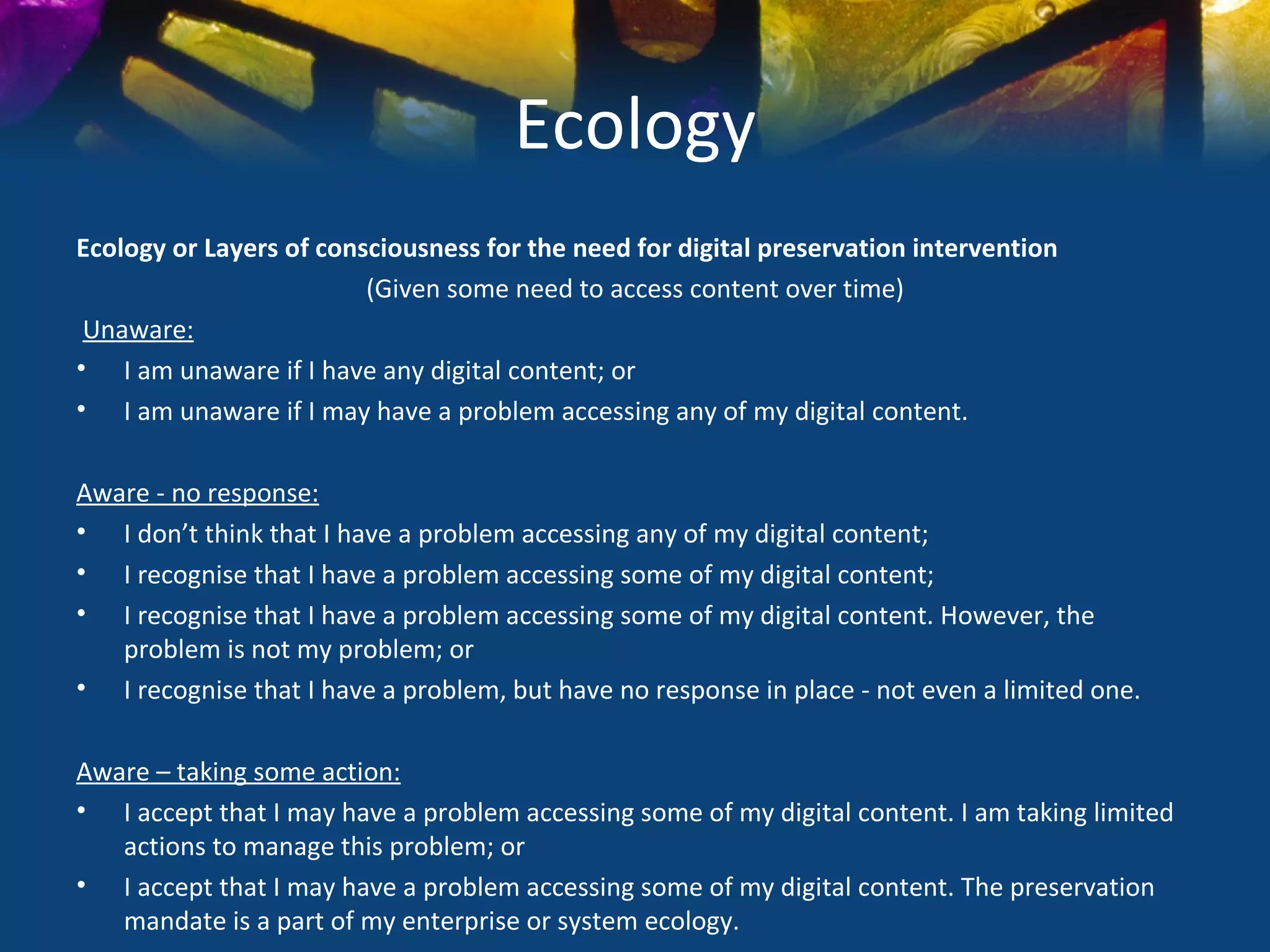

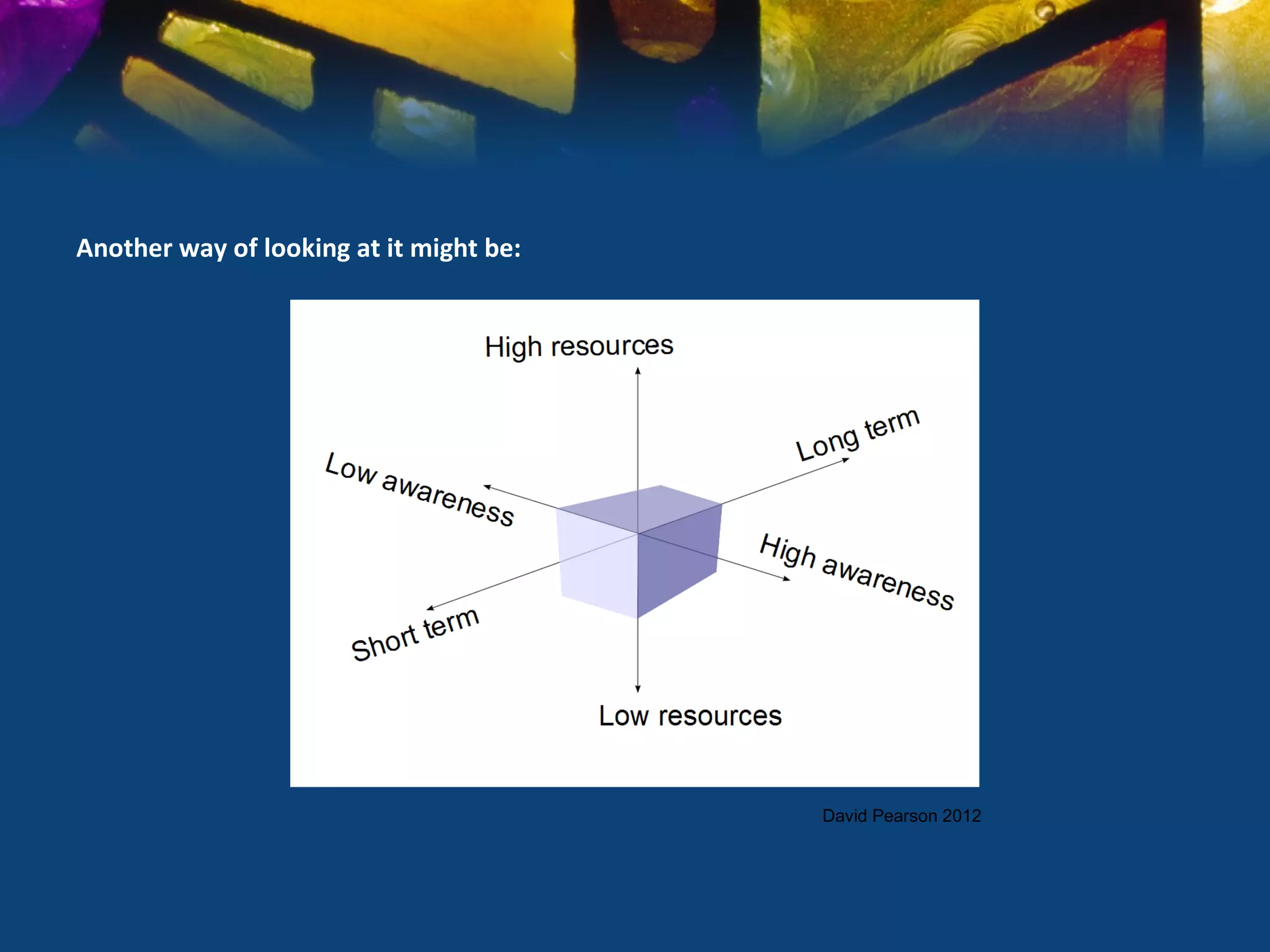

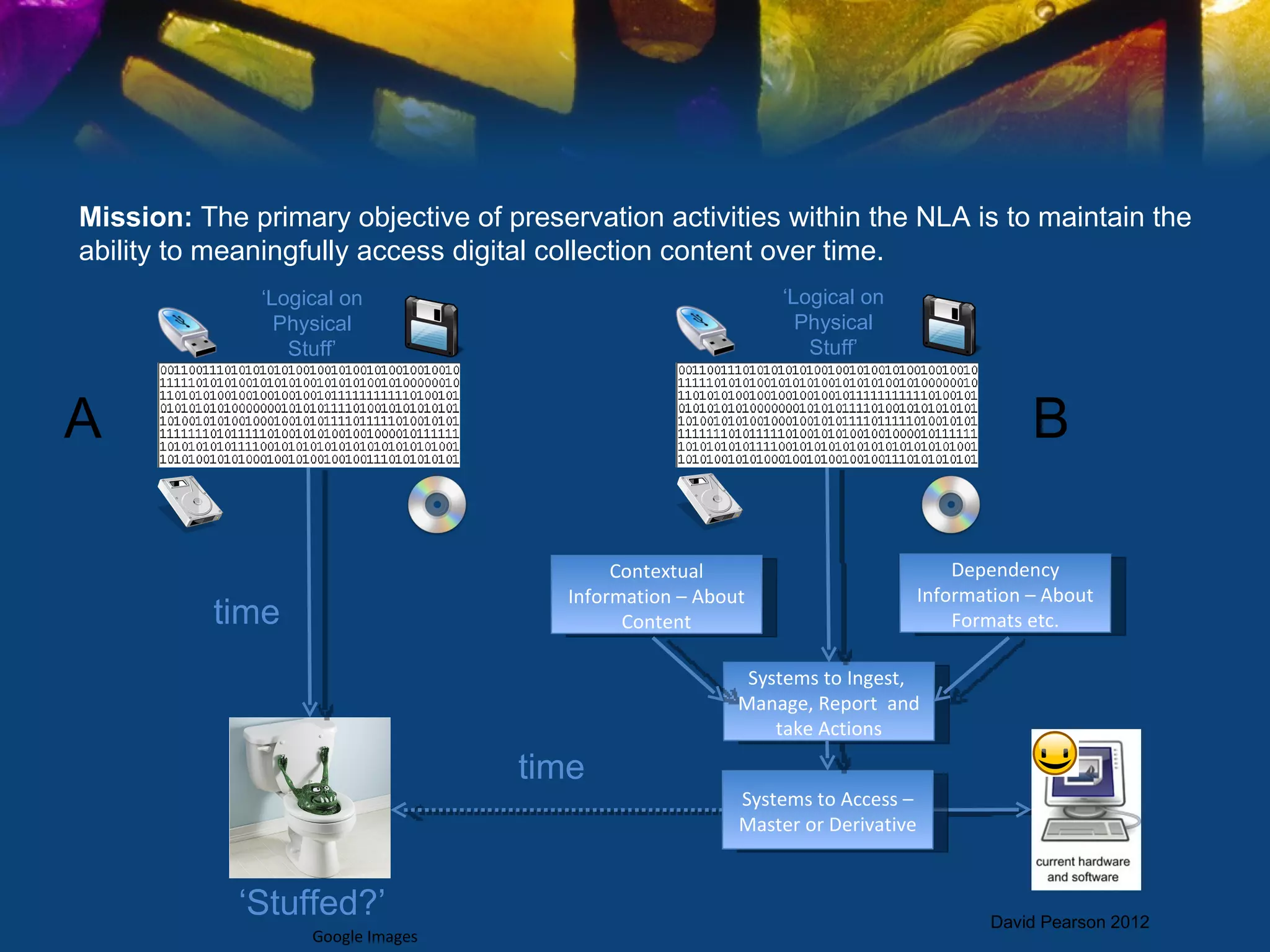

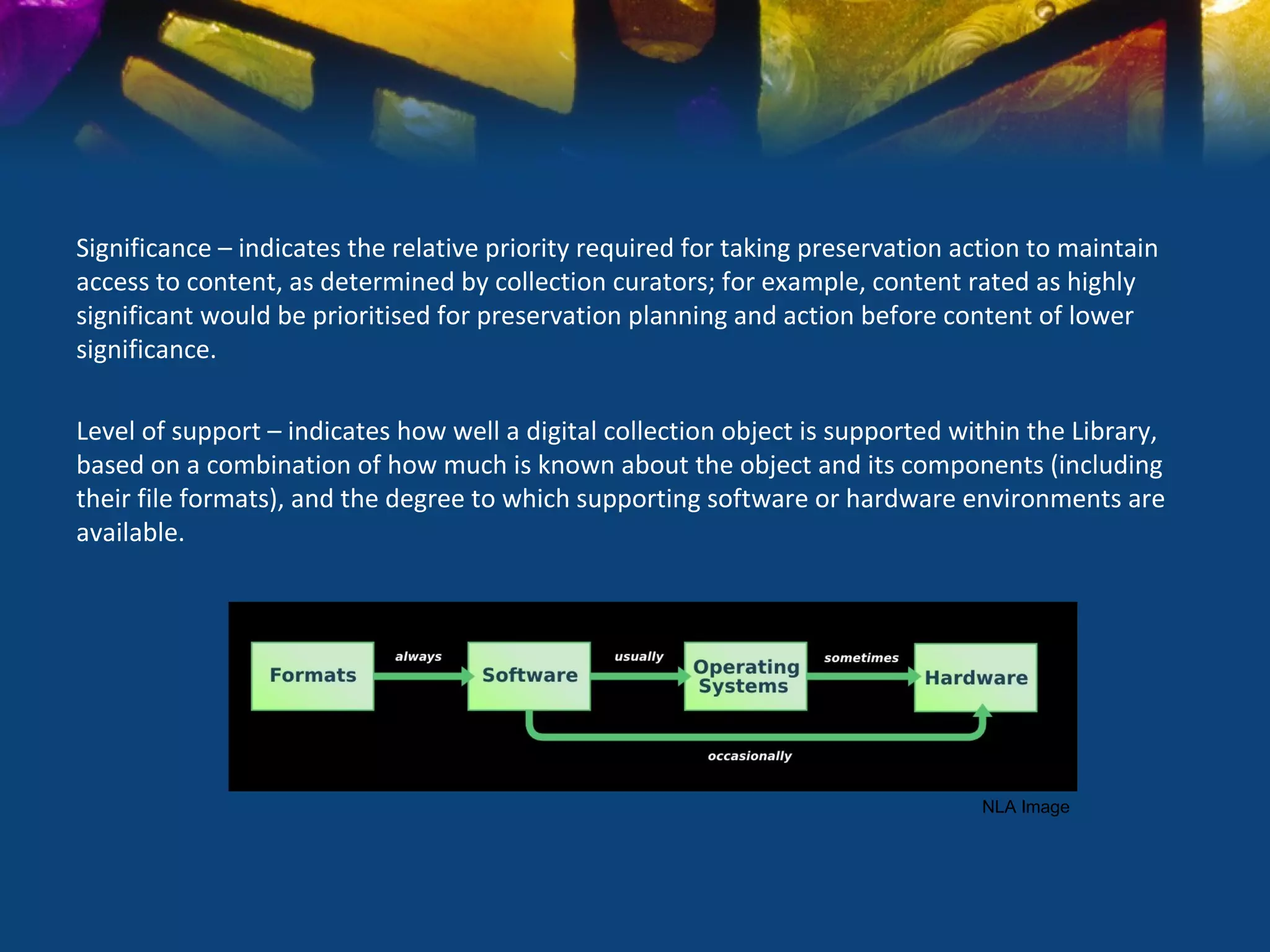

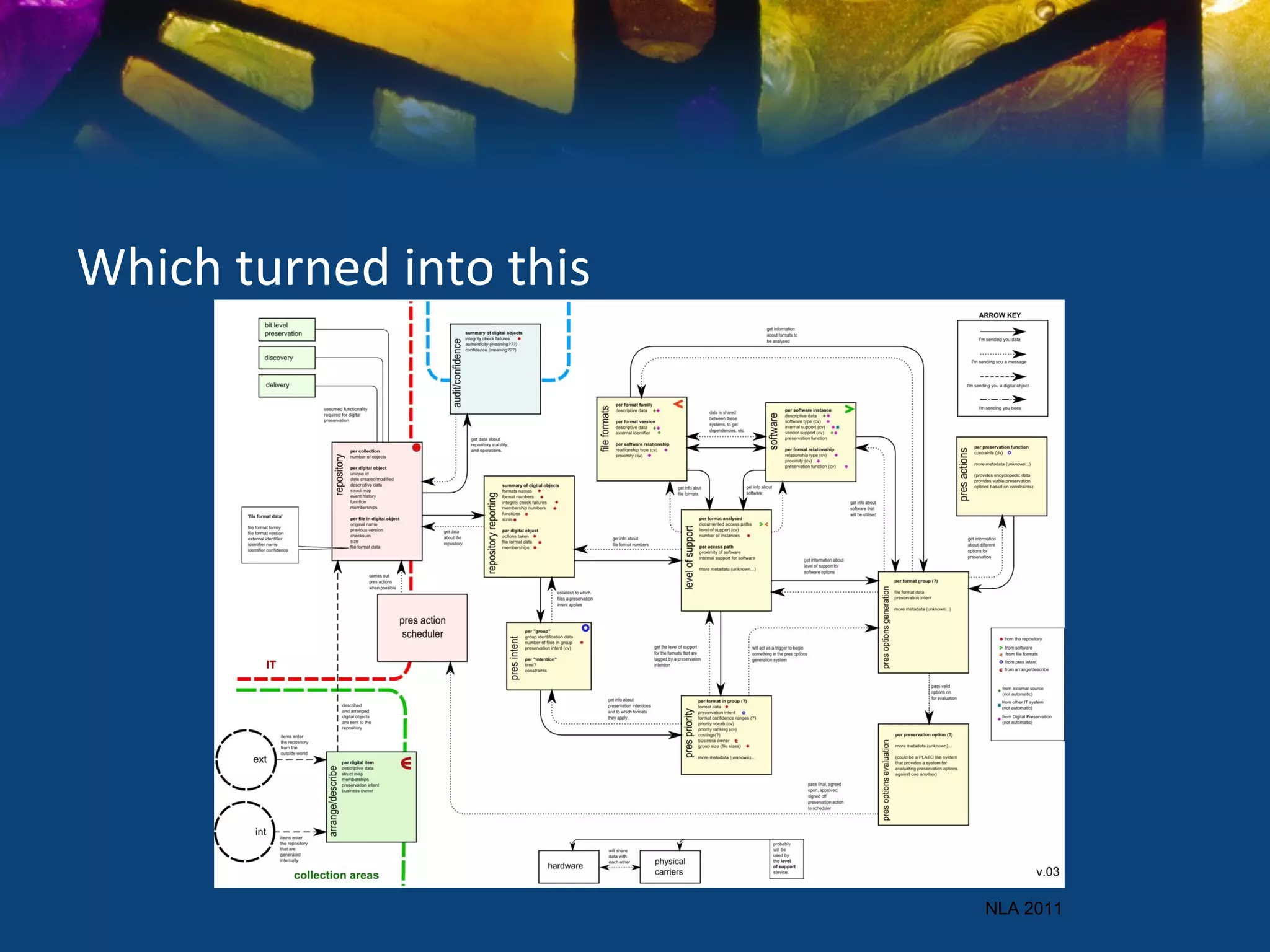

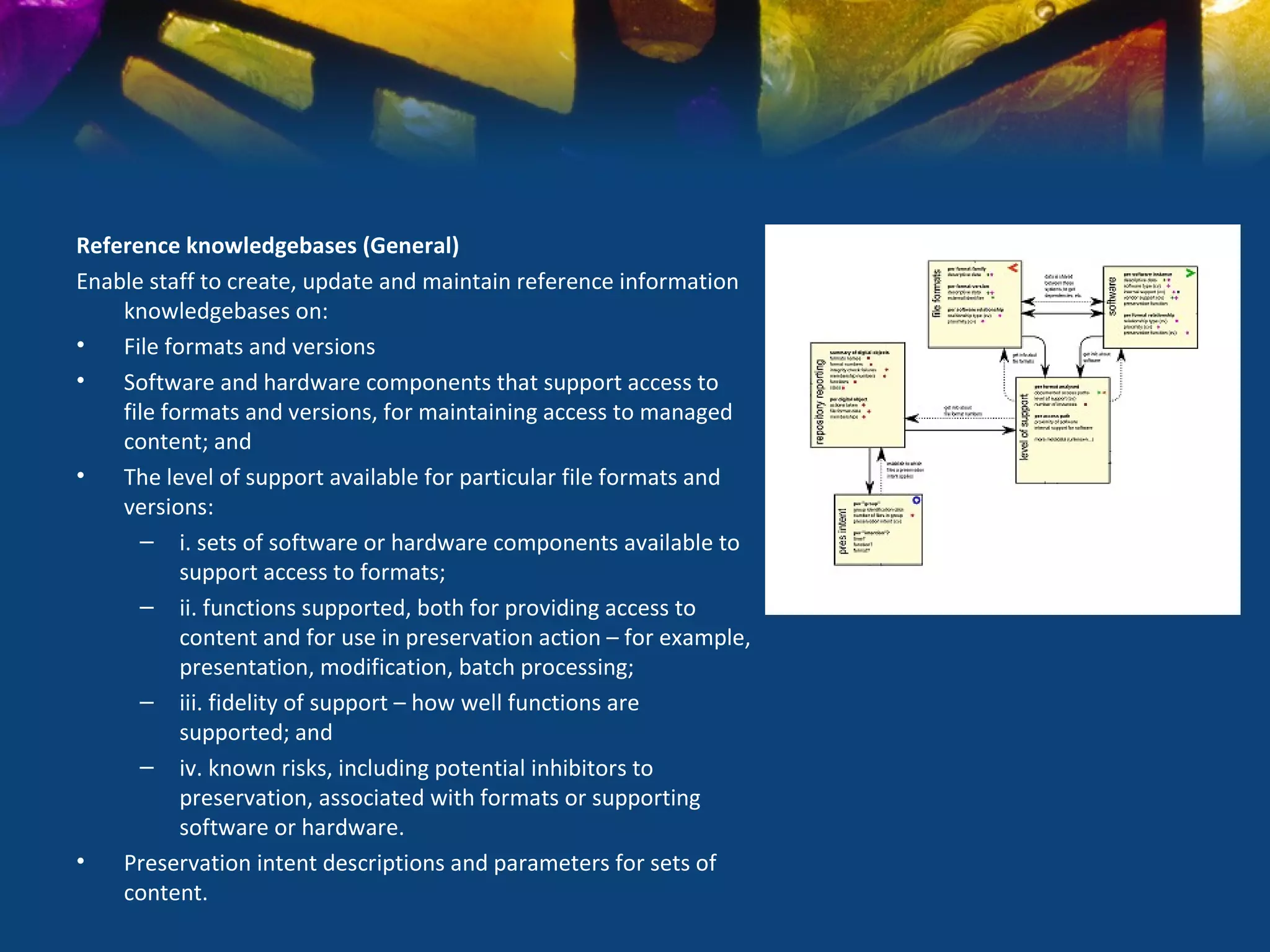

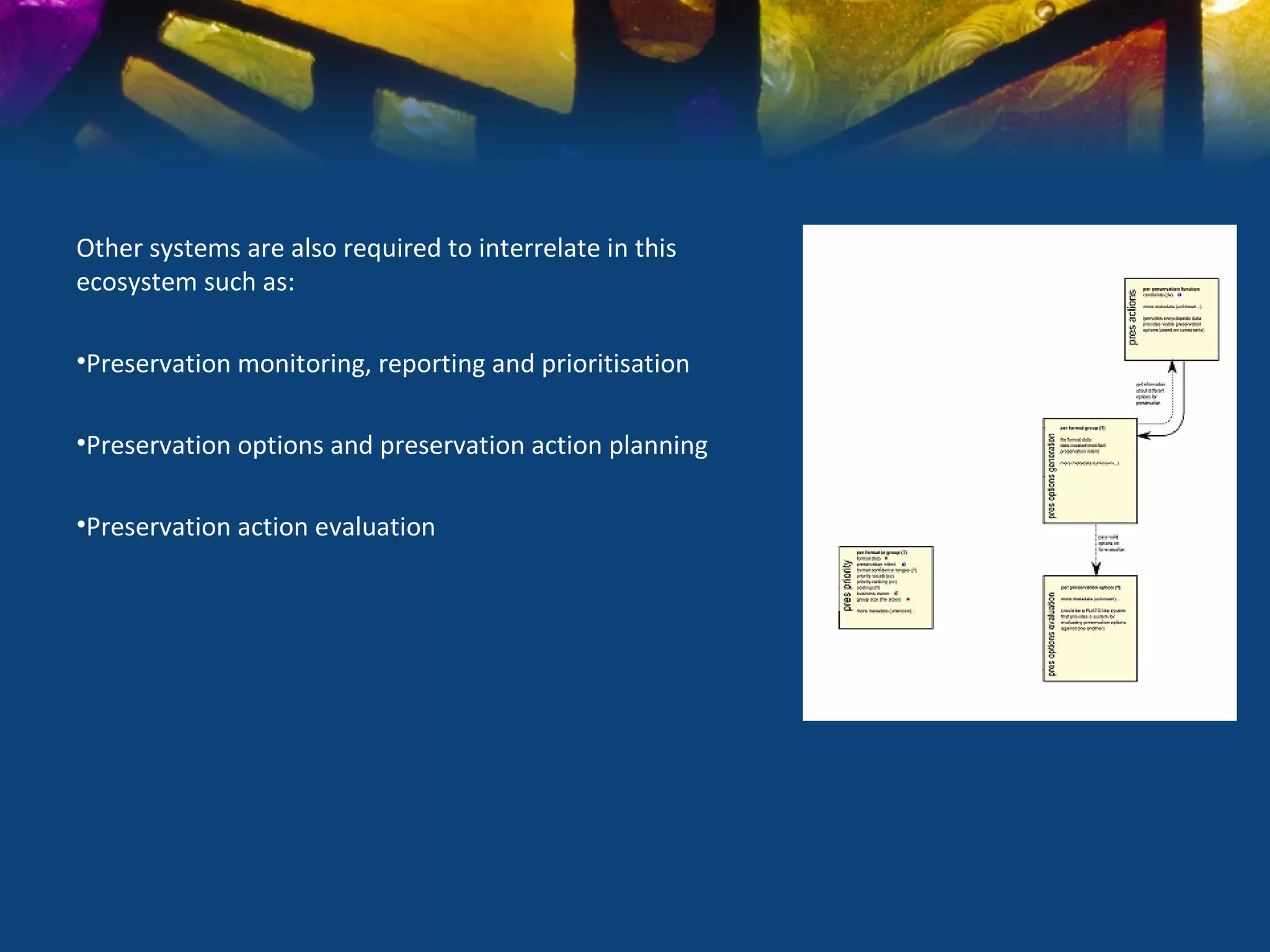

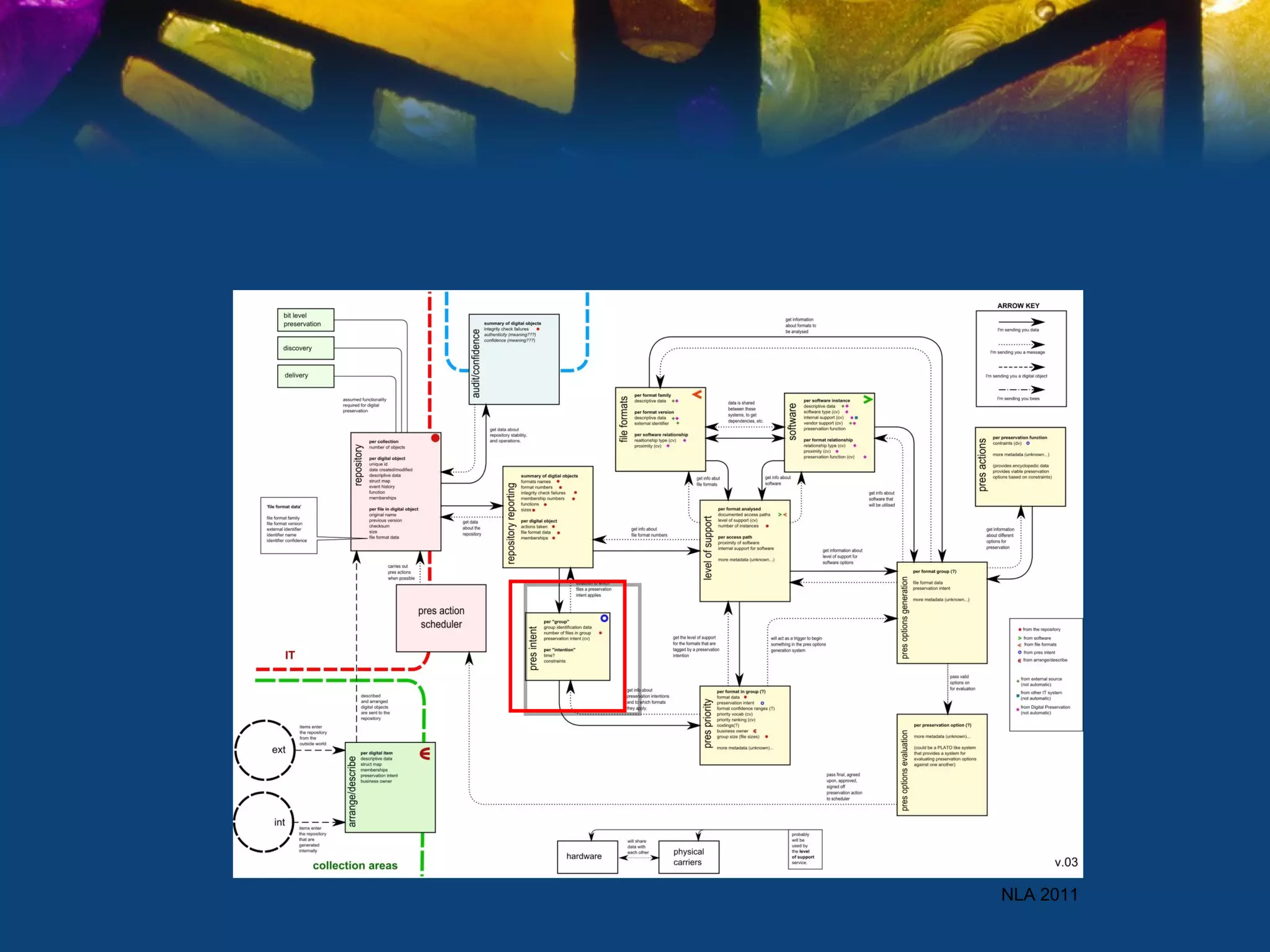

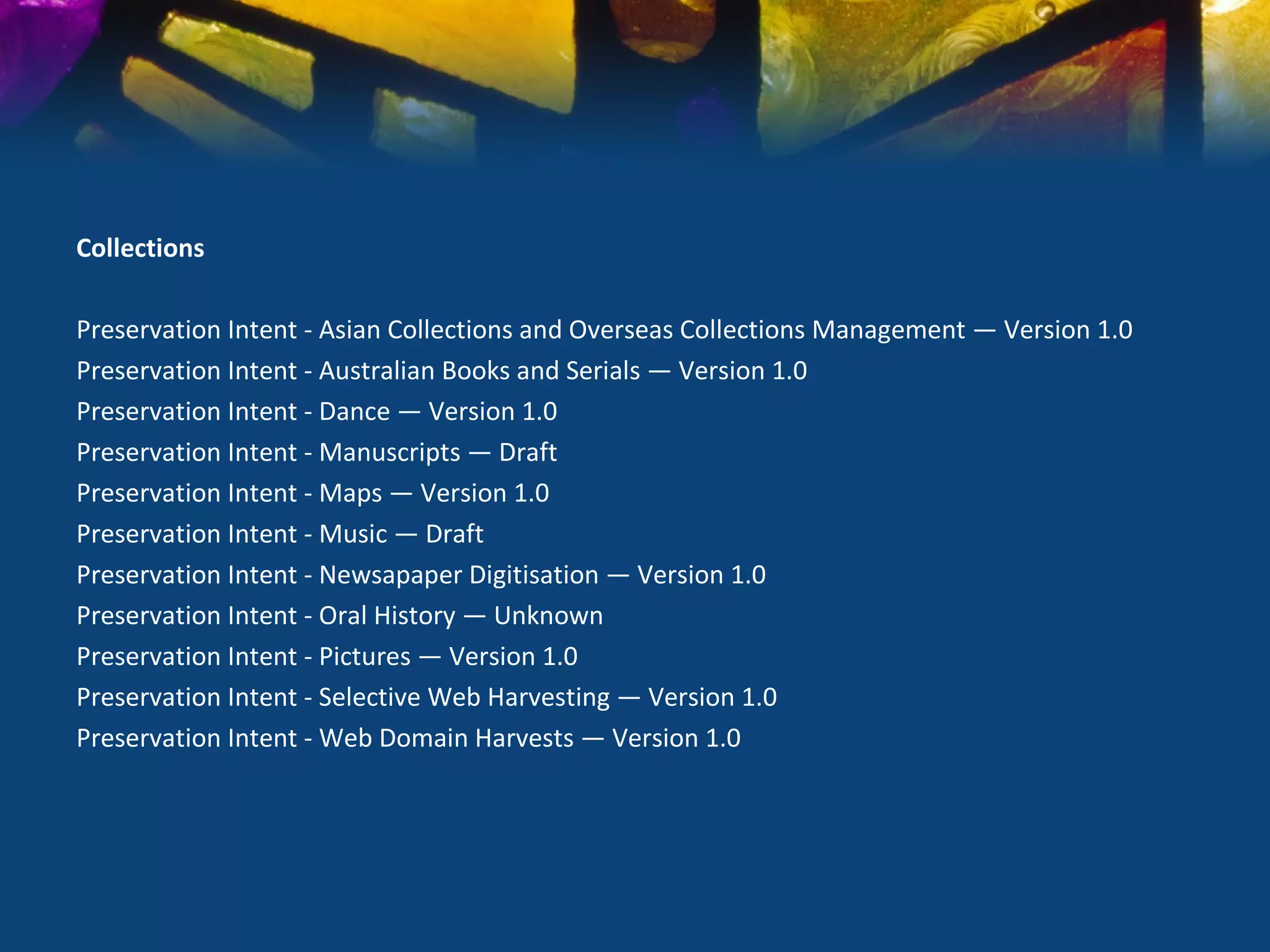

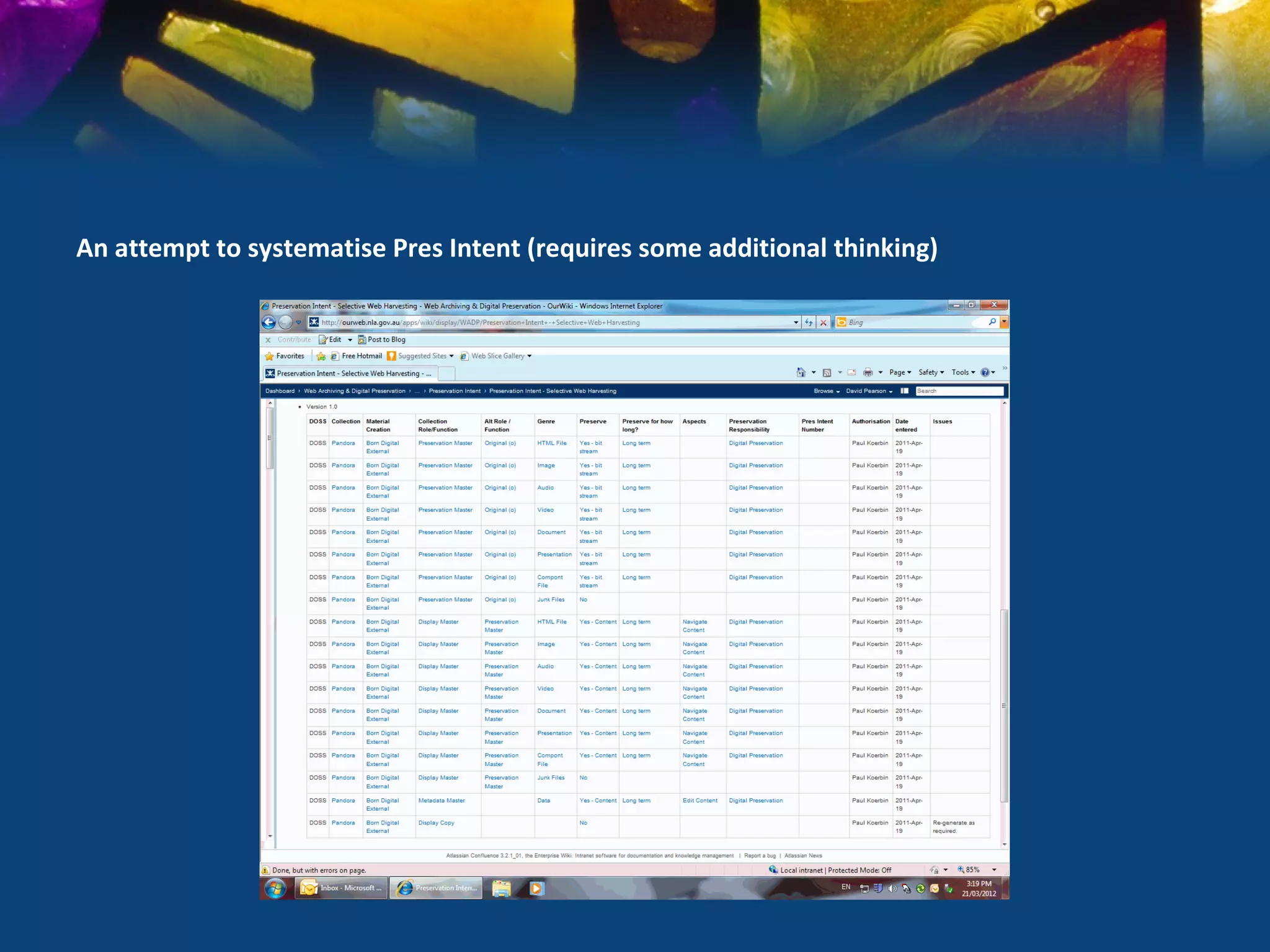

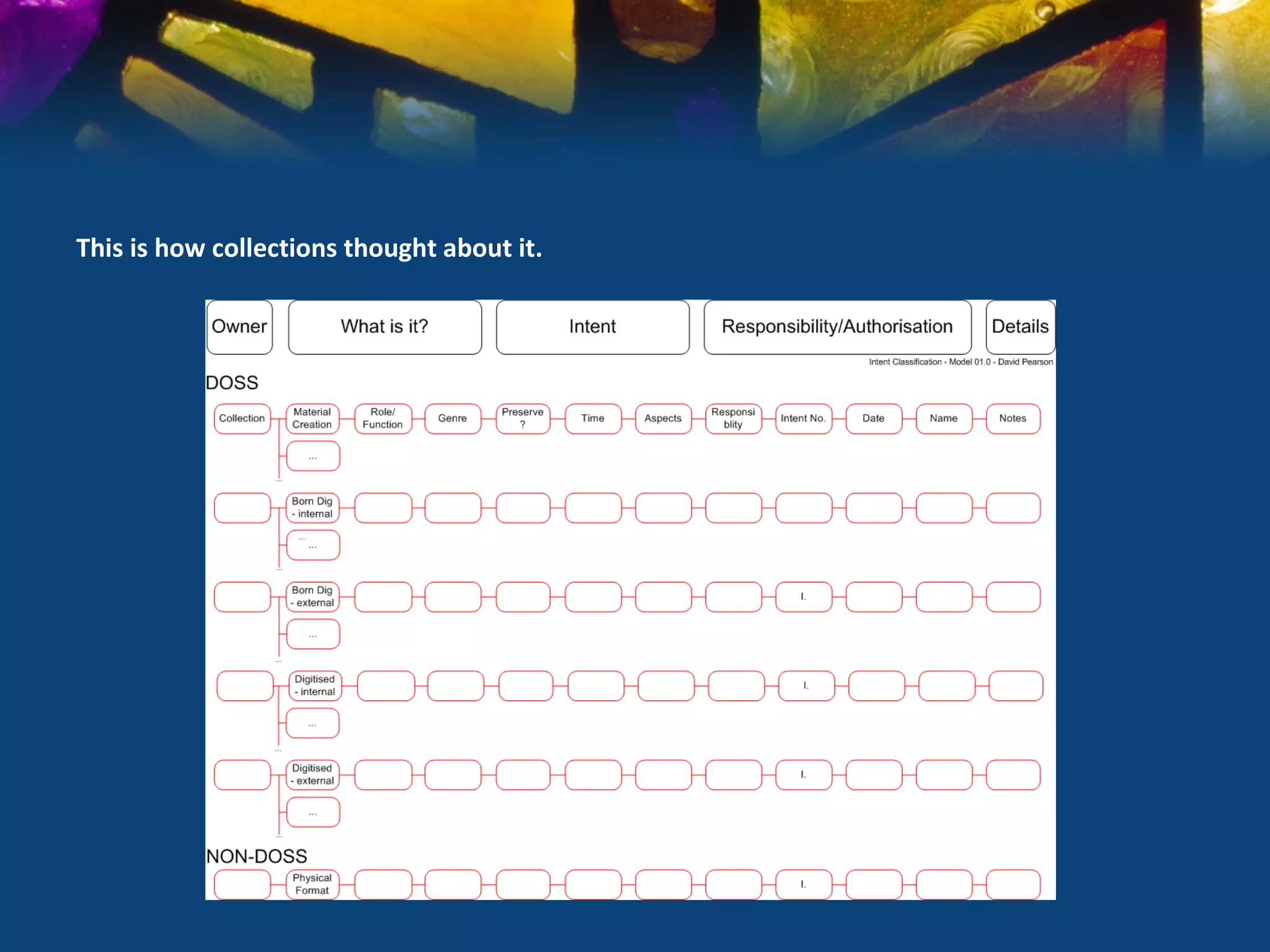

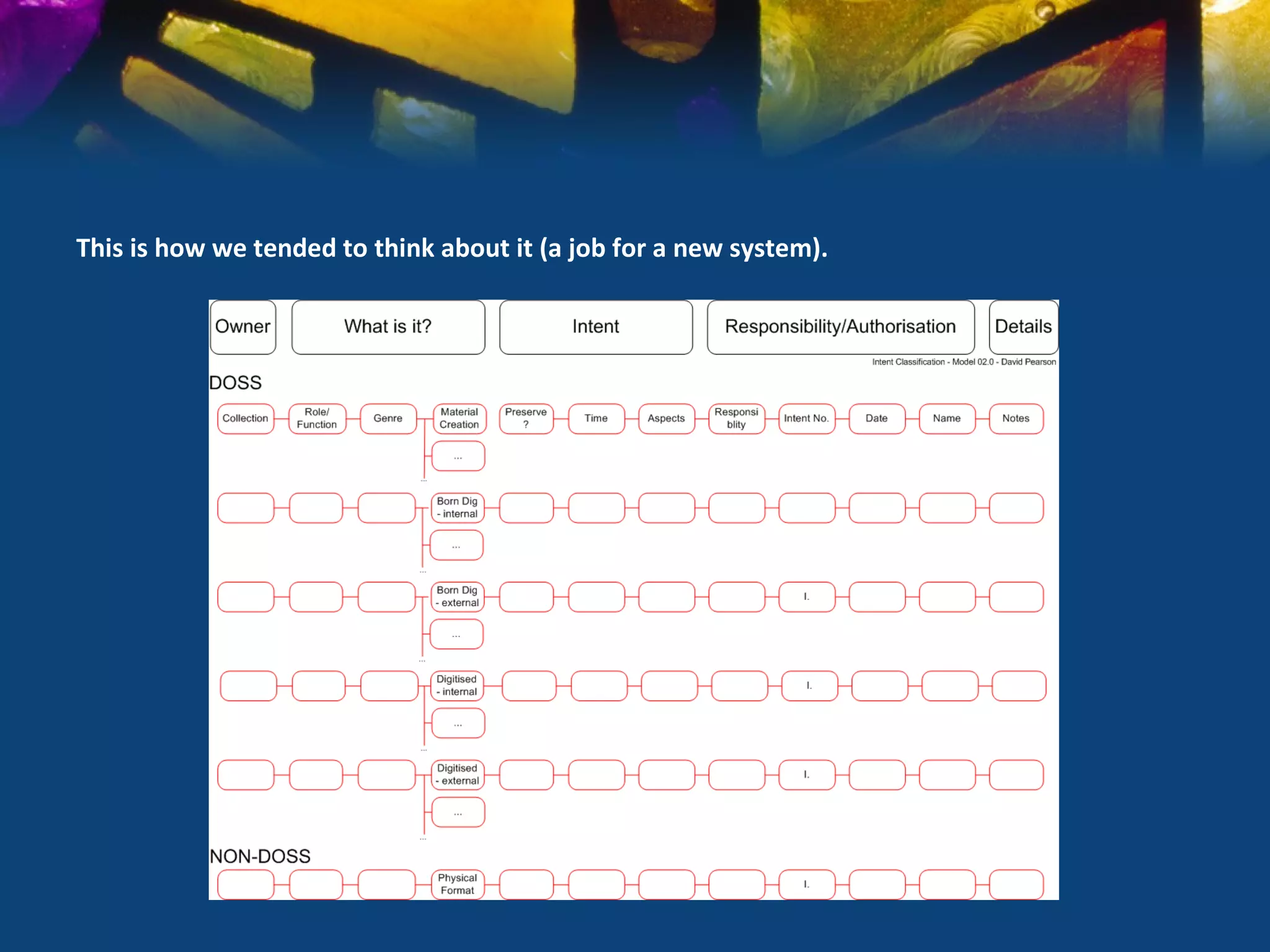

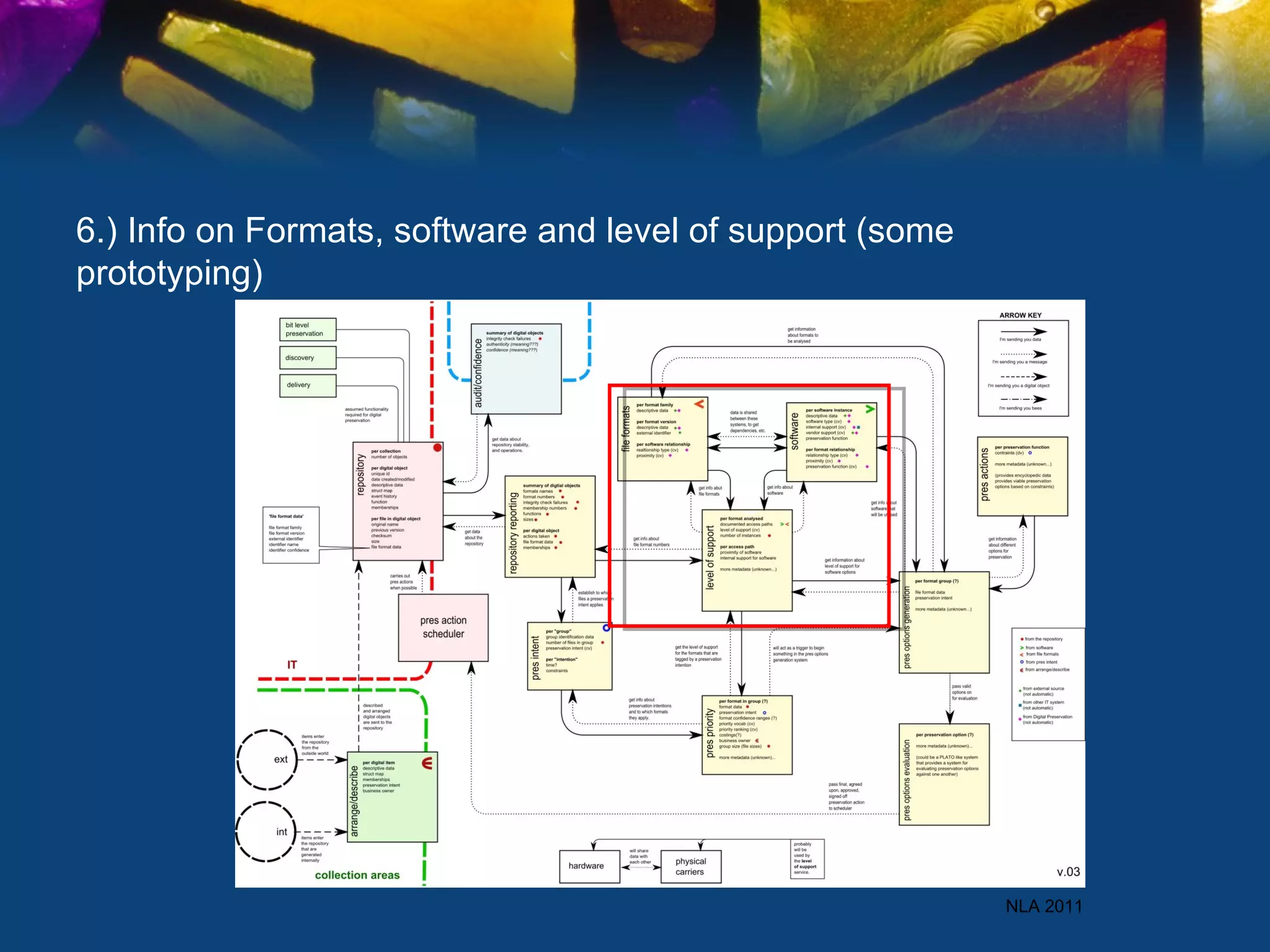

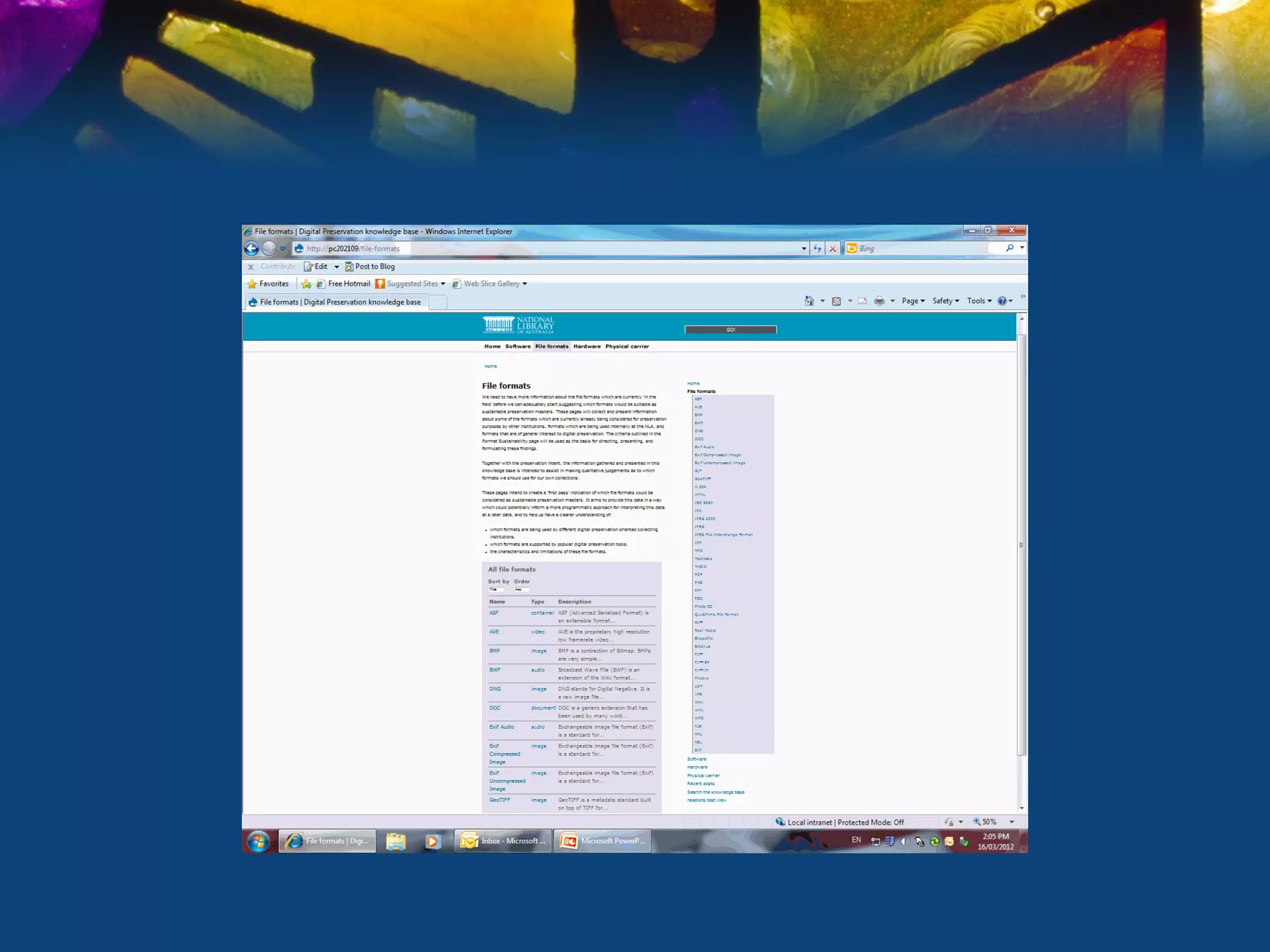

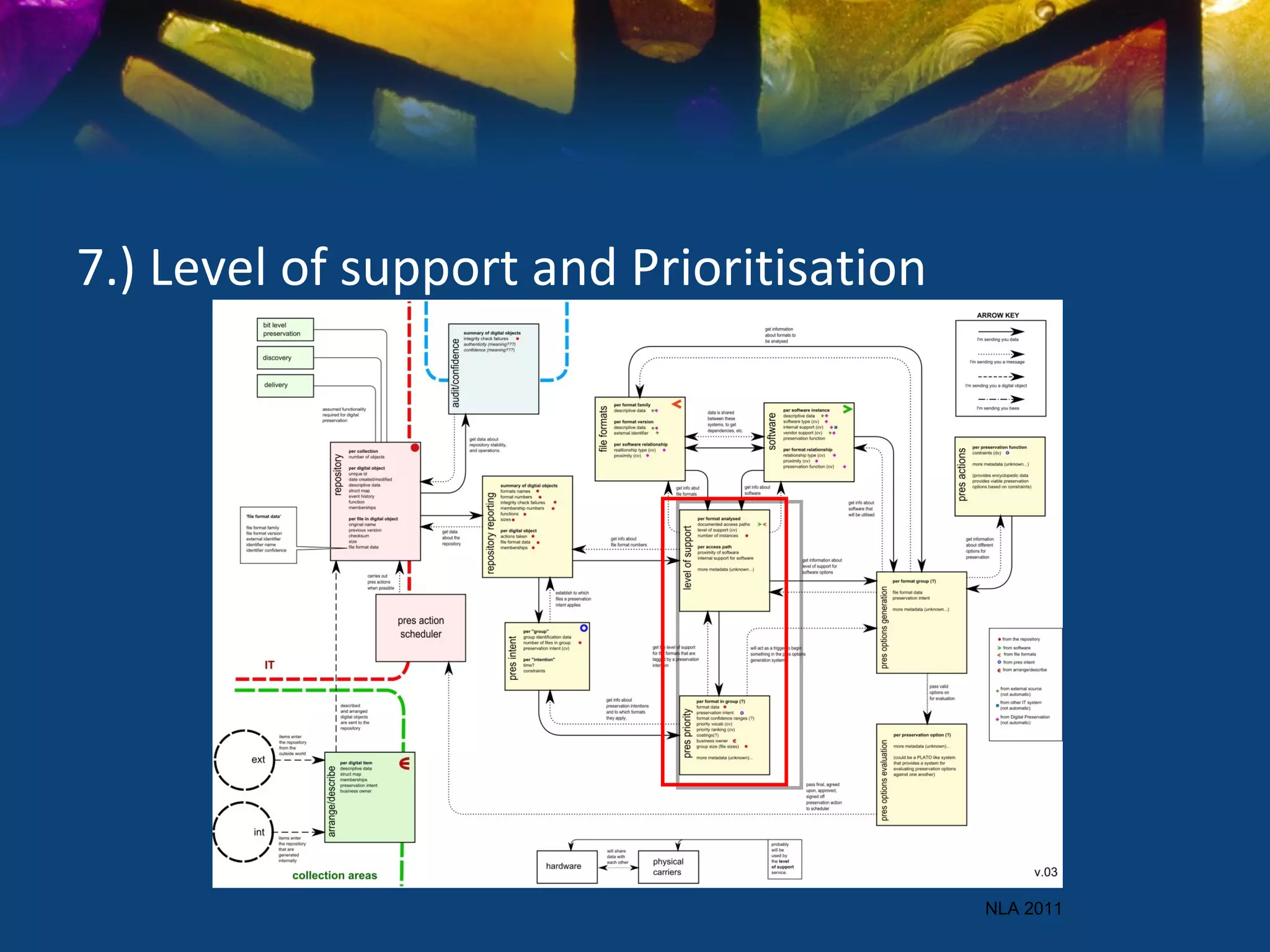

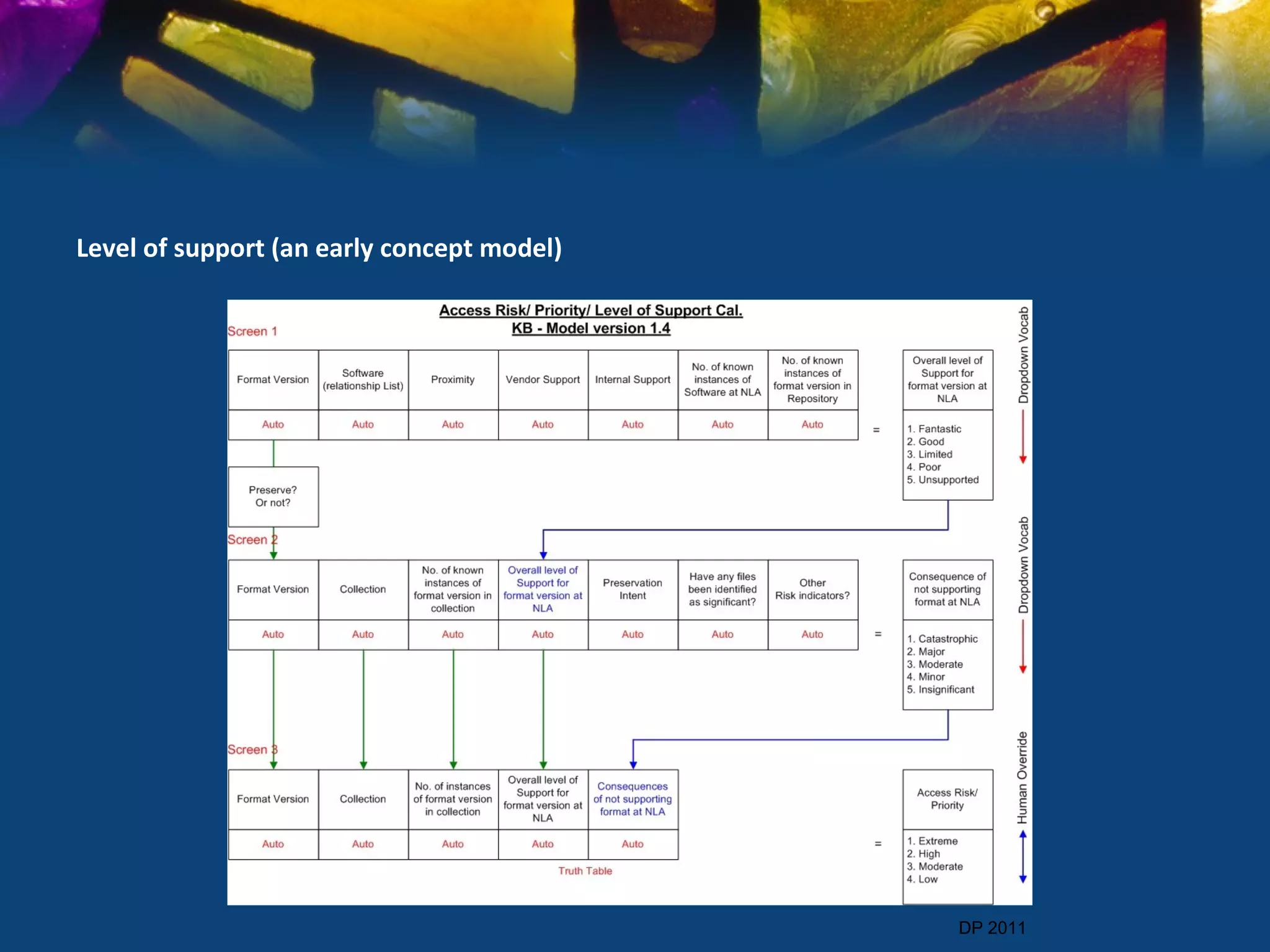

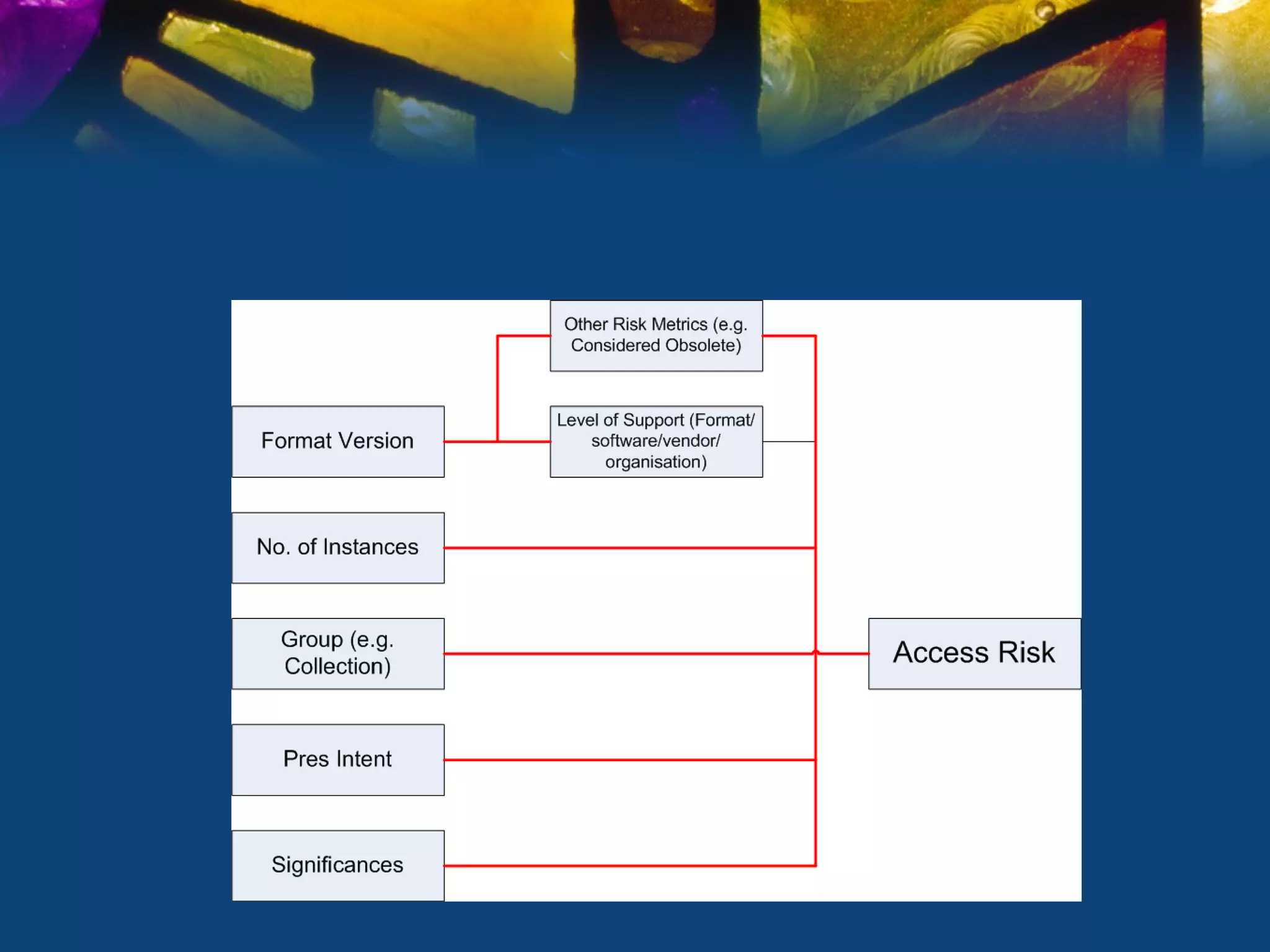

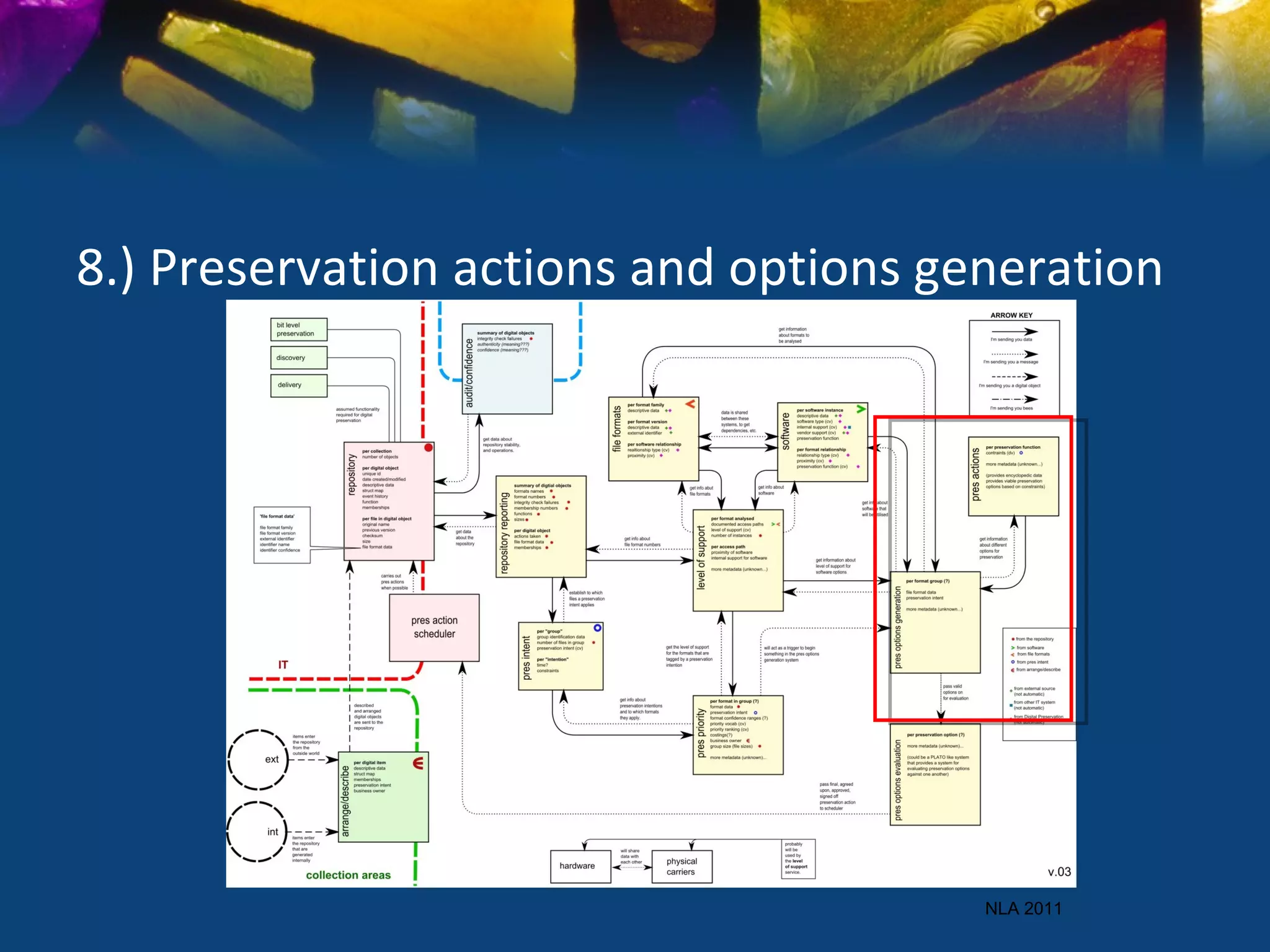

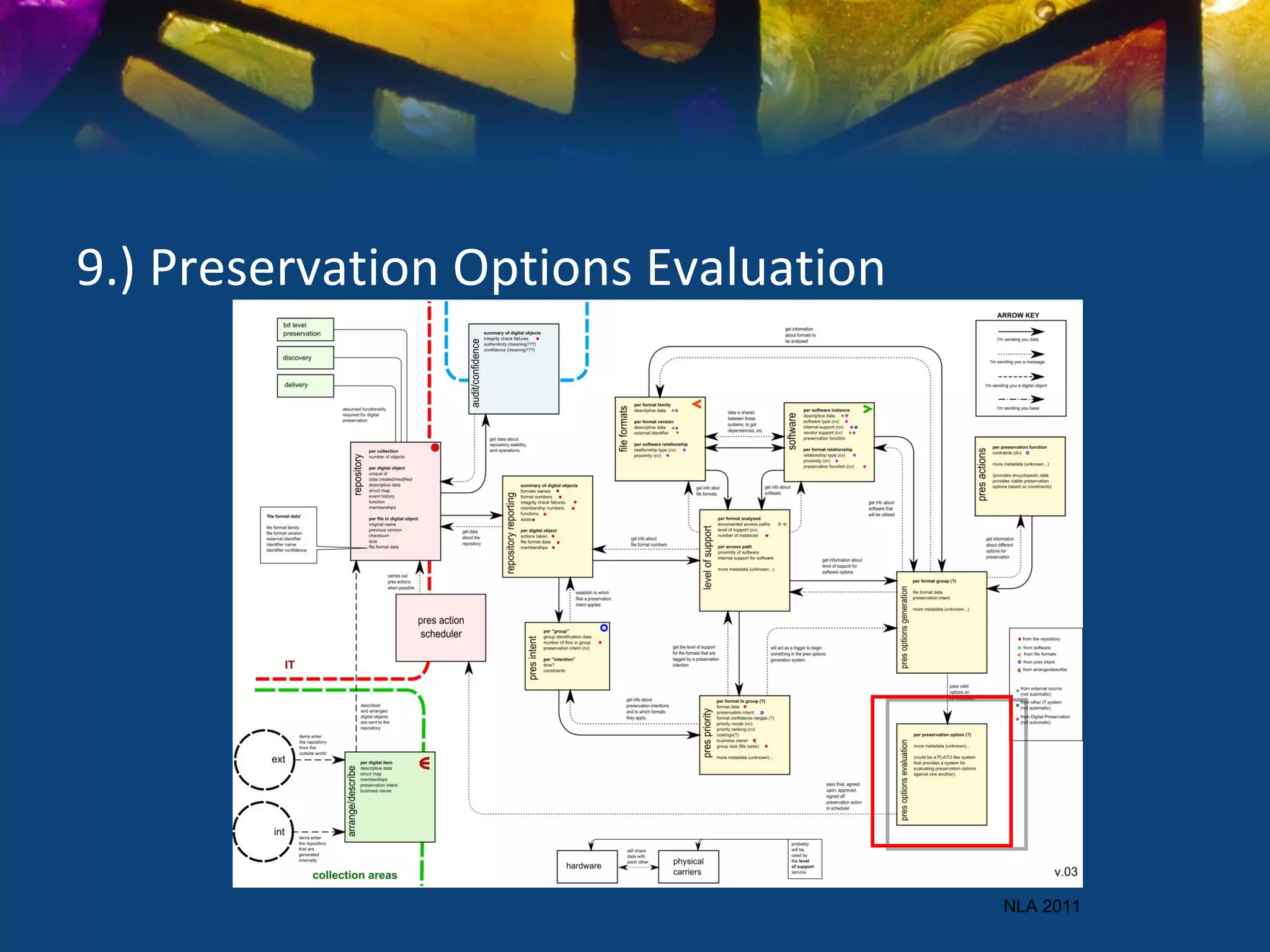

The document discusses the National Library of Australia's approach to digital preservation. It covers the types of digital materials in the library's collections, the library's preservation responsibilities, the preservation processes those materials require, and approaches to prioritizing preservation treatment. It describes how understanding these areas led the library to develop systems for preservation assessment and reporting that help manage risks to digital content over time. The goal is to maintain long-term access to content whose complexity, formats, and preservation needs vary across collections.