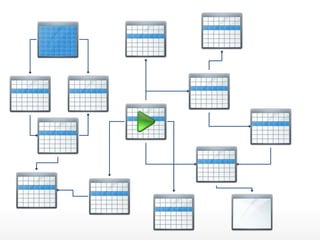

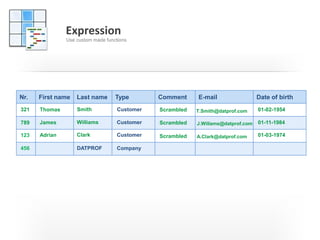

The document discusses the challenges of test data management and strategies for meeting them, particularly in agile development, where software iterations are frequent. It emphasizes effective data handling, including subsetting, anonymization, and other privacy protection techniques that safeguard sensitive customer information. It also highlights the need for data management tools that streamline these processes while ensuring compliance with regulations.
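To make subsetting and anonymization concrete, here is a minimal Python sketch. It assumes two hypothetical in-memory tables, `customers` and `orders`, linked by a `customer_id` field. The function names `pseudonymize` and `subset_and_mask`, the field names, and the example key are all illustrative assumptions and do not come from the document. The sketch subsets customers with a predicate, keeps only the orders that belong to them so the referential links stay intact, and replaces personal fields with keyed HMAC-SHA256 tokens. Keyed hashing is pseudonymization, which is a weaker guarantee than full anonymization. It is shown here only as one possible privacy technique.

```python
import hashlib
import hmac

# Hypothetical key for illustration only; a real setup would load it from a secrets store.
SECRET_KEY = b"example-key-not-for-production"


def pseudonymize(value: str, key: bytes = SECRET_KEY) -> str:
    """Replace a sensitive value with a deterministic keyed hash (HMAC-SHA256).

    The same input always maps to the same token, so joins on masked fields still
    line up. Without the key, tokens cannot be recomputed to test guesses.
    """
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:16]  # shortened token for readability


def subset_and_mask(customers, orders, keep):
    """Keep customers matching `keep`, keep only their orders, and mask PII fields."""
    kept = [c for c in customers if keep(c)]
    kept_ids = {c["id"] for c in kept}
    # Build new dicts so the source records are not modified.
    masked_customers = [
        {**c, "name": pseudonymize(c["name"]), "email": pseudonymize(c["email"])}
        for c in kept
    ]
    # Referential integrity: drop orders whose customer was not selected.
    kept_orders = [o for o in orders if o["customer_id"] in kept_ids]
    return masked_customers, kept_orders


if __name__ == "__main__":
    customers = [
        {"id": 1, "name": "Alice", "email": "alice@example.com", "region": "EU"},
        {"id": 2, "name": "Bob", "email": "bob@example.com", "region": "US"},
    ]
    orders = [
        {"id": 10, "customer_id": 1},
        {"id": 11, "customer_id": 2},
        {"id": 12, "customer_id": 1},
    ]
    masked, kept_orders = subset_and_mask(customers, orders, lambda c: c["region"] == "EU")
    print(len(masked), len(kept_orders))  # prints "1 2"
```

Deterministic tokens are used instead of random replacements so that the same customer masks to the same value in every table and every refresh. This keeps cross-table test cases working between iterations.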