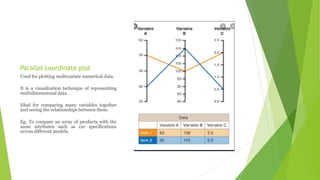

This document discusses data visualization and the techniques used to represent data visually. It defines data visualization as the pictorial representation of data using visual elements such as charts, graphs, and diagrams. It describes several types of visualization: linear, planar, volumetric, temporal, multidimensional, tree, and network. It also covers specific techniques, including isolines, isosurfaces, streamlines, parallel coordinate plots, and timelines. The document outlines applications of data visualization in education, science, and systems visualization, and notes that big data challenges traditional visualization techniques because of its large volume and the speed at which it is generated.